What Does Data Democratization Mean for Modern Data Management?

What does data democratization mean for organizations and how does it influence decision-making?

Introduction

As organizations prioritize data democratization, they encounter a tension: the need for open access to insights versus the necessity of stringent security measures. Data democratization empowers all employees, regardless of their technical expertise, to access and use critical insights. It enhances decision-making and fosters a culture of innovation and collaboration. Balancing accessibility with security and compliance remains difficult, however, and navigating that balance is crucial for organizations that aspire to harness the full potential of their data assets.

Define Data Democratization: Understanding the Concept

Data democratization means giving all employees within a company access to critical insights, regardless of their technical background. It seeks to remove the barriers that have historically limited data access for non-technical staff. When every employee can work with data, companies encourage decisions that are grounded in evidence. This is particularly crucial in the AI era, where timely and informed decisions can significantly influence business outcomes. In fact, 87% of business leaders acknowledge that data insights are essential for managing operational efficiency, underscoring the significance of democratizing data across all levels of the organization.

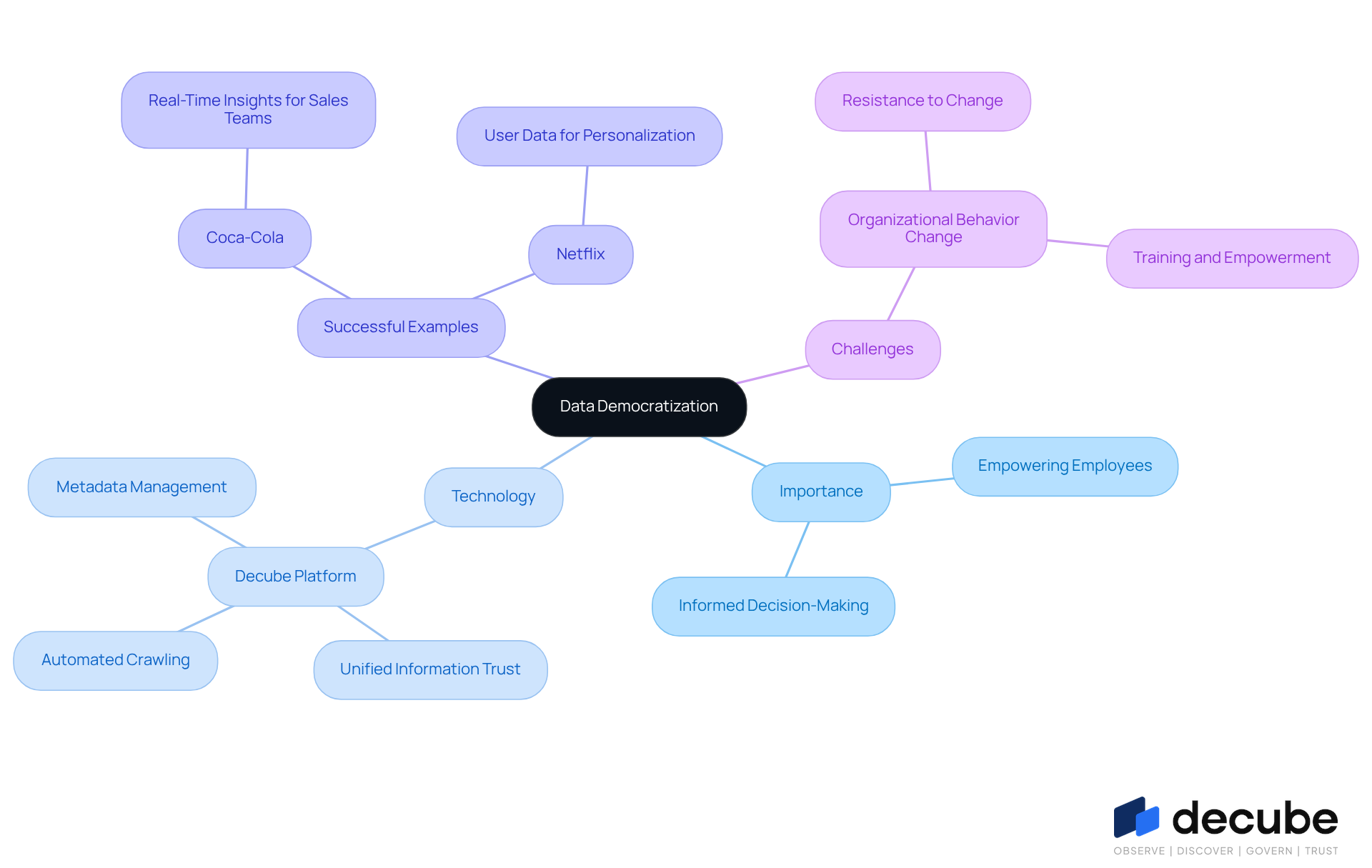

Decube supports this transformation by providing a unified data trust platform that improves observability and governance. Once data sources are connected, its automated crawling capability refreshes metadata automatically, removing the need for manual updates. The platform also strengthens security through clear approval processes that let organizations control who can access or modify data.
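To make the idea of an approval process more concrete, here is a minimal Python sketch of approval-gated access. It is an illustration only and does not represent Decube's implementation or API: the `AccessRegistry` class, its methods, and the dataset and user names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class AccessRequest:
    user: str
    dataset: str
    status: Status = Status.PENDING


@dataclass
class AccessRegistry:
    """Hypothetical registry: access is granted only after an approver signs off."""

    # Maps each dataset to the set of users allowed to approve requests for it.
    approvers: dict[str, set[str]]
    requests: list[AccessRequest] = field(default_factory=list)

    def request_access(self, user: str, dataset: str) -> AccessRequest:
        if dataset not in self.approvers:
            raise KeyError(f"unknown dataset: {dataset}")
        req = AccessRequest(user, dataset)
        self.requests.append(req)
        return req

    def decide(self, req: AccessRequest, approver: str, approve: bool) -> None:
        # Only a designated approver for the dataset may decide a request.
        if approver not in self.approvers[req.dataset]:
            raise PermissionError(f"{approver} cannot approve {req.dataset}")
        req.status = Status.APPROVED if approve else Status.REJECTED

    def can_read(self, user: str, dataset: str) -> bool:
        return any(
            r.user == user and r.dataset == dataset and r.status is Status.APPROVED
            for r in self.requests
        )


# Example data (assumed): one dataset with a single designated owner.
registry = AccessRegistry(approvers={"sales_orders": {"data_owner"}})
req = registry.request_access("analyst", "sales_orders")
print(registry.can_read("analyst", "sales_orders"))  # False: still pending
registry.decide(req, approver="data_owner", approve=True)
print(registry.can_read("analyst", "sales_orders"))  # True: approved
```

The design choice worth noting is that access is open to request by anyone but granted only by a named owner, which is one way to reconcile broad accessibility with control.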

Data democratization also boosts staff engagement by allowing everyone, from executives to front-line personnel, to use insights in support of the company's goals. Coca-Cola, for example, supplies its sales teams with real-time insights into consumer preferences, and Netflix equips its teams with user data for personalized recommendations. Both cases show how widespread access to data can improve operational efficiency and promote a culture of innovation. Adoption is not straightforward, however: 62% of business leaders struggle to change organizational behaviors to support data democratization. Organizations that overcome this challenge are better placed to realize the advantages of accessible data.

Explore the Historical Context of Data Democratization

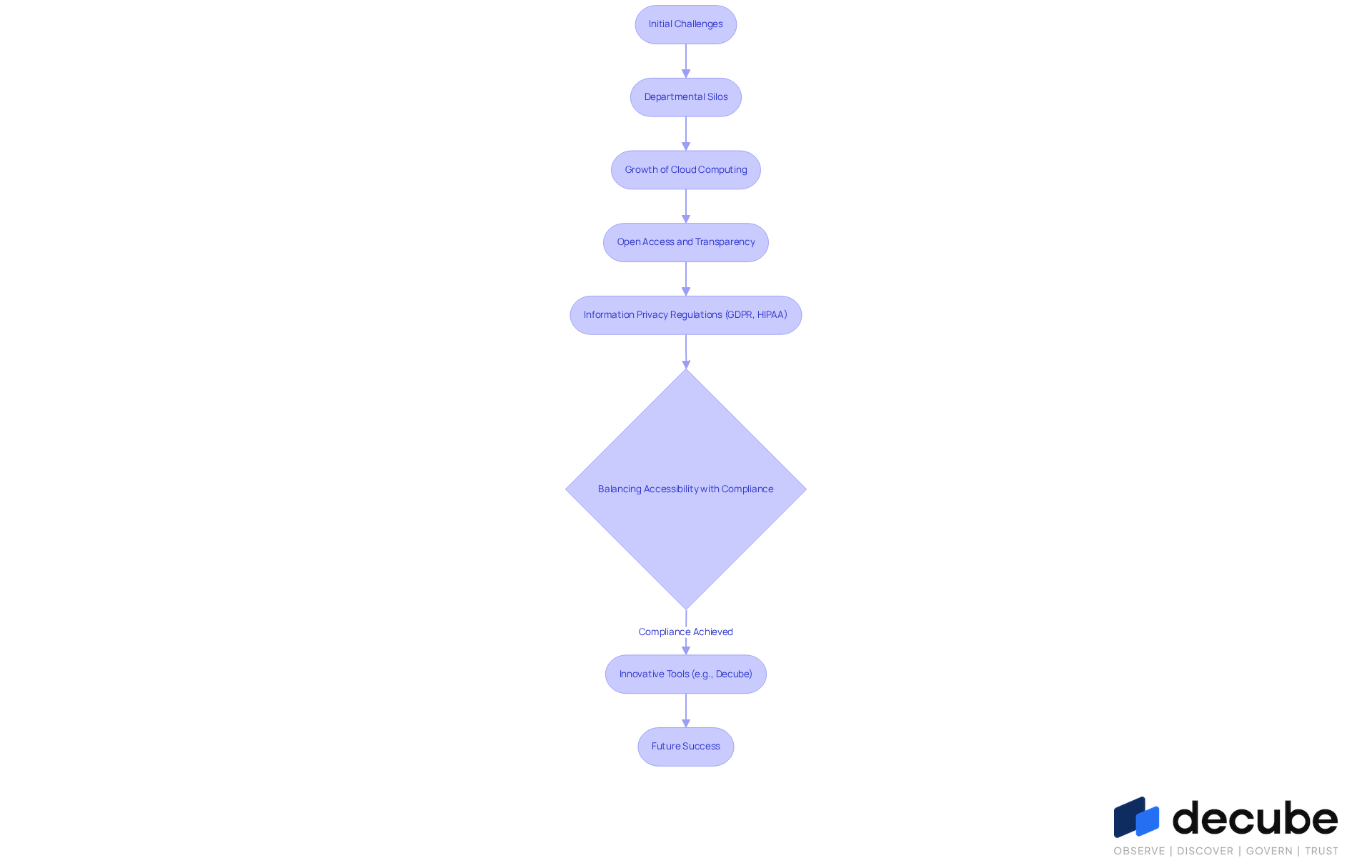

The history of data democratization reflects a broader shift towards open access and transparency in organizational practices. As organizations began to recognize data as a strategic asset, the need for inclusive access became clear.

Initially, data was often confined to departmental silos, which resulted in inefficiencies and lost opportunities for collaboration. The growth of cloud computing and advanced analytics tools significantly accelerated the transition away from silos, enabling organizations to centralize data storage and provide easier access for a broader audience.

As data privacy regulations like GDPR and HIPAA were established, balancing accessibility with compliance became a central theme in discussions of data democratization. Today, tools like Decube's automated crawling feature advance this evolution by refreshing metadata automatically once sources are connected, thereby enhancing data observability and governance.
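The following sketch illustrates what automated metadata crawling can look like in general terms. It is not Decube's crawler: it assumes a SQLite source purely for illustration, and the `crawl_metadata` function is hypothetical. Re-running the crawl after a schema change picks up the new column without any manual catalog update.

```python
import sqlite3


def crawl_metadata(conn: sqlite3.Connection) -> dict[str, list[dict[str, str]]]:
    """Build a catalog of {table: [{name, type}, ...]} from the live schema."""
    catalog = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info returns one row per column:
        # (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        catalog[table] = [{"name": c[1], "type": c[2]} for c in columns]
    return catalog


# Example source (assumed): an in-memory database with one table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
print(crawl_metadata(conn))

# After a schema change, a fresh crawl reflects the new column automatically.
conn.execute("ALTER TABLE orders ADD COLUMN region TEXT")
print(crawl_metadata(conn))
```

In practice a crawler of this kind would run on a schedule or on change events; the scheduling mechanism is left out here.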

Moreover, Decube enables organizations to manage who can access or modify data through a defined approval process, which helps balance accessibility with compliance. Users also highlight the effectiveness of Decube's automated column-level lineage, which combines cataloging and observability. This feature allows business users to quickly identify issues within reports and dashboards, fostering collaboration and improving decision-making across all levels.

As organizations progressively embrace data democratization strategies, innovative tools will play a pivotal role in shaping their future success.
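Column-level lineage can be pictured as a directed graph from source columns to the reports built on them. The sketch below is a generic illustration rather than Decube's implementation: the `LINEAGE` mapping, the asset names, and the `downstream` function are all hypothetical. It shows how a breadth-first traversal could list every report affected by a problem in one column.

```python
from collections import deque

# Hypothetical lineage edges: each asset maps to the assets derived from it.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.daily_total"],
    "mart.revenue.daily_total": ["dashboard.revenue_overview", "report.weekly_sales"],
    "raw.orders.customer_id": ["mart.customers.order_count"],
    "mart.customers.order_count": ["dashboard.customer_health"],
}


def downstream(column: str, lineage: dict[str, list[str]]) -> list[str]:
    """Return every asset reachable from `column`, in breadth-first order."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque([column])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Which assets are affected if raw.orders.amount has a quality issue?
for asset in downstream("raw.orders.amount", LINEAGE):
    print(asset)
```

Running the same traversal over reversed edges would answer the opposite question: which upstream columns feed a dashboard that looks wrong.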

Identify Key Characteristics of Data Democratization

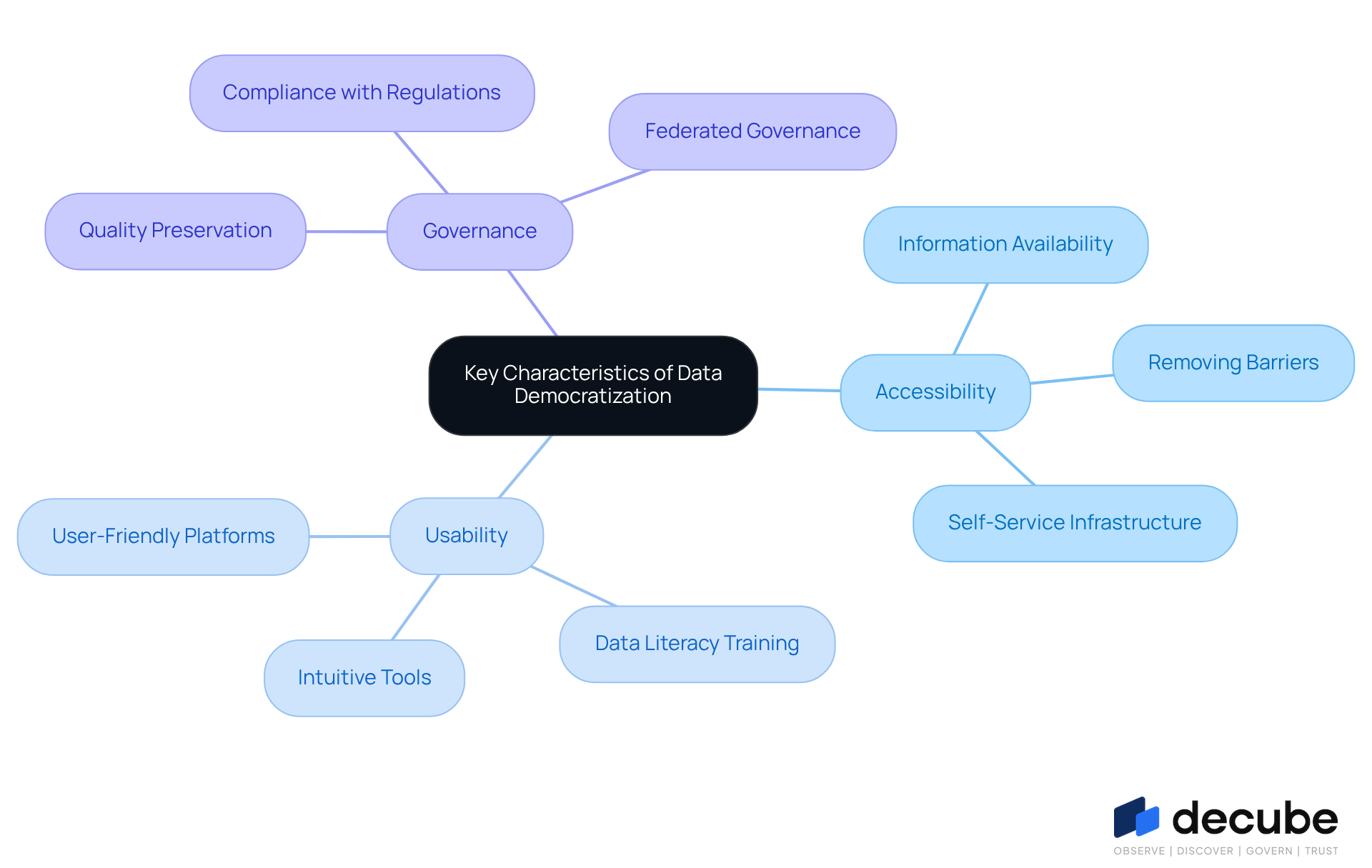

Three characteristics define data democratization: accessibility, usability, and governance. Accessibility ensures that data is available to all staff, breaking down the obstacles that impede access. Usability means providing intuitive tools and training so that employees can work with data competently, regardless of their technical expertise. Governance preserves data quality and compliance, ensuring that democratized data remains secure and trustworthy.

Additionally, data catalogs enhance trust and quality by helping teams discover and understand data assets. Fostering a culture of data literacy is equally essential, enabling employees to interpret and use insights with confidence. Organizations that excel in these areas often experience improved collaboration and heightened innovation, positioning themselves for success in a competitive landscape.

For example, as highlighted by Emily Winks, a Data Governance Specialist:

"A strategy for information accessibility is a thorough approach to make valuable insights easily reachable and comprehensible to all individuals within a company, not solely technical professionals or information authorities."

Organizations that prioritize robust data governance, supported by platforms such as Decube, make data more accessible and foster a culture of evidence-based decision-making. That culture encourages experimentation and problem-solving, leading to a competitive advantage.

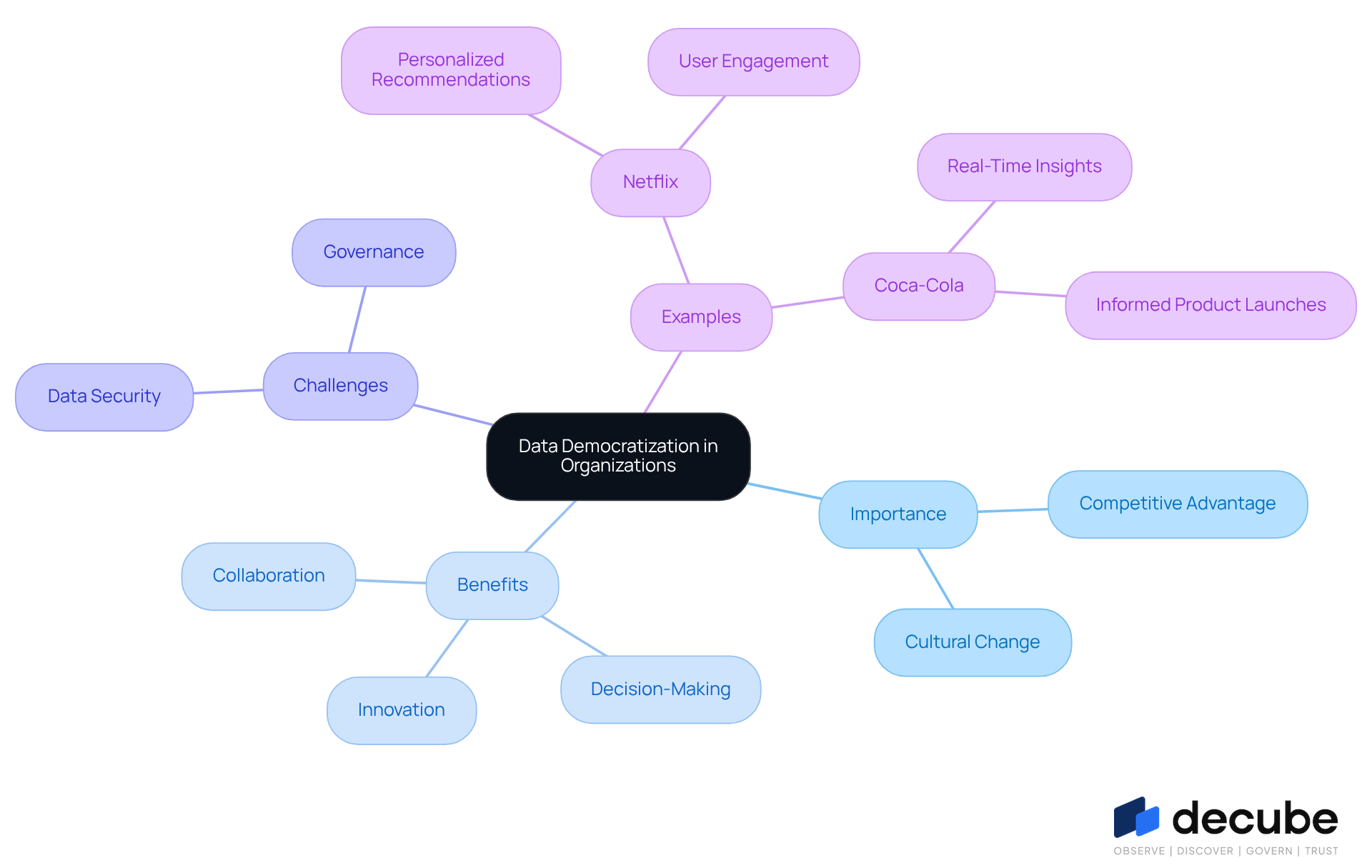

Discuss the Importance of Data Democratization in Organizations

In an era where data drives competitive advantage, organizations need to understand what data democratization means for them. By providing all staff with access to data, organizations foster a culture of innovation and adaptability. This accessibility accelerates decision-making by enabling employees to perform basic analytics independently. Indeed, 73% of business leaders assert that access to data enhances decision-making. It also fosters collaboration across departments, dismantling silos and promoting teamwork on data-driven initiatives.

For instance, Netflix has used data democratization to improve its content recommendations, increasing viewer engagement and satisfaction. Similarly, Coca-Cola empowers its sales teams with real-time insights into consumer preferences, enabling more informed product launches.

Despite the benefits, organizations face significant challenges in ensuring data security and governance. Decube's automated crawling feature helps address them by enabling effortless metadata management and secure access control, which improves data observability and governance. Decube's pricing model also offers flexible options for different organizational requirements, so that secure data democratization remains attainable.

Fully adopting data democratization also requires cultural change within the organization. The examples of Netflix and Coca-Cola show that open access to data not only improves customer understanding and marketing approaches but also promotes operational efficiencies. Successfully addressing these challenges can lead to enhanced growth and competitive advantage in an increasingly data-driven world.

Conclusion

Data democratization is not just a trend; it represents a critical evolution in how organizations manage and leverage their data resources. This inclusive approach empowers all employees to access and use critical insights, regardless of their technical expertise, which enhances decision-making and fosters a culture of innovation that can significantly impact business outcomes. As organizations increasingly recognize data as a strategic asset, this shift positions them to thrive in a competitive landscape.

Throughout the discussion, innovative tools like Decube have emerged as facilitators of this transformation. By improving data observability and governance, organizations can overcome traditional barriers to data access. Case studies from industry leaders such as Coca-Cola and Netflix illustrate how effective data democratization can enhance operational efficiency and collaboration, ultimately driving business success. However, organizations often face significant resistance when attempting to shift their data culture, particularly where security concerns and entrenched organizational habits are involved.

Embracing data democratization requires a fundamental shift in how organizations think about and utilize their data resources. By adopting this concept, businesses can unlock the full potential of their data, leading to informed decision-making and improved outcomes. As the landscape of data management continues to evolve, organizations are encouraged to prioritize inclusivity and accessibility in their data strategies, ensuring that every employee can contribute to and benefit from the insights derived from data. Ultimately, organizations that prioritize data democratization will not only enhance their operational capabilities but also foster a culture of continuous improvement and innovation.

Frequently Asked Questions

What is data democratization?

Data democratization is the process of empowering all employees within a company to access critical insights, regardless of their technical background, thereby removing barriers that limit information access for non-technical staff.

Why is data democratization important in the AI era?

In the AI era, timely and informed decisions significantly influence business outcomes. Data democratization enables informed decision-making based on data, which is crucial for operational efficiency.

What percentage of business leaders recognize the importance of data insights?

87% of business leaders acknowledge that data insights are essential for managing operational efficiency.

How does Decube contribute to data democratization?

Decube provides a unified data trust platform that improves observability and governance. Its automated crawling capability refreshes metadata automatically once sources are connected, which simplifies data management and strengthens security.

What benefits does data democratization bring to employees?

Data democratization boosts staff engagement by allowing all employees, from executives to front-line personnel, to use insights in support of the company's goals and to make better decisions.

Can you provide examples of companies that have successfully implemented data democratization?

Coca-Cola supplies its sales teams with real-time insights into consumer preferences, and Netflix equips its teams with user data for personalized recommendations. Both demonstrate enhanced operational efficiency and a culture of innovation.

What challenges do business leaders face in adopting data democratization?

62% of business leaders struggle to change organizational behaviors to support data democratization, highlighting the challenges that must be addressed for successful implementation.

What are the potential advantages of overcoming challenges related to data democratization?

Overcoming these challenges enables organizations to maximize the advantages of accessible data, leading to improved decision-making and more effective use of data in employees' roles.

List of Sources

- Define Data Democratization: Understanding the Concept

- The Data Democratization Dilemma: Unlocking Potential, Managing Risk (https://blend360.com/thought-leadership/the-data-democratization-dilemma-unlocking-potential-managing-risk)

- What you should know about data democratization and what it can do for your business data operations | LightsOnData (https://lightsondata.com/data-democratization-business-data-operations)

- Data Democratization Strategy for Business Decisions | IBM (https://ibm.com/think/topics/data-democratization)

- Data Democratization: Empowering Teams with Data Insights (https://acceldata.io/blog/data-democratization-transforming-team-efficiency-with-easy-access-to-insights)

- Data Democratization 2026: AI Solutions for Company-Wide Analytics (https://linkedin.com/pulse/data-democratization-2026-ai-solutions-company-wide-wyaac)

- Explore the Historical Context of Data Democratization

- Viacom: Democratization of Data Science - Case - Faculty & Research - Harvard Business School (https://hbs.edu/faculty/Pages/item.aspx?num=53776)

- Data Democratization: What It Means and Why It Matters (https://anomalo.com/blog/data-democratization-what-it-means-and-why-it-matters)

- (PDF) Data Democratization: Empowering Non-Technical Users with Self-Service BI Tools and Techniques to Access and Analyze Data Without Heavy Reliance on IT Teams (https://researchgate.net/publication/374306763_Data_Democratization_Empowering_Non-Technical_Users_with_Self-Service_BI_Tools_and_Techniques_to_Access_and_Analyze_Data_Without_Heavy_Reliance_on_IT_Teams)

- Identify Key Characteristics of Data Democratization

- The Ultimate Guide to Data Democratization (https://timextender.com/blog/data-empowered-leadership/the-ultimate-guide-to-data-democratization)

- Data Democratization Strategy: The Ultimate Guide in 2024! (https://atlan.com/what-is/data-democratization-strategy)

- Data Democratization: Definition, Importance, and Best Practices (https://denodo.com/en/glossary/data-democratization-definition-importance-best-practices)

- intelliswift.com (https://intelliswift.com/insights/blogs/5-reasons-why-data-governance-is-critical-for-data-democratization)

- Data Democratization: What It is and Why It Matters (https://thoughtspot.com/data-trends/business-analytics/data-democratization)

- Discuss the Importance of Data Democratization in Organizations

- Explore 50 Quotes About Data That Inspire and Inform (https://linkedin.com/pulse/explore-50-quotes-data-inspire-inform-raghavendra-narayana-4yj2f)

- Why Data Democratization Matters Today (https://integrate.io/blog/why-data-democratization-matters-today)

- Data Democratization: Empowering Teams with Data Insights (https://acceldata.io/blog/data-democratization-transforming-team-efficiency-with-easy-access-to-insights)

- What is Data Democratization? Benefits and Best Practices | RecordPoint (https://recordpoint.com/blog/data-democratization)

- The Benefits and Opportunities of Data Democratization | Data Dynamics (https://datadynamicsinc.com/blog-democratize-your-data-empower-your-organization-the-benefits-and-opportunities-of-data-democratization)
