
4 Best Practices for Effective Lineage Process Implementation

Master effective lineage process implementation with best practices for data management success.

Introduction

Organizations face increasing pressure to understand how data flows through their systems, both to stay compliant and to operate efficiently. Effective data lineage practices reduce compliance risk, improve data quality, streamline operations, and help teams collaborate. Yet many organizations struggle to put these practices in place amid evolving regulations and technologies, and falling short can lead to significant compliance exposure and operational inefficiency. This article outlines strategies and best practices for establishing robust data lineage processes.

Understand the Importance of Data Lineage in Management

Data lineage is the practice of tracking and visualizing data as it moves through different systems. Understanding it is essential for several reasons:

- Regulatory Compliance: Organizations must adhere to regulations and frameworks such as GDPR, HIPAA, and SOC 2. Data lineage provides a transparent audit trail showing how data is collected, processed, and stored. This transparency helps organizations meet regulatory requirements, builds trust with stakeholders, and strengthens audit readiness.

- Information Quality Assurance: By tracking the path of data, companies can identify and correct quality problems such as duplicates or erroneous transformations. Industry insights suggest that poor data quality can cost organizations an average of $15 million annually, which underscores the importance of effective lineage tracking.

- Operational Efficiency: Effective lineage tracking streamlines workflows, reduces the time spent on troubleshooting, and improves overall data management efficiency. Organizations with strong lineage practices can respond more quickly to data incidents, minimizing interruptions and supporting business continuity.

- Improved Cooperation: Data lineage encourages better communication between data producers and data consumers by giving them a shared understanding of how data moves and changes. This clarity is vital for teamwork in data governance, allowing teams to manage data assets together more effectively. Decube's automated crawling feature further supports this collaboration by ensuring that metadata is auto-refreshed and access is securely controlled.

In summary, by mastering data lineage, organizations can mitigate compliance risks and foster a culture of trust and adaptability in an ever-changing regulatory landscape.

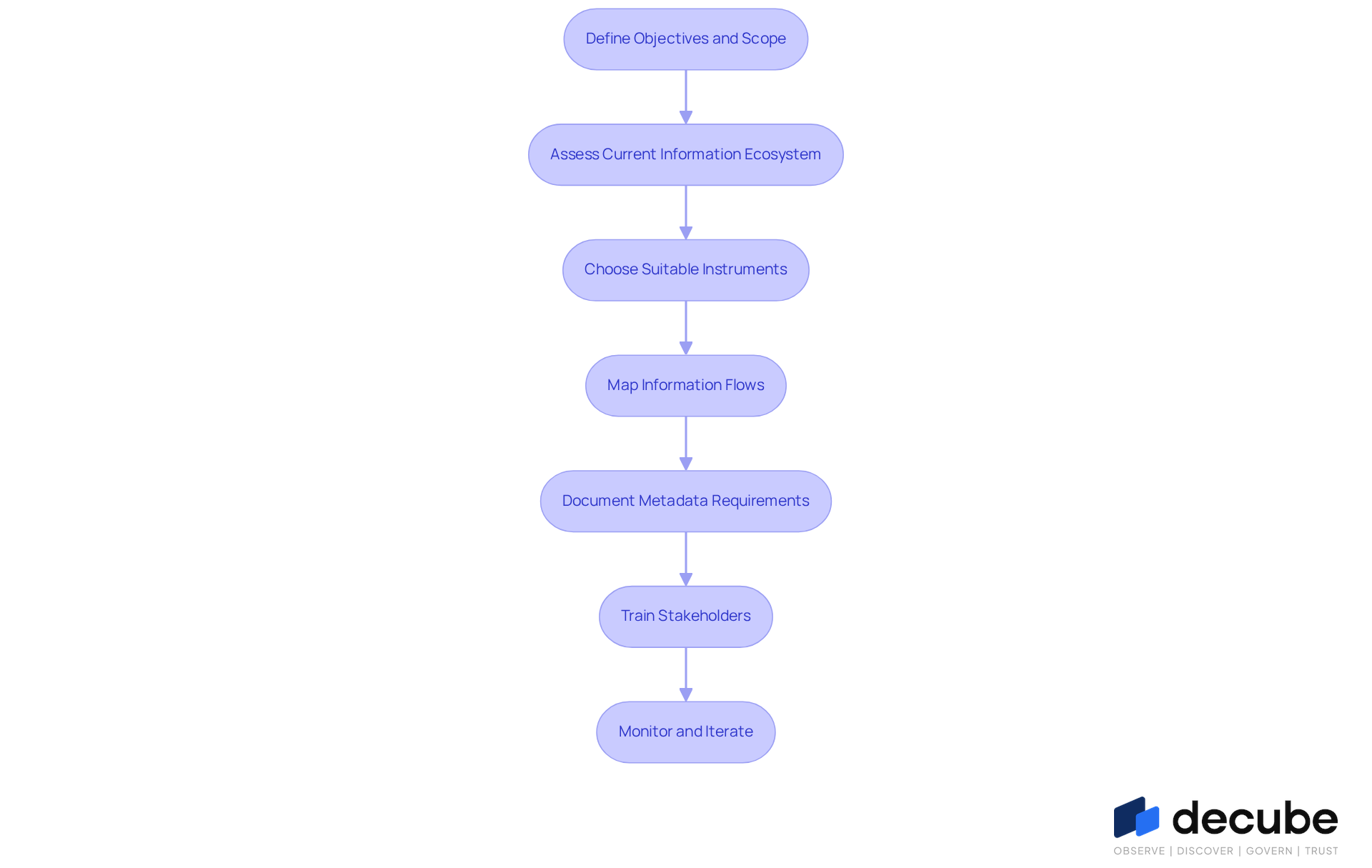

Establish Clear Steps for Implementing Lineage Processes

Implementing effective data lineage processes requires a structured approach to ensure success:

- Define Objectives and Scope: Begin by identifying the specific business goals that lineage tracking will support. Identify which data assets are essential to your organization and outline the scope of the implementation. Clear objectives matter: initiatives that define them up front tend to have a better chance of success.

- Assess Current Information Ecosystem: Evaluate your existing data architecture, including data sources, storage solutions, and processing systems. Understanding your current environment can be challenging, but it is essential for identifying gaps and opportunities for improvement and for tailoring the implementation to your organization's requirements.

- Choose Suitable Tools: Select lineage tools that align with your organization's requirements. Look for solutions that provide automated tracking of data flows, smooth integration with existing systems, and intuitive interfaces that encourage adoption among stakeholders.

- Map Information Flows: Create a visual representation of how data flows through your systems. This mapping should include all transformations, aggregations, and movements of data from source to destination, providing a comprehensive view of its lineage.

- Document Metadata Requirements: Create a metadata management plan that covers documentation of data definitions, ownership, and provenance. This documentation is vital for clarity and compliance, as it ensures that all stakeholders have access to accurate information about data assets.

- Train Stakeholders: Train all relevant stakeholders (data engineers, analysts, and business users) on the importance of data lineage and how to use the tools effectively. Well-informed users make lineage tracking more effective overall.

- Monitor and Iterate: After implementation, continuously monitor the processes and gather feedback from users. Use this feedback to improve the lineage system over time, promoting a culture of ongoing improvement.

Following these steps strengthens governance and supports compliance across the organization, while the structured approach keeps day-to-day data management streamlined.
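The mapping step can be sketched in code. The following is a minimal Python illustration under stated assumptions, not a description of how Decube or any other tool stores lineage: the dataset names and transformations in `EDGES` are hypothetical, and the helper functions are invented for this example. It represents lineage as directed edges and walks them to answer two questions: what feeds a dataset (upstream) and what a dataset feeds (downstream).

```python
from collections import deque

# Hypothetical lineage edges: (source, target, transformation).
EDGES = [
    ("crm.customers", "staging.customers", "deduplicate"),
    ("erp.orders", "staging.orders", "cast types"),
    ("staging.customers", "mart.customer_orders", "join"),
    ("staging.orders", "mart.customer_orders", "join"),
    ("mart.customer_orders", "report.revenue", "aggregate"),
]


def build_graph(edges, reverse=False):
    """Return an adjacency mapping; reverse=True maps targets to sources."""
    graph = {}
    for source, target, _transformation in edges:
        key, value = (target, source) if reverse else (source, target)
        graph.setdefault(key, set()).add(value)
    return graph


def reachable(graph, start):
    """Breadth-first search: every node reachable from start, excluding start."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen - {start}


def upstream(edges, dataset):
    """All datasets that directly or indirectly feed the given dataset."""
    return reachable(build_graph(edges, reverse=True), dataset)


def downstream(edges, dataset):
    """All datasets that directly or indirectly depend on the given dataset."""
    return reachable(build_graph(edges), dataset)
```

In this sketch, `upstream(EDGES, "report.revenue")` lists every dataset to inspect when the report looks wrong, and `downstream(EDGES, "crm.customers")` lists everything affected by a change to the source table.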

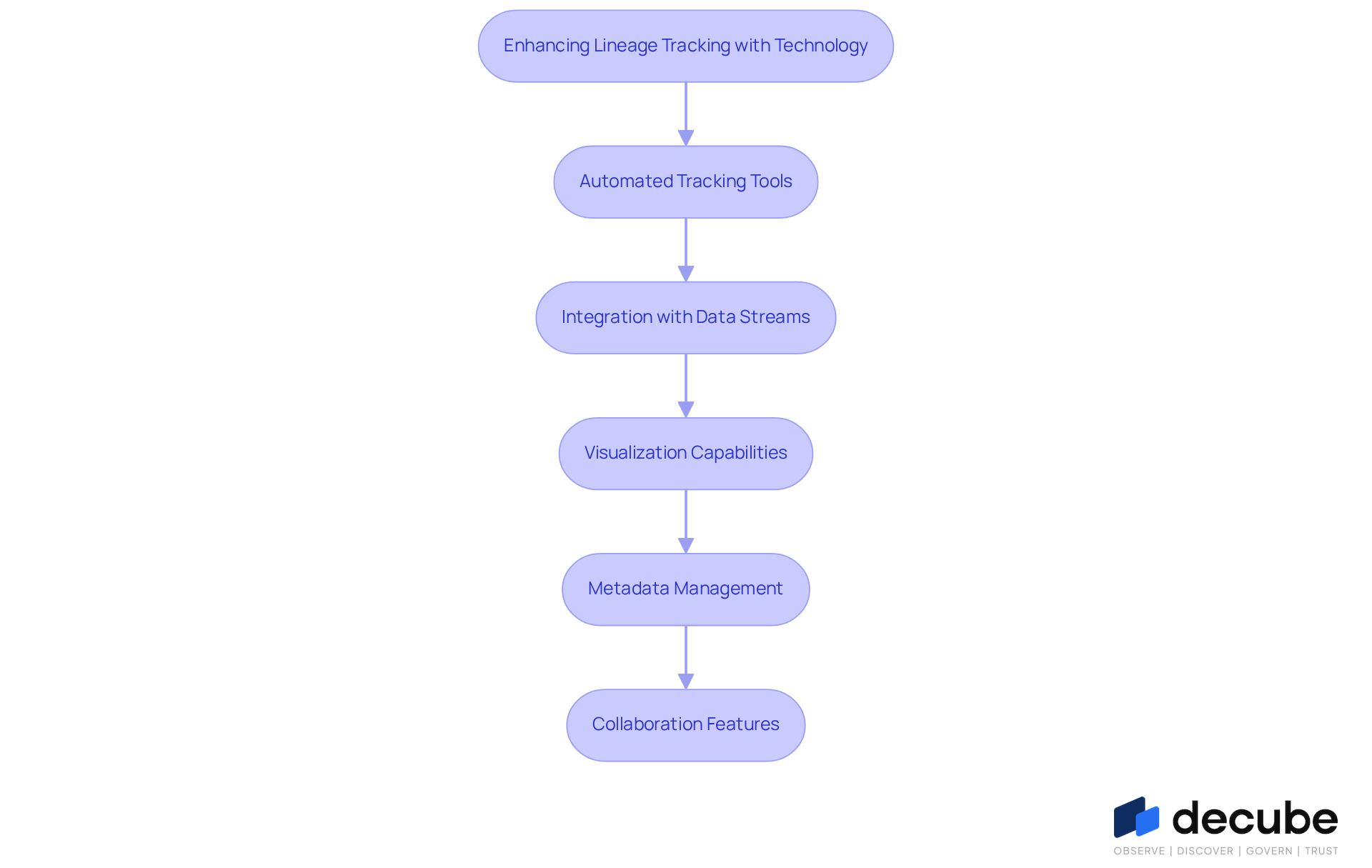

Leverage Technology for Enhanced Lineage Tracking

Effective lineage monitoring depends heavily on tooling. Technology plays an essential part in enhancing this monitoring, particularly platforms like Decube that provide advanced features. Organizations can strengthen their processes through the following strategies:

- Automated Tracking Tools: Implement automated tracking tools, such as those offered by Decube, that monitor data flows in real time. This approach helps teams uncover and map connections easily, keeping data management accurate and timely while significantly reducing manual effort and mistakes.

- Integration with Data Pipelines: Integrate lineage tools with existing data pipelines and ETL workflows. Manual updates often lead to inaccuracies and delays, so integration is essential for lineage to update automatically as data moves through each stage. Organizations can use Decube's capabilities to automate lineage tracking, streamlining management processes and improving quality.

- Visualization Capabilities: Select tools with robust visualization capabilities that allow for clear representation of complex data flows. Effective visual representations, like those offered by Decube, help stakeholders understand the data journey and quickly spot potential issues, improving overall governance.

- Metadata Management: Create a comprehensive metadata management framework that records vital details about data assets, including their origin, definitions, transformations, and ownership, to ensure clarity and accountability. Decube's automated crawling feature ensures that metadata is auto-refreshed once sources are connected, eliminating the need for manual updates. Confirming data origins with business stakeholders is also essential: it ensures that the data accurately represents business semantics and helps prevent misunderstandings.

- Collaboration Features: Choose tools that encourage teamwork among analytics teams. Decube improves communication with features like shared dashboards and annotations, keeping all stakeholders aligned on lineage practices and promoting a culture of transparency. Lineage coverage should also be reviewed regularly to ensure it reflects the current environment and includes new business-critical assets.

By adopting these technologies, organizations can streamline their processes and foster a culture of data integrity and accountability.
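To make the metadata management point concrete, here is a small Python sketch of a metadata record that captures definition, ownership, sources, and transformations, plus a check for incomplete entries. It is an illustration only: the `AssetMetadata` class, the `REQUIRED_FIELDS` choice, and the catalog entries are assumptions made for this example and do not reflect Decube's data model or API.

```python
from dataclasses import dataclass, field


@dataclass
class AssetMetadata:
    """Hypothetical metadata record for one data asset."""
    name: str
    definition: str = ""
    owner: str = ""
    sources: list = field(default_factory=list)
    transformations: list = field(default_factory=list)


# Assumption for this sketch: every asset needs a definition and an owner.
REQUIRED_FIELDS = ("definition", "owner")


def missing_fields(asset):
    """Return the required fields that are empty for this asset."""
    return [name for name in REQUIRED_FIELDS if not getattr(asset, name)]


catalog = [
    AssetMetadata(
        name="mart.customer_orders",
        definition="One row per customer order",
        owner="analytics-team",
        sources=["staging.customers", "staging.orders"],
        transformations=["join on customer_id"],
    ),
    AssetMetadata(
        name="report.revenue",
        definition="Monthly revenue by region",
        sources=["mart.customer_orders"],
    ),
]

# Map each incomplete asset to the fields it still needs.
gaps = {asset.name: missing_fields(asset) for asset in catalog if missing_fields(asset)}
```

Here `gaps` flags `report.revenue` as lacking an owner, which is the kind of item worth confirming with business stakeholders during a coverage review.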

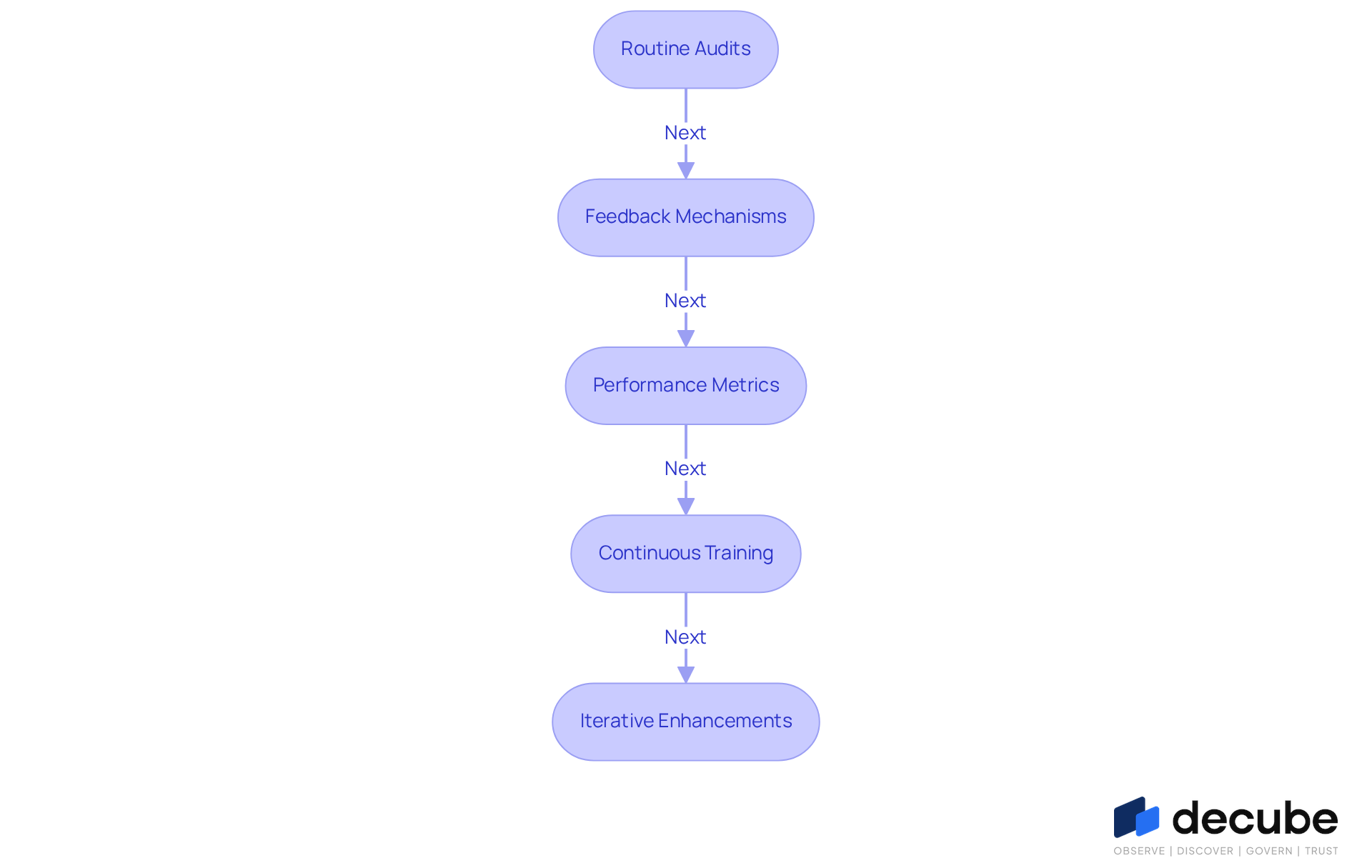

Monitor and Refine Lineage Processes Regularly

To enhance the effectiveness of data lineage processes, organizations must proactively monitor and refine their systems:

- Routine Audits: Audit lineage records regularly to confirm that recorded lineage matches actual data flows and transformations, and address any discrepancies promptly. Regular reviews keep lineage documentation accurate and compliant.

- Feedback Mechanisms: Establish robust feedback channels that let users report issues or suggest enhancements to the lineage system. Neglected feedback leads to persistent inefficiencies, so these channels are crucial for identifying areas that need attention and for improving overall performance.

- Performance Metrics: Identify key performance indicators (KPIs) to assess the effectiveness of lineage processes. Metrics such as the time taken to resolve data quality issues and the accuracy of lineage documentation provide valuable insight into operational performance.

- Continuous Training: Provide ongoing education for stakeholders to keep them informed about updates to lineage methods and tools. This ensures that all users can work with the lineage system efficiently, promoting a culture of data literacy and governance.

- Iterative Enhancements: Use insights from audits, feedback, and performance metrics to improve lineage procedures iteratively. This enables organizations to adapt to evolving data landscapes and regulatory requirements.

By regularly monitoring and refining the lineage process, organizations can uphold high standards of data governance, maintain compliance with industry regulations, and remain agile in a rapidly evolving regulatory environment.
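A routine audit and the two example metrics can be sketched as follows. This is a minimal Python illustration under stated assumptions: lineage is reduced to `(source, target)` pairs, "observed" edges are assumed to come from some record of actual pipeline runs, and all function names and sample data are hypothetical.

```python
from datetime import datetime
from statistics import mean


def audit_lineage(recorded, observed):
    """Compare documented lineage edges with edges seen in actual runs."""
    recorded, observed = set(recorded), set(observed)
    return {
        # Flows that actually run but are missing from the documentation.
        "undocumented": sorted(observed - recorded),
        # Documented flows that were not seen in any run.
        "stale": sorted(recorded - observed),
    }


def documentation_accuracy(recorded, observed):
    """Share of recorded edges that were confirmed by observation."""
    recorded, observed = set(recorded), set(observed)
    if not recorded:
        return 0.0
    return len(recorded & observed) / len(recorded)


def mean_hours_to_resolve(incidents):
    """Average hours between opening and resolving each quality incident."""
    return mean(
        (closed - opened).total_seconds() / 3600 for opened, closed in incidents
    )


# Hypothetical sample data.
recorded = [
    ("staging.orders", "mart.customer_orders"),
    ("staging.customers", "mart.customer_orders"),
    ("legacy.orders", "mart.customer_orders"),
]
observed = [
    ("staging.orders", "mart.customer_orders"),
    ("staging.customers", "mart.customer_orders"),
    ("staging.refunds", "mart.customer_orders"),
]
incidents = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 13)),
    (datetime(2024, 1, 2, 9), datetime(2024, 1, 2, 17)),
]

report = audit_lineage(recorded, observed)
accuracy = documentation_accuracy(recorded, observed)
resolution_hours = mean_hours_to_resolve(incidents)
```

With this sample data the audit reports one undocumented flow (from `staging.refunds`) and one stale flow (from `legacy.orders`). Tracking `accuracy` and `resolution_hours` over successive audits gives a simple trend to feed into the iterative enhancements described above.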

Conclusion

Organizations often struggle with compliance risks and data quality issues, which is why effective data lineage processes are essential. A structured approach to data lineage mitigates compliance risk, improves operational efficiency and data quality, and fosters transparency and collaboration within teams. It also helps organizations build trust with stakeholders and maintain robust data governance.

Key insights discussed include:

- Defining clear objectives

- Assessing the current information ecosystem

- Selecting suitable tools

- Documenting metadata requirements

Additionally, leveraging technology through automated tracking and visualization capabilities is essential for optimizing data management. Regular monitoring and refinement of lineage processes ensure that organizations remain agile and responsive to evolving regulatory demands.

Ultimately, embracing best practices for data lineage implementation is crucial for positioning organizations to succeed in a complex data landscape. By prioritizing data lineage, businesses can enhance their operational integrity, improve information quality, and foster a collaborative environment that drives informed decision-making. Taking action on these insights will empower organizations to navigate the challenges of data management effectively and sustainably.

Frequently Asked Questions

What is data lineage and why is it important?

Data lineage refers to the process of monitoring and illustrating how information moves through different systems. It is important for ensuring regulatory compliance, maintaining information quality, enhancing operational efficiency, and improving cooperation among teams.

How does data lineage support regulatory compliance?

Data lineage provides a transparent audit trail that shows how information is gathered, processed, and stored. This transparency helps organizations adhere to regulations like GDPR, HIPAA, and SOC 2, builds trust with stakeholders, and strengthens audit readiness.

What role does data lineage play in information quality assurance?

By tracking the path of information, organizations can identify and correct quality issues, such as duplicates or erroneous transformations. This is crucial since poor information quality can cost organizations an average of $15 million annually.

How does data lineage contribute to operational efficiency?

Effective tracking of information origins streamlines workflows, reduces troubleshooting time, and enhances overall management efficiency. Organizations with strong information tracking practices can respond more quickly to incidents, minimizing disruptions and ensuring business continuity.

In what ways does data lineage improve cooperation among teams?

Data lineage fosters better communication between information creators and users by providing a common understanding of information movements and changes. This clarity aids teamwork in information governance, allowing teams to collaborate more effectively in managing assets.

What features can enhance collaboration in data lineage management?

Features like automated crawling, which ensures that metadata is auto-refreshed and access is securely controlled, can significantly enhance collaboration among teams managing data lineage.

