
Set Data Contract Alert Thresholds for Airflow: A Step-by-Step Guide

Learn how to set data contract alert thresholds for Airflow effectively in this step-by-step guide.

Introduction

Establishing effective data contracts is crucial for organizations striving to ensure data integrity and quality. This guide outlines the essential steps for setting alert thresholds for data contracts in Airflow, aimed at enhancing data governance strategies. Organizations frequently encounter challenges in balancing the efficiency and reliability of their alert systems. Teams must develop strategies to navigate these complexities and ensure the robustness of their data contracts.

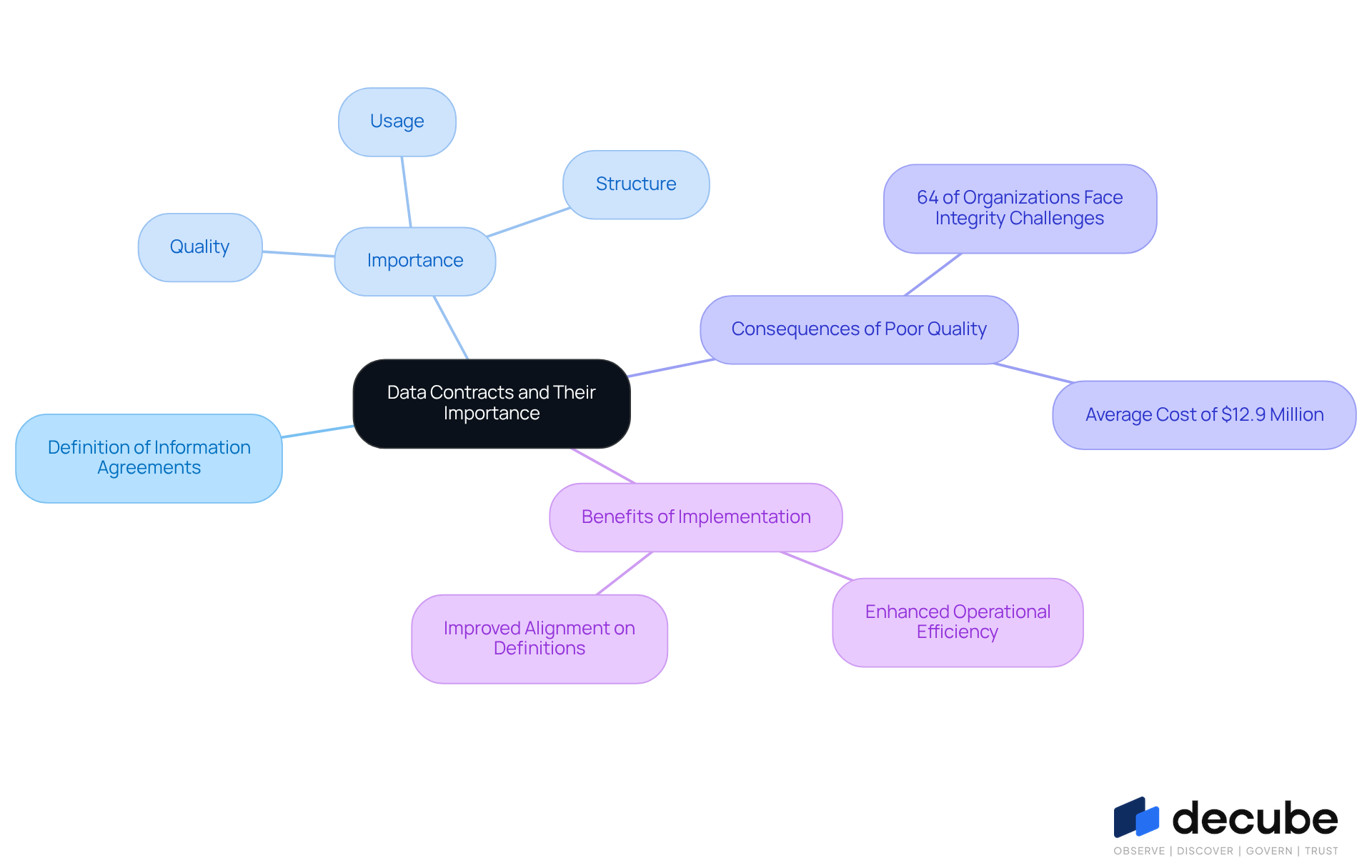

Understand Data Contracts and Their Importance

Data contracts are formal agreements that define expectations for the quality, structure, and usage of data, so that it flows predictably between producers and consumers. They are crucial for minimizing unexpected changes or errors within data pipelines. Without clear contracts, teams may struggle with trust and alignment, leading to confusion and inefficiencies.

By establishing specific metrics and thresholds for data quality, organizations can manage integrity proactively and significantly lower the risk of data-related issues. According to Precisely's 2025 planning insights, 64% of organizations identify data quality as their primary data integrity challenge, which underlines the need for effective governance. Moreover, Gartner estimates that poor data quality costs organizations an average of $12.9 million each year, underscoring the financial consequences of overlooking this aspect.

Practical examples show that organizations adopting data contracts have seen significant improvements in data quality and operational efficiency. For instance, they report better alignment on definitions and formats, which addresses the problem of inconsistent data that affects 45% of enterprises. This understanding directly informs how you set data contract alert thresholds in Airflow, and it improves oversight of and compliance with the contracts.

Ultimately, a solid understanding of data contracts can significantly improve operational efficiency and reduce the risks associated with poor data quality.
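To make the idea of "specific metrics and thresholds" more concrete, here is a minimal sketch of how a contract for a single table might be written down in Python. The class name `DataContract`, its fields, and the example table and values are illustrative assumptions; they are not part of Airflow or of any particular data contract tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract for one table; every field name is illustrative."""

    table: str
    required_columns: dict[str, str]  # column name -> expected type name
    min_row_count: int                # volume threshold per load
    max_staleness_hours: float        # freshness threshold
    owner: str = "unassigned"         # who gets alerted when the contract breaks


# Example values are made up for illustration.
orders_contract = DataContract(
    table="analytics.orders",
    required_columns={"order_id": "int", "amount": "float", "created_at": "datetime"},
    min_row_count=1_000,
    max_staleness_hours=24.0,
    owner="data-platform@example.com",
)
```

Keeping the thresholds in one declared object, rather than scattered through task code, gives validation tasks and alerting logic a single place to read them from.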

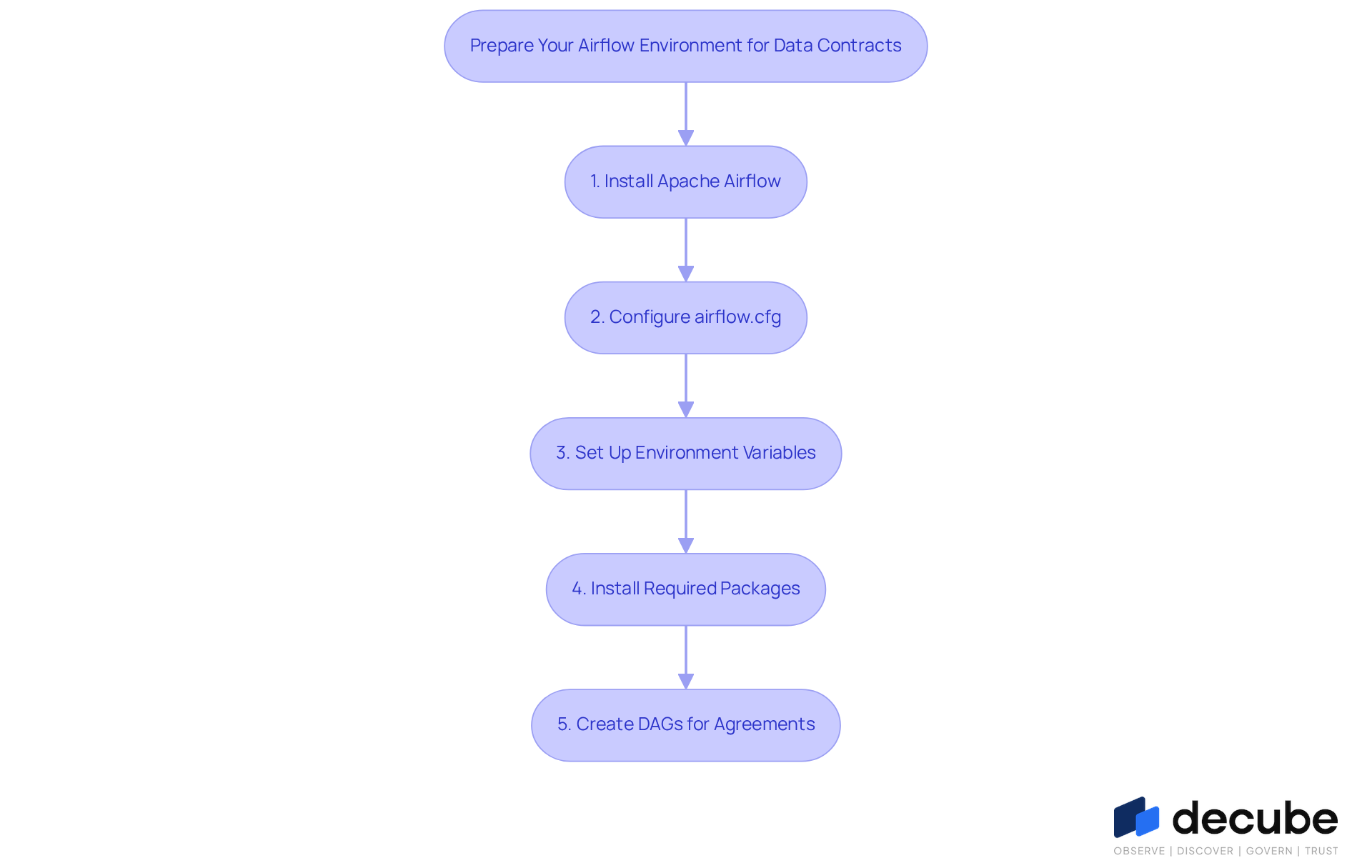

Prepare Your Airflow Environment for Data Contracts

To establish a robust Airflow environment for data contracts, it is crucial to follow a systematic approach that ensures accuracy and efficiency:

- Install Apache Airflow: Begin by installing Apache Airflow using pip:

      pip install apache-airflow

- Configure: Open the `airflow.cfg` file in your Airflow home directory. Here, configure key settings such as the executor type and database connection. Ensure your database backend (e.g., PostgreSQL or MySQL) is correctly set up to support your data governance needs.

- Set Up Environment Variables: Define the necessary environment variables for your Airflow instance. This includes setting the `AIRFLOW_HOME` variable to point to your designated Airflow directory, which is where Airflow looks for its configuration file.

- Install Required Packages: Depending on your specific data contracts, you may need additional packages. For instance, if you plan to use Slack for notifications, install the Slack SDK with the following command:

      pip install slack-sdk

- Create DAGs for Data Contracts: Develop Directed Acyclic Graphs (DAGs) that manage your data contracts. Ensure these DAGs include tasks that verify data against the established contracts; these tasks are where alert thresholds are enforced, so they are essential for data integrity and quality.
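As a rough illustration of the verification task mentioned in the last step, the sketch below shows a plain Python function that checks observed values against a contract and raises when the contract is broken. The function signature, the `ContractViolation` exception, and all parameter names are assumptions made for this example. A raised exception is what causes Airflow to mark a task as failed.

```python
from datetime import datetime, timedelta, timezone


class ContractViolation(Exception):
    """Raised when observed data breaks the contract (hypothetical name)."""


def validate_data(columns, row_count, last_loaded_at, *,
                  required_columns, min_row_count, max_staleness, now=None):
    """Check schema, volume, and freshness; raise if any check fails."""
    now = now or datetime.now(timezone.utc)
    problems = []

    # Schema compliance: every required column must be present.
    missing = sorted(set(required_columns) - set(columns))
    if missing:
        problems.append(f"missing columns: {missing}")

    # Volume: the load must contain at least the agreed number of rows.
    if row_count < min_row_count:
        problems.append(f"row count {row_count} below minimum {min_row_count}")

    # Freshness: the last load must be recent enough.
    if now - last_loaded_at > max_staleness:
        problems.append(f"last load older than {max_staleness}")

    if problems:
        raise ContractViolation("; ".join(problems))
    return True
```

In a DAG, a function like this could be passed to a `PythonOperator` as `python_callable`, with the observed values supplied through `op_kwargs`. How you collect the column list, row count, and load timestamp depends on your warehouse and is left out here.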

Automated contract checks shift your data processes from reactive to proactive, improving both efficiency and quality. Decube's ML-powered assessments help you automatically identify thresholds for table tests like volume and freshness once you have connected your source. Decube's intelligent notifications are grouped so that they do not overwhelm your team, and they are sent straight to your email or Slack. Its automated crawling feature integrates into your workflow setup so that metadata is managed automatically and access control stays secure, which supports collaboration across teams.

As Mario Konschake, Director of Product-Data Platform, puts it: "Investing in quality information is essential for cross-functional teams to make precise, comprehensive decisions with fewer risks and greater returns." Clear ownership of data is essential for ensuring that it flows smoothly from sources to consumers, further supporting your governance efforts. Ultimately, a well-structured workflow not only enhances data governance but also empowers teams to make informed decisions with confidence.

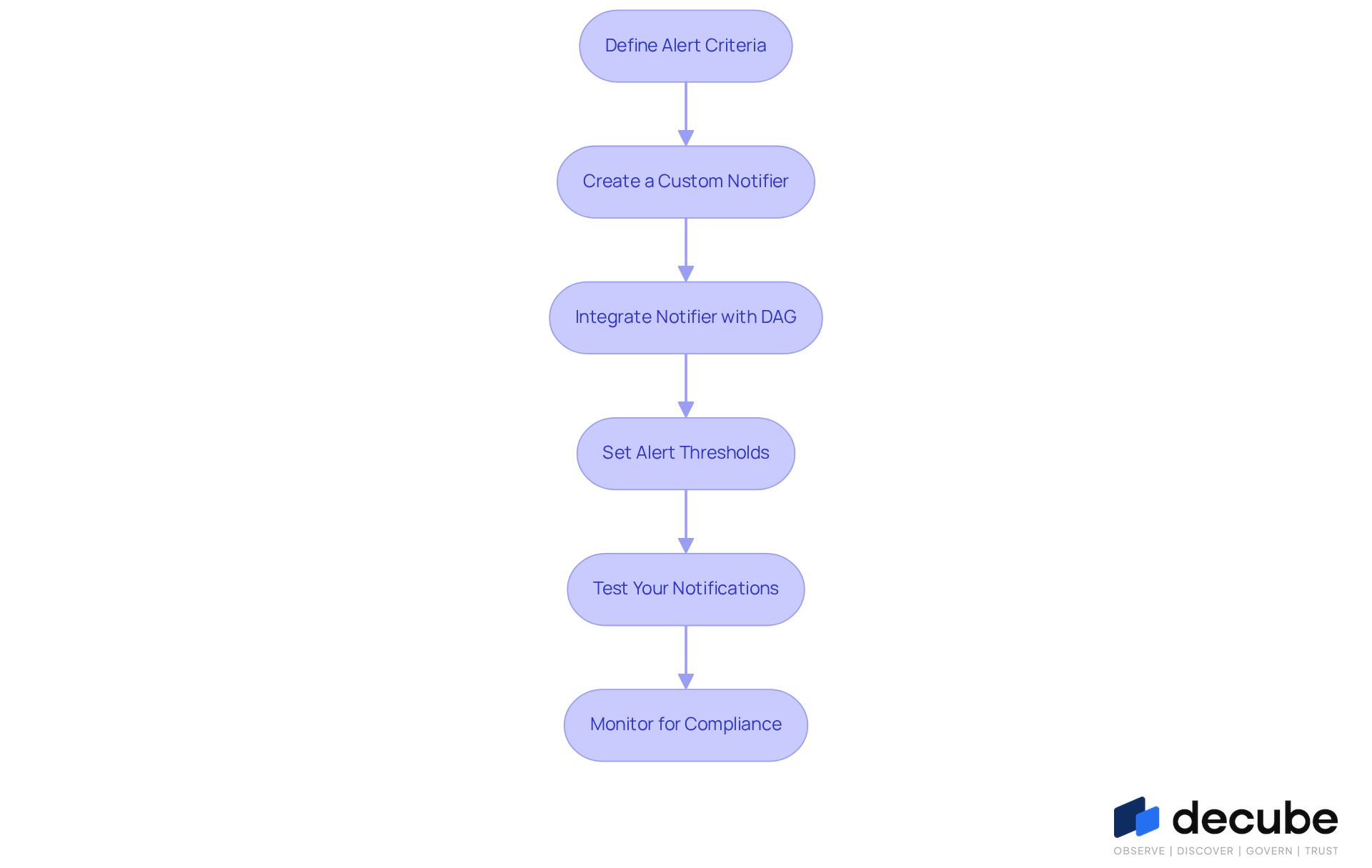

Set Alert Thresholds for Data Contracts in Airflow

To effectively monitor data contracts in Airflow, establishing alert thresholds is crucial:

- Define Alert Criteria: Based on your data contracts, determine the key metrics that require monitoring, such as data freshness, schema compliance, or volume thresholds. Clear notification criteria matter: industry data indicates that there is typically one data quality issue for every ten tables per year. Data contracts promote data integrity and reliability by enforcing predefined quality standards.

- Create a Custom Notifier: Implement a custom notifier in Airflow to manage notifications. You can create one by extending the `BaseNotifier` class (available in Airflow 2.6 and later) and overriding its `notify` method:

      from airflow.notifications.basenotifier import BaseNotifier
      from airflow.utils.email import send_email

      class CustomNotifier(BaseNotifier):
          def notify(self, context):
              # Logic to send alerts
              send_email(
                  to='your_email@example.com',
                  subject='Data Contract Alert',
                  html_content='Alert details here',
              )

- Integrate Notifier with DAG: In your DAG definition, attach the custom notifier to the tasks that validate data against the contracts. Use the `on_failure_callback` parameter to trigger alerts when a task fails:

      from datetime import datetime

      from airflow import DAG
      from airflow.operators.python import PythonOperator

      # validate_data is your own validation callable;
      # CustomNotifier is the notifier class from the previous step.
      dag = DAG('data_contract_dag', start_date=datetime(2023, 1, 1))

      validate_data_task = PythonOperator(
          task_id='validate_data',
          python_callable=validate_data,
          on_failure_callback=CustomNotifier(),
          dag=dag,
      )

- Set Alert Thresholds: Use Airflow's built-in alerting features to decide when alerts go out. The threshold checks themselves live in your validation tasks; task arguments such as the following control whether Airflow emails you when those tasks fail or retry:

      email_on_failure=True,
      email_on_retry=True,

  Managing alert thresholds effectively is crucial to avoid overwhelming users with notifications, as studies show that engagement rates drop significantly when notification channels receive excessive alerts. Regular monitoring of data quality through Decube's solutions, including ML-powered anomaly detection, can help maintain operational efficiency and compliance.

- Test Your Notifications: Execute your DAG and simulate failures to ensure that notifications are triggered correctly. Monitor your email or Slack to confirm that the alerting mechanism works as expected. Research on notification management strategies highlights the importance of regular evaluations.
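The alert criteria from the first step can be sketched as plain Python that a validation task might call. This example assumes two severity levels, `warn` and `critical`, which is a design choice made for illustration rather than anything Airflow prescribes. The `Threshold` class, the `classify` function, and the numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Hypothetical two-level threshold for one monitored metric."""

    metric: str
    warn: float
    critical: float
    higher_is_worse: bool = True  # False for metrics like row count


def classify(threshold: Threshold, observed: float) -> str:
    """Return 'ok', 'warn', or 'critical' for an observed metric value."""
    # Flip the sign for metrics where low values are the problem,
    # so both cases can be handled with the same comparisons.
    sign = 1 if threshold.higher_is_worse else -1
    if sign * observed >= sign * threshold.critical:
        return "critical"
    if sign * observed >= sign * threshold.warn:
        return "warn"
    return "ok"


# Illustrative thresholds: staleness in hours, and rows per load.
freshness_hours = Threshold("freshness_hours", warn=12, critical=24)
row_count = Threshold("row_count", warn=1_000, critical=500, higher_is_worse=False)
```

One way to use this is to raise an exception only on `critical`, so that the task fails and its `on_failure_callback` fires, while `warn` results are merely logged. That keeps low-severity findings out of the notification channel.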

Following these steps will help you create an effective alerting system that enforces your data contracts, reducing quality risks and improving operational efficiency.
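The point above about not overwhelming recipients can be illustrated with a small throttle that suppresses repeat alerts for the same issue within a cooldown window. This is a sketch under assumptions: `AlertThrottle` is a hypothetical helper, and it keeps state in memory, which would not survive across separate Airflow task runs. A real setup would need to persist the last-sent timestamps somewhere shared.

```python
from datetime import datetime, timedelta


class AlertThrottle:
    """Suppress repeat alerts for the same key within a cooldown window."""

    def __init__(self, cooldown: timedelta):
        self.cooldown = cooldown
        self._last_sent = {}  # alert key -> time the last alert was sent

    def should_send(self, key: str, now: datetime) -> bool:
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return False  # still inside the cooldown window, stay quiet
        self._last_sent[key] = now
        return True


# Usage: at most one alert per hour for each (table, check) pair.
throttle = AlertThrottle(cooldown=timedelta(hours=1))
start = datetime(2024, 1, 1, 9, 0)
first = throttle.should_send("analytics.orders:freshness", start)
repeat = throttle.should_send("analytics.orders:freshness", start + timedelta(minutes=10))
later = throttle.should_send("analytics.orders:freshness", start + timedelta(minutes=61))
```

Keying the throttle on the table and check name means a new kind of problem still alerts immediately, while a persistent one does not repeat on every scheduled run.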

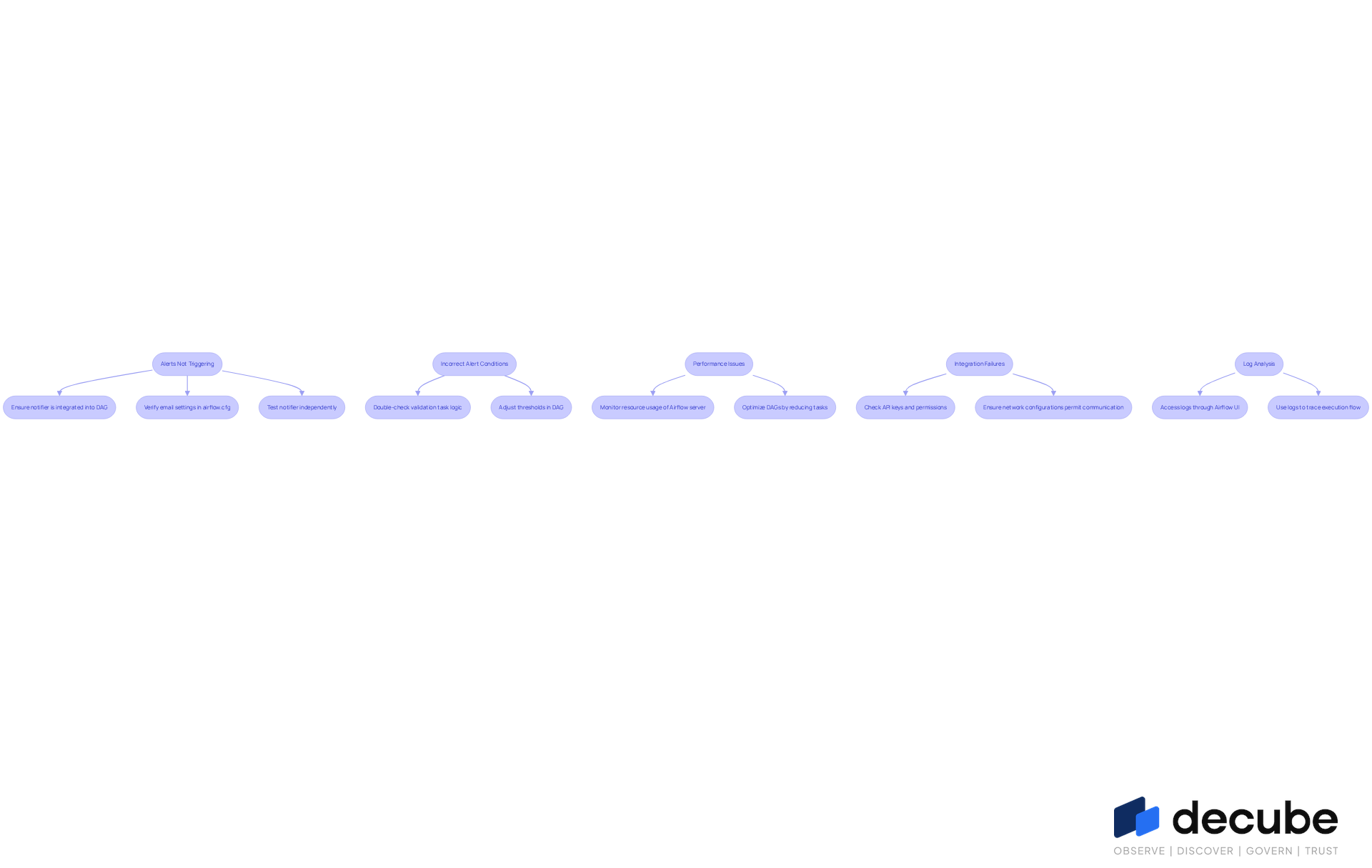

Troubleshoot Common Issues with Data Contract Alerts

Even carefully chosen data contract alert thresholds in Airflow need troubleshooting from time to time. Here are the most common issues and how to address them:

- Alerts Not Triggering: If alerts are not being sent, consider the following steps:
  - Ensure that the notifier is correctly integrated into your DAG.
  - Verify that the email settings in `airflow.cfg` are configured properly, including SMTP server details.
  - Test the notifier independently to confirm it works as expected.

- Incorrect Alert Conditions: If alerts are triggered incorrectly, review your alert criteria:
  - Double-check the logic in your validation tasks to ensure they align with the data contract requirements.
  - Adjust the thresholds in your DAG to better reflect the expected data quality metrics.

- Performance Issues: If your Airflow instance is slow or unresponsive:
  - Monitor the resource usage of your Airflow server. Ensure it has adequate CPU and memory allocated.
  - Optimize your DAGs by reducing the number of tasks or simplifying the logic where possible.

- Integration Failures: If integrating with external systems (like Slack) fails:
  - Check the API keys and permissions for the external service.
  - Ensure that the network configurations permit communication between the system and the external service.

- Log Analysis: Utilize Airflow's logging capabilities to diagnose issues:
  - Access the logs for your tasks through the Airflow UI to identify any errors or warnings that may indicate what went wrong.
  - Use the logs to trace back the execution flow and pinpoint where the failure occurred.

Addressing these issues makes your workflows more reliable and keeps your data contract alert thresholds in Airflow working as intended.
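The advice to test the notifier independently can be followed without running a DAG at all. The sketch below assumes a failure callback built around an injectable `send` function, so that delivery can be replaced with a stub. `make_failure_callback` and the stub are hypothetical, while the `task_instance` and `exception` keys are ones Airflow provides in the context passed to failure callbacks.

```python
from types import SimpleNamespace


def make_failure_callback(send):
    """Build a failure callback that hands the formatted alert to `send`."""

    def callback(context):
        ti = context.get("task_instance")
        dag_id = getattr(ti, "dag_id", "unknown")
        task_id = getattr(ti, "task_id", "unknown")
        subject = f"Data Contract Alert: {dag_id}.{task_id}"
        body = f"Exception: {context.get('exception')}"
        send(subject, body)

    return callback


# Stand-in for a real email or Slack sender: just record what would be sent.
sent = []
callback = make_failure_callback(lambda subject, body: sent.append((subject, body)))

# A fake context carrying only the keys the callback reads.
fake_context = {
    "task_instance": SimpleNamespace(dag_id="data_contract_dag", task_id="validate_data"),
    "exception": ValueError("row count 400 below minimum 1000"),
}
callback(fake_context)
```

If the stubbed callback produces the expected subject and body, the formatting logic is fine, and any remaining problem is more likely to lie in the delivery settings, such as the SMTP configuration or the Slack credentials.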

Conclusion

Establishing data contract alert thresholds in Airflow is crucial for maintaining data integrity and operational efficiency. By implementing clear agreements on data quality, structure, and usage, organizations can significantly reduce the risk of errors. Because errors in data management are costly, this proactive approach both safeguards data quality and limits the financial impact of poor data.

The article outlined a comprehensive step-by-step guide to prepare your Airflow environment for data contracts, including the installation of Apache Airflow, configuration of key settings, and the creation of DAGs that manage information agreements. Key strategies for setting alert thresholds were also discussed, emphasizing the importance of:

- Defining alert criteria

- Integrating custom notifiers

- Troubleshooting common issues

These insights underscore the need for regular monitoring and adjustment so that alert systems keep working effectively and support data governance efforts. Reliable alerts in turn build trust in the data, which fosters collaboration and improves overall data governance.

In conclusion, the successful implementation of data contract alert thresholds is essential for organizations aiming to enhance their data management practices. Prioritizing data quality and establishing robust alert systems enables teams to make informed decisions confidently, leading to improved outcomes. By adopting these practices, organizations can not only mitigate risks but also fully leverage their data assets for strategic advantage.

Frequently Asked Questions

What are data contracts and why are they important?

Data contracts are formal agreements that define expectations for quality, structure, and usage of information. They are important for ensuring effective information flow and minimizing unexpected changes or errors within information pipelines.

What issues can arise without clear data contracts?

Without clear data contracts, teams may struggle with trust and alignment, leading to confusion and inefficiencies in their processes.

How do data contracts help in managing information quality?

Data contracts establish specific metrics and thresholds for information quality, allowing organizations to proactively manage data integrity and significantly lower the risk of issues related to information.

What statistics highlight the importance of information quality?

According to Precisely's 2025 planning insights, 64% of organizations identify data quality as their primary data integrity challenge, and Gartner estimates that poor data quality costs organizations an average of $12.9 million each year.

What benefits have organizations experienced by adopting data contracts?

Organizations that have adopted data contracts report significant improvements in data quality and operational efficiency, including better alignment on definitions and formats, which helps address the inconsistent data that affects 45% of enterprises.

How do data contracts impact operational efficiency?

A solid understanding of data contracts can improve operational efficiency by reducing the risks associated with poor data quality and by ensuring compliance with the agreed standards.

List of Sources

- Understand Data Contracts and Their Importance

- Data Quality: Why It Matters and How to Achieve It (https://gartner.com/en/data-analytics/topics/data-quality)

- Data Quality Challenges: 2025 Planning Insights (https://precisely.com/data-integrity/2025-planning-insights-data-quality-remains-the-top-data-integrity-challenges)

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Prepare Your Airflow Environment for Data Contracts

- Case Study: How Make Uses Data Contracts to Test Data at the Source (https://soda.io/blog/make-data-contracts-shift-left)

- Set Alert Thresholds for Data Contracts in Airflow

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- 7 Data Quality Metrics to Monitor Continuously | Revefi (https://revefi.com/blog/data-quality-metrics-monitoring)

- Troubleshoot Common Issues with Data Contract Alerts

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- Case Study: How Make Uses Data Contracts to Test Data at the Source (https://soda.io/blog/make-data-contracts-shift-left)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Data Quality Issues and Challenges | IBM (https://ibm.com/think/insights/data-quality-issues)
