
How to Build a Data Dictionary: Essential Steps for Data Engineers

Learn the essential steps data engineers need to build an effective data dictionary.

Introduction

Many organizations underestimate the role a well-structured data dictionary plays in data management. By clearly defining data elements, relationships, and business rules, a data dictionary supports data integrity and compliance, improves data quality, and streamlines collaboration across teams.

Keeping a data dictionary updated and relevant is a common struggle, which raises the question: how can data engineers systematically build and maintain a robust data dictionary that evolves with organizational needs?

This article delves into essential steps for creating a comprehensive data dictionary, exploring its key components and best practices for ongoing maintenance. By implementing these strategies, organizations can significantly enhance their data governance and decision-making capabilities.

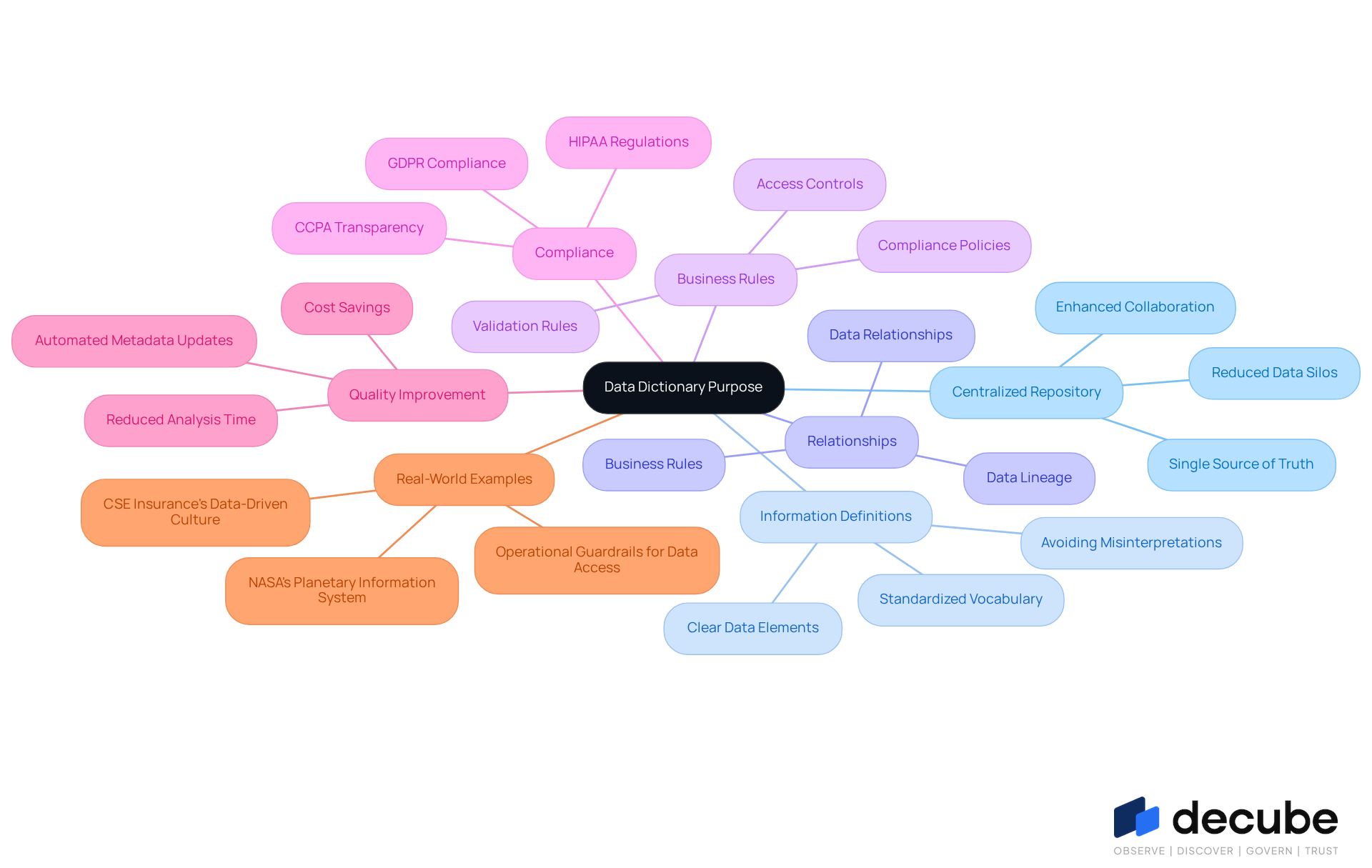

Understand the Purpose of a Data Dictionary

A data dictionary is a centralized repository that defines and describes the elements of a dataset so that all stakeholders share a common understanding. It records data definitions, relationships, and business rules, which are essential for maintaining data integrity and supporting data governance. Understanding this purpose helps engineers see the dictionary's role in improving data quality and supporting compliance with regulations such as GDPR and HIPAA.

With Decube's automated crawling capability, metadata does not need to be updated manually: once sources are connected, the metadata is refreshed automatically, which allows the dictionary to integrate with an existing data stack. This capability simplifies documentation and strengthens data observability and governance through secure access control.

A data dictionary should be developed collaboratively and approved by team leads before it is finalized; that sign-off is essential to its effectiveness. A centralized dictionary also improves data quality by maintaining clear definitions and enforcing validation rules, which reduces inconsistencies and errors.

For example, organizations with well-documented data experience a 20% decrease in analysis time, which points to the efficiency a centralized data dictionary can deliver. Poor data quality, by contrast, costs organizations an average of $12.9 million annually, which underscores the value of investing in one.

Real-world examples such as NASA's Planetary Data System illustrate how a well-organized data dictionary supports complex environments by supplying standardized metadata. With this purpose in mind, data engineers can work through the stages of building an effective dictionary, leading to better decision-making and operational success.

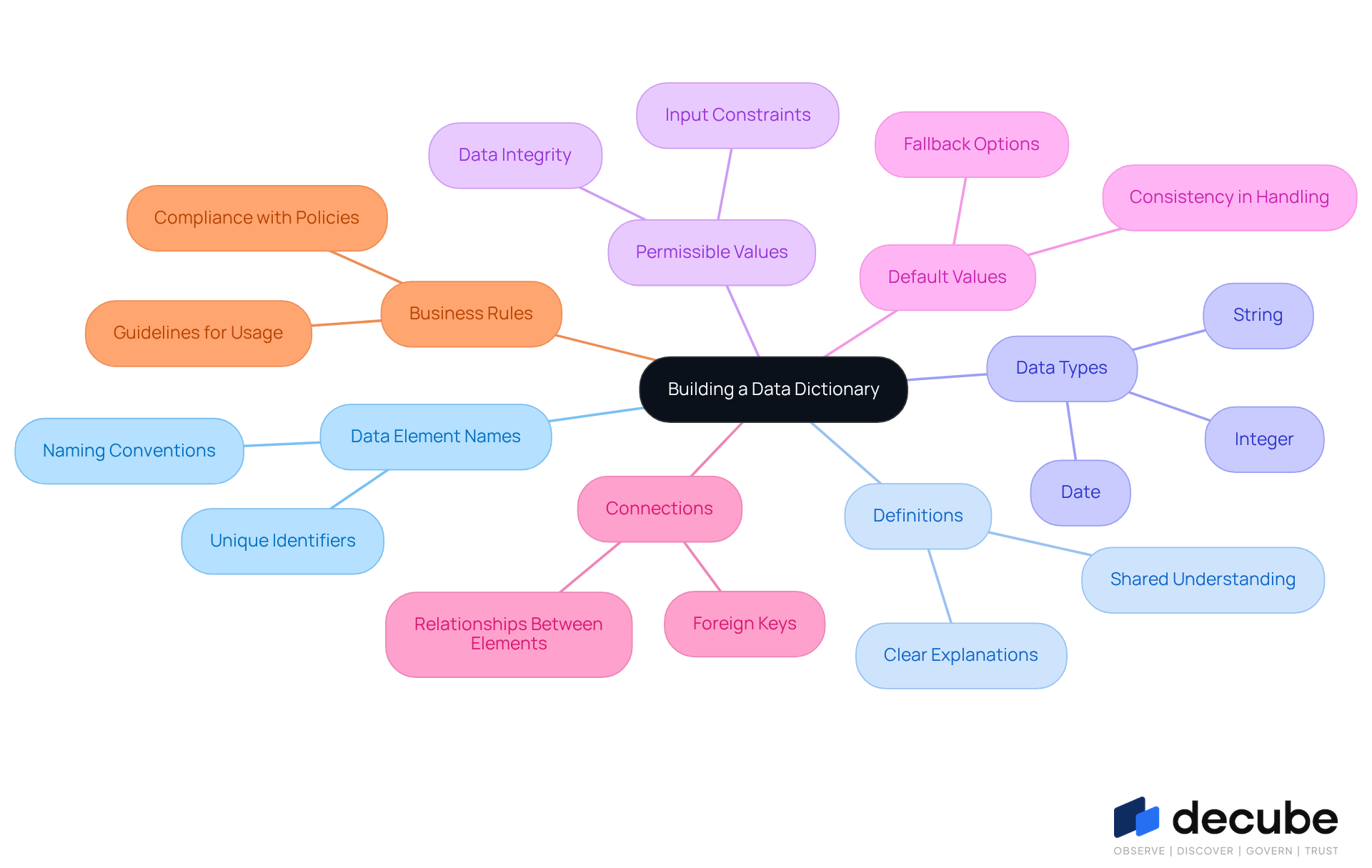

Identify Key Components to Include

A reliable data dictionary depends on careful documentation of the elements that make it functional and trustworthy. These components include:

- Data Element Names: The precise names as they appear in the database, serving as unique identifiers for each data point.

- Definitions: Clear and concise explanations of what each data element represents, which are crucial for shared understanding among stakeholders.

- Data Types: The kind of data each element holds (e.g., integer, string, date), which aids validation and processing.

- Permissible Values: Constraints on the allowable inputs for each data element, ensuring data integrity and adherence to business rules.

- Default Values: The values used when none is supplied, maintaining consistency in data handling.

- Relationships: Documentation of how data elements relate to one another, including foreign keys and dependencies, which is essential for understanding data flow and lineage.

- Business Rules: Guidelines that govern how data can be used or altered, maintaining compliance with organizational policies and regulatory requirements.

By integrating Decube's automated crawling capabilities, organizations can keep the metadata in their data dictionaries managed and updated automatically. For instance, element names and definitions can be kept current without manual intervention. Decube's quality monitoring, which features ML-driven tests and smart alerts, helps preserve the integrity of the dictionary by checking that permissible values and business rules are consistently upheld.

By systematically identifying and documenting these components, data engineers can create a reference that serves as a dependable guide for all data-related activities. This systematic approach improves data accuracy and streamlines collaboration and governance across teams.
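The components listed above can be captured in a small record type. The following is a minimal Python sketch, not a Decube feature or a standard schema: the `DictionaryEntry` class, its field names, and the `order_status` example are all hypothetical, chosen only to show how permissible values and a default can drive a simple validation check.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class DictionaryEntry:
    """One row of a data dictionary (hypothetical layout for illustration)."""
    name: str                                  # element name exactly as it appears in the database
    definition: str                            # plain-language meaning
    data_type: type                            # Python type standing in for the column type
    permissible_values: Optional[set] = None   # None means no enumerated constraint
    default: Any = None                        # value used when none is supplied
    relationships: list = field(default_factory=list)    # e.g. "orders.customer_id -> customers.id"
    business_rules: list = field(default_factory=list)   # free-text rules

    def validate(self, value: Any) -> list:
        """Return a list of problems; an empty list means the value conforms."""
        if value is None:
            value = self.default               # fall back to the documented default
        if not isinstance(value, self.data_type):
            return [f"{self.name}: expected {self.data_type.__name__}, "
                    f"got {type(value).__name__}"]
        if self.permissible_values is not None and value not in self.permissible_values:
            return [f"{self.name}: {value!r} is not a permissible value"]
        return []


order_status = DictionaryEntry(
    name="order_status",
    definition="Current fulfilment state of a customer order.",
    data_type=str,
    permissible_values={"pending", "shipped", "delivered", "cancelled"},
    default="pending",
    relationships=["orders.customer_id -> customers.id"],
    business_rules=["A delivered order cannot return to pending."],
)

print(order_status.validate("shipped"))  # conforms, so the list is empty
print(order_status.validate("lost"))     # reports a non-permissible value
print(order_status.validate(None))       # uses the default, which conforms
```

Keeping the constraints next to the definition means the same record can serve both as documentation and as the input to an automated check.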

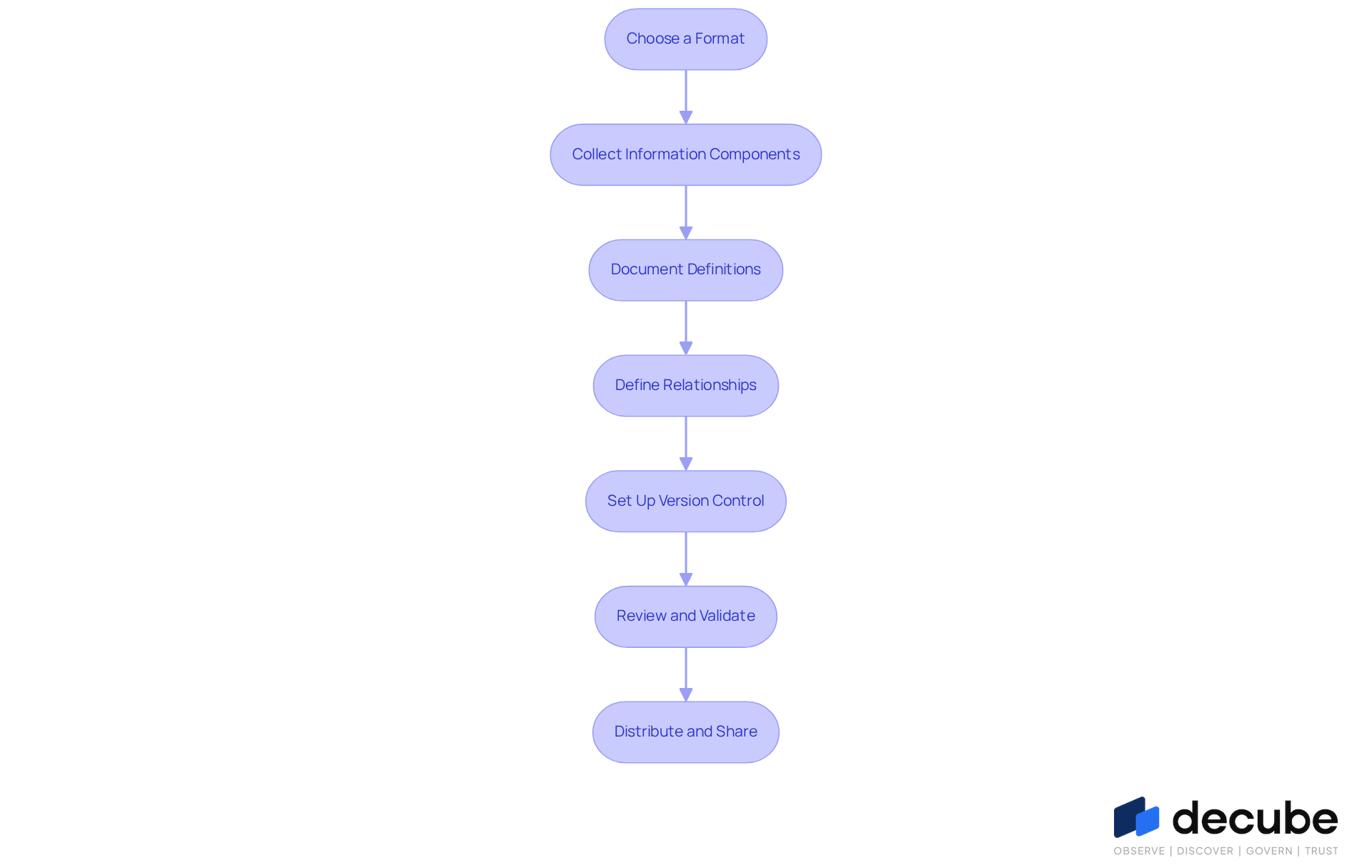

Create and Organize Your Data Dictionary

To effectively create and organize a data dictionary, it is crucial to follow a structured approach that ensures accuracy and completeness:

- Choose a Format: Select a spreadsheet, a database, or specialized data management software. Each format has its advantages; for instance, spreadsheets are user-friendly, while dedicated software can automate updates and improve collaboration. Centralizing and activating metadata can help organizations deliver new data assets 70% faster. Decube’s platform integrates with existing data systems, simplifying the management and updating of your dictionary.

- Collect Data Elements: Assemble a complete list of the data elements to include in the dictionary. Review existing databases and consult stakeholders to ensure nothing is missed. Decube’s automated column-level lineage feature can help identify and catalog these elements efficiently.

- Document Definitions: Write clear and concise definitions for each data element. Aim for language that is accessible to both technical and non-technical users, promoting a shared understanding across teams. As Jatin S. observes, the platform's automated crawling feature helps keep element names uniform across data assets, so the entries in your dictionary stay consistent.

- Define Relationships: Map out the connections between data elements, including primary and foreign key relationships and any dependencies. This clarity helps readers understand data flow and integrity. Decube’s data contracts facilitate collaboration among stakeholders, improving the shared understanding of these relationships.

- Set Up Version Control: Implement a version control system to track changes to the data dictionary. This practice is vital for preserving accuracy and consistency over time, particularly as the underlying data changes. Decube’s metadata management capabilities can assist by ensuring that updates are tracked.

- Review and Validate: Share the draft dictionary with stakeholders for feedback. This collaborative step ensures that all necessary information is captured and that definitions are accurate, reducing the risk of miscommunication. Notably, 49% of executives cite data inaccuracies and bias as an obstacle to technology adoption, which underscores the need for a well-maintained data dictionary. Decube’s smart alerts can notify teams of discrepancies or needed updates.

- Distribute and Share: Once completed, make the data dictionary accessible to all relevant teams. A centralized platform like Decube can make access and updates easier, increasing the dictionary's usefulness.

A data dictionary built this way strengthens governance and supports informed decision-making across the organization.
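As one way to approach the collection and relationship-mapping steps above, the sketch below drafts dictionary rows from a live schema and exports them in a spreadsheet-friendly format. It assumes a SQLite database and uses Python's standard `sqlite3` module with the `PRAGMA table_info` and `PRAGMA foreign_key_list` statements. The `collect_entries` and `to_csv` helpers, the column layout, and the sample tables are hypothetical; other databases expose similar metadata through their own catalogs.

```python
import csv
import io
import sqlite3

FIELDNAMES = ["element", "data_type", "nullable", "default",
              "primary_key", "references", "definition"]


def collect_entries(conn: sqlite3.Connection) -> list[dict]:
    """Build draft dictionary rows from the schema of a SQLite database."""
    rows = []
    tables = [r[0] for r in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    for table in tables:
        # foreign_key_list rows are (id, seq, table, from, to, ...):
        # map each referencing column to the "table.column" it points at.
        fks = {fk[3]: f"{fk[2]}.{fk[4]}"
               for fk in conn.execute(f'PRAGMA foreign_key_list("{table}")')}
        # table_info rows are (cid, name, type, notnull, dflt_value, pk).
        for _cid, name, col_type, notnull, default, pk in conn.execute(
                f'PRAGMA table_info("{table}")'):
            rows.append({
                "element": f"{table}.{name}",
                "data_type": col_type,
                "nullable": not notnull,
                "default": default,
                "primary_key": bool(pk),
                "references": fks.get(name, ""),
                "definition": "",  # left blank for a person to write
            })
    return rows


def to_csv(rows: list[dict]) -> str:
    """Serialize the rows as CSV text that a spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Sample schema, invented for this example.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(id),
        status TEXT NOT NULL DEFAULT 'pending'
    );
""")
entries = collect_entries(conn)
print(to_csv(entries))
```

The schema can supply names, types, defaults, and key relationships, but not meaning: the `definition` column is deliberately left empty so that stakeholders fill it in during review.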

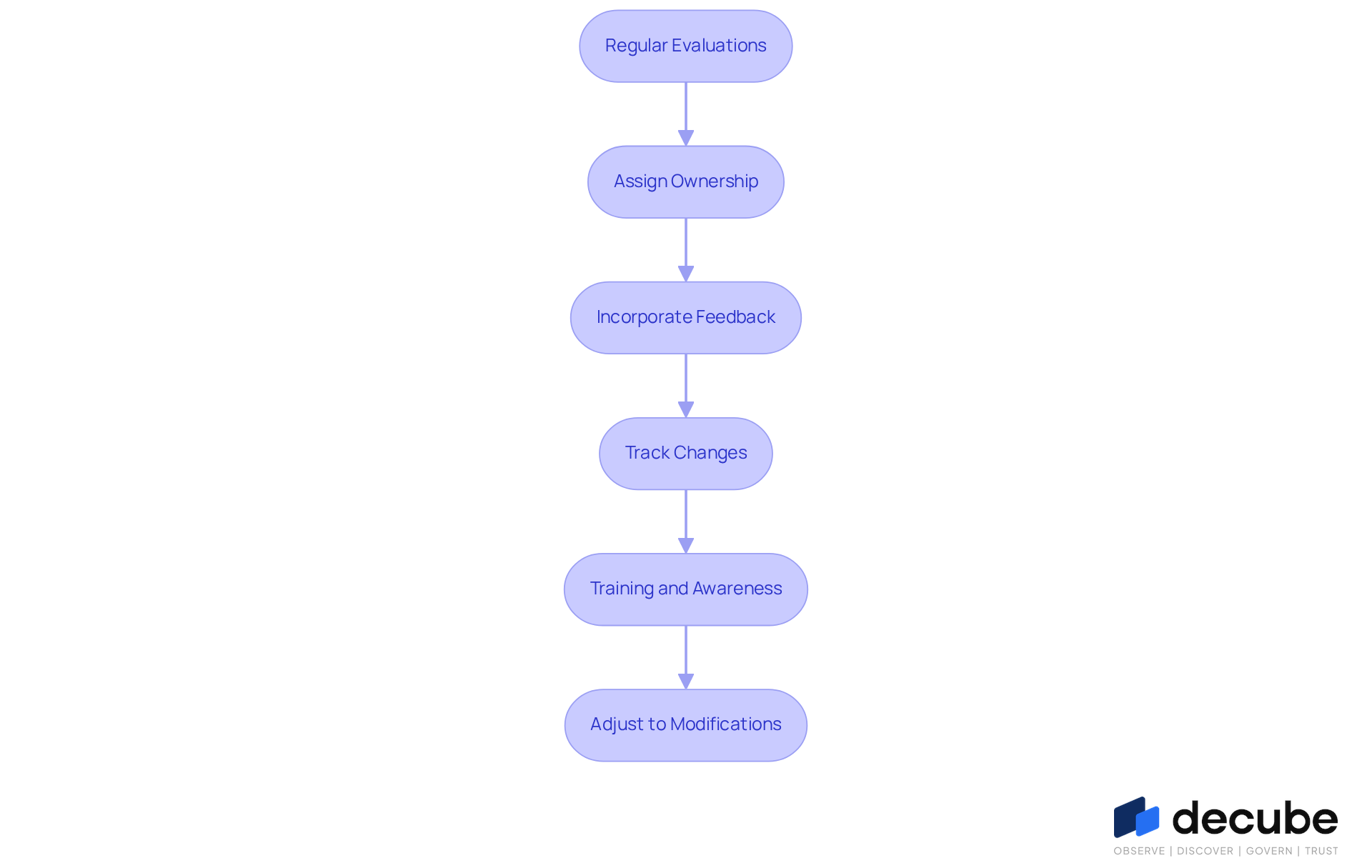

Maintain and Update Your Data Dictionary

A data dictionary stays effective and relevant only with a robust maintenance routine. Here are best practices for maintaining and updating yours:

- Regular Evaluations: Schedule regular reviews of the data dictionary, ideally quarterly or twice a year, to ensure its entries reflect the current data environment. Without regular audits, organizations risk relying on outdated information that can lead to poor decision-making.

- Assign Ownership: Designate a specific team or individual responsible for maintaining the data dictionary. This role entails tracking changes to data elements and ensuring prompt updates. Clear ownership keeps definitions aligned with real-world usage, making the dictionary a trusted source.

- Incorporate Feedback: Actively solicit user feedback on the data dictionary to identify areas for improvement and confirm that it meets the needs of all stakeholders. Active collaboration promotes a culture of documentation and keeps the dictionary relevant.

- Track Changes: Keep a record of modifications made to the data dictionary. This log shows how definitions have evolved and is invaluable for audits and compliance checks. Effective versioning captures what changed, when, and why, reinforcing transparency and accountability.

- Training and Awareness: Train new team members to use the data dictionary effectively. Keeping everyone informed about its importance and usage increases its value. Organizations that emphasize training in data governance and quality see better data management outcomes.

- Adapt to Change: Be ready to modify the data dictionary as new data sources are added or as business requirements change. Flexibility is key to maintaining its relevance.
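A regular evaluation like the one described above can be partly automated. The sketch below is one possible approach under stated assumptions: the dictionary is represented as a mapping from element name to its last review date, the live column names have already been collected from the database, and a 90-day interval stands in for a quarterly cadence. The `audit` function and all sample names are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # assumed stand-in for a quarterly review


def audit(dictionary: dict[str, date], live_columns: set[str],
          today: date) -> dict[str, list[str]]:
    """Compare documented elements with the live schema and flag old reviews."""
    documented = set(dictionary)
    return {
        # Columns that exist in the database but have no dictionary entry.
        "undocumented": sorted(live_columns - documented),
        # Entries that describe columns no longer present in the database.
        "stale": sorted(documented - live_columns),
        # Entries whose last review is older than the review interval.
        "overdue_review": sorted(
            name for name, reviewed in dictionary.items()
            if today - reviewed > REVIEW_INTERVAL),
    }


# Sample data, invented for this example.
dictionary = {
    "orders.id": date(2024, 1, 10),
    "orders.status": date(2024, 5, 1),
    "orders.legacy_code": date(2024, 5, 1),
}
live_columns = {"orders.id", "orders.status", "orders.customer_id"}

report = audit(dictionary, live_columns, today=date(2024, 6, 1))
for finding, names in report.items():
    print(finding, names)
```

A report like this gives the dictionary's owner a concrete worklist for each review cycle instead of a full manual read-through.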

A data dictionary maintained this way remains a reliable basis for informed decision-making and organizational success.
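The Track Changes practice listed above calls for recording what changed, when, and why. A minimal sketch of that idea, assuming each dictionary version is a plain mapping from element name to its attributes, might look as follows. The `diff_versions` and `log_changes` helpers and the two sample versions are hypothetical; in practice a version control system or a metadata platform would usually provide this history.

```python
from datetime import date


def diff_versions(old: dict[str, dict], new: dict[str, dict]) -> list[str]:
    """Describe the differences between two versions of a data dictionary."""
    changes = []
    for name in sorted(old.keys() | new.keys()):
        if name not in old:
            changes.append(f"added {name}")
        elif name not in new:
            changes.append(f"removed {name}")
        else:
            # Compare attribute by attribute so the log says what changed.
            for attr in sorted(old[name].keys() | new[name].keys()):
                before, after = old[name].get(attr), new[name].get(attr)
                if before != after:
                    changes.append(
                        f"changed {name}.{attr}: {before!r} -> {after!r}")
    return changes


def log_changes(old: dict[str, dict], new: dict[str, dict],
                when: date, why: str) -> list[dict]:
    """Attach the date and the reason to every detected change."""
    return [{"when": when.isoformat(), "why": why, "what": change}
            for change in diff_versions(old, new)]


# Two sample versions, invented for this example.
v1 = {
    "orders.status": {"data_type": "TEXT", "definition": "Order state."},
    "orders.legacy_code": {"data_type": "TEXT", "definition": "Old ERP code."},
}
v2 = {
    "orders.status": {"data_type": "TEXT",
                      "definition": "Current fulfilment state of an order."},
    "orders.customer_id": {"data_type": "INTEGER",
                           "definition": "Customer who placed the order."},
}

change_log = log_changes(v1, v2, when=date(2024, 6, 1),
                         why="Quarterly review after ERP migration")
for record in change_log:
    print(record)
```

Storing the reason alongside each change is what makes the log useful during audits, since a diff alone shows that a definition moved but not what prompted it.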

Conclusion

A well-structured data dictionary is crucial for data engineers seeking to improve data governance and support effective decision-making. By centralizing definitions, relationships, and business rules, a data dictionary serves as a foundational tool that builds shared understanding among stakeholders and improves data quality.

This article outlined the key components of a reliable data dictionary, including:

- Data element names

- Definitions

- Data types

- Permissible values

- Business rules

A structured approach to documenting these components is vital for ensuring data accuracy and integrity. Additionally, the role of automated tools like Decube in streamlining the documentation process and maintaining up-to-date metadata was emphasized, showcasing how technology can enhance collaboration and efficiency.

Without a data dictionary, organizations risk confusion and miscommunication among stakeholders, which leads to poor decision-making. Maintaining and regularly updating the dictionary matters just as much as building it. Organizations that prioritize this practice mitigate the risks of outdated information and foster a culture of accountability and transparency. The result is better data quality and teams that can make informed decisions quickly in an increasingly data-driven world.

Frequently Asked Questions

What is the purpose of a data dictionary?

A data dictionary serves as a centralized repository that defines and describes the elements of a dataset, ensuring all stakeholders share a common understanding of data definitions, relationships, and business rules.

How does a data dictionary enhance data quality?

It improves quality by maintaining clear definitions and enforcing validation rules, which reduces inconsistencies and errors and supports data governance and compliance with regulations such as GDPR and HIPAA.

What role does Decube's automated crawling capability play in managing metadata?

Decube's automated crawling capability eliminates the need to update metadata manually; once sources are connected, the metadata is refreshed automatically, simplifying documentation and strengthening data observability and governance.

Why is it important to develop a data dictionary collaboratively?

Developing the data dictionary collaboratively, with approval from team leads, is essential to its effectiveness because it ensures that all stakeholders share the same understanding of the data.

What are the benefits of having a well-documented data dictionary?

Organizations with well-documented data experience a 20% decrease in analysis time and can avoid the financial impact of poor data quality, which costs an average of $12.9 million annually.

Can you provide an example of a successful implementation of a data dictionary?

NASA's Planetary Data System illustrates how a well-organized data dictionary supports complex environments by providing standardized metadata, helping data engineers make effective decisions and operate successfully.

