
What Is Data Cataloguing? Key Features and Importance Explained

Discover the essentials of data cataloguing and its significance in modern data management.

Introduction

Organizations face challenges in adapting to the fast-paced changes in data management practices. Data cataloguing emerges as a pivotal strategy, offering organizations a structured way to compile and access metadata that illuminates their information assets. By effectively utilizing data cataloguing, organizations can enhance their decision-making processes and ensure compliance.

We will explore the core concepts, key features, and significant benefits of data cataloguing, illustrating its impact on decision-making and compliance across various industries.

Define Data Cataloguing: Understanding the Core Concept

In an era of information overload, effective data cataloguing is crucial for organizational success. Data cataloguing refers to the systematic creation and maintenance of a centralized repository that aggregates metadata describing an organization's information assets. Think of this repository as an inventory that allows users to discover and manage their information effectively.

A comprehensive catalog includes details about:

- Sources

- Types

- Lineage

- Quality metrics

These elements are essential for informed decision-making. Organizing metadata in this way not only enhances accessibility but also strengthens governance, making data cataloguing a vital element of contemporary data management strategies. The catalog acts as a connection between information producers and users, ensuring clarity about the available information and its contextual significance within the organization.
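To make the inventory idea concrete, here is a minimal sketch of a catalog entry that records a source, a type, lineage, and quality metrics. The class and field names (`CatalogEntry`, `Catalog`, `upstream`, `quality`) are hypothetical and chosen for illustration; they do not describe any particular product's data model.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One asset in a hypothetical catalog (field names are illustrative)."""
    name: str
    source: str       # where the asset lives, e.g. a warehouse or BI tool
    asset_type: str   # e.g. "table" or "dashboard"
    upstream: list[str] = field(default_factory=list)        # lineage: direct parents
    quality: dict[str, float] = field(default_factory=dict)  # metric name -> score


class Catalog:
    """A minimal in-memory inventory keyed by asset name."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def find(self, keyword: str) -> list[str]:
        """Return sorted names of assets whose name or source contains keyword."""
        kw = keyword.lower()
        return sorted(
            e.name for e in self._entries.values()
            if kw in e.name.lower() or kw in e.source.lower()
        )


catalog = Catalog()
catalog.register(CatalogEntry("orders", "warehouse", "table",
                              quality={"completeness": 0.98}))
catalog.register(CatalogEntry("revenue_report", "bi_tool", "dashboard",
                              upstream=["orders"]))
print(catalog.find("revenue"))
```

A real catalog would persist these entries and populate them automatically, but the same four kinds of metadata would likely appear in some form.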

Organizations using efficient data catalogs report a 65% decrease in information discovery time, greatly enhancing self-service analytics capabilities. This improvement not only streamlines processes but also empowers users to make data-driven decisions swiftly. As organizations prepare for the future, understanding data cataloguing will be essential for maintaining a competitive edge in data management.

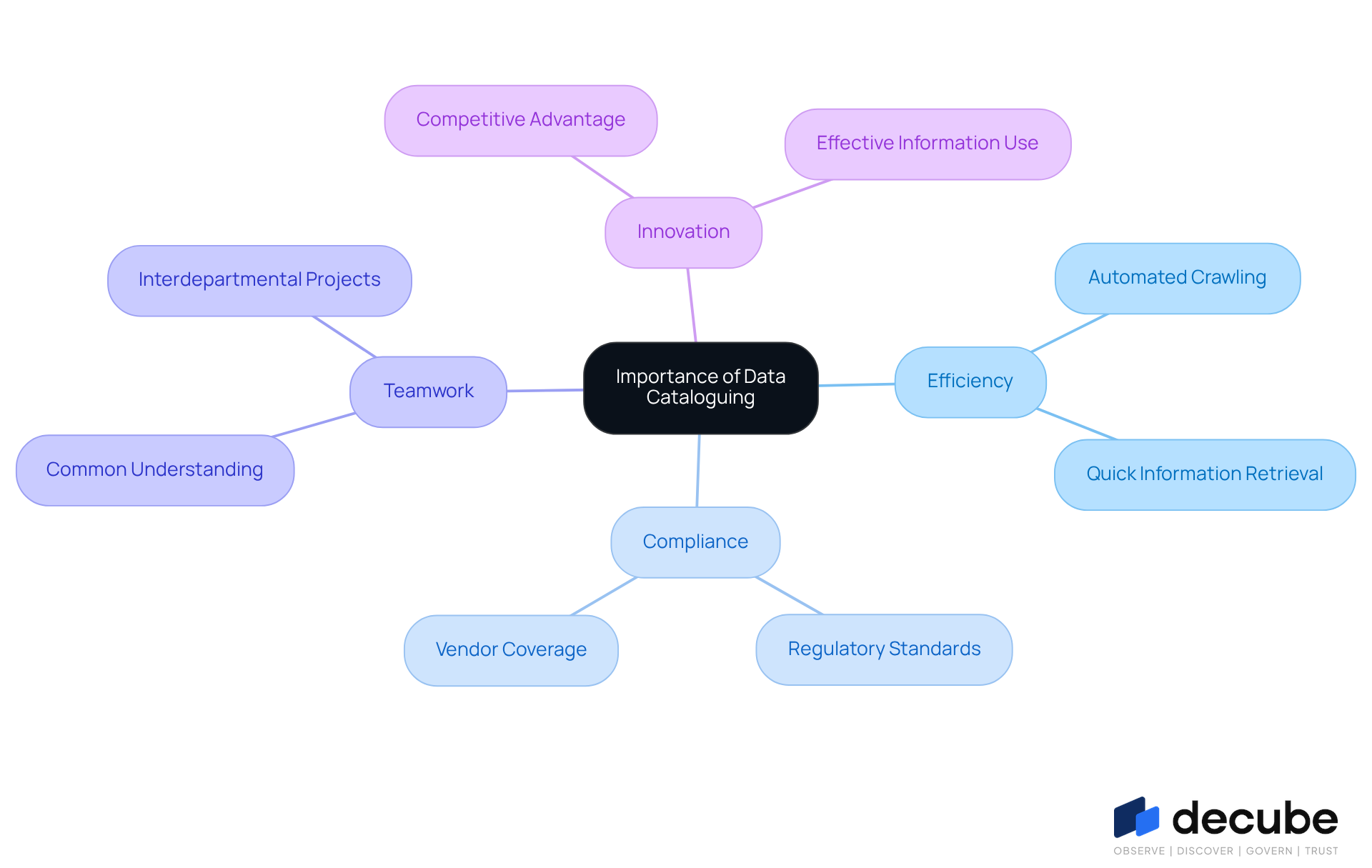

Explore the Importance of Data Cataloguing in Modern Data Management

In an era where information is paramount, understanding data cataloguing has become essential for organizational success. Decube's automated crawling feature enhances data observability and governance by ensuring that metadata is managed and auto-refreshed once sources are connected. This efficiency enables users to find the information they need swiftly, which is crucial for organizations that depend on timely insights for decision-making.
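The general idea behind metadata crawling can be sketched with Python's built-in `sqlite3` module. This is an illustration under simple assumptions, not Decube's implementation: the function name `crawl_metadata` and the in-memory `customers` table are hypothetical, and a production crawler would handle many source types, schedules, and permissions.

```python
import sqlite3


def crawl_metadata(conn: sqlite3.Connection) -> dict[str, list[dict[str, str]]]:
    """Collect table and column metadata from a connected SQLite source."""
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    snapshot = {}
    for (table,) in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        snapshot[table] = [{"name": c[1], "type": c[2]} for c in columns]
    return snapshot


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
first = crawl_metadata(conn)

# A schema change at the source shows up on the next crawl, with no manual edit.
conn.execute("ALTER TABLE customers ADD COLUMN country TEXT")
second = crawl_metadata(conn)
print([col["name"] for col in second["customers"]])
```

Re-running the crawl after the schema change picks up the new column, which is the behaviour an auto-refreshing catalog relies on.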

Furthermore, data cataloguing plays a crucial role in facilitating adherence to regulatory standards like GDPR and HIPAA by offering clear visibility into lineage and usage. For example, organizations that adopt strong data cataloguing practices have reported enhanced compliance rates, with many reaching up to 90-95% vendor coverage in less than ten days, as supported by recent research findings.

Additionally, the platform encourages teamwork among groups by establishing a common understanding of information assets, which is vital for interdepartmental projects. Insights from users, such as Piyush P., highlight how effective the automated column-level lineage is, enabling business users to determine if reports or dashboards have issues, thereby improving quality and oversight.
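One way to picture how column-level lineage helps a business user judge whether a report is affected by an upstream problem is a downstream walk over a lineage graph. The sketch below uses a hypothetical, hand-written graph (`LINEAGE`) and a plain breadth-first search; real platforms derive the graph automatically, and their internals are not described here.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the assets listed in its value.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.total", "mart.refunds.total"],
    "mart.revenue.total": ["dashboard.revenue_report"],
    "mart.refunds.total": [],
    "dashboard.revenue_report": [],
}


def downstream(lineage: dict[str, list[str]], start: str) -> list[str]:
    """Breadth-first walk returning every asset affected by `start`."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(lineage.get(start, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        order.append(node)
        queue.extend(lineage.get(node, []))
    return order


affected = downstream(LINEAGE, "raw.orders.amount")
print(affected)
```

If `raw.orders.amount` has a quality issue, the returned list shows that `dashboard.revenue_report` sits downstream of it and may need review.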

Ultimately, this platform enables organizations to utilize their information more effectively, fostering innovation and competitive advantage while ensuring compliance with governance policies.

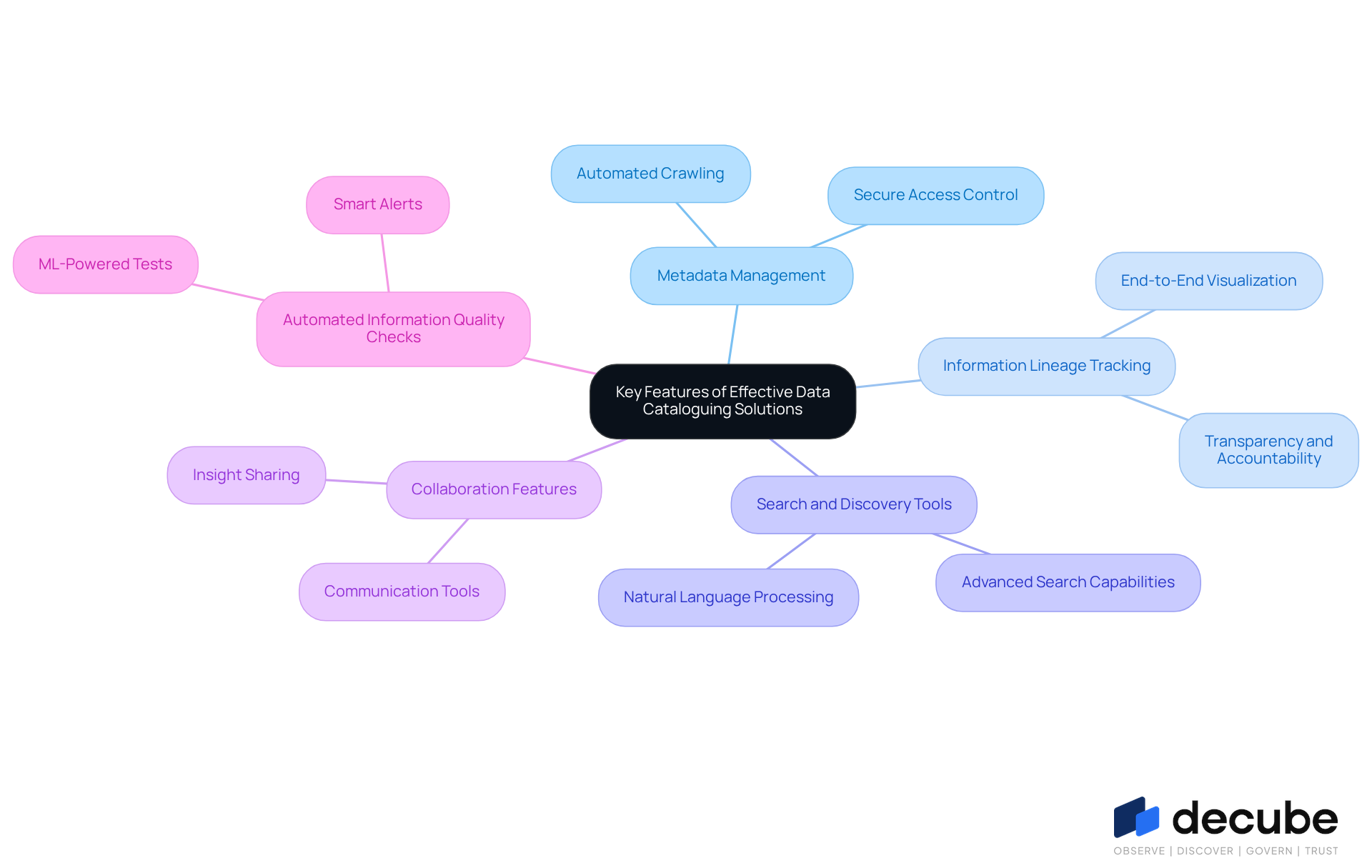

Identify Key Features of Effective Data Cataloguing Solutions

Organizations often face challenges in managing vast amounts of data effectively. Effective data cataloguing solutions generally share several essential features that improve their functionality. These features include:

- Metadata Management: Decube's automated crawling feature allows for effortless metadata management, automatically refreshing data from connected sources without manual updates. This capability offers a comprehensive perspective of resources while ensuring secure access control through designated approval flows.

- Information Lineage Tracking: Decube excels in end-to-end information lineage visualization, enabling users to trace the origin and flow of information seamlessly. This transparency is essential for upholding accountability and comprehending dependencies.

- Search and Discovery Tools: Advanced search capabilities enable users to find pertinent information quickly, often using natural language processing to simplify queries, enhancing the overall user experience.

- Collaboration Features: Tools that facilitate communication and sharing of insights among users are essential for enhancing teamwork and data-driven decision-making. The platform facilitates collaboration by offering clear insight into information quality and lineage, enhancing interactions among teams.

- Automated Information Quality Checks: Decube integrates ML-powered tests and smart alerts to maintain the integrity of the information, ensuring that users can trust the details they are accessing. These automated checks assist in identifying problems early, leading to enhanced oversight and observability. Additionally, preset field monitors allow users to choose which fields to monitor, enhancing the effectiveness of these checks.

Together, these features create a robust data catalog that meets the needs of modern organizations, particularly in terms of observability and governance.
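The automated quality checks and field monitors described above can be illustrated with a deliberately simple rule. The sketch below swaps in a fixed null-rate threshold in place of ML-powered tests; the function names, the `max_null_rate` parameter, and the sample rows are all hypothetical.

```python
def null_rate(rows: list[dict], field_name: str) -> float:
    """Fraction of rows where the field is missing or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(field_name) is None)
    return missing / len(rows)


def check_fields(rows: list[dict], monitored: list[str],
                 max_null_rate: float = 0.1) -> list[str]:
    """Return alert messages for monitored fields over the threshold."""
    alerts = []
    for name in monitored:
        rate = null_rate(rows, name)
        if rate > max_null_rate:
            alerts.append(f"{name}: null rate {rate:.0%} exceeds {max_null_rate:.0%}")
    return alerts


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "c@example.com"},
    {"id": 4, "email": None},
]
alerts = check_fields(rows, monitored=["id", "email"])
print(alerts)
```

Only the fields named in `monitored` are checked, which mirrors the idea of letting users choose which fields to watch.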

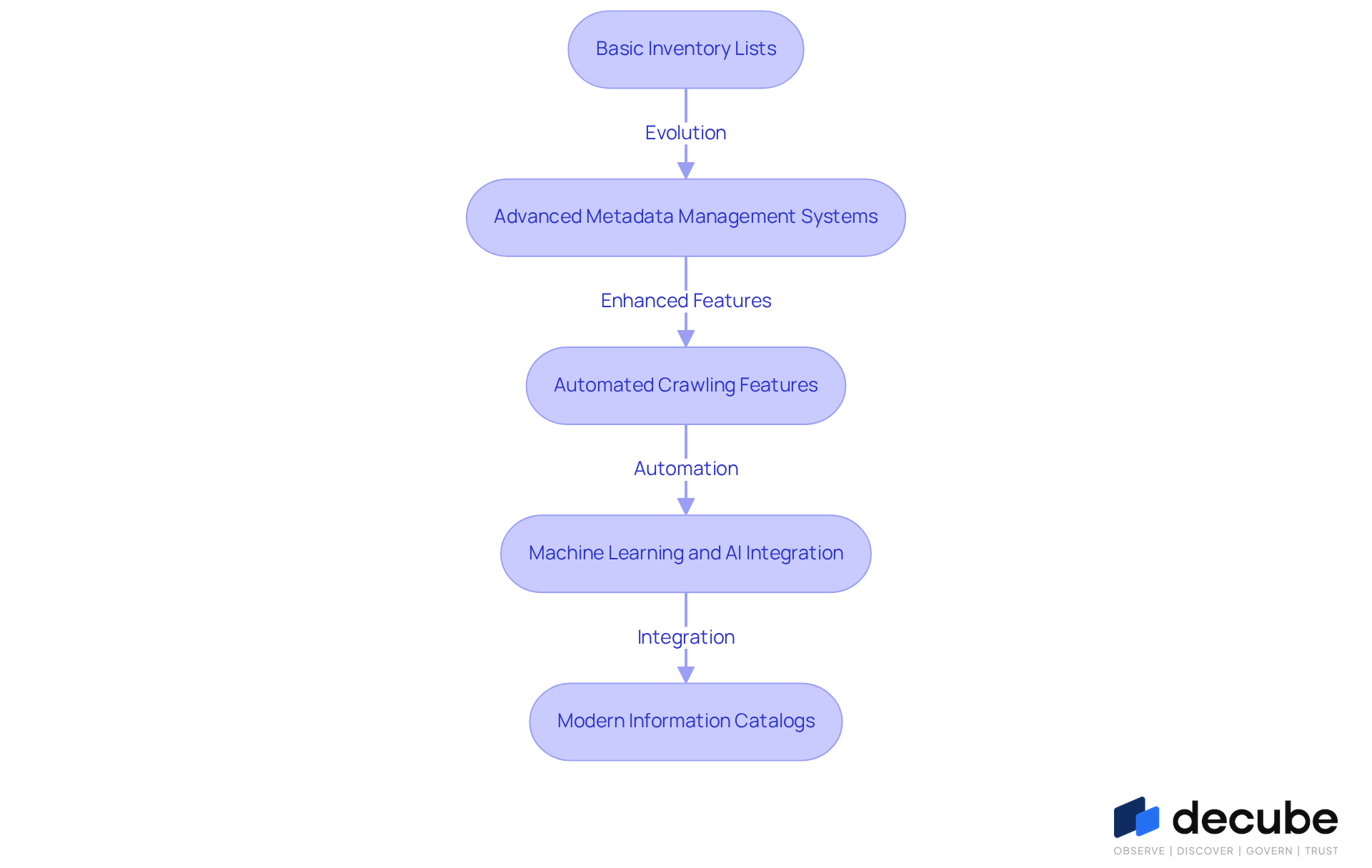

Trace the Evolution of Data Cataloguing Practices

Data cataloguing has evolved from basic inventory lists to advanced metadata management systems, reflecting the growing complexity of information environments. Initially, catalogs focused on static information assets, primarily serving as tools for basic inventorying. However, the emergence of large data sets and the intricacies of modern information environments necessitated more dynamic and interactive cataloguing solutions.

Decube's automated crawling feature illustrates this evolution, enabling seamless metadata management by refreshing information automatically when sources are connected. Moreover, Decube's unified information trust platform integrates machine learning and AI technologies, revolutionizing the field through automated metadata generation and enhanced discovery capabilities.

Today, modern data catalogs are not just repositories of knowledge; they play a vital role in management frameworks, supporting compliance and enhancing information quality across industries. Key features such as secure access control and comprehensive lineage visualization are essential for effective management and decision-making processes.

This evolution mirrors a broader trend in which organizations increasingly prioritize platforms that leverage automation and AI to improve governance and decision-making. According to projections, the global data catalog market is anticipated to expand to roughly 13.42 billion USD by 2035, at a compound annual growth rate of 23.1%. This underscores the growing significance of data cataloguing in the evolving information landscape.

However, organizations struggle with poor metadata quality and lack of ownership, hindering their ability to fully utilize information catalogs. To remain competitive, organizations must prioritize the integration of advanced information catalogs.

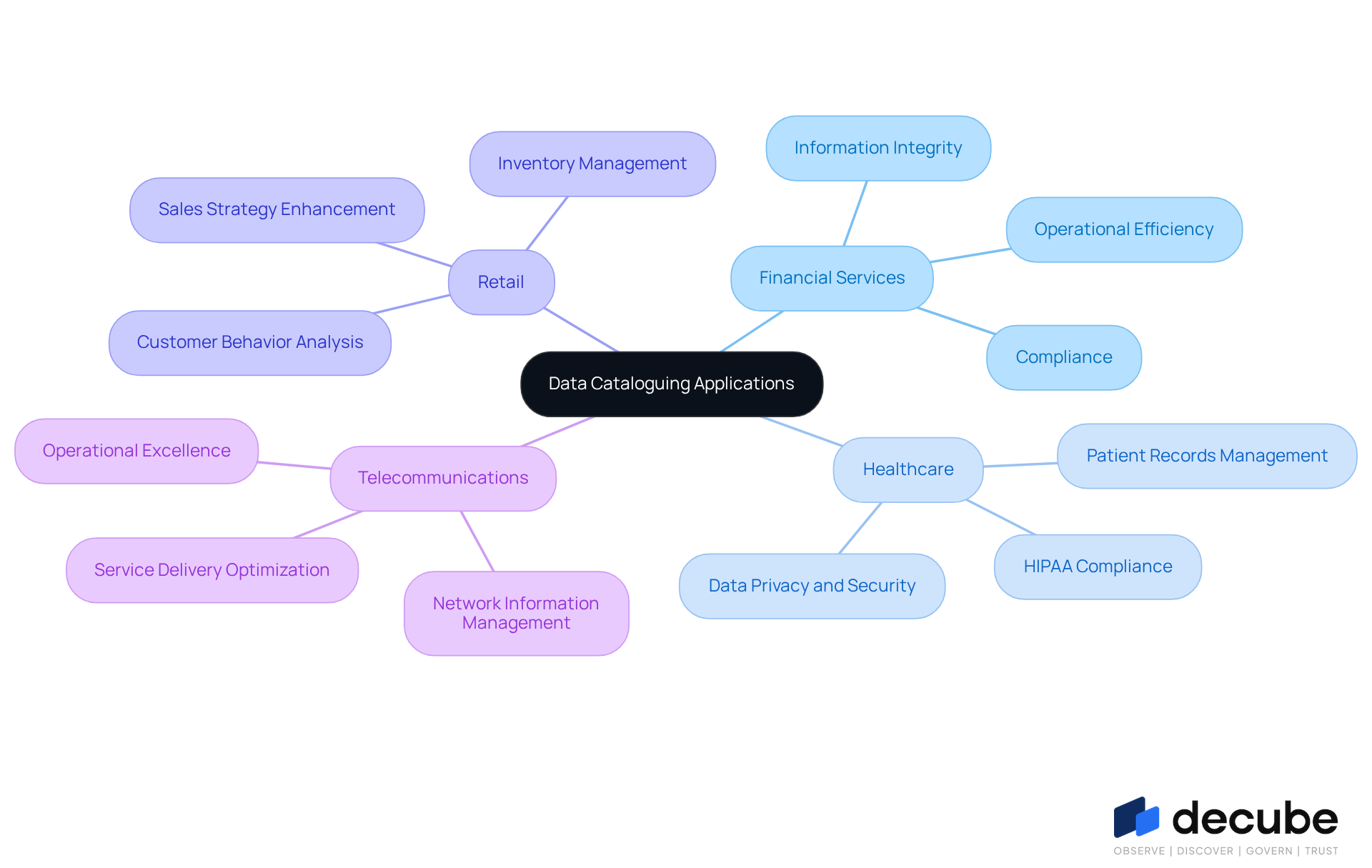

Examine Real-World Applications of Data Cataloguing Across Industries

Data cataloguing helps industries tackle specific operational challenges effectively. In the financial services industry, companies use data catalogs to meticulously monitor transactions and ensure data integrity, which is essential for meeting stringent regulatory compliance requirements. For instance, organizations with strong data governance programs achieve 15-20% higher operational efficiency, underscoring the importance of effective data management in a regulated environment.

Furthermore, compliance statistics indicate that 66% of banks face data quality and integrity issues, emphasizing the need for strong cataloguing practices. By implementing thorough data catalogs, financial institutions can improve their risk management strategies, ensuring that they maintain precise and accessible records for audits and regulatory reporting. According to Piyush P., "Decube's automated crawling feature allows us to manage metadata effortlessly, ensuring our information remains accurate and up-to-date."

Experts agree that data cataloguing is effective in aiding compliance and also fosters a culture of data literacy in organizations. This is vital as 87% of organizations have low business intelligence and analytics maturity, suggesting considerable room for improvement through efficient data management. Decube's automated monitoring and analytics features further improve data quality and governance, enabling business users to pinpoint issues in reports and dashboards effectively.

Beyond financial services, other sectors also benefit from data cataloguing. In healthcare, organizations manage patient records and ensure compliance with regulations like HIPAA, enhancing information privacy and security. Retail firms analyze customer behavior and improve inventory management through catalogs, resulting in better sales strategies. Similarly, telecommunications providers manage vast amounts of network data, ensuring efficient service delivery and operational excellence. Ultimately, the strategic implementation of data cataloguing can redefine operational success across various sectors.

Conclusion

Organizations often struggle with disorganized data, leading to inefficiencies and missed opportunities. Understanding data cataloguing is essential for optimizing information management strategies. By systematically organizing and maintaining a centralized repository of metadata, businesses can enhance accessibility, improve data governance, and empower users to make informed, data-driven decisions. This concept improves information discovery and encourages collaboration across departments.

The article delves into several key aspects of data cataloguing, including its definition, importance, and the essential features of effective solutions. It highlights how automated tools can streamline metadata management, improve information lineage tracking, and enhance user collaboration. Additionally, it addresses the evolution of data cataloguing practices and showcases real-world applications across industries such as finance, healthcare, and retail, illustrating the tangible benefits of robust information management.

As organizations navigate a complex data landscape, prioritizing effective data cataloguing is crucial. Embracing advanced solutions that leverage automation and AI enhances compliance and governance while driving operational efficiency and competitive advantage. Investing in data cataloguing tools can fundamentally reshape how organizations leverage their data for strategic advantage.

Frequently Asked Questions

What is data cataloguing?

Data cataloguing refers to the systematic creation and maintenance of a centralized repository that aggregates metadata describing an organization's information assets, allowing users to discover and manage their information effectively.

What elements are included in a comprehensive data catalog?

A comprehensive data catalog includes details about sources, types, lineage, and quality metrics of information assets.

How does data cataloguing enhance decision-making?

By organizing information effectively, data cataloguing improves accessibility and strengthens governance, which leads to informed decision-making.

What impact does data cataloguing have on information discovery time?

Organizations utilizing efficient information catalogs report a 65% decrease in information discovery time, enhancing self-service analytics capabilities.

How does Decube's automated crawling feature enhance data cataloguing?

Decube's automated crawling feature improves information observability and governance by managing and auto-refreshing metadata once sources are connected, enabling users to find necessary information quickly.

How does data cataloguing help organizations comply with regulatory standards?

Effective data cataloguing offers clear visibility into lineage and usage, facilitating adherence to regulatory standards like GDPR and HIPAA.

What benefits do organizations experience from strong data cataloguing practices?

Organizations that adopt strong data cataloguing practices have reported enhanced compliance rates, with many achieving up to 90-95% vendor coverage in less than ten days.

How does data cataloguing promote teamwork among departments?

Data cataloguing establishes a common understanding of information assets, which is vital for interdepartmental projects and collaboration.

What role does automated column-level lineage play in data cataloguing?

Automated column-level lineage enables business users to identify issues in reports or dashboards, improving quality and oversight of information.

Why is understanding data cataloguing essential for organizations?

Understanding data cataloguing is essential for organizations to utilize their information more effectively, foster innovation, maintain a competitive advantage, and ensure compliance with governance policies.

List of Sources

- Define Data Cataloguing: Understanding the Core Concept

- Summit Partners | Data Trends: Data Catalogs Hit the Mainstream (https://summitpartners.com/resources/data-trends-data-catalogs-hit-the-mainstream)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- Data Catalogs in 2026: Definitions, Trends, and Best Practices for Modern Data Management (https://promethium.ai/guides/data-catalogs-2026-guide-modern-data-management)

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Data Catalog Market Size & Share | Industry Report, 2030 (https://grandviewresearch.com/industry-analysis/data-catalog-market-report)

- Explore the Importance of Data Cataloguing in Modern Data Management

- GDPR vs HIPAA: Key Differences & Compliance 2026 (https://atlassystems.com/blog/gdpr-vs-hipaa)

- Advancing Compliance with HIPAA and GDPR in Healthcare: A Blockchain-Based Strategy for Secure Data Exchange in Clinical Research Involving Private Health Information - PMC (https://pmc.ncbi.nlm.nih.gov/articles/PMC12563691)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Identify Key Features of Effective Data Cataloguing Solutions

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- What Is a Data Catalog? Definition, Evolution & Key Features (2026) (https://ovaledge.com/blog/data-catalog-and-its-evolution)

- Data Catalogs in 2026: Definitions, Trends, and Best Practices for Modern Data Management (https://promethium.ai/guides/data-catalogs-2026-guide-modern-data-management)

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- Trace the Evolution of Data Cataloguing Practices

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- What Is a Data Catalog? Definition, Evolution & Key Features (2026) (https://ovaledge.com/blog/data-catalog-and-its-evolution)

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Examine Real-World Applications of Data Cataloguing Across Industries

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Governance in Healthcare: Real-World Scenarios and Case Studies Explained (https://accountablehq.com/post/data-governance-in-healthcare-real-world-scenarios-and-case-studies-explained)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- Data Governance Market Size, Growth Drivers, Size And Forecast 2031 (https://mordorintelligence.com/industry-reports/data-governance-market)
