Understanding Data Quality Definition: Key Insights for Data Engineers

Explore the essential data quality definition for engineers to ensure reliable information management.

Introduction

Data reliability is fundamental for organizations aiming for operational excellence and stakeholder trust. For data engineers, understanding the definition and dimensions of data quality is essential for accurate analytics. Data integrity is often compromised by human oversight and reliance on obsolete data, and addressing these problems is what turns data into a strategic asset that supports informed decision-making.

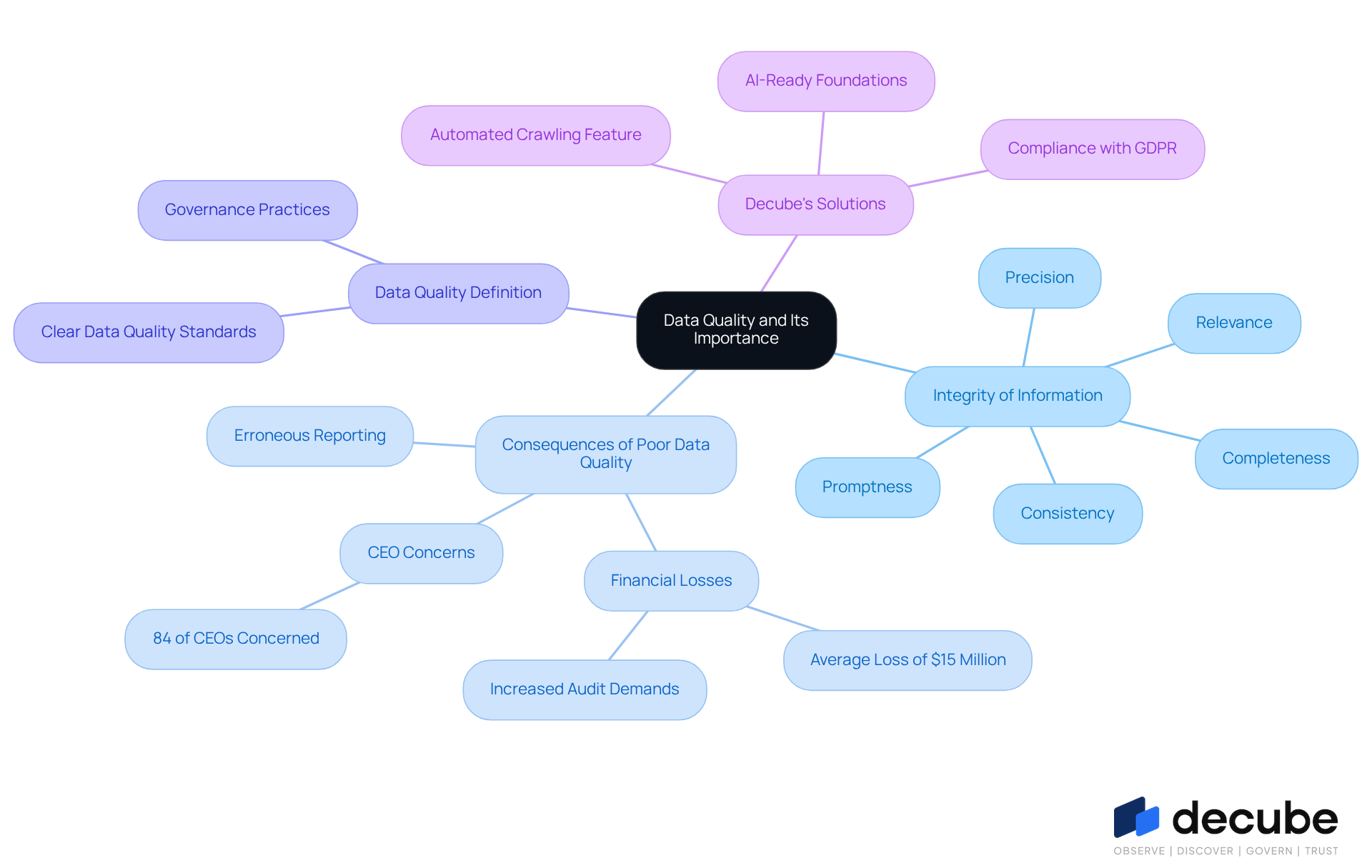

Define Data Quality and Its Importance for Data Engineers

Data quality is paramount for organizations that want to maintain operational excellence and stakeholder trust. It covers the accuracy, completeness, consistency, timeliness, and relevance of data for its intended purpose. For data engineers, upholding high standards is crucial because data quality directly influences the reliability of analytics, reporting, and machine learning results. High-quality data minimizes errors, improves decision-making, and builds trust among stakeholders.

Poor data quality, on the other hand, exposes organizations to significant risks, including erroneous reporting and compliance failures. It also causes substantial financial losses, averaging $15 million annually for organizations, and leads to flawed business strategies and decisions. Moreover, 84% of CEOs express concern about the reliability of the data behind their decisions, which underscores the need for reliable data in business intelligence.

A clear data quality definition is the first step in establishing effective governance practices, ensuring that data remains a valuable asset rather than a liability. Decube's automated crawling feature supports data observability and governance by updating metadata automatically, without manual intervention. This helps engineers maintain data quality because it reduces the risk of outdated or incorrect details.

Furthermore, GDPR Article 5 requires personal data to be accurate and kept up to date, and Decube's solutions assist organizations in adhering to this requirement. With Decube's unified information trust platform, organizations can establish AI-ready foundations that promote strong governance and observability, which in turn improves collaboration and decision-making. This is essential for organizations aiming for long-term success and compliance.

Explore Core Dimensions of Data Quality

Understanding the core dimensions of data quality is crucial for producing reliable, actionable insights. These dimensions include:

- Accuracy: This dimension ensures that information is correct and free from errors. In healthcare, accurate patient information is crucial for billing and treatment decisions; even a small percentage of inaccuracies can lead to significant billing errors.

- Completeness: Completeness refers to the extent to which all necessary information is present. Missing records can lead to significant delays and errors in decision-making; for example, a voter who is missing from the electoral roll may be unable to vote.

- Consistency: Consistency ensures that information is uniform across different datasets and systems. For instance, discrepancies between order and shipping records in retail can result in incorrect fulfillment, emphasizing the necessity for consistent information across operational areas.

- Timeliness: Data must be up to date and available when needed. In financial services, timely data is essential to avoid a competitive disadvantage; stale data can lead to lost business opportunities and diminished customer trust.

- Validity: Validity assesses whether information adheres to established formats and standards. For example, ensuring that email addresses follow the correct format is vital for maintaining communication integrity.

- Distinctiveness: Distinctiveness, also called uniqueness, ensures that each record appears only once and is not duplicated. High uniqueness scores build trust in the data, while duplicate entries often lead to confusion and misreporting.

Understanding these dimensions enables data engineers to apply focused strategies for improving data quality. By prioritizing them, organizations can significantly improve their decision-making and operational efficiency.
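Several of these dimensions can be expressed as simple ratios over a dataset. The following is a minimal sketch in plain Python, not a definitive implementation: the sample records, field names, email pattern, and 30-day freshness window are all assumptions made for illustration. Accuracy and consistency are left out because they need a trusted reference or a second dataset to compare against.

```python
import re
from datetime import datetime, timedelta

# Hypothetical sample records; the field names are assumptions for illustration.
RECORDS = [
    {"id": 1, "email": "ana@example.com", "updated_at": datetime(2024, 1, 10)},
    {"id": 2, "email": "bob@example", "updated_at": datetime(2024, 1, 9)},
    {"id": 2, "email": "bob@example", "updated_at": datetime(2024, 1, 9)},
    {"id": 3, "email": None, "updated_at": datetime(2023, 6, 1)},
]

# Deliberately simple pattern: it checks shape only, not deliverability.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(records, field):
    """Share of records where `field` is present (not None or empty)."""
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def validity(records, field, pattern):
    """Share of non-missing values that match the expected format."""
    values = [r[field] for r in records if r.get(field) not in (None, "")]
    if not values:
        return 1.0
    return sum(1 for v in values if pattern.match(v)) / len(values)


def uniqueness(records, key):
    """Ratio of distinct `key` values to the total number of records."""
    return len({r[key] for r in records}) / len(records)


def timeliness(records, field, now, max_age):
    """Share of records updated within `max_age` of `now`."""
    fresh = sum(1 for r in records if now - r[field] <= max_age)
    return fresh / len(records)


def profile(records, now):
    """Compute one score per dimension for the sample schema above."""
    return {
        "completeness": completeness(records, "email"),
        "validity": validity(records, "email", EMAIL_PATTERN),
        "uniqueness": uniqueness(records, "id"),
        "timeliness": timeliness(records, "updated_at", now, timedelta(days=30)),
    }


if __name__ == "__main__":
    for name, score in profile(RECORDS, now=datetime(2024, 1, 15)).items():
        print(f"{name}: {score:.2f}")
```

Passing `now` explicitly rather than reading the system clock keeps the scores reproducible, which matters when the same check is rerun during an audit.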

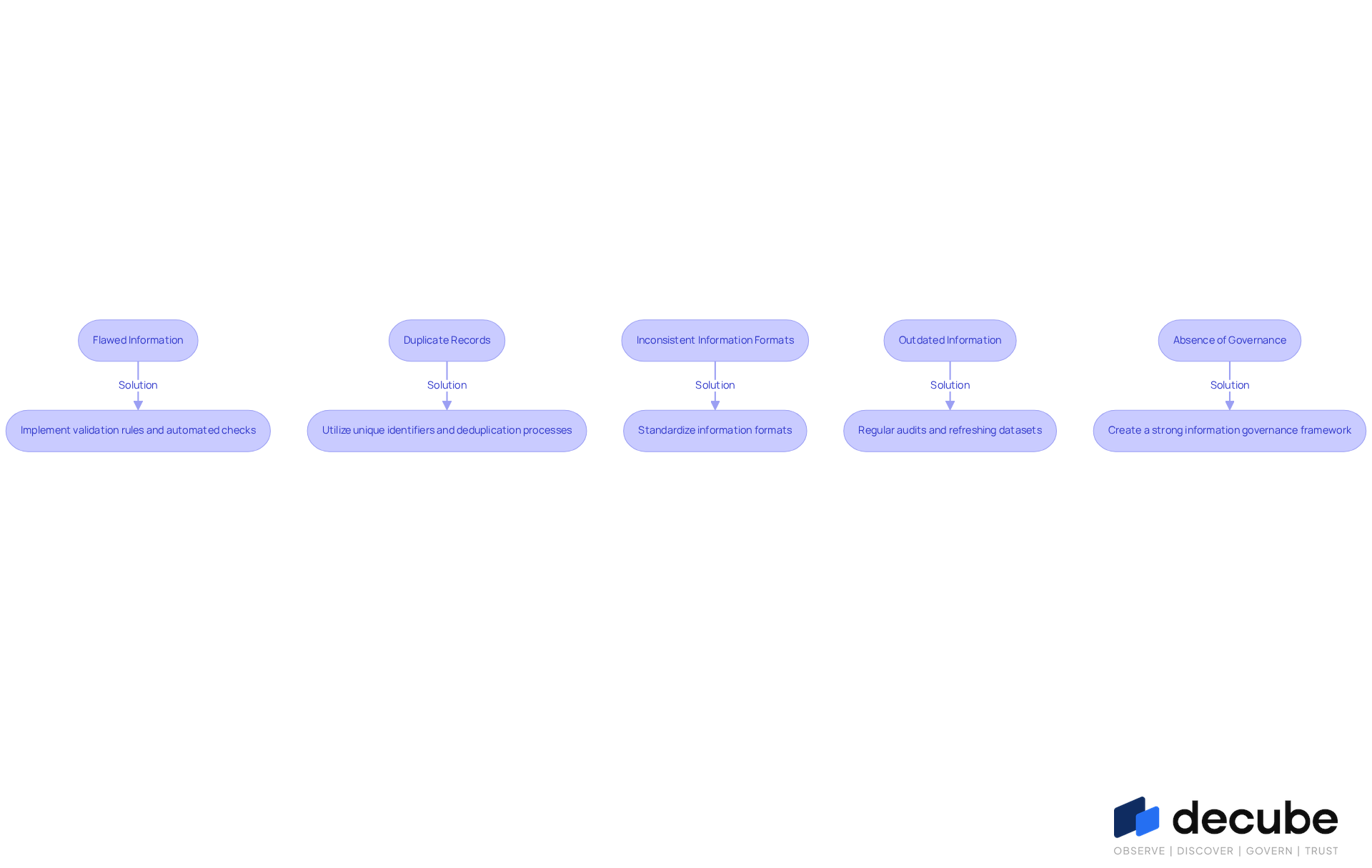

Identify Challenges in Maintaining Data Quality and Solutions

Maintaining data quality is crucial for business success, yet it presents several challenges that can adversely affect outcomes. Here are some common issues and their corresponding solutions:

- Flawed Information: Human error during information entry often leads to inaccuracies, which can have serious repercussions for decision-making. Solution: Implement validation rules and automated checks to catch errors early, reducing the risk of erroneous information influencing decisions.

- Duplicate Records: The presence of duplicate entries can inflate metrics and create confusion. Solution: Utilize unique identifiers and deduplication processes to ensure information integrity and clarity in reporting.

- Inconsistent Information Formats: Variations in information formats across different systems can hinder usability. Solution: Standardize information formats across all platforms to maintain consistency and facilitate smoother integration.

- Outdated Information: Stale information can lead to poor decision-making and missed opportunities. Solution: Regular audits and refreshing datasets are essential to keep information current and relevant.

- Absence of Governance: In the absence of clear policies and accountability, information standards can decline. Solution: Create a strong information governance framework that includes established information standards and assigns ownership to ensure compliance and oversight.

By addressing these challenges head-on, data engineers can raise data quality standards, resulting in improved operational efficiency and more informed decision-making.
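Three of the solutions above (validation rules, standardized formats, and deduplication on a unique identifier) can be combined in a single cleaning pass. The sketch below is one possible arrangement under stated assumptions: the row layout, the `customer_id` key, the two accepted date formats, and the non-negative amount rule are hypothetical and would differ in a real pipeline.

```python
from datetime import datetime

# Hypothetical raw rows; field names and date formats are assumptions.
RAW_ROWS = [
    {"customer_id": "C-001", "signup_date": "2024-03-05", "amount": "19.99"},
    {"customer_id": "C-002", "signup_date": "05/03/2024", "amount": "5.00"},
    {"customer_id": "C-001", "signup_date": "2024-03-05", "amount": "19.99"},
    {"customer_id": "", "signup_date": "2024-03-06", "amount": "12.50"},
    {"customer_id": "C-003", "signup_date": "2024-03-07", "amount": "-4.00"},
]

# Formats the upstream systems are assumed to emit (ISO first, then DD/MM/YYYY).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def standardize_date(text):
    """Parse a date in any known format and return a date object."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {text!r}")


def validate(row):
    """Return a list of rule violations for one row (empty if it passes)."""
    errors = []
    if not row["customer_id"]:
        errors.append("customer_id is required")
    try:
        if float(row["amount"]) < 0:
            errors.append("amount must be non-negative")
    except ValueError:
        errors.append("amount is not a number")
    try:
        standardize_date(row["signup_date"])
    except ValueError as exc:
        errors.append(str(exc))
    return errors


def clean(rows):
    """Validate, standardize, and deduplicate rows; return (cleaned, rejected)."""
    seen, cleaned, rejected = set(), [], []
    for row in rows:
        errors = validate(row)
        if errors:
            rejected.append((row, errors))
            continue
        if row["customer_id"] in seen:
            continue  # keep only the first row per unique identifier
        seen.add(row["customer_id"])
        cleaned.append({
            "customer_id": row["customer_id"],
            "signup_date": standardize_date(row["signup_date"]),
            "amount": float(row["amount"]),
        })
    return cleaned, rejected


if __name__ == "__main__":
    cleaned, rejected = clean(RAW_ROWS)
    print(f"{len(cleaned)} clean rows, {len(rejected)} rejected")
    for row, errors in rejected:
        print(row["customer_id"] or "<missing id>", errors)
```

Rejected rows are returned together with their reasons instead of being dropped silently, so they can be routed to whoever owns the source system.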

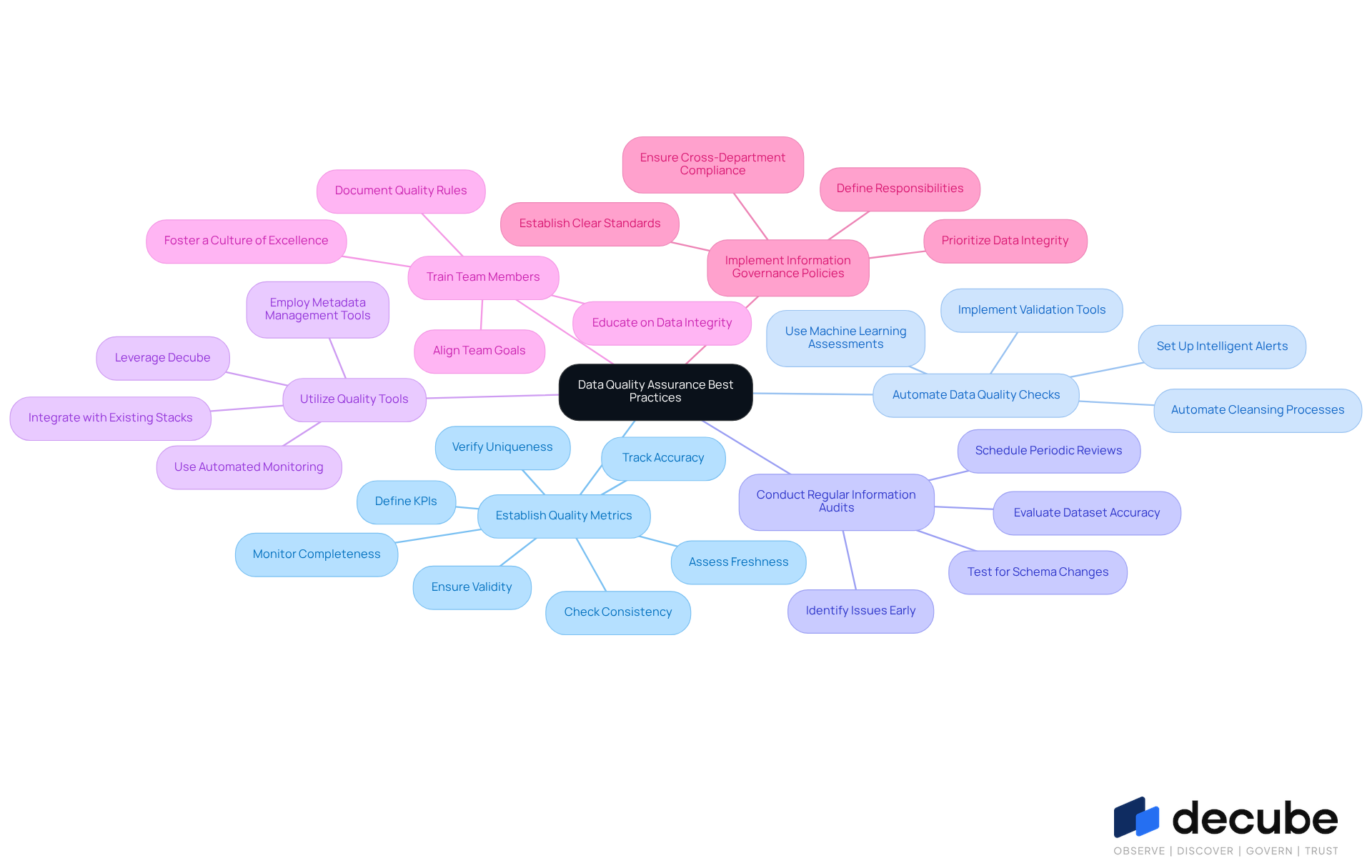

Implement Best Practices and Tools for Data Quality Assurance

To ensure robust data quality, data engineers must adopt a series of best practices that address various aspects of information integrity:

- Establish Quality Metrics: Define key performance indicators (KPIs) such as accuracy, completeness, validity, consistency, freshness, and uniqueness. Consistent tracking of these metrics is crucial for upholding high information standards.

- Automate Data Quality Checks: Implement tools that automate validation, cleansing, and monitoring processes. Automation reduces manual errors and ensures consistency across datasets. Tools such as Decube offer machine learning-driven checks that automatically determine data quality thresholds, helping data remain accurate and dependable. Furthermore, Decube's intelligent alerts group notifications to avoid overwhelming users, which makes quality issues easier to manage.

- Conduct Regular Information Audits: Periodically review datasets to evaluate their accuracy, completeness, and consistency. Regular audits assist in identifying and correcting issues before they escalate, ensuring that information remains dependable for decision-making. This is highlighted in the case study on "Production Testing for Information Accuracy," which shows that scheduled tests are crucial for identifying issues arising from upstream schema changes, unexpected edge cases, or pipeline failures.

- Utilize Quality Tools: Leverage advanced tools such as Decube, which offers seamless integration with existing data stacks and automated monitoring capabilities. Decube's features, including data reconciliation and automated crawling for metadata management, improve overall governance and observability, making it easier for teams to maintain data integrity.

- Train Team Members: Ensure that all team members comprehend the importance of information integrity and are equipped with the necessary skills to sustain it. Training fosters a culture of excellence, enabling teams to proactively address information issues. Anurag Sharma emphasizes the importance of documenting rules for consistency, which aids in onboarding new team members and improves team alignment.

- Implement Information Governance Policies: Establish clear policies that outline information standards and responsibilities within the organization. Effective governance guarantees that information integrity is prioritized and upheld across all departments.

By integrating these practices, organizations can foster a culture of data excellence that aligns with the data quality definition and enhances decision-making capabilities. Successful implementations often combine multiple approaches, such as transformation-level testing with dbt and comprehensive monitoring with observability platforms, to achieve optimal results.
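The first two practices, defining quality metrics and automating checks against them, can be illustrated with a small quality gate that a scheduled job runs after each pipeline load. This is a hedged sketch rather than the API of any tool named above: the `Threshold` class, the target values, and the function names are hypothetical, and the scores are assumed to come from an upstream profiling step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    minimum: float  # lowest acceptable score, between 0 and 1


# Hypothetical KPI targets; real values depend on the dataset and its consumers.
THRESHOLDS = (
    Threshold("completeness", 0.98),
    Threshold("validity", 0.95),
    Threshold("uniqueness", 1.00),
    Threshold("freshness", 0.90),
)


def evaluate(scores, thresholds):
    """Return one failure message per metric that is missing or below target."""
    failures = []
    for t in thresholds:
        score = scores.get(t.metric)
        if score is None:
            failures.append(f"{t.metric}: no score reported")
        elif score < t.minimum:
            failures.append(f"{t.metric}: {score:.2f} is below {t.minimum:.2f}")
    return failures


def quality_gate(scores, thresholds=THRESHOLDS):
    """Raise if any metric misses its target so the pipeline run stops."""
    failures = evaluate(scores, thresholds)
    if failures:
        raise ValueError("data quality gate failed: " + "; ".join(failures))
    return True


if __name__ == "__main__":
    latest = {"completeness": 0.99, "validity": 0.97,
              "uniqueness": 1.0, "freshness": 0.95}
    quality_gate(latest)
    print("all checks passed")
```

Treating a missing score as a failure is a deliberate choice here: a check that silently stops reporting is usually a sign of an upstream schema change or a broken job.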

Conclusion

Data quality is a cornerstone for data engineers, influencing the reliability of analytics and decision-making across organizations. A clear understanding of data quality enhances operational efficiency and fosters stakeholder trust, making it critical for data-driven enterprises.

The article delves into the fundamental dimensions of data quality, including:

- Accuracy

- Completeness

- Consistency

- Timeliness

- Validity

- Distinctiveness

Each dimension plays a pivotal role in ensuring that data serves its intended purpose without leading to costly errors or misinformed decisions. Furthermore, it highlights the challenges faced by data engineers, such as:

- Flawed information

- Duplicate records

- Outdated datasets

It also provides actionable solutions for addressing these issues effectively.

The pursuit of high data quality empowers organizations to thrive in a competitive landscape. By adopting best practices, leveraging advanced tools, and fostering a culture of data integrity, data engineers can significantly enhance their organizations' decision-making capabilities. Investing in data quality safeguards against errors and positions organizations for sustained success and innovation.

Frequently Asked Questions

What is data quality and why is it important for data engineers?

Data quality refers to how fit data is for its intended purpose, which includes its accuracy, completeness, consistency, timeliness, and relevance. It is crucial for data engineers because high-quality data directly influences the reliability of analytics, reporting, and machine learning results, ultimately impacting decision-making and stakeholder trust.

What are the risks associated with inadequate information standards?

Inadequate information standards can expose organizations to significant risks such as erroneous reporting and compliance failures, leading to substantial financial losses, averaging $15 million annually. This can result in flawed business strategies and decisions.

How do CEOs view the reliability of information in decision-making?

According to the article, 84% of CEOs express concern regarding the reliability of information that impacts their decisions, highlighting the critical need for dependable information in business intelligence.

Why is a clear data quality definition essential?

A clear data quality definition is essential as it serves as the first step in establishing effective governance practices, ensuring that information remains a valuable asset rather than a liability.

How does Decube enhance information observability and governance?

Decube enhances information observability and governance through its automated crawling feature, which ensures that metadata is automatically updated without manual intervention. This capability helps engineers maintain high information quality by reducing the risk of outdated or incorrect details.

What role does Decube play in compliance with GDPR regulations?

Decube's solutions assist organizations in adhering to GDPR's Article 5, which requires personal information to be accurate and current, emphasizing the significance of reliable information management.

What benefits does Decube's unified information trust platform provide?

Decube's unified information trust platform helps organizations establish AI-ready foundations that promote strong governance and observability, leading to enhanced collaboration and decision-making, which is essential for long-term success and compliance.

List of Sources

- Define Data Quality and Its Importance for Data Engineers

- New Global Research Points to Lack of Data Quality and Governance as Major Obstacles to AI Readiness (https://prnewswire.com/news-releases/new-global-research-points-to-lack-of-data-quality-and-governance-as-major-obstacles-to-ai-readiness-302251068.html)

- The Impact of Poor Data Quality (and How to Fix It) - Dataversity (https://dataversity.net/articles/the-impact-of-poor-data-quality-and-how-to-fix-it)

- The Impact of Poor Data Quality: Risks, Challenges, and Solutions - Data Ladder (https://dataladder.com/the-impact-of-poor-data-quality-risks-challenges-and-solutions)

- The Consequences of Poor Data Quality: Uncovering the Hidden Risks (https://actian.com/blog/data-management/the-costly-consequences-of-poor-data-quality)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- Explore Core Dimensions of Data Quality

- A Continual Quest for Improving Data Quality | U.S. Bureau of Economic Analysis (BEA) (https://bea.gov/news/blog/2026-03-16/continual-quest-improving-data-quality)

- The 6 Data Quality Dimensions with Examples | Collibra (https://collibra.com/blog/the-6-dimensions-of-data-quality)

- Data Quality Metrics & KPIs for Data Engineering Teams in 2026 | Uvik Software (https://uvik.net/blog/data-quality-metrics-kpis)

- Data Quality Dimensions: Key Metrics & Best Practices for 2026 (https://ovaledge.com/blog/data-quality-dimensions)

- 6 Data Quality Dimensions: Complete Guide with Examples and Measurement Methods (https://icedq.com/6-data-quality-dimensions)

- Identify Challenges in Maintaining Data Quality and Solutions

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- Failure to Manage Data Quality: A Recipe for Disaster (https://medium.com/@rotimi.fawumi/failure-to-manage-data-quality-a-recipe-for-disaster-4149de4c4cce)

- New SoftServe Study: Big Decisions Made with Bad Data (https://softserveinc.com/en-us/news/bad-data-makes-bad-decisions)

- Data Quality Issues: 6 Solutions for Enterprises in 2026 (https://actian.com/data-quality-issues-6-solutions-for-enterprises)

- Bad Data is Costing Businesses Customers and the Numbers Prove It (https://prnewswire.com/news-releases/bad-data-is-costing-businesses-customers-and-the-numbers-prove-it-302746070.html)

- Implement Best Practices and Tools for Data Quality Assurance

- 4 Best Tools to Automate Data Quality Checks in ETL Pipelines 2026 | Airbyte (https://airbyte.com/data-engineering-resources/tools-automate-data-quality-checks-etl)

- How to build reliable data pipelines with data quality checks | dbt Labs (https://getdbt.com/blog/data-pipeline-quality-checks)

- Automated Data Quality: Fix Bad Data & Get AI-Ready in 2025 (https://atlan.com/automated-data-quality)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://finance.yahoo.com/news/data-priorities-2026-ai-adoption-190600933.html)

- Automating Data Quality in Data Engineering: A Game-Changer for Pipelines (https://medium.com/@one.step.analytics.on.data/automating-data-quality-in-data-engineering-a-game-changer-for-pipelines-821ac669c98c)
