
Master Data Policies: Key Steps for Effective Governance

Explore essential steps for effective data policies and governance in organizations.

Introduction

Organizations must confront the complexities of data governance to succeed in a data-driven environment. Effective governance policies ensure compliance with regulatory standards while improving decision-making and operational efficiency. Yet many organizations struggle to implement them, given the complexity of regulations and the rapid pace of technological change. What strategies can organizations use to create and sustain policies that address current challenges and adapt to an evolving information landscape?

Define Key Components of a Data Governance Policy

In 2026, organizations must navigate the complexities of information management to ensure effective oversight and compliance. A data governance policy that supports these goals includes the following key components:

- Purpose and Scope: Clearly defining the objectives of the policy and the information domains it covers is crucial. Aligning these with organizational objectives keeps oversight efforts focused and efficient.

- Roles and Responsibilities: Specifying who is responsible for information governance (such as information stewards, information owners, and governance committees) establishes accountability and clarity in management processes.

- Information Classification: Establishing a framework for categorizing information based on sensitivity and significance is essential. This classification helps organizations implement the right security measures and compliance protocols effectively, especially as they face increasing regulatory scrutiny.

- Information Quality Standards: Defining metrics and standards for information quality, including accuracy, completeness, and consistency, ensures that organizations can rely on their data for critical decisions. With the volume of information projected to reach 180 zettabytes by 2025, ensuring quality is more critical than ever.

- Compliance and Regulatory Requirements: Outlining the legal, regulatory, and audit obligations related to information management (such as GDPR, HIPAA, and SOC 2) helps organizations meet the necessary compliance standards and mitigate the risks of non-compliance, which can include significant legal and financial repercussions.

- Monitoring and Reporting: Establishing processes for overseeing information management practices and reporting on their effectiveness is crucial. Continuous assessment pinpoints areas for improvement, supports ongoing adherence, and strengthens the overall information management strategy. This matters all the more as the data governance market is expected to expand considerably, reaching USD 24.07 billion by 2034.

By prioritizing these components, organizations position themselves not just to meet current demands but to thrive in a future where information governance is paramount.
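To make these components concrete, the sketch below represents a policy as structured data and reports which components are still undefined. It is a minimal illustration under stated assumptions, not a Decube feature or a standard schema: the `GovernancePolicy` and `Sensitivity` classes, the four classification levels, the control names in `CONTROLS_BY_LEVEL`, and the 90-day review interval are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical four-level classification scheme (higher = more sensitive)."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical controls introduced at each level; a level also inherits
# every control required by the less sensitive levels below it.
CONTROLS_BY_LEVEL = {
    Sensitivity.PUBLIC: set(),
    Sensitivity.INTERNAL: {"access_logging"},
    Sensitivity.CONFIDENTIAL: {"encryption_at_rest"},
    Sensitivity.RESTRICTED: {"masking", "approval_required"},
}


def required_controls(level: Sensitivity) -> set[str]:
    """Return the union of controls for `level` and all lower levels."""
    controls: set[str] = set()
    for lvl in Sensitivity:
        if lvl <= level:
            controls |= CONTROLS_BY_LEVEL[lvl]
    return controls


@dataclass
class GovernancePolicy:
    purpose: str
    scope: list[str]                      # data domains covered by the policy
    roles: dict[str, str]                 # role -> accountable person or team
    quality_thresholds: dict[str, float]  # metric -> minimum acceptable score (0..1)
    regulations: list[str]                # e.g. ["GDPR", "HIPAA", "SOC 2"]
    review_interval_days: int = 90        # monitoring and reporting cadence

    def missing_components(self) -> list[str]:
        """List the policy components that are still undefined."""
        missing: list[str] = []
        if not self.purpose.strip():
            missing.append("purpose")
        if not self.scope:
            missing.append("scope")
        for role in ("data_owner", "data_steward"):
            if role not in self.roles:
                missing.append(f"role:{role}")
        for metric in ("accuracy", "completeness", "consistency"):
            if metric not in self.quality_thresholds:
                missing.append(f"quality:{metric}")
        if not self.regulations:
            missing.append("regulations")
        return missing


# Example: a draft policy that still lacks a steward and a consistency target.
policy = GovernancePolicy(
    purpose="Govern customer and billing data",
    scope=["customer", "billing"],
    roles={"data_owner": "Head of Finance"},
    quality_thresholds={"accuracy": 0.99, "completeness": 0.98},
    regulations=["GDPR"],
)
print(policy.missing_components())  # ['role:data_steward', 'quality:consistency']
print(sorted(required_controls(Sensitivity.CONFIDENTIAL)))  # ['access_logging', 'encryption_at_rest']
```

Keeping the policy in a machine-readable form like this is one way to let automated checks enforce it; in practice the classification levels and controls would come from your own policy document.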

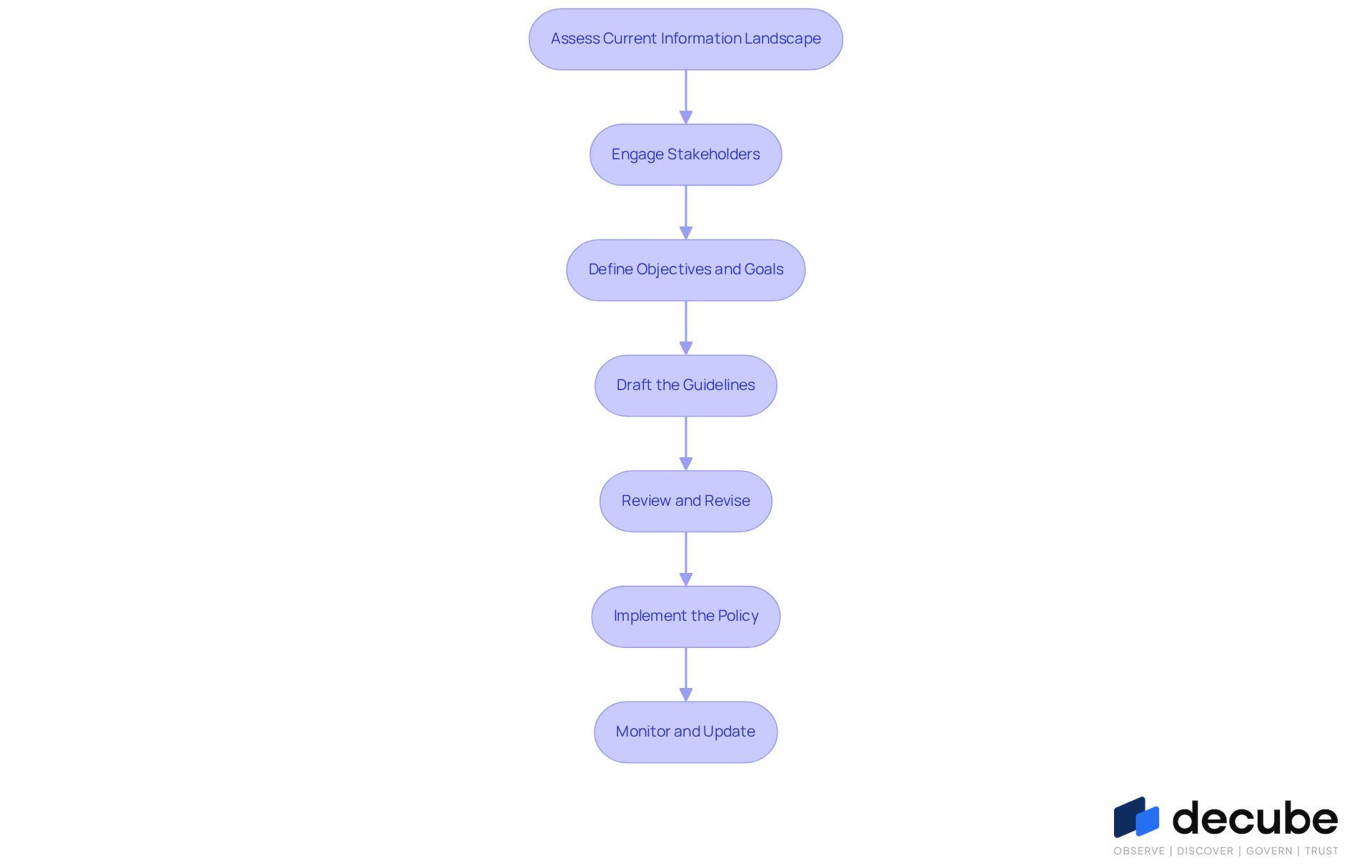

Implement Steps for Developing Your Data Governance Policy

To establish robust data policies, a systematic approach is essential.

- Assess Current Information Landscape: Evaluate existing information management practices to identify gaps in governance. Finding these gaps can be challenging, but it is the foundation for developing effective data policies. Decube's ML-powered tests help identify thresholds for information quality, so your assessment rests on accurate and timely data.

- Engage Stakeholders: Involve key stakeholders from various departments to gather insights and secure buy-in. Engagement builds collaboration, trust, and a sense of ownership, which improves the overall effectiveness of the governance framework. Decube's smart alerts can facilitate communication by grouping notifications, keeping stakeholders informed without overwhelming them.

- Define Objectives and Goals: Clearly state the aims of the governance framework and align them with business objectives. This alignment ensures that the framework supports the organization's overall strategy and that its data policies address specific business challenges. Decube's automated crawling feature can simplify refreshing metadata, so your objectives are grounded in the most recent information.

- Draft the Guidelines: Create a draft of the information management guidelines, incorporating the key components identified earlier. Ensure that the language is clear and accessible to all stakeholders, making it easier for them to understand their roles and responsibilities. The intuitive design of Decube's platform can assist in visualizing information flows, making it easier to convey complex governance concepts.

- Review and Revise: Circulate the draft among stakeholders for feedback and make necessary revisions. This iterative process aids in improving the data policies and addressing any concerns, ensuring that they meet the needs of all parties involved. Decube's data lineage feature can provide insights into how data is utilized across the company, helping to identify potential areas of concern during the review process.

- Implement the Policy: Once the policy is finalized, communicate it to all employees and provide training on its importance and application. This step is crucial for compliance and adherence, because well-trained employees are less likely to cause data-related incidents. Decube's comprehensive information trust platform can support training efforts by providing clear visibility into quality and governance practices.

- Monitor and Update: Establish a procedure for routinely reviewing and revising the policy to reflect changes in the information landscape and regulatory requirements. Regular updates keep the policy relevant and effective as that landscape evolves. With Decube's automated monitoring features, companies can continuously track quality metrics, making it easier to stay compliant and proactive.
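Lineage is most useful during the review step when it answers a concrete question: if a policy change touches one asset, which downstream assets and owners are affected? The sketch below assumes a simple hypothetical lineage map (the asset names, `LINEAGE`, and `OWNERS` are invented for illustration, and this is not Decube's API) and walks it breadth-first to list every downstream asset and the teams to consult.

```python
from collections import deque

# Hypothetical lineage map: each asset lists the assets that read from it directly.
LINEAGE: dict[str, list[str]] = {
    "raw.customers": ["staging.customers"],
    "staging.customers": ["mart.customer_360", "mart.churn_features"],
    "mart.churn_features": ["ml.churn_model"],
    "mart.customer_360": ["dashboard.sales"],
}

# Hypothetical ownership map used to decide whom to involve in the review.
OWNERS: dict[str, str] = {
    "staging.customers": "Data Platform",
    "mart.customer_360": "Sales Analytics",
    "mart.churn_features": "Data Science",
    "ml.churn_model": "Data Science",
    "dashboard.sales": "Sales Analytics",
}


def downstream_impact(lineage: dict[str, list[str]], source: str) -> list[str]:
    """Breadth-first walk returning every asset downstream of `source`,
    nearest first. The `seen` set stops the walk from looping on cycles."""
    seen = {source}
    order: list[str] = []
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


def stakeholders_to_consult(assets: list[str], owners: dict[str, str]) -> list[str]:
    """Distinct owning teams for the affected assets ('unassigned' if unknown)."""
    return sorted({owners.get(asset, "unassigned") for asset in assets})


affected = downstream_impact(LINEAGE, "staging.customers")
print(affected)
# ['mart.customer_360', 'mart.churn_features', 'dashboard.sales', 'ml.churn_model']
print(stakeholders_to_consult(affected, OWNERS))
# ['Data Science', 'Sales Analytics']
```

Breadth-first order lists the directly affected assets before the indirect ones, which is a reasonable order in which to walk stakeholders through a proposed change.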

Establish Best Practices for Maintaining Data Governance Policies

To ensure the long-term effectiveness of data governance policies, organizations must adopt a series of strategic best practices:

- Regular Training and Awareness Programs: Ongoing training sessions are essential to reinforce the significance of information governance. Employees must understand their roles and responsibilities to foster a culture of accountability. Treating information as a strategic asset is crucial for optimizing resource allocation and enhancing decision-making. Decube's intuitive design enhances trust in information, enabling teams to effectively monitor quality and identify issues early.

- Establish a Management Committee: A dedicated management committee should oversee data management practices, review data policies, and address compliance issues. Regular meetings are essential for discussing management issues and ensuring alignment across departments. This committee can function as a strategic tool for agility and growth, as effective oversight has become essential for business success. Decube's platform supports this by providing comprehensive monitoring features that enhance collaboration among teams.

- Conduct Regular Audits: Periodic reviews of information management practices help evaluate compliance and pinpoint areas for improvement. This proactive approach upholds high standards and verifies that data policies are actually being followed, which makes regular audits central to effective oversight. Users have observed that Decube's automated crawling feature simplifies metadata management, helping keep information accurate and compliant.

- Utilize Metrics and KPIs: Defining key performance indicators (KPIs) lets organizations assess the effectiveness of their information management initiatives. Regular reviews of these metrics support data-driven decision-making and show progress over time. Feedback from Decube users highlights how metrics help teams understand lineage and quality, both of which are essential for informed decision-making.

- Encourage Feedback: Creating avenues for employee input on governance practices can reveal challenges and opportunities for improvement. This input is invaluable for continuous improvement. Decube's robust interface streamlines the feedback process, empowering teams to effectively communicate issues and propose improvements.

- Stay Informed on Regulatory Changes: Organizations must remain vigilant about changes in data protection regulations and industry standards. Updating policies accordingly ensures ongoing compliance and relevance in a rapidly evolving landscape. With Decube's strong management features, organizations can maintain oversight and adapt to regulatory changes seamlessly.
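Audits and KPIs both depend on a reliable inventory of datasets. As a hedged sketch, assuming each dataset record carries an owner, a classification, a latest quality score, and a last-review date (the `Dataset` fields, the 0.95 quality threshold, and the 90-day review window are hypothetical choices, not prescribed values), a few governance KPIs and an overdue-review audit list could be computed like this:

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Dataset:
    name: str
    owner: Optional[str]           # None means no accountable owner yet
    classification: Optional[str]  # None means not yet classified
    quality_score: float           # 0..1, e.g. share of rows passing checks
    last_reviewed: date


def governance_kpis(
    datasets: list[Dataset],
    today: date,
    quality_threshold: float = 0.95,  # hypothetical minimum acceptable score
    review_days: int = 90,            # hypothetical maximum time between reviews
) -> dict:
    """Compute coverage KPIs and list datasets whose review is overdue."""
    if not datasets:
        raise ValueError("inventory is empty")
    n = len(datasets)
    max_age = timedelta(days=review_days)
    return {
        "ownership_coverage": sum(d.owner is not None for d in datasets) / n,
        "classification_coverage": sum(d.classification is not None for d in datasets) / n,
        "quality_pass_rate": sum(d.quality_score >= quality_threshold for d in datasets) / n,
        "overdue_reviews": [d.name for d in datasets if today - d.last_reviewed > max_age],
    }


inventory = [
    Dataset("customers", "CRM team", "confidential", 0.99, date(2026, 3, 1)),
    Dataset("orders", None, "internal", 0.97, date(2025, 11, 15)),
    Dataset("web_logs", "Platform team", None, 0.90, date(2026, 2, 20)),
    Dataset("invoices", "Finance", "restricted", 0.93, date(2025, 12, 20)),
]
kpis = governance_kpis(inventory, today=date(2026, 4, 1))
print(kpis["ownership_coverage"])  # 0.75
print(kpis["quality_pass_rate"])   # 0.5
print(kpis["overdue_reviews"])     # ['orders', 'invoices']
```

Tracking these numbers at each committee meeting gives a simple, comparable view of progress over time; the overdue list doubles as an audit worklist.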

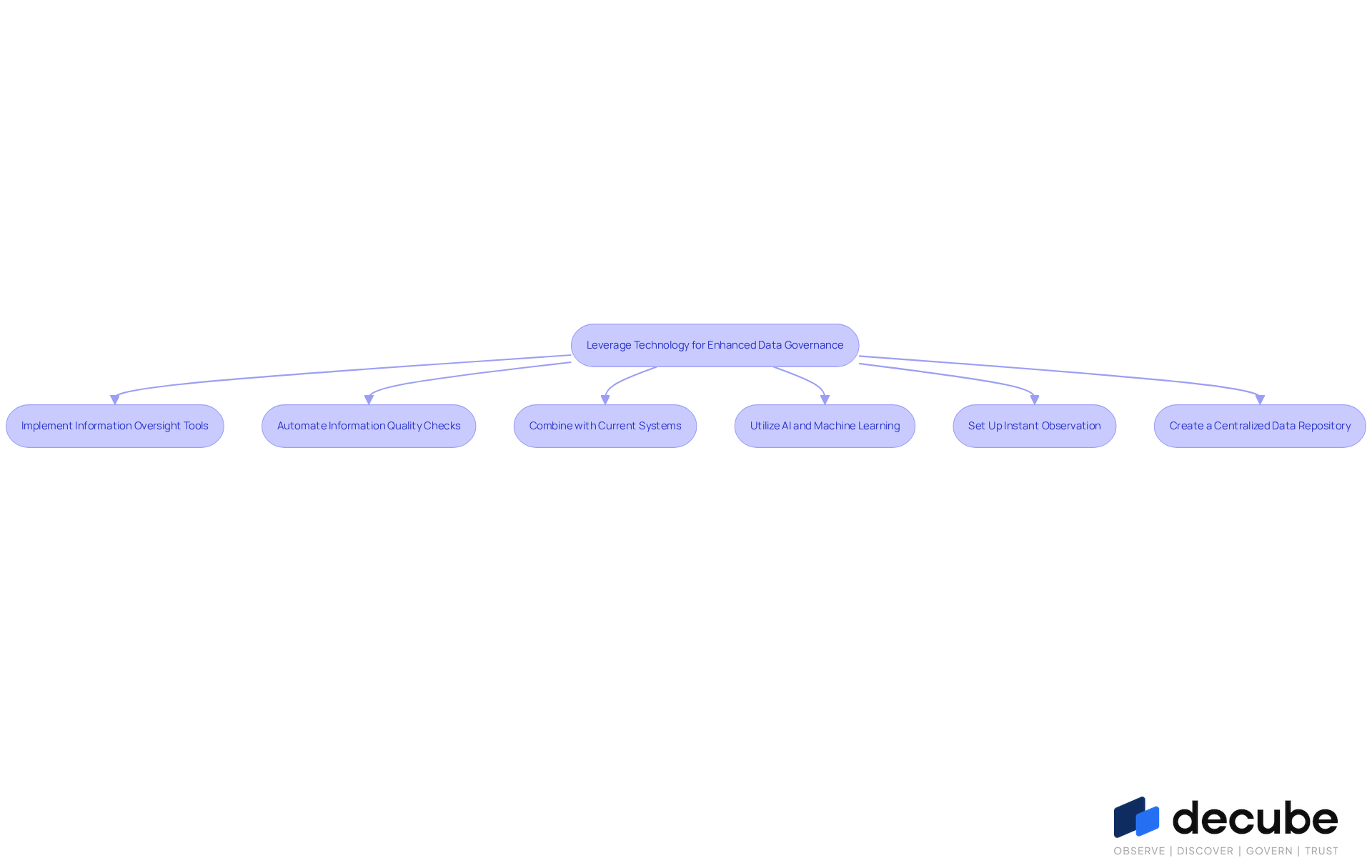

Leverage Technology for Enhanced Data Governance

Organizations often struggle with data governance due to the complexities of managing diverse information resources effectively. They can significantly enhance their data governance efforts by leveraging advanced technology in the following ways:

- Implement Information Oversight Tools: Organizations should utilize specialized software solutions designed for information management, including information cataloging, lineage tracking, and compliance management tools. These tools not only streamline management processes but also boost information visibility, allowing organizations to handle their resources more effectively.

- Automate Information Quality Checks: Automation plays a crucial role in running regular information quality assessments that identify issues such as duplicates, inconsistencies, and missing values. This proactive method helps uphold high quality standards and reflects the growing use of automation in data management, which is anticipated to become standard practice by 2026.

- Integrate with Existing Systems: Ensure that governance tools integrate seamlessly with existing information management systems. This integration enables a smooth flow of information, improves the efficiency of management practices, reduces the risk of data silos, and fosters cooperation across departments.

- Utilize AI and Machine Learning: Organizations should leverage AI and machine learning to improve information management processes such as anomaly detection and predictive analytics. These technologies provide valuable insights that guide governance decisions and strengthen compliance, making them vital to contemporary information management strategies.

- Set Up Real-Time Monitoring: Deploying real-time monitoring solutions allows organizations to track data usage and adherence to regulatory standards. This capability enables quick responses to potential problems, protecting both information integrity and compliance.

- Create a Centralized Data Repository: Developing a centralized repository for all data management documentation, policies, and procedures is crucial. This repository should be easily accessible to all stakeholders, promoting transparency and collaboration, which are vital for effective governance in today's data-driven landscape.

Without a cohesive strategy, organizations risk falling behind in their data governance efforts, potentially compromising their data policies and operational integrity.
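The automated quality checks and anomaly detection described above can be sketched with the Python standard library alone. This is an illustrative assumption, not how any particular tool implements them: `quality_report` flags duplicate keys, missing required fields, and values outside an allowed set, while `is_anomalous` uses a simple z-score against historical values as a stand-in for the more sophisticated, ML-based detection that dedicated platforms provide. The field names, sample rows, and the threshold of 3 standard deviations are hypothetical.

```python
import statistics
from collections import Counter


def quality_report(rows, key, required, allowed_values):
    """Flag duplicate keys, missing required fields, and values outside an
    allowed set. `rows` is a list of dicts, e.g. records from one table."""
    key_counts = Counter(row.get(key) for row in rows)
    duplicates = [k for k, count in key_counts.items() if count > 1]
    missing = [
        (row.get(key), field)
        for row in rows
        for field in required
        if row.get(field) in (None, "")
    ]
    inconsistent = [
        (row.get(key), field, row[field])
        for row in rows
        for field, allowed in allowed_values.items()
        if row.get(field) not in (None, "") and row[field] not in allowed
    ]
    return {"duplicates": duplicates, "missing": missing, "inconsistent": inconsistent}


def is_anomalous(history, latest, threshold=3.0):
    """Simple z-score test: True if `latest` lies more than `threshold`
    sample standard deviations from the mean of `history` (needs >= 2 values)."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return latest != mean
    return abs(latest - mean) / stdev > threshold


rows = [
    {"id": 1, "email": "a@example.com", "country": "DE"},
    {"id": 2, "email": "", "country": "FR"},
    {"id": 2, "email": "b@example.com", "country": "France"},
    {"id": 3, "email": "c@example.com", "country": None},
]
report = quality_report(
    rows,
    key="id",
    required=["email", "country"],
    allowed_values={"country": {"DE", "FR", "US"}},
)
print(report["duplicates"])    # [2]
print(report["missing"])       # [(2, 'email'), (3, 'country')]
print(report["inconsistent"])  # [(2, 'country', 'France')]

daily_row_counts = [1000, 1020, 980, 1010, 990]
print(is_anomalous(daily_row_counts, 400))   # True
print(is_anomalous(daily_row_counts, 1005))  # False
```

Scheduling checks like these after each data load, and routing their findings to the owners named in the policy, is one way to connect automated quality monitoring back to the governance roles defined earlier.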

Conclusion

In today's data-driven environment, effective data governance policies are essential for organizational success. By clearly defining the purpose, roles, information classification, and compliance requirements, organizations can build a strong framework that meets regulatory standards and improves decision-making. Proactive monitoring and regular updates are crucial to keeping policies relevant and effective in the face of evolving challenges.

Throughout the article, we have outlined key steps for developing and maintaining a data governance policy. From assessing the current information landscape and engaging stakeholders to implementing technology solutions and conducting regular audits, each step plays a critical role in fostering a culture of accountability and collaboration. Best practices, such as ongoing training and establishing a dedicated management committee, further reinforce the commitment to high-quality information governance.

In a world where data is increasingly viewed as a strategic asset, organizations must prioritize their data governance initiatives. Embracing advanced technologies and a continuous improvement mindset will enhance compliance and security while driving organizational growth. Ultimately, organizations that prioritize data governance not only safeguard their assets but also unlock new avenues for innovation and competitive advantage.

Frequently Asked Questions

What are the key components of a data governance policy?

The key components include Purpose and Scope, Roles and Responsibilities, Information Classification, Information Quality Standards, Compliance and Regulatory Requirements, and Monitoring and Reporting.

Why is defining the Purpose and Scope important in a data governance policy?

Defining the Purpose and Scope is crucial as it aligns the information management policy with organizational objectives, ensuring that oversight efforts are concentrated and efficient.

What roles are typically involved in information governance?

Key roles include information stewards, information owners, and governance committees, which establish accountability and clarity in management processes.

How does Information Classification benefit organizations?

Information Classification helps organizations categorize information based on sensitivity and significance, enabling them to implement appropriate security measures and compliance protocols.

What are Information Quality Standards and why are they important?

Information Quality Standards define metrics for accuracy, completeness, and consistency of information, ensuring that organizations can rely on high-quality data for informed decision-making.

What compliance and regulatory requirements should organizations consider?

Organizations should outline legal obligations related to information management, such as GDPR, HIPAA, and SOC 2, to adhere to necessary compliance standards and mitigate risks.

Why is Monitoring and Reporting essential in a data governance policy?

Monitoring and Reporting processes are essential for overseeing information management practices, assessing their effectiveness, and identifying areas for enhancement to ensure ongoing adherence.

What is the projected growth of the data governance market?

The data governance market is expected to expand significantly, reaching USD 24.07 billion by 2034.

List of Sources

- Define Key Components of a Data Governance Policy

- Data Governance Market Size, Share | Trends Analysis [2034] (https://fortunebusinessinsights.com/data-governance-market-108640)

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)

- Top data governance trends: The future of data in 2026 - Murdio (https://murdio.com/insights/data-governance-trends)

- Why data governance is the cornerstone of trustworthy AI in 2026 (https://strategy.com/software/blog/why-data-governance-is-the-cornerstone-of-trustworthy-ai-in-2026)

- Implement Steps for Developing Your Data Governance Policy

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- How To Make Data Governance A Competitive Advantage (https://forbes.com/councils/forbestechcouncil/2026/04/09/how-to-make-data-governance-a-competitive-advantage)

- Data Governance Market Size, Share | Trends Analysis [2034] (https://fortunebusinessinsights.com/data-governance-market-108640)

- Establish Best Practices for Maintaining Data Governance Policies

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Governance in 2026: Key Strategies for Enterprise Compliance and Innovation (https://community.trustcloud.ai/article/data-governance-in-2025-what-enterprises-need-to-know-today)

- Data Governance Best Practices for 2026 | Drive Business Value with Trusted Data (https://alation.com/blog/data-governance-best-practices)

- How To Make Data Governance A Competitive Advantage (https://forbes.com/councils/forbestechcouncil/2026/04/09/how-to-make-data-governance-a-competitive-advantage)

- Leverage Technology for Enhanced Data Governance

- Real-Time Data Integration Statistics – 39 Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/real-time-data-integration-growth-rates)

- Data Governance in 2026: How AI and Cloud Will Redefine Enterprise Control | Zenxsys Blog (https://zenxsys.com/blog/data-governance-in-2026-how-ai-and-cloud-will-redefine-enterprise-control-blog)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Why data governance is the cornerstone of trustworthy AI in 2026 (https://strategy.com/software/blog/why-data-governance-is-the-cornerstone-of-trustworthy-ai-in-2026)

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)
