
Master Schema Database Design: Best Practices for Data Engineers

Optimize your database schema design with best practices that improve efficiency and data integrity.

Introduction

A well-designed database schema serves as the backbone of any effective information system, fundamentally shaping how data is organized, accessed, and maintained. For data engineers, mastering schema design is crucial; it not only enhances performance and integrity but also facilitates scalability in an ever-evolving digital landscape. Given the increasing complexity of data environments, engineers must consider how to ensure their schema designs meet both current and future demands while upholding data integrity and governance.

Understand the Importance of Schema Design in Databases

The database schema is the foundational framework of an information system, dictating how information is organized, stored, and retrieved. A well-structured schema significantly enhances information integrity, optimizes efficiency, and facilitates scalability. It makes the relationships between data explicit, allowing the system to handle queries and transactions effectively. For example, normalization minimizes redundancy and strengthens consistency, while denormalization can improve read efficiency in specific scenarios. Designing an efficient structure is crucial for engineers, as it directly influences key performance metrics, including query execution speed and overall system effectiveness.
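To make the normalization and denormalization trade-off concrete, here is a minimal sketch using Python's built-in `sqlite3` module. SQLite stands in for whatever engine you actually use, and the table and column names (`customer`, `customer_order`, `order_report`) are hypothetical.

```python
import sqlite3

# In-memory SQLite database; all table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Normalized: each customer's city is stored once; orders reference the customer by key.
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    email       TEXT NOT NULL UNIQUE,
    city        TEXT NOT NULL
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    amount      REAL NOT NULL
);
INSERT INTO customer VALUES (1, 'ana@example.com', 'Lisbon');
INSERT INTO customer_order VALUES (10, 1, 25.0), (11, 1, 40.0);
""")

# Changing the city touches exactly one row, so orders cannot disagree about it.
conn.execute("UPDATE customer SET city = 'Porto' WHERE customer_id = 1")

# Denormalized read model: city is copied onto every order row so reports skip the join.
conn.execute("""
CREATE TABLE order_report AS
SELECT o.order_id, o.amount, c.city
FROM customer_order AS o
JOIN customer AS c USING (customer_id)
""")
rows = conn.execute(
    "SELECT order_id, amount, city FROM order_report ORDER BY order_id"
).fetchall()
print(rows)  # [(10, 25.0, 'Porto'), (11, 40.0, 'Porto')]

# The trade-off: the copy does not follow later changes unless it is rebuilt or synced.
conn.execute("UPDATE customer SET city = 'Faro' WHERE customer_id = 1")
stale = conn.execute("SELECT DISTINCT city FROM order_report").fetchall()
print(stale)  # [('Porto',)]
```

In a production system the denormalized copy would more likely be a materialized view or a table refreshed by a scheduled job; SQLite has no materialized views, which is why a plain table is used here.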

Adhering to established practices, such as implementing suitable indexing and maintaining referential integrity, can lead to notable improvements in application performance and user experience. Organizations with robust structural designs tend to experience fewer inconsistencies and better operational effectiveness, which supports better decision-making and a competitive advantage. Experts emphasize that a well-structured framework not only enables rapid information retrieval but also ensures that sensitive data remains secure and accessible only to authorized individuals. Investing in thoughtful database schema design is vital for adapting to changing business requirements and achieving sustained success.
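The two practices named above, indexing and referential integrity, can be illustrated with a short sketch. It again assumes SQLite via Python's `sqlite3` module and uses hypothetical table and index names. Note that SQLite only enforces foreign keys when `PRAGMA foreign_keys = ON` is set on the connection, whereas PostgreSQL always enforces them.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite enforces foreign keys only when this pragma is enabled on the connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    email       TEXT NOT NULL UNIQUE
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    created_at  TEXT NOT NULL
);
-- Index the foreign-key column, since joins and filters on it are the common access path.
CREATE INDEX idx_customer_order_customer_id ON customer_order(customer_id);
INSERT INTO customer VALUES (1, 'ana@example.com');
INSERT INTO customer_order VALUES (10, 1, '2024-01-05');
""")

# Referential integrity: an order that points at a non-existent customer is rejected.
try:
    conn.execute("INSERT INTO customer_order VALUES (11, 99, '2024-01-06')")
    fk_rejected = False
except sqlite3.IntegrityError:
    fk_rejected = True

# Ask the query planner whether a lookup by customer_id uses the index.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT order_id FROM customer_order WHERE customer_id = ?", (1,)
).fetchall()
uses_index = any("idx_customer_order_customer_id" in row[-1] for row in plan)
print(fk_rejected, uses_index)  # True True
```

Checking the query plan after adding an index is a cheap way to confirm the index is actually used; in other engines the equivalent is usually an `EXPLAIN` statement.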

With Decube's automated crawling feature, engineers can keep metadata managed and continuously updated without manual intervention. The feature automatically refreshes metadata as information sources are integrated, improving observability and governance. It also provides secure access control, letting organizations specify who can view or modify information. Furthermore, information agreements (data contracts) foster collaboration among stakeholders and help maintain quality in decentralized information management environments. By integrating these elements, organizations can achieve a more cohesive structural design.
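The source does not describe how Decube's crawler works internally, so the following is only a generic sketch of the underlying idea: periodically snapshot schema metadata from the database catalog and diff successive snapshots to detect changes. The functions `crawl_schema` and `diff_schema` are hypothetical, and SQLite's `sqlite_master` table and `PRAGMA table_info` stand in for a real catalog such as `information_schema`.

```python
import sqlite3

def crawl_schema(conn: sqlite3.Connection) -> dict:
    """Hypothetical crawler: snapshot {table: [(column, declared type), ...]} from the catalog."""
    tables = [name for (name,) in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    snapshot = {}
    for table in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        cols = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        snapshot[table] = [(col[1], col[2]) for col in cols]
    return snapshot

def diff_schema(old: dict, new: dict) -> list:
    """Describe what changed between two snapshots produced by crawl_schema."""
    changes = [f"added table {t}" for t in sorted(new.keys() - old.keys())]
    changes += [f"dropped table {t}" for t in sorted(old.keys() - new.keys())]
    for table in sorted(old.keys() & new.keys()):
        old_cols, new_cols = set(old[table]), set(new[table])
        for col, typ in sorted(new_cols - old_cols):
            changes.append(f"{table}: added column {col} {typ}")
        for col, typ in sorted(old_cols - new_cols):
            changes.append(f"{table}: removed column {col} {typ}")
    return changes

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (customer_id INTEGER PRIMARY KEY, email TEXT)")
before = crawl_schema(conn)
conn.execute("ALTER TABLE customer ADD COLUMN city TEXT")
conn.execute("CREATE TABLE region (region_id INTEGER PRIMARY KEY)")
after = crawl_schema(conn)
print(diff_schema(before, after))
# ['added table region', 'customer: added column city TEXT']
```

Running such a crawl on a schedule, or whenever a new source is connected, is what keeps a metadata catalog current without manual edits.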

Implement Best Practices for Schema Creation and Management

To create and manage effective database schemas, data engineers should adhere to several best practices:

- Normalization: Apply normalization to eliminate redundancy and ensure data integrity. Aim for at least third normal form (3NF) to keep the structure clean; normalization is crucial for avoiding update anomalies and ensuring data consistency. It also makes the design easier to adapt as business needs evolve.

- Naming Conventions: Use clear and consistent names for tables and columns to improve readability and maintainability. This practice minimizes confusion and streamlines collaboration among team members.

- Documentation: Keep schema documentation up to date, including relationships and constraints, so that team members can understand the design. Well-documented structures support better communication and knowledge transfer within teams.

- Version Control: Treat structural changes like code changes by using version control to track modifications and enable rollbacks. This approach makes structural updates more reliable and safer to manage.

- Performance Monitoring: Regularly monitor system efficiency and adjust the structure as needed to improve query execution times. Continuous performance analysis helps identify bottlenecks and improve responsiveness, ensuring that the database meets user demands.
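Version-controlled schema changes are usually expressed as ordered migrations. The following minimal sketch assumes SQLite and a hypothetical in-code `MIGRATIONS` list (names follow a snake_case convention). It records the applied version in `PRAGMA user_version` and wraps each step in an explicit transaction so a failed step rolls back. Dedicated tools such as Flyway, Liquibase, or Alembic implement the same idea with more safeguards.

```python
import sqlite3

# Hypothetical ordered migrations: (upgrade SQL, downgrade SQL). In a real project each
# pair would live in its own version-controlled file.
MIGRATIONS = [
    (
        "CREATE TABLE customer (customer_id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)",
        "DROP TABLE customer",
    ),
    (
        "CREATE TABLE customer_order ("
        " order_id INTEGER PRIMARY KEY,"
        " customer_id INTEGER NOT NULL REFERENCES customer(customer_id))",
        "DROP TABLE customer_order",
    ),
]

def current_version(conn: sqlite3.Connection) -> int:
    return conn.execute("PRAGMA user_version").fetchone()[0]

def apply_step(conn: sqlite3.Connection, sql: str, new_version: int) -> None:
    """Run one migration and bump the version atomically (SQLite supports transactional DDL)."""
    conn.execute("BEGIN")
    try:
        conn.execute(sql)
        conn.execute(f"PRAGMA user_version = {int(new_version)}")
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")
        raise

def migrate(conn: sqlite3.Connection, target: int) -> int:
    """Upgrade or downgrade one step at a time until the schema is at `target`."""
    if not 0 <= target <= len(MIGRATIONS):
        raise ValueError(f"unknown schema version {target}")
    version = current_version(conn)
    while version != target:
        if version < target:
            sql, next_version = MIGRATIONS[version][0], version + 1
        else:
            sql, next_version = MIGRATIONS[version - 1][1], version - 1
        apply_step(conn, sql, next_version)
        version = next_version
    return version

# isolation_level=None disables implicit transactions so BEGIN/COMMIT are explicit.
conn = sqlite3.connect(":memory:", isolation_level=None)
migrate(conn, 2)  # apply both migrations
migrate(conn, 1)  # roll back the second one
tables = [name for (name,) in conn.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
print(current_version(conn), tables)  # 1 ['customer']
```

Because every step carries its own downgrade, rolling back is a matter of migrating to an earlier version rather than hand-editing the live schema.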

Moreover, implementing data contracts can significantly improve collaboration among stakeholders, ensuring that the data products derived from these schemas are reliable and of high quality. By establishing clear agreements on data definitions and expectations, engineers can turn raw data into dependable assets and foster a culture of accountability and trust within decentralized management frameworks.
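A data contract can be as simple as a machine-checkable description of the fields a producer promises to deliver. The sketch below assumes a hypothetical contract format (`FieldSpec` and `ORDERS_CONTRACT`) and checks records against it in plain Python; real deployments often express contracts in YAML or JSON Schema and enforce them inside the pipeline.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FieldSpec:
    dtype: type
    required: bool = True

# Hypothetical contract agreed between the team producing orders and its consumers.
ORDERS_CONTRACT = {
    "order_id": FieldSpec(int),
    "customer_id": FieldSpec(int),
    "amount": FieldSpec(float),
    "coupon_code": FieldSpec(str, required=False),
}

def check_contract(record: dict, contract: dict) -> list:
    """Return human-readable contract violations for one record (empty list = valid)."""
    violations = []
    for name, spec in contract.items():
        value = record.get(name)
        if value is None:
            if spec.required:
                violations.append(f"missing required field {name!r}")
            continue
        # bool is a subclass of int in Python, so reject it explicitly for non-bool fields.
        if not isinstance(value, spec.dtype) or (isinstance(value, bool) and spec.dtype is not bool):
            violations.append(f"{name!r} should be {spec.dtype.__name__}, got {type(value).__name__}")
    # Fields the contract does not mention are flagged rather than silently passed on.
    for name in sorted(record.keys() - contract.keys()):
        violations.append(f"unexpected field {name!r}")
    return violations

good = {"order_id": 10, "customer_id": 1, "amount": 25.0}
bad = {"order_id": "10", "amount": 25.0, "region": "EU"}
print(check_contract(good, ORDERS_CONTRACT))  # []
print(check_contract(bad, ORDERS_CONTRACT))
# ["'order_id' should be int, got str", "missing required field 'customer_id'", "unexpected field 'region'"]
```

The point of the design is that producers and consumers share one explicit definition, so a breaking change surfaces as a failed check instead of a silently corrupted report.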

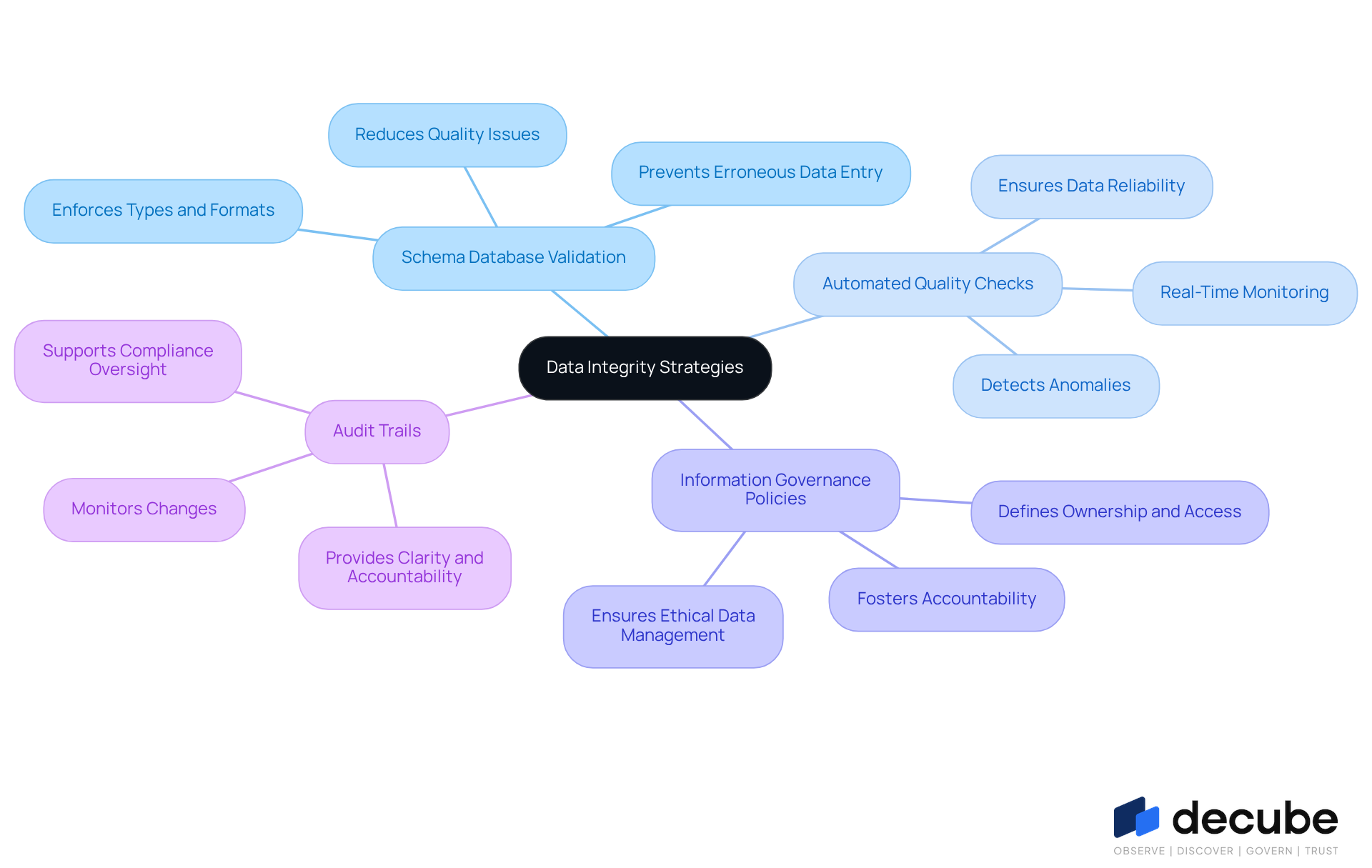

Ensure Data Integrity with Validation and Governance Strategies

Maintaining data integrity is essential, and data engineers can implement several strategies to uphold it effectively:

- Schema validation: Implement schema validation rules that enforce types, formats, and constraints at the database level. This proactive approach prevents erroneous information from being entered, significantly reducing downstream data quality issues. Notably, only 12% of organizations report that their information is of sufficient quality and accessibility for AI, underscoring the need for robust validation practices.

- Automated Quality Checks: Employ automated checks to validate data as it flows through pipelines. This real-time monitoring enables the detection of anomalies and inconsistencies, keeping information accurate and reliable throughout its lifecycle. Organizations that build integrity into their operating models are better positioned to meet regulatory expectations and use AI dependably.

- Governance Policies: Develop clear governance policies that define ownership, access controls, and compliance requirements. Such policies ensure that information is managed responsibly and ethically, fostering a culture of accountability. As organizations transition to decentralized information architectures, effective governance becomes increasingly vital.

- Audit Trails: Maintain comprehensive audit trails to track changes made to the schema and information. This practice provides transparency and accountability in information management, enabling organizations to verify compliance and address any issues that arise. Industry predictions suggest that by 2026, governance will be built into how information is organized and monitored from the outset, reinforcing the importance of these practices.
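Database-level validation and audit trails can both be expressed in the schema itself. The following sketch assumes SQLite and a hypothetical `payment` table: `CHECK` constraints reject invalid rows on write, and an `AFTER UPDATE` trigger records every change to `amount` in an audit table. Other engines offer the same building blocks (constraints, triggers, or change data capture).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Validation at the database level: CHECK constraints reject bad rows on write.
CREATE TABLE payment (
    payment_id INTEGER PRIMARY KEY,
    amount     REAL NOT NULL CHECK (amount > 0),
    currency   TEXT NOT NULL CHECK (length(currency) = 3 AND currency = upper(currency))
);
-- Audit trail: each change to amount is recorded with its old and new values.
CREATE TABLE payment_audit (
    audit_id   INTEGER PRIMARY KEY,
    payment_id INTEGER NOT NULL,
    old_amount REAL,
    new_amount REAL,
    changed_at TEXT NOT NULL DEFAULT (datetime('now'))
);
CREATE TRIGGER trg_payment_audit AFTER UPDATE OF amount ON payment
BEGIN
    INSERT INTO payment_audit (payment_id, old_amount, new_amount)
    VALUES (OLD.payment_id, OLD.amount, NEW.amount);
END;
""")

conn.execute("INSERT INTO payment VALUES (1, 20.0, 'EUR')")
rejected = []
for row in [(2, -5.0, "EUR"), (3, 10.0, "eur")]:  # negative amount, lowercase currency
    try:
        conn.execute("INSERT INTO payment VALUES (?, ?, ?)", row)
    except sqlite3.IntegrityError:
        rejected.append(row[0])

conn.execute("UPDATE payment SET amount = 25.0 WHERE payment_id = 1")
audit = conn.execute("SELECT payment_id, old_amount, new_amount FROM payment_audit").fetchall()
print(rejected, audit)  # [2, 3] [(1, 20.0, 25.0)]
```

One SQLite-specific caveat: declared column types are not enforced by default, so type checks need either `STRICT` tables (SQLite 3.37 and later) or explicit `CHECK (typeof(...) = ...)` constraints.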

Incorporating these strategies not only enhances information integrity but also bolsters the overall quality and reliability of management practices, empowering organizations to leverage their assets effectively.

Leverage Advanced Technologies for Enhanced Schema Efficiency

Integrating advanced technologies can significantly improve schema efficiency, particularly when using a unified data trust platform like Decube. Data engineers should consider the following approaches:

- AI-Driven Tools: Use AI-driven tools that automate repetitive tasks, suggest improvements, and predict efficiency issues based on historical data. These tools streamline the design process, allowing engineers to concentrate on more strategic initiatives.

- Real-Time Monitoring: Implement real-time monitoring to continuously track structural performance. For instance, Decube enables business users to identify issues in reports or dashboards, providing insight into how data flows across components and breaking down incidents by attributes. This visibility supports timely adjustments that keep the database schema efficient and responsive to evolving data patterns. Organizations can use real-time insights to pinpoint bottlenecks and optimize query performance, improving overall efficiency.

- Cloud-Based Solutions: Adopt cloud-based database solutions that offer scalability and flexibility. These platforms allow structural modifications as data requirements evolve, so the database can grow alongside the organization. The ability to adjust resources as necessary is crucial for maintaining efficiency during peak usage.

- Data Modeling Tools: Employ advanced data modeling tools to visualize structural relationships and dependencies. Visualization helps in creating and managing complex data systems, making potential issues easier to identify and structural arrangements easier to improve.
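Data modeling tools typically derive their diagrams from the catalog's foreign-key metadata. As a rough sketch of that step, assuming SQLite and hypothetical tables, the code below reads `PRAGMA foreign_key_list` for each table and emits Graphviz DOT text that any DOT renderer could draw. The helper names `foreign_key_edges` and `to_dot` are illustrative, not part of any tool's API.

```python
import sqlite3

def foreign_key_edges(conn: sqlite3.Connection) -> list:
    """Return (child_table, child_column, parent_table, parent_column) for every foreign key."""
    tables = [name for (name,) in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    edges = []
    for table in tables:
        # Row layout: (id, seq, table, from, to, on_update, on_delete, match)
        for fk in conn.execute(f'PRAGMA foreign_key_list("{table}")'):
            # "to" is NULL when the reference implicitly targets the parent's primary key.
            edges.append((table, fk[3], fk[2], fk[4] or "(primary key)"))
    return edges

def to_dot(edges: list) -> str:
    """Render the edges as a Graphviz DOT digraph (child -> parent)."""
    lines = ["digraph schema {"]
    for child, column, parent, parent_column in edges:
        lines.append(f'  "{child}" -> "{parent}" [label="{column} -> {parent_column}"];')
    lines.append("}")
    return "\n".join(lines)

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customer (customer_id INTEGER PRIMARY KEY);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id)
);
CREATE TABLE order_item (
    order_item_id INTEGER PRIMARY KEY,
    order_id      INTEGER NOT NULL REFERENCES customer_order(order_id)
);
""")
edges = foreign_key_edges(conn)
print(to_dot(edges))
```

The same edge list can also feed impact analysis, for example finding every table that depends on `customer` before changing it.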

By integrating these technologies, engineers can improve the efficiency of their database schema, resulting in better database functionality and more effective information management. Experts also underscore the value of real-time visibility in monitoring performance across data components, illustrating the practical application of these technologies.

Conclusion

A well-structured database schema is vital for optimizing data management and ensuring information integrity. Its design significantly influences the efficiency and scalability of information systems, making it a critical focus for data engineers. By understanding the intricacies of schema design, engineers can build frameworks that not only improve performance but also accommodate the evolving needs of organizations.

This article has highlighted key practices for effective schema creation and management, including:

- Normalization

- Consistent naming conventions

- Robust documentation

Validation and governance strategies, such as automated quality checks and audit trails, help maintain data integrity. Moreover, advanced technologies like AI-driven tools and cloud-based solutions can markedly improve schema efficiency, enabling organizations to adapt smoothly to changing data requirements.

Ultimately, investing in thoughtful schema design and management is essential for achieving operational excellence and maintaining a competitive edge in today's data-driven landscape. By adopting these best practices and technologies, data engineers can transform their databases into powerful tools that facilitate informed decision-making and foster a culture of accountability and trust. Embracing these strategies will not only enhance database performance but also empower organizations to leverage their data as a strategic asset for future growth.

Frequently Asked Questions

What is the role of a database schema in information systems?

The database schema serves as the foundational framework that dictates how information is organized, stored, and retrieved within an information system.

How does a well-structured database schema affect information integrity and efficiency?

A well-structured schema enhances information integrity, optimizes efficiency, and facilitates scalability by making the relationships between data explicit, which helps the system handle queries and transactions effectively.

What are normalization and denormalization in the context of schema design?

Normalization minimizes redundancy and bolsters consistency in a database, while denormalization can improve read efficiency in specific scenarios.

Why is the design of an efficient schema structure crucial for engineers?

The design directly influences key performance metrics such as query execution speed and overall system effectiveness, making it vital for engineers to focus on efficient schema design.

What practices can enhance application performance and user experience in schema design?

Implementing suitable indexing and maintaining referential integrity are established practices that can lead to notable enhancements in application performance and user experience.

How does a robust structural design benefit organizations?

Organizations with robust structural designs experience fewer inconsistencies, improved operational effectiveness, better decision-making, and a competitive advantage.

What is the significance of security in database schema design?

A well-structured framework ensures that sensitive data remains secure and accessible only to authorized individuals, enhancing overall data security.

How does Decube's automated crawling feature contribute to schema management?

It manages metadata by automatically refreshing it as information sources are integrated, improving observability and governance without manual intervention.

What are information agreements, and how do they foster collaboration?

Information agreements facilitate collaboration among stakeholders by ensuring quality is maintained in decentralized information management environments.

What overall benefits can organizations achieve through thoughtful database schema design?

Thoughtful database schema design supports operational efficiency, information security, and adaptability to changing business requirements, ultimately leading to sustained success.

