4 Best Practices for Enhancing Sensitive Data Security

Enhance sensitive data security with best practices for classification, access control, encryption, and monitoring.

Introduction

Organizations struggle to keep sensitive data secure as cyber threats and regulatory demands escalate. Sound data security practices protect valuable information and strengthen operational integrity. Which strategies deliver robust data protection? This article outlines four essential practices for fortifying sensitive data security:

- Data classification and inventory

- Role-based access controls

- Data encryption

- Continuous monitoring

Adopting these practices is essential for organizations aiming to navigate the complexities of modern data governance effectively.

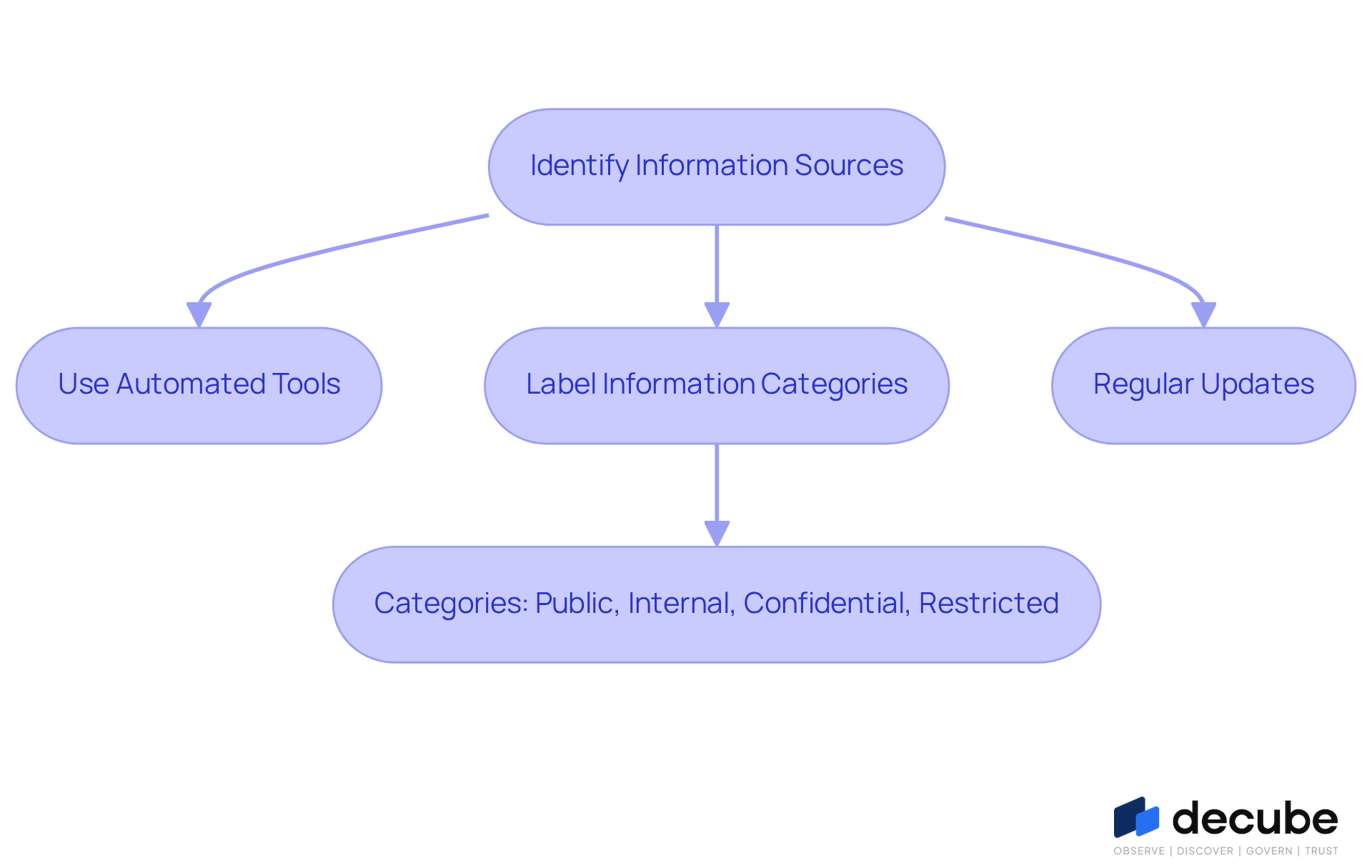

Classify and Inventory Sensitive Data

To safeguard sensitive information, organizations first need to know what data they hold and how sensitive it is. This process begins with identifying all information sources, including databases, file systems, and cloud storage. Automated tools, such as Decube's crawling feature, help by connecting to different information sources and refreshing metadata automatically. Labeling information with categories like 'Public', 'Internal', 'Confidential', and 'Restricted' then lets organizations apply the right level of protection to each asset.
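As a rough illustration of the labeling step, the sketch below assigns one of the four categories to a column based on its name. The patterns, the column names, and the choice to fall back to 'Internal' for unmatched columns are all assumptions made for this example; they are not how Decube or any specific tool classifies data, and a real inventory would also inspect the data itself.

```python
import re
from enum import IntEnum
from typing import Iterable


class Sensitivity(IntEnum):
    """The four labels named above, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical name patterns, checked from most to least sensitive.
RULES = [
    (re.compile(r"ssn|passport|card_number|password", re.I), Sensitivity.RESTRICTED),
    (re.compile(r"email|phone|address|balance|salary", re.I), Sensitivity.CONFIDENTIAL),
    (re.compile(r"^(product_name|country|currency)$", re.I), Sensitivity.PUBLIC),
]


def classify_column(name: str) -> Sensitivity:
    """Return the label of the first matching rule.

    Unmatched columns fall back to INTERNAL so that unknown data is
    never treated as public by default.
    """
    for pattern, label in RULES:
        if pattern.search(name):
            return label
    return Sensitivity.INTERNAL


def classify_table(columns: Iterable[str]) -> Sensitivity:
    """A table is treated as being as sensitive as its most sensitive column."""
    return max((classify_column(c) for c in columns), default=Sensitivity.INTERNAL)


if __name__ == "__main__":
    for col in ["customer_email", "card_number", "country", "notes"]:
        print(col, "->", classify_column(col).name)
```

Ordering the labels as integers makes it easy to roll column labels up to a table-level label, which is what an inventory usually records.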

Regular updates to this classification are essential to reflect changes in information usage and evolving regulatory requirements. For instance, a financial institution may classify customer financial records as 'Confidential' and apply stricter access controls to them. Automated classification tools are expected to see wider adoption in 2026, reflecting growing recognition of their role in information governance.

Specialists in information governance highlight that efficient classification not only improves protection but also supports compliance with regulations such as GDPR and PDPA. By maintaining a precise inventory of information assets, organizations can prioritize their protective efforts and reduce potential risks. Failing to classify and inventory data, by contrast, can leave significant security vulnerabilities undetected, which can result in severe penalties and reputational damage. Ultimately, neglecting these practices jeopardizes not only data security but also organizational integrity.

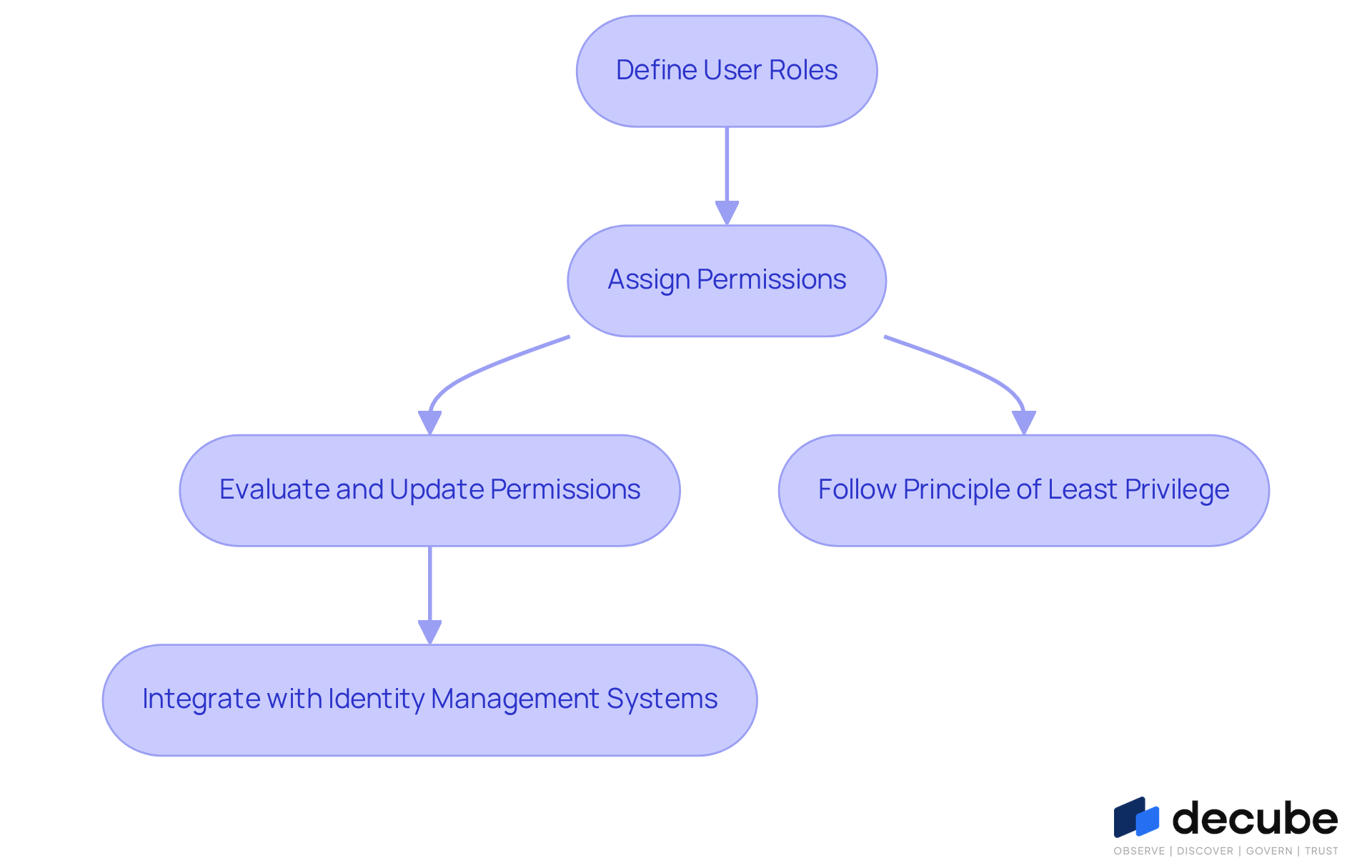

Enforce Role-Based Access Controls

Establishing role-based access controls (RBAC) is essential for sensitive data security in any organization. Start by clearly defining user roles based on specific job functions and responsibilities. Each role should be granted permissions that follow the principle of least privilege, so that users can access only the information necessary for their tasks. For instance, a data analyst may need permission to view certain datasets for analysis but should not have the authority to alter or remove sensitive information.
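The data analyst example can be sketched as a small deny-by-default permission check. The role names and actions below are hypothetical and chosen only to mirror the paragraph above; a production system would load them from an identity or policy service rather than hard-code them.

```python
# Hypothetical roles: each one is granted only what its job function needs.
ROLE_PERMISSIONS = {
    "data_analyst": frozenset({"read"}),
    "data_engineer": frozenset({"read", "write"}),
    "data_owner": frozenset({"read", "write", "delete"}),
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


if __name__ == "__main__":
    print(is_allowed("data_analyst", "read"))    # analyst may view datasets
    print(is_allowed("data_analyst", "delete"))  # but may not remove them
```

Keeping the check deny-by-default means a misspelled or newly created role receives no access until someone grants it explicitly.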

Regular evaluations and updates of permissions are essential to reflect any changes in roles or organizational structure. Additionally, combining RBAC with identity management systems can simplify user provisioning and de-provisioning procedures, thereby improving overall protection and compliance.
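A periodic permission review can be as simple as comparing what each user currently holds against what their current role allows. The sketch below is one possible shape for such a check; the function name, the data layout, and the sample users are assumptions for illustration.

```python
def excess_grants(user_roles, user_grants, role_permissions):
    """Return {user: permissions held beyond what the current role allows}.

    Users with no current role (for example, after de-provisioning)
    have every remaining grant flagged.
    """
    findings = {}
    for user, grants in user_grants.items():
        allowed = role_permissions.get(user_roles.get(user), set())
        extra = set(grants) - set(allowed)
        if extra:
            findings[user] = extra
    return findings


if __name__ == "__main__":
    role_permissions = {"data_analyst": {"read"}, "data_engineer": {"read", "write"}}
    user_roles = {"alice": "data_analyst", "bob": "data_engineer"}  # carol has left
    user_grants = {
        "alice": {"read", "write"},  # moved from engineer to analyst, kept write
        "bob": {"read", "write"},
        "carol": {"read"},
    }
    print(excess_grants(user_roles, user_grants, role_permissions))
```

Running a comparison like this on a schedule surfaces permissions that outlived a role change, which is the drift that regular reviews are meant to catch.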

Decube's automated crawling capability refreshes metadata automatically once your sources are linked, which allows access permissions to be updated in real time. This not only enhances data observability but also helps ensure that only authorized users can view or edit sensitive data, reinforcing the security framework.

Research from The Brainy Insights indicates that RBAC solutions can cut unauthorized access incidents by almost 30%, which aligns well with Decube's automated features for secure access management. The RBAC market is also projected to reach USD 26.5 billion by 2032, underscoring the growing relevance of these controls.

However, managing RBAC can become cumbersome, especially in complex systems. Failure to streamline RBAC processes may lead to inefficiencies and increased security risks. By tackling these challenges and utilizing effective RBAC strategies with Decube's automated features, organizations can significantly enhance their sensitive data security and streamline their operational efficiency.

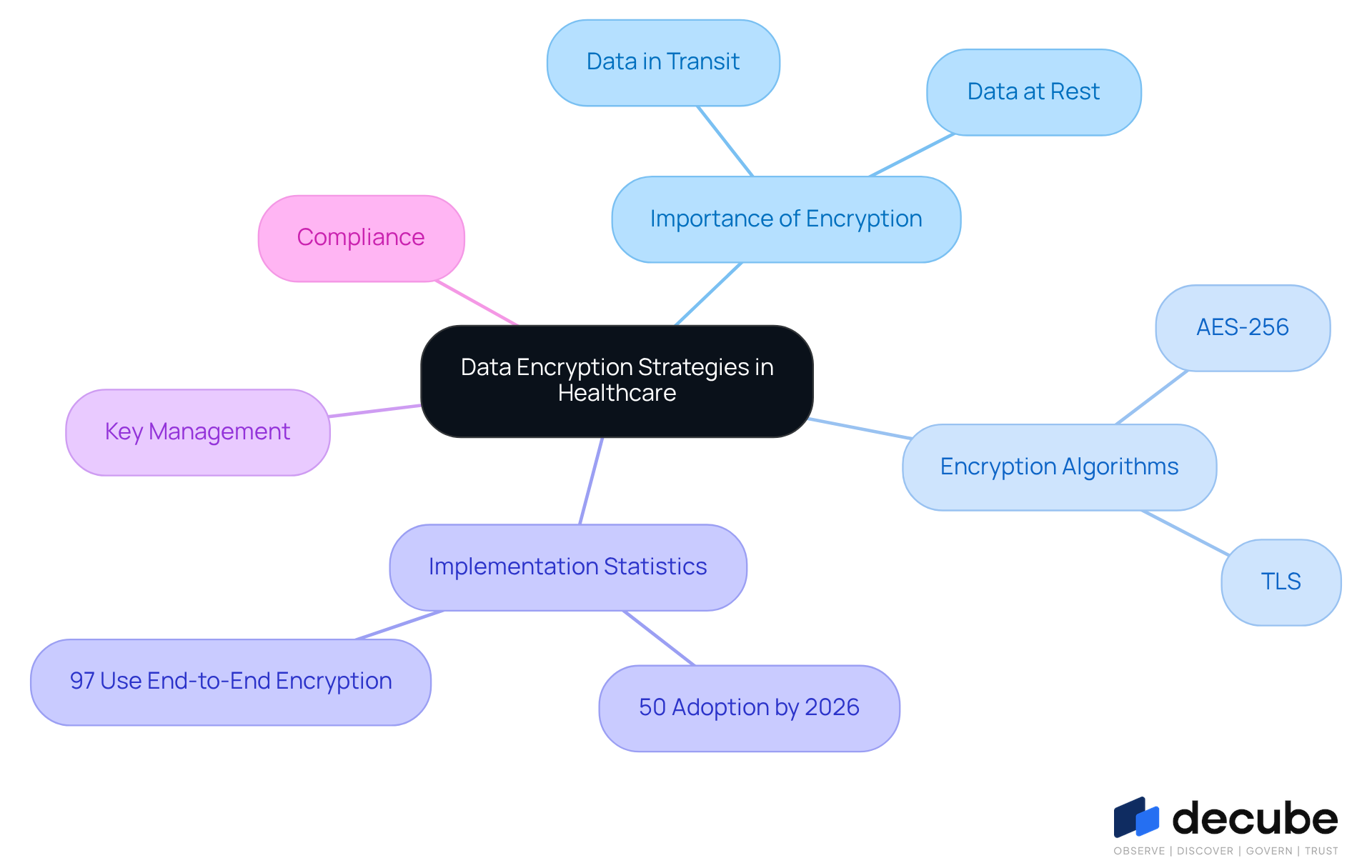

Implement Data Encryption Strategies

Encryption is a core safeguard for sensitive data, and in regulated sectors such as healthcare it is imperative. Organizations should encrypt information at rest, such as databases and file storage, as well as information in transit, including communications over networks.

Strong encryption algorithms, such as AES-256, are essential. For example, healthcare providers can encrypt patient records held in their databases and secure any information transmitted over the internet with protocols like TLS.
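The in-transit half of this advice can be sketched with Python's standard `ssl` module. The function names below are hypothetical, and the TLS 1.2 floor is an assumption for this example rather than a requirement stated in this article. The standard library does not ship an AES implementation, so encrypting records at rest would need a vetted third-party library; the second function only shows what a 256-bit key looks like in practice.

```python
import secrets
import ssl


def make_tls_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context with certificate checks enabled."""
    # create_default_context() turns on certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Assumption for this sketch: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def new_aes256_key() -> bytes:
    """Generate 32 random bytes (256 bits) suitable for use as an AES-256 key."""
    return secrets.token_bytes(32)


if __name__ == "__main__":
    ctx = make_tls_client_context()
    print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
    print(len(new_aes256_key()) * 8, "bit key generated")
```

The key should come from a cryptographically secure source such as `secrets` and be stored separately from the data it protects, which is where key management comes in.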

By 2026, an anticipated 50% of organizations will have implemented AES-256 encryption, reflecting a growing awareness of its significance in safeguarding information. A robust key management strategy is equally vital, since encryption protects data only as long as the keys themselves are safe from unauthorized access.

Encryption methods should be assessed and updated regularly to keep pace with advancing security standards and compliance mandates, especially the upcoming 2026 HIPAA changes, which require more rigorous protection measures.

Security specialists emphasize that end-to-end encryption is a leading strategy for protecting sensitive data, with 97% of protection officers reportedly using it to secure information. For instance, XYZ Healthcare has set a benchmark by using AES-256 encryption to protect patient information.

Establish Continuous Monitoring Protocols

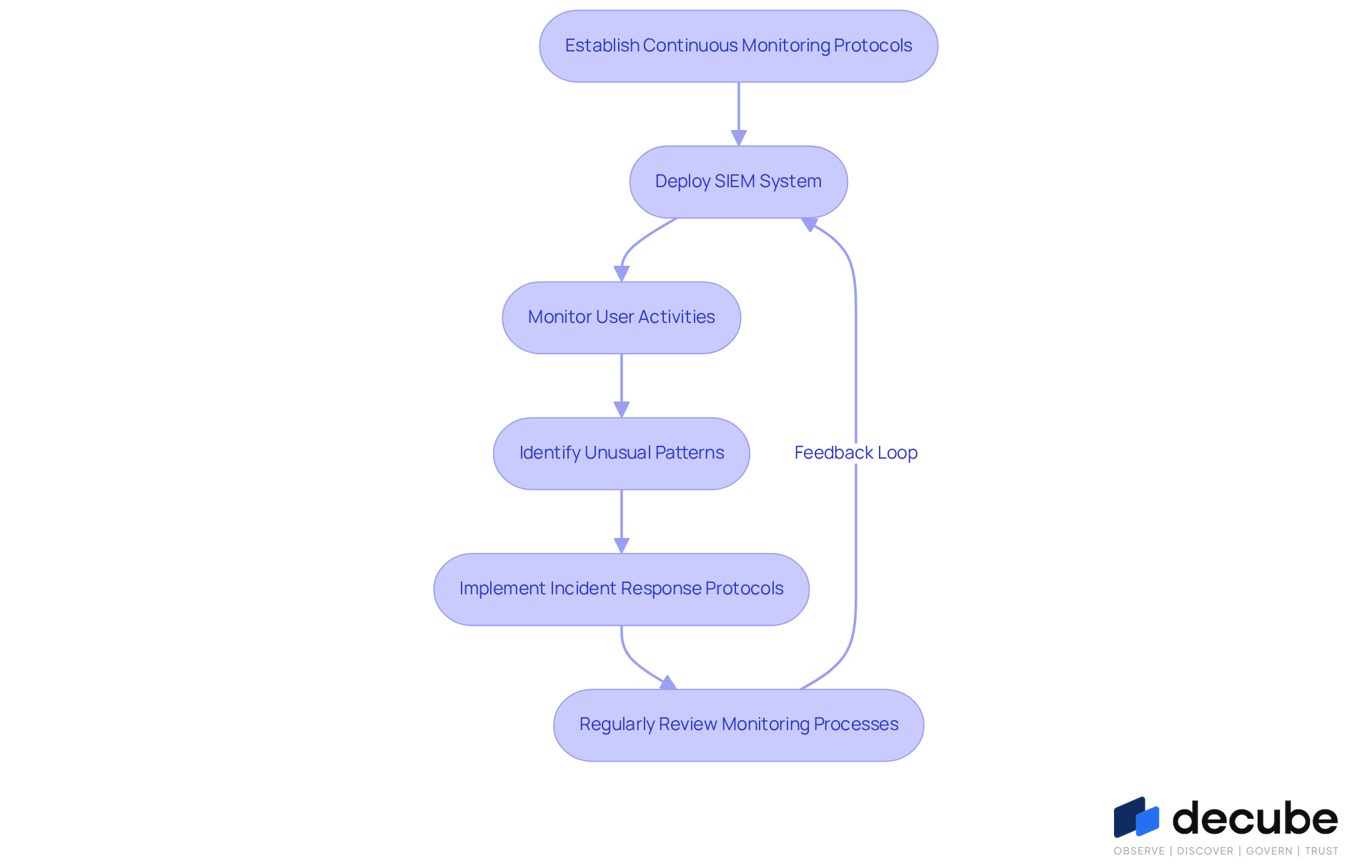

Organizations often struggle to keep pace with evolving threats to sensitive data, which makes continuous monitoring essential. This involves ongoing evaluation of information environments to identify potential threats. For example, a financial organization might deploy a Security Information and Event Management (SIEM) system to monitor user activities and identify unusual patterns indicative of potential breaches. By 2026, approximately 66% of organizations are expected to use SIEM systems, highlighting increasing recognition of their importance in sensitive data security.
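To make "unusual patterns" concrete, the sketch below flags users with an unusually high number of failed logins in a batch of events. It is a toy stand-in for one kind of rule a SIEM might apply, not a description of any particular product; the event fields (`user`, `action`) and the threshold of five failures are assumptions for this example.

```python
from collections import Counter


def flag_suspicious_users(events, max_failures=5):
    """Return the sorted list of users with more than max_failures failed logins."""
    failures = Counter(
        event["user"] for event in events if event["action"] == "login_failed"
    )
    return sorted(user for user, count in failures.items() if count > max_failures)


if __name__ == "__main__":
    events = (
        [{"user": "alice", "action": "login_failed"}] * 6
        + [{"user": "bob", "action": "login_failed"}] * 2
        + [{"user": "bob", "action": "login_ok"}]
    )
    print(flag_suspicious_users(events))
```

A fixed threshold is the simplest possible rule; real deployments typically add time windows and per-user baselines so that normal behavior does not trigger alerts.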

Additionally, robust incident response protocols are essential for promptly addressing detected threats. Regular reviews of monitoring processes are vital for adapting to emerging threats and meeting evolving regulatory requirements. Cybersecurity analysts assert that effective monitoring is a fundamental part of a comprehensive security strategy, since protecting sensitive data in a dynamic threat landscape requires continuous vigilance. Without it, organizations risk severe repercussions, including data breaches and loss of trust.

Conclusion

Enhancing sensitive data security is essential for organizations to protect their critical information assets in an increasingly complex digital environment. By focusing on effective data classification, role-based access controls, robust encryption strategies, and continuous monitoring, organizations can significantly reduce their vulnerability to data breaches and compliance failures.

Key strategies discussed include:

- The necessity of classifying and inventorying sensitive data to manage it effectively.

- Enforcing role-based access controls to limit information access to authorized personnel.

- Employing strong encryption methods to safeguard data at rest and in transit.

- Establishing continuous monitoring protocols to detect and respond to potential threats.

Each of these practices plays a vital role in creating a comprehensive security framework that not only protects sensitive information but also aligns with regulatory requirements.

Prioritizing these best practices is essential in today's digital landscape. Organizations that invest in sensitive data security are better placed to maintain trust, comply with regulations, and safeguard their reputation. Implementing these strategies both mitigates risk and strengthens organizational integrity.

Frequently Asked Questions

What is the importance of classifying and inventorying sensitive data?

Classifying and inventorying sensitive data is crucial for safeguarding information, as it helps organizations recognize and manage their data sources effectively.

What are the steps involved in classifying sensitive data?

The process begins with identifying all information sources, including databases, file systems, and cloud storage, followed by labeling information into categories such as 'Public', 'Internal', 'Confidential', and 'Restricted'.

How do automated tools assist in data classification?

Automated tools, like Decube's crawling feature, connect to different information sources and refresh metadata automatically, facilitating the classification process.

Why is it important to regularly update data classifications?

Regular updates are essential to reflect changes in information usage and evolving regulatory requirements, ensuring that the classification remains relevant and effective.

Can you provide an example of data classification in practice?

A financial institution may classify customer financial records as 'Confidential' and implement stricter access controls to protect this sensitive information.

What is the anticipated trend regarding automated tools for information classification by 2026?

A notable proportion of entities are expected to employ automated tools for information classification, highlighting their growing importance in information governance.

How does efficient data classification support regulatory compliance?

Efficient classification helps organizations adhere to applicable regulations, such as GDPR and PDPA, by ensuring proper management and protection of sensitive data.

What are the risks of failing to classify and inventory sensitive data?

Neglecting these practices can expose organizations to significant security vulnerabilities, leading to severe penalties and reputational damage.

What is the overall impact of neglecting data classification and inventory management?

Neglecting these essential practices can jeopardize data security and organizational integrity, increasing the risk of security breaches and compliance issues.

List of Sources

- Classify and Inventory Sensitive Data

- Data Inventory Guide for Compliance and Security 2026 (https://ovaledge.com/blog/what-is-data-inventory-guide)

- Data Inventory and Classification (https://soteria.io/data-inventory-and-classification)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Enforce Role-Based Access Controls

- Role-based Access Control Market Size & Share | Analysis, Trends & Forecast, [2032] (https://marketsandmarkets.com/Market-Reports/role-based-access-control-market-46615680.html)

- Role-Based Access Control Market Size, Report By 2032 | The Brainy Insights (https://thebrainyinsights.com/report/role-based-access-control-market-13919?srsltid=AfmBOoqvOKC2Dk8OkLMhfH6mPUEWM_PtdbZc4wpj7g4uuk510-ivfPDW)

- Role Based Access Control Market Size, Share & Growth, 2033 (https://marketdataforecast.com/market-reports/role-based-access-control-market)

- Role-based Access Control Market Size, Share, Report, 2034 (https://fortunebusinessinsights.com/role-based-access-control-market-111247)

- The 20 Best Quotes from Cyber Risk Leaders (https://revival-holdings.com/20-best-quotes-from-cyber-risk-leaders)

- Implement Data Encryption Strategies

- What AES-256 Encryption Cannot Protect: The 6 Security Gaps Businesses Must Address (https://kiteworks.com/secure-file-sharing/encryption-security-gaps)

- 2026 & Beyond: Your Data Encryption Strategy is Begging for Disruption - Paperclip Data Management & Security (https://paperclip.com/2026-beyond-your-data-encryption-strategy-is-begging-for-disruption)

- Encryption Software Statistics and Facts (2026) (https://scoop.market.us/encryption-software-statistics)

- 2026 HIPAA Changes: New Security Rule Requirements (https://hipaavault.com/resources/2026-hipaa-changes)

- Data Privacy Statistics: US 2025 | Infrascale (https://infrascale.com/data-privacy-statistics-usa)

- Establish Continuous Monitoring Protocols

- 8 Tweetable Cybersecurity Quotes | Blue-Pencil (https://blue-pencil.ca/8-tweetable-cybersecurity-quotes-to-help-you-and-your-business-stay-safer)

- Continuous Monitoring in 2026: Best Practices for Regulated Industries (https://telos.com/blog/2026/04/14/continuous-monitoring-in-highly-regulated-industries-best-practices)

- 50+ Cloud Security Statistics in 2026 (https://sentinelone.com/cybersecurity-101/cloud-security/cloud-security-statistics)

- 41 Cybersecurity Quotes to Protect Your Digital Life (https://acecloudhosting.com/blog/cybersecurity-quotes)
