
Master Data Validation Services: Best Practices for Data Engineers

Discover key best practices for implementing effective data validation services in organizations.

Introduction

As organizations increasingly depend on accurate data for decision-making, the integrity of that data has never mattered more. This article examines the role data validation services play in protecting it. Despite the growing importance of validation, many data engineers struggle to implement it well, and skipping best practices can lead to costly errors and diminished trust in data-driven decisions.

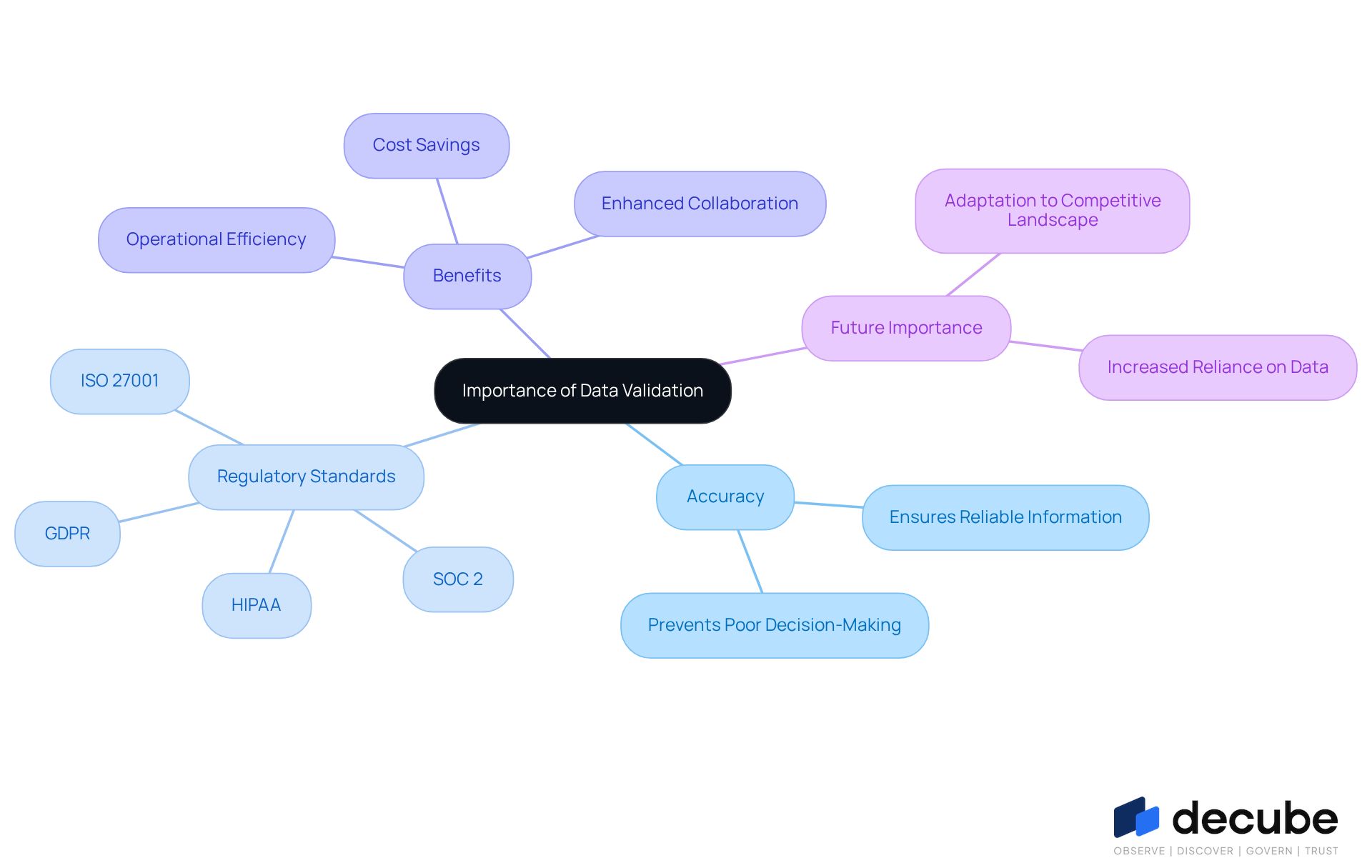

Understand the Importance of Data Validation in Organizations

In an era where data drives decisions, verifying that data is correct has never been more critical. Data validation is the process of checking that data is accurate, consistent, and reliable before an organization relies on it. It acts as a safeguard against errors that can lead to poor decision-making and operational inefficiencies. In today's data-driven environment, where organizations increasingly rely on analytics and AI, validation is paramount. Effective validation supports quality assurance and helps organizations meet regulatory and security standards such as:

- SOC 2

- ISO 27001

- HIPAA

- GDPR

By adopting strong validation practices, organizations can strengthen their governance frameworks and ensure that their data is both accurate and reliable. This trust is essential for collaboration between data producers and consumers, and it ultimately leads to better business results. For example, a retail customer benefited from validation rules that prevented erroneous discount suggestions, saving a considerable amount of money.

Additionally, with Decube's automated crawling capability, organizations can manage metadata without manual updates, so that it is continuously refreshed and accessible, while a designated approval flow maintains secure access control. This makes it easier to monitor data quality and governance and to detect issues early. In some reported cases, data validation has saved more money than machine learning models previously built for the same clients, which highlights its impact on operational efficiency and decision-making.

As we enter 2026, the ability to validate data efficiently will become even more essential, and organizations that fail to adapt risk falling behind their competitors. In this context, the warning often attributed to Mark Twain that 'information is like garbage; you need to know what to do with it before gathering it' underscores the need for robust governance to ensure high-quality data.

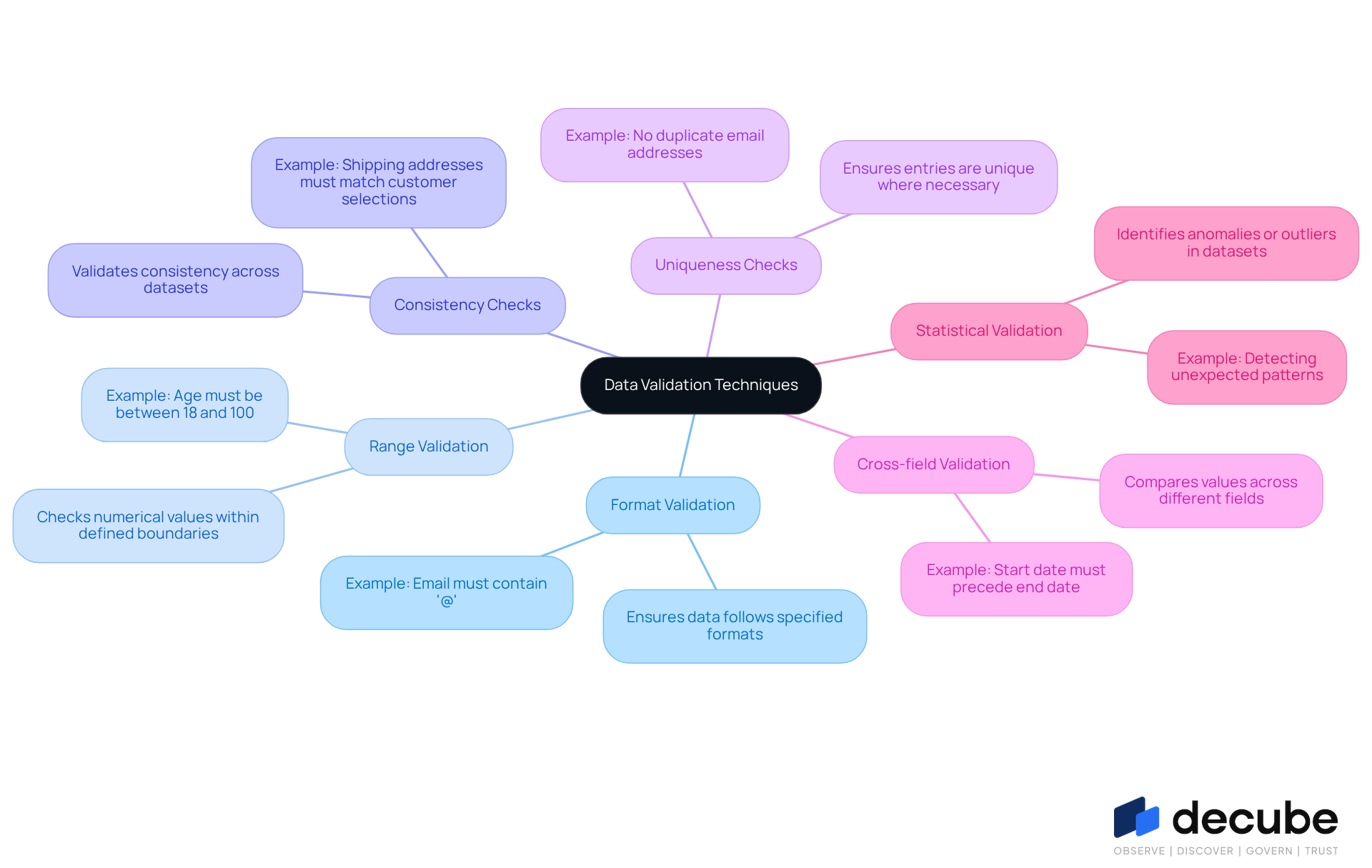

Explore Effective Data Validation Techniques

Data engineers face significant challenges in keeping their data accurate. To address these challenges, they can combine several validation techniques. Key methods include:

- Format Validation: This technique ensures that entries conform to specified formats, such as date formats or email addresses. For example, checking that an email address contains an '@' symbol is a common minimal check that helps uphold data integrity. Organizations that adopt format validation generally see fewer data entry mistakes and better overall quality.

- Range Validation: This method checks that numerical values fall within defined boundaries, preventing out-of-bounds entries. For instance, ensuring that ages range from 18 to 100 helps prevent unrealistic figures that could distort analysis.

- Consistency Checks: These checks validate that information across different datasets is consistent, identifying discrepancies that may indicate errors. For example, ensuring that shipping addresses match customer country selections prevents mismatches that could lead to delivery failures.

- Uniqueness Checks: This method guarantees that entries are unique where necessary, such as in primary keys. By removing duplicate records, organizations can uphold information integrity and accuracy, which is essential for trustworthy reporting.

- Cross-field Validation: This method compares values across different fields to ensure logical consistency. For example, confirming that a start date comes before an end date aids in preserving the logical flow of information.

- Statistical Validation: Utilizing statistical methods to identify anomalies or outliers in datasets is essential for maintaining information quality. This method can uncover unexpected patterns that may suggest underlying problems, such as corruption or entry errors.
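The record-level techniques in the list above (format, range, consistency, uniqueness, and cross-field checks) can be sketched in a few lines of plain Python. This is a minimal illustration, not a production validator: the field names (`email`, `age`, `start_date`, `end_date`, `shipping_country`, `customer_country`), the helper names, and the simplified email pattern are all assumptions made for the example.

```python
import re
from datetime import date

# Deliberately simple pattern: exactly one "@", no whitespace, a dot in the domain.
# It is an illustrative assumption, not a complete email address validator.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_record(record: dict) -> list[str]:
    """Return the rule violations found in one record (empty list if valid)."""
    errors = []

    # Format validation: the email must match the expected shape.
    if not EMAIL_RE.match(str(record.get("email") or "")):
        errors.append("email: invalid format")

    # Range validation: age must fall within the 18-100 bounds used in the text.
    age = record.get("age")
    if not isinstance(age, int) or not 18 <= age <= 100:
        errors.append("age: outside 18-100")

    # Cross-field validation: the start date must come before the end date.
    start, end = record.get("start_date"), record.get("end_date")
    if start is None or end is None or start >= end:
        errors.append("dates: start_date must be before end_date")

    # Consistency check: the shipping country must match the customer's country.
    if record.get("shipping_country") != record.get("customer_country"):
        errors.append("country: shipping and customer country differ")

    return errors


def find_duplicate_keys(records: list[dict], key: str) -> set:
    """Uniqueness check: return the key values that occur more than once."""
    seen, duplicates = set(), set()
    for record in records:
        value = record[key]
        if value in seen:
            duplicates.add(value)
        seen.add(value)
    return duplicates


# Made-up sample data: the second record breaks every rule and reuses id 1.
records = [
    {"id": 1, "email": "ana@example.com", "age": 34,
     "start_date": date(2025, 1, 1), "end_date": date(2025, 6, 30),
     "shipping_country": "DE", "customer_country": "DE"},
    {"id": 1, "email": "bob.example.com", "age": 17,
     "start_date": date(2025, 6, 30), "end_date": date(2025, 1, 1),
     "shipping_country": "US", "customer_country": "FR"},
]

for record in records:
    print(record["email"], validate_record(record))
print("duplicate ids:", find_duplicate_keys(records, "id"))
```

Returning a list of violations rather than raising on the first failure lets a pipeline report every problem in a record at once, which tends to make bad batches easier to triage.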

Ultimately, the adoption of these validation techniques is not just beneficial; it is essential for maintaining the integrity of data-driven decision-making processes.
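Statistical validation can take many forms. One common and simple option, shown here as an assumption rather than a method this article prescribes, is the interquartile range (IQR) rule: flag any value that lies more than 1.5 times the IQR below the first quartile or above the third. The function name and the sample figures are hypothetical.

```python
import statistics


def find_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Return values more than k * IQR below the first or above the third quartile."""
    if len(values) < 4:
        return []  # too few points for quartiles to be meaningful
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]


daily_order_totals = [10, 12, 11, 13, 12, 11, 10, 95]  # made-up sample data
print(find_outliers(daily_order_totals))  # [95]
```

A flagged value is a prompt for investigation, not proof of an error: a genuine spike in orders and a corrupted record look identical to this check.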

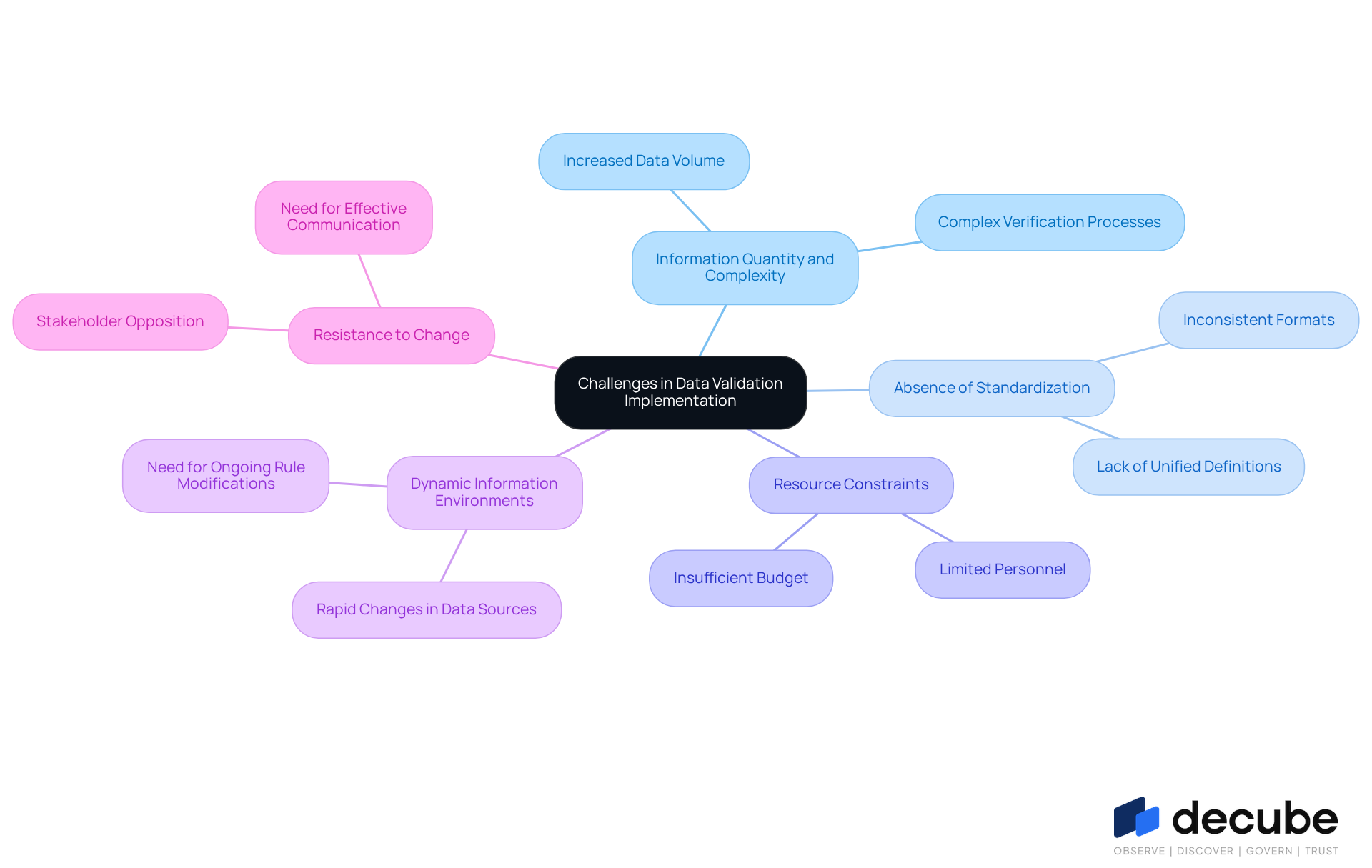

Identify Common Challenges in Data Validation Implementation

Organizations face numerous challenges when striving to implement effective data validation processes:

- Data Volume and Complexity: As data volumes grow, validating large datasets becomes more complex and calls for effective management strategies.

- Lack of Standardization: Inconsistent data formats and definitions across departments complicate validation. Standardizing formats keeps all teams aligned and makes checks simpler.

- Resource Constraints: Limited resources frequently prevent organizations from establishing thorough validation processes. Organizations must prioritize resource allocation so that validation efforts are adequately supported.

- Dynamic Data Environments: Rapid changes in data structures and sources create new validation challenges. Validation rules must be updated continually to keep pace with these changes so that data remains accurate and trustworthy.

- Resistance to Change: Stakeholders may oppose implementing new verification processes, particularly if they view them as introducing complexity to their workflows. Effective communication and training can help reduce this resistance, promoting a commitment to information integrity.

Addressing these challenges enables data engineers to develop robust quality assurance strategies. By adopting best practices, organizations can significantly enhance the reliability of their data, leading to improved decision-making outcomes.
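Changing data structures and inconsistent formats, two of the challenges listed above, are easier to manage when the expected structure is written down and compared against incoming records. The sketch below is one hypothetical way to do that; `EXPECTED_SCHEMA`, `detect_schema_drift`, and the field names are assumptions for illustration, not part of any particular tool.

```python
# Hypothetical expected structure for an incoming "orders" record.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "country": str}


def detect_schema_drift(expected: dict[str, type], record: dict) -> dict[str, list[str]]:
    """Compare one record against the expected fields and Python types."""
    missing = sorted(expected.keys() - record.keys())
    unexpected = sorted(record.keys() - expected.keys())
    wrong_type = sorted(
        name
        for name in expected.keys() & record.keys()
        if not isinstance(record[name], expected[name])
    )
    return {"missing": missing, "unexpected": unexpected, "wrong_type": wrong_type}


# A record from a source that renamed "country" and started sending string ids.
incoming = {"order_id": "A-17", "amount": 19.99, "region": "EU"}
print(detect_schema_drift(EXPECTED_SCHEMA, incoming))
# {'missing': ['country'], 'unexpected': ['region'], 'wrong_type': ['order_id']}
```

Keeping the expected schema in one shared definition also gives departments a single place to agree on names and types, which addresses the standardization problem at the same time.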

Adopt Best Practices for Successful Data Validation

To ensure data integrity, data engineers must implement effective validation practices that address common challenges:

- Establish Clear Validation Rules: Define specific criteria for what constitutes valid data, and make sure all stakeholders understand them. Set standard rules for critical fields to minimize formatting errors and protect data integrity.

- Automate Verification Processes: Utilize Decube's automated tools, including ML-powered tests and preset field monitors, to streamline validation, reducing manual effort and minimizing human error. Automated validation checks data quickly against the defined rules, boosting efficiency and reliability in data entry.

- Implement Continuous Monitoring: Regularly monitor data quality and validation results using Decube's smart alerts and automated monitoring features to identify and address issues swiftly. Without regular monitoring, data quality may deteriorate and errors can go unnoticed.

- Engage Stakeholders: Involve data producers and consumers in the validation process to ensure alignment and support. Involving stakeholders fosters teamwork and keeps everyone aligned on quality standards.

- Document Verification Procedures: Maintain thorough documentation of validation rules and processes. Good documentation supports ongoing training and compliance and helps teams follow the agreed standards, which improves data accuracy.

- Utilize Technology: Employ advanced tools and technologies, such as Decube's AI-driven verification solutions and automated crawling features, to improve the quality of validation processes. The platform's comprehensive capabilities in metadata extraction and data profiling support robust data governance.

Ultimately, a well-structured framework for data validation services not only enhances data quality but also fosters trust among stakeholders.
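Several of the practices above (clear rules, automation, documentation, and continuous monitoring) fit together naturally when rules are declared as data. The following is a generic sketch under stated assumptions: it does not use or represent Decube's API, and the `Rule` class, the two example rules, and the 95% alert threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    description: str  # doubles as human-readable documentation of the rule
    check: Callable[[dict], bool]


# Hypothetical rules for an "orders" dataset.
RULES = [
    Rule(
        "amount_non_negative",
        "Order amount must be a number greater than or equal to zero.",
        lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    ),
    Rule(
        "currency_three_letters",
        "Currency must be a three-letter code.",
        lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3,
    ),
]


def pass_rates(records: list[dict], rules: list[Rule]) -> dict[str, float]:
    """Automated check: fraction of records that satisfy each rule."""
    if not records:
        return {rule.name: 1.0 for rule in rules}
    return {
        rule.name: sum(rule.check(r) for r in records) / len(records)
        for rule in rules
    }


def rules_to_alert(rates: dict[str, float], threshold: float = 0.95) -> list[str]:
    """Monitoring: names of rules whose pass rate fell below the threshold."""
    return sorted(name for name, rate in rates.items() if rate < threshold)


# Made-up batch: one of four records has a negative amount.
batch = [
    {"amount": 10, "currency": "USD"},
    {"amount": -5, "currency": "USD"},
    {"amount": 3.5, "currency": "EUR"},
    {"amount": 0, "currency": "EUR"},
]
rates = pass_rates(batch, RULES)
print(rates)
print("alert on:", rules_to_alert(rates))
```

Running the same rule set on every batch and tracking the pass rates over time is what turns one-off checks into monitoring. The threshold is a policy decision that stakeholders should agree on rather than a technical constant.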

Conclusion

In an era where data drives decision-making, the role of effective data validation services is crucial for organizational success. Organizations that prioritize robust validation practices not only safeguard the accuracy and reliability of their information but also enhance their decision-making capabilities and operational efficiencies. By implementing structured validation techniques, businesses can ensure compliance with regulatory standards and foster trust among stakeholders, ultimately driving better outcomes.

Throughout the article, key strategies for successful data validation were discussed, including:

- The establishment of clear validation rules

- Automation of verification processes

- Continuous monitoring of information quality

The article also highlighted the challenges data engineers face, such as growing data complexity and resistance to change, which can hinder effective validation and call for a proactive approach. Engaging stakeholders and using advanced technologies can further streamline validation efforts and improve data integrity.

As organizations navigate the complexities of data management, adopting best practices for data validation becomes essential for long-term success. Organizations that embrace these practices can improve data quality, strengthen operational performance, and gain a competitive edge in their industry.

Frequently Asked Questions

Why is data validation important for organizations?

Data validation is crucial for ensuring the accuracy, consistency, and reliability of information, which helps prevent errors that can lead to poor decision-making and operational inefficiencies.

What role does information verification play in an organization?

Information verification acts as a safeguard against inaccuracies, supports quality assurance, and ensures compliance with regulatory standards such as SOC 2, ISO 27001, HIPAA, and GDPR.

How can effective information verification benefit an organization?

It enhances governance frameworks, promotes trust between information producers and consumers, and ultimately leads to improved business results.

Can you provide an example of how data validation has helped an organization?

A retail customer benefited from validation rules that prevented erroneous discount suggestions, saving considerable amounts of money.

What features does Decube offer to assist with data validation?

Decube offers automated crawling capabilities to manage metadata without manual updates, ensuring information is continuously refreshed and accessible while maintaining secure access control through a designated approval flow.

How does data validation impact operational efficiency?

In some reported cases, data validation has saved more money than machine learning models built for the same clients. It also improves decision-making, which underlines its contribution to operational efficiency.

Why is the ability to verify information expected to grow in importance by 2026?

As organizations face increasing competition and complexities in data management, efficient information verification will be essential for their success.

What does the quote attributed to Mark Twain imply about data governance?

The quote emphasizes the need for robust governance to ensure high-quality data, suggesting that organizations should know how they will manage and use data before collecting it.
