
Define Data Quality: Essential Steps for Data Engineers

Learn to define data quality through essential dimensions and steps for effective data management.

Introduction

In an era where data drives decision-making, understanding data quality is paramount for data engineers. As organizations increasingly rely on accurate and timely data, poor data quality carries significant risks, including financial losses and operational inefficiencies. Data engineers must define and enforce data quality standards so that their systems remain robust and reliable.

This article explores the critical dimensions of data quality and offers actionable steps and tools that help engineers improve their data management practices. By establishing robust data quality standards, data engineers can safeguard their organizations against the financial and operational costs of unreliable data.

Understand the Concept of Data Quality

Data quality is a critical component of effective decision-making in today's data-driven landscape. It describes the state of a dataset, evaluated through characteristics such as accuracy, completeness, consistency, and timeliness. High-quality data underpins operational efficiency, particularly in data engineering, where data must be relevant and timely for its intended use. When data falls short of these standards, organizations face financial repercussions averaging $12.9 million annually, which is why engineers need to prioritize quality in their processes.

In AI applications, data accuracy directly affects business results, because data is the foundation for model training and insight generation. Organizations that implement robust data quality measures can significantly strengthen their AI initiatives, improving operational efficiency and supporting informed decision-making. Without reliable data, AI models struggle to perform well.

Data engineers must recognize that ensuring high data quality is not merely a technical necessity but a strategic priority. By concentrating on data quality, they can reduce compliance and governance risks and promote a metrics-driven culture that improves overall business outcomes. As the data management landscape evolves, this emphasis on quality will be essential for sustaining competitive advantage.

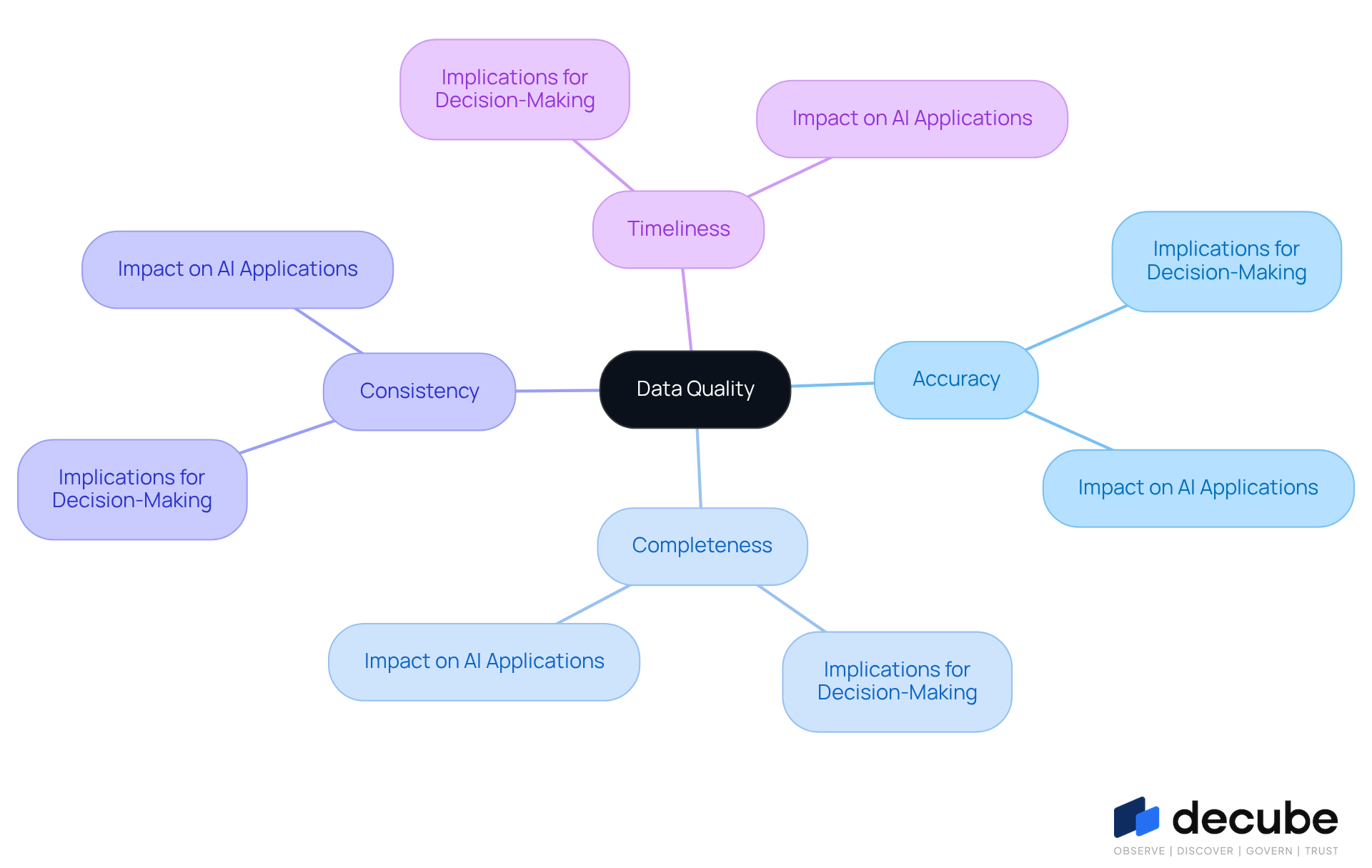

Identify Key Dimensions of Data Quality

Effective data management hinges on several critical dimensions that directly impact decision-making processes:

- Accuracy: Data must accurately reflect the real-world entities and events it models. Inaccurate data leads to flawed insights and decisions, which makes accuracy a top priority for organizations. Decube supports accuracy through an intuitive design and automated monitoring features, which help maintain trust that data is precise and consistent.

- Completeness: All necessary data should be present; missing values can lead to incorrect conclusions and hinder decision-making. Organizations that prioritize completeness often see better outcomes from their analytics initiatives. Decube's automated crawling feature contributes to completeness, which can in turn improve analytics outcomes.

- Consistency: Data should agree across datasets and systems, because discrepancies undermine trust in it. Inconsistent data can quickly erode confidence in AI solutions, which makes consistency vital for successful implementations. Decube's data quality monitoring, including ML-powered tests, helps identify inconsistencies early so that data remains reliable across platforms.

- Timeliness: Data must be up to date and available when needed. Delays in data availability slow decisions and can result in lost opportunities. With Decube's intelligent alerts and automated monitoring, engineers receive prompt notifications about quality issues and can act quickly.

- Uniqueness: Each record should be distinct, because duplicates distort analysis and lead to incorrect conclusions. Decube's data reconciliation features help identify and resolve discrepancies, supporting the uniqueness dimension of quality.

- Validity: Data should conform to defined formats and standards so that it is usable for its intended purpose. Valid data improves the reliability of analytics and AI applications. Decube's platform enables the creation of agreements that outline the standards and expectations for data usage, thereby supporting validity.
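
Several of these dimensions can be measured directly as ratios over a dataset. The following is a minimal Python sketch, not Decube's implementation: the sample records, the field names (`id`, `email`, `updated_at`), the email pattern, and the one-day freshness window are all assumptions chosen for illustration. Accuracy and consistency are left out because they need a trusted reference or a second system to compare against.

```python
import re
from datetime import datetime, timedelta, timezone

# Hypothetical sample data; field names and values are illustrative only.
NOW = datetime(2024, 1, 10, tzinfo=timezone.utc)
records = [
    {"id": 1, "email": "a@example.com", "updated_at": NOW - timedelta(hours=2)},
    {"id": 2, "email": None, "updated_at": NOW - timedelta(days=3)},
    {"id": 2, "email": "not-an-email", "updated_at": NOW - timedelta(hours=5)},
    {"id": 4, "email": "d@example.com", "updated_at": NOW - timedelta(hours=1)},
]

# Deliberately simple format check; real email validation is stricter.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def share(hits, total):
    """Return hits / total, treating an empty input as having no violations."""
    return hits / total if total else 1.0


def completeness(rows, field):
    """Share of rows where the field is present (not None)."""
    return share(sum(r[field] is not None for r in rows), len(rows))


def uniqueness(rows, field):
    """Share of distinct values among all rows."""
    return share(len({r[field] for r in rows}), len(rows))


def validity(rows, field, pattern):
    """Share of non-null values that match the expected format."""
    values = [r[field] for r in rows if r[field] is not None]
    return share(sum(bool(pattern.match(v)) for v in values), len(values))


def timeliness(rows, field, now, max_age):
    """Share of rows updated within max_age of now."""
    return share(sum(now - r[field] <= max_age for r in rows), len(rows))


metrics = {
    "completeness": completeness(records, "email"),
    "uniqueness": uniqueness(records, "id"),
    "validity": validity(records, "email", EMAIL_RE),
    "timeliness": timeliness(records, "updated_at", NOW, timedelta(days=1)),
}

for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Each function returns a share between 0 and 1, so the results can be tracked over time or compared against a target.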

Understanding these dimensions allows engineers to establish a strong framework for assessing and improving data quality. For example, Smava attained zero downtime and automated the creation of over 1,000 dbt models by concentrating on data quality, while UK logistics firm HIVED achieved 99.9% pipeline reliability with zero data incidents over three years. Investing in high data standards is not just beneficial; it is essential for organizations aiming to thrive in an increasingly data-driven landscape.

Assess and Improve Data Quality in Your Systems

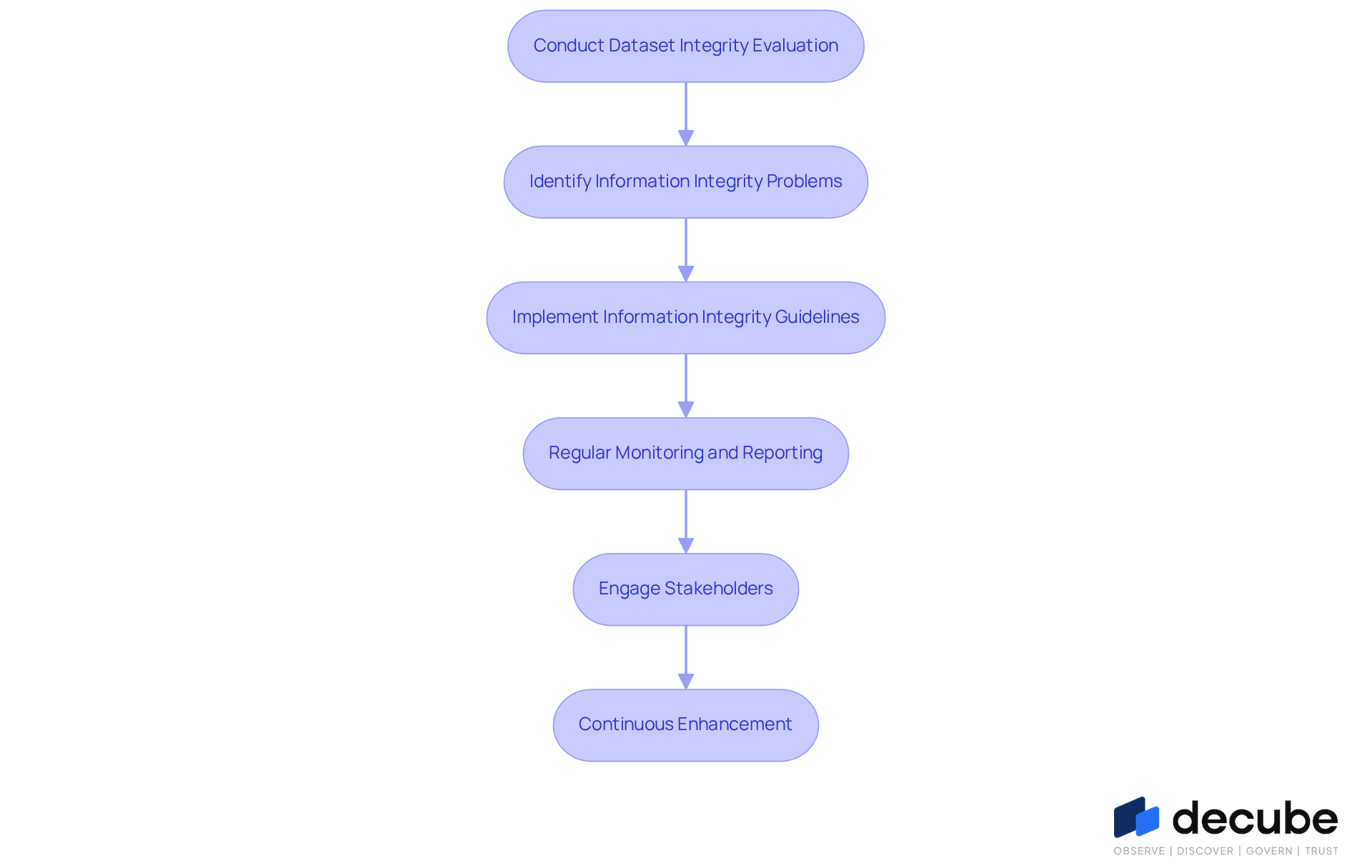

To ensure high-quality data, a systematic approach to assessing and enhancing data integrity is essential:

- Conduct a Data Quality Assessment: Evaluate datasets against the key dimensions of data quality, using metrics such as error rates, completeness percentages, and consistency checks. This step establishes the baseline against which later improvements are measured.

- Identify Data Quality Problems: Analyze the assessment results to pinpoint where data quality is lacking. Look for patterns in errors or discrepancies, such as schema drift or ingestion disruptions, which account for a significant share of data quality incidents.

- Implement Data Quality Rules: Establish validation rules and automated checks to prevent data quality issues from arising. In practice this means adding checks at data entry or within ETL processes, which can greatly reduce the frequency of errors.

- Regular Monitoring and Reporting: Establish a robust system for continuous monitoring of data quality. Use visual dashboards to track metrics and trends over time, so that potential issues are recognized before they escalate.

- Engage Stakeholders: Collaborate with data users and business teams to understand their requirements and expectations. Without this engagement, data standards and practices can drift out of alignment with business objectives.

- Continuous Improvement: Treat data quality management as an ongoing process. Regularly assess and revise your data management strategies based on stakeholder input and changing business needs. This iterative approach promotes a culture of accountability and transparency, which is crucial for upholding high data standards in complex environments.
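
The assessment and validation steps above can be sketched as a small rule set applied to a batch during an ETL step. This is only an illustration under stated assumptions: the rule names, the fields (`order_id`, `amount`, `currency`), and the accepted currency codes are hypothetical, and a real pipeline would load its rules from the standards agreed with stakeholders.

```python
from typing import Any, Callable

Row = dict[str, Any]
Rule = Callable[[Row], bool]

# Hypothetical validation rules; each returns True when the row passes.
RULES: dict[str, Rule] = {
    "order_id_present": lambda r: r.get("order_id") is not None,
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] >= 0,
    "currency_known": lambda r: r.get("currency") in {"USD", "EUR", "GBP"},
}


def validate(rows: list[Row]) -> tuple[list[Row], list[tuple[Row, list[str]]]]:
    """Split rows into accepted rows and (row, failed rule names) pairs."""
    accepted, rejected = [], []
    for row in rows:
        failed = [name for name, rule in RULES.items() if not rule(row)]
        if failed:
            rejected.append((row, failed))
        else:
            accepted.append(row)
    return accepted, rejected


def failure_counts(rejected: list[tuple[Row, list[str]]]) -> dict[str, int]:
    """Count failures per rule to show where problems cluster."""
    counts: dict[str, int] = {}
    for _, failed in rejected:
        for name in failed:
            counts[name] = counts.get(name, 0) + 1
    return counts


# Illustrative batch, as it might arrive in an ETL step.
batch = [
    {"order_id": 1, "amount": 20.0, "currency": "USD"},
    {"order_id": None, "amount": 5.0, "currency": "EUR"},
    {"order_id": 3, "amount": -1.0, "currency": "XXX"},
    {"order_id": 4, "amount": 7.5, "currency": "GBP"},
]

accepted, rejected = validate(batch)
error_rate = len(rejected) / len(batch)  # baseline metric for this batch
counts = failure_counts(rejected)

print(f"accepted={len(accepted)} rejected={len(rejected)} error_rate={error_rate:.2f}")
print(counts)
```

Keeping the rejected rows together with the names of the rules they failed gives a baseline error rate and also shows which rules fail most often, which is the pattern-finding the second step calls for.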

Ultimately, neglecting these steps can compromise the integrity of critical business information.

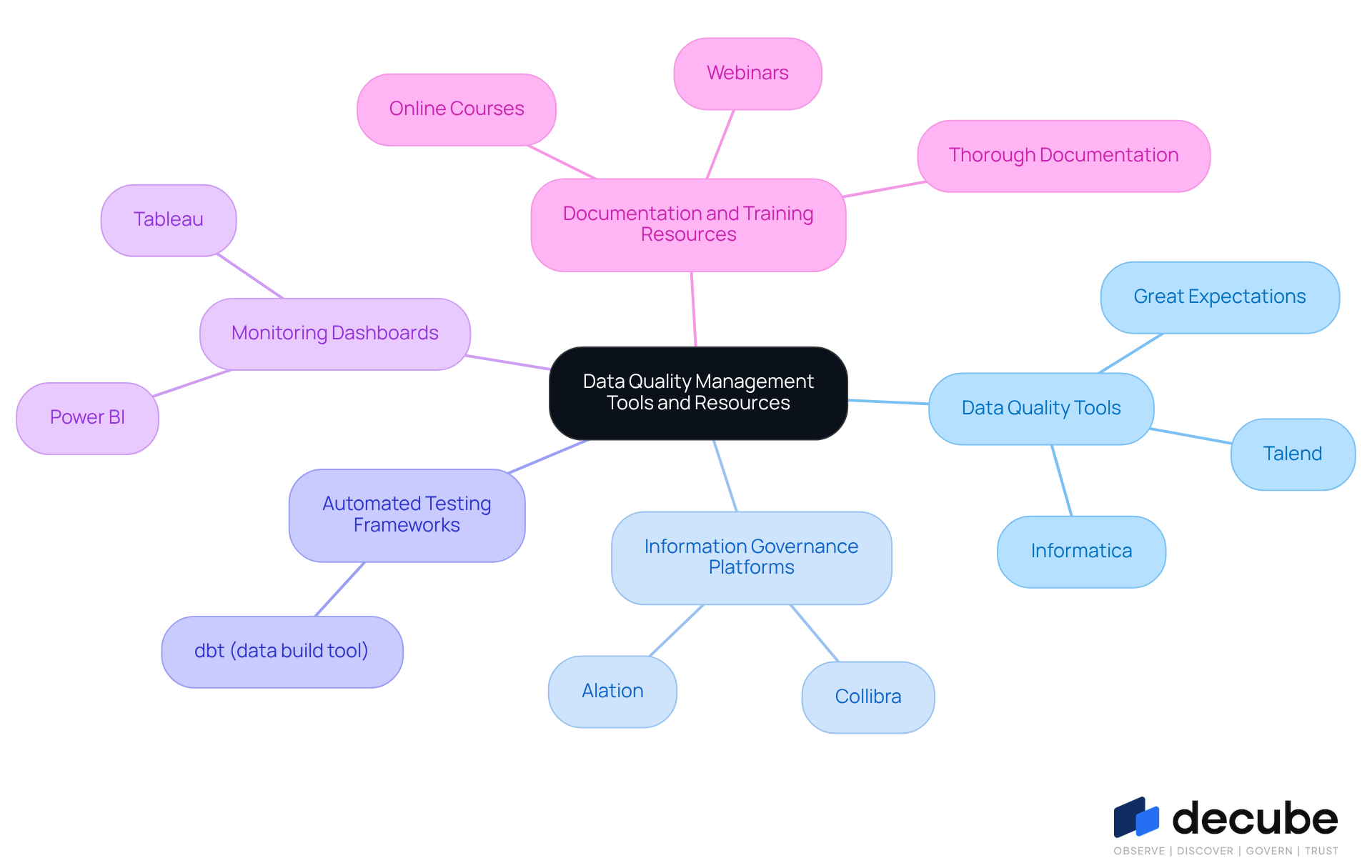

Utilize Tools and Resources for Data Quality Management

Organizations often struggle to define data quality, which can lead to significant operational challenges. To effectively manage data quality, consider the following tools and resources:

- Data Quality Tools: Use specialized software such as Talend, Informatica, and Great Expectations, which offer features for data profiling, cleansing, and monitoring. These tools help confirm that data meets established quality benchmarks, which can substantially improve data reliability and operational effectiveness.

- Data Governance Platforms: Implement comprehensive platforms like Collibra or Alation that support data governance through capabilities such as cataloging and lineage tracking. These platforms help organizations oversee their data assets, ensure compliance, and manage data standards.

- Automated Testing Frameworks: Use frameworks like dbt (data build tool) to automate data quality checks within your pipelines. This automation helps ensure that data complies with standards before it is used, significantly reducing the risk of errors.

- Monitoring Dashboards: Create visual dashboards using tools like Tableau or Power BI to track data quality metrics and trends. These dashboards support proactive management, allowing teams to identify and address potential problems before they escalate.

- Documentation and Training Resources: Use online courses, webinars, and thorough documentation to stay current on best practices in data quality management and governance. Ongoing education is essential for adapting to changing data environments and maintaining high standards.
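
Whatever tool is chosen, automated testing and monitoring rest on the same idea: compare measured metrics against agreed thresholds and stop or alert when they fall short. The sketch below shows that idea in plain Python. It does not use the API of dbt, Great Expectations, or any other tool named above, and `Threshold`, `QualityGateError`, `evaluate`, and `enforce` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Minimum acceptable value for one named metric (hypothetical type)."""
    metric: str
    minimum: float


class QualityGateError(Exception):
    """Raised when one or more metrics breach their thresholds."""


def evaluate(metrics: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return a readable message for every missing or breached metric."""
    breaches = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            breaches.append(f"{t.metric}: metric missing")
        elif value < t.minimum:
            breaches.append(f"{t.metric}: {value:.2f} below minimum {t.minimum:.2f}")
    return breaches


def enforce(metrics: dict[str, float], thresholds: list[Threshold]) -> None:
    """Stop the pipeline step by raising if any threshold is breached."""
    breaches = evaluate(metrics, thresholds)
    if breaches:
        raise QualityGateError("; ".join(breaches))


# Illustrative thresholds and measurements; the numbers are assumptions.
thresholds = [Threshold("completeness", 0.95), Threshold("uniqueness", 1.0)]
measured = {"completeness": 0.99, "uniqueness": 0.90}

try:
    enforce(measured, thresholds)
    print("quality gate passed")
except QualityGateError as exc:
    print(f"quality gate failed: {exc}")
```

Separating `evaluate` from `enforce` lets the same checks feed a dashboard, which only needs the list of breaches, and a pipeline gate, which needs to halt the run.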

Ultimately, the right tools and resources help put a definition of data quality into practice, leading to more informed decision-making and operational success.

Conclusion

Data quality is not just a technical requirement; it is a strategic imperative that shapes organizational success. Understanding and implementing high standards of data integrity is essential, as it can significantly impact an organization's effectiveness. By prioritizing data quality, data engineers enhance the reliability of insights and the effectiveness of AI applications, ultimately driving better business outcomes.

The article highlighted several key dimensions of data quality, including:

- Accuracy

- Completeness

- Consistency

- Timeliness

- Uniqueness

- Validity

Each of these attributes plays a vital role in ensuring that data is reliable and useful for decision-making. The systematic approach to assessing and improving data quality (through baseline assessments, stakeholder engagement, and continuous improvement) was emphasized as critical for maintaining high standards in a data-driven environment. Using the right tools and resources, such as data quality software and governance platforms, further helps organizations uphold these standards.

In conclusion, the significance of data quality cannot be overstated. Organizations must embrace a culture that values and prioritizes data integrity, as this commitment will not only mitigate risks but also position the organization for innovation and growth. By taking proactive steps to define and manage data quality, data engineers can enhance their contributions to their organizations and ensure that they remain competitive in an increasingly complex landscape.

Frequently Asked Questions

What is data quality and why is it important?

Data quality refers to the state of a dataset, evaluated through characteristics such as accuracy, completeness, consistency, and timeliness. It is important because high-quality data is essential for effective decision-making and operational efficiency, particularly in data engineering.

What challenges do organizations face regarding data quality?

Organizations face significant challenges when data falls short of quality standards, which can lead to financial repercussions averaging $12.9 million annually.

How does data quality affect AI applications?

In AI applications, data accuracy directly affects business results, because data is the foundation for model training and insight generation. Robust data quality measures can significantly strengthen AI initiatives, improving operational efficiency and supporting informed decision-making.

Why should data engineers prioritize data integrity?

Data engineers should prioritize data integrity because it is not just a technical necessity but a strategic priority. Ensuring high data integrity reduces compliance and governance risks and promotes a metrics-driven culture that improves overall business outcomes.

What is the impact of data integrity on business outcomes?

Data integrity has a substantial influence on AI applications and overall business outcomes. Without reliable data, AI models struggle to perform well, which can hinder decision-making and operational efficiency.

List of Sources

- Understand the Concept of Data Quality

- pipeline.zoominfo.com (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- World Quality Report 2025: AI adoption surges in Quality Engineering, but enterprise-level scaling remains elusive (https://prnewswire.com/news-releases/world-quality-report-2025-ai-adoption-surges-in-quality-engineering-but-enterprise-level-scaling-remains-elusive-302614772.html)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Quality: Why It Matters and How to Achieve It (https://gartner.com/en/data-analytics/topics/data-quality)

- Identify Key Dimensions of Data Quality

- Top 8 Data Quality Metrics in 2026 | Dagster (https://dagster.io/learn/data-quality-metrics)

- Forrester Wave Report 2026 for Data Quality: how to read it and why it matters (https://ataccama.com/blog/the-forrester-wave-data-quality-solutions-2026-how-to-read-it-and-why-it-matters-now)

- Why data quality is key to AI success in 2026 (https://strategy.com/software/blog/why-data-quality-is-key-to-ai-success-in-2026)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://finance.yahoo.com/news/data-priorities-2026-ai-adoption-190600933.html)

- Assess and Improve Data Quality in Your Systems

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://finance.yahoo.com/news/data-priorities-2026-ai-adoption-190600933.html)

- A Continual Quest for Improving Data Quality | U.S. Bureau of Economic Analysis (BEA) (https://bea.gov/news/blog/2026-03-16/continual-quest-improving-data-quality)

- Data Quality Metrics: How to Measure Data Accurately | Alation (https://alation.com/blog/data-quality-metrics)

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- Ataccama Data Trust Assessment reveals data quality gaps blocking AI and compliance (https://ataccama.com/news/ataccama-data-trust-assessment-reveals-data-quality-gaps-blocking-ai-and-compliance)

- Utilize Tools and Resources for Data Quality Management

- The 2026 Open-Source Data Quality and Data Observability Landscape | DataKitchen (https://datakitchen.io/blog/the-2026-open-source-data-quality-and-data-observability-landscape)

- ETL ROI Calculation Examples and Stats — 30 Statistics Every Data Leader Should Know in 2026 (https://integrate.io/blog/etl-roi-calculation-examples-and-stats)

- Top 9 AI-Powered Data Governance Tools for 2026 (https://kiteworks.com/cybersecurity-risk-management/ai-data-governance-tools-2026)

- 10 Best Data Quality Tools for 2026 - A Comprehensive Guide - Murdio (https://murdio.com/insights/10-best-data-quality-tools-for-2025-a-comprehensive-guide)
