
4 Best Practices to Ensure Data Consistency in Your Pipelines

Discover essential practices for achieving data consistency in your pipelines effectively.

Introduction

Data consistency within pipelines is critical for organizational success in today's data-driven landscape. Establishing robust practices around data governance, quality checks, monitoring, and team collaboration enhances the reliability of information and fosters a culture of accountability. Organizations often face significant hurdles in maintaining data integrity due to the complexities of data management. Failure to implement these practices can lead to unreliable data, undermining decision-making processes.

Establish a Robust Data Governance Framework

Inconsistent data management creates significant operational challenges, so the first step toward data consistency in your pipelines is a strong governance framework. The framework should set clear policies and procedures for data management, define data standards, and ensure adherence to relevant regulations such as GDPR and HIPAA. Here are key steps to implement:

- Define Information Ownership: Assign custodians responsible for quality and governance within their domains. This accountability helps maintain a uniform approach to information management throughout the organization. Decube's Business Glossary initiative enhances this by promoting domain-level ownership and shared understanding among teams.

- Establish Information Standards: Formulate standardized definitions and formats for information elements to avoid discrepancies. These cover naming conventions, data types, and acceptable values. Decube's automated crawling feature keeps metadata current, which supports these standards.

- Implement Information Policies: Establish policies that dictate how information is collected, stored, and processed. Ensure these policies are communicated effectively to all stakeholders. With the automated monitoring and ML-driven tests, organizations can continuously evaluate information quality and adherence to these policies.

- Regular Audits and Reviews: Conduct periodic audits to assess compliance with governance policies and identify areas for improvement. This proactive approach helps ensure data consistency and preserve information integrity over time. The intelligent notifications and information reconciliation features enable prompt recognition of inconsistencies, ensuring that information remains precise and reliable.

Together, these steps strengthen governance and foster a culture of accountability and precision in information management. A well-structured governance framework safeguards information integrity and positions organizations to thrive in a data-driven landscape.
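To make the idea of information standards more concrete, here is a minimal Python sketch of a standard expressed as code. It checks naming conventions, types, and acceptable values for a single record. The field names, the snake_case convention, and the allowed country codes are illustrative assumptions, not part of any specific platform.

```python
import re
from dataclasses import dataclass
from typing import Any, Optional

# Assumed naming convention for this sketch: lowercase snake_case field names.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")


@dataclass(frozen=True)
class FieldStandard:
    """One entry in an illustrative data standard."""
    name: str
    dtype: type
    allowed: Optional[frozenset] = None  # None means any value of the right type


def check_record(record: dict[str, Any], standards: list[FieldStandard]) -> list[str]:
    """Return human-readable violations of the standard for one record."""
    violations = []
    for std in standards:
        if not SNAKE_CASE.match(std.name):
            violations.append(f"{std.name}: name is not snake_case")
        if std.name not in record:
            violations.append(f"{std.name}: missing field")
            continue
        value = record[std.name]
        if not isinstance(value, std.dtype):
            violations.append(f"{std.name}: expected {std.dtype.__name__}")
        elif std.allowed is not None and value not in std.allowed:
            violations.append(f"{std.name}: value {value!r} not allowed")
    return violations


# Hypothetical standard for a customer table.
STANDARDS = [
    FieldStandard("customer_id", int),
    FieldStandard("country_code", str, frozenset({"US", "DE", "IN"})),
]

print(check_record({"customer_id": 7, "country_code": "FR"}, STANDARDS))
```

Keeping the standard in one shared definition means every pipeline stage can run the same check, which is one way to avoid the discrepancies described above.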

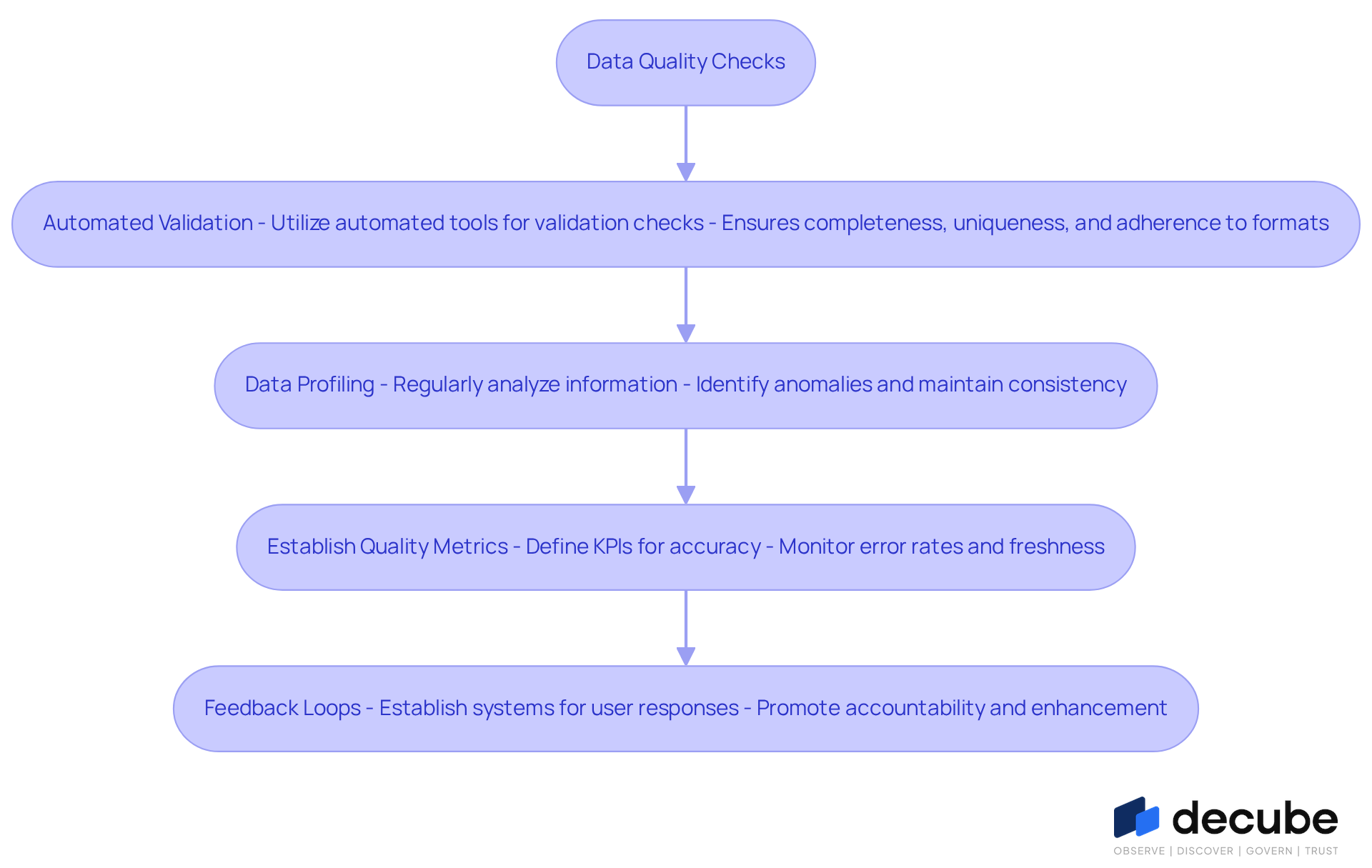

Implement Rigorous Data Quality Checks

Inaccurate information can lead to significant operational challenges, making rigorous data quality checks essential in data workflows. Here are some effective strategies:

- Automated Validation: Utilize automated tools for validation checks at different phases of the pipeline. This includes ensuring completeness, uniqueness, and adherence to specified formats, allowing for real-time monitoring and quick identification of issues. This significantly reduces the risk of errors propagating through the system.

- Data Profiling: Regularly profile your data using Decube's features to gain insight into its structure, quality, and distribution. Profiling surfaces anomalies early in the process, so teams can address potential issues before they escalate.

- Establish Quality Metrics: Define key performance indicators (KPIs) for data quality, such as error rates, data freshness, and adherence to established standards. Tracking these metrics continuously with ML-driven tests helps maintain high data quality and lets teams resolve discrepancies quickly.

- Feedback Loops: Establish channels for data users to report quality issues. This collaborative approach, backed by smart alerts and smooth integration, promotes a culture of accountability and continuous improvement, enabling teams to refine data quality processes over time.
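The automated validation described above can be sketched in a few lines of Python. This example checks completeness, uniqueness, and format for a batch of rows. The field names (`order_id`, `email`) and the deliberately loose email pattern are assumptions chosen for illustration; a real pipeline would likely rely on a dedicated validation tool.

```python
import re

# Deliberately loose pattern, for illustration only.
EMAIL_FORMAT = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_batch(rows, required, unique_key):
    """Check completeness, uniqueness, and format.

    Returns a list of (row_index, issue) tuples; an empty list means the
    batch passed every check.
    """
    issues = []
    seen = set()
    for i, row in enumerate(rows):
        # Completeness: every required field must be present and non-empty.
        for field in required:
            if row.get(field) in (None, ""):
                issues.append((i, f"missing {field}"))
        # Uniqueness: the key must not repeat within the batch.
        key = row.get(unique_key)
        if key is not None:
            if key in seen:
                issues.append((i, f"duplicate {unique_key}={key}"))
            seen.add(key)
        # Format: a non-empty email must match the expected shape.
        email = row.get("email")
        if email and not EMAIL_FORMAT.match(email):
            issues.append((i, "malformed email"))
    return issues


rows = [
    {"order_id": 1, "email": "a@example.com"},
    {"order_id": 1, "email": "not-an-email"},
    {"order_id": 2, "email": ""},
]
for index, issue in validate_batch(rows, ["order_id", "email"], "order_id"):
    print(index, issue)
```

Running a check like this at each pipeline stage, rather than only at the end, is what keeps a bad row from propagating downstream.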

Ultimately, the integrity of your information processes can determine the success of your decision-making efforts.
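As a rough illustration of quality metrics, the sketch below computes two of the KPIs mentioned above, error rate and freshness, and compares them with targets. The 1% error-rate target and 24-hour freshness window are assumed values for the example, not recommendations from any source.

```python
from datetime import datetime, timedelta, timezone


def error_rate(failed_rows: int, total_rows: int) -> float:
    """Share of rows that failed validation; 0.0 for an empty batch."""
    return failed_rows / total_rows if total_rows else 0.0


def freshness(last_loaded_at: datetime, now: datetime) -> timedelta:
    """Age of the most recent successful load."""
    return now - last_loaded_at


def kpi_report(failed_rows, total_rows, last_loaded_at, now,
               max_error_rate=0.01, max_age=timedelta(hours=24)):
    """Summarize both KPIs against assumed targets."""
    rate = error_rate(failed_rows, total_rows)
    age = freshness(last_loaded_at, now)
    return {
        "error_rate": rate,
        "error_rate_ok": rate <= max_error_rate,
        "age_hours": age.total_seconds() / 3600,
        "fresh": age <= max_age,
    }


# Fixed timestamps keep the example reproducible.
now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
last_load = datetime(2026, 1, 1, 6, 0, tzinfo=timezone.utc)
print(kpi_report(3, 200, last_load, now))
```

Here the batch misses both targets: 3 failures in 200 rows is a 1.5% error rate, and the last load is 30 hours old.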

Enhance Monitoring and Observability of Data Pipelines

Without effective monitoring, organizations risk significant quality issues that can disrupt workflows. To ensure data consistency, improving the monitoring and observability of your workflows is essential. Here are some best practices to implement:

- Real-Time Monitoring Tools: Use observability tools that provide immediate insight into data flows and workflow performance. These tools notify teams of anomalies and potential problems as they occur, and this kind of proactive detection can decrease quality issues by 40-60%.

- End-to-End Visibility: Employ automated column-level lineage capabilities to ensure that monitoring encompasses the complete pipeline, from ingestion to final output. This comprehensive perspective aids in identifying bottlenecks and inconsistencies across stages, enhancing overall information governance.

- Set Alerts and Thresholds: Configure intelligent notifications within the platform for key metrics such as information volume, processing time, and error rates. Establish thresholds that trigger notifications when anomalies occur, allowing for swift action. Organizations that invest in DataOps and workflow automation report an average ROI of 3.7x, underscoring the significance of this practice.

- Regular Health Assessments: Conduct routine health evaluations of your information flows using monitoring solutions to assess their performance and reliability. This proactive method helps preserve quality and data consistency, minimizing the necessity for firefighting. For instance, one organization found that regular health checks led to a 30% decrease in data-related incidents over six months.
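The alerting practice above can be sketched as a simple threshold check over pipeline metrics. The metric names (`row_count`, `processing_seconds`, `error_rate`) and the limits are hypothetical; an observability platform would typically manage these rules, and may learn thresholds rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Acceptable range for one pipeline metric."""
    metric: str
    minimum: float = float("-inf")
    maximum: float = float("inf")


def evaluate(metrics: dict, thresholds: list) -> list:
    """Return one alert message per metric that is missing or out of range."""
    alerts = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            alerts.append(f"{t.metric}: no value reported")
        elif not (t.minimum <= value <= t.maximum):
            alerts.append(f"{t.metric}={value} outside [{t.minimum}, {t.maximum}]")
    return alerts


# Assumed limits for a hypothetical nightly load.
THRESHOLDS = [
    Threshold("row_count", minimum=1000),
    Threshold("processing_seconds", maximum=600),
    Threshold("error_rate", maximum=0.01),
]

run_metrics = {"row_count": 250, "processing_seconds": 420, "error_rate": 0.002}
for alert in evaluate(run_metrics, THRESHOLDS):
    print(alert)
```

Treating a missing metric as an alert is a deliberate choice in this sketch: a pipeline that silently stops reporting is often a sign of a problem in its own right.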

By adopting these practices, organizations can transform their workflow efficiency and data reliability.
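To show what column-level lineage makes possible, the following sketch models lineage as a small graph and walks it to find every downstream column affected by an upstream change. The table and column names are invented for the example, and real lineage tools build this graph automatically rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical column-level lineage: each column maps to the columns derived from it.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "raw.orders.currency": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": [
        "marts.revenue.daily_total",
        "marts.revenue.avg_order",
    ],
}


def downstream(column, lineage):
    """Return every column reachable from `column`, in breadth-first order."""
    seen = {column}
    order = []
    queue = deque([column])
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(downstream("raw.orders.currency", LINEAGE))
```

With a traversal like this, an anomaly detected at ingestion can be traced to the reports that depend on it before consumers notice the inconsistency.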

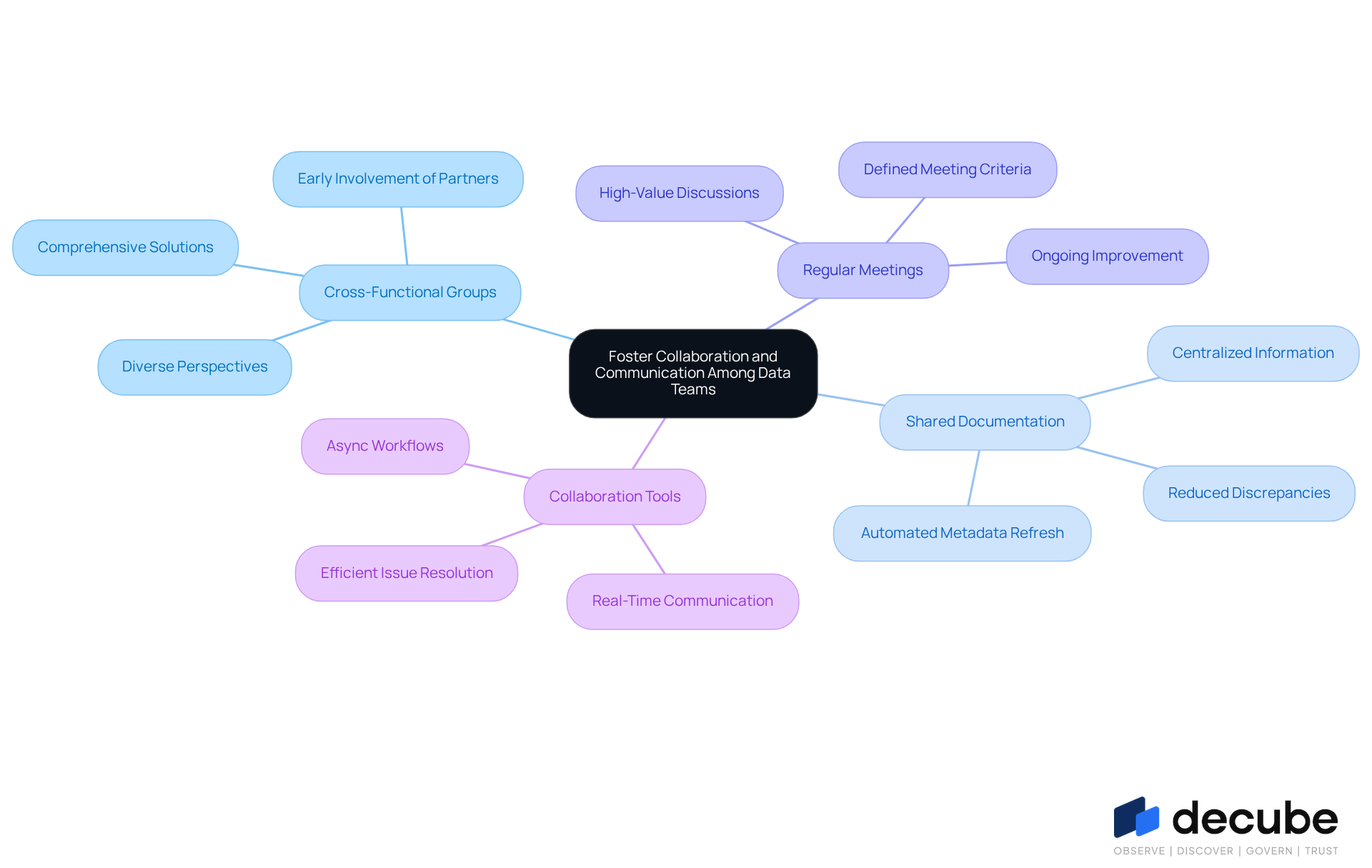

Foster Collaboration and Communication Among Data Teams

To achieve data consistency in information management, fostering collaboration and communication among teams is crucial. Here are some strategies to enhance teamwork:

- Cross-Functional Groups: Form cross-functional groups with participants from data engineering, data science, and business units. This diversity encourages a comprehensive approach to information management, as varied perspectives contribute to more resilient solutions. Involving product and technical partners early helps teams navigate complexity and reach agreement on information strategies.

- Shared Documentation: Maintain centralized documentation that outlines information definitions, governance policies, and quality standards. This practice ensures data consistency by providing all team members with access to consistent information, significantly reducing discrepancies and misunderstandings. Decube's automated crawling feature enhances this process by automatically refreshing metadata, ensuring that all documentation remains up-to-date without manual intervention. This capability saves time and improves information governance by providing accurate, up-to-date details for decision-making.

- Regular Meetings: Arrange frequent meetings to address quality concerns, exchange insights, and coordinate on best practices. Many teams struggle with unproductive meetings that waste time and resources, making it essential to define strict criteria for high-value discussions that address complex problems or resolve conflicts. This method encourages ongoing improvement and teamwork in solving problems.

- Collaboration Tools: Use collaboration tools such as Slack or Microsoft Teams to facilitate real-time communication among team members. These platforms enable quick resolution of information-related queries and issues, creating a more efficient and adaptable management process. Use them deliberately, though: teams that embrace async workflows consistently outperform those relying on constant meetings and messages.

By adopting these collaborative practices, organizations can enhance their information management efforts, leading to improved data consistency across pipelines. Industry leaders stress that effective collaboration not only addresses quality issues but also enhances overall organizational performance, making it a critical focus for teams in 2026. Additionally, participating in webinars like Decube's on data observability can provide valuable insights into maintaining data integrity and optimizing data strategies.

Conclusion

In a landscape where data drives decision-making, data consistency in pipelines is crucial for organizational success. A strong data governance framework helps organizations standardize their information management practices and comply with regulations. This foundational step sets the stage for effective data quality checks, comprehensive monitoring, and enhanced collaboration among teams, ultimately leading to improved decision-making and operational efficiency.

Key strategies discussed include:

- Defining information ownership

- Establishing clear information standards

- Implementing rigorous data quality checks throughout the data lifecycle

Additionally, fostering collaboration and communication among data teams emerges as a vital practice that enhances accountability and encourages a unified approach to data management. These practices collectively foster an environment that prioritizes data integrity and quickly addresses inconsistencies.

Organizations must understand that adopting these best practices is essential for achieving data consistency in their pipelines. Committing to a structured governance framework, using advanced monitoring tools, and fostering teamwork enables businesses to safeguard data quality and improve operational performance. Ultimately, the commitment to these practices can transform data management from a challenge into a strategic advantage.

Frequently Asked Questions

Why is a robust data governance framework essential for organizations?

A robust data governance framework is essential because inconsistent information management can lead to significant operational challenges. It ensures data consistency in pipelines and supports adherence to regulations like GDPR and HIPAA.

What are the key steps to implement a data governance framework?

The key steps to implement a data governance framework include defining information ownership, establishing information standards, implementing information policies, and conducting regular audits and reviews.

How do you define information ownership within a governance framework?

Information ownership is defined by assigning custodians responsible for quality and governance within their domains, promoting a uniform approach to information management throughout the organization.

What are information standards, and why are they important?

Information standards are standardized definitions and formats for information elements, including naming conventions, types, and acceptable values. They are important to avoid discrepancies and ensure consistency in information management.

What role do information policies play in data governance?

Information policies dictate how information is collected, stored, and processed. They ensure that all stakeholders are informed about these practices, promoting compliance and quality in information management.

How can organizations assess compliance with their governance policies?

Organizations can assess compliance through periodic audits that identify areas for improvement. Automated monitoring and ML-driven tests can continuously evaluate information quality and adherence to policies.

What features support the maintenance of information standards?

The automated crawling feature of the platform supports the maintenance of information standards by ensuring that metadata is handled seamlessly and kept current.

How do regular audits contribute to data governance?

Regular audits contribute to data governance by assessing compliance with governance policies, identifying areas for improvement, and helping to ensure data consistency and information integrity over time.

What benefits does a well-structured governance framework provide to organizations?

A well-structured governance framework safeguards information integrity, fosters a culture of accountability and precision in information management, and empowers organizations to thrive in a data-driven landscape.

