
4 Best Practices to Enhance AI Accuracy in Data Pipelines

Enhance AI accuracy in data pipelines with best practices for governance and quality assurance.

Introduction

Organizations that want to harness the full potential of artificial intelligence must first confront the challenge of data quality. The accuracy of AI systems depends heavily on the quality of the data flowing through their pipelines, yet many organizations struggle to maintain high standards of data governance and quality assurance. A sustained commitment to best practices in both areas is essential for AI success.

By optimizing their data pipelines, businesses can significantly improve AI accuracy, producing more reliable outcomes and a strategic advantage. Without a commitment to data integrity, organizations risk undermining the effectiveness of their AI initiatives. The four practices below outline the critical steps for optimizing data pipelines and protecting the integrity of AI models.

Establish Robust Data Governance Frameworks

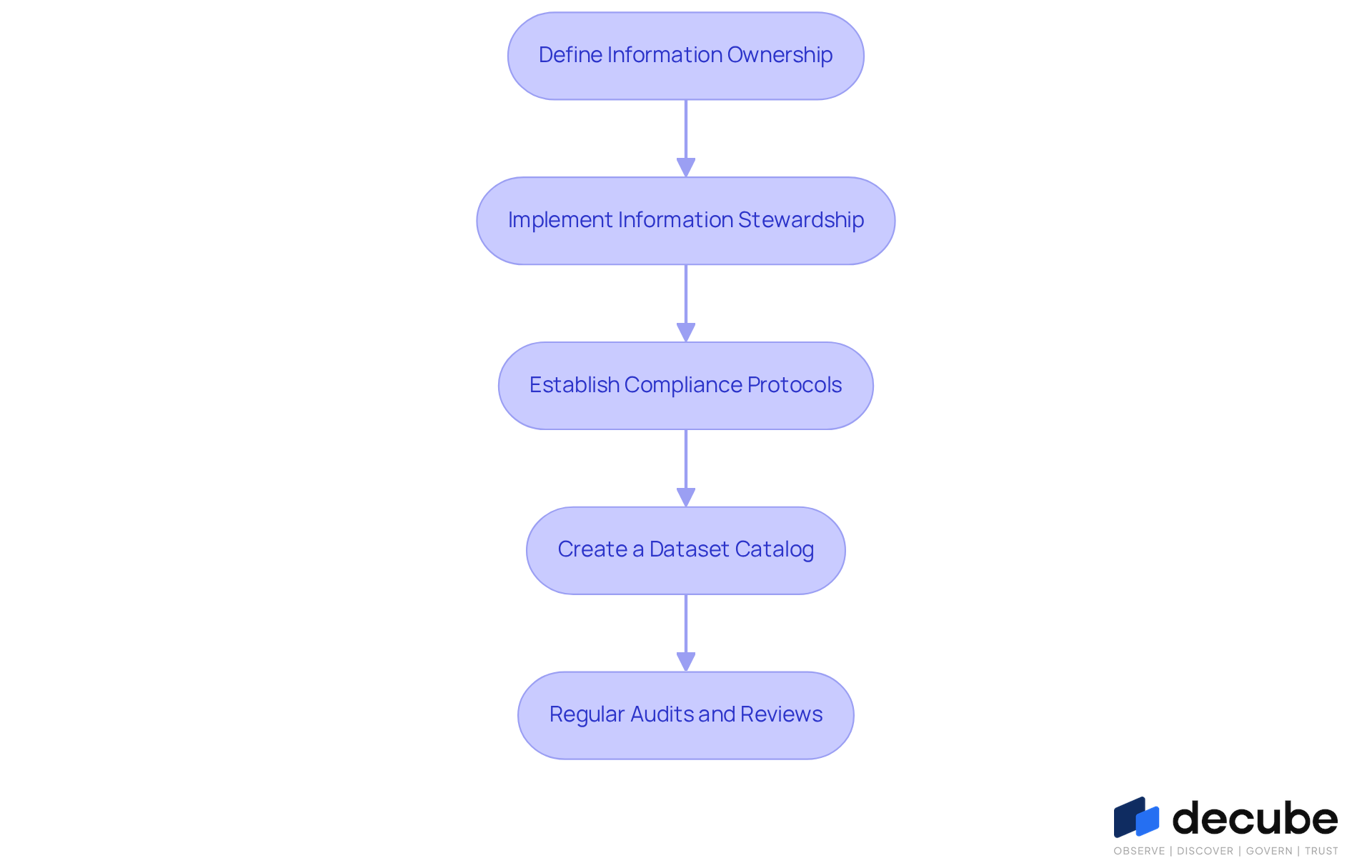

Inadequate governance frameworks make it difficult to manage data effectively. To improve AI accuracy in data pipelines, organizations must establish strong governance frameworks that prioritize ownership and stewardship. This involves defining clear policies and procedures for data management, ensuring accountability, and adhering to relevant regulations. Key steps include:

- Define Data Ownership: Assign clear ownership of data assets so that someone is accountable for their quality. Effective governance requires that specific individuals or teams be designated as data owners, accountable for the integrity and accuracy of the data they oversee.

- Implement Data Stewardship: Designate data stewards who oversee governance practices and ensure adherence to policies. Stewards play a vital role in maintaining data quality and in facilitating communication between data producers and consumers.

- Establish Compliance Protocols: Develop protocols to ensure adherence to regulations such as GDPR and HIPAA, which govern privacy and security. Organizations must monitor compliance continuously to mitigate the risks of data breaches and regulatory penalties.

- Create a Data Catalog: Implement a data catalog that offers visibility into data assets, their lineage, and their usage. A catalog helps teams understand how data flows and improves decision-making by giving stakeholders access to accurate, relevant data.

- Conduct Regular Audits and Reviews: Audit regularly to assess compliance with governance policies and identify areas for improvement. These reviews help organizations uphold high data standards and adapt to changing regulatory demands.
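As a rough illustration of how ownership, stewardship, cataloging, and audits fit together, the following Python sketch models a catalog entry and an audit pass over it. The `CatalogEntry` fields, the audit rules, and the dataset names are hypothetical; real catalog tools have their own schemas and APIs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Hypothetical catalog record; field names are illustrative only."""
    name: str
    owner: str | None = None    # accountable for the dataset's quality
    steward: str | None = None  # enforces governance policies day to day
    upstream: list[str] = field(default_factory=list)     # lineage: source datasets
    contains_personal_data: bool = False
    regulations: list[str] = field(default_factory=list)  # e.g. ["GDPR"]
    retention_days: int | None = None


def audit(catalog: dict[str, CatalogEntry]) -> list[str]:
    """Return governance findings for a periodic audit (the rules are assumptions)."""
    findings = []
    for entry in catalog.values():
        if not entry.owner:
            findings.append(f"{entry.name}: no data owner assigned")
        if not entry.steward:
            findings.append(f"{entry.name}: no data steward assigned")
        if entry.contains_personal_data and not entry.regulations:
            findings.append(f"{entry.name}: personal data without a compliance tag")
        if entry.contains_personal_data and entry.retention_days is None:
            findings.append(f"{entry.name}: personal data without a retention policy")
        for source in entry.upstream:
            if source not in catalog:
                findings.append(f"{entry.name}: upstream '{source}' is not cataloged")
    return findings


# Example catalog with hypothetical dataset names.
catalog = {
    "raw.customers": CatalogEntry(
        "raw.customers", owner="crm-team", steward="alice",
        contains_personal_data=True, regulations=["GDPR"], retention_days=365,
    ),
    "mart.churn_features": CatalogEntry(
        "mart.churn_features", owner="ml-team",
        upstream=["raw.customers", "raw.events"],
    ),
}

for finding in audit(catalog):
    print(finding)
```

Running it prints two findings for `mart.churn_features`: it has no steward, and its upstream `raw.events` is not cataloged. Keeping the rules as plain checks makes them easy to run on a schedule as part of regular audits.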

This structured approach mitigates risk and raises overall data quality, which in turn improves AI accuracy and supports better-informed decisions. Ultimately, a well-defined governance structure lets organizations use accurate data for strategic advantage.

Implement Data Quality Assurance Measures

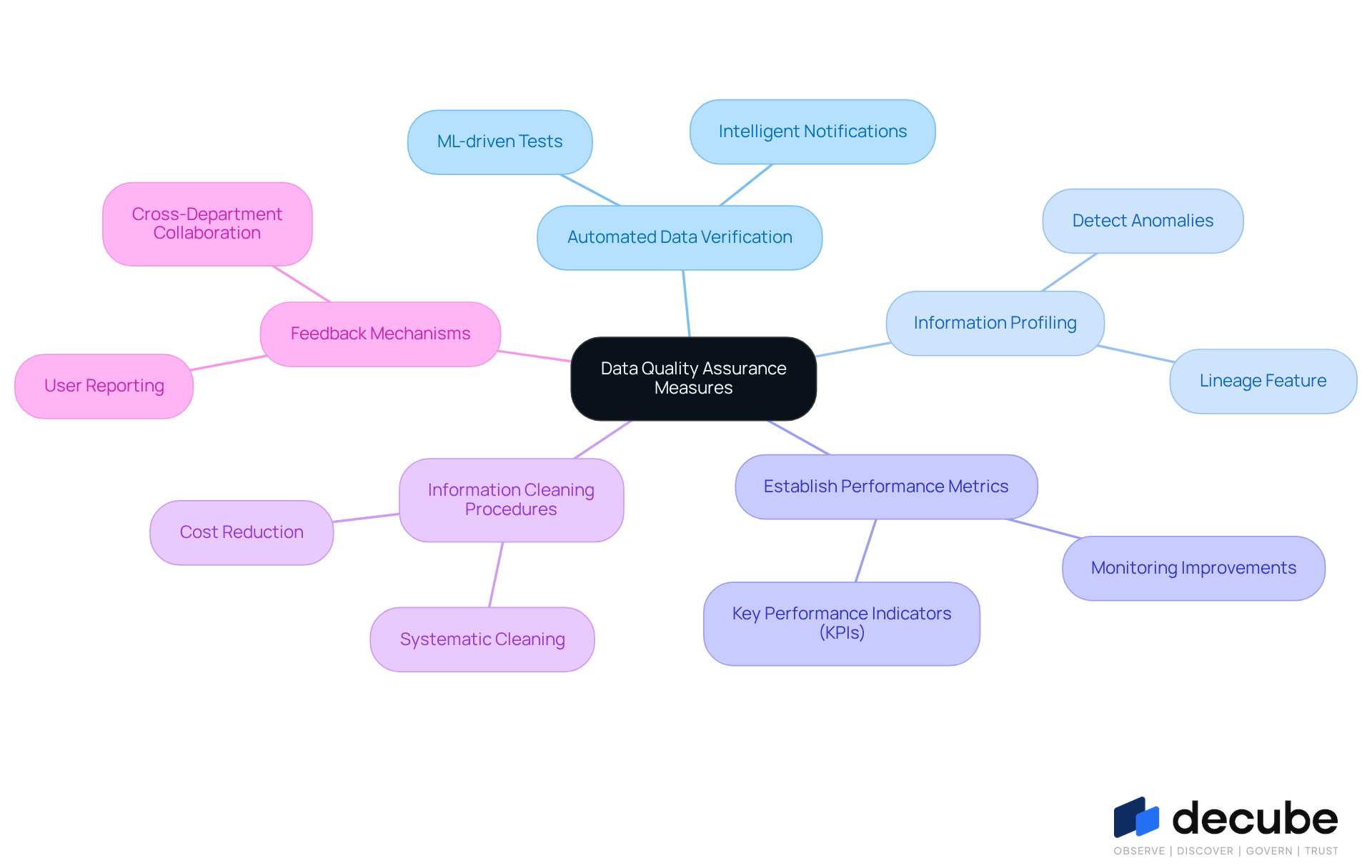

To maintain high data integrity in AI pipelines, organizations must implement robust quality assurance measures. Key practices include:

- Automated Data Verification: Use automated tools to validate data at each stage of the pipeline for accuracy, completeness, and consistency. Decube's ML-driven tests identify thresholds for data quality, which reduces human error and improves reliability, and its smart alerts keep teams aware of accuracy issues, simplifying monitoring.

- Data Profiling: Profile data regularly to detect anomalies, duplicates, and missing values. Decube's platform supports this proactive step so that problems can be corrected promptly, which matters because 96% of organizations face data issues when training AI models. Its lineage feature also shows how data flows, improving transparency and collaboration across teams.

- Establish Performance Metrics: Define key performance indicators (KPIs) for data quality, such as accuracy rates and error frequencies. Tracking these metrics helps organizations measure improvement and maintain high standards over time. As Andrew Ng has argued, if 80 percent of a machine learning team's work is data preparation, then ensuring data quality is the most important work that team does. Decube's metadata extraction and data quality management capabilities support this effort.

- Data Cleaning Procedures: Implement systematic cleaning procedures to resolve the issues that profiling uncovers. Only high-quality data should reach AI models, because poor data quality creates confusion and erodes trust in AI outputs. The cost is significant: organizations lose an average of 12.9 million dollars each year to poor data quality. Decube's data reconciliation features help organizations identify discrepancies effectively.

- Feedback Mechanisms: Establish feedback loops that let data consumers report quality issues. This drives ongoing improvement in data management practices and fosters a culture of shared responsibility and teamwork. Data integrity is a collective duty that requires collaboration across departments such as operations, quality, engineering, and IT. Decube's smart alerts and monitoring tools simplify this process by notifying teams promptly of any data integrity incident.
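Several of the practices above (automated verification, profiling, and KPIs) can be combined into a quality gate that a batch must pass before moving downstream. The sketch below is plain Python rather than any vendor's API; the column rules, the KPI definitions, and the threshold values are assumptions chosen for illustration.

```python
from collections import Counter

# Hypothetical validation rules: column -> predicate that a present value must satisfy.
RULES = {
    "customer_id": lambda v: isinstance(v, int) and v > 0,
    "email": lambda v: isinstance(v, str) and "@" in v,
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
}


def profile(rows, rules=RULES, key="customer_id"):
    """Compute simple data quality KPIs for a batch of records (dicts)."""
    cells = len(rows) * len(rules)
    missing = sum(1 for r in rows for col in rules if r.get(col) is None)
    present = cells - missing
    invalid = sum(
        1
        for r in rows
        for col, is_valid in rules.items()
        if r.get(col) is not None and not is_valid(r[col])
    )
    key_counts = Counter(r.get(key) for r in rows)
    return {
        "rows": len(rows),
        "completeness": present / cells if cells else 1.0,
        "validity": (present - invalid) / present if present else 1.0,
        "duplicate_keys": sum(n - 1 for n in key_counts.values() if n > 1),
    }


def passes_gate(kpis, min_completeness=0.95, min_validity=0.98, max_duplicates=0):
    """Decide whether a batch may move to the next stage (thresholds are examples)."""
    return (
        kpis["completeness"] >= min_completeness
        and kpis["validity"] >= min_validity
        and kpis["duplicate_keys"] <= max_duplicates
    )


batch = [
    {"customer_id": 1, "email": "a@example.com", "age": 34},
    {"customer_id": 2, "email": None, "age": 29},
    {"customer_id": 2, "email": "b@example.com", "age": 29},
    {"customer_id": 3, "email": "not-an-email", "age": 150},
]
kpis = profile(batch)
print(kpis)
print("promote batch:", passes_gate(kpis))
```

For the sample batch, completeness is 11/12 (one missing email), validity is 9/11 (a malformed email and an out-of-range age among the 11 present values), and one customer ID is duplicated, so the gate rejects the batch. Recording the same KPIs on every run produces the trend lines that performance metrics are meant to track.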

By prioritizing these quality assurance measures, organizations can significantly improve the accuracy and dependability of their AI models. Ultimately, these measures let organizations harness AI's full potential while preserving data integrity.
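Data cleaning and reconciliation can also be sketched in a few lines. The example below keeps the latest version of each record, then compares row counts, an amount total, and key sets between a source extract and the loaded target. The function names, column names, and choice of checks are illustrative assumptions, not a description of Decube's reconciliation feature.

```python
def deduplicate(rows, key, order_by):
    """Keep only the most recent record per key, judged by the order_by column."""
    latest = {}
    for row in rows:
        k = row[key]
        if k not in latest or row[order_by] > latest[k][order_by]:
            latest[k] = row
    return list(latest.values())


def reconcile(source, target, key, amount):
    """Compare a source extract with the loaded target and describe discrepancies."""
    issues = []
    if len(source) != len(target):
        issues.append(f"row count mismatch: source={len(source)} target={len(target)}")
    source_total = sum(r[amount] for r in source)
    target_total = sum(r[amount] for r in target)
    if source_total != target_total:
        issues.append(f"{amount} total mismatch: source={source_total} target={target_total}")
    missing = {r[key] for r in source} - {r[key] for r in target}
    if missing:
        issues.append(f"keys missing in target: {sorted(missing)}")
    return issues


raw = [
    {"order_id": 1, "amount_cents": 1000, "updated_at": "2024-01-01"},
    {"order_id": 1, "amount_cents": 1200, "updated_at": "2024-01-03"},  # later correction
    {"order_id": 2, "amount_cents": 500, "updated_at": "2024-01-02"},
    {"order_id": 3, "amount_cents": 700, "updated_at": "2024-01-02"},
]
source = deduplicate(raw, key="order_id", order_by="updated_at")
target = [r for r in source if r["order_id"] != 3]  # simulate a row dropped during load
for issue in reconcile(source, target, key="order_id", amount="amount_cents"):
    print(issue)
```

Amounts are stored as integer cents so totals compare exactly; with floating-point amounts, the total check would need a tolerance. ISO-formatted date strings sort chronologically, which is why a plain `>` works for `updated_at` here.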

Utilize Advanced Monitoring Tools for Data Observability

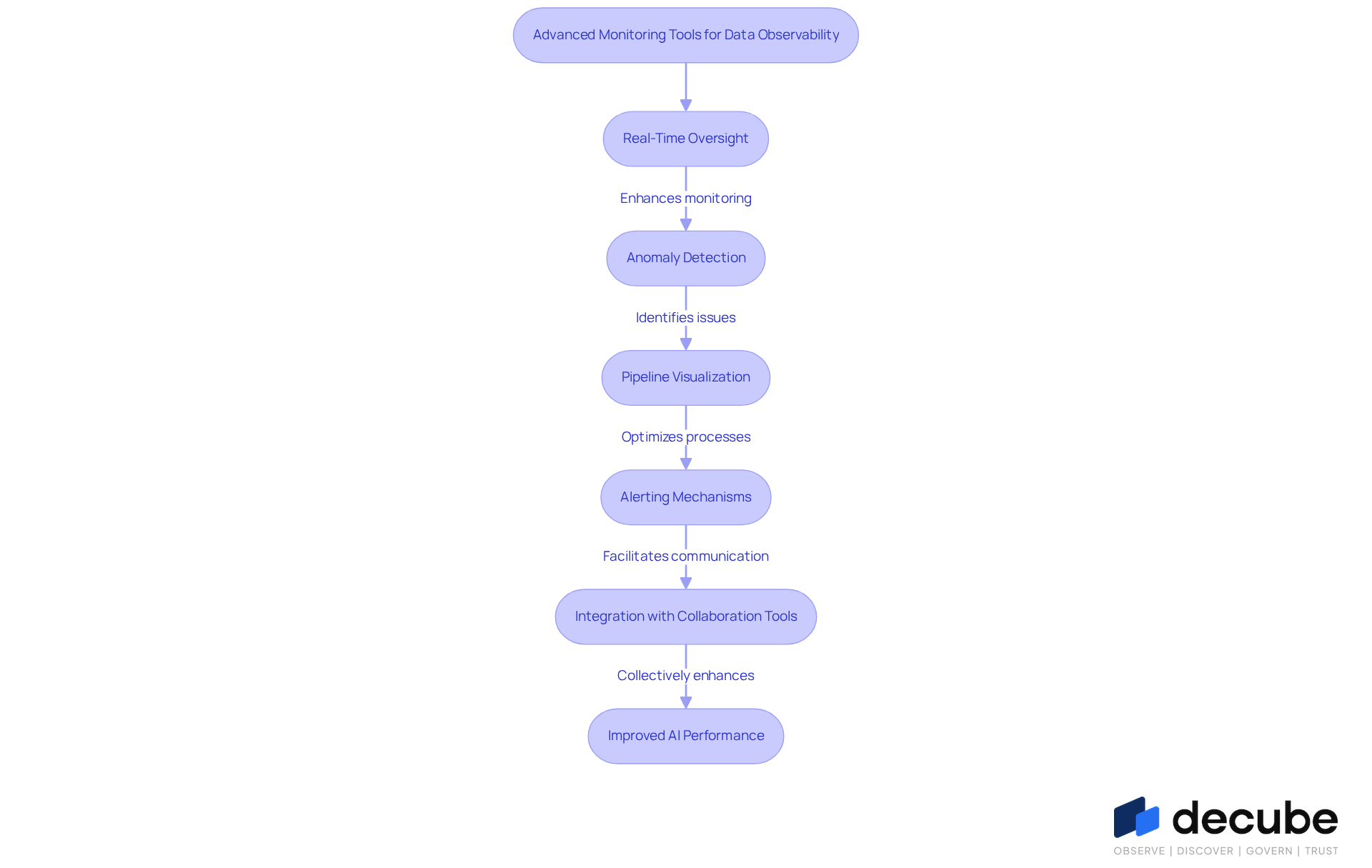

To improve AI precision and maintain the integrity of data pipelines, organizations must adopt advanced monitoring tools that improve data observability. Key practices include:

- Real-Time Monitoring: Implement real-time monitoring that continuously tracks data flow and performance metrics, so that problems affecting AI effectiveness are identified promptly. Decube's user-friendly design and automated crawling mean that once data sources are connected, metadata is updated automatically, improving overall observability.

- Anomaly Detection: Use machine learning algorithms, such as Decube's ML-driven tests, to recognize anomalies in data patterns and act on potential quality problems before they spread. Without effective anomaly detection, organizations risk quality issues that undermine AI performance. AI copilots that analyze telemetry data can make anomaly detection more effective still.

- Pipeline Visualization: Use visualization tools, such as an end-to-end lineage view, to map data flows clearly and pinpoint bottlenecks or inefficiencies in the pipeline. This clarity helps optimize data processes and keep operations running smoothly.

- Alerting Mechanisms: Establish robust alerting, such as Decube's smart alerts, that promptly notifies teams of critical issues so they can intervene and resolve them in time. Effective alerting can significantly reduce downtime and improve overall pipeline reliability. However, organizations should avoid over-relying on AI for monitoring, as doing so may erode engineers' diagnostic skills.

- Integration with Collaboration Tools: Integrate monitoring tools with collaboration platforms such as Microsoft Teams or Slack to streamline communication and speed up the resolution of data incidents. Decube's integrations foster a collaborative environment in which teams can quickly address and fix issues.
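Real-time monitoring and anomaly detection often start with two simple signals: row volume and freshness. The sketch below uses a trailing z-score as a stand-in for the ML-driven detection mentioned above; the window size, threshold, and freshness limit are assumptions, and production tools typically rely on more sophisticated models.

```python
import statistics
from datetime import datetime, timedelta, timezone


def volume_anomalies(counts, window=7, threshold=3.0):
    """Return indexes whose value lies more than `threshold` standard deviations
    from the mean of the preceding `window` values (a trailing z-score test)."""
    flagged = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mean = statistics.mean(history)
        stdev = statistics.stdev(history)
        if stdev == 0:
            if counts[i] != mean:  # flat history: any change is unusual
                flagged.append(i)
        elif abs(counts[i] - mean) / stdev > threshold:
            flagged.append(i)
    return flagged


def is_stale(last_loaded_at, max_age, now=None):
    """Freshness check: True when the table was not refreshed within `max_age`."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age


daily_rows = [1000, 1020, 980, 1010, 995, 1005, 990, 1002, 310, 1008]
print("anomalous days:", volume_anomalies(daily_rows))

now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
last_load = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
print("stale:", is_stale(last_load, timedelta(hours=2), now=now))
```

Only the 310-row day (index 8) is flagged. Because that outlier then stays in the trailing window for a week, it inflates the standard deviation for those days; excluding flagged points from the baseline is one possible refinement.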

Advanced monitoring tools improve data observability, which contributes directly to better AI accuracy and overall performance. Neglecting to invest in data observability could mean missed opportunities and diminished competitiveness in AI.
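Alerting and collaboration-tool integration can be connected through a small routing step that filters incidents by severity and formats them for a chat channel. This sketch targets a Slack incoming webhook, which accepts a JSON body with a `text` field; the severity scale, the `Incident` fields, and the webhook URL are hypothetical placeholders you would supply.

```python
import json
import urllib.request
from dataclasses import dataclass

SEVERITY_LEVELS = {"info": 0, "warning": 1, "critical": 2}  # hypothetical scale


@dataclass
class Incident:
    dataset: str
    check: str
    severity: str  # one of SEVERITY_LEVELS
    detail: str


def should_alert(incident, min_severity="warning"):
    """Route only incidents at or above the chosen severity, to limit alert fatigue."""
    return SEVERITY_LEVELS[incident.severity] >= SEVERITY_LEVELS[min_severity]


def build_slack_payload(incident):
    """Format an incident as a JSON body for a Slack incoming webhook."""
    icon = {"critical": ":rotating_light:", "warning": ":warning:"}.get(
        incident.severity, ":information_source:"
    )
    text = (f"{icon} [{incident.severity.upper()}] {incident.dataset}: "
            f"{incident.check} failed. {incident.detail}")
    return {"text": text}


def send_to_slack(webhook_url, payload, timeout=10):
    """POST the payload to a webhook URL that you supply; returns the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


incident = Incident("mart.orders", "row_count_anomaly", "critical",
                    "Loaded 310 rows against roughly 1,000 expected.")
if should_alert(incident):
    print(build_slack_payload(incident))
    # send_to_slack("<your-webhook-url>", build_slack_payload(incident))
```

The example only builds and prints the payload; the `send_to_slack` call stays commented out because it needs a real webhook URL. Other destinations, such as Microsoft Teams, may need their own payload builder.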

Establish Continuous Improvement and Feedback Loops

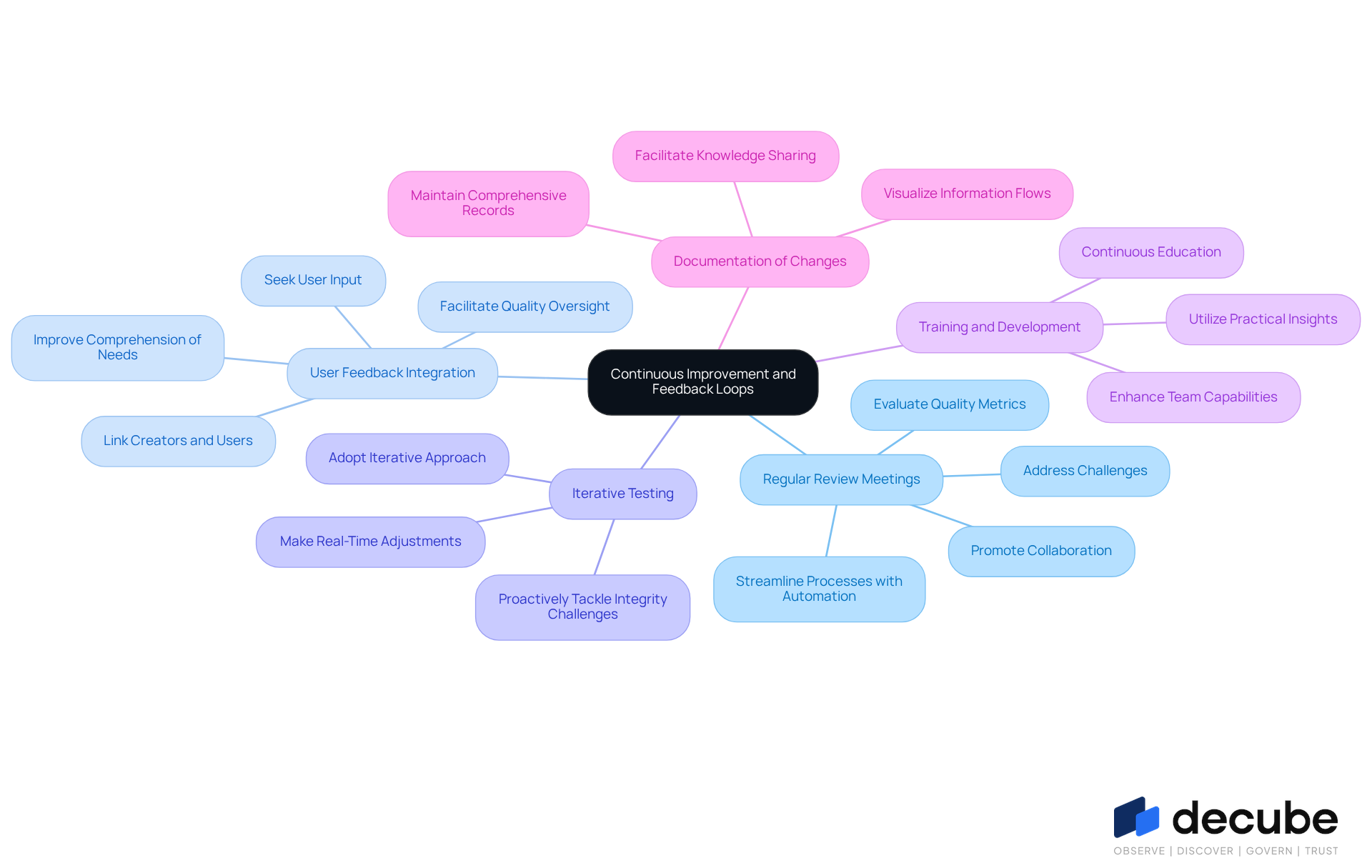

To improve data management and AI accuracy, organizations must prioritize feedback loops and iterative processes. Key strategies include:

- Regular Review Meetings: Hold regular meetings to evaluate quality metrics, address challenges, and pinpoint areas for improvement. These meetings break down communication barriers and promote collaboration among data stakeholders. Decube's automated crawling supports this process by refreshing metadata automatically, which strengthens data governance and observability.

- User Feedback Integration: Actively seek input from data consumers on data quality and usability. Connecting data producers with data consumers helps each side understand the other's requirements and responsibilities, leading to measurable improvements. Decube's user-friendly design supports this by letting users monitor data quality and share insights across teams, keeping practices aligned with user needs.

- Iterative Testing: Test data processes iteratively to improve model accuracy. Feedback loops backed by data and analytics let organizations adjust in near real time based on performance results. With Decube's ML-driven tests and smart alerts, teams can proactively detect and address data integrity problems, keeping their data accurate and relevant over time.

- Training and Development: Provide continuous education so teams stay current on best practices and emerging technologies in data management. This investment strengthens team capabilities and responsiveness to evolving challenges. Decube's data profiling and management features can also support training by offering practical insight into governance.

- Documentation of Changes: Keep comprehensive documentation of changes to data processes. This transparency supports knowledge sharing and helps teams understand the rationale behind each change. Decube's automated column-level lineage supports this documentation by letting teams visualize data flows and see how a change affects quality downstream.
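Column-level lineage is what makes change documentation actionable: before altering a column, a team can list everything downstream of it and record that list alongside the change. The sketch below walks a hypothetical lineage graph breadth-first; the column names, the dictionary representation, and the changelog fields are assumptions, not the format any particular tool exports.

```python
from collections import deque
from datetime import date

# Hypothetical column-level lineage: each column maps to the columns derived from it.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "raw.fx_rates.rate": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.daily_total", "ml.features.avg_order_value"],
    "mart.revenue.daily_total": ["dashboard.finance.revenue_tile"],
}


def downstream_impact(column, lineage):
    """Breadth-first walk returning every column affected by a change to `column`."""
    seen, queue = set(), deque([column])
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(seen)


def change_record(column, description, lineage, changed_on=None):
    """Build a changelog entry that documents a change and its downstream reach."""
    return {
        "date": (changed_on or date.today()).isoformat(),
        "column": column,
        "description": description,
        "impacted": downstream_impact(column, lineage),
    }


record = change_record(
    "raw.orders.amount",
    "Store amount as integer cents instead of float dollars",
    LINEAGE,
    changed_on=date(2024, 5, 1),
)
print(record)
```

Because `staging.orders.amount_usd` feeds both the revenue mart and an ML feature, the record shows that a seemingly local change reaches a finance dashboard and a model input.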

Implementing these strategies allows organizations to continuously improve their data practices, ensuring their AI systems adapt to evolving demands while maintaining AI accuracy and reliability.
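Review meetings, user feedback, and iterative testing all benefit from a little tooling. The hypothetical helpers below rank datasets by user-reported issues to set a meeting agenda, and compare quality KPIs between a baseline run and a candidate run to catch regressions. Metric names, the tolerance, and the report format are illustrative assumptions.

```python
from collections import Counter


def review_agenda(reports, top_n=3):
    """Rank datasets by the number of user-reported issues, for the next review meeting."""
    return Counter(report["dataset"] for report in reports).most_common(top_n)


def compare_runs(baseline, candidate, tolerance=0.01):
    """List metrics (assumed higher-is-better) where the candidate run regressed by
    more than `tolerance` versus the baseline run, or where a metric went missing."""
    regressions = {}
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None:
            regressions[metric] = "missing in candidate run"
        elif base_value - new_value > tolerance:
            regressions[metric] = f"{base_value:.3f} -> {new_value:.3f}"
    return regressions


reports = [
    {"dataset": "mart.orders", "issue": "duplicate order IDs"},
    {"dataset": "raw.customers", "issue": "missing emails"},
    {"dataset": "mart.orders", "issue": "late refresh"},
    {"dataset": "ml.features", "issue": "unexpected nulls"},
    {"dataset": "mart.orders", "issue": "totals disagree with finance"},
    {"dataset": "raw.customers", "issue": "invalid country codes"},
]
print("agenda:", review_agenda(reports, top_n=2))

baseline = {"completeness": 0.98, "validity": 0.97, "freshness_sla_met": 1.0}
candidate = {"completeness": 0.985, "validity": 0.93}
print("regressions:", compare_runs(baseline, candidate))
```

`compare_runs` assumes every metric is higher-is-better; metrics such as error rates or latency would need the comparison reversed.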

Conclusion

Organizations face significant challenges in achieving AI accuracy without robust practices in their data pipelines. Establishing these practices is essential for leveraging the full potential of artificial intelligence. By focusing on data governance, quality assurance, advanced monitoring, and continuous improvement, organizations can ensure that their AI systems operate with high reliability and effectiveness.

A strong data governance framework is crucial, encompassing defined ownership, data stewardship, and compliance protocols. Data quality assurance measures such as automated verification, data profiling, and systematic cleaning procedures are also necessary. Advanced monitoring tools improve observability, allowing organizations to detect anomalies and optimize data flow. Finally, a culture of continuous improvement built on feedback loops and iterative testing keeps data practices relevant and effective.

In a data-driven landscape, prioritizing these best practices is vital for maintaining a competitive edge. By investing in governance frameworks, quality assurance, monitoring tools, and continuous feedback mechanisms, businesses can significantly improve AI accuracy in their data pipelines. Neglecting these practices, by contrast, risks missed opportunities and a weaker competitive position.

Frequently Asked Questions

Why is a robust data governance framework important?

A robust data governance framework is essential for managing data effectively, improving AI accuracy in data pipelines, and mitigating the risks of data breaches and regulatory penalties.

What are the key components of an effective data governance framework?

Key components include defining data ownership, implementing data stewardship, establishing compliance protocols, creating a data catalog, and conducting regular audits and reviews.

What does defining data ownership entail?

Defining data ownership means assigning clear responsibility for data assets to individuals or teams, so that they are accountable for the integrity and accuracy of the data they manage.

What role do data stewards play in data governance?

Data stewards oversee governance practices, ensure adherence to policies, and facilitate communication between data producers and consumers, playing a vital role in maintaining data quality.

Why are compliance protocols necessary in data governance?

Compliance protocols are necessary to ensure adherence to regulations such as GDPR and HIPAA, which govern privacy and security, and to monitor compliance continuously so that risks are mitigated.

What is a data catalog and why is it important?

A data catalog is a tool that provides visibility into data assets, their lineage, and their usage, helping teams understand how data flows and improving decision-making by ensuring access to accurate and relevant data.

How do regular audits and reviews contribute to data governance?

Regular audits and reviews assess compliance with governance policies, identify areas for improvement, and help organizations maintain high data standards while adapting to changing regulatory demands.

List of Sources

Establish Robust Data Governance Frameworks

- Companies Are Scaling AI on Data They Don't Trust, New Study Finds (https://prnewswire.com/news-releases/companies-are-scaling-ai-on-data-they-dont-trust-new-study-finds-302761641.html)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)

- Data Governance Best Practices for 2026 | Drive Business Value with Trusted Data (https://alation.com/blog/data-governance-best-practices)

- Data Governance in 2026: Key Strategies for Enterprise Compliance and Innovation (https://community.trustcloud.ai/article/data-governance-in-2025-what-enterprises-need-to-know-today)

Implement Data Quality Assurance Measures

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://finance.yahoo.com/news/data-priorities-2026-ai-adoption-190600933.html)

- AI Data Quality in 2026: Challenges & Best Practices (https://ai-innovate.com/ai-data-quality-2026)

- AI Data Quality in 2026: Challenges & Best Practices (https://aimultiple.com/data-quality-ai)

- The Data Problems Undermining Midmarket AI Projects In 2026 (https://mescomputing.com/news/2026/ai/the-data-problems-undermining-midmarket-ai-projects-in-2026)

- Data Quality Governance for Agentic AI Pipelines - AI CERTs News (https://aicerts.ai/news/data-quality-governance-for-agentic-ai-pipelines)

Utilize Advanced Monitoring Tools for Data Observability

- The Most Effective Tools for Data Pipeline Monitoring (https://acceldata.io/blog/what-are-data-pipeline-monitoring-tools)

- Real time is going mainstream | Why Real-time is the new standard in data engineering (https://dataopsleadership.substack.com/p/real-time-is-going-mainstream-why)

- Rootly | Top 5 AI Observability Trends Shaping 2026 Ops Teams (https://rootly.com/sre/top-5-ai-observability-trends-shaping-2026-ops-teams-efa18)

- What 2026 Gartner Market Guide For Data Observability Tools Means For Your Data And AI Team: My Take (https://montecarlodata.com/blog-what-2026-gartner-market-guide-for-data-observability-tools-means-for-your-data-and-ai-team-my-take)

Establish Continuous Improvement and Feedback Loops

- To Get Better Customer Data, Build Feedback Loops into Your Products (https://hbr.org/2023/07/to-get-better-customer-data-build-feedback-loops-into-your-products)

- Improving Data Quality by Using Feedback Loops (https://exasol.com/blog/data-quality-and-the-feedback-loop)

- Feedback Loops: Harness The Power of Your Data Goldmine | Digile (https://digile.com/blog/feedback-loops-harness-the-power-of-your-data-goldmine)

- Introducing the Global Data Quality feedback loop (https://cint.com/blog/introducing-the-global-data-quality-feedback-loop)

- Using Data and Feedback Loops to Drive Continuous Improvement | Agile Seekers (https://agileseekers.com/blog/using-data-and-feedback-loops-to-drive-continuous-improvement)
