4 Best Practices for Effective Cloud Data Security Solutions

Discover best practices for a robust cloud data security solution to protect sensitive information.

Introduction

As organizations increasingly rely on cloud environments to store and process sensitive information, the risk of data breaches has grown, making cloud data security a primary concern. For data engineers, safeguarding this data is essential, both to protect against breaches and to comply with stringent regulations. Yet the path to effective cloud data security is full of challenges, including misconfigurations and changing compliance requirements.

How can data engineers overcome these obstacles? Without robust security measures, organizations risk not only data loss but also regulatory penalties.

Define Cloud Data Security and Its Importance for Data Engineers

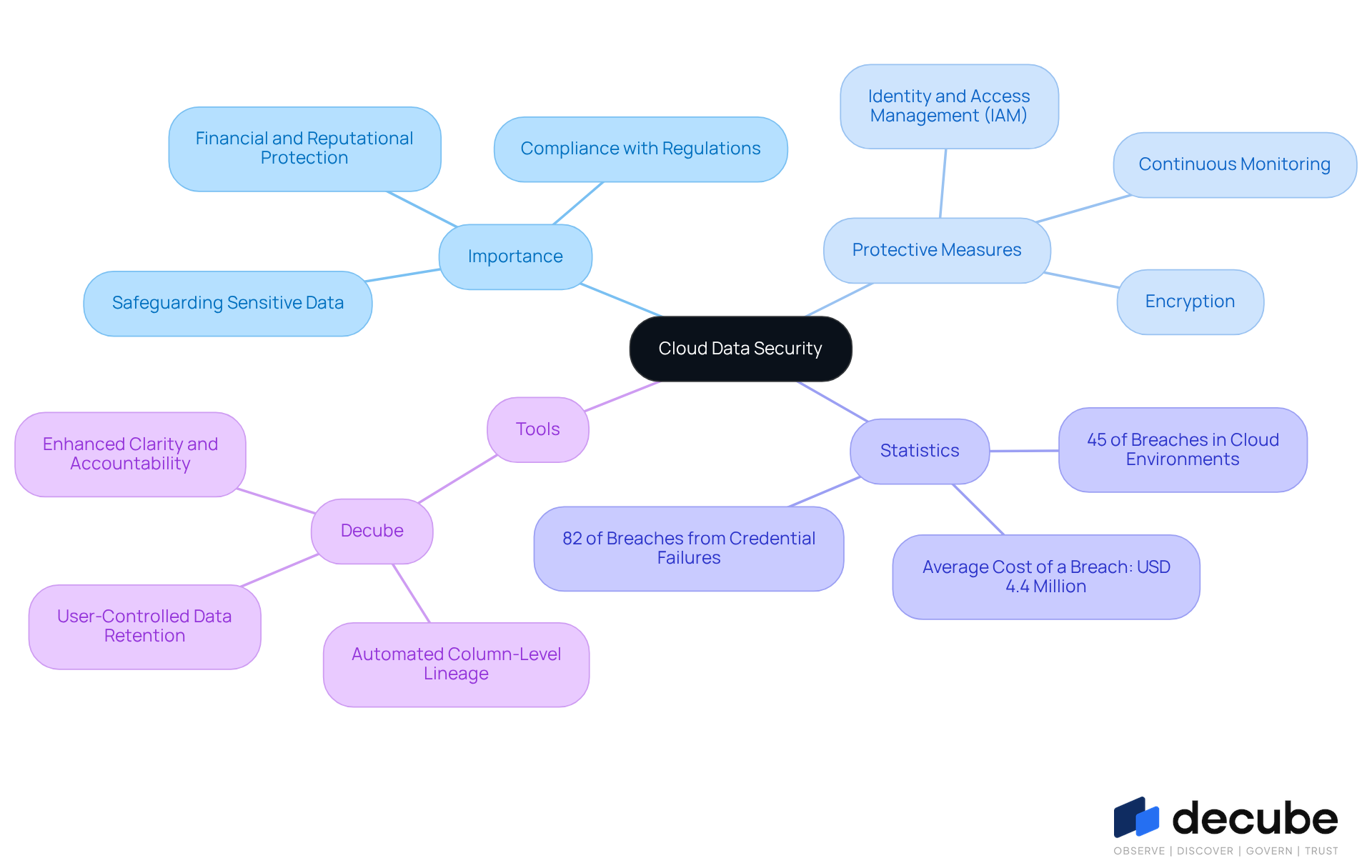

Cloud data security is the practice of safeguarding sensitive data in cloud environments against unauthorized access and cyber threats. For data engineers, mastering it is crucial, because they are responsible for ensuring that data remains both accessible and secure. This responsibility includes implementing robust protective measures, including:

- Encryption

- Identity and access management (IAM)

- Continuous monitoring to ensure the safety of sensitive information

The importance of cloud data security is underscored by concerning statistics:

- 45% of all breaches now happen in cloud environments, with the average cost of a breach reaching USD 4.4 million.

- 82% of cloud breaches trace back to credential failures, underscoring the critical importance of stringent IAM practices.

Effective cloud data security not only preserves data integrity but also supports adherence to regulatory standards, shielding organizations from breaches that could lead to significant financial and reputational harm.

Decube functions as a unified data trust platform that improves cloud security through observability and governance features. With automated column-level lineage, engineers can monitor the entire data flow across components, which improves clarity and accountability in data management. Additionally, Decube's Data Recon module enables user-controlled data retention, storing unmatched rows for up to 30 days, which helps data engineers manage data quality.
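To make the idea of column-level lineage concrete, here is a minimal sketch of tracing a column back to its upstream sources. It is not Decube's API or data model; the `LINEAGE` mapping, the column names, and the `upstream_columns` helper are all hypothetical and exist only for illustration.

```python
from collections import deque

# Hypothetical lineage map: each column points to the columns it is derived from.
LINEAGE = {
    "reports.revenue_by_region.total": [
        "warehouse.orders.amount",
        "warehouse.orders.region_id",
    ],
    "warehouse.orders.amount": ["raw.payments.amount_cents"],
    "warehouse.orders.region_id": ["raw.customers.region"],
}


def upstream_columns(column, lineage):
    """Return every column that feeds `column`, directly or indirectly."""
    seen = set()
    queue = deque([column])
    while queue:
        current = queue.popleft()
        for parent in lineage.get(current, []):
            if parent not in seen:  # skip columns already visited
                seen.add(parent)
                queue.append(parent)
    return sorted(seen)


if __name__ == "__main__":
    for source in upstream_columns("reports.revenue_by_region.total", LINEAGE):
        print(source)
```

A traversal like this is what lets an engineer answer "which raw fields end up in this report?" when a sensitive column needs to be located or a quality issue needs to be traced to its origin.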

Real-world practice illustrates these protective measures. For instance, organizations that treat least-privilege access as their top priority take a proactive approach to reducing the risks of excessive permissions. Continuous monitoring and automated data classification are also being adopted more widely to improve visibility and control over sensitive information. By leveraging Decube's governance framework, organizations can strengthen their data protection strategies and mitigate risks in the evolving digital landscape.
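As a rough illustration of what a least-privilege review can look like, the sketch below flags policy statements that grant wildcard actions or resources. It assumes a policy document laid out in the AWS-style JSON format (`Statement`, `Effect`, `Action`, `Resource`, `Sid`); the bucket names and the `overly_broad_statements` helper are made up for this example, and a real review would cover far more than wildcards.

```python
def overly_broad_statements(policy):
    """Return the Sid of each Allow statement with a wildcard action or resource."""
    findings = []
    for stmt in policy.get("Statement", []):
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # A policy may give a single string instead of a list.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        wildcard_resource = "*" in resources  # matches only the bare "*" entry
        if wildcard_action or wildcard_resource:
            findings.append(stmt.get("Sid", "<no Sid>"))
    return findings


# Hypothetical policy used only for illustration.
EXAMPLE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "ReadReports",
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-reports/*",
        },
        {"Sid": "AdminEverything", "Effect": "Allow", "Action": "*", "Resource": "*"},
        {
            "Sid": "AllS3",
            "Effect": "Allow",
            "Action": ["s3:*"],
            "Resource": ["arn:aws:s3:::example-raw"],
        },
    ],
}

if __name__ == "__main__":
    print(overly_broad_statements(EXAMPLE_POLICY))
```

Here the narrowly scoped `ReadReports` statement passes, while the two statements with wildcard actions are reported for review.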

Identify Common Challenges in Cloud Data Security Implementation

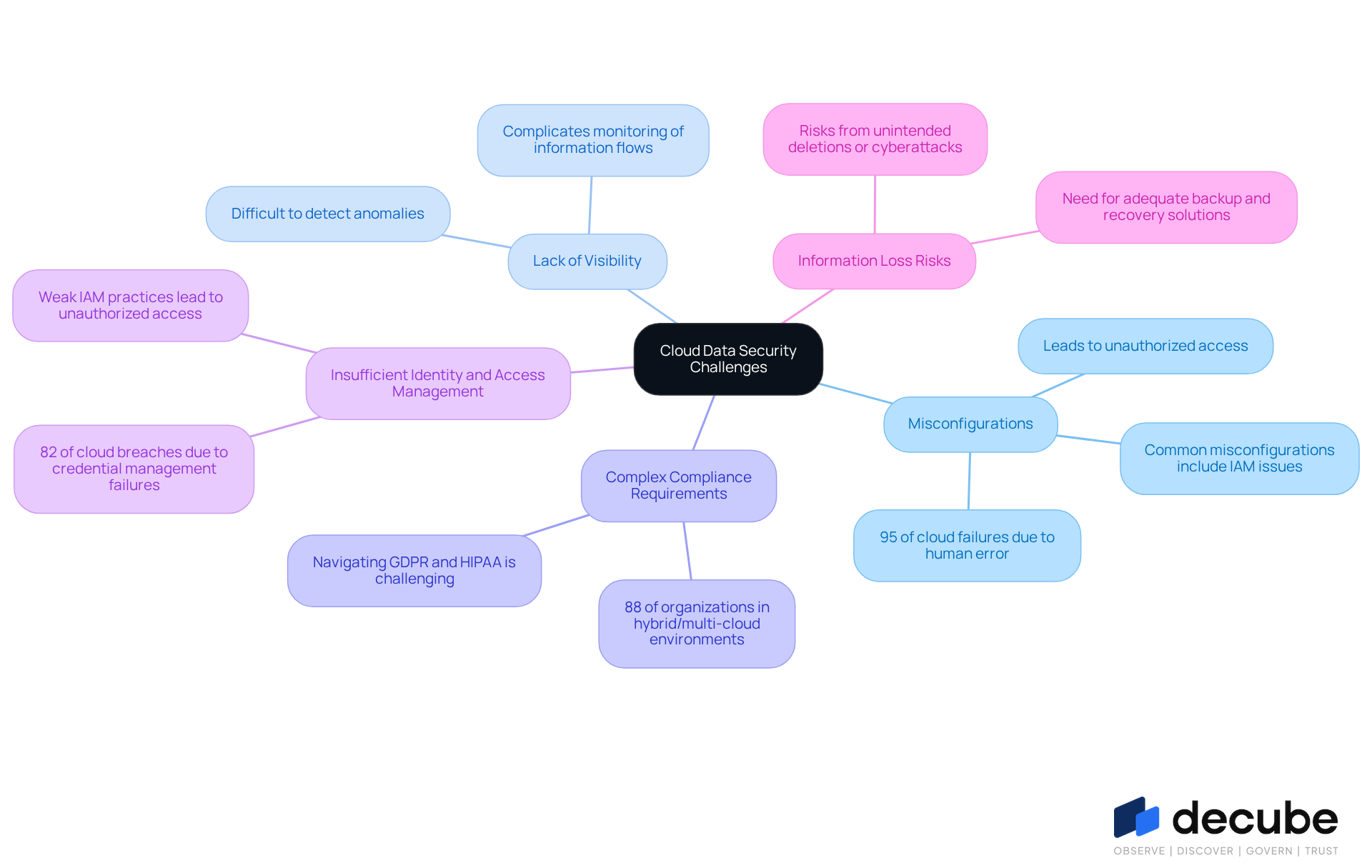

Data engineers face numerous challenges when implementing cloud data security solutions, each with significant implications for organizational safety:

- Misconfigurations: Misconfigurations are a leading cause of security breaches, with 95% of failures attributed to human error. They can expose sensitive information to unauthorized access, which makes careful configuration management essential.

- Lack of Visibility: Many organizations struggle to achieve comprehensive visibility within their cloud environments. This lack of visibility complicates the monitoring of data flows and the detection of anomalies, making it difficult to respond to potential threats in real time.

- Complex Compliance Requirements: Navigating the intricate landscape of regulatory standards, such as GDPR and HIPAA, can be daunting. With 88% of organizations operating in hybrid or multi-cloud environments, ensuring compliance across multiple platforms adds to the complexity.

- Insufficient Identity and Access Management: Weak identity and access management (IAM) practices can lead to unauthorized access. Credential management failures account for 82% of cloud breaches, highlighting the necessity of establishing strong authentication measures to safeguard sensitive information.

- Data Loss Risks: Organizations face substantial risk of data loss from accidental deletions or cyberattacks. Without adequate backup and recovery solutions, the potential for significant data loss rises, so comprehensive protection strategies are necessary.

Addressing these challenges requires a proactive approach, including regular audits, continuous monitoring, and adherence to cloud data security best practices. Without it, organizations risk severe repercussions from data breaches and compliance failures.
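A regular audit for misconfigurations can start as a simple scripted check. The sketch below assumes a hypothetical inventory of storage buckets, represented by a made-up `BucketConfig` record; in practice the inventory would come from a cloud provider's API or a configuration database, which this example does not attempt to model.

```python
from dataclasses import dataclass


@dataclass
class BucketConfig:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    public_access: bool
    encrypted_at_rest: bool
    versioning_enabled: bool


def find_misconfigurations(buckets):
    """Map each bucket name to a list of issues; compliant buckets are omitted."""
    findings = {}
    for bucket in buckets:
        issues = []
        if bucket.public_access:
            issues.append("publicly accessible")
        if not bucket.encrypted_at_rest:
            issues.append("not encrypted at rest")
        if not bucket.versioning_enabled:
            issues.append("versioning disabled")
        if issues:
            findings[bucket.name] = issues
    return findings


INVENTORY = [
    BucketConfig("analytics-exports", True, True, True),
    BucketConfig("raw-events", False, False, False),
    BucketConfig("curated-zone", False, True, True),
]

if __name__ == "__main__":
    for name, issues in find_misconfigurations(INVENTORY).items():
        print(f"{name}: {', '.join(issues)}")
```

Running a check like this on a schedule addresses two of the challenges above at once: it catches human error in configuration and flags buckets where disabled versioning would make recovery from accidental deletion harder.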

Implement Effective Strategies for Cloud Data Security Solutions

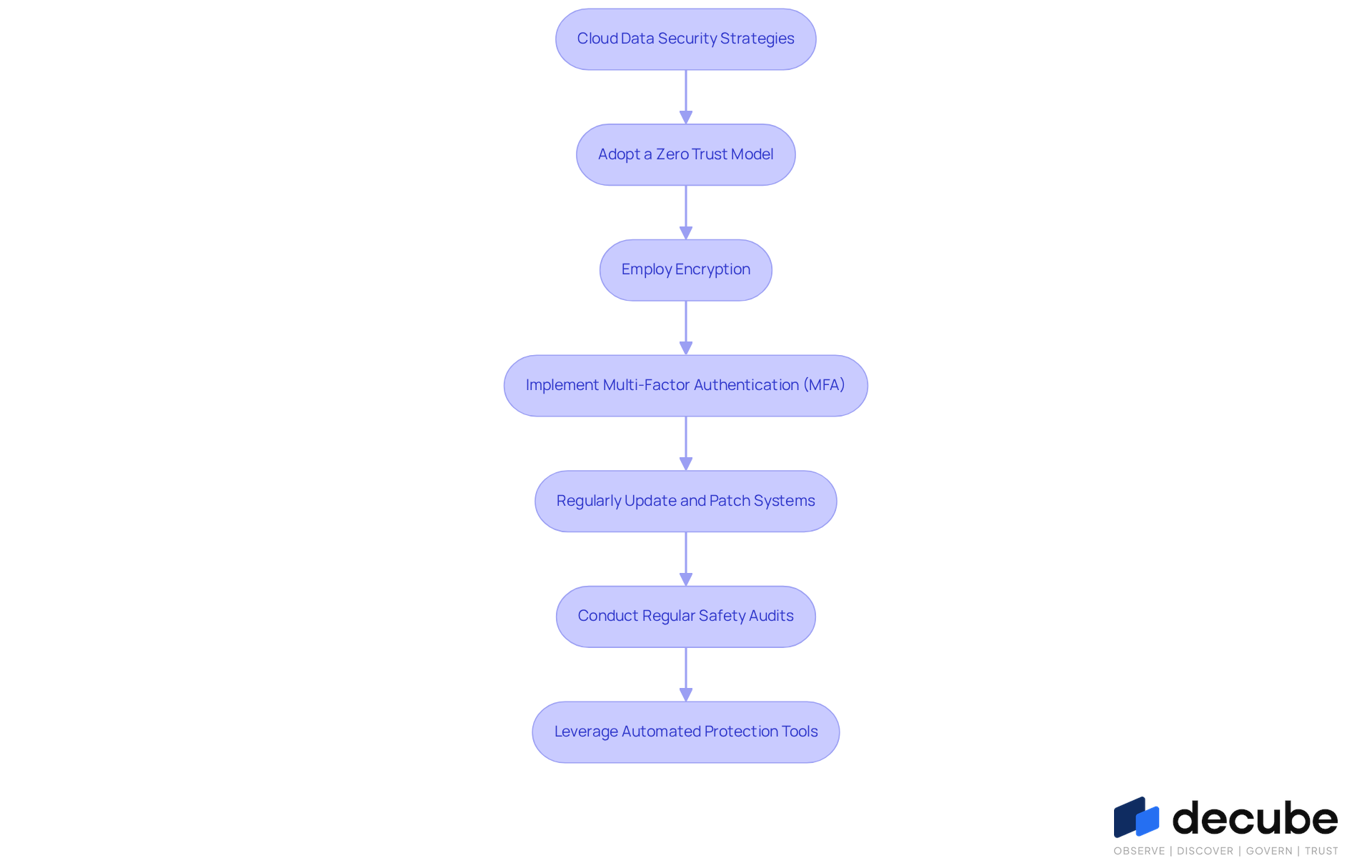

In an era where data breaches are increasingly common, implementing a cloud data security solution is paramount for organizations. Data engineers should consider the following strategies:

- Adopt a Zero Trust Model: A zero trust architecture ensures that no user or device is trusted by default, requiring validation for every access request.

- Employ Encryption: Encrypting data both at rest and in transit adds a crucial layer of protection, safeguarding sensitive details from unauthorized access.

- Implement Multi-Factor Authentication (MFA): MFA significantly reduces the risk of unauthorized access by requiring multiple forms of verification before granting access to sensitive data.

- Regularly Update and Patch Systems: Keeping cloud services and applications up to date helps protect against known vulnerabilities that could be exploited by attackers.

- Conduct Regular Security Audits: Frequent audits help identify weaknesses in security controls and verify compliance with regulatory standards, enabling organizations to address potential vulnerabilities proactively.

- Leverage Automated Security Tools: AI-driven security tools can enhance threat detection and response, allowing quicker identification of anomalies and potential breaches.
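To show what a second authentication factor involves, the following sketch computes a time-based one-time password (TOTP) in the style of RFC 6238 using only the Python standard library. The shared secret here is the RFC's published test secret, not a value anyone should deploy, and the function names are chosen for this example. A production system would normally rely on a maintained MFA service or library rather than hand-rolled code.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute an HMAC-SHA1 time-based one-time password."""
    counter = unix_time // step                # number of elapsed time steps
    message = struct.pack(">Q", counter)       # 8-byte big-endian counter
    digest = hmac.new(secret, message, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                 # dynamic truncation offset
    chunk = digest[offset:offset + 4]
    value = struct.unpack(">I", chunk)[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, unix_time: int) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    expected = totp(secret, unix_time)
    return hmac.compare_digest(expected, submitted)


if __name__ == "__main__":
    test_secret = b"12345678901234567890"  # RFC 6238 test secret, illustration only
    print(totp(test_secret, 59, digits=8))
```

The code depends on both the shared secret and the current time window, so a stolen password alone is not enough to pass verification.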

By adopting these strategies, organizations can strengthen their defenses against evolving threats. Implementing them not only protects sensitive information but also builds organizational resilience against future threats.
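The zero trust principle described above, where nothing is trusted by default and every request is validated, can be sketched as a deny-by-default authorization check. The `AccessRequest` fields, the `ALLOWED` grants, and the role and resource names are assumptions made for this example rather than any particular product's model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    # Hypothetical request attributes evaluated on every call.
    user: str
    role: str
    resource: str
    mfa_verified: bool
    device_compliant: bool


# Hypothetical explicit grants: (role, resource) pairs that are permitted.
ALLOWED = {
    ("analyst", "reports.sales"),
    ("engineer", "warehouse.orders"),
}


def authorize(request: AccessRequest) -> bool:
    """Deny by default: every check must pass for every request."""
    if not request.mfa_verified:
        return False
    if not request.device_compliant:
        return False
    return (request.role, request.resource) in ALLOWED


if __name__ == "__main__":
    ok = AccessRequest("dana", "analyst", "reports.sales", True, True)
    no_mfa = AccessRequest("dana", "analyst", "reports.sales", False, True)
    print(authorize(ok), authorize(no_mfa))
```

The design choice worth noting is that there is no "trusted network" shortcut: a request from a known user still fails if MFA or device posture is missing, and anything not explicitly granted is refused.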

Ensure Compliance with Regulatory Standards in Cloud Data Security

An effective cloud data security solution hinges on strict adherence to regulatory standards. Data engineers must ensure that their practices align with various regulations, including:

- General Data Protection Regulation (GDPR): Organizations are required to implement strong measures to safeguard personal data and uphold individuals' rights. With GDPR penalties cited as reaching €2.9 billion by 2023, the financial risks of non-compliance are significant. Data lineage plays a crucial role here, offering the visibility required to demonstrate compliance and minimize risk by tracing data origins and transformations. This visibility also improves data quality and speeds up root-cause analysis.

- Health Insurance Portability and Accountability Act (HIPAA): For organizations managing healthcare information, HIPAA compliance is essential to protect patient details. In 2023, HIPAA breach notifications reportedly reached 700 million records, underscoring the critical need for stringent security measures. Notably, 96% of healthcare organizations faced cyber threats, emphasizing the urgency of compliance in this sector. Implementing data lineage can improve collaboration between business and technical teams, helping ensure that data handling practices meet HIPAA requirements and strengthening audit readiness.

- Payment Card Industry Data Security Standard (PCI DSS): Businesses handling credit card transactions must comply with PCI DSS requirements to safeguard cardholder data. Following PCI DSS guidelines is crucial to avoid hefty penalties and maintain customer trust. In fact, 67% of breaches were due to compliance failures, illustrating the risks of non-compliance. Data lineage can help organizations monitor and manage data access, reinforcing their compliance posture and improving overall data governance.

- Federal Risk and Authorization Management Program (FedRAMP): Cloud service providers working with U.S. federal agencies must adhere to FedRAMP standards, which encompass thorough security assessments and continuous monitoring.

To achieve compliance, data engineers should create a comprehensive framework that includes regular audits, detailed documentation of protective measures, and continuous monitoring of access and usage. This approach not only supports compliance but also fosters a culture of security within the organization. As Sahar Lester pointed out, "the average expense of a breach skyrocketed to $4.45 million in 2023 (IBM)," highlighting the importance of proactive measures. Such measures mitigate risk and strengthen the overall integrity of a cloud data security solution, particularly when combined with data lineage. Ultimately, a robust compliance framework protects sensitive information and fortifies the organization's reputation in the digital landscape.
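Monitoring of access and usage can begin with a periodic review of access logs against an approved list of principals. The sketch below assumes a hypothetical log format (dictionaries with `principal`, `table`, and `action` keys) and a made-up `AUTHORIZED` mapping; it is meant to show the shape of such a check, not a specific regulation's requirements.

```python
# Hypothetical mapping of sensitive tables to the principals approved to use them.
AUTHORIZED = {
    "patients.records": {"svc-claims"},
    "payments.cards": {"svc-billing"},
}


def flag_unauthorized_access(events):
    """Return log events that touch a sensitive table from an unapproved principal."""
    flagged = []
    for event in events:
        approved = AUTHORIZED.get(event["table"])
        if approved is not None and event["principal"] not in approved:
            flagged.append(event)
    return flagged


# Hypothetical access log entries used only for illustration.
EVENTS = [
    {"principal": "svc-claims", "table": "patients.records", "action": "SELECT"},
    {"principal": "intern-laptop", "table": "patients.records", "action": "SELECT"},
    {"principal": "svc-billing", "table": "payments.cards", "action": "SELECT"},
    {"principal": "svc-claims", "table": "payments.cards", "action": "EXPORT"},
    {"principal": "anyone", "table": "public.docs", "action": "SELECT"},
]

if __name__ == "__main__":
    for event in flag_unauthorized_access(EVENTS):
        print(f"{event['principal']} -> {event['table']} ({event['action']})")
```

The flagged events double as audit evidence: each one records who touched which sensitive table, which supports the documentation side of a compliance framework.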

Conclusion

Organizations must recognize that effective cloud data security is essential to safeguard sensitive information against increasing cyber threats. The responsibility falls heavily on data engineers to implement and maintain robust security measures, ensuring that data remains both accessible and secure. By understanding the intricacies of cloud data security, organizations can bolster their defenses against breaches and comply with essential regulatory standards.

Throughout the article, key strategies for achieving effective cloud data security have been discussed, including:

- The adoption of a zero trust model

- The importance of encryption

- The necessity of robust identity and access management practices

Organizations face significant challenges in maintaining cloud data security, including misconfigurations and complex compliance requirements, emphasizing the need for a proactive approach to security. By leveraging tools like Decube and implementing continuous monitoring, organizations can enhance their data governance and mitigate risks associated with cloud environments.

Prioritizing cloud data security is essential to prevent devastating financial and reputational consequences from data breaches. Organizations must commit to ongoing education, regular audits, and the adoption of advanced security measures to navigate the evolving threat landscape. This commitment not only safeguards data assets but also establishes a security-focused culture crucial for long-term success.

Frequently Asked Questions

What is cloud data security?

Cloud data security refers to the measures and practices implemented to protect sensitive data stored in online environments from unauthorized access and cyber threats.

Why is cloud data security important for data engineers?

It is crucial for data engineers to master cloud data security to ensure that information remains both accessible and safe, as they are responsible for implementing protective measures to safeguard data.

What are some key protective measures for cloud data security?

Key protective measures include encryption, identity and access management (IAM), and continuous monitoring to ensure the safety of sensitive information.

What statistics highlight the importance of cloud data security?

Statistics indicate that 45% of all breaches occur in cloud environments, with the average cost of a breach reaching USD 4.4 million. Additionally, 82% of cloud breaches are linked to credential failures, emphasizing the need for stringent IAM practices.

How does effective cloud data security benefit organizations?

Effective cloud data security preserves data integrity, supports compliance with regulatory standards, and protects organizations from potential breaches that could result in significant financial and reputational harm.

What role does Decube play in cloud data security?

Decube functions as a unified data trust platform that enhances cloud security through observability and governance features, such as automated column-level lineage and user-controlled data retention.

What is the significance of least-privilege access in cloud data security?

Organizations that prioritize least-privilege access demonstrate a proactive strategy to reduce risks associated with excessive permissions, thereby enhancing their security posture.

How are continuous monitoring and automated data classification used in cloud data security?

Continuous monitoring and automated data classification are increasingly adopted to improve visibility and control over sensitive information across cloud environments.

How can organizations enhance their data protection strategies?

By leveraging Decube's comprehensive governance framework, organizations can significantly improve their data protection strategies and mitigate risks in the evolving digital landscape.

List of Sources

- Define Cloud Data Security and Its Importance for Data Engineers

- Cloud Security Statistics [2026]: Key Data & Trends (https://app.stationx.net/articles/cloud-security-statistics)

- Thirty 2026 Cloud Cybersecurity Statistics (https://trustle.com/post/2026-cybersecurity-statistics)

- Cloud security challenges arise for data engineers (https://scworld.com/brief/cloud-security-challenges-arise-for-data-engineers)

- 205 Cybersecurity Stats and Facts for 2026 (https://vikingcloud.com/blog/cybersecurity-statistics)

- 12 Cloud Data Security Best Practices to Protect Sensitive Data (https://forcepoint.com/blog/insights/cloud-data-security-best-practices)

- Identify Common Challenges in Cloud Data Security Implementation

- Cloud Security Statistics [2026]: Key Data & Trends (https://app.stationx.net/articles/cloud-security-statistics)

- 98.6% of companies have misconfigurations in their cloud environments (https://cloudcomputing-news.net/news/98-6-of-companies-have-misconfigurations-in-their-cloud-environments)

- Top Key Cloud Security Statistics You Need in 2026 | TechMagic (https://techmagic.co/blog/cloud-security-statistics)

- Palo Alto Networks Exposes Multi-Million-Dollar Cloud Misconfigurations (https://sdxcentral.com/news/palo-alto-networks-exposes-multi-million-dollar-cloud-misconfigurations)

- 35+ Cloud Security Statistics, Data & Trends for 2026 (https://thenetworkinstallers.com/blog/cloud-security-statistics)

- Implement Effective Strategies for Cloud Data Security Solutions

- 50+ Cloud Security Statistics in 2026 (https://sentinelone.com/cybersecurity-101/cloud-security/cloud-security-statistics)

- Top 10 Quotes About Cloud Security (https://secureworld.io/industry-news/top-10-quotes-about-cloud-security)

- Implementing a Zero Trust Architecture | NCCoE (https://nccoe.nist.gov/projects/implementing-zero-trust-architecture)

- 2023 Cloud Security Report Shows Many Data Breaches - Press Release (https://cpl.thalesgroup.com/about-us/newsroom/2023-cloud-security-cyberattacks-data-breaches-press-release)

- Zero Trust Is the Big Idea. 2026 Is the Year It Got Small. (https://darkreading.com/cyber-risk/zero-trust-is-the-big-idea-2026-is-the-year-it-got-small-and-specific)

- Ensure Compliance with Regulatory Standards in Cloud Data Security

- Staying Ahead of GDPR Compliance Updates in 2026: What Tech & Data Leaders Need to Know (https://bigid.com/blog/gdpr-compliance-updates-for-tech-data-leaders)

- Cloud Data Security Guide: Essential Strategies for 2026 (https://ironcladfamily.com/blog/cloud-data-security)

- Compliance Statistics | 2026 Edition – Gitnux (https://gitnux.org/compliance-statistics)

- Cybersecurity Statistics and Insights 2026 - DataGlobeHub (https://dataglobehub.com/cybersecurity-statistics-and-insights)
