
10 Essential Data Pipeline Observability Solutions for Engineers

Discover top data pipeline observability solutions to enhance data integrity and governance.

Introduction

As organizations increasingly depend on data for decision-making, ensuring data quality and integrity has become a critical challenge. Engineers must navigate a complex landscape of data pipelines, where even minor discrepancies can cause significant operational setbacks. This article explores ten data pipeline observability solutions that help engineers build trust in data, streamline governance, and maintain robust monitoring practices.

Decube: Comprehensive Data Trust Platform for Observability and Governance

Decube is a data trust platform designed for the AI era, providing an integrated solution for data observability, discovery, and governance. Its core offerings encompass:

- Data observability

- Cataloging

- Governance

- Data products

Key features such as column-level lineage mapping, automated governance with policy management, and machine learning-powered anomaly detection enable organizations to maintain high standards of quality and trust. The platform's tools, including Decube CoPilot, support custom data validation and real-time monitoring, which makes it useful for engineers and AI/ML professionals.

User testimonials highlight Decube's intuitive design and its effectiveness in maintaining trust in data. Users appreciate the platform's ability to streamline collaboration among teams and enhance data monitoring, which facilitates early problem identification. In 2024, over 65% of data leaders prioritized governance, acknowledging its vital role in improving quality and compliance. This shift underscores the need for robust governance structures, especially as organizations face increasing data complexity and regulatory demands.

Case studies indicate that organizations implementing effective governance frameworks experience up to 45% lower breach costs, underscoring the financial advantages of prioritizing data integrity. As the data observability market is projected to reach $4.73 billion by 2030, Decube's comprehensive strategy positions it as a strong choice for businesses aiming to refine their data management practices in a rapidly evolving landscape.

Monte Carlo: Data Observability for Real-Time Monitoring and Anomaly Detection

Monte Carlo is distinguished by its data observability features, particularly real-time monitoring and anomaly detection. The platform automates the identification of quality issues, allowing teams to pinpoint and address problems before they escalate. Key functionalities, such as automated alerts and intuitive dashboards, help teams maintain the integrity of their data pipelines. As a result, data remains both trustworthy and useful, which is essential for informed decision-making in today’s fast-paced environment.
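To make the idea of anomaly detection on a pipeline metric concrete, here is a minimal sketch in plain Python. It is not Monte Carlo's method or API; the function name `find_anomalies`, the z-score rule, the threshold, and the sample row counts are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_anomalies(values, threshold=3.0, min_history=5):
    """Return indices whose value deviates from the preceding history
    by more than `threshold` standard deviations (a simple z-score rule)."""
    anomalies = []
    for i in range(min_history, len(values)):
        history = values[:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily row counts for one table; day 7 drops sharply.
daily_row_counts = [1000, 1020, 990, 1010, 1005, 998, 1012, 120, 1003]
print(find_anomalies(daily_row_counts))  # → [7]
```

In a real deployment the flagged index would typically trigger an alert. Note that this naive version keeps the anomalous value in later history, which a production detector would likely want to avoid.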

Great Expectations: Open-Source Tool for Data Quality and Validation

Great Expectations is an open-source framework designed to ensure data integrity through validation and profiling. It lets teams define expectations for their datasets, automate testing, and document workflows related to data integrity. By integrating with existing data pipelines, Great Expectations helps organizations uphold consistent quality standards, which makes it a valuable tool for engineers who prioritize reliability and precision in their data management practices.
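The idea of declaring expectations for a dataset and testing rows against them can be sketched in plain Python. This is deliberately not the Great Expectations API; the `Expectation` class, the `validate` function, and the sample `orders` rows are hypothetical names used only to illustrate the concept.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Expectation:
    name: str                      # human-readable description, doubles as documentation
    check: Callable[[dict], bool]  # returns True when a row satisfies the expectation


def validate(rows, expectations):
    """Return a mapping of expectation name to the indices of failing rows."""
    report = {}
    for exp in expectations:
        report[exp.name] = [i for i, row in enumerate(rows) if not exp.check(row)]
    return report


expectations = [
    Expectation("order_id is not null", lambda r: r.get("order_id") is not None),
    Expectation(
        "amount is between 0 and 10000",
        lambda r: isinstance(r.get("amount"), (int, float)) and 0 <= r["amount"] <= 10_000,
    ),
]

# Hypothetical sample data with one null id and one negative amount.
orders = [
    {"order_id": 1, "amount": 25.0},
    {"order_id": None, "amount": 40.0},
    {"order_id": 3, "amount": -5.0},
]

report = validate(orders, expectations)
for name, failures in report.items():
    status = "PASS" if not failures else f"FAIL rows {failures}"
    print(f"{name}: {status}")
```

Keeping each expectation as a named object means the same list can drive both the automated test run and human-readable documentation of what the dataset is supposed to look like.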

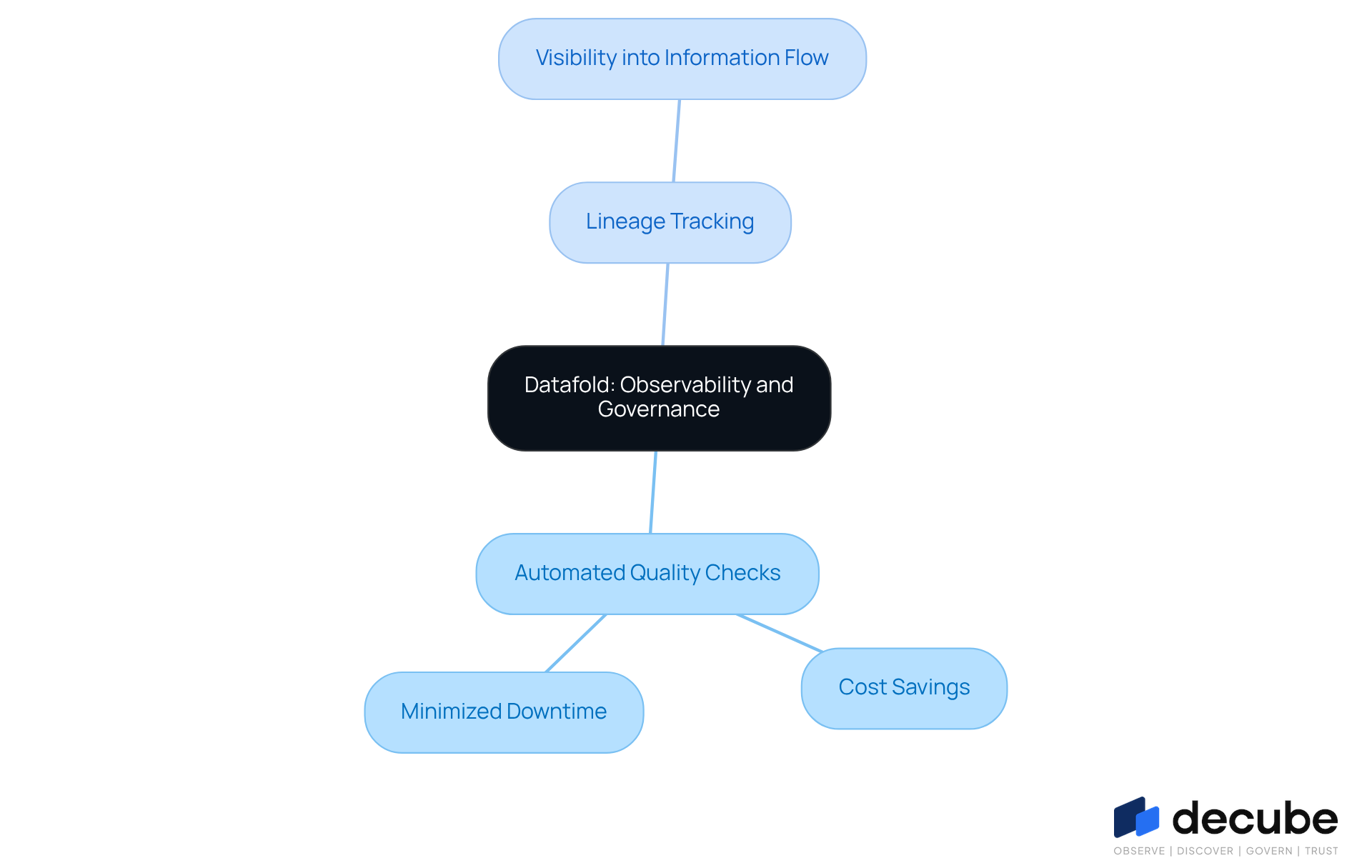

Datafold: Observability and Governance for Data Pipeline Integrity

Datafold is a platform that integrates observability and governance to uphold pipeline integrity. It offers automated checks, which are increasingly vital as organizations face an estimated annual cost of $12.9 million due to poor data quality. By implementing these checks, teams can detect and resolve issues early, minimizing downtime and improving the reliability of their pipelines.

Moreover, the platform gives teams the visibility they need to trace upstream and downstream relationships. This is particularly important given that 66% of banks encounter challenges related to data quality and integrity. Datafold is accessible for teams aiming to streamline their data management processes, which positions it as a useful tool in the evolving observability landscape.
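Tracing upstream and downstream relationships amounts to walking a dependency graph. The sketch below shows that idea in plain Python; it is not Datafold's implementation, and the `LINEAGE` graph, the table names, and the function names are assumptions made for illustration.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the tables listed as its value.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "raw.customers": ["staging.customers"],
    "staging.orders": ["marts.revenue"],
    "staging.customers": ["marts.revenue", "marts.churn"],
    "marts.revenue": [],
    "marts.churn": [],
}


def downstream(graph, start):
    """Breadth-first walk returning every node reachable from `start`."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(graph, start):
    """Reverse the edges, then reuse the same walk to find all ancestors."""
    reversed_graph = {}
    for parent, children in graph.items():
        for child in children:
            reversed_graph.setdefault(child, []).append(parent)
    return downstream(reversed_graph, start)


print(sorted(downstream(LINEAGE, "raw.customers")))
print(sorted(upstream(LINEAGE, "marts.revenue")))
```

The downstream set answers "what breaks if this table is wrong?", while the upstream set answers "where could a bad value in this table have come from?".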

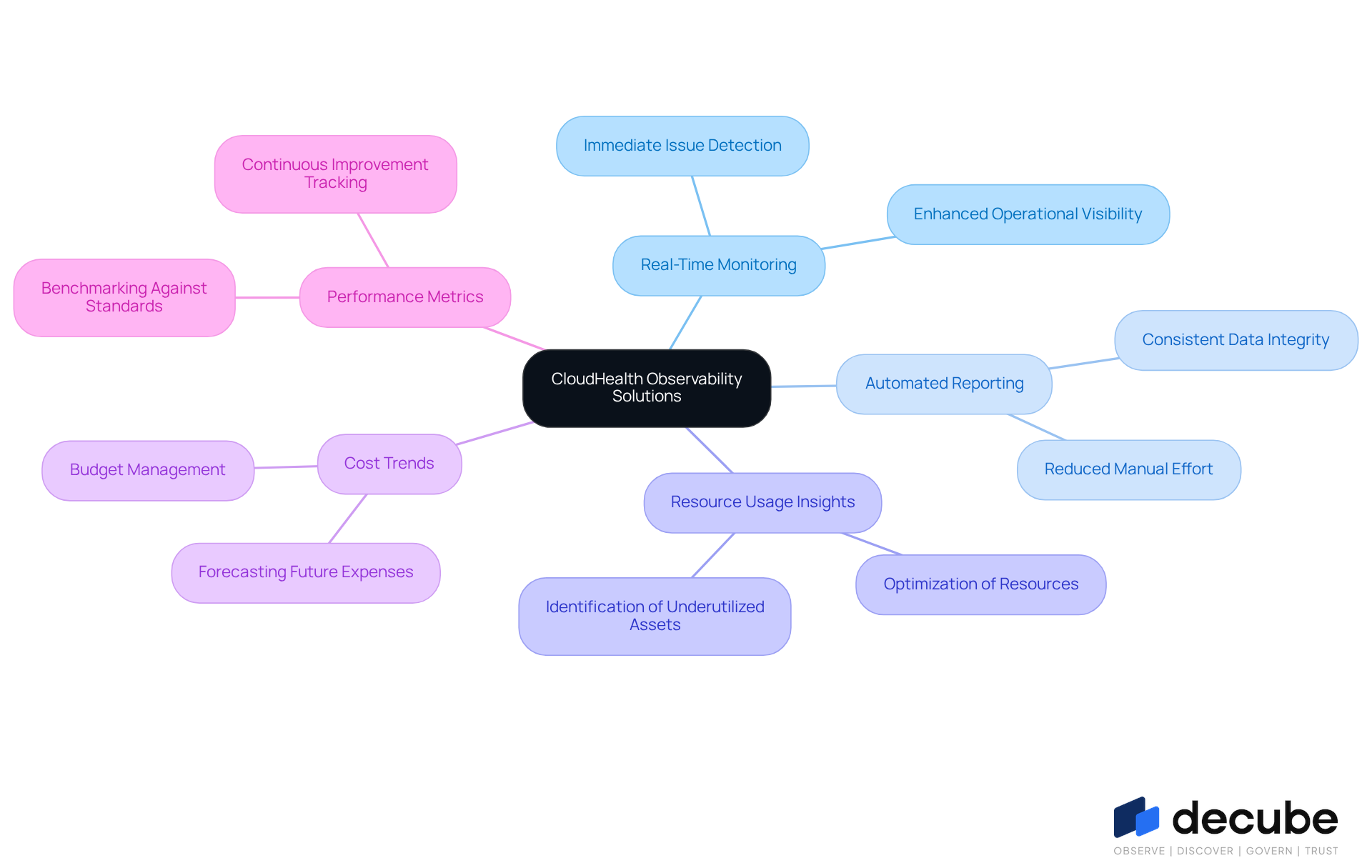

CloudHealth: Observability Solutions for Cloud-Based Data Pipelines

CloudHealth offers observability solutions tailored for cloud-based data pipelines, aggregating and analyzing insights across diverse cloud environments so that organizations can optimize their cloud resources efficiently.

Key features include automated reporting, which helps ensure data integrity while adhering to industry standards. As organizations increasingly adopt hybrid and multi-cloud strategies, CloudHealth's capabilities become important for maintaining performance and cost-efficiency in data management.

Talend: Data Integration and Observability for Enhanced Pipeline Performance

Talend is an integration platform that combines data integration with observability features, enabling organizations to integrate, transform, and govern data across various environments. Its integrated monitoring tools help teams maintain the performance and reliability of their pipelines. Given that organizations face an average of 67 data incidents per month, proactively monitoring and addressing these issues is essential for operational efficiency.

Observability in data integration matters all the more as the integration market is projected to reach approximately $30.3 billion by 2030. This anticipated growth signals a transition towards advanced ETL practices that unify governance and accommodate both batch and streaming data processes. Real-world examples, such as Groupon's implementation of Talend Data Integration, illustrate how companies can enhance their analytics capabilities, leading to improved decision-making and operational performance.

Current trends point to a growing emphasis on reducing the time required to turn source data into actionable insights. As organizations prioritize pipeline modernization, tools like Talend help mitigate the hidden costs of poor data quality, which Gartner estimates at around $15 million per company annually. By embedding governance rules directly into ETL processes, organizations can also bolster compliance.
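As a rough illustration of embedding a governance rule directly in an ETL transform step, consider the following plain-Python sketch. It does not use Talend or any of its components; the `POLICY` mapping, the column names, and the rule names `hash` and `drop` are hypothetical.

```python
import hashlib

# Hypothetical policy: column name -> rule applied during the transform step.
POLICY = {"email": "hash", "ssn": "drop"}


def apply_policy(row, policy):
    """Apply per-column governance rules to one record."""
    out = {}
    for column, value in row.items():
        rule = policy.get(column)
        if rule == "drop":
            continue  # restricted column never reaches the target
        if rule == "hash" and value is not None:
            value = hashlib.sha256(str(value).encode("utf-8")).hexdigest()
        out[column] = value
    return out


def transform(rows, policy=POLICY):
    """Transform step of an extract-transform-load job, with governance applied."""
    return [apply_policy(row, policy) for row in rows]


# Hypothetical extracted record.
source_rows = [{"id": 1, "email": "a@example.com", "ssn": "000-00-0000", "plan": "pro"}]
loaded = transform(source_rows)
print(sorted(loaded[0].keys()))  # → ['email', 'id', 'plan']
```

Because the rule runs inside the transform, no downstream table can receive the restricted columns by accident. An unsalted hash is used here only for brevity and would not be sufficient protection for real personal data.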

Databricks: Observability Solutions for Data Lakes and Analytics

Databricks provides observability solutions tailored for data lakes and analytics, and its monitoring features help teams uphold data quality. As organizations increasingly rely on analytics, keeping data trustworthy and actionable becomes paramount. Current trends indicate that effective monitoring not only enhances data quality but also supports compliance initiatives, making observability a critical component of modern analytics strategies. Success stories across various sectors illustrate how Databricks' features have led to notable improvements in reliability and operational efficiency.

Informatica: Data Observability for Compliance and Monitoring

Informatica offers data observability solutions that emphasize compliance and monitoring, which are crucial for navigating the increasingly complex regulatory landscape of 2026. The platform is aimed at organizations striving to meet stringent regulatory requirements. Through automation, Informatica helps them maintain high data quality and safeguard their assets. This capability becomes particularly important as regulatory bodies intensify their scrutiny, making observability not merely a best practice but an essential requirement for compliance.

Current trends highlight a growing focus on integrating observability within governance frameworks, so that organizations can demonstrate accountability and transparency in their data handling processes. Organizations that adopt these solutions position themselves not only to comply with regulations but also to improve their overall data management strategies.

Apache Airflow: Workflow Automation and Observability for Data Pipelines

Decube is a unified data trust platform that enhances observability and governance within modern data stacks. Unlike traditional tools such as Apache Airflow, which primarily focus on workflow automation, Decube offers a suite of features that help data engineers maintain trust throughout the data pipeline. Its lineage mapping lets teams track data across various components, supporting transparency and collaboration.

Intelligent alerts and tailored monitoring, including custom SQL tests, provide mechanisms for the early detection of issues. This proactive approach reduces the need for manual troubleshooting, allowing teams to focus on innovation rather than crisis management. Furthermore, Decube's automated crawling feature keeps crawled assets consistently updated, which supports governance and access control.

By integrating these features, Decube streamlines data operations and nurtures a culture of trust, positioning itself as a strong option for enterprises aiming to elevate their analytics and governance.
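A custom SQL test of the kind mentioned above is commonly expressed as a query that returns the offending rows, so that an empty result means the test passes. The sketch below illustrates that convention with Python's built-in `sqlite3` module; it is not Decube's or Airflow's API, and the `payments` table, the test names, and `run_sql_test` are assumptions.

```python
import sqlite3


def run_sql_test(conn, name, query):
    """A test passes when its query returns zero offending rows."""
    offending = conn.execute(query).fetchall()
    return {"test": name, "passed": len(offending) == 0, "offending_rows": offending}


# Hypothetical in-memory table with one negative amount and one missing currency.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE payments (id INTEGER, amount REAL, currency TEXT)")
conn.executemany(
    "INSERT INTO payments VALUES (?, ?, ?)",
    [(1, 19.99, "USD"), (2, -4.00, "USD"), (3, 7.50, None)],
)

tests = {
    "no negative amounts": "SELECT id FROM payments WHERE amount < 0",
    "currency is populated": "SELECT id FROM payments WHERE currency IS NULL",
    "ids are unique": "SELECT id FROM payments GROUP BY id HAVING COUNT(*) > 1",
}

results = [run_sql_test(conn, name, query) for name, query in tests.items()]
for result in results:
    print(result)
```

Returning the offending rows rather than a bare pass/fail flag gives whoever receives the alert a starting point for troubleshooting.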

Looker: User-Friendly Data Observability for Business Intelligence

Looker is a prominent business intelligence platform that integrates data observability, supporting insight-driven decision-making. Through its analytics, Looker enables organizations to visualize and understand their data clearly, and its automated reporting helps teams make informed decisions.

Analytics platforms in 2026 are projected to offer five times faster implementation than previous solutions, and Looker is a strong candidate for organizations aiming to leverage analytics for strategic advantage.

Conclusion

The exploration of essential data pipeline observability solutions reveals a critical landscape for engineers aiming to enhance data integrity and governance. As organizations increasingly depend on accurate and timely information, the significance of robust observability tools is paramount. These solutions facilitate real-time monitoring and anomaly detection, empowering teams to uphold high standards of data quality, which ultimately drives informed decision-making.

Throughout this discussion, key players such as Decube, Monte Carlo, Great Expectations, and others have been highlighted for their distinctive features that address the growing complexity of data management. From Decube's comprehensive data trust platform to Monte Carlo's automated alerts, each solution provides specific functionalities that contribute to effective governance and observability. The financial implications of implementing these tools are substantial, with organizations reporting reduced breach costs and enhanced operational efficiency as a result of prioritizing data integrity.

In an era where data is increasingly recognized as a strategic asset, leveraging these observability solutions is not merely advantageous but essential. Organizations are urged to adopt these tools to ensure compliance with regulatory standards and to cultivate a culture of trust and transparency in their data practices. By investing in advanced observability solutions, businesses can navigate the complexities of the data landscape with confidence, thereby enhancing their competitive edge in an ever-evolving market.

Frequently Asked Questions

What is Decube and what does it offer?

Decube is a comprehensive data trust platform designed for the AI era, providing integrated solutions for data observability, discovery, and governance. Its core offerings include data observability, cataloging, governance, and data products.

What are some key features of Decube?

Key features of Decube include column-level lineage mapping, automated governance with policy management, and machine learning-powered anomaly detection. These features help organizations maintain high standards of quality and trust in their data.

How does Decube support engineers and AI/ML professionals?

Decube offers tools like Decube CoPilot, which supports custom data validation and real-time monitoring, making it a valuable resource for engineers and AI/ML professionals.

What do user testimonials say about Decube?

User testimonials highlight Decube's intuitive design and effectiveness in maintaining trust in data. Users appreciate its ability to streamline collaboration among teams and enhance data monitoring, which facilitates early problem identification.

Why is governance important in data management?

In 2024, over 65% of data leaders prioritized governance, recognizing its vital role in improving quality and compliance. Robust governance structures are increasingly necessary due to growing data complexity and regulatory demands.

What financial benefits are associated with effective governance frameworks?

Case studies show that organizations implementing effective governance frameworks experience up to 45% lower breach costs, emphasizing the financial advantages of prioritizing data integrity.

What is the projected market size for data observability by 2030?

The data observability market is projected to reach $4.73 billion by 2030, indicating a growing demand for comprehensive data management solutions like Decube.

What distinguishes Monte Carlo from other data observability platforms?

Monte Carlo is known for its data observability features, particularly real-time monitoring and anomaly detection, which automate the identification of quality issues and help teams address problems swiftly.

What functionalities does Monte Carlo provide?

Monte Carlo offers automated alerts and intuitive dashboards that help maintain the integrity of data pipelines, ensuring that data remains trustworthy and useful for decision-making.

What is Great Expectations and its purpose?

Great Expectations is an open-source framework designed to ensure data integrity through validation and profiling mechanisms, allowing teams to define expectations for datasets, automate testing, and document workflows.

How does Great Expectations integrate with existing data pipelines?

Great Expectations integrates with existing data pipelines, enabling organizations to uphold consistent quality standards, which makes it a valuable tool for engineers focused on reliability and precision.
