10 Essential Data Schema Types Every Data Engineer Should Know

Explore essential data schema types for effective data engineering and governance.

Introduction

In the rapidly evolving landscape of data engineering, understanding various data schema types is essential for professionals aiming to optimize information management. Each schema offers unique advantages, enhancing data integrity and facilitating complex relationship modeling. This enables engineers to streamline processes and improve operational efficiency. However, with a multitude of schema options available, data engineers must discern which types are most crucial for their specific needs. This article explores ten essential data schema types that every data engineer should be familiar with, providing insights into their applications, benefits, and best practices for implementation.

Decube: Data Observability and Governance Schema

Decube's data observability and governance framework is designed to uphold integrity and reliability throughout the data lifecycle. By employing machine learning techniques, Decube facilitates real-time monitoring and anomaly detection, enabling engineers to swiftly identify and rectify issues. The framework takes a unified approach, integrating observability and governance into a cohesive system.

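Key features, such as automated crawling for seamless metadata management and column-level lineage mapping, help organizations support compliance with standards and regulations, including SOC 2 and GDPR. Understanding how these frameworks function within the Decube ecosystem is crucial for engineers aiming to enhance data governance and operational effectiveness in 2026.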

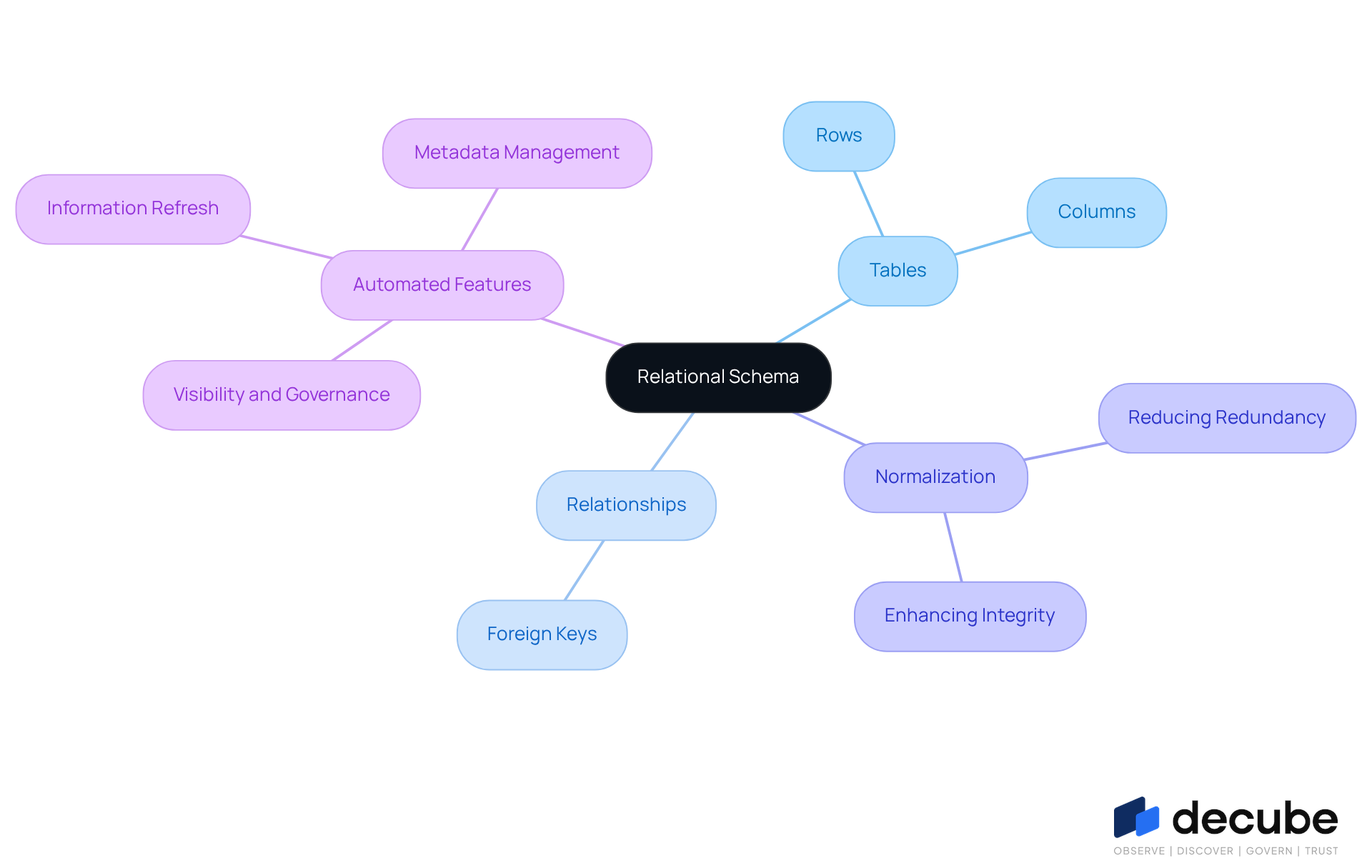

Relational Schema: Organizing Data into Tables

A relational schema organizes data into tables consisting of rows and columns. This arrangement facilitates efficient retrieval and manipulation of data through SQL queries. Each table represents an entity, and relationships between tables are established via foreign keys. Understanding relational schemas is crucial for data engineers, who must represent each entity accurately and maintain the integrity of the relationships between them. This schema type supports normalization, which reduces redundancy and enhances data integrity, making it a preferred choice for a wide range of applications.
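As a minimal sketch of the idea, the following uses Python's built-in `sqlite3` module to create two related tables and join them. The `customers` and `orders` tables and their columns are illustrative assumptions, not part of any particular system.

```python
import sqlite3

# In-memory database; table and column names are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL
);
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
    total       REAL NOT NULL
);
""")

conn.execute("INSERT INTO customers VALUES (1, 'Ada')")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", [(10, 1, 25.0), (11, 1, 40.0)])

# Join the two entities through the foreign key and aggregate per customer.
rows = conn.execute("""
    SELECT c.name, COUNT(o.order_id), SUM(o.total)
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.customer_id
    GROUP BY c.customer_id
""").fetchall()

# An order that points at a non-existent customer violates the foreign key.
try:
    conn.execute("INSERT INTO orders VALUES (12, 99, 5.0)")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True
```

Here the foreign key is what keeps the relationship consistent: the database itself refuses an order whose customer does not exist.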

Decube's automated crawling feature supports this framework by simplifying metadata management. It automatically refreshes data sources without manual updates, keeping the relational schema accurate and current. This capability also integrates with stakeholder agreements, fostering collaboration. As a result, engineers can innovate while maintaining high standards of data quality and governance.

Hierarchical Schema: Tree-Like Data Structure

A hierarchical schema organizes data in a tree-like structure, where each record has a single parent and can have multiple children. This model is particularly effective for representing organizational structures, file systems, and other data that naturally fits into a hierarchy. For instance, many companies use a hierarchical schema to manage employee information, which makes reporting lines and departmental organization clear. Computer file systems often adopt this structure as well, since it supports efficient organization and management of files.
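One common way to store such a tree in a relational engine is an adjacency list, where each row points at its parent. The sketch below assumes a hypothetical `employees` table and walks the reporting lines with a recursive query in SQLite; it is one possible representation, not the only one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE employees (
        employee_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        manager_id  INTEGER REFERENCES employees(employee_id)  -- NULL marks the root
    )
""")
conn.executemany(
    "INSERT INTO employees VALUES (?, ?, ?)",
    [(1, "CEO", None), (2, "VP Engineering", 1), (3, "VP Sales", 1), (4, "Data Engineer", 2)],
)

# Start at the root and repeatedly attach each employee's direct reports.
reporting_lines = conn.execute("""
    WITH RECURSIVE chain(employee_id, name, depth) AS (
        SELECT employee_id, name, 0 FROM employees WHERE manager_id IS NULL
        UNION ALL
        SELECT e.employee_id, e.name, chain.depth + 1
        FROM employees AS e
        JOIN chain ON e.manager_id = chain.employee_id
    )
    SELECT name, depth FROM chain ORDER BY depth, employee_id
""").fetchall()
```

Each row has exactly one `manager_id`, which is what makes the structure a tree rather than a general graph.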

With Decube's automated crawling capability, data specialists can manage and consistently update metadata, thereby enhancing observability and governance. The platform's lineage visualization, including automated column-level lineage, allows professionals to monitor the complete flow of data across components. This capability is essential for understanding complex relationships within hierarchical structures.

Engineers must become proficient in implementing and querying hierarchical structures, as these can significantly simplify complex relationships and improve retrieval times. However, this model can be inflexible, particularly when relationships in the data change. To address these challenges, best practices for implementing hierarchical data structures in 2026 include:

- Regularly reviewing and updating the schema to reflect organizational changes.

- Utilizing indexing strategies to optimize query performance.

- Ensuring data integrity through robust validation processes.
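The validation practice above can be sketched as a small check that a parent mapping really forms a tree. The function name `validate_hierarchy` and the sample data are assumptions made for illustration; a production check would likely run inside the database or the pipeline instead.

```python
from typing import Dict, List, Optional

def validate_hierarchy(parent_of: Dict[str, Optional[str]]) -> List[str]:
    """Return a list of problems found; an empty list means the tree is valid."""
    problems = []

    # A tree has exactly one root (a node without a parent).
    roots = [node for node, parent in parent_of.items() if parent is None]
    if len(roots) != 1:
        problems.append(f"expected exactly one root, found {len(roots)}")

    # Every referenced parent must itself be a known node.
    for node, parent in parent_of.items():
        if parent is not None and parent not in parent_of:
            problems.append(f"{node}: unknown parent {parent}")

    # Walking upward from any node must never revisit a node.
    for node in parent_of:
        seen = set()
        current = node
        while current is not None and current in parent_of:
            if current in seen:
                problems.append(f"{node}: cycle detected")
                break
            seen.add(current)
            current = parent_of[current]
    return problems

valid_tree = {"root": None, "engineering": "root", "data-team": "engineering"}
broken_tree = {"a": "b", "b": "a"}
```

Because the mapping stores one parent per node, the single-parent rule holds by construction; the checks cover the remaining ways a hierarchy can go wrong.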

By adhering to these practices and leveraging Decube's features, including its unified information trust platform, engineers can enhance quality and governance, ultimately improving management efficiency and supporting informed decision-making across their organizations.

Star Schema: Simplifying Data Warehousing

The star schema is a pivotal modeling technique in data warehousing, characterized by a central fact table linked to multiple dimension tables. This design simplifies queries and enhances performance by reducing the number of joins required, which is vital for efficient analytical workloads. Data engineers appreciate star schemas for their intuitive layout, which mirrors the way business users think about data. By structuring data this way, organizations can improve query performance, enabling quicker access and more effective reporting.

For instance, a retail company using a star schema can rapidly analyze sales trends across dimensions such as time and product category, supporting timely decision-making. The star schema also lets companies extract actionable insights with minimal processing effort. Designed to manage large datasets efficiently, it is suitable for organizations of all sizes. In summary, the star schema remains a favored choice for analytical applications because of these performance benefits.
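The retail example can be sketched with one fact table and two dimension tables. The table names (`fact_sales`, `dim_date`, `dim_product`) follow a common naming habit but are assumptions here, as is the sample data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_date    (date_id INTEGER PRIMARY KEY, year INTEGER, month INTEGER);
CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE fact_sales (
    date_id    INTEGER REFERENCES dim_date(date_id),
    product_id INTEGER REFERENCES dim_product(product_id),
    quantity   INTEGER,
    revenue    REAL
);
INSERT INTO dim_date VALUES (1, 2026, 1), (2, 2026, 2);
INSERT INTO dim_product VALUES (1, 'Laptop', 'Electronics'), (2, 'Desk', 'Furniture');
INSERT INTO fact_sales VALUES (1, 1, 2, 2000.0), (1, 2, 1, 300.0), (2, 1, 1, 1000.0);
""")

# Every dimension is exactly one join away from the fact table.
sales_by_month_and_category = conn.execute("""
    SELECT d.year, d.month, p.category, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_date AS d ON d.date_id = f.date_id
    JOIN dim_product AS p ON p.product_id = f.product_id
    GROUP BY d.year, d.month, p.category
    ORDER BY d.year, d.month, p.category
""").fetchall()
```

Note that `category` is stored directly on `dim_product`. That denormalization is what keeps the join count low.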

Snowflake Schema: Normalized Data Organization

A snowflake schema is a more complex variant of the star schema in which dimension tables are normalized into multiple interconnected tables. This design minimizes redundancy and enhances data integrity by ensuring that each item is stored only once. While snowflake schemas may lead to more complex queries because of the additional joins, they offer advantages for organizations that prioritize accuracy and consistency.
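To make the trade-off concrete, the sketch below moves the product category into its own table, so each category name is stored once and the query needs an extra join. Table names and data are illustrative assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_category (category_id INTEGER PRIMARY KEY, category_name TEXT);
CREATE TABLE dim_product (
    product_id  INTEGER PRIMARY KEY,
    name        TEXT,
    category_id INTEGER REFERENCES dim_category(category_id)
);
CREATE TABLE fact_sales (
    product_id INTEGER REFERENCES dim_product(product_id),
    revenue    REAL
);
INSERT INTO dim_category VALUES (1, 'Electronics'), (2, 'Furniture');
INSERT INTO dim_product VALUES (1, 'Laptop', 1), (2, 'Phone', 1), (3, 'Desk', 2);
INSERT INTO fact_sales VALUES (1, 1000.0), (2, 500.0), (3, 300.0);
""")

# Reaching the category now takes two joins: fact -> product -> category.
revenue_by_category = conn.execute("""
    SELECT c.category_name, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    JOIN dim_category AS c ON c.category_id = p.category_id
    GROUP BY c.category_name
    ORDER BY c.category_name
""").fetchall()
```

Renaming a category now means updating a single row in `dim_category`, at the cost of the additional join in every query that needs the name.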

Data warehousing professionals must have the skills to design and query snowflake schemas effectively in order to realize these benefits.

Graph Schema: Representing Complex Relationships

A graph schema represents data through nodes (entities) and edges (connections), making it an ideal model for complex relationships. This structure is particularly advantageous in applications such as:

- Social networks

- Recommendation systems

- Fraud detection

Engineers must understand how to design and query graph schemas effectively, as these facilitate flexible and efficient data retrieval. By using graph databases like Neo4j and TigerGraph, organizations can derive deeper insights from their data and identify hidden patterns that may not be evident in traditional relational models. As of 2026, graph databases continue to evolve, with experts noting their increasing recognition as AI infrastructure.
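The node-and-edge idea can be illustrated without a graph database. The sketch below is plain Python, not the Neo4j or TigerGraph API, and the tiny social graph is invented for the example. It ranks friend recommendations by the number of shared friends.

```python
from collections import Counter

# Edges of a tiny, made-up social graph: each pair is a mutual "friend" relationship.
edges = [("ana", "ben"), ("ana", "cy"), ("ben", "dee"), ("cy", "dee"), ("dee", "eli")]

# Build an adjacency map: node -> set of directly connected nodes.
adjacency = {}
for a, b in edges:
    adjacency.setdefault(a, set()).add(b)
    adjacency.setdefault(b, set()).add(a)

def recommend_friends(person):
    """Rank non-friends by the number of friends they share with `person`."""
    direct = adjacency.get(person, set())
    counts = Counter()
    for friend in direct:
        for candidate in adjacency[friend]:
            if candidate != person and candidate not in direct:
                counts[candidate] += 1
    return counts.most_common()

suggestions = recommend_friends("ana")  # [("dee", 2)]: dee shares two friends with ana
```

A query like this follows edges two hops out from a node, which is the kind of traversal that becomes awkward to express as relational joins as the hop count grows.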

Document Schema: Handling Unstructured Data

Document schemas are crucial for managing unstructured data, particularly in formats like JSON and XML. They allow flexible data representation, accommodating diverse structures without the constraints of a fixed schema. As organizations increasingly rely on unstructured data for actionable insights, engineers need to master the implementation and management of these schemas. This expertise is vital for the effective storage, retrieval, and analysis of such data, and for keeping systems robust and adaptable to changing requirements.

In 2026, flexible schemas are especially important. With the proliferation of data sources and formats, the capacity to adapt to evolving structures is essential. Best practices for data schemas in JSON and XML documents include:

- Maintaining comprehensive documentation

- Ensuring

- Implementing version control to manage changes over time
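One way to combine flexible documents with version control is to store a version number in each document and validate against the rules for that version. The sketch below uses only Python's `json` module; the `schema_version` field, the `REQUIRED_FIELDS` table, and the sample documents are hypothetical.

```python
import json

# Hypothetical required fields per schema version; not taken from any real system.
REQUIRED_FIELDS = {
    1: {"id": int, "name": str},
    2: {"id": int, "name": str, "tags": list},
}

def validate_document(raw):
    """Parse a JSON object and return a list of validation errors."""
    doc = json.loads(raw)
    version = doc.get("schema_version")
    if version not in REQUIRED_FIELDS:
        return [f"unknown schema_version: {version!r}"]
    errors = []
    for field, expected_type in REQUIRED_FIELDS[version].items():
        if field not in doc:
            errors.append(f"missing field: {field}")
        elif not isinstance(doc[field], expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")
    return errors

good = '{"schema_version": 2, "id": 7, "name": "sensor", "tags": ["lab"]}'
bad = '{"schema_version": 2, "id": "7", "name": "sensor"}'
```

Older documents stay valid under their own version, so the structure can evolve without rewriting everything already stored.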

Furthermore, current trends indicate a growing emphasis on the management of unstructured data, with organizations exploring tools such as Decube's preset field monitors and reconciliation features to incorporate and analyze this type of data. By adopting these practices, engineers can improve operational efficiency and support better decision-making across their organizations.

Object-Oriented Schema: Data as Objects

An object-oriented schema organizes data as objects, embodying the principles of object-oriented programming. This methodology allows for a natural representation of real-world entities and their interrelationships. Data engineers must master the design and implementation of object-oriented schemas to fully leverage their advantages, such as encapsulation and inheritance. By adopting these schemas, organizations can create more flexible and manageable models that accurately represent real-world scenarios.
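Encapsulation and inheritance can be sketched with Python dataclasses. The `Account` and `SavingsAccount` classes are invented for illustration and do not correspond to any specific object database.

```python
from dataclasses import dataclass, field

@dataclass
class Account:
    owner: str
    # Encapsulation: the balance is changed only through methods that validate input.
    _balance: float = field(default=0.0, repr=False)

    def deposit(self, amount):
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self._balance += amount

    @property
    def balance(self):
        return self._balance

@dataclass
class SavingsAccount(Account):
    # Inheritance: a savings account is an account with one extra attribute and behavior.
    interest_rate: float = 0.02

    def add_interest(self):
        self.deposit(self._balance * self.interest_rate)

savings = SavingsAccount("Ada", interest_rate=0.5)
savings.deposit(100.0)
savings.add_interest()  # balance is now 150.0
```

The subclass reuses the parent's data and validation rules, which is the main appeal of modeling data as objects.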

For instance, in 2026, companies are increasingly using object-oriented data modeling in their data management processes, which enables improved integration and flexibility in handling complex datasets.

Network Schema: Complex Relationship Management

Network schemas provide a robust framework for modeling complex relationships through a graph-like structure in which an entity can have multiple connections, including more than one parent. This structural type is crucial for applications with many interconnected entities, such as social networks and supply chain management.

For instance, in supply chains, network schemas facilitate the mapping of suppliers, manufacturers, and distributors, which supports logistics and inventory management. Data engineers must be proficient in creating and querying network schemas to navigate and manage these intricate relationships.
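The supply chain case can be sketched as a set of upstream/downstream links in which a node may have several parents. The partner names and the `all_upstream` helper are hypothetical, chosen only to show the shape of the data.

```python
# Each link is (upstream, downstream); names are made up for illustration.
links = [
    ("SupplierA", "Factory1"),
    ("SupplierB", "Factory1"),
    ("SupplierB", "Factory2"),
    ("Factory1", "DistributorX"),
    ("Factory2", "DistributorX"),
]

# Unlike a tree, a node here can have more than one parent.
upstream_of = {}
for upstream, downstream in links:
    upstream_of.setdefault(downstream, set()).add(upstream)

def all_upstream(node):
    """Collect every direct and indirect upstream partner of `node`."""
    found = set()
    stack = [node]
    while stack:
        current = stack.pop()
        for parent in upstream_of.get(current, set()):
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found

dependencies = all_upstream("DistributorX")
```

In this example `Factory1` has two suppliers and `SupplierB` feeds two factories, a many-to-many pattern that a strict hierarchy cannot express.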

Best practices for constructing network schemas in 2026 include:

- Ensuring scalability

- Maintaining comprehensive documentation

- Implementing robust querying capabilities to accommodate evolving information requirements

Entity-Relationship Schema: Conceptual Data Modeling

An entity-relationship (ER) diagram is a conceptual model that visually illustrates the entities within a database and their interrelationships. It is crucial for database design, as it helps data specialists understand how data is organized and how entities connect.

By employing ER diagrams, organizations can:

- Identify potential issues in relationships

- Ensure their databases are structured for optimal performance

Furthermore, a solid grasp of ER diagrams is vital for data engineers, as these diagrams lay the groundwork for efficient database design.
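To show how a conceptual model leads to a physical design, the sketch below describes two entities and a one-to-many relationship as plain Python data and derives `CREATE TABLE` statements from them. The `author` and `book` entities and the `to_ddl` helper are assumptions made for illustration; real modeling tools handle many more cases.

```python
import sqlite3

# A tiny, hypothetical ER model: entities with attributes, plus one-to-many relationships.
entities = {
    "author": ["name TEXT NOT NULL"],
    "book": ["title TEXT NOT NULL"],
}
# (parent, child): one parent row relates to many child rows.
one_to_many = [("author", "book")]

def to_ddl(entities, one_to_many):
    """Translate the conceptual model into CREATE TABLE statements."""
    columns = {
        name: [f"{name}_id INTEGER PRIMARY KEY", *attrs]
        for name, attrs in entities.items()
    }
    # A one-to-many relationship becomes a foreign key on the "many" side.
    for parent, child in one_to_many:
        columns[child].append(
            f"{parent}_id INTEGER NOT NULL REFERENCES {parent}({parent}_id)"
        )
    return [f"CREATE TABLE {name} ({', '.join(cols)})" for name, cols in columns.items()]

statements = to_ddl(entities, one_to_many)

# Check that the generated statements are valid SQL by running them.
conn = sqlite3.connect(":memory:")
for statement in statements:
    conn.execute(statement)
```

The relationship line in the diagram ends up as the `author_id` column on `book`, which is where a design problem such as a missing or misplaced relationship would become visible.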

Conclusion

Understanding the various data schema types is essential for any data engineer focused on optimizing data management and governance. Each schema - ranging from the hierarchical structure to the more complex graph schema - provides distinct advantages tailored to different data requirements and organizational frameworks. Mastering these schemas not only improves data integrity and retrieval efficiency but also empowers engineers to innovate and adapt to the ever-evolving data landscape.

This article explored key schema types, including relational, star, and snowflake schemas, emphasizing their roles in effectively organizing and managing data. Additionally, the significance of document and object-oriented schemas in handling unstructured data was discussed, alongside the importance of network and entity-relationship schemas in illustrating complex relationships and facilitating database design. By familiarizing themselves with these frameworks, data engineers can ensure robust data governance and operational effectiveness.

As data continues to grow exponentially, leveraging the appropriate schema type becomes increasingly crucial. Organizations must prioritize understanding and implementing these essential data schema types, as they form the backbone of effective data management strategies. By doing so, data engineers can enhance their operational capabilities and contribute to informed decision-making processes that drive organizational success.

Frequently Asked Questions

What is Decube's information observability and governance framework?

Decube's framework is designed to uphold integrity and reliability throughout the information lifecycle by employing advanced machine learning techniques for real-time monitoring and anomaly detection, enabling engineers to quickly identify and rectify issues.

What are the key features of Decube's framework?

Key features include automated crawling for seamless metadata management, column-level lineage mapping, and compliance support with industry standards such as SOC 2 and GDPR.

Why is understanding Decube's frameworks important for engineers?

Understanding how these frameworks function is crucial for engineers to enhance information governance and operational effectiveness.

How does a relational schema organize data?

A relational schema organizes information into tables consisting of rows and columns, facilitating efficient data retrieval and manipulation through SQL queries, with relationships established via foreign keys.

What advantages does a relational structure offer?

It supports normalization, reduces redundancy, enhances data integrity, and is a preferred choice for various applications.

How does Decube enhance the relational schema framework?

Decube's automated crawling feature ensures effortless management of metadata by automatically refreshing information sources, maintaining the precision and currency of the relational schema.

What is a hierarchical schema, and where is it commonly used?

A hierarchical schema organizes information in a tree-like structure where each record has a single parent and can have multiple offspring, commonly used for organizational structures and file systems.

How does Decube support hierarchical structures?

Decube's automated crawling capability allows information specialists to effectively manage and consistently update metadata, enhancing observability and governance.

What are some best practices for implementing hierarchical data structures?

Best practices include regularly reviewing and updating the schema, utilizing indexing strategies to optimize query performance, and ensuring information integrity through robust validation processes.

How can engineers benefit from using Decube's features?

Engineers can enhance quality and governance, improve management efficiency, and support informed decision-making across their organizations by leveraging Decube's unified information trust platform.
