
Master Data Validation Solutions for Reliable Data Pipelines

Discover effective data validation solutions to enhance accuracy and reliability in your data pipelines.

Introduction

In an era where data drives decision-making, the integrity of the data moving through pipelines is paramount. The volume and complexity of that data make effective validation difficult, yet the need is clear: robust data validation solutions improve data quality and reduce the risk that inaccurate information leads to costly errors in decision-making.

This article covers strategies and best practices for data validation: why it matters, which techniques are effective, which challenges are common, and how to put validation into practice. Data validation is not just a technical necessity; it safeguards the integrity of decision-making.

Understand the Importance of Data Validation in Modern Pipelines

Inaccurate data leads to misguided decisions, which makes validation a critical process. Data validation checks the accuracy, completeness, consistency, timeliness, validity, uniqueness, and relevance of data flowing through modern pipelines. As organizations depend more on data-driven decision-making, effective data validation solutions stop mistakes from propagating through the pipeline, which in turn avoids erroneous analytics and misguided business strategies.

Decube's automated crawling feature supports this process with low-effort metadata management, including auto-refreshing capabilities and secure access control through a designated approval flow. With automated oversight and analytics, companies can help ensure that their data handling practices comply with legal standards such as GDPR and HIPAA. Compliance protects organizations from fines and builds trust with customers and stakeholders. Strong data validation procedures, backed by Decube's capabilities, also strengthen data governance frameworks so that data assets remain valuable rather than becoming a burden.

For example, a financial services firm that implemented a continuous data validation framework reported a 30% decrease in data-related errors, which improved its operational efficiency and compliance posture. This shows how prioritizing data validation, aided by Decube's lineage visualization, can produce substantial business advantages. Poor data quality is also expensive: it can cost an organization almost $13 million each year on average, which highlights the financial consequences of insufficient validation. Investing in automated processes such as those provided by Decube can improve governance efficiency, and regular evaluations of governance practices are crucial for ongoing improvement. Ultimately, the cost of neglecting data validation can far exceed the investment in robust automated solutions like Decube.

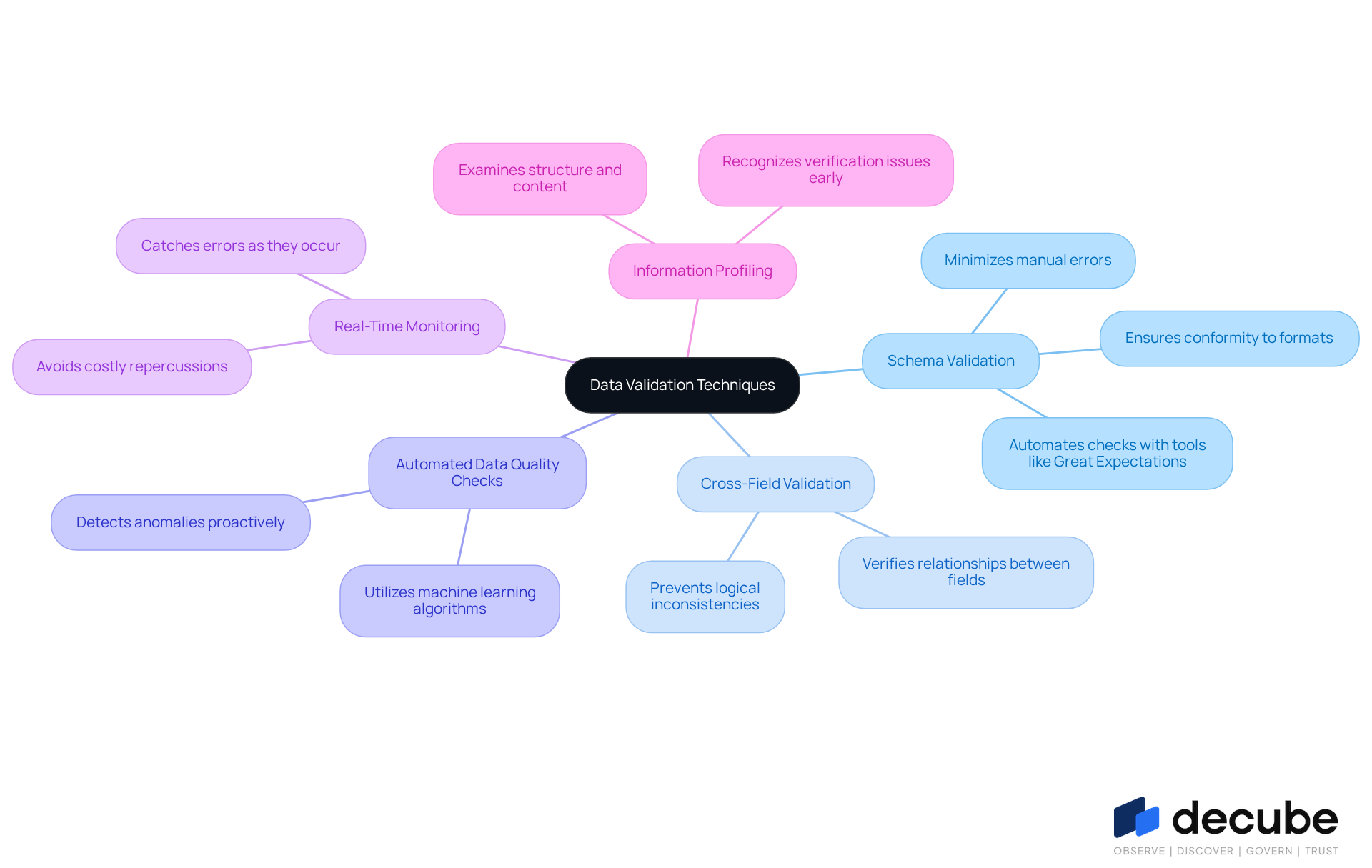

Explore Effective Data Validation Techniques for Enhanced Quality

To ensure high-quality data, organizations must adopt effective validation techniques that address potential pitfalls:

- Schema Validation: This technique ensures that information conforms to predefined formats and types, which is crucial for maintaining integrity. Tools like Great Expectations automate schema checks, significantly minimizing manual errors and improving overall information quality.

- Cross-Field Validation: This involves verifying the relationships between different fields within a dataset. For instance, ensuring that a 'start date' precedes an 'end date' helps prevent logical inconsistencies that could lead to erroneous analyses.

- Automated Data Quality Checks: Automated tools simplify validation. Machine learning algorithms can detect anomalous patterns in the data, enabling companies to identify issues proactively before they affect downstream processes.

- Real-Time Monitoring: Validating data in real time allows organizations to catch errors as they occur, rather than after the fact, which avoids costly repercussions and maintains data integrity.

- Data Profiling: Regularly examining data to understand its structure, content, and quality helps surface validation issues early. This practice not only improves data quality but also supports adherence to regulatory standards.
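Schema validation and cross-field validation can be sketched in a few lines of plain Python. This is a minimal illustration rather than how Great Expectations or Decube implement these checks; the `SCHEMA` mapping, the field names, and the helper functions are all hypothetical.

```python
from datetime import date

# Hypothetical schema: field name -> expected Python type.
SCHEMA = {
    "order_id": int,
    "customer_email": str,
    "start_date": date,
    "end_date": date,
}


def validate_schema(record, schema=SCHEMA):
    """Return a list of schema errors (missing fields or wrong types)."""
    errors = []
    for field, expected_type in schema.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    return errors


def validate_cross_fields(record):
    """Check a relationship between fields: start_date must precede end_date."""
    start, end = record.get("start_date"), record.get("end_date")
    # Compare only when both values are plain dates; type problems are
    # reported by validate_schema instead.
    if type(start) is date and type(end) is date and start >= end:
        return ["start_date must precede end_date"]
    return []


def validate_record(record):
    """Run schema checks first, then cross-field checks."""
    return validate_schema(record) + validate_cross_fields(record)


good = {
    "order_id": 1,
    "customer_email": "a@example.com",
    "start_date": date(2024, 1, 1),
    "end_date": date(2024, 1, 31),
}
bad = {
    "order_id": "1",  # wrong type: string instead of int
    "customer_email": "a@example.com",
    "start_date": date(2024, 2, 1),  # starts after it ends
    "end_date": date(2024, 1, 31),
}

print(validate_record(good))  # []
print(validate_record(bad))
```

Returning a list of error messages, rather than raising on the first failure, lets a pipeline report every problem in a record at once.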

Together, these methods form a strong data validation framework that improves data quality and organizational resilience, ultimately resulting in better decision-making and business outcomes.
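Data profiling and anomaly detection can also start small. The sketch below profiles a single column and flags outliers with a modified z-score based on the median absolute deviation. This is a simple statistical stand-in for the machine learning approaches mentioned above, not an ML model; the function names, the sample values, and the 3.5 threshold are assumptions chosen for illustration.

```python
import statistics


def profile_column(values):
    """Summarize one column: row count, null rate, distinct count, min, max."""
    present = [v for v in values if v is not None]
    return {
        "count": len(values),
        "null_rate": (len(values) - len(present)) / len(values) if values else 0.0,
        "distinct": len(set(present)),
        "min": min(present) if present else None,
        "max": max(present) if present else None,
    }


def flag_outliers(values, threshold=3.5):
    """Return values whose modified z-score exceeds the threshold.

    Uses the median and the median absolute deviation (MAD), which are less
    distorted by extreme values than the mean and standard deviation.
    """
    present = [v for v in values if v is not None]
    if len(present) < 3:
        return []  # too few points to judge
    median = statistics.median(present)
    mad = statistics.median(abs(v - median) for v in present)
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in present if 0.6745 * abs(v - median) / mad > threshold]


# Hypothetical transaction amounts with one missing value and one extreme value.
amounts = [102.0, 98.5, 101.2, None, 99.9, 100.4, 980.0]

print(profile_column(amounts))
print(flag_outliers(amounts))  # [980.0]
```

A profile like this, captured on every run, gives a baseline against which later changes in null rate or value range can be compared.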

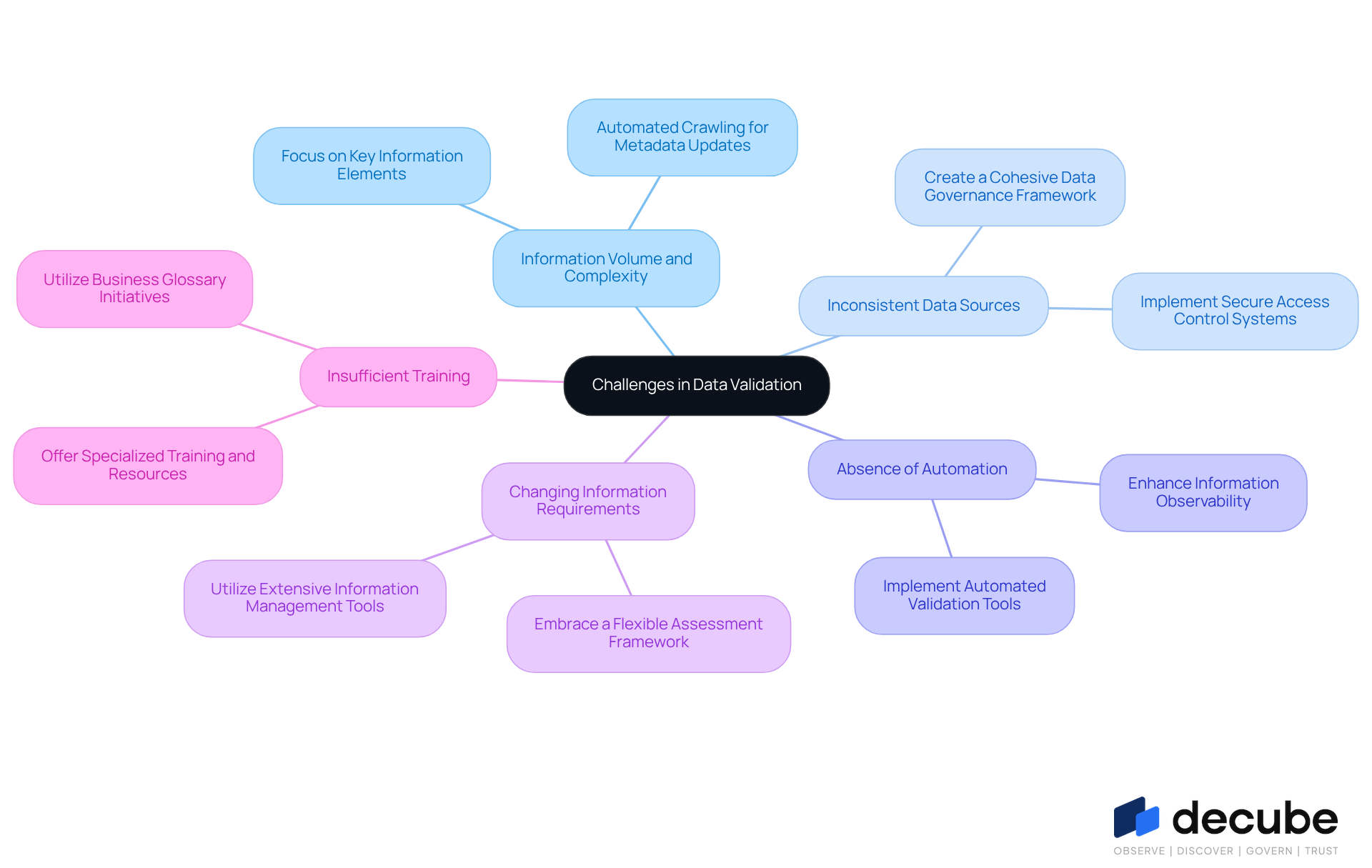

Identify and Overcome Common Challenges in Data Validation

As data volumes surge and data grows more complex, organizations face mounting validation challenges:

- Information Volume and Complexity: With the global information volume anticipated to reach 181 zettabytes by the end of 2025, verifying every piece of information becomes a daunting task. Organizations should focus their verification efforts on key information elements that significantly impact business outcomes. Decube's automated crawling feature ensures that metadata is consistently updated, enhancing the relevance of the information.

- Inconsistent Data Sources: Data obtained from multiple platforms frequently arrives in varying formats and standards, complicating verification efforts. Creating a cohesive data governance framework can standardize inputs, making the verification task more manageable. Decube improves this procedure by offering a secure access control system, enabling organizations to manage who can view or edit information effectively.

- Absence of Automation: Manual checks are not only time-consuming but also prone to human error. Implementing automated validation tools, such as those offered by Decube, can streamline these processes, significantly reducing the risk of inaccuracies. This automation not only improves efficiency but also enhances information observability, allowing teams to monitor quality continuously.

- Changing Information Requirements: As business needs evolve, so do information requirements. Organizations should embrace a flexible assessment framework that can adjust to these changes, ensuring that information quality is preserved over time. Decube's solutions facilitate this adaptability by offering extensive information management tools that evolve with organizational needs.

- Insufficient Training: Teams may lack the necessary skills to implement effective validation strategies. Offering specialized training and resources can enable teams to take responsibility for quality initiatives. By utilizing Decube's business glossary initiative, teams can deepen their comprehension of governance and enhance collaboration across departments.

Addressing these challenges head-on improves validation processes and, with them, the quality and reliability of the data.
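The inconsistent-sources challenge often comes down to normalizing values into one standard form before validating them. As a minimal sketch, assuming dates arrive in a small set of known formats, the hypothetical `normalize_date` helper below converts each one to ISO 8601 and returns `None` when nothing matches.

```python
from datetime import datetime

# Hypothetical formats seen across source systems, tried in order.
# Note: "%d/%m/%Y" assumes day-first dates; a month-first source would
# need its own mapping, because values like 03/04/2024 are ambiguous.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def normalize_date(raw):
    """Return the date as an ISO 8601 string, or None if no format matches."""
    text = raw.strip()
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next known format
    return None


for value in ("2024-03-05", "05/03/2024", "20240305", "March 5"):
    print(value, "->", normalize_date(value))
```

Values that come back as `None` can be routed to review instead of being silently dropped or guessed at.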

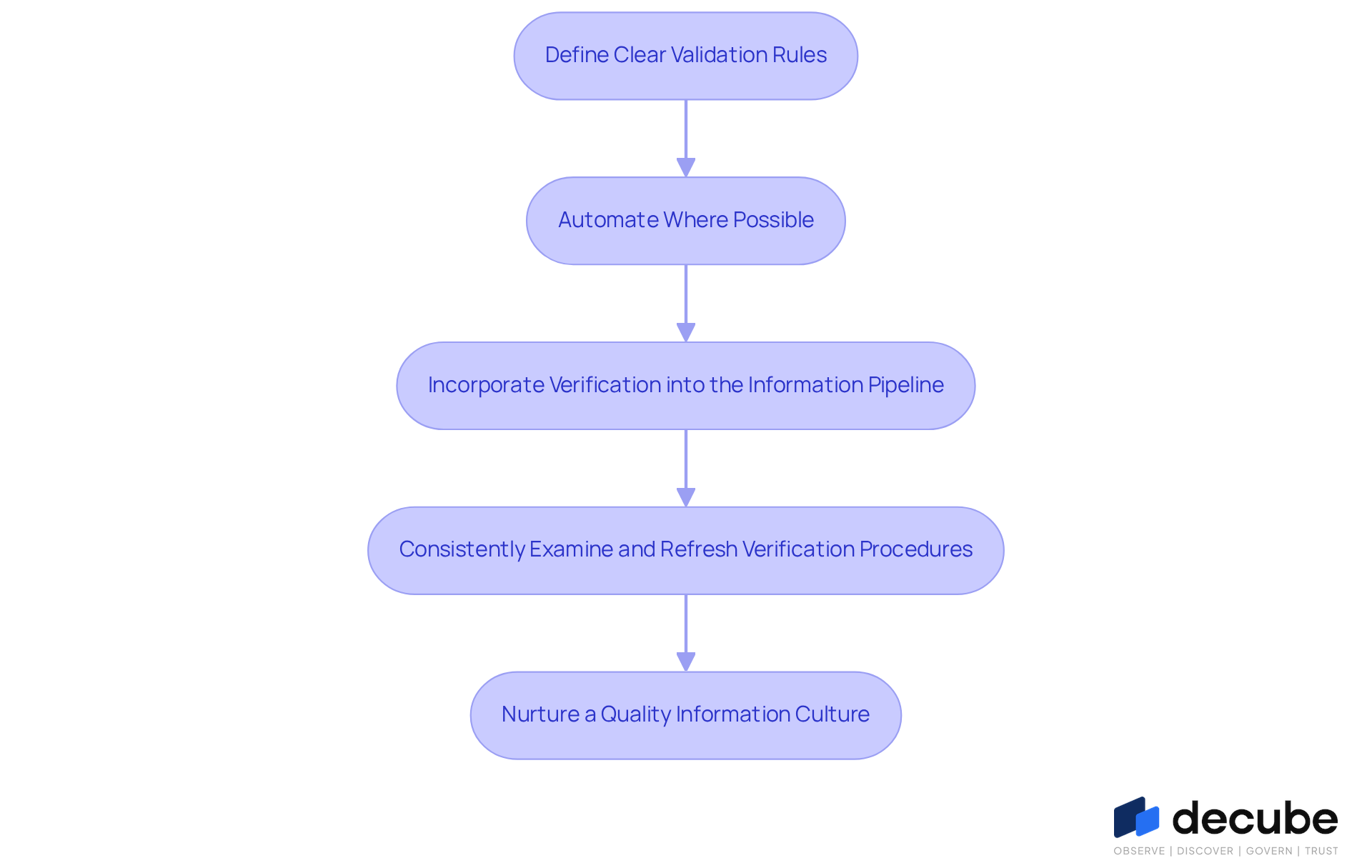

Implement Best Practices for Successful Data Validation Solutions

To ensure data integrity, organizations need to implement effective data validation solutions.

- Define Clear Validation Rules: Establish specific criteria for what constitutes valid data. This clarity helps teams understand expectations and reduces uncertainty in the assessment process. For instance, organizations can implement rules that ensure customer age falls within a specified range, such as between 18 and 100, to prevent extreme values from distorting reports.

- Automate Where Possible: Use automation tools to manage repetitive validation tasks. Automated validation can handle thousands of records in seconds, significantly improving efficiency and minimizing human error. For instance, automated systems can check for missing fields or incorrect formatting in real time, ensuring accuracy as data enters the system.

- Incorporate Validation into the Data Pipeline: Embed validation checks within the pipeline so that issues are monitored and corrected in real time as they arise. This proactive strategy stops new errors from entering downstream systems and improves quality promptly. Without it, errors can compromise data integrity.

- Regularly Review and Update Validation Procedures: As data requirements change, so should validation procedures. Frequent reviews keep validation relevant and efficient as data contexts and organizational objectives evolve. Ongoing monitoring and improvement of validation systems are essential to uphold high standards of data integrity.

- Nurture a Data Quality Culture: Promote a culture that values data quality throughout the organization. This can be accomplished through training, awareness initiatives, and recognition of teams that excel in upholding data integrity. Such a culture strengthens accountability among teams and ultimately improves data quality; without it, accountability diminishes and data errors increase.
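The first three practices (clear rules, automation, and checks embedded in the pipeline) can be combined in a small validation gate. The sketch below is one possible shape, not a prescribed design: the `RULES` list, the field names, and the quarantine structure are hypothetical, and the email pattern is deliberately simplified.

```python
import re

# Simplified email pattern for illustration; not a full RFC 5322 check.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical rule set: each rule is (description, predicate on a record).
RULES = [
    ("customer_id is required",
     lambda r: r.get("customer_id") not in (None, "")),
    ("age must be an integer between 18 and 100",
     lambda r: isinstance(r.get("age"), int) and 18 <= r["age"] <= 100),
    ("email must look like an address",
     lambda r: isinstance(r.get("email"), str)
     and bool(EMAIL_PATTERN.match(r["email"]))),
]


def failed_rules(record):
    """Return the descriptions of every rule the record violates."""
    return [description for description, check in RULES if not check(record)]


def validation_gate(records):
    """Split records into (valid, quarantined) before they are loaded."""
    valid, quarantined = [], []
    for record in records:
        failures = failed_rules(record)
        if failures:
            quarantined.append({"record": record, "failures": failures})
        else:
            valid.append(record)
    return valid, quarantined


incoming = [
    {"customer_id": "C-1", "age": 34, "email": "ana@example.com"},
    {"customer_id": "C-2", "age": 140, "email": "bo@example.com"},
    {"customer_id": "", "age": 25, "email": "not-an-email"},
]

valid, quarantined = validation_gate(incoming)
print(len(valid), "valid;", len(quarantined), "quarantined")  # 1 valid; 2 quarantined
for item in quarantined:
    print(item["record"].get("customer_id"), item["failures"])
```

Keeping the rules in a single list makes them easy to review and update as requirements change, and quarantining failed records with their reasons preserves them for correction instead of discarding them.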

By following these best practices, organizations can create robust data validation solutions that enhance data quality, support compliance, and drive better business outcomes. Ultimately, a commitment to data quality can significantly enhance organizational performance and decision-making.

Conclusion

Accurate and reliable data pipelines are crucial for organizations striving to make informed decisions, and data validation is central to maintaining that integrity. By implementing robust data validation solutions, organizations can significantly enhance their data governance, mitigate risks, and ultimately drive better business outcomes.

Key insights discussed include the importance of adopting effective validation techniques such as:

- Schema validation

- Automated quality checks

- Real-time monitoring

Organizations often struggle with the sheer volume of data and the inconsistencies that arise from disparate sources, complicating their validation efforts. Addressing these challenges through automation and a culture of quality can lead to substantial improvements in data accuracy and compliance, as evidenced by the 30% decrease in data-related errors reported by a financial services firm.

In a world where data quality directly influences business strategies and operational efficiency, organizations must prioritize best practices for data validation. By investing in automated solutions and nurturing a culture that prioritizes data integrity, companies can strengthen decision-making and stakeholder trust, positioning themselves for long-term success.

Frequently Asked Questions

Why is data validation important in modern information pipelines?

Data validation is crucial because inaccurate information can lead to misguided decisions. It ensures the accuracy, completeness, consistency, timeliness, validity, uniqueness, and relevance of information flowing through pipelines, which is essential for organizations that rely on data-driven decision-making.

How does effective data validation impact business strategies?

Effective data validation prevents mistakes from propagating through the information pipeline, which helps avoid erroneous analytics and misguided business strategies, ultimately enhancing operational efficiency.

What features does Decube offer to enhance data validation?

Decube offers an automated crawling feature that provides effortless metadata management, auto-refreshing capabilities, and secure access control through a designated approval flow, significantly improving the data validation process.

How does Decube help organizations comply with legal standards?

Decube supports compliance with legal standards such as GDPR and HIPAA by providing automated oversight and analytics, helping organizations protect themselves from fines and build trust with customers and stakeholders.

What are the financial consequences of poor information quality?

Poor data quality can cost an organization almost $13 million each year on average, highlighting the significant financial implications of insufficient data validation.

Can you provide an example of the benefits of implementing a continuous information verification framework?

A financial services firm that implemented a continuous information verification framework reported a 30% decrease in information-related errors, greatly enhancing their operational efficiency and compliance stance.

Why is regular evaluation of governance practices important?

Regular evaluations of governance practices are crucial for ongoing enhancement of information verification methods, ensuring that organizations continuously improve their data governance frameworks.

What is the overall cost-benefit analysis of investing in automated data validation solutions like Decube?

The cost of neglecting information verification can far exceed the investment in robust automated solutions like Decube, as effective solutions improve governance efficiency and protect organizations from financial losses associated with poor information quality.

