
What Is a Data Catalog? Definition, Importance, and Features

Discover what a data catalog is and why it matters for managing information assets effectively.

Introduction

Organizations face significant challenges in effectively managing their extensive data resources. A data catalog addresses this as a centralized repository that organizes metadata and improves discoverability and governance, which can lead to more informed decision-making and streamlined operations. Understanding the role of a data catalog can reshape how organizations approach data management and decision-making.

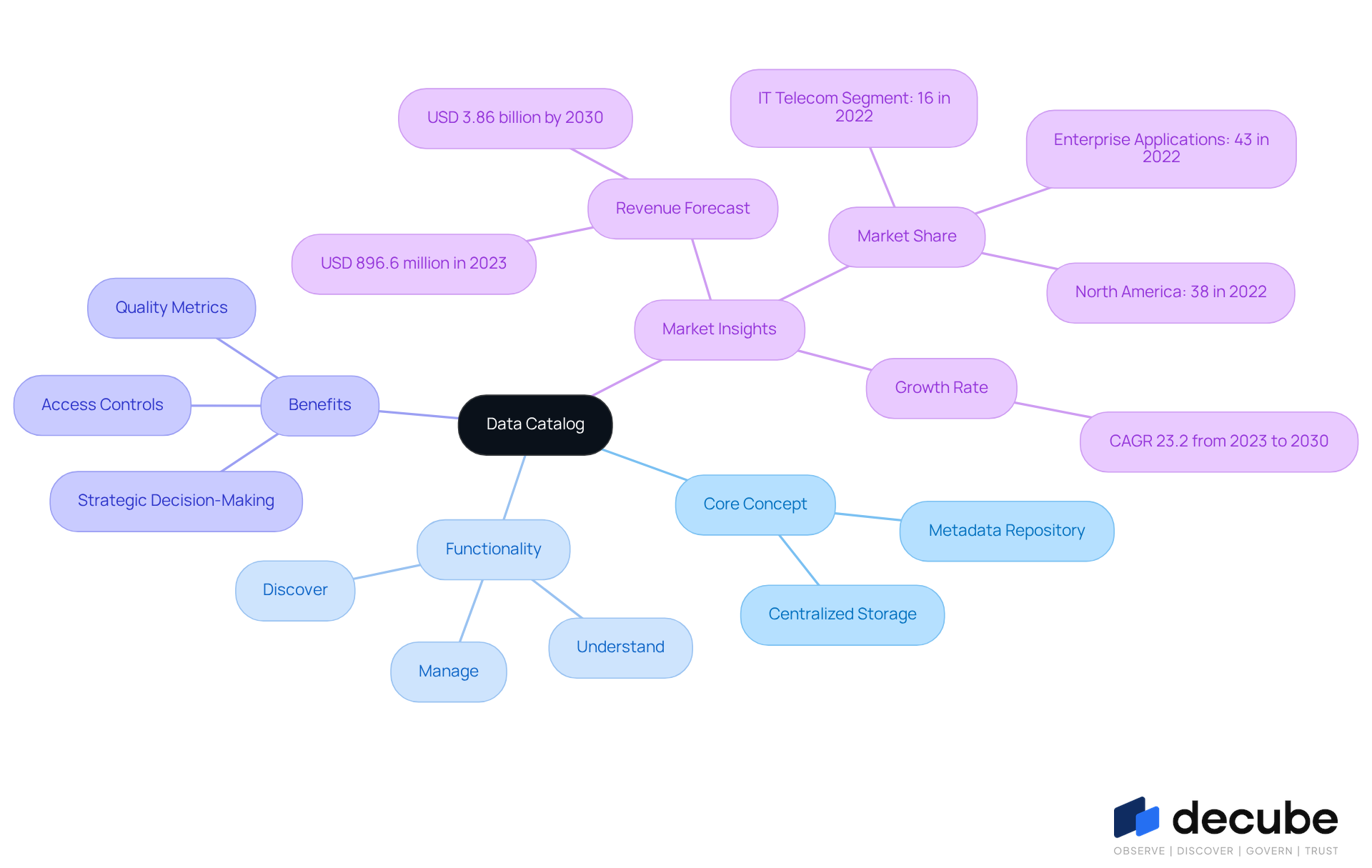

Define Data Catalog: Core Concept and Functionality

In an era where data is paramount, organizations face significant challenges in managing their information assets, and knowing what a data catalog is forms the starting point. A data catalog is a centralized metadata repository that organizes and oversees information about an organization's data assets, allowing users to discover, understand, and manage them effectively. It provides a structured framework that helps users locate relevant datasets and assess their quality. Much like a library index helps readers find books, a data catalog helps users navigate their information assets efficiently.

In general, a data catalog contains vital information such as lineage, quality metrics, and access controls, making it a crucial tool for effective management and oversight in today's data-driven landscape. Decube builds on this with a unified platform for observability and oversight, featuring an intuitive design that helps preserve trust in data. Its lineage feature shows how data flows across components end to end, bringing clarity to pipelines and improving collaboration among teams.
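To make the three kinds of metadata concrete, here is a minimal sketch of what a single catalog entry might hold. The `CatalogEntry` class and all of its field names are hypothetical and chosen for illustration only; they are not Decube's schema or any product's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Metadata for one data asset (hypothetical schema, for illustration only)."""
    name: str          # fully qualified asset name, e.g. "sales.orders"
    description: str   # business description shown in search results
    owner: str         # team accountable for the asset
    # Lineage: names of the assets this one is derived from.
    upstream: list[str] = field(default_factory=list)
    # Quality metrics, e.g. share of null values or hours since last load.
    quality: dict[str, float] = field(default_factory=dict)
    # Access control: groups allowed to view the asset.
    readers: set[str] = field(default_factory=set)


orders = CatalogEntry(
    name="sales.orders",
    description="One row per customer order",
    owner="data-eng",
    upstream=["raw.orders_export"],
    quality={"null_rate": 0.01, "freshness_hours": 2.0},
    readers={"analytics", "finance"},
)

print(orders.upstream)              # ['raw.orders_export']
print("finance" in orders.readers)  # True
```

Keeping lineage, quality, and access rules on the same record is what lets a catalog answer "where does this come from, can I trust it, and may I use it?" in one lookup.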

Many organizations now use data catalogs such as Decube to strengthen their data governance practices, reflecting a wider trend toward better information management strategies. As adoption grows, these catalogs pave the way for more strategic decision-making and closer collaboration.

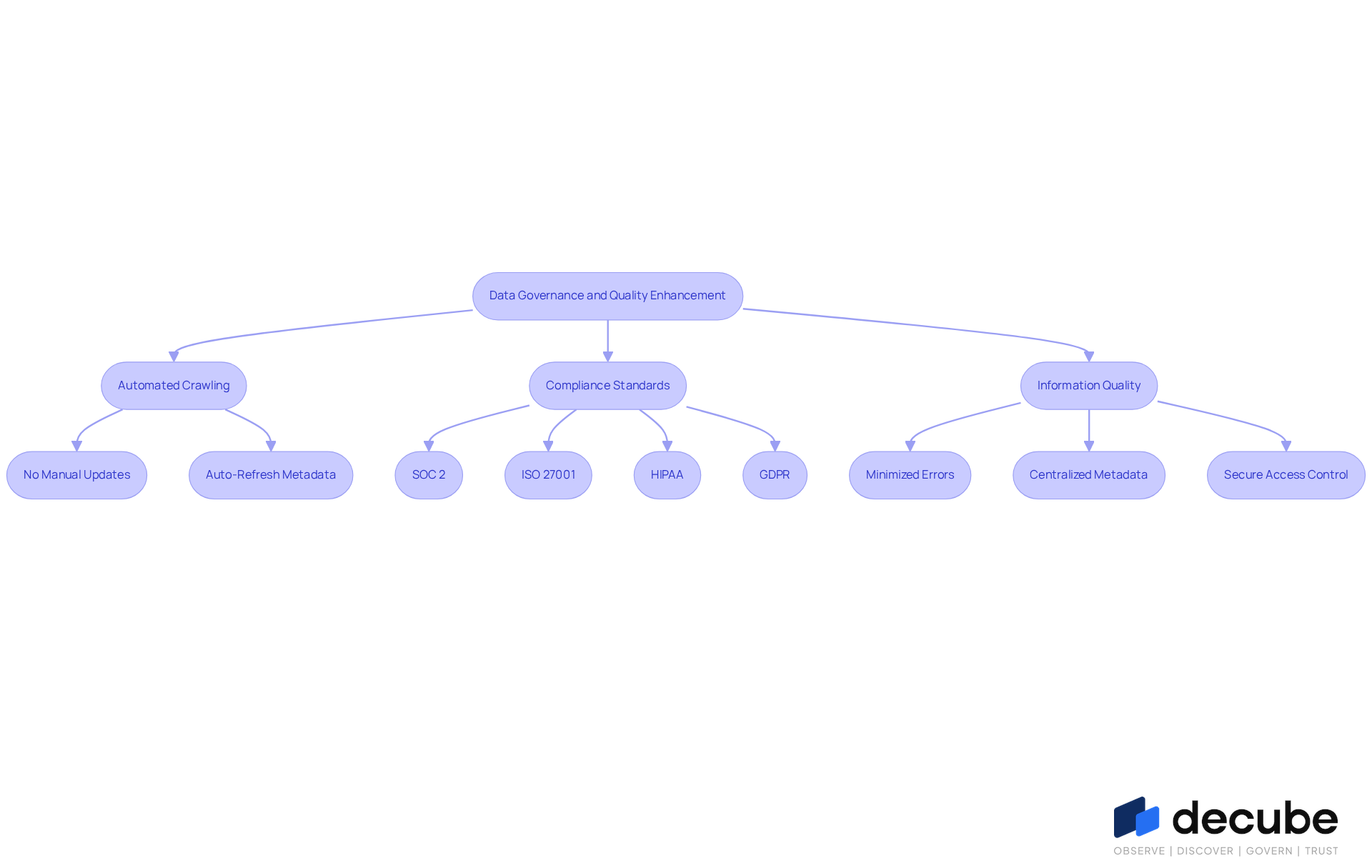

Explore Importance: Enhancing Data Governance and Quality

Managing data assets effectively is often hindered by manual metadata updates and compliance requirements. Data catalogs address both by providing a structured method for managing assets, which makes them essential for improving governance and quality.

With Decube's automated crawling feature, there is no need to update metadata manually: once your sources are connected, the metadata is refreshed automatically, keeping information current and accessible. This automation streamlines processes and strengthens governance and quality assurance by facilitating compliance with industry standards such as:

- SOC 2

- ISO 27001

- HIPAA

- GDPR

Automated crawling ensures that information is properly documented, classified, and accessible, directly improving quality metrics by minimizing errors caused by outdated information. By centralizing metadata, Decube's catalogs improve discoverability, enabling organizations to locate and use the right information for decision-making quickly.

Additionally, they help maintain information quality by tracking changes and usage patterns, fostering trust among users. This is especially crucial in sectors such as financial services and telecommunications, where information integrity is essential for operational efficiency and regulatory compliance.
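The refresh-and-track-changes loop described in this section can be sketched as follows. Decube's crawler internals are not described here, so this is a hypothetical stand-in: it assumes the connected source is a SQLite database, reads table schemas with `sqlite_master` and `PRAGMA table_info`, and diffs them against a plain dictionary acting as the catalog. The functions `crawl_sqlite` and `refresh_metadata` are illustrative names, not a real product API.

```python
import sqlite3


def crawl_sqlite(conn):
    """Read {table: {column: declared_type}} from a SQLite database."""
    schemas = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        schemas[table] = {row[1]: row[2] for row in columns}
    return schemas


def refresh_metadata(catalog, source_schemas):
    """Sync the catalog with freshly crawled schemas and return a change log."""
    changes = []
    for table, columns in source_schemas.items():
        if table not in catalog:
            changes.append(("added", table))
        elif catalog[table] != columns:
            changes.append(("changed", table))
        catalog[table] = dict(columns)
    # Anything the catalog knows about that the source no longer has was dropped.
    for table in list(catalog):
        if table not in source_schemas:
            changes.append(("removed", table))
            del catalog[table]
    return changes


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, total REAL)")
conn.execute("CREATE TABLE customers (id INTEGER)")

catalog = {"orders": {"id": "INTEGER"}, "legacy": {"x": "TEXT"}}
print(refresh_metadata(catalog, crawl_sqlite(conn)))
# [('added', 'customers'), ('changed', 'orders'), ('removed', 'legacy')]
```

Returning a change log rather than silently overwriting is what makes the "tracking changes" part possible: each entry could be stored as an audit record or used to notify the asset's owner.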

Furthermore, Decube's secure access control allows organizations to manage who can view or edit information, enhancing governance through designated approval flows. Ultimately, Decube's capabilities ensure that organizations can maintain high standards of information integrity and governance, which are vital for success in today's regulatory landscape.
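An approval flow for edit rights can be modelled in a few lines. This is a minimal sketch under stated assumptions: the `AccessPolicy` class, its method names, and the rule that only the asset owner may approve requests are all hypothetical and do not describe how Decube implements access control.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    """Hypothetical model: viewers read freely; edits need an owner-approved request."""
    owner: str
    viewers: set[str] = field(default_factory=set)
    editors: set[str] = field(default_factory=set)
    pending: set[str] = field(default_factory=set)  # users awaiting edit approval

    def can_view(self, user):
        return user == self.owner or user in self.viewers or user in self.editors

    def can_edit(self, user):
        return user == self.owner or user in self.editors

    def request_edit(self, user):
        # Queue the request; access is not granted until someone approves it.
        if not self.can_edit(user):
            self.pending.add(user)

    def approve(self, approver, user):
        # Assumed rule for this sketch: only the owner may approve edit requests.
        if approver != self.owner:
            raise PermissionError(f"{approver} cannot approve edit requests")
        if user not in self.pending:
            raise ValueError(f"no pending request from {user}")
        self.pending.discard(user)
        self.editors.add(user)


policy = AccessPolicy(owner="alice", viewers={"bob"})
policy.request_edit("bob")
print(policy.can_edit("bob"))  # False: the request is still pending
policy.approve("alice", "bob")
print(policy.can_edit("bob"))  # True
```

Separating "requested" from "granted" state is the essential idea of an approval flow; a real system would also record who approved what and when.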

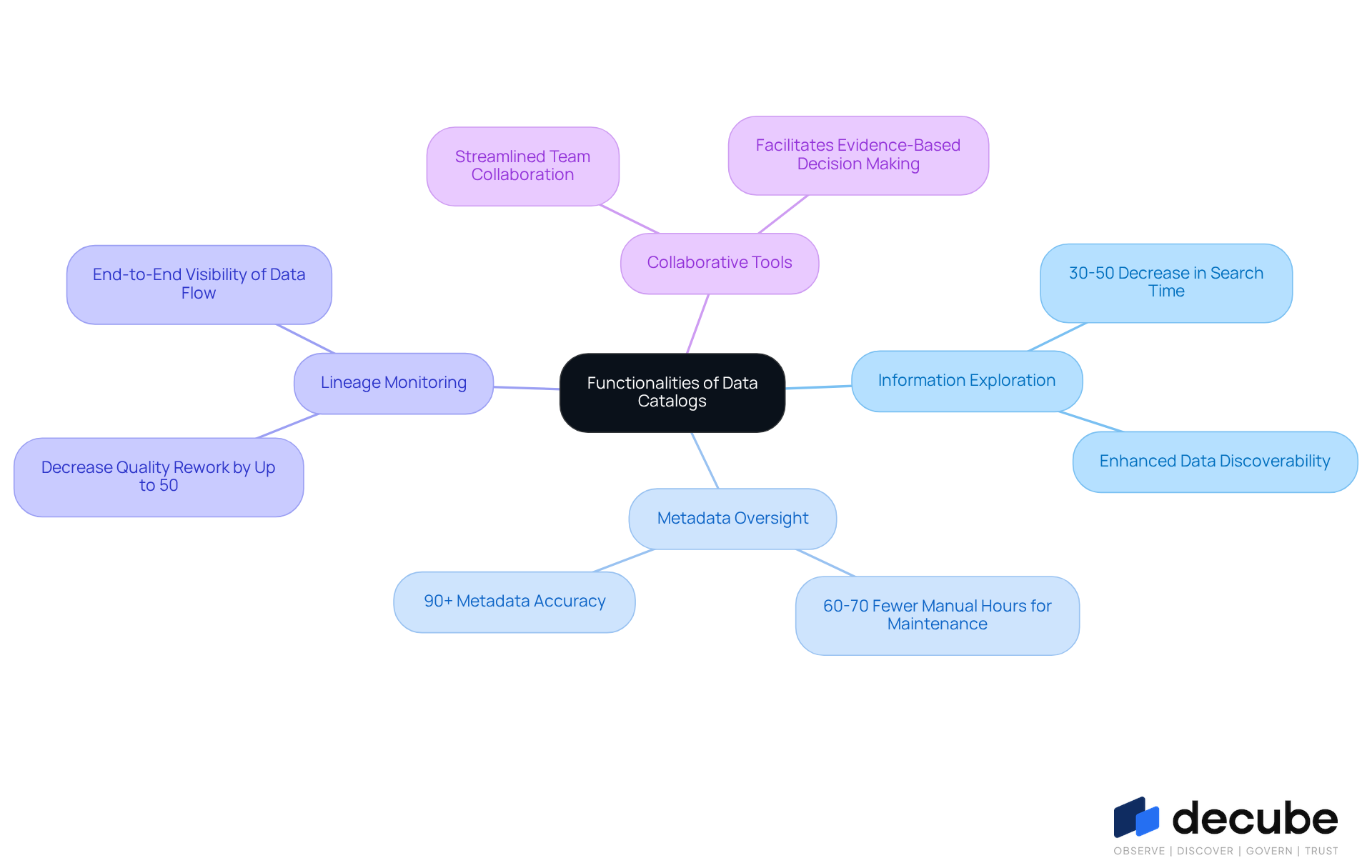

Identify Key Features: Functionalities of Data Catalogs

In today's data-driven landscape, efficient information retrieval is paramount for organizational success. Essential features of data catalogs include:

- Data discovery

- Metadata management

- Lineage tracking

- Collaboration tools

Research indicates that effective retrieval strategies can save significant time: organizations using modern catalogs report a 30-50% reduction in search time, which translates into substantial operational savings. Metadata management ensures that all relevant information about data assets is carefully documented, which is essential for maintaining quality and compliance.
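Discovery in a catalog boils down to searching metadata rather than the data itself. The sketch below is a deliberately naive illustration, assuming entries are plain dictionaries with hypothetical `name`, `description`, and `tags` keys: it ranks entries by how many query terms appear (as substrings) in their metadata. Production catalogs typically use full-text or semantic indexes, and this example does not reproduce the search-time figures quoted above.

```python
def search(entries, query):
    """Rank entries by how many query terms occur in their name, description, or tags."""
    terms = query.lower().split()
    scored = []
    for entry in entries:
        text = " ".join([entry["name"], entry["description"], *entry["tags"]]).lower()
        # Substring matching, so "customer" also matches "customers".
        score = sum(term in text for term in terms)
        if score:
            scored.append((score, entry["name"]))
    # Highest score first; ties broken alphabetically for stable results.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored]


CATALOG = [
    {"name": "sales.orders", "description": "One row per customer order", "tags": ["sales", "pii"]},
    {"name": "sales.refunds", "description": "Refunds issued to customers", "tags": ["sales"]},
    {"name": "hr.employees", "description": "Employee directory", "tags": ["hr", "pii"]},
]

print(search(CATALOG, "customer orders"))  # ['sales.orders', 'sales.refunds']
```

Tags such as `pii` double as a lightweight classification scheme, so the same search also supports governance questions like "which assets hold personal data?".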

The importance of lineage tracking cannot be overstated: it gives organizations end-to-end visibility into data flow, allowing them to trace the origins and transformations of their data. This capability improves governance and supports compliance efforts, and studies indicate that effective lineage tracking can reduce quality rework by up to 50%, saving time and improving overall operational efficiency. Experts emphasize that active metadata management, including lineage tracking, is crucial for establishing a trustworthy data ecosystem.
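Lineage is naturally a directed graph, so "tracing origins" is a graph traversal. Here is a minimal sketch, assuming a hypothetical lineage map in which each asset lists the assets it is built from; the asset names and the `trace` and `invert` helpers are illustrative only. A breadth-first walk returns every upstream source, nearest first, and walking the inverted graph gives the downstream assets affected by a change.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets it is derived from.
LINEAGE = {
    "dashboard.revenue": ["mart.daily_revenue"],
    "mart.daily_revenue": ["staging.orders", "staging.fx_rates"],
    "staging.orders": ["raw.orders"],
    "staging.fx_rates": ["raw.fx_feed"],
}


def trace(asset, edges):
    """Breadth-first walk from `asset` along `edges`; returns reachable assets, nearest first."""
    seen, order = {asset}, []
    queue = deque([asset])
    while queue:
        current = queue.popleft()
        for neighbour in edges.get(current, []):
            if neighbour not in seen:  # guard against revisiting shared ancestors
                seen.add(neighbour)
                order.append(neighbour)
                queue.append(neighbour)
    return order


def invert(edges):
    """Flip child -> parents into parent -> children for downstream impact analysis."""
    downstream = {}
    for child, parents in edges.items():
        for parent in parents:
            downstream.setdefault(parent, []).append(child)
    return downstream


print(trace("dashboard.revenue", LINEAGE))
# ['mart.daily_revenue', 'staging.orders', 'staging.fx_rates', 'raw.orders', 'raw.fx_feed']
print(trace("raw.orders", invert(LINEAGE)))
# ['staging.orders', 'mart.daily_revenue', 'dashboard.revenue']
```

The downstream query is what helps cut rework: before changing `raw.orders`, a team can see exactly which marts and dashboards depend on it.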

Collaboration tools enhance teamwork on analytics projects and promote a culture of evidence-based decision-making. They let teams discuss data assets, raise support tickets, and share insights easily, boosting overall productivity. Advanced data catalogs such as Decube may also offer AI-driven capabilities, including automated quality evaluations and anomaly detection, which further improve their efficiency in complex data environments. Ultimately, integrating such tools and methods is essential for a robust information management framework.
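To illustrate what an automated quality check does, here is a simple statistical stand-in for anomaly detection. It is not the AI-driven method Decube uses, which is not described here; it flags a day's row count when it lies more than an assumed threshold of three standard deviations from the recent mean. The function name `is_anomalous` and the sample counts are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it is more than `threshold` standard deviations from the history mean."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # perfectly stable history: any change is unusual
    return abs(latest - mu) / sigma > threshold


daily_rows = [10_120, 9_980, 10_050, 10_210, 9_890, 10_040, 10_130]
print(is_anomalous(daily_rows, 10_070))  # False
print(is_anomalous(daily_rows, 4_500))   # True: likely a partial or failed load
```

A fixed z-score threshold is easy to explain but ignores seasonality such as weekend dips; more sophisticated detectors model those patterns before deciding what counts as unusual.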

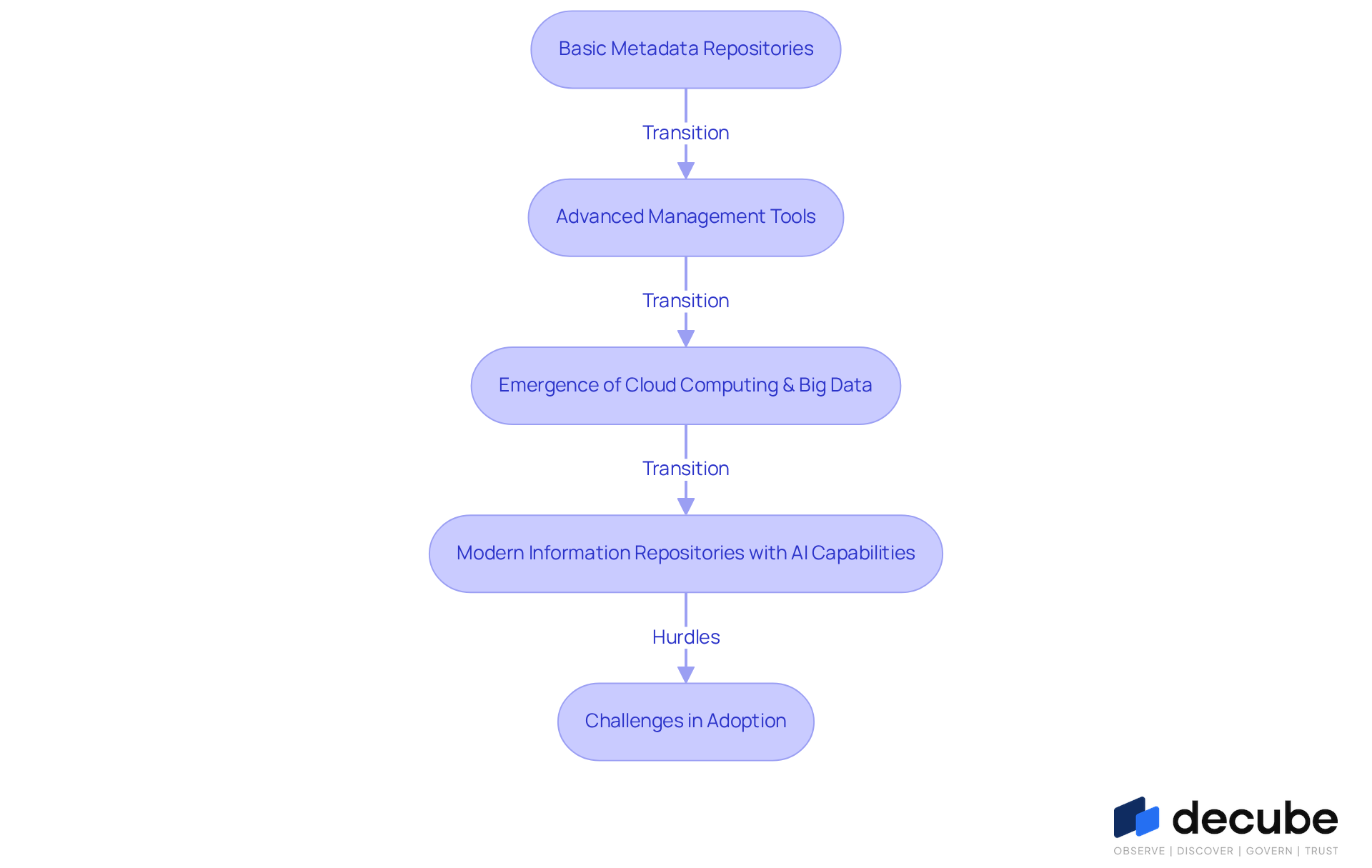

Trace Evolution: Historical Development of Data Catalogs

Data catalogs have evolved from basic metadata repositories into sophisticated systems that manage extensive data assets effectively. Early catalogs documented database schemas and table structures. As organizations increasingly recognized data as a strategic asset, demand for more advanced management tools grew. The emergence of cloud computing and big data technologies in the 2000s significantly accelerated this progress, producing systems capable of handling vast quantities of data from diverse sources.

Today, modern data catalogs, such as those offered by Decube, incorporate AI capabilities and features like automated crawling, which simplifies metadata management by refreshing content automatically. This improves data observability and management, and designated approval flows let organizations control access. These catalogs also support automated data discovery, quality evaluations, and richer collaboration features, reflecting the growing complexity of data environments.

Despite these advancements, organizations face significant hurdles in adopting such systems, including skill shortages and security inconsistencies. The global data catalog market is expected to grow at a compound annual growth rate (CAGR) of 22.6%, signaling a critical shift in how organizations prioritize data governance and compliance. As organizations adapt to tighter regulatory mandates and the need for trusted data, understanding what a data catalog is becomes increasingly important for effective governance and informed decision-making.

Conclusion

Navigating the complexities of data management presents significant challenges for organizations today. A data catalog functions as a centralized repository for metadata, enabling users to efficiently discover, understand, and manage their information assets. This structured approach enhances data governance and quality while fostering collaboration and informed decision-making among teams.

The article highlights key aspects of data catalogs, including their importance in improving governance through automated metadata updates, lineage tracking, and secure access controls. Additionally, it underscores the evolution of data catalogs from basic systems to advanced platforms like Decube, which leverage AI capabilities for enhanced efficiency and compliance. These features collectively contribute to significant operational savings and improved information integrity, making data catalogs indispensable in today's data-driven landscape.

As organizations increasingly recognize the significance of effective data management, adopting a robust data catalog becomes essential; those that do not may find themselves at a strategic disadvantage in an increasingly data-driven world. The journey toward better data governance and quality begins with understanding and implementing a data catalog that meets each organization's unique needs.

Frequently Asked Questions

What is a data catalog?

A data catalog is a centralized metadata repository that organizes and oversees information related to an organization's data assets, allowing users to effectively discover, understand, and manage their information.

How does a data catalog function?

A data catalog provides a structured framework that helps users locate relevant datasets, assess their quality, and understand their lineage, similar to how a library index assists readers in finding books.

What kind of information does a data catalog contain?

A data catalog contains vital information such as data lineage, quality metrics, and access controls, making it essential for effective management and oversight of data.

How does Decube enhance the data catalog experience?

Decube offers a unified platform for observability and oversight with an intuitive design that helps maintain trust in information. Its lineage feature clarifies how information flows across components, promoting collaboration among teams.

Why are organizations adopting data catalogs?

Organizations are adopting data catalogs to improve their information management strategies, enabling more strategic decision-making and enhanced collaboration as they manage their data assets more effectively.

List of Sources

Define Data Catalog: Core Concept and Functionality

- Data Catalog Market Size & Share | Industry Report, 2030 (https://grandviewresearch.com/industry-analysis/data-catalog-market-report)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Summit Partners | Data Trends: Data Catalogs Hit the Mainstream (https://summitpartners.com/resources/data-trends-data-catalogs-hit-the-mainstream)

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

Explore Importance: Enhancing Data Governance and Quality

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Data Quotes | The Data Governance Institute (https://datagovernance.com/quotes/data-quotes)

- Data Governance Statistics And Facts (2025): Emerging Technologies, Challenges And Adoption, AI, ROI, and Data Quality Insights (https://electroiq.com/stats/data-governance)

Identify Key Features: Functionalities of Data Catalogs

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Modern Data Catalog: Features, Benefits & 2026 Guide (https://atlan.com/modern-data-catalog)

- Data Catalogs in 2026: Definitions, Trends, and Best Practices for Modern Data Management (https://promethium.ai/guides/data-catalogs-2026-guide-modern-data-management)

- 15 Essential Features of Data Catalogs To Look For in 2024 (https://atlan.com/data-catalog-features)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

Trace Evolution: Historical Development of Data Catalogs

- Why the 2026 Data Catalog Is The Google for Enterprise Data | Tredence (https://tredence.com/blog/data-catalog-enterprise-data-2026)

- Data Catalog Statistics and Facts (2026) (https://scoop.market.us/data-catalog-statistics)

- What Is a Data Catalog? Definition, Evolution & Key Features (2026) (https://ovaledge.com/blog/data-catalog-and-its-evolution)

- Summit Partners | Data Trends: Data Catalogs Hit the Mainstream (https://summitpartners.com/resources/data-trends-data-catalogs-hit-the-mainstream)

- Data Catalog Market Size, Growth, Share and Trends 2031 (https://mordorintelligence.com/industry-reports/data-catalog-market)
