
Master Data Quality Audit: 9 Steps to Ensure Data Integrity

Master data quality audit with 9 essential steps to ensure data integrity and reliability.

Introduction

Ensuring the integrity of data has evolved from a mere technical requirement to a strategic imperative for organizations seeking to excel in a data-driven landscape. A comprehensive data quality audit is an essential tool in this pursuit, enabling businesses to systematically assess and improve the accuracy, completeness, and reliability of their information. Yet, many organizations face challenges in effectively conducting these audits. What are the critical steps to achieve meaningful outcomes? This article explores nine essential steps for mastering data quality audits, providing organizations with the insights necessary to enhance their data management practices and gain a competitive advantage.

Understand Data Quality Audits

A data quality audit is a systematic evaluation designed to ensure the accuracy, completeness, consistency, and reliability of data. This process is vital for organizations that need to confirm that the information guiding their decisions is sound and trustworthy. The key dimensions of data quality are:

- Accuracy

- Completeness

- Consistency

- Timeliness

- Validity

- Uniqueness

- Relevance

Understanding these characteristics is essential for identifying potential issues that could negatively impact business operations.

Organizations that perform a data quality audit regularly can significantly enhance their decision-making capabilities, as high-quality information serves as the foundation for informed strategies and competitive advantages. With Decube's automated crawling feature, entities can ensure that their metadata is automatically refreshed once sources are connected, eliminating the need for manual updates. This capability improves information observability and supports secure access control, enabling organizations to effectively manage who can view or edit content.

By prioritizing information accuracy checks and utilizing tools such as Decube's automated crawling, companies can proactively address inconsistencies and maintain the integrity of their information. This ultimately leads to improved operational efficiency and heightened customer satisfaction.

Set Clear Objectives for the Audit

Before initiating a data quality audit, establish clear goals that are tied to measurable outcomes. Consider the specific objectives you aim to achieve through the audit, such as:

- Enhancing data accuracy

- Ensuring compliance with regulations

- Improving decision-making processes

Document these objectives meticulously and communicate them to all stakeholders involved in the evaluation. This clarity will direct the data quality audit process and facilitate the measurement of its success against predefined goals.

Monitoring metrics related to accuracy, completeness, and timeliness is critical for a data quality audit, as these metrics provide a framework for assessing data integrity. Furthermore, a data catalog (an inventory enriched with metadata such as ownership, descriptions, and integrity signals) can improve the discovery and governance of data assets, so that teams can quickly find the correct details.

Organizations that prioritize clear objectives in their assessments frequently experience higher success rates, as these goals enable focused evaluations and actionable insights. For instance, businesses that have effectively defined clear assessment goals have reported significant improvements in information quality and operational efficiency, illustrating the tangible benefits of this approach.
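One way to make objectives measurable is to record each one alongside the metric and target it will be judged against. The sketch below is only an illustration: the `AuditObjective` class, the owner names, and the target values are assumptions, not figures from any specific audit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditObjective:
    """One measurable audit goal (all field values below are illustrative)."""
    name: str      # what the audit should achieve
    metric: str    # metric used to judge success
    target: float  # minimum acceptable value, between 0.0 and 1.0
    owner: str     # stakeholder accountable for the goal


def evaluate(objectives, measured):
    """Map each objective name to True, False, or None (not measured yet)."""
    results = {}
    for obj in objectives:
        value = measured.get(obj.metric)
        results[obj.name] = None if value is None else value >= obj.target
    return results


# Hypothetical objectives and measurements for demonstration only.
objectives = [
    AuditObjective("Enhance data accuracy", "accuracy", 0.95, "data steward"),
    AuditObjective("Improve completeness", "completeness", 0.98, "CRM team"),
    AuditObjective("Keep data timely", "timeliness", 0.90, "platform team"),
]
measured = {"accuracy": 0.97, "completeness": 0.92}
status = evaluate(objectives, measured)
```

Returning `None` for an unmeasured metric keeps "not yet assessed" distinct from "failed", which matters when the results are reported back to stakeholders.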

Choose the Right Data to Audit

Choosing the right data for your audit is crucial for gaining actionable insights. Start by identifying datasets that are essential to your business operations or that have previously shown inconsistencies. Key considerations include:

- Information volume

- Usage frequency

- Potential repercussions of information quality issues on decision-making processes

Engaging with stakeholders is vital to ensure that the selected information aligns with the review's goals and effectively addresses significant areas of concern. For instance, organizations that have successfully managed information audits often emphasize the importance of selecting information that reflects their operational realities, thereby enhancing the relevance and accuracy of the audit results.
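The selection criteria above can be turned into a simple ranking. The following is a minimal sketch, assuming each candidate dataset is rated from 1 to 5 on volume, usage, and business impact by stakeholders; the weights, the bonus for known inconsistencies, and the dataset names are all hypothetical.

```python
def priority_score(dataset, weights=(0.2, 0.3, 0.5)):
    """Weighted score from 1-5 ratings; the weights are an assumption."""
    w_volume, w_usage, w_impact = weights
    score = (
        w_volume * dataset["volume"]
        + w_usage * dataset["usage"]
        + w_impact * dataset["impact"]
    )
    # Datasets that have shown inconsistencies before get a fixed bonus.
    if dataset.get("known_issues"):
        score += 1.0
    return round(score, 2)


# Hypothetical candidates with stakeholder-supplied ratings.
candidates = [
    {"name": "web_logs", "volume": 5, "usage": 2, "impact": 1},
    {"name": "customers", "volume": 4, "usage": 5, "impact": 5, "known_issues": True},
    {"name": "invoices", "volume": 3, "usage": 4, "impact": 5},
]

ranked = sorted(candidates, key=priority_score, reverse=True)
audit_order = [d["name"] for d in ranked]
```

Weighting impact most heavily reflects the idea that the consequences of poor quality for decision-making matter more than raw size, but the right weights depend on the organization.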

With Decube's automated crawling feature, organizations can efficiently manage metadata, ensuring that information sets are continuously updated and current. This capability not only streamlines the selection process but also enhances visibility and governance of information. The lineage aspect of Decube illustrates the entire information flow across components, providing clarity that is essential for effective evaluations.

Establishing clear standards for data selection, such as completeness, precision, and relevance, can significantly improve the effectiveness of the review, resulting in better data integrity and greater confidence in the insights derived from the analysis. A thorough review also helps organizations identify bottlenecks and scalability issues, and Decube's monitoring and analytics tools can support that work.

Follow the Data Quality Audit Process

To conduct a successful data quality audit, follow these steps in order:

- Preparation: Begin by gathering all necessary documentation and resources, ensuring they align with organizational policies and industry standards. This foundational step establishes the groundwork for conducting a thorough data quality audit.

- Data Profiling: Analyze the selected datasets to understand their structure and identify potential integrity issues, such as anomalies, duplicates, and missing values. Effective profiling techniques can significantly improve the accuracy of your findings.

- Evaluation: Assess the information against established performance metrics, focusing on six essential dimensions: accuracy, completeness, consistency, timeliness, uniqueness, and validity. This comprehensive evaluation helps to pinpoint specific areas that require improvement.

- Documentation: Throughout the evaluation process, meticulously record findings and observations. A detailed report should cover data quality assessments, compliance issues, security risks, and actionable recommendations.

- Reporting: Compile a report that summarizes the audit results, highlighting identified issues and recommendations for improvement. This report serves as a strategic tool for conveying findings and prompting action within the organization.

By following these steps, entities can enhance their information integrity and make informed decisions, ultimately leading to improved operational efficiency and confidence in information-driven processes.
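The profiling step can be sketched with nothing more than the Python standard library. This is a minimal illustration, assuming records arrive as a list of dictionaries and that an empty string counts as missing; the `profile` function and the sample rows are hypothetical.

```python
from collections import Counter


def profile(rows, key):
    """Summarize row count, missing values per column, and duplicate keys."""
    columns = sorted({col for row in rows for col in row})
    missing = {
        col: sum(1 for row in rows if row.get(col) in (None, ""))
        for col in columns
    }
    key_counts = Counter(row.get(key) for row in rows)
    duplicates = {k: n for k, n in key_counts.items() if k is not None and n > 1}
    return {"row_count": len(rows), "missing": missing, "duplicate_keys": duplicates}


# Made-up sample records for demonstration only.
rows = [
    {"id": 1, "email": "a@example.com", "country": "MY"},
    {"id": 2, "email": "", "country": "SG"},
    {"id": 2, "email": "b@example.com", "country": None},
    {"id": 3, "email": None, "country": "MY"},
]
report = profile(rows, key="id")
```

The resulting dictionary can be written straight into the audit documentation, giving the evaluation and reporting steps a concrete baseline.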

Establish Data Quality Metrics and Standards

To effectively assess information quality, establishing clear metrics and standards that align with industry benchmarks and organizational objectives is essential. Common metrics include accuracy, completeness, consistency, and timeliness. For instance, organizations often aim for a target of 95% accuracy in customer information to ensure reliable insights and decision-making. Completeness, which evaluates the availability of all required information, is another critical metric; a completeness rate of 92% indicates that 80 out of 1,000 records lack essential details, such as email addresses.
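The completeness figure above can be reproduced directly. The sketch below builds 1,000 synthetic records, 80 of which lack an email address, and computes the rate; the `completeness_rate` function and the record layout are illustrative assumptions.

```python
def completeness_rate(records, required_fields):
    """Fraction of records in which every required field has a value."""
    if not records:
        raise ValueError("cannot compute completeness of an empty dataset")
    complete = sum(
        1
        for record in records
        if all(record.get(field) not in (None, "") for field in required_fields)
    )
    return complete / len(records)


# Synthetic data: 920 records with an email address, 80 without.
records = [{"id": i, "email": f"user{i}@example.com"} for i in range(920)]
records += [{"id": i, "email": None} for i in range(920, 1000)]

rate = completeness_rate(records, ["email"])
missing_count = round((1 - rate) * len(records))
```

Here `rate` is 0.92 and `missing_count` is 80, matching the example in the text.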

A data catalog serves as a searchable inventory of these metrics, enriched with metadata that helps teams quickly locate and understand the data they are using. Documenting the metrics is vital for the data quality audit, as it gives all stakeholders a shared reference point. The documentation should define the acceptable level for each metric so that everyone understands the standards to be met. Regular evaluations of these metrics enable companies to monitor progress and identify areas for improvement, fostering a culture of accountability and continuous improvement in data management. By integrating the metrics into broader governance frameworks, organizations can track data accuracy and align their initiatives with strategic business objectives.

Notably, 59% of organizations do not assess their information integrity, underscoring the need for clear metrics and standards. As Emily Winks states, "Without metrics, information integrity is merely a goal." With these metrics in place, it becomes a measurable, accountable business function.

Collect and Analyze Data

After establishing your metrics, the next step is to gather and analyze the information using Decube's advanced capabilities. Begin by employing profiling methods to assess the condition of the selected datasets, checking for duplicates, missing values, and inconsistencies. With Decube's machine learning-powered tests, you can automate the detection of anomalies, ensuring that your data adheres to the required quality standards. Additionally, utilize visualization tools to identify patterns and anomalies effectively, while Decube's smart alerts keep you informed of any issues in real-time. It is crucial to document your findings meticulously, as this information will be vital for the subsequent stages of the audit process, reinforcing your information governance and refining your workflows.
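To give a feel for what automated anomaly detection does, here is a simple statistical stand-in. It is not Decube's method, which the article does not describe; it is a generic sketch using the modified z-score based on the median absolute deviation (MAD), with a commonly used threshold of 3.5 and made-up daily row counts.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return indexes of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against, so nothing is flagged
    # 0.6745 rescales the MAD so the score is comparable to a z-score.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]


# Hypothetical daily row counts for one table; day 6 had a partial load.
daily_row_counts = [1020, 998, 1005, 1011, 990, 1003, 120, 1008]
anomalies = mad_outliers(daily_row_counts)
```

A median-based score is used here because a single extreme value would distort a mean and standard deviation computed over so few points.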

Identify and Document Data Quality Issues

In the process of information analysis, it is crucial to identify and document any emerging issues. Common challenges include:

- Inaccuracies

- Duplicates

- Incomplete records

All of these can significantly impede business performance. For instance, companies face average annual losses of $12.9 million due to poor data quality, and 47% of newly created records contain at least one major error.

It is essential to document each issue clearly, detailing its nature, potential impact, and any relevant context. This documentation not only establishes a foundation for developing a remediation plan but also acts as a vital communication tool for stakeholders. By addressing these issues proactively, organizations can mitigate risks associated with poor information, which often leads to lost revenue, inefficiencies, and compliance challenges.

Moreover, effective documentation methods can aid in identifying trends in information inaccuracies, enabling teams to prioritize their responses and enhance overall data integrity.
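A structured issue log makes both the documentation and the trend analysis straightforward. The sketch below is one possible shape for such a log; the `QualityIssue` fields and the sample entries are hypothetical and should be adapted to whatever the audit team actually records.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class QualityIssue:
    """One documented finding (the field names are illustrative)."""
    dataset: str
    issue_type: str   # "inaccuracy", "duplicate", or "incomplete"
    description: str  # nature of the issue
    impact: str       # "high", "medium", or "low"
    context: str = "" # any relevant background


# Made-up findings for demonstration only.
issues = [
    QualityIssue("customers", "incomplete", "email missing", "high", "CRM import"),
    QualityIssue("customers", "duplicate", "same customer under two IDs", "medium"),
    QualityIssue("invoices", "inaccuracy", "currency code mismatch", "high"),
    QualityIssue("orders", "incomplete", "shipping date missing", "low"),
]

# Trend view: which kinds of issue occur most often, and where.
by_type = Counter(issue.issue_type for issue in issues)
by_dataset = Counter(issue.dataset for issue in issues)
high_impact = [issue for issue in issues if issue.impact == "high"]
```

Counting by type and by dataset gives the team a quick way to see where problems cluster before the remediation plan is drafted.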

Develop a Remediation Plan

To tackle data accuracy issues effectively, formulate a remediation plan that spells out the steps needed for resolution:

- Prioritize problems by their potential impact on business operations and compliance obligations, so that the most critical issues are addressed first.

- Use Decube's automated crawling feature to keep metadata current, which aids in prioritizing issues.

- Implement machine learning-powered tests, such as null%, regex_match, and cardinality, to assess data integrity, and set thresholds for monitoring effectiveness.

- Assign each action item to a specific team member with a clear timeline for resolution to uphold accountability.

- Integrate smart alerts so that the team is promptly informed of any critical issues.

- Include a way to evaluate the effectiveness of the remediation efforts, leveraging Decube's profiling and management capabilities to enhance observability and governance.
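The three named tests can be approximated in plain Python to show what each one measures. This is not Decube's implementation or API; the function names, the email pattern, and the thresholds are assumptions chosen for illustration.

```python
import re


def null_pct(values):
    """Percentage of values that are missing (None)."""
    return 100.0 * sum(v is None for v in values) / len(values)


def regex_match_pct(values, pattern):
    """Percentage of non-missing values that fully match the pattern."""
    compiled = re.compile(pattern)
    present = [v for v in values if v is not None]
    if not present:
        return 0.0
    return 100.0 * sum(bool(compiled.fullmatch(v)) for v in present) / len(present)


def cardinality(values):
    """Number of distinct non-missing values."""
    return len({v for v in values if v is not None})


# Made-up column of email addresses.
emails = ["a@example.com", None, "b@example.com", "not-an-email", "a@example.com"]
email_pattern = r"[^@\s]+@[^@\s]+\.[^@\s]+"  # deliberately loose, for illustration

# Each check pairs a measured value with a hypothetical pass condition.
checks = {
    "null_pct": (null_pct(emails), lambda x: x <= 10.0),
    "regex_match_pct": (regex_match_pct(emails, email_pattern), lambda x: x >= 95.0),
    "cardinality": (cardinality(emails), lambda x: x >= 3),
}
failed = [name for name, (value, passes) in checks.items() if not passes(value)]
```

Failed checks can then be assigned to an owner with a deadline, and rerunning the same checks after the fix shows whether the remediation worked.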

Implement Improvements and Monitor Continuously

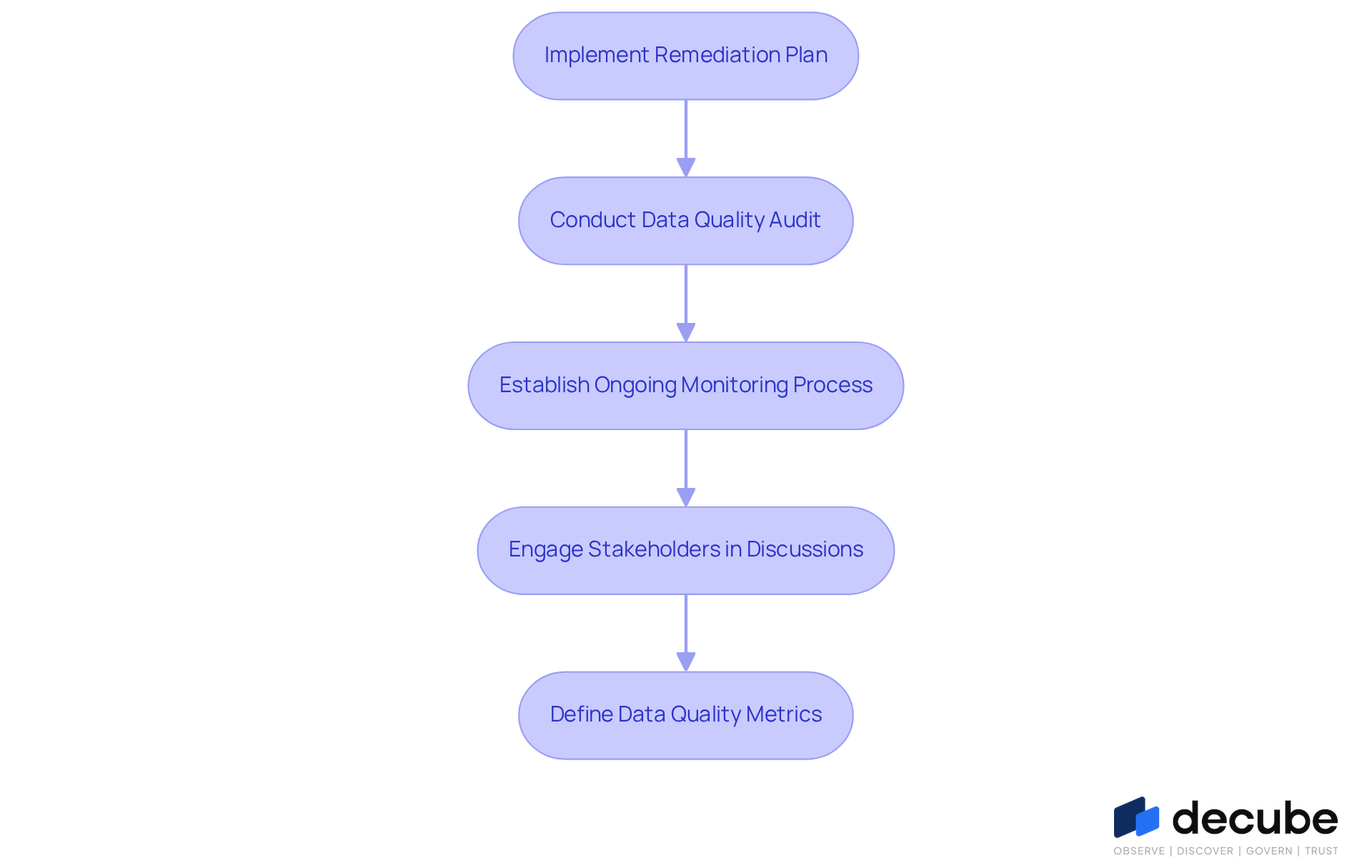

After implementing the remediation plan, conduct a follow-up data quality audit and establish an ongoing monitoring process to sustain the improvements. Decube's automated tools track critical metrics such as accuracy, completeness, and consistency, enabling organizations to identify emerging issues quickly. With Decube's automated crawling feature, metadata is continuously updated without manual intervention, which simplifies management and supports secure access control. This capability enhances data observability and governance, which matters given that studies indicate poor data quality costs the U.S. $3 trillion annually.

Schedule data quality audits at regular intervals to reassess data standards, so that improvements are not only maintained but also refined over time. Engaging stakeholders in continuous discussions about data integrity fosters a culture of accountability and ongoing improvement, which is vital for operational effectiveness. Continuous assessment ensures that organizations can rely on accurate and trustworthy information for decision-making. Finally, defining relevant data quality metrics keeps standards high and keeps monitoring efforts aligned with organizational objectives.
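Ongoing monitoring amounts to comparing each new measurement against agreed thresholds and against the previous run. The sketch below is a generic illustration rather than a description of any vendor's alerting; the threshold values, the `max_drop` tolerance, and the sample history are assumptions.

```python
# Hypothetical minimum acceptable values for each tracked metric.
THRESHOLDS = {"accuracy": 0.95, "completeness": 0.95, "consistency": 0.90}


def check_run(run, previous=None, max_drop=0.02):
    """Return alert messages for threshold breaches and sharp regressions."""
    alerts = []
    for metric, minimum in THRESHOLDS.items():
        value = run[metric]
        if value < minimum:
            alerts.append(f"{metric} {value:.2f} is below threshold {minimum:.2f}")
        elif previous is not None and previous[metric] - value > max_drop:
            alerts.append(
                f"{metric} dropped from {previous[metric]:.2f} to {value:.2f}"
            )
    return alerts


# Made-up results from two consecutive scheduled audits.
history = [
    {"accuracy": 0.97, "completeness": 0.98, "consistency": 0.93},
    {"accuracy": 0.96, "completeness": 0.94, "consistency": 0.90},
]
alerts = check_run(history[1], previous=history[0])
```

Flagging a sharp drop even when the value is still above the threshold gives the team a chance to react before a standard is actually breached.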

Conclusion

In today's data-driven landscape, ensuring data integrity through a comprehensive data quality audit is essential for organizations that seek to make informed decisions based on reliable information. By systematically evaluating data accuracy, completeness, consistency, and other critical dimensions, businesses can identify potential issues that may impede their operational efficiency and strategic initiatives. This guide outlines a structured approach that underscores the importance of:

- Setting clear objectives

- Selecting appropriate data

- Adhering to a defined audit process to achieve optimal results

The key arguments presented throughout this article emphasize the necessity of establishing metrics and standards that align with organizational goals, as well as the importance of continuous monitoring and improvement. By utilizing advanced tools such as Decube’s automated features, organizations can streamline their data quality audits, enhance information governance, and ultimately cultivate a culture of accountability and ongoing enhancement. These best practices not only mitigate risks associated with poor data quality but also contribute to improved decision-making and heightened customer satisfaction.

In an environment where data is pivotal to business success, prioritizing data quality audits is not merely an option; it is a fundamental requirement. Organizations must adopt a proactive approach to managing their data integrity, ensuring they are well-equipped to navigate the complexities of today’s information landscape. By implementing the steps outlined in this guide, companies can transform their data management practices, resulting in enhanced operational effectiveness and a competitive advantage in their respective markets.

Frequently Asked Questions

What is a data quality audit?

A data quality audit is a systematic evaluation designed to ensure the accuracy, completeness, consistency, and reliability of information, which is essential for organizations to validate the soundness and trustworthiness of the information guiding their decisions.

What are the key dimensions of information quality?

The key dimensions of information quality include accuracy, completeness, consistency, timeliness, validity, uniqueness, and relevance.

How can organizations benefit from regular data quality audits?

Organizations that perform regular data quality audits can enhance their decision-making capabilities, as high-quality information serves as the foundation for informed strategies and competitive advantages.

What is Decube's automated crawling feature?

Decube's automated crawling feature allows organizations to automatically refresh their metadata once sources are connected, eliminating the need for manual updates and improving information observability and secure access control.

Why is it important to set clear objectives for a data quality audit?

Setting clear objectives for a data quality audit is essential to align with measurable outcomes, direct the audit process, and facilitate the measurement of its success against predefined goals.

What should organizations consider when choosing data to audit?

Organizations should consider information volume, usage frequency, and the potential repercussions of information quality issues on decision-making processes when selecting data for an audit.

How can stakeholder engagement impact the data audit process?

Engaging with stakeholders ensures that the selected information aligns with the audit's goals and effectively addresses significant areas of concern, enhancing the relevance and accuracy of the audit results.

What role does metadata play in a data quality audit?

Metadata, such as ownership, descriptions, and integrity signals, enhances the discovery and governance of information assets, allowing teams to quickly access the correct details during a data quality audit.

How can establishing clear standards for information selection improve the audit?

Establishing clear standards for information selection, such as completeness, precision, and relevance, can significantly enhance the effectiveness of the review, leading to improved information integrity and confidence in the insights derived from the analysis.

List of Sources

- Understand Data Quality Audits

- Top Data Quality Trends for 2026: Data Trust in the Age of AI (https://qualytics.ai/resources/in/top-data-quality-trends-for-2026-data-trust-in-the-age-of-ai)

- The Importance of Data Quality in Business Decision-Making (https://anchorcomputer.com/2024/10/the-importance-of-data-quality-in-business-decision-making)

- In the News: Elevating Audit Quality: The Impact of Data Analytics and Visualization (https://crunchafi.com/newsroom/elevating-audit-quality-the-impact-of-data-analytics-and-visualization)

- integrate.io (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- The Importance Of Data Quality: Metrics That Drive Business Success (https://forbes.com/councils/forbestechcouncil/2024/10/21/the-importance-of-data-quality-metrics-that-drive-business-success)

- Set Clear Objectives for the Audit

- informatica.com (https://informatica.com/resources/articles/data-quality-metrics-and-measures.html)

- montecarlodata.com (https://montecarlodata.com/blog-data-quality-statistics)

- atlan.com (https://atlan.com/data-quality-metrics)

- The Ultimate Guide to Performing a Data Quality Audit [2026] (https://improvado.io/blog/guide-to-data-quality-audits)

- Choose the Right Data to Audit

- Why Should You Invest in a Data Audit? (https://kleene.ai/blog/why-should-you-invest-in-a-data-audit)

- Industry News 2023 The Increasing Importance of IT Audits to the BoD (https://isaca.org/resources/news-and-trends/industry-news/2023/the-increasing-importance-of-it-audits-to-the-bod)

- The Ultimate Guide to Performing a Data Quality Audit [2026] (https://improvado.io/blog/guide-to-data-quality-audits)

- How To Conduct Data Quality Audits: A Step-by-Step Guide (https://montecarlodata.com/blog-how-to-conduct-data-quality-audits)

- Follow the Data Quality Audit Process

- Elevating audit quality: The impact of data analytics and visualization (https://wolterskluwer.com/en/expert-insights/the-impact-of-audit-data-analytics)

- Transforming Audit Quality with Data Analytics | The IIA (https://theiia.org/en/content/videos/webinar/2026/transforming-audit-quality-with-data-analytics-lessons-from-leading-global-audit-functions)

- Using technology to enhance the audit process (https://ggi.com/news/auditing/using-technology-to-enhance-the-audit-process)

- Data Audits: A Step-by-Step Guide (https://linkedin.com/pulse/data-audits-step-by-step-guide-soltech-inc-gx6me)

- The Ultimate Guide to Performing a Data Quality Audit [2026] (https://improvado.io/blog/guide-to-data-quality-audits)

- Establish Data Quality Metrics and Standards

- Data Quality Metrics Best Practices - Dataversity (https://dataversity.net/articles/data-quality-metrics-best-practices)

- TIA: New Data Center Quality Standard Arriving in 2026 (https://datacentremagazine.com/news/tia-new-data-center-quality-standard-arriving-in-2026)

- Data Quality Dimensions: Key Metrics & Best Practices for 2026 (https://ovaledge.com/blog/data-quality-dimensions)

- atlan.com (https://atlan.com/data-quality-metrics)

- ibm.com (https://ibm.com/think/insights/data-quality-metrics)

- Collect and Analyze Data

- Top 5 Most Common Data Quality Issues ➤ (https://stibosystems.com/blog/data-quality-issues)

- Data quality issues on the rise, finds research | News | Research live (https://research-live.com/article/news/data-quality-issues-on-the-rise-finds-research-/id/5143303)

- pantomath.com (https://pantomath.com/guide-data-observability/data-profiling-techniques)

- 7 Most Common Data Quality Issues | Collibra (https://collibra.com/blog/the-7-most-common-data-quality-issues)

- Identify and Document Data Quality Issues

- dataversity.net (https://dataversity.net/articles/putting-a-number-on-bad-data)

- The True Cost of Poor Data Quality | IBM (https://ibm.com/think/insights/cost-of-poor-data-quality)

- montecarlodata.com (https://montecarlodata.com/blog-data-quality-statistics)

- integrate.io (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Develop a Remediation Plan

- prnewswire.com (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- Data Quality is Not Being Prioritized on AI Projects, a Trend that 96% of U.S. Data Professionals Say Could Lead to Widespread Crises (https://qlik.com/us/news/company/press-room/press-releases/data-quality-is-not-being-prioritized-on-ai-projects)

- The 2025 Remediation Operations Report: Why Organizations Still Struggle in 2025 (https://seemplicity.io/blog/2025-remediation-operations-report-organizations-still-struggle)

- Streamlining Data Remediation: Best Practices Guide (https://bigid.com/blog/data-remediation-guide)

- montecarlodata.com (https://montecarlodata.com/blog-data-quality-statistics)

- Implement Improvements and Monitor Continuously

- The Importance of Real-Time Data Quality Monitoring in Modern Enterprises - DvSum (https://dvsum.ai/blog/the-importance-of-real-time-data-quality-monitoring-in-modern-enterprises)

- Continuous Monitoring for Data Quality: Solutions for Reliable Data (https://anomalo.com/blog/continuous-monitoring-for-data-quality-solutions-for-reliable-data)

- Advanced Data Quality Monitoring: Integrity and Performance (https://acceldata.io/blog/proactive-data-quality-monitoring-accuracy-across-pipelines)

- The Importance of Continuous Monitoring and Maintenance in Data Science | Institute of Data (https://institutedata.com/us/blog/the-importance-of-continuous-monitoring-and-maintenance-in-data-science)

- What is Data Quality Monitoring? | IBM (https://ibm.com/think/topics/data-quality-monitoring-techniques)
