
Understanding the Purpose of a Data Dictionary for Data Engineers

Discover the purpose of a data dictionary and its critical role in effective data management.

Introduction

A well-structured data dictionary is essential for effective data management. It acts as a centralized repository that gives teams clarity and consistency. By defining data elements and the relationships among them, it helps data engineers improve data quality and compliance and reduces the risk of misinterpretation. Yet organizations often grapple with complex data environments, which invite misinterpretation and inefficiency.

How can they effectively leverage data dictionaries to navigate this landscape and ensure robust governance? The strategic use of data dictionaries can transform data governance, enabling organizations to thrive in complex environments.

Define the Data Dictionary: Core Concepts and Components

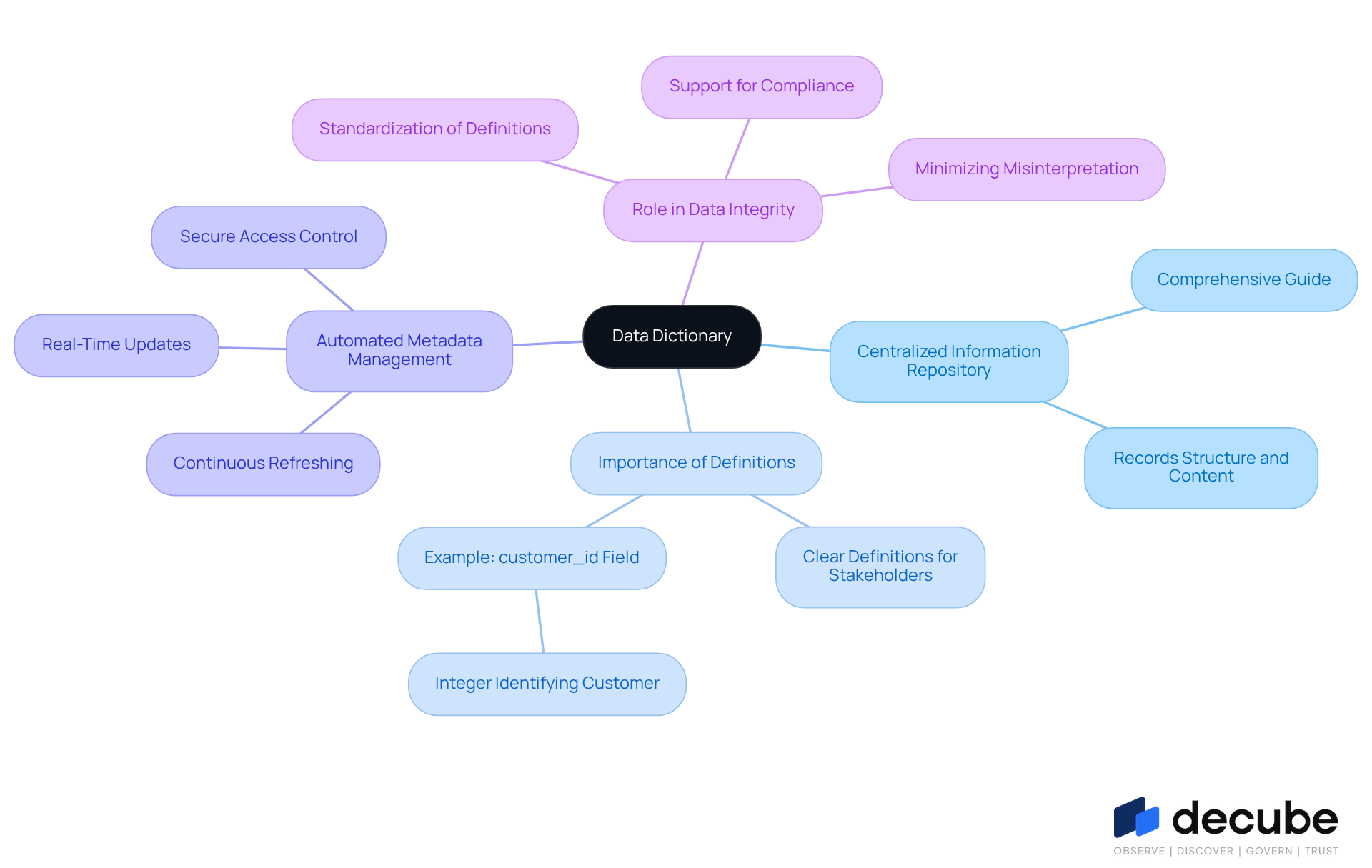

A data dictionary is a centralized repository that supports effective data management and collaboration among teams. It serves as a comprehensive guide that records the structure, content, and relationships of the data elements within a dataset or database. Clear definitions help all stakeholders understand the data being used, which is crucial when multiple teams work with the same data. For instance, specifying that a 'customer_id' field is an integer uniquely identifying a customer clarifies its purpose and usage across various applications.
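The 'customer_id' example can be written down as a machine-readable entry. The following is a minimal sketch, not a reference to any particular tool: the `FieldDefinition` class, its attribute names, and the `conforms` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldDefinition:
    """One data dictionary entry (hypothetical structure for illustration)."""
    name: str
    data_type: type
    description: str
    unique: bool = False


# Entry for the customer_id example from the text.
CUSTOMER_ID = FieldDefinition(
    name="customer_id",
    data_type=int,
    description="Integer that uniquely identifies a customer.",
    unique=True,
)


def conforms(field: FieldDefinition, value: object) -> bool:
    """Return True if value has the documented type.

    bool is rejected explicitly because Python treats it as a subclass of int.
    """
    if isinstance(value, bool) and field.data_type is not bool:
        return False
    return isinstance(value, field.data_type)
```

Any application that reads `CUSTOMER_ID` sees the same name, type, and description, which is the shared understanding the paragraph above describes. A call such as `conforms(CUSTOMER_ID, "42")` returns `False`, flagging a string where an integer is documented.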

A 2025 IBM Research Report underscores the importance of data dictionaries in data management: 49% of executives cite inaccuracies and bias as obstacles to technology adoption, a significant barrier to effective implementation. Moreover, organizations that maintain effective data dictionaries can improve data quality. Case studies point to companies like PhonePe, which attained a 2,000% increase in data infrastructure while ensuring high availability through strong data management practices.

With Decube's automated crawling capability, organizations can keep their data catalogs continuously refreshed without manual intervention, enhancing data observability and governance. This automated metadata management enables real-time updates, so that all data definitions remain precise and relevant. Additionally, secure access control features let organizations manage who can view or edit data, further supporting compliance and minimizing the risk of misinterpretation across teams.

Experts agree that the purpose of a data dictionary is to standardize definitions, support compliance, and minimize misinterpretation among teams. As we move into 2026, data catalogs will be pivotal in ensuring data integrity and supporting advanced analytical initiatives. Regular reviews and revisions keep a data dictionary relevant and aligned with changing business requirements, strengthening its role as a fundamental part of effective data governance.

Explain the Importance of a Data Dictionary in Data Management

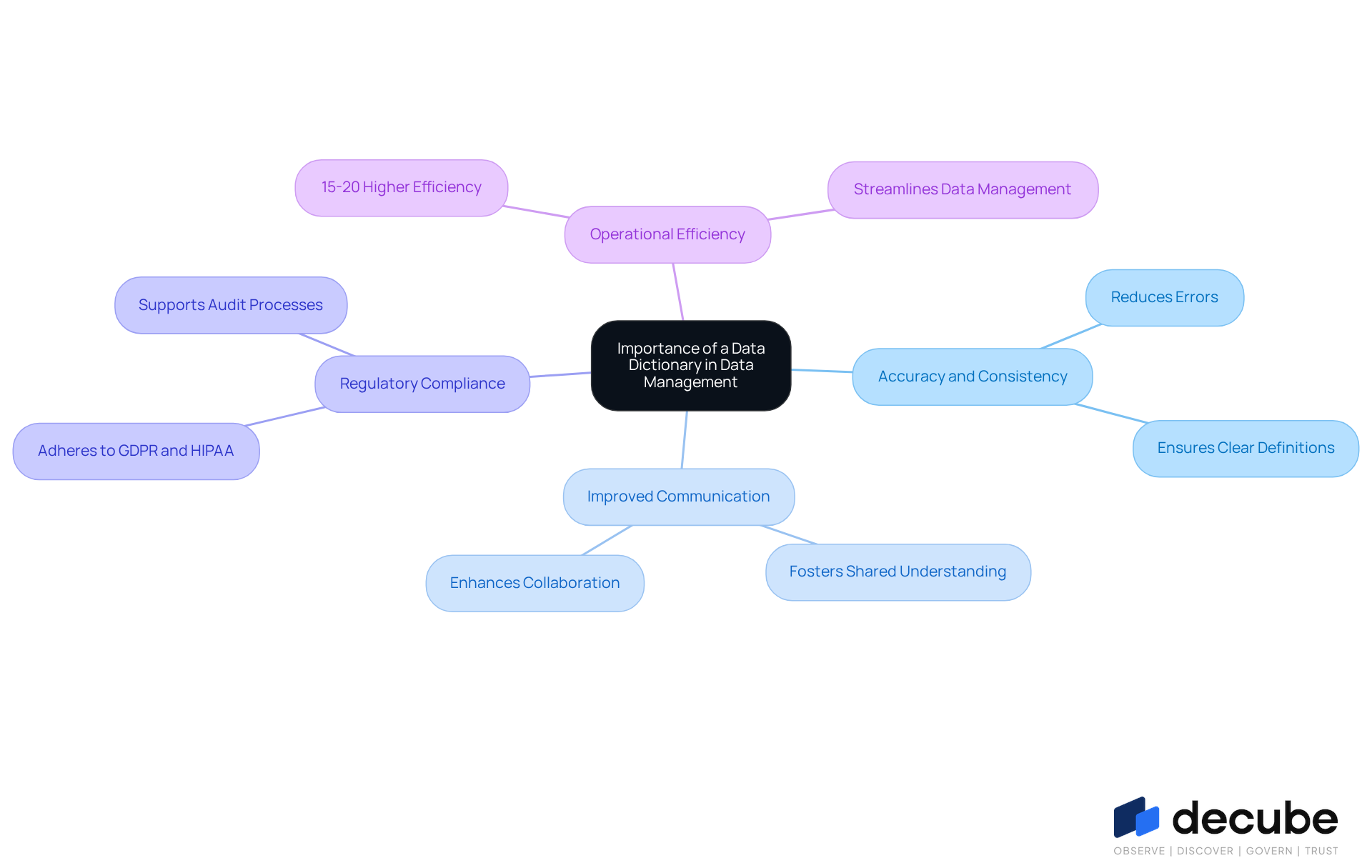

A data dictionary is vital for ensuring accuracy and consistency in complex projects, where multiple teams depend on the same data. It improves data quality by providing clear definitions and standards for data elements, which helps reduce errors and inconsistencies. It also fosters better communication among engineers, analysts, and business stakeholders by giving them a shared understanding of definitions. This alignment is particularly important in environments where data is frequently shared and used across various functions.

Furthermore, a data dictionary supports adherence to regulatory standards like GDPR and HIPAA. By recording data lineage and usage, it provides the clarity needed for audits and data management. For example, in a financial services organization, a carefully maintained data dictionary can help ensure that sensitive customer details are handled in accordance with legal requirements, thereby minimizing the risk of non-compliance. Organizations that implement strong data governance practices, including the use of data catalogs, report 15-20% higher operational efficiency, which underlines the strategic benefit of effective data management.
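One way to picture how recorded lineage and usage support an audit is to tag each entry with its source system and the regulations that apply to it. This is a rough sketch under stated assumptions: the `GovernedField` class, the `fields_in_scope` function, the field names, the source names, and the regulation tags are all hypothetical and purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernedField:
    """Dictionary entry extended with lineage and compliance metadata (illustrative)."""
    name: str
    source: str                        # upstream system the value comes from
    regulations: tuple[str, ...] = ()  # illustrative regulation tags, if any


def fields_in_scope(fields: list[GovernedField], regulation: str) -> list[str]:
    """Return the sorted names of fields tagged with the given regulation."""
    return sorted(f.name for f in fields if regulation in f.regulations)


# Hypothetical entries for a financial services dataset.
DICTIONARY = [
    GovernedField("customer_email", "crm.customers", ("GDPR",)),
    GovernedField("account_balance", "core_banking.accounts", ("GDPR",)),
    GovernedField("branch_code", "reference.branches"),
]

print(fields_in_scope(DICTIONARY, "GDPR"))  # ['account_balance', 'customer_email']
```

An auditor asking which fields fall under a regulation, and where they originate, can then be answered from the dictionary rather than by inspecting each system.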

Trace the Evolution of Data Dictionaries: Historical Context and Development

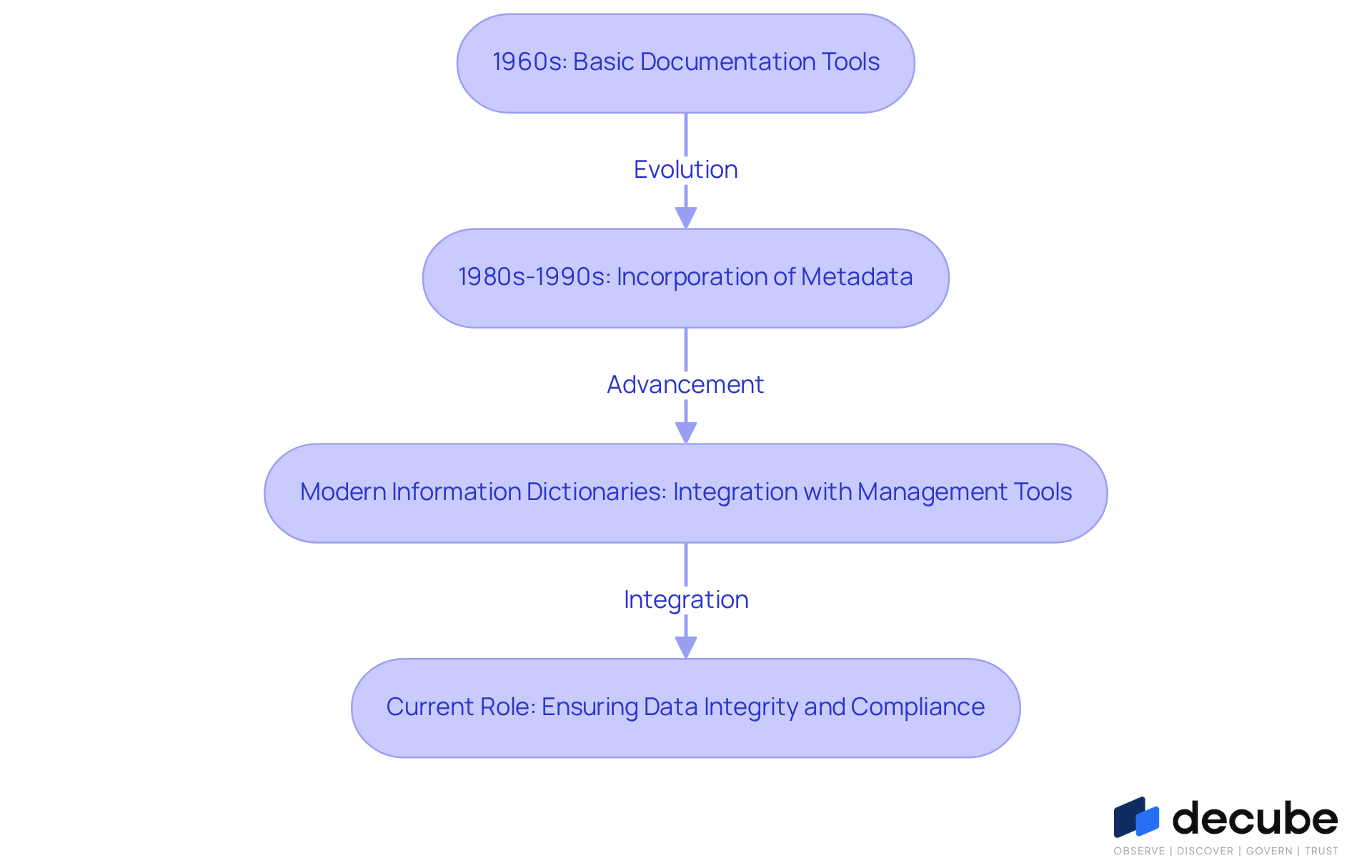

Since the 1960s, the data dictionary has evolved from a simple list into a complex system that enhances data management. Initially, data dictionaries served as basic documentation tools, but as databases grew more intricate, the need for organized data management became increasingly evident. By the 1980s and 1990s, data dictionaries began to incorporate metadata, which significantly improved oversight and management methods.

Today, modern data dictionaries integrate with data management tools, providing real-time updates and automated features that improve usability. For instance, Decube's automated crawling feature ensures that once your data sources are connected, metadata is refreshed automatically, eliminating the need for manual updates. This capability enhances data management and allows secure access control through approval processes. Furthermore, contemporary data catalogs can automatically track changes in schemas and lineage, making them essential in dynamic environments where data is constantly evolving.

This evolution highlights the essential role of robust information governance in ensuring [data integrity and compliance](https://murdio.com/insights/data-governance-trends) in an era defined by rapid information change.

Identify Key Elements of an Effective Data Dictionary

Without a well-structured data dictionary, organizations may struggle with data mismanagement and inefficiencies. A useful data dictionary includes several essential components:

- Data Element Names: Clear and unique identifiers for each data element, removing ambiguity.

- Definitions: Brief explanations that clarify what each element signifies and its function within the dataset.

- Data Types: Specifications of the format (e.g., integer, string, date) to guide users in handling and processing.

- Allowed Values: A list of acceptable values for each data element, which is crucial for preserving data integrity.

- Relationships: Documentation of how various data elements interconnect, essential for understanding context.

- Source Information: Details about the origin of the data, aiding in tracking lineage and ensuring compliance.

- Automation and Human Curation: Balancing automated metadata extraction with human input for business context is essential for maintaining accuracy and reliability in data catalogs. For example, Decube's ML-powered assessments can automatically identify data quality thresholds, enhancing the reliability of the data dictionary.

- Integration with CI/CD Processes: Ensuring that schema changes trigger the necessary updates in the data dictionary is vital for keeping the documentation current.

- Living Dictionaries: These continuously monitor connected systems for schema changes, refreshing metadata regularly to maintain data quality. Decube's intelligent notifications aid this process by informing users of any notable changes, supporting continuous data management.
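Two of the elements above, allowed values and schema-change detection, lend themselves to a short sketch. The code below is an assumption-laden illustration rather than how Decube or any other tool works: the function names, column names, and type labels are hypothetical, and the "actual" schema is hard-coded where a real check would read it from the database.

```python
def find_schema_drift(
    documented: dict[str, str], actual: dict[str, str]
) -> dict[str, list[str]]:
    """Compare documented column types with the types found in the live schema."""
    shared = documented.keys() & actual.keys()
    return {
        # Columns present in the schema but absent from the dictionary.
        "undocumented": sorted(actual.keys() - documented.keys()),
        # Columns still documented but no longer in the schema.
        "stale": sorted(documented.keys() - actual.keys()),
        # Columns whose type differs between dictionary and schema.
        "type_changed": sorted(c for c in shared if documented[c] != actual[c]),
    }


def invalid_values(allowed: set[str], values: list[str]) -> list[str]:
    """Return the values outside the documented allowed set, in input order."""
    return [v for v in values if v not in allowed]


# Hypothetical dictionary entries and a hypothetical live schema.
DOCUMENTED = {"customer_id": "integer", "status": "string", "signup_date": "date"}
ACTUAL = {"customer_id": "integer", "status": "integer", "email": "string"}

drift = find_schema_drift(DOCUMENTED, ACTUAL)
print(drift)
print(invalid_values({"active", "inactive"}, ["active", "pending", "inactive"]))
```

In a CI/CD pipeline, a step could fail the build whenever any list in `drift` is non-empty, which forces the dictionary to be updated in the same change that alters the schema.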

Ultimately, the purpose of a data dictionary extends beyond being a mere reference; it is a cornerstone for effective data governance and informed decision-making.

Conclusion

A data dictionary is not merely a tool; it is a cornerstone of effective data management and collaboration across teams. It serves as a centralized repository that enhances information management, providing clarity on data definitions, structures, and relationships. This ensures that all stakeholders maintain a consistent understanding of the information being utilized. By establishing clear standards and definitions, a data dictionary minimizes the risk of misinterpretation, which is crucial for maintaining data integrity and supporting compliance with regulatory standards.

This article has highlighted the critical role of a data dictionary from several angles. Its evolution from simple documentation tools to complex systems that integrate automation and real-time updates shows that a well-structured data dictionary is essential for effective data governance. Key components such as clear identifiers, definitions, allowed values, and relationships are vital in fostering better communication and reducing errors across multiple teams. Furthermore, automated metadata management and continuous updates keep the documented definitions relevant and accurate.

In today's dynamic data landscape, a robust data dictionary is essential for success. Organizations that prioritize the implementation of effective information management practices, including the use of a comprehensive data dictionary, will enhance operational efficiency and ensure compliance and data quality. Embracing the principles outlined in this article can empower data engineers and organizations alike to leverage their data assets more effectively, driving informed decision-making and fostering a culture of data-driven excellence.

Frequently Asked Questions

What is a data dictionary?

A data dictionary is a centralized information repository that records the structure, content, and connections of information components within a dataset or database, serving as a comprehensive guide for effective data management and collaboration among teams.

Why is a data dictionary important for organizations?

A data dictionary helps all stakeholders understand the information being used, which is crucial when multiple teams work with the same data. It standardizes definitions, supports compliance, and minimizes misinterpretation among teams.

How does a data dictionary improve information quality?

A data dictionary provides clear definitions and standards for data elements, which helps reduce errors and inconsistencies. Organizations that maintain effective data dictionaries can improve data quality, as demonstrated by case studies where companies achieved significant increases in data infrastructure while maintaining high availability through strong data management practices.

What challenges do organizations face without a data dictionary?

A lack of clear definitions can lead to inaccuracies and bias, which are significant barriers to effective technology implementation, as noted by 49% of executives in a 2025 IBM Research Report.

How does Decube enhance data dictionary management?

Decube offers automated crawling capabilities that ensure information catalogs are continuously refreshed without manual intervention, enhancing information observability and governance.

What features does Decube provide for information management?

Decube provides automated metadata management for real-time updates and secure access control features to manage who can view or edit information, supporting compliance and minimizing the risk of misinterpretation.

What is the future role of information catalogs?

As we move into 2026, information catalogs will play a pivotal role in ensuring data integrity and supporting advanced analytical initiatives, with frequent assessments and revisions necessary to maintain their relevance.

Why are frequent assessments and revisions important for information glossaries?

Frequent assessments and revisions are crucial to maintain the relevance of information glossaries and ensure alignment with changing business requirements, strengthening their role in effective information governance.

List of Sources

- Define the Data Dictionary: Core Concepts and Components

- Data Dictionary Best Practices (2026 Guide) (https://ovaledge.com/blog/data-dictionary-best-practices)

- Data Dictionary: Essential Tool for Accurate Data Management (https://acceldata.io/blog/why-a-data-dictionary-is-critical-for-data-accuracy-and-control)

- Data Dictionary 2026: Components, Examples, Implementation (https://atlan.com/what-is-a-data-dictionary)

- The 2026 Guide to Data Management | IBM (https://ibm.com/think/topics/data-management-guide)

- Explain the Importance of a Data Dictionary in Data Management

- Data Dictionary 2026: Components, Examples, Implementation (https://atlan.com/what-is-a-data-dictionary)

- Why Every Team Needs a Data Dictionary (https://acceldata.io/blog/data-dictionary)

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- Trace the Evolution of Data Dictionaries: Historical Context and Development

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Top data governance trends: The future of data in 2026 - Murdio (https://murdio.com/insights/data-governance-trends)

- Data Quotes | The Data Governance Institute (https://datagovernance.com/quotes/data-quotes)

- Do Salesforce Data Dictionaries Still Matter in 2026? | Salesforce Ben (https://salesforceben.com/do-salesforce-data-dictionaries-still-matter-in-2026)

- Identify Key Elements of an Effective Data Dictionary

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Compelling Quotes About Data | 6sense (https://6sense.com/blog/compelling-quotes-about-data)

- Data Dictionary 2026: Components, Examples, Implementation (https://atlan.com/what-is-a-data-dictionary)
