Master KPI Data Quality: Essential Metrics and Strategies for Data Engineers

Master KPI data quality with essential metrics and strategies for effective data management.

Introduction

In an era where data drives decision-making, the quality of that data is paramount. For data engineers, mastering Key Performance Indicators (KPIs) related to data quality is essential for ensuring reliable insights and operational efficiency. Despite the significance of these metrics, many organizations face challenges in effective monitoring and management.

How can data professionals navigate the complexities of data quality to establish robust systems that enhance accuracy and drive improved business outcomes?

Define Key Performance Indicators (KPIs) for Data Quality

To manage data quality effectively, it is crucial to define specific Key Performance Indicators (KPIs) that reflect the quality and reliability of your data. Here are some critical KPIs to consider:

- Precision: This KPI, more commonly called accuracy, evaluates how closely recorded values correspond to true values. For instance, in a dataset containing customer ages, it measures the share of entries that match the customers' actual ages. High precision reduces the risk of errors in analysis and execution, leading to better business decisions.

- Completeness: Completeness assesses whether all necessary information fields are filled. In a customer database, this KPI checks if essential fields like name, address, and email are populated. Complete information is necessary for comprehensive analyses and improved decision-making.

- Consistency: This KPI ensures that information across different systems or datasets is uniform. For example, if a customer’s address is recorded differently in two systems, this inconsistency needs to be flagged. Inconsistent information can create confusion and erode trust in insights.

- Timeliness: Timeliness evaluates if information is current and accessible when required. For example, if sales information is refreshed daily, this KPI assesses the delay between collection and availability. Timely information is critical in sectors like logistics and finance, where decisions must be based on the most recent details.

- Validity: Validity verifies if the information adheres to established formats or standards. For example, a valid email address must contain an '@' symbol and a domain. Ensuring validity helps maintain information integrity throughout its lifecycle.

- Uniqueness: This KPI measures the absence of duplicate records in a dataset. In a customer database, each customer should have a unique identifier. High uniqueness is essential for accurate reporting and effective customer segmentation.

By establishing these KPIs, data engineers can build a robust framework for overseeing data quality, ensuring that the data used for analysis and decision-making is reliable and credible. Organizations that apply these measures effectively can enhance their operational efficiency and sustain a competitive advantage.
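As a rough illustration, several of the KPIs above can be expressed as simple ratios. The sketch below is a minimal example using only the Python standard library; the sample records, field names, and the deliberately loose email pattern are assumptions made for illustration, not a recommended production rule set.

```python
import re

# Hypothetical sample records; the field names are illustrative only.
customers = [
    {"id": 1, "name": "Ana", "email": "ana@example.com"},
    {"id": 2, "name": "Ben", "email": "ben.example.com"},  # invalid email
    {"id": 3, "name": "", "email": "cy@example.com"},      # missing name
    {"id": 3, "name": "Cy", "email": "cy@example.com"},    # duplicate id
]

REQUIRED_FIELDS = ("id", "name", "email")
# Deliberately loose check: something@domain.tld (an assumption, not a standard).
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(records, fields=REQUIRED_FIELDS):
    """Share of records in which every required field is non-empty."""
    if not records:
        return 1.0
    complete = sum(
        all(r.get(f) not in (None, "") for f in fields) for r in records
    )
    return complete / len(records)


def validity(records, field="email", pattern=EMAIL_PATTERN):
    """Share of records whose field matches the expected format."""
    if not records:
        return 1.0
    valid = sum(bool(pattern.match(str(r.get(field, "")))) for r in records)
    return valid / len(records)


def uniqueness(records, key="id"):
    """Share of distinct key values among all records."""
    if not records:
        return 1.0
    return len({r[key] for r in records}) / len(records)


def consistency(a_by_id, b_by_id, field):
    """Share of shared ids whose field value agrees in both systems."""
    shared = a_by_id.keys() & b_by_id.keys()
    if not shared:
        return 1.0
    agree = sum(a_by_id[k].get(field) == b_by_id[k].get(field) for k in shared)
    return agree / len(shared)


# Two hypothetical systems holding the same customers.
crm = {1: {"address": "1 Main St"}, 2: {"address": "5 Oak Ave"}}
billing = {1: {"address": "1 Main St"}, 2: {"address": "5 Oak Avenue"}}

print(completeness(customers), validity(customers), uniqueness(customers))
print(consistency(crm, billing, "address"))
```

Each function returns a value between 0 and 1, so the results can be tracked over time or compared against a target. Precision and timeliness are left out here because they need an external reference (a trusted source of true values, or load timestamps) that this toy dataset does not have.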

Identify Essential Data Quality Metrics to Monitor

Once KPIs are established, the next step is to identify the metrics that will be monitored to track them. Here are several key metrics to consider:

- Error Rate: This metric tracks the percentage of inaccurate entries within a dataset. A high error rate signals the need for improved data entry processes, as even minor inaccuracies can lead to significant operational challenges.

- Percentage of Correct Values: This measure assesses the ratio of accurate entries to the total number of submissions. Maintaining a high percentage is critical; for example, customer master records should achieve at least 98% accuracy to support compliance reporting.

- Count of Entries Outside Acceptable Ranges: This metric identifies how many entries fall outside predefined acceptable ranges, highlighting potential quality issues that could disrupt business operations.

- Information Downtime: This measure evaluates the total duration during which information is inaccessible or delayed, impacting business operations. Monitoring this metric is vital for ensuring information availability and minimizing disruptions.

- Table Health: This metric assesses the overall condition of tables, considering factors such as the number of missing values and duplicates. Regular audits can help maintain table health and ensure data integrity.

- Information Freshness: This measure evaluates the recency of the information, which is crucial for time-sensitive decision-making. Metrics may include the age of the information and the frequency of updates, with a target of refreshing records within 24 hours to ensure relevance.

By focusing on these metrics, data engineers can gain valuable insights into data integrity and implement necessary improvements, ultimately enhancing operational efficiency and decision-making capabilities.
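To make a few of these metrics concrete, here is a minimal sketch of the error rate, the count of out-of-range entries, and a freshness check. The function names, the 0 to 120 age range, and the sample numbers are assumptions chosen for illustration; the 24-hour window mirrors the refresh target mentioned above.

```python
from datetime import datetime, timedelta, timezone


def error_rate(total_entries, inaccurate_entries):
    """Percentage of entries flagged as inaccurate."""
    if total_entries == 0:
        return 0.0
    return 100.0 * inaccurate_entries / total_entries


def count_out_of_range(values, low, high):
    """Number of values outside the inclusive range [low, high]."""
    return sum(1 for v in values if not (low <= v <= high))


def is_fresh(last_updated, now, max_age=timedelta(hours=24)):
    """True if the record was updated within max_age of now."""
    return now - last_updated <= max_age


# Hypothetical figures: 20 inaccurate entries out of 1,000.
rate = error_rate(1000, 20)
percent_correct = 100.0 - rate

# Hypothetical customer ages checked against an assumed 0-120 range.
ages = [34, -2, 51, 130, 27]
bad_ages = count_out_of_range(ages, 0, 120)

now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
recent = datetime(2025, 1, 1, 18, 0, tzinfo=timezone.utc)  # 18 hours old
stale = datetime(2025, 1, 1, 6, 0, tzinfo=timezone.utc)    # 30 hours old

print(rate, percent_correct, bad_ages)
print(is_fresh(recent, now), is_fresh(stale, now))
```

In this example the error rate and the percentage of correct values are two views of the same measurement, so a team would usually report whichever one its stakeholders find easier to read.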

Implement Effective Monitoring Strategies for Data Quality KPIs

To effectively monitor data quality KPIs, organizations should adopt the following strategies:

- Automated Monitoring Tools: Organizations should use data monitoring tools that automatically track and report on specified KPIs. Platforms like Decube provide real-time insights into data quality issues, enabling swift responses to anomalies. With Decube's automated crawling feature, there is no need to update metadata manually; once sources are connected, the system refreshes automatically, keeping metadata current and accurate. Automated validation at ingestion tends to offer the quickest return on investment because it stops new errors from entering the system in the first place.

- Regular Audits: Routine audits of data against established standards are crucial for confirming compliance and pinpointing areas for improvement. Organizations increasingly recognize the need for frequent audits to uphold data integrity, as poor data quality costs organizations an average of $12.9 million each year.

- Alerts and Notifications: Establishing alert systems is vital to inform engineers when metrics fall below acceptable levels. This proactive approach enables prompt corrective action, reducing the impact of data quality issues.

- Dashboards and Reporting: User-friendly dashboards that visualize data quality indicators allow stakeholders to quickly understand the current state of the data. Effective visualization supports decision-making and helps prioritize data improvement initiatives.

- Data Quality Reviews: Regular reviews of data quality metrics should be scheduled with the relevant teams to discuss findings, obstacles, and tactics for improvement. Involving cross-functional teams promotes a collaborative approach to data management.

- Integration with Information Governance Policies: Monitoring strategies must align with broader information governance policies, reinforcing the significance of information integrity across the organization. This integration fosters a culture of accountability and continuous improvement.

By implementing these strategies, organizations can maintain high information standards, ensuring their information remains reliable and trustworthy, which is vital for informed decision-making and operational efficiency.
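The alerting strategy above can be sketched as a simple threshold check. This is a minimal illustration under stated assumptions: the `Threshold` class, the metric names, and the minimum values are hypothetical, and a real setup would send the resulting messages to a notification channel rather than print them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Minimum acceptable value for a metric (hypothetical structure)."""
    metric: str
    minimum: float


def check_thresholds(measurements, thresholds):
    """Return one alert message per metric that is missing or too low."""
    alerts = []
    for t in thresholds:
        value = measurements.get(t.metric)
        if value is None:
            alerts.append(f"{t.metric}: no measurement reported")
        elif value < t.minimum:
            alerts.append(
                f"{t.metric}: {value:.1%} is below the {t.minimum:.1%} minimum"
            )
    return alerts


# Hypothetical latest measurements and targets, expressed as ratios.
measurements = {"completeness": 0.99, "validity": 0.91}
thresholds = [
    Threshold("completeness", 0.95),
    Threshold("validity", 0.98),
    Threshold("uniqueness", 0.99),
]

alerts = check_thresholds(measurements, thresholds)
for message in alerts:
    print(message)
```

Treating a missing measurement as an alert is a design choice in this sketch: a metric that silently stops reporting is often a sign that the monitoring pipeline itself has failed.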

Overcome Challenges in Monitoring Data Quality KPIs

Monitoring KPI data quality presents several challenges that organizations must navigate to ensure dependable information. Key issues include:

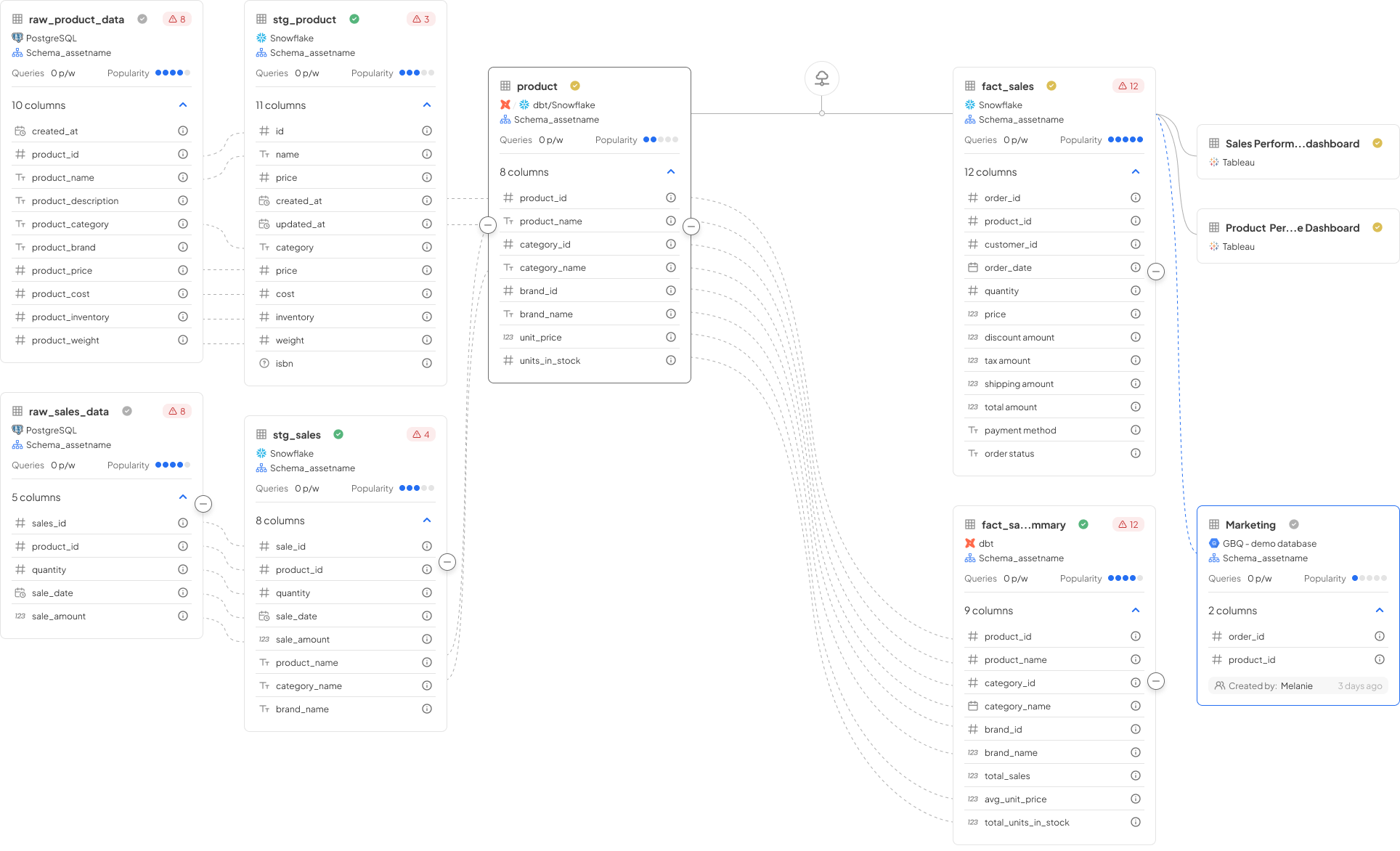

- Information Silos: Diverse systems frequently result in segregated information, complicating standards assessment throughout the organization. To address this, organizations should implement a unified information management platform that integrates information from various sources, such as Decube's Automated Column-Level lineage, facilitating a holistic view of information integrity.

- Lack of Standardization: Inconsistent formats can hinder effective monitoring. Establishing clear data standards and guidelines is essential for ensuring uniformity across datasets, which in turn makes data quality assessments more reliable. For instance, organizations like Morrisons have successfully unified their data formats to improve operational efficiency and data visibility.

- Resource Constraints: Limited resources can hinder monitoring efforts. Organizations should prioritize key metrics and consider using automated tools, like Decube's monitoring capabilities, to streamline processes and reduce manual effort.

- Resistance to Change: Teams may be reluctant to embrace new monitoring practices. Fostering a data-focused culture through education, and demonstrating the value of data quality assessment with insights from a platform such as Decube, can help overcome this resistance and encourage wider adoption of new approaches.

- Complexity of Information: The increasing volume and intricacy of information can complicate monitoring efforts. Employing advanced analytics and machine learning methods, as provided by Decube, can automate the identification of anomalies and trends, simplifying the maintenance of information integrity.

- Insufficient Reporting: Poor reporting can obscure data quality problems. Creating thorough reporting structures that deliver actionable insights, supported by Decube's reporting tools, is essential for enabling informed decision-making and keeping stakeholders aware of the state of the data.

By proactively addressing these challenges with the help of Decube's advanced observability and governance features, organizations can significantly enhance their KPI data quality monitoring efforts, ensuring that their data remains reliable and actionable for informed decision-making.
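For a sense of what automated anomaly identification can look like at its simplest, the sketch below flags an unusual daily row count using a z-score against recent history. This is a basic statistical baseline shown for illustration only, not a description of how Decube or any other platform detects anomalies; the function name, the threshold of 3.0, and the sample counts are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag latest if it lies more than z_threshold standard deviations
    from the mean of history (a simple z-score test)."""
    if len(history) < 2:
        raise ValueError("need at least two historical values")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # No variation in history: any different value is unusual.
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily row counts for a table over the past five days.
daily_row_counts = [1000, 1020, 990, 1010, 980]

print(is_anomalous(daily_row_counts, 1005))  # close to the recent average
print(is_anomalous(daily_row_counts, 400))   # sharp drop in volume
```

A z-score assumes roughly stable, symmetric behavior, so metrics with strong weekly or seasonal patterns would need a more careful baseline than this.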

Conclusion

In conclusion, establishing a robust framework for managing KPI data quality is essential for data engineers who seek to enhance the reliability and credibility of their information. By clearly defining key performance indicators - such as precision, completeness, consistency, timeliness, validity, and uniqueness - organizations can lay a solid foundation for monitoring data quality. These metrics not only facilitate improved decision-making but also contribute to enhanced operational efficiency and a competitive edge in the market.

Throughout this article, we have shared critical insights regarding essential data quality metrics, including error rates, the percentage of correct values, and information freshness. Implementing effective monitoring strategies, such as automated tools, regular audits, and integrated governance policies, can significantly enhance the oversight of data quality KPIs. Moreover, addressing challenges like information silos and lack of standardization is vital for maintaining high data integrity.

Ultimately, prioritizing data quality transcends technical necessity; it is a strategic imperative. Organizations must cultivate a culture of accountability and continuous improvement in their data management practices. By embracing these strategies and overcoming obstacles, businesses can ensure their data remains a reliable asset, empowering informed decision-making and driving long-term success.

Frequently Asked Questions

What are Key Performance Indicators (KPIs) for data quality?

KPIs for data quality are specific metrics that reflect the quality and reliability of information, helping organizations manage information effectively.

What does the Precision KPI measure?

The Precision KPI evaluates how closely values in a dataset correspond to true figures, reducing the risk of errors in analysis and execution.

How is Completeness defined in the context of data quality?

Completeness assesses whether all necessary information fields in a dataset are filled, which is essential for comprehensive analyses and improved decision-making.

What is the purpose of the Consistency KPI?

The Consistency KPI ensures that information across different systems or datasets is uniform, flagging any discrepancies that could lead to confusion or erode trust in insights.

What does Timeliness evaluate in data quality?

Timeliness evaluates whether information is current and accessible when required, which is critical for making decisions based on the most recent details.

How is Validity defined as a KPI?

Validity verifies if the information adheres to established formats or standards, helping to maintain information integrity throughout its lifecycle.

What does the Uniqueness KPI measure?

The Uniqueness KPI measures the absence of duplicate records in a dataset, ensuring each customer or entry has a unique identifier for accurate reporting and effective segmentation.

Why is it important to establish these KPIs?

Establishing these KPIs allows data engineers to create a robust structure for overseeing data quality, ensuring that information used for analysis and decision-making is reliable and credible, ultimately enhancing operational efficiency and competitive advantage.

