
Understanding Data Ingestion: Meaning, Importance, and Challenges for Engineers

Explore the meaning, significance, and challenges of data ingestion in modern information management.

Introduction

Understanding data ingestion is essential for engineers working in information management. This foundational process dictates the quality and accessibility of data and, in turn, shapes an organization's ability to make sound decisions. As data volume and complexity continue to grow, engineers must keep ingestion reliable and efficient. This article examines what data ingestion means, why it matters, and the hurdles it presents, offering insights that can help organizations refine their data strategies and improve overall performance.

Define Data Ingestion: Understanding Its Core Meaning

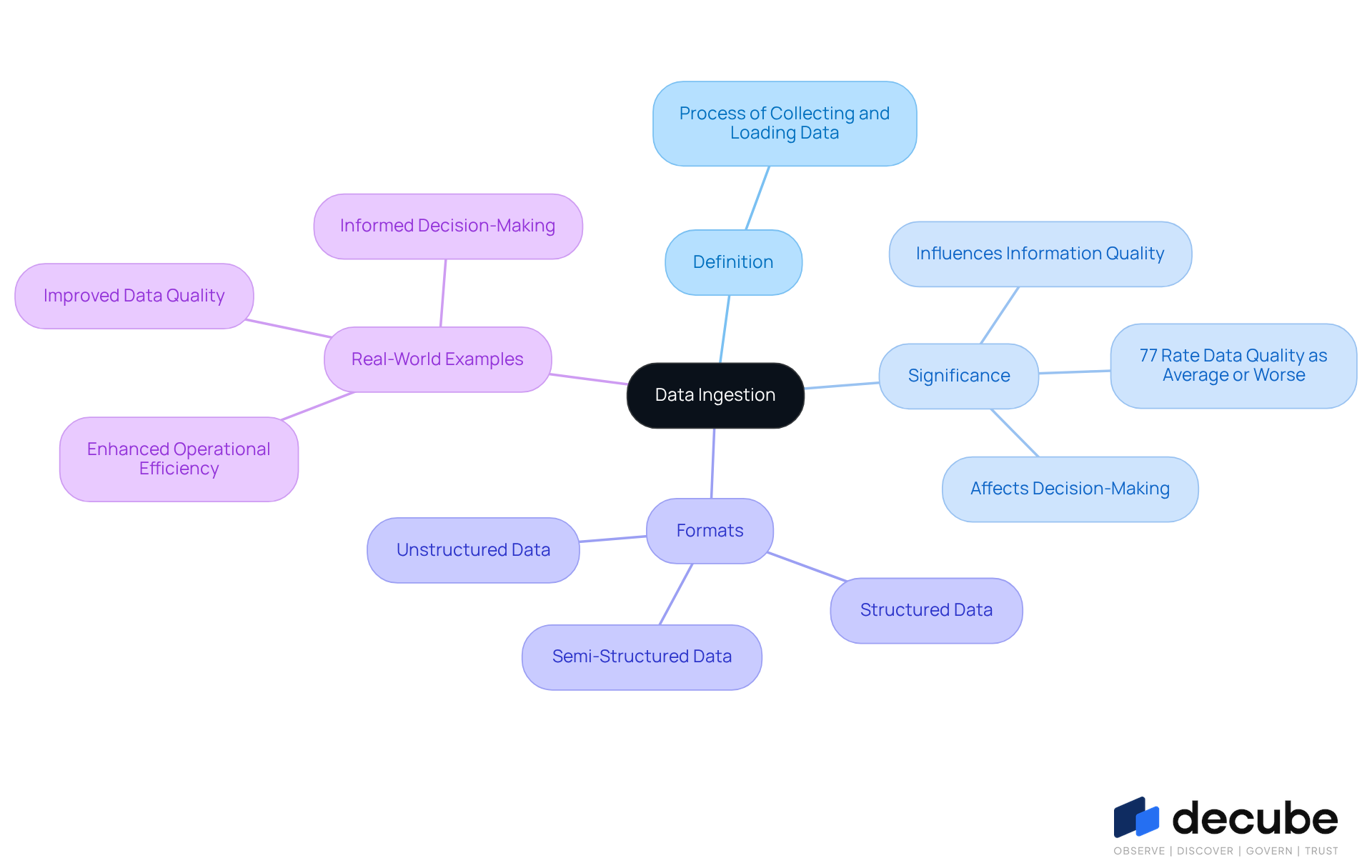

Data ingestion refers to the process of collecting, importing, and loading data from various sources into a centralized system for storage and analysis. This foundational step in data engineering is vital for building pipelines that move information seamlessly from operational systems to analytical environments. Data can be ingested in multiple formats, including structured data from databases, semi-structured data from APIs, and unstructured data from documents or social media.
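To make the collect, import, and load steps concrete, here is a minimal Python sketch. It assumes three small inline sources standing in for a database export (CSV), an API response (JSON), and a free-text document, and it uses an in-memory SQLite database as a hypothetical centralized store; a production pipeline would read from real systems and load into a warehouse or lake.

```python
import csv
import io
import json
import sqlite3

# Hypothetical sample sources, inlined so the sketch is self-contained.
CSV_SOURCE = "order_id,amount\n1,19.99\n2,5.00\n"       # structured (stand-in for a DB export)
JSON_SOURCE = '[{"order_id": 3, "amount": 42.5}]'         # semi-structured (stand-in for an API)
TEXT_SOURCE = "Great service, fast delivery!"              # unstructured (stand-in for a review)


def collect():
    """Collect: gather raw payloads from each source, keyed by origin."""
    return {"csv": CSV_SOURCE, "api": JSON_SOURCE, "doc": TEXT_SOURCE}


def parse(raw):
    """Import: turn each raw payload into rows for the central store."""
    orders = []
    for row in csv.DictReader(io.StringIO(raw["csv"])):
        orders.append((int(row["order_id"]), float(row["amount"]), "csv"))
    for obj in json.loads(raw["api"]):
        orders.append((int(obj["order_id"]), float(obj["amount"]), "api"))
    documents = [(raw["doc"], "doc")]  # unstructured text is stored as-is
    return orders, documents


def load(conn, orders, documents):
    """Load: write parsed rows into the centralized store (SQLite here)."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, amount REAL, source TEXT)")
    conn.execute("CREATE TABLE IF NOT EXISTS documents (body TEXT, source TEXT)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", orders)
    conn.executemany("INSERT INTO documents VALUES (?, ?)", documents)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, *parse(collect()))
print(conn.execute("SELECT COUNT(*), SUM(amount) FROM orders").fetchone())
```

Keeping collect, parse, and load as separate functions mirrors the three stages in the definition and lets each stage be replaced independently.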

Ingestion matters because it directly influences information quality and accessibility, which in turn affect decision-making across the organization. Statistics reveal that 77 percent of organizations rate their information quality as average or below, underscoring the need for effective acquisition practices. Furthermore, as cloud adoption grows (projected to exceed 94 percent of organizations by 2026), efficient ingestion becomes essential for navigating the complexities of multi-cloud environments.

Real-world examples illustrate this: companies that implement centralized information collection systems report enhanced operational efficiency and improved data quality, enabling them to make informed decisions swiftly. In the evolving landscape of information management, robust ingestion practices are crucial for ensuring that high-quality information is readily available for analysis and strategic initiatives.

Explore the Importance of Data Ingestion in Modern Data Management

Data ingestion is a cornerstone of modern data management, enabling organizations to make effective use of their data assets. With Decube, businesses can automate the gathering and integration of information from various sources, significantly reducing time and resource costs. Engineers can then concentrate on strategic initiatives while information remains readily available for analysis, which is crucial for operational agility.

Decube also supports ingestion through features such as a clear visualization of how data flows across components, and automated metadata refresh that keeps metadata current without manual intervention. As the amount of information generated keeps increasing, the ability to ingest it quickly and accurately offers a competitive advantage, enabling firms to adapt swiftly to market changes and client needs. Enterprises that strengthen their ingestion practices with Decube, for example, can bolster their analytics capabilities, leading to deeper insights and improved business performance.
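Tracking how data flows across components is commonly called data lineage. The sketch below is not Decube's API; it is a hypothetical, minimal illustration that records an upstream edge each time an ingestion step produces a dataset, then walks the graph to answer "what feeds this report?". All dataset names are made up.

```python
from collections import defaultdict

# Hypothetical lineage store (NOT Decube's API): maps each downstream
# dataset to the set of datasets it was built from, recorded at ingestion time.
upstream = defaultdict(set)


def record_step(inputs, output):
    """Record that `output` was produced from each dataset in `inputs`."""
    for name in inputs:
        upstream[output].add(name)


def all_upstream(dataset):
    """Return every dataset that feeds `dataset`, directly or transitively."""
    seen, stack = set(), [dataset]
    while stack:
        for parent in upstream[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


# Illustrative pipeline: two raw sources feed a staging table, which feeds a report.
record_step(["crm.contacts", "billing.invoices"], "staging.customers")
record_step(["staging.customers"], "reports.revenue_by_customer")
print(sorted(all_upstream("reports.revenue_by_customer")))
# → ['billing.invoices', 'crm.contacts', 'staging.customers']
```

A visual lineage view is essentially a rendering of this graph; the same traversal run in the other direction answers "what breaks downstream if this source changes?".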

As organizations prioritize data quality and governance, Decube's focus on cleansing, validating, and monitoring critical datasets becomes increasingly important, ensuring that the information driving decisions is both precise and relevant. In decentralized information management, establishing clear agreements on information usage and responsibilities fosters collaboration among stakeholders, improves information quality, and strengthens trust and accountability. According to industry forecasts, the global data lakehouse market is projected to reach $74.0 billion by 2033, underscoring the growing importance of effective data management strategies. As Ranko Cosic noted, 'Information management in 2026 is about clarity,' a reminder that ingestion processes must be clear and efficient to support effective governance.
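Clear agreements on information usage and responsibilities are often written down as data contracts. Below is a hypothetical minimal sketch, assuming a contract consists only of an owning team, expected column types, and required columns; real contracts usually specify more than this.

```python
from dataclasses import dataclass, field


@dataclass
class DataContract:
    """A hypothetical, minimal contract agreed between a producer and its consumers."""
    dataset: str
    owner: str                                 # team responsible for fixing violations
    schema: dict                               # column name -> expected Python type
    not_null: set = field(default_factory=set)  # columns that must always be present


def check(contract, record):
    """Return a list of human-readable violations for one incoming record."""
    problems = []
    for column, expected in contract.schema.items():
        value = record.get(column)
        if value is None:
            if column in contract.not_null:
                problems.append(f"{column} is required")
        elif not isinstance(value, expected):
            problems.append(f"{column} should be {expected.__name__}")
    return problems


orders = DataContract(
    dataset="orders",
    owner="checkout-team",
    schema={"order_id": int, "amount": float, "coupon": str},
    not_null={"order_id", "amount"},
)
print(check(orders, {"order_id": 7, "amount": "12.50"}))
# → ['amount should be float']
```

Because the contract names an owner, a violation can be routed to the team that agreed to the schema rather than being fixed ad hoc downstream.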

Identify Types of Data Ingestion: Methods and Approaches

Data ingestion can be classified into three main types: batch, real-time, and hybrid.

- Batch Ingestion: This method collects and processes information at scheduled intervals, making it ideal when real-time input is not critical. For instance, a retail company may ingest sales data daily to analyze trends over time. Batch processing remains essential for cost-sensitive workloads, allowing organizations to manage resources effectively.

- Real-Time Ingestion: This approach moves data into the system continuously as it is generated, which is crucial for applications that must act on events immediately. For instance, financial organizations such as PayPal use real-time processing to monitor transactions and flag suspicious activity promptly, improving fraud detection. With more than 82% of organizations reportedly incorporating real-time capabilities into their pipeline architectures, this approach is becoming a standard expectation.

- Hybrid Ingestion: Combining batch and real-time methods, hybrid ingestion gives organizations flexibility: large volumes of information are processed periodically, while real-time events are captured as they occur. This is especially advantageous for IoT applications, where sensors produce data continuously. Organizations across industries are increasingly adopting hybrid architectures to balance scalability and responsiveness.
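The three approaches can be contrasted in a few lines of Python. This is a sketch under simplifying assumptions: the events live in an in-memory list rather than a queue or log, the "scheduled" batch job is just a function call, and the alert threshold is an illustrative value, not a recommendation.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sales events: (day, store, amount).
EVENTS = [
    (date(2026, 1, 5), "north", 120.0),
    (date(2026, 1, 5), "south", 9800.0),
    (date(2026, 1, 6), "north", 75.0),
]
ALERT_THRESHOLD = 5000.0  # illustrative "flag this immediately" rule


def batch_ingest(events):
    """Batch: process everything collected so far in one scheduled run (daily totals)."""
    totals = defaultdict(float)
    for day, _store, amount in events:
        totals[day] += amount
    return dict(totals)


def stream_ingest(events, on_alert):
    """Real-time: handle each event as it arrives and react immediately."""
    for day, store, amount in events:
        if amount > ALERT_THRESHOLD:
            on_alert(day, store, amount)


def hybrid_ingest(events):
    """Hybrid: react to each event in real time, then run the periodic batch."""
    alerts = []
    stream_ingest(events, lambda *event: alerts.append(event))
    return alerts, batch_ingest(events)


alerts, daily = hybrid_ingest(EVENTS)
print(alerts)  # [(datetime.date(2026, 1, 5), 'south', 9800.0)]
print(daily)   # {datetime.date(2026, 1, 5): 9920.0, datetime.date(2026, 1, 6): 75.0}
```

In a real hybrid system the two paths often run on different infrastructure (for example, a stream processor and a scheduled job), but the division of labor is the same: react per event, aggregate per interval.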

In 2026, adoption of hybrid ingestion is expected to grow as organizations recognize that they need both historical and timely insights to improve operational efficiency and decision-making.

Examine Challenges in Data Ingestion: Common Issues and Solutions

Information acquisition is a critical process for any analytics-focused organization, yet it is fraught with challenges that can significantly impact the efficiency of information pipelines. Key issues include:

- Data Quality: The integrity of data during ingestion is essential, because low-quality information leads to flawed analyses and misguided decisions. Organizations must implement robust validation checks to identify and correct errors early in the ingestion process. Notably, 87% of analytics initiatives reportedly fail to reach production due to insufficient information quality, underscoring the need for stringent quality controls.

- Volume and Velocity: The exponential growth of information poses a formidable challenge, and conventional ingestion techniques often struggle with the sheer volume and high velocity of incoming streams. For instance, JPMorgan Chase has developed multi-layered quality strategies to handle billions of transactions efficiently.

- Format and Schema Inconsistency: Ingesting information from diverse sources frequently results in format discrepancies and schema mismatches. Standardizing formats and applying transformations are essential to ensure compatibility across systems and smooth integration.

- Latency: When information must be processed in real time, latency becomes a significant challenge, because processing delays can mean missed opportunities for timely insights. Well-designed pipelines and faster processing are crucial for reducing latency and ensuring that information is available when needed.
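The data quality and schema points above meet at ingestion time: each source is mapped onto one canonical schema, and rows that cannot be parsed or that fail validation are quarantined instead of being silently loaded. The sketch below assumes two hypothetical sources (an API reporting amounts in cents with Unix timestamps, and a legacy CSV with its own column names) and a deliberately small set of validation rules.

```python
from datetime import datetime, timezone

# Canonical schema assumed here: order_id (int), amount (float), created_at (UTC datetime).


def from_shop_api(rec):
    """Hypothetical API source: amounts in cents, Unix-epoch timestamps."""
    return {
        "order_id": int(rec["id"]),
        "amount": rec["total_cents"] / 100,
        "created_at": datetime.fromtimestamp(rec["ts"], tz=timezone.utc),
    }


def from_legacy_csv(rec):
    """Hypothetical CSV source: different column names, ISO date strings."""
    return {
        "order_id": int(rec["OrderNo"]),
        "amount": float(rec["Amount"]),
        "created_at": datetime.strptime(rec["Date"], "%Y-%m-%d").replace(tzinfo=timezone.utc),
    }


def validate(row):
    """Early quality checks; return a reason string if the row must be rejected."""
    if row["order_id"] <= 0:
        return "non-positive order_id"
    if row["amount"] < 0:
        return "negative amount"
    return None


def ingest(records, mapper):
    """Normalize each record, then split into accepted rows and quarantined ones."""
    accepted, quarantined = [], []
    for rec in records:
        try:
            row = mapper(rec)
        except (KeyError, ValueError, TypeError) as exc:
            quarantined.append((rec, f"unparseable: {exc!r}"))
            continue
        reason = validate(row)
        if reason:
            quarantined.append((rec, reason))
        else:
            accepted.append(row)
    return accepted, quarantined


good, bad = ingest([{"id": "1", "total_cents": 1999, "ts": 0}], from_shop_api)
legacy_good, legacy_bad = ingest(
    [{"OrderNo": "2", "Amount": "-5", "Date": "2026-01-05"}, {"OrderNo": "x"}],
    from_legacy_csv,
)
```

Quarantining keeps bad records inspectable, so errors are caught early without blocking the rest of the load.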

To tackle these challenges, organizations should invest in advanced tools that provide automated validation, continuous monitoring, and proactive error management. By addressing these issues directly, data engineers can substantially improve the reliability and effectiveness of their ingestion processes and, ultimately, business outcomes.
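Continuous monitoring can start with simple rules. The sketch below is a hypothetical freshness and volume check, assuming the pipeline records when each load finished and how many rows it wrote; the two-hour staleness limit and the 50% volume tolerance are illustrative defaults, not recommended values.

```python
from datetime import datetime, timedelta, timezone


def check_batch(row_count, last_loaded_at, history, now,
                max_staleness=timedelta(hours=2), tolerance=0.5):
    """Hypothetical monitor that flags stale data and unusual row volumes.

    history: row counts from recent successful loads, used as a baseline.
    tolerance: allowed fractional deviation from the baseline average.
    """
    alerts = []
    # Freshness: has the table been loaded recently enough?
    if now - last_loaded_at > max_staleness:
        alerts.append(f"stale: last load {now - last_loaded_at} ago")
    # Volume: is this load far from the recent average?
    if history:
        baseline = sum(history) / len(history)
        if abs(row_count - baseline) > tolerance * baseline:
            alerts.append(f"volume: {row_count} rows vs baseline {baseline:.0f}")
    return alerts


now = datetime(2026, 1, 6, 12, tzinfo=timezone.utc)
print(check_batch(120, now - timedelta(minutes=30), [1000, 1100, 900], now))
# → ['volume: 120 rows vs baseline 1000']
print(check_batch(1000, now - timedelta(hours=3), [1000], now))
# → ['stale: last load 3:00:00 ago']
```

Running a check like this after every load turns silent failures (a partial extract, a stalled job) into alerts before downstream users notice.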

Conclusion

Understanding the nuances of data ingestion is essential for engineers and organizations seeking to optimize their information management strategies. By efficiently collecting, importing, and loading data from various sources, businesses can significantly improve their decision-making capabilities and operational efficiency. The importance of robust data ingestion practices cannot be overstated; they establish the foundation for high-quality information that drives strategic initiatives.

This article has argued that data ingestion plays a critical role in contemporary data management. It examined three methods of ingestion (batch, real-time, and hybrid), each serving distinct purposes and presenting unique challenges. It also addressed common obstacles in the ingestion process, such as data quality, volume, and latency, and offered ways to mitigate them. Advanced tools and strategies are vital for ensuring data integrity and responsiveness in an increasingly complex information landscape.

Ultimately, the insights shared highlight the pivotal role of data ingestion in shaping effective data governance and analytics. As organizations navigate the evolving demands of information management, prioritizing efficient ingestion practices will not only enhance data quality but also cultivate a culture of informed decision-making. By embracing these principles, engineers and businesses can fully leverage their data assets, driving innovation and gaining a competitive edge in their respective fields.

Frequently Asked Questions

What is data ingestion?

Data ingestion refers to the process of collecting, importing, and loading data from various sources into a centralized system for storage and analysis.

Why is data ingestion important?

Data ingestion is vital for establishing pipelines that facilitate the seamless flow of information from operational systems to analytical environments, directly influencing information quality and accessibility, which affects decision-making processes across organizations.

What types of data can be ingested?

Information can be ingested in multiple formats, including structured data from databases, semi-structured data from APIs, and unstructured data from documents or social media.

How does the quality of data ingestion impact organizations?

The quality of data ingestion influences information quality and accessibility, which subsequently affects decision-making processes. Statistics show that 77 percent of organizations rate their information quality as average or below, highlighting the need for effective acquisition practices.

What is the future trend regarding cloud services and data ingestion?

Organizations are increasingly adopting cloud services, with projections indicating that over 94 percent will do so by 2026. This trend makes efficient information intake essential for managing the complexities of multi-cloud environments.

What benefits do companies experience from centralized information collection systems?

Companies that implement centralized information collection systems report enhanced operational efficiency and improved data quality, enabling them to make informed decisions swiftly.

Why are robust intake practices crucial in information management?

Robust intake practices are crucial for ensuring that high-quality information is readily available for analysis and strategic initiatives in the evolving landscape of information management.

List of Sources

Define Data Ingestion: Understanding Its Core Meaning

- 9 Must-read Inspirational Quotes on Data Analytics From the Experts (https://nisum.com/nisum-knows/must-read-inspirational-quotes-data-analytics-experts)

- data.folio3.com (https://data.folio3.com/blog/data-engineering-stats)

- Understanding data ingestion (https://getdbt.com/discover/understanding-data-ingestion)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

Explore the Importance of Data Ingestion in Modern Data Management

- medium.com (https://medium.com/@meghrajp008/19-inspirational-quotes-about-data-wisdom-for-a-data-driven-world-fcfbe44c496a)

- cio.com (https://cio.com/article/4117094/data-management-trends-whats-in-whats-out.html)

- What’s in, and what’s out: Data management in 2026 has a new attitude (https://cio.com/article/4117488/whats-in-and-whats-out-data-management-in-2026-has-a-new-attitude.html)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

Identify Types of Data Ingestion: Methods and Approaches

- data.folio3.com (https://data.folio3.com/blog/data-engineering-stats)

- Data Ingestion Explained: Types, Tools & Process (2026 Guide) (https://skyvia.com/learn/what-is-data-ingestion)

Examine Challenges in Data Ingestion: Common Issues and Solutions

- prnewswire.com (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- A Continual Quest for Improving Data Quality | U.S. Bureau of Economic Analysis (BEA) (https://bea.gov/news/blog/2026-03-16/continual-quest-improving-data-quality)

- AI Data Quality in 2026: Challenges & Best Practices (https://aimultiple.com/data-quality-ai)

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)
