
Understanding Data Operations: Definition, Evolution, and Importance

Explore the significance and evolution of data operations in modern information management.

Introduction

Data operations have evolved from basic manual processes to advanced methodologies that enhance efficiency and insight in a data-driven landscape.

With the rapid evolution of technology, organizations are now leveraging frameworks like DataOps to enhance collaboration, automate workflows, and ensure data integrity.

As data volumes and complexities increase, organizations face significant challenges in adapting their operations to leverage data for strategic decision-making.

Failure to adapt could hinder organizations from fully capitalizing on data-driven insights.

Define Data Operations: Core Concepts and Significance

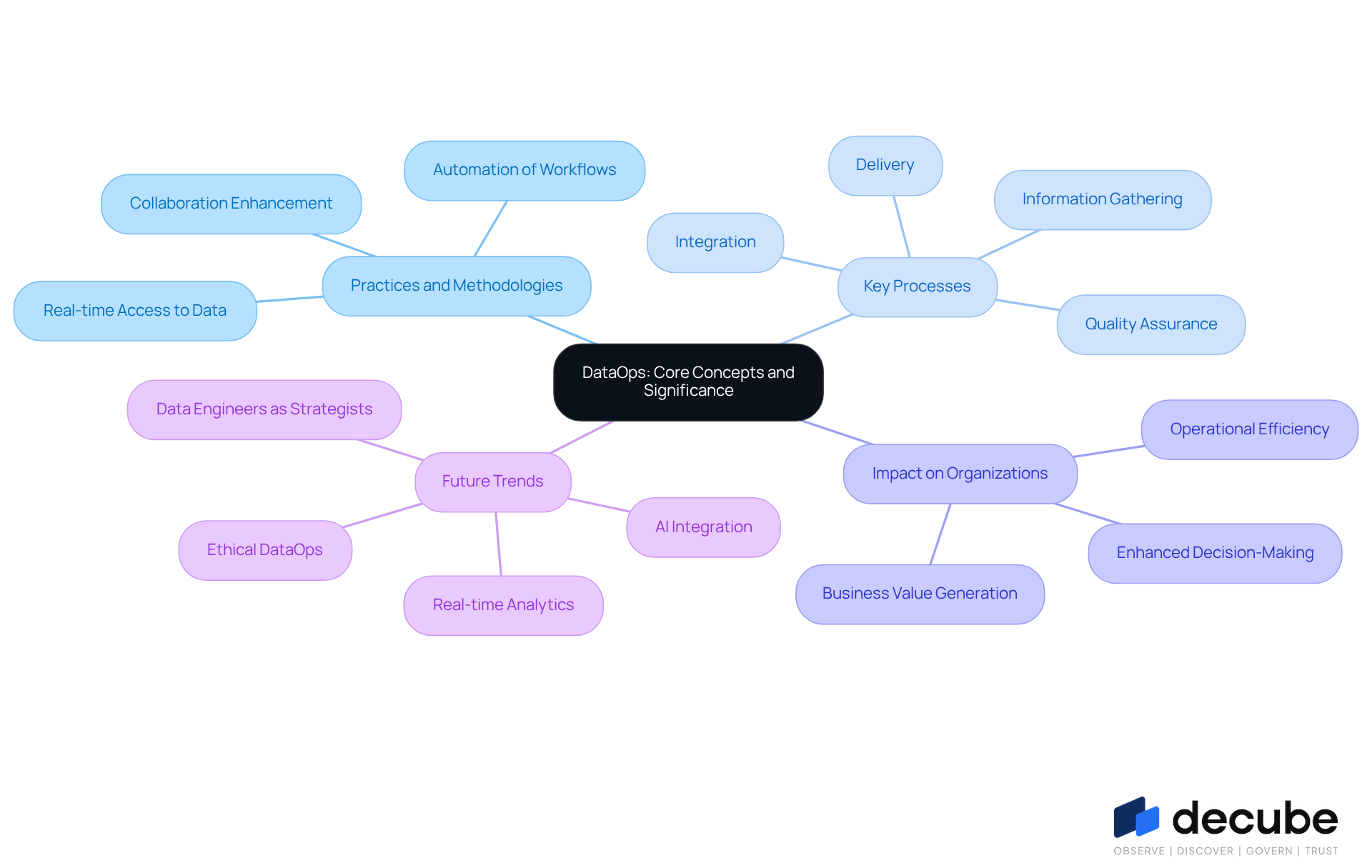

Data operations, often formalized as DataOps, encompass the practices and methodologies used to manage data throughout its lifecycle. Key processes include data collection, integration, quality assurance, and delivery, which together improve the speed, quality, and reliability of the data supplied to analytics. In 2026, these processes help analytics teams collaborate, automate workflows, and keep data accessible and actionable for informed decision-making.

Well-organized data operations enable organizations to use their data assets effectively, driving insights and business value. For example, companies adopting DataOps platforms such as Decube have reported substantial improvements in data quality and operational efficiency. Decube's unified platform combines observability and governance, so teams can monitor data quality and confirm that data remains accurate and consistent. Its automated crawling feature removes the need for manual metadata updates, which streamlines metadata management and makes collaboration across teams easier.

As organizations shift to a model in which data engineers act as strategic partners in decision-making, collaboration becomes essential. This transition gives teams real-time access to high-quality data, so they can respond quickly to market changes and customer needs. Specialists in the field emphasize that the advancement of data operations is not simply a technical upgrade but a fundamental change in how organizations handle data. According to industry leaders, the integration of AI and automation into data operations will redefine workflows, making them more efficient and responsive. By 2026, organizations that prioritize structured data processes, supported by platforms like Decube, are expected to improve decision-making and compliance, which can translate into a competitive advantage.

Trace the Evolution of Data Operations: Historical Context and Development

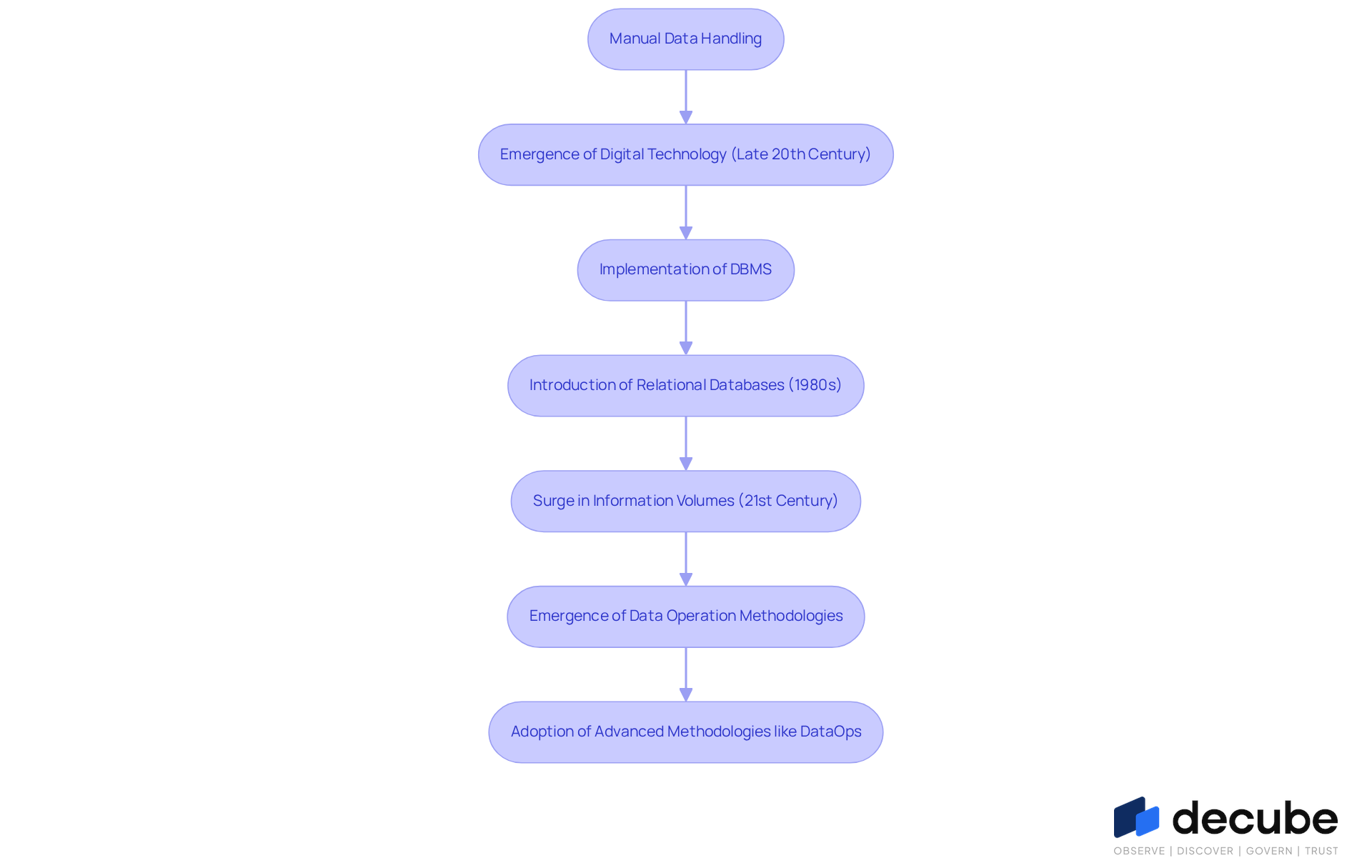

The evolution of data operations reflects a shift from manual data handling to sophisticated digital management systems. Initially, data was stored in physical formats and accessed manually. With the emergence of digital technology in the late 20th century, organizations began implementing database management systems (DBMS) that automated data storage and retrieval. The relational model, proposed in 1970 and widely adopted in commercial databases during the 1980s, marked a significant turning point, enabling more complex relationships and queries.

In the 21st century, driven by cloud computing and IoT, data volumes have surged, with Gartner estimating that the average enterprise IT infrastructure generates 2-3 times more operational data each year. Organizations struggle to manage this volume, which leads to inefficiencies and potential errors. The added complexity prompted the emergence of DataOps, a methodology that incorporates principles from Agile and DevOps and concentrates on continuous integration and delivery of data, thereby improving the speed and quality of data-driven insights.

For instance, the implementation of DataOps at Boston Children’s Hospital has shown notable results, including a 40% reduction in research lead time and a 12% improvement in medication adherence. In this context, Decube's automated crawling feature supports metadata management and secure access control, both of which are essential for effective data governance. Additionally, Decube's lineage visualization improves data observability, enabling companies to monitor data flow and maintain clarity throughout their pipelines.

This history highlights the need for organizations to adapt their data management practices to modern challenges, including the difficulty of integrating multiple tools and maintaining effective governance. Embracing methodologies like DataOps is essential for organizations to thrive in an increasingly data-driven landscape.

Identify Key Characteristics of Data Operations: Components and Best Practices

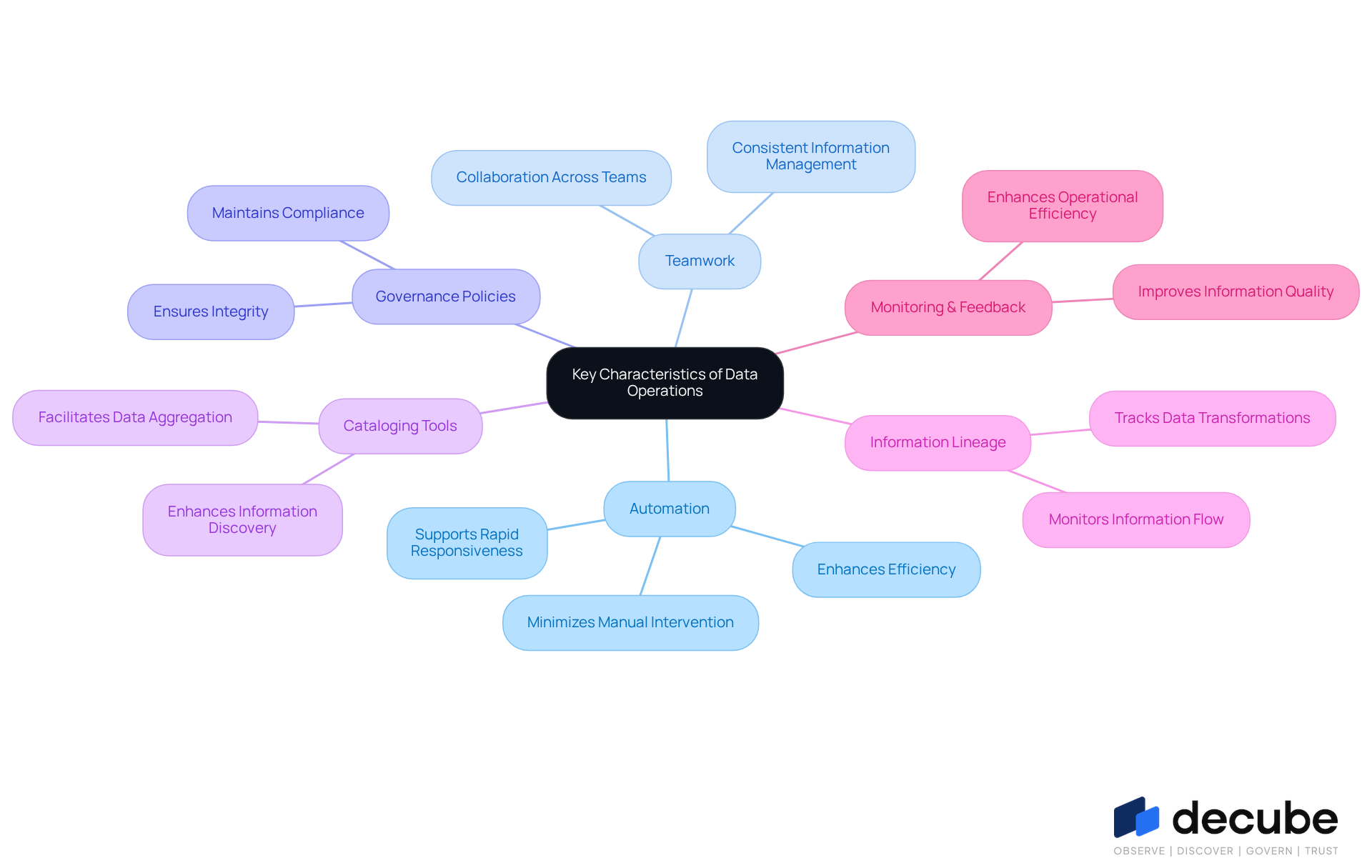

In data operations, the absence of automation can significantly hinder efficiency and decision-making. Efficient data operations are characterized by automation, teamwork, and a strong focus on quality. Automation minimizes manual intervention in data processes, allowing teams to concentrate on analysis rather than preparation. Cooperation among diverse teams is vital for consistent and efficient data management throughout the organization.

Best practices in data operations include establishing robust governance policies, which are essential for ensuring integrity and compliance. Data cataloging tools improve data discovery, while clear lineage lets organizations track data flow and transformations. Regular monitoring and feedback improve data quality and operational efficiency, making the data a trustworthy resource for decision-making. Successful organizations exemplify these practices, demonstrating the tangible advantages of a well-governed and automated data environment.
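The monitoring and feedback practice described above can be illustrated with a small sketch. The example below is a minimal, hypothetical rule runner written with only the Python standard library; the `Check` class, the rule names, and the sample rows are assumptions made for illustration and do not represent any particular platform's API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Row = dict  # one record, e.g. {"order_id": 1, "amount": 9.5}


@dataclass
class Check:
    """A named data quality rule (hypothetical structure)."""
    name: str
    predicate: Callable[[Row], bool]  # returns True when the row passes


def run_checks(rows: Iterable[Row], checks: list[Check]) -> dict[str, int]:
    """Count failing rows per check so the result can be reported to data owners."""
    failures = {check.name: 0 for check in checks}
    for row in rows:
        for check in checks:
            if not check.predicate(row):
                failures[check.name] += 1
    return failures


# Hypothetical rules and sample data, for illustration only.
checks = [
    Check("order_id_present", lambda r: r.get("order_id") is not None),
    Check(
        "amount_non_negative",
        lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    ),
]
rows = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": None, "amount": 3.0},
    {"order_id": 3, "amount": -1.0},
]

report = run_checks(rows, checks)
print(report)  # {'order_id_present': 1, 'amount_non_negative': 1}
```

In practice a runner like this would execute on a schedule, and a non-zero failure count would trigger an alert or block a downstream load, which is one way to close the feedback loop.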

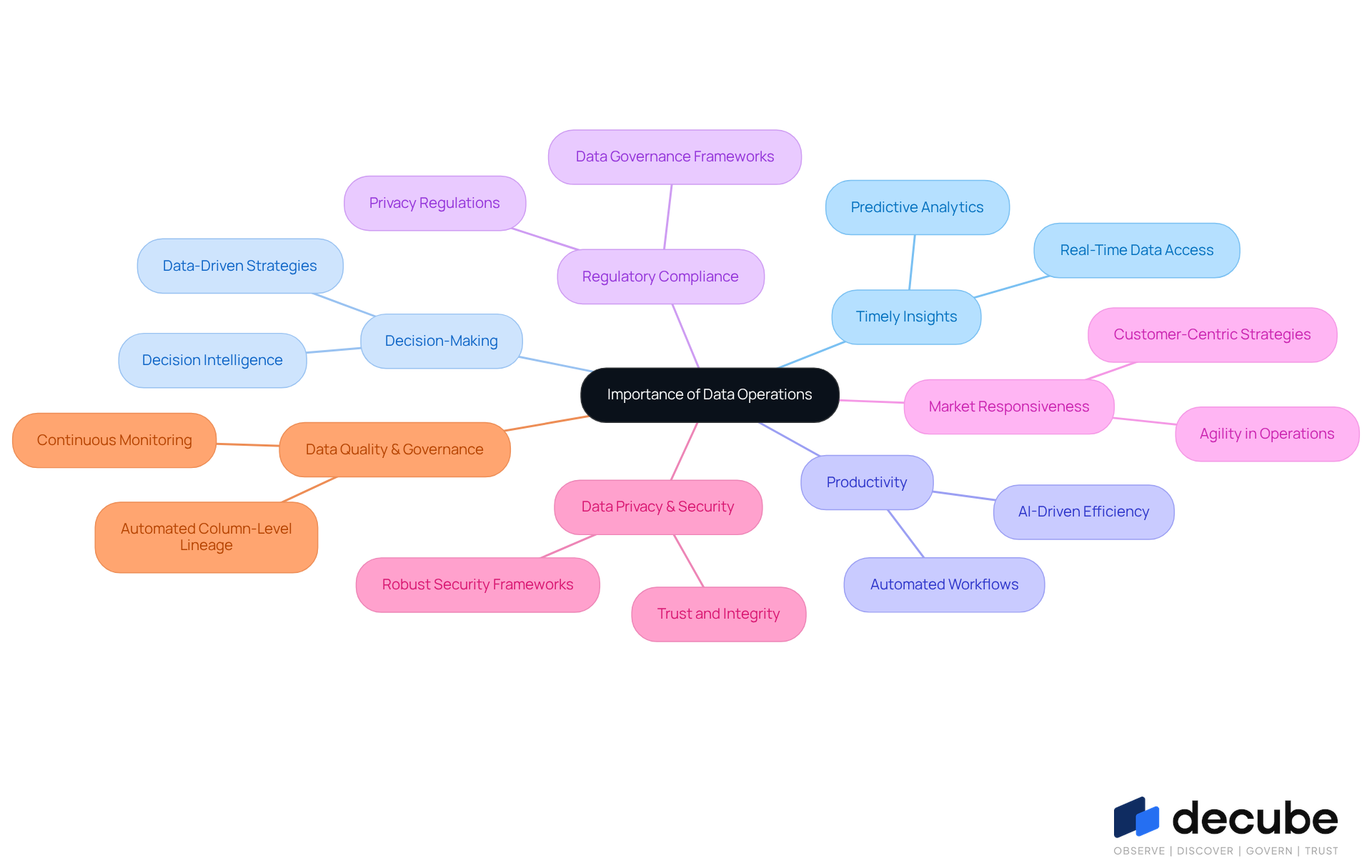

Highlight the Importance of Data Operations: Impact on Modern Data Management

In today's fast-paced business environment, efficient data operations are crucial for organizations seeking timely insights. They provide quicker and more reliable access to insights derived from data. Efficient data management allows companies to enhance decision-making, increase productivity, and ensure regulatory compliance. Incorporating data operations into business strategy enables companies to respond swiftly to market shifts and client requirements, ultimately driving innovation and growth. Moreover, as data privacy and security become increasingly important, robust operational frameworks help organizations maintain trust and uphold integrity in their data practices.

Decube's advanced features, such as automated column-level lineage, illustrate how organizations can achieve improved cataloging and observability. Users have noted that this functionality allows business users to quickly identify issues within reports and dashboards, thereby enhancing overall data quality and governance. These observations show how Decube's Data Trust Platform supports modern data management, ensuring compliance while promoting a culture of data integrity. Ultimately, robust data management practices are essential for maintaining a competitive advantage in a data-driven landscape.
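To make the idea of column-level lineage concrete, here is a generic sketch of how a lineage graph can be walked to find every upstream column behind a dashboard field. It is not Decube's implementation; the `LINEAGE` mapping, the column names, and the `upstream_columns` function are all hypothetical.

```python
from collections import deque

# Hypothetical column-level lineage: each column maps to the columns it is derived from.
LINEAGE = {
    "dashboard.revenue": ["mart.orders.net_amount"],
    "mart.orders.net_amount": ["staging.orders.amount", "staging.orders.discount"],
    "staging.orders.amount": ["raw.orders.amount"],
    "staging.orders.discount": ["raw.orders.discount"],
}


def upstream_columns(column: str, lineage: dict[str, list[str]]) -> list[str]:
    """Return every column that feeds `column`, nearest first (breadth-first search)."""
    seen = set()
    order = []
    queue = deque(lineage.get(column, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # skip columns already visited via another path
        seen.add(current)
        order.append(current)
        queue.extend(lineage.get(current, []))
    return order


sources = upstream_columns("dashboard.revenue", LINEAGE)
print(sources)
```

When a number on a dashboard looks wrong, a traversal like this narrows the investigation to the specific columns that contribute to it instead of every table in the pipeline.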

Conclusion

Navigating the complexities of modern business environments necessitates efficient data operations. By embracing methodologies like DataOps, organizations can streamline their data management processes, enhancing the speed and reliability of insights critical for informed decision-making. This evolution fosters collaboration among teams and elevates data engineers to the role of strategic partners in achieving business success.

This article has traced the evolution of data operations from manual processes to sophisticated automated systems. It has emphasized robust governance policies, automation, and teamwork as essential components for improving data quality and operational efficiency. Real-world examples, like those from Decube and Boston Children’s Hospital, illustrate the tangible benefits of adopting advanced data operations practices.

As businesses navigate the challenges of a data-driven landscape, the imperative is clear: organizations must prioritize effective data operations to maintain a competitive edge. By doing so, they not only enhance their decision-making capabilities but also ensure compliance and uphold data integrity, ultimately driving innovation and growth in their respective industries. Organizations that neglect data operations may be unable to leverage their data assets fully, putting their competitive position at risk.

Frequently Asked Questions

What is DataOps?

DataOps encompasses the practices and methodologies used to manage data throughout its lifecycle, including data collection, integration, quality assurance, and delivery.

What are the key benefits of implementing DataOps?

Implementing DataOps enhances the speed, quality, and reliability of data operations for analytics, improves collaboration among analytics teams, automates workflows, and ensures information remains accessible and actionable for informed decision-making.

How does DataOps impact organizational efficiency?

Well-organized data operations enable organizations to use their data assets effectively, driving insights and business value and leading to substantial improvements in data quality and operational efficiency.

What role does the Decube platform play in DataOps?

Decube's unified platform enhances observability and governance. It allows teams to monitor data quality, helps keep data accurate and consistent, and streamlines metadata management through automated metadata updates.

How does the shift to a model with data engineers as strategic partners affect decision-making?

This shift gives teams real-time access to high-quality data, enabling them to respond swiftly to market changes and customer needs, and it makes collaboration essential.

What is the significance of integrating AI and automation into data operations?

The integration of AI and automation is expected to redefine workflows, making them more efficient and responsive, which is crucial for enhancing decision-making and ensuring compliance.

What can organizations expect by 2026 regarding structured data processes?

Organizations that prioritize structured data processes, supported by platforms like Decube, are expected to improve decision-making, ensure compliance, and gain a competitive advantage.

List of Sources

- Define Data Operations: Core Concepts and Significance
  - DataOps.live Launches Major Upgrade to Deliver AI-Ready Data at Enterprise Scale (https://prnewswire.com/news-releases/dataopslive-launches-major-upgrade-to-deliver-ai-ready-data-at-enterprise-scale-302557927.html)
  - 2026 DataOps Predictions - Part 1 | APMdigest (https://apmdigest.com/2026-dataops-predictions-1)
  - DataOps Trends in 2026: Data Management with NiFi and Spark (https://ksolves.com/blog/big-data/latest-dataops-trends)
  - 2026 Analytics: The Future of Data-Driven Decision Making (https://sift-ag.com/news/2026-analytics-the-future-of-data-driven-decision-making)
  - 2026 Data Management Trends Shaping Enterprise Decision-Making | Intalio (https://intalio.com/blogs/2026-data-management-trends-shaping-enterprise-decision-making)

- Trace the Evolution of Data Operations: Historical Context and Development
  - The Evolution from DevOps to DataOps (https://unraveldata.com/resources/the-evolution-from-devops-to-dataops)
  - AIOps and LLMOps: The Evolution of DataOps and Beyond (https://dataops.live/blog/aiops-llmops-evolution-dataops)
  - Xops, an overview of the evolution of the Ops movement (https://persistent.com/blogs/an-overview-of-the-evolution-from-devops-to-dataops-to-xops)
  - DataOps and the evolution of data analytics (https://processexcellencenetwork.com/big-data-analytics/articles/dataops-and-the-evolution-of-data-analytics)
  - A Brief History of Data Management - Dataversity (https://dataversity.net/articles/brief-history-data-management)

- Identify Key Characteristics of Data Operations: Components and Best Practices
  - How Automation Helps to Solve the Data-Management Challenge (https://cio.com/article/189464/how-automation-helps-to-solve-the-data-management-challenge.html)
  - Data Management Trends in 2026: Moving Beyond Awareness to Action - Dataversity (https://dataversity.net/articles/data-management-trends)
  - The power of data management automation (https://redwood.com/article/data-management-automation)
  - Top 5 Priorities for Data Leaders in 2026 (https://barc.com/five-priorities-data-leaders-2026)
  - AI and Data Strategy in 2026: What Data Leaders Must Get Right (https://analytics8.com/blog/ai-and-data-strategy-in-2026-what-leaders-need-to-get-right)

- Highlight the Importance of Data Operations: Impact on Modern Data Management
  - DataOps: Powering Modern Data-Driven Organizations (https://acceldata.io/blog/the-dataops-revolution-conquering-chaos-unlocking-insights)
  - How Data Can Drive Business Growth and Innovation (https://velosio.com/blog/how-data-can-drive-business-growth-and-innovation)
  - Top Data-Driven Strategies to Maximize Business Efficiency in 2026 (https://apptad.com/blogs/top-data-driven-strategies-to-maximize-business-efficiency-in-2026)
  - B2B Business Trends 2026: How AI, data, and trust will shape the next growth era (https://linkedin.com/pulse/b2b-business-trends-2026-how-ai-data-trust-shape-next-growth-era-b554c)
