Maximize Your Data Observability Platforms: Key Strategies and Insights

Unlock the potential of data observability platforms with key strategies and insights for success.

Introduction

Organizations are increasingly challenged to ensure data integrity and operational excellence in a complex information environment. Monitoring the health of data throughout its lifecycle is vital for achieving these goals. The implementation of effective data observability platforms offers businesses a significant opportunity to enhance decision-making processes and overall efficiency.

However, realizing these benefits requires overcoming obstacles such as:

- Information silos

- Alert fatigue

This article explores strategies and insights that help organizations address these challenges and use their data assets effectively.

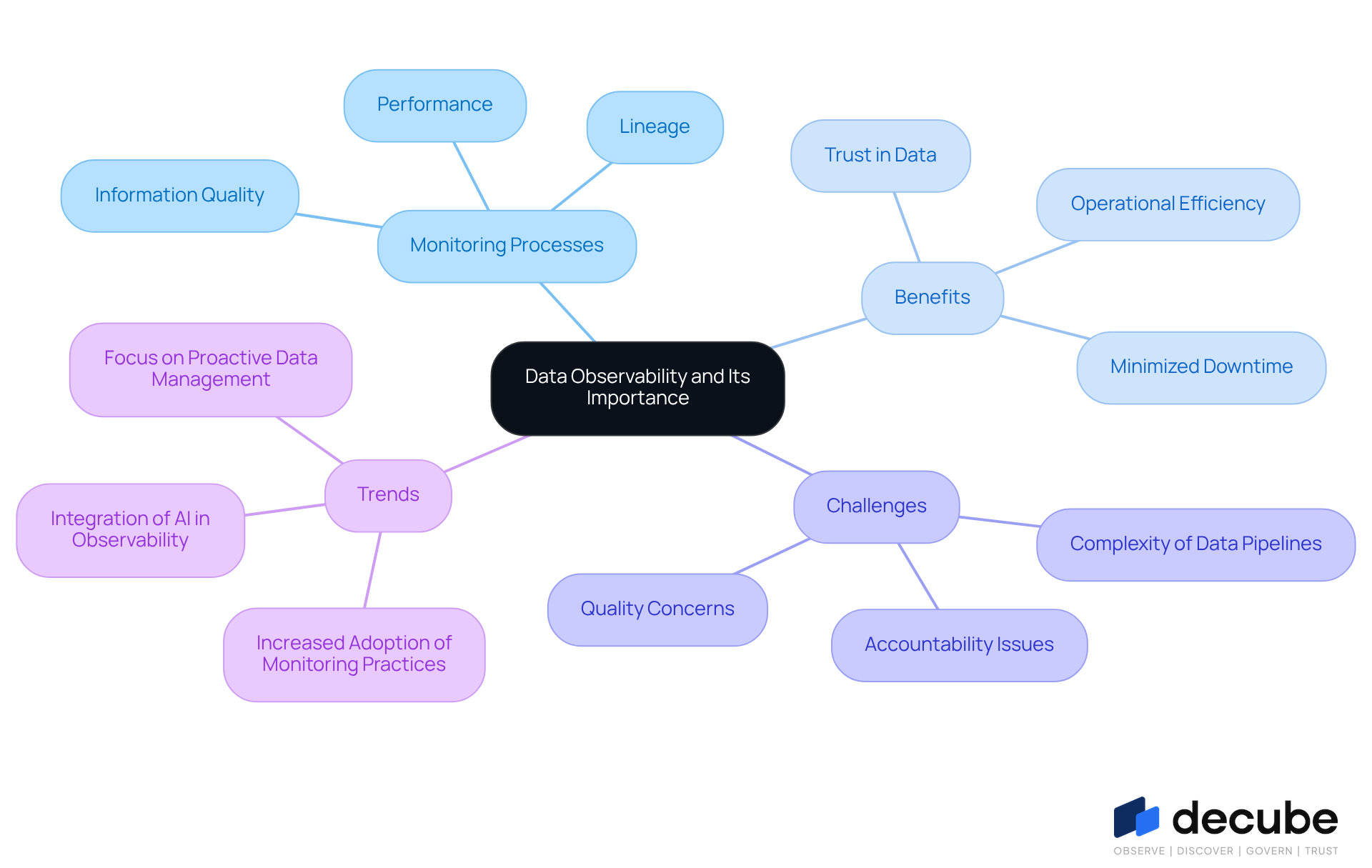

Define Data Observability and Its Importance

Data observability is the practice of monitoring the health of data throughout its lifecycle, which is crucial for organizations aiming to maintain [data integrity and operational efficiency](https://snowflake.com/en/fundamentals/data-observability). It encompasses a collection of processes and tools that provide insights into data quality, lineage, and performance, allowing organizations to identify and resolve issues quickly. [Information visibility](https://decube.io/post/10-essential-data-schema-examples-for-effective-database-design) enhances trust in data, minimizes downtime, and boosts operational efficiency. For example, XYZ Corp, a global e-commerce company, implemented a monitoring platform that provided real-time oversight and automated anomaly alerts. The resulting improvement in data quality significantly increased customer satisfaction.

Organizations are increasingly adopting data monitoring practices to cope with the complexity of modern information environments. As pipelines grow more complex, monitoring them effectively becomes harder. Industry leaders stress that effective visibility reduces risks linked to data quality and also promotes a culture of accountability among analytics teams. Without effective monitoring, organizations face quality problems and accountability gaps that ultimately harm business results.

Analytics tools also enable rapid detection of inefficiencies and bottlenecks in information pipelines. By investing in these tools, companies can reduce the time spent on quality issues, which frees teams to concentrate on strategic initiatives instead of firefighting. Industry specialists note that a successful monitoring strategy results in healthier pipelines, higher productivity, and greater customer satisfaction, reinforcing the value of data as a strategic asset. By prioritizing data visibility, organizations can improve their operational effectiveness and gain a competitive edge in the market.

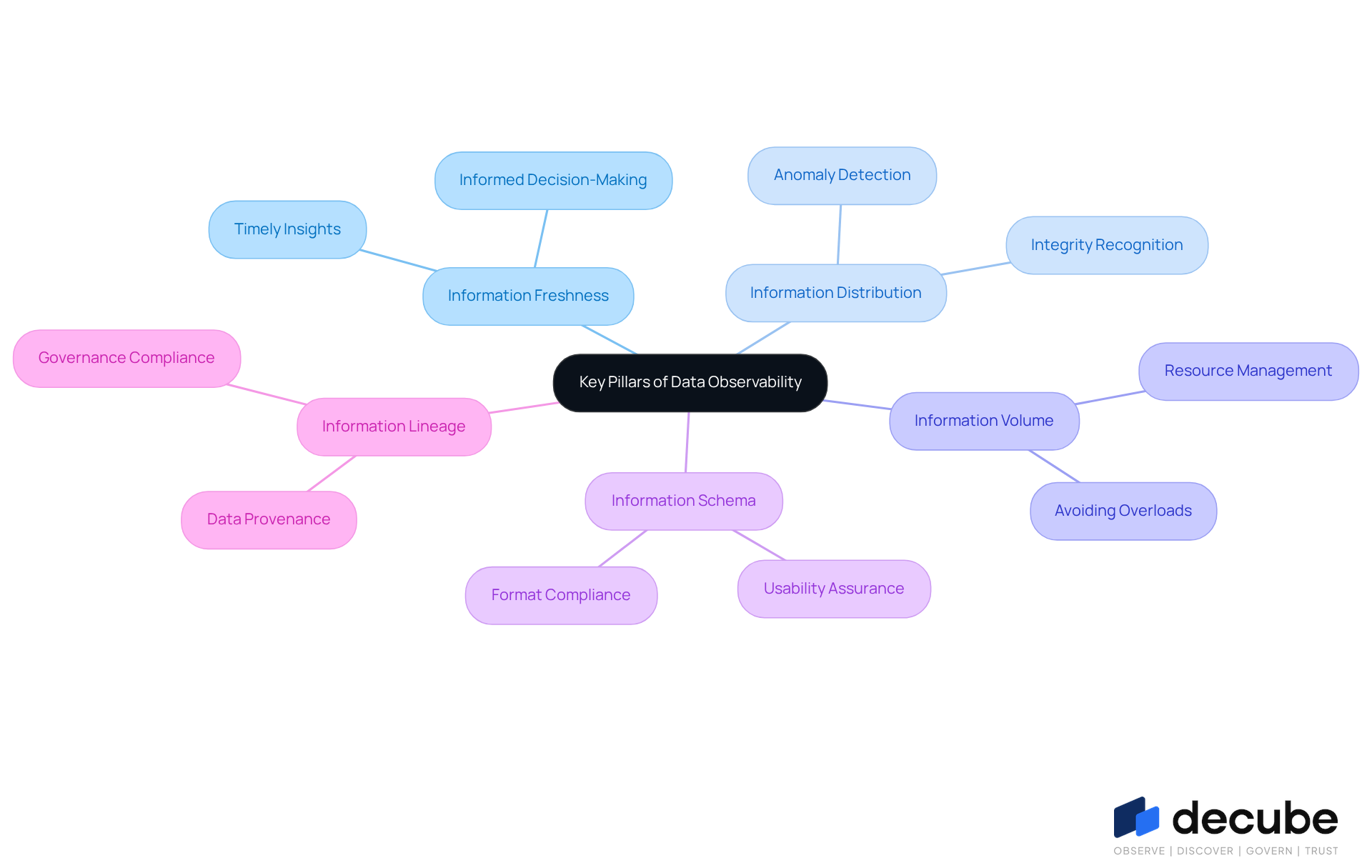

Identify the Key Pillars of Data Observability

To strengthen data management, organizations should focus on five key pillars of data observability:

- Information Freshness: Data must be up to date to support informed decisions. Timely data keeps insights relevant and actionable, which directly affects business results.

- Information Distribution: Monitoring the distribution of values within a dataset, such as null rates and value ranges, helps surface anomalies and possible issues. Understanding typical distribution patterns lets teams spot irregularities that could compromise data integrity.

- Information Volume: Monitoring the quantity of data processed helps avoid system overloads and maintain performance. Sudden drops or spikes in row counts also signal missing or duplicated data, so volume monitoring protects both resources and service quality.

- Information Schema: Tracking the structure of data, such as tables, columns, and data types, is essential for catching changes that may break downstream use. Schema monitoring confirms that data adheres to expected formats and keeps operations running smoothly.

- [Information Lineage](https://future-processing.com/blog/data-observability): Tracking how information flows from its source to its destination reveals important transformations and dependencies. Lineage tracking is essential for understanding information provenance and ensuring compliance with governance standards.
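
The first four pillars can be sketched as simple checks over table metadata. The example below is a minimal illustration in Python, not how Decube or any specific platform implements them. The `TableSnapshot` structure and the check functions are hypothetical. So is every threshold: the 24-hour freshness window, the 3-standard-deviation volume band, and the 5% null-rate limit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from statistics import mean, stdev


@dataclass
class TableSnapshot:
    """Hypothetical metadata collected for one table on one monitoring run."""
    last_updated: datetime       # when the table last received new data
    row_count: int               # rows loaded in this run
    schema: dict[str, str]       # column name -> data type
    null_rate: dict[str, float]  # column name -> fraction of NULL values


def check_freshness(snap, now, max_age):
    """Freshness: flag the table if it has not been updated within max_age."""
    age = now - snap.last_updated
    return [f"freshness: last update {age} ago"] if age > max_age else []


def check_volume(snap, history, z_threshold=3.0):
    """Volume: flag a row count far outside the recent historical range."""
    if len(history) < 2:
        return []  # not enough history to estimate normal variation
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # History is perfectly constant, so any change is unusual.
        return [] if snap.row_count == mu else [f"volume: {snap.row_count} rows, expected {mu}"]
    z = (snap.row_count - mu) / sigma
    return [f"volume: {snap.row_count} rows (z={z:.1f})"] if abs(z) > z_threshold else []


def check_schema(snap, expected):
    """Schema: report missing, retyped, and unexpected columns."""
    issues = []
    for col, typ in expected.items():
        if col not in snap.schema:
            issues.append(f"schema: missing column {col}")
        elif snap.schema[col] != typ:
            issues.append(f"schema: {col} is {snap.schema[col]}, expected {typ}")
    for col in sorted(snap.schema.keys() - expected.keys()):
        issues.append(f"schema: unexpected column {col}")
    return issues


def check_distribution(snap, max_null_rate):
    """Distribution: flag columns whose NULL rate exceeds max_null_rate."""
    return [
        f"distribution: {col} null rate {rate:.0%}"
        for col, rate in snap.null_rate.items()
        if rate > max_null_rate
    ]


# Example run with made-up values.
now = datetime(2026, 1, 1, 12, tzinfo=timezone.utc)
snap = TableSnapshot(
    last_updated=now - timedelta(hours=30),
    row_count=120,
    schema={"order_id": "INT", "amount": "STRING"},
    null_rate={"order_id": 0.0, "amount": 0.25},
)
issues = (
    check_freshness(snap, now, max_age=timedelta(hours=24))
    + check_volume(snap, history=[1000, 980, 1020, 995, 1010])
    + check_schema(snap, expected={"order_id": "INT", "amount": "DECIMAL", "customer_id": "INT"})
    + check_distribution(snap, max_null_rate=0.05)
)
for issue in issues:
    print(issue)
```

In this made-up run, every pillar raises at least one issue. The table is 30 hours old, its row count collapsed, a column changed type and another disappeared, and a quarter of `amount` values are NULL. A real platform would learn thresholds from history rather than hard-coding them.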

By prioritizing these pillars, organizations can create a strong monitoring framework using data observability platforms that greatly improves their capacity to handle information efficiently. This strategic focus not only streamlines operations but also empowers organizations to make informed decisions based on reliable data.
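
The lineage pillar is naturally modeled as a directed graph in which each edge points from a source column to a column derived from it. The sketch below assumes that representation; the `LINEAGE` mapping and its table and column names are hypothetical. It uses a breadth-first walk to answer two common questions: which downstream assets are affected if a column breaks, and which upstream columns a dashboard depends on.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the assets in its list.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.daily_total", "mart.revenue.avg_order"],
    "mart.revenue.daily_total": ["dashboard.exec_kpis"],
    "mart.revenue.avg_order": [],
}


def downstream(graph, start):
    """Breadth-first walk returning every asset reachable from start, nearest first."""
    seen, queue, order = {start}, deque([start]), []
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


def upstream(graph, target):
    """Reverse every edge, then walk the reversed graph to trace provenance."""
    reverse = {}
    for parent, children in graph.items():
        for child in children:
            reverse.setdefault(child, []).append(parent)
    return downstream(reverse, target)


# Impact analysis: what breaks if raw.orders.amount is wrong?
print(downstream(LINEAGE, "raw.orders.amount"))
# Provenance: where does the executive dashboard get its numbers?
print(upstream(LINEAGE, "dashboard.exec_kpis"))
```

The same traversal serves both directions; only the edge orientation changes. That is one reason lineage graphs are useful for both incident triage and governance audits.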

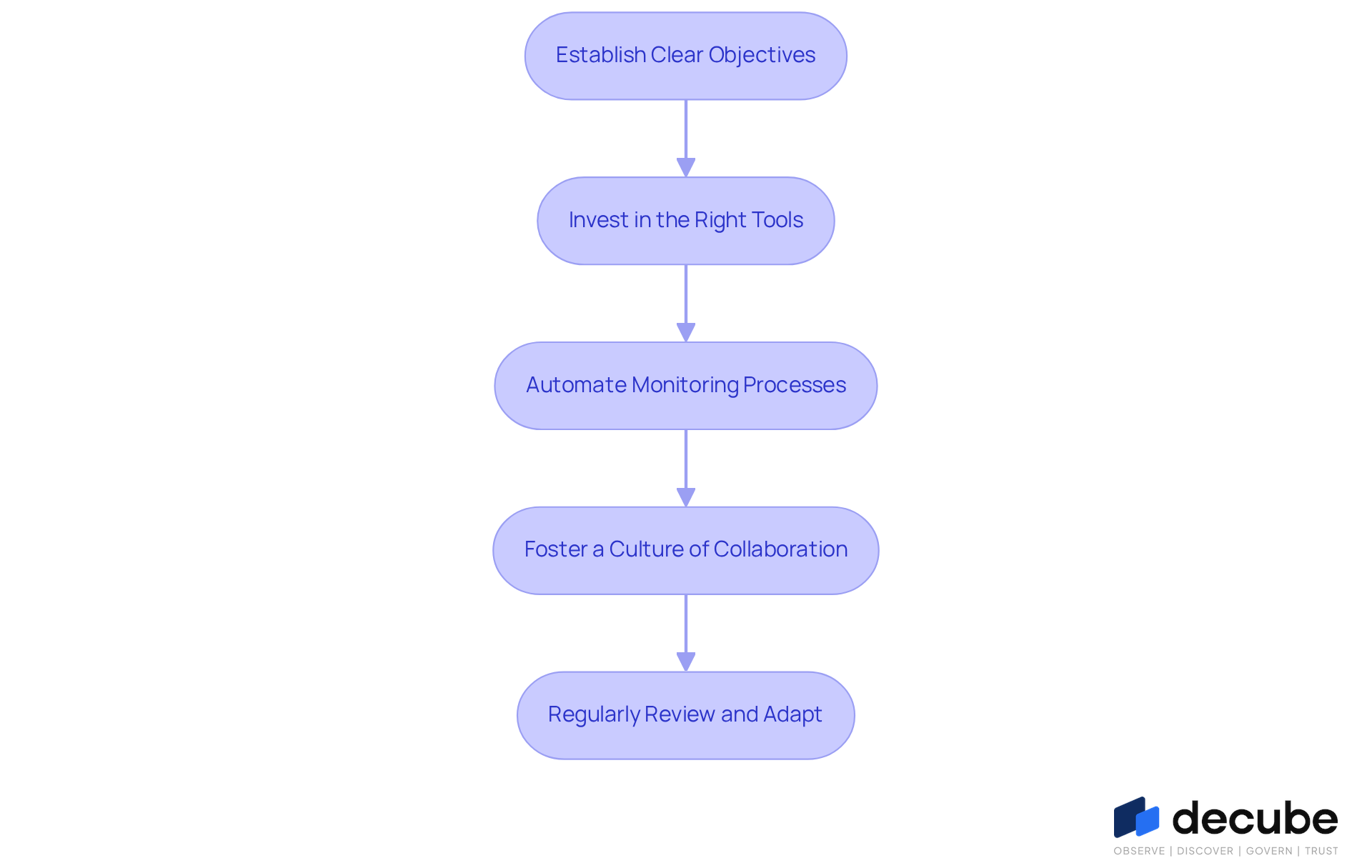

Implement Effective Strategies for Data Observability

To get the most from data observability platforms, organizations should adopt a structured approach. Key steps include:

- Establish Clear Objectives: Define success for your information monitoring efforts by setting specific metrics and KPIs that align with business outcomes. This clarity aids in assessing the impact of monitoring initiatives.

- Invest in the Right Tools: Choose monitoring platforms that integrate smoothly with your data architecture and provide extensive tracking capabilities. Technology spending decisions are often made with inadequate information, so evaluate candidate platforms against the objectives you have defined. A well-chosen data observability platform can significantly improve decision-making and operational efficiency.

- Automate Monitoring Processes: Automating monitoring processes helps maintain quality and performance while reducing manual intervention and errors. This approach allows for early identification of problems, enabling swift recovery and preserving operational integrity.

- Foster a Culture of Collaboration: Encourage cross-functional teams to work together in identifying and resolving information issues. This approach enhances accountability and communication, ensuring that all stakeholders are aligned in maintaining data quality.

- Regularly Review and Adapt: Continuously evaluate the effectiveness of your monitoring practices. Modify your approaches according to changing business requirements and technological progress to guarantee that your monitoring efforts remain relevant and efficient.
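
Clear objectives are easiest to review regularly when they are written down as explicit, machine-checkable targets. The sketch below uses hypothetical KPI names and targets, which are placeholders rather than recommended values. It shows how a scheduled job could evaluate measured values against those targets, treating a missing measurement as a failure.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    """A hypothetical observability KPI with a target and a direction."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, value: float) -> bool:
        # Compare against the target in the direction that counts as success.
        return value >= self.target if self.higher_is_better else value <= self.target


# Illustrative objectives; names and targets are assumptions, not recommendations.
KPIS = [
    Kpi("pct_tables_fresh", target=99.0),
    Kpi("pct_incidents_caught_by_monitors", target=90.0),
    Kpi("mean_hours_to_resolve", target=4.0, higher_is_better=False),
]


def review(kpis, measurements):
    """Return {kpi_name: passed}; a missing measurement counts as a failure."""
    report = {}
    for kpi in kpis:
        value = measurements.get(kpi.name)
        report[kpi.name] = value is not None and kpi.met(value)
    return report


measured = {
    "pct_tables_fresh": 99.4,
    "pct_incidents_caught_by_monitors": 82.0,
    "mean_hours_to_resolve": 3.5,
}
print(review(KPIS, measured))
```

Treating missing data as a failure is a deliberate choice: a KPI that silently stops being measured is itself a monitoring gap worth surfacing during review.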

Ultimately, these strategies empower organizations to navigate complexities and make informed decisions that drive success.

Address Common Challenges in Data Observability

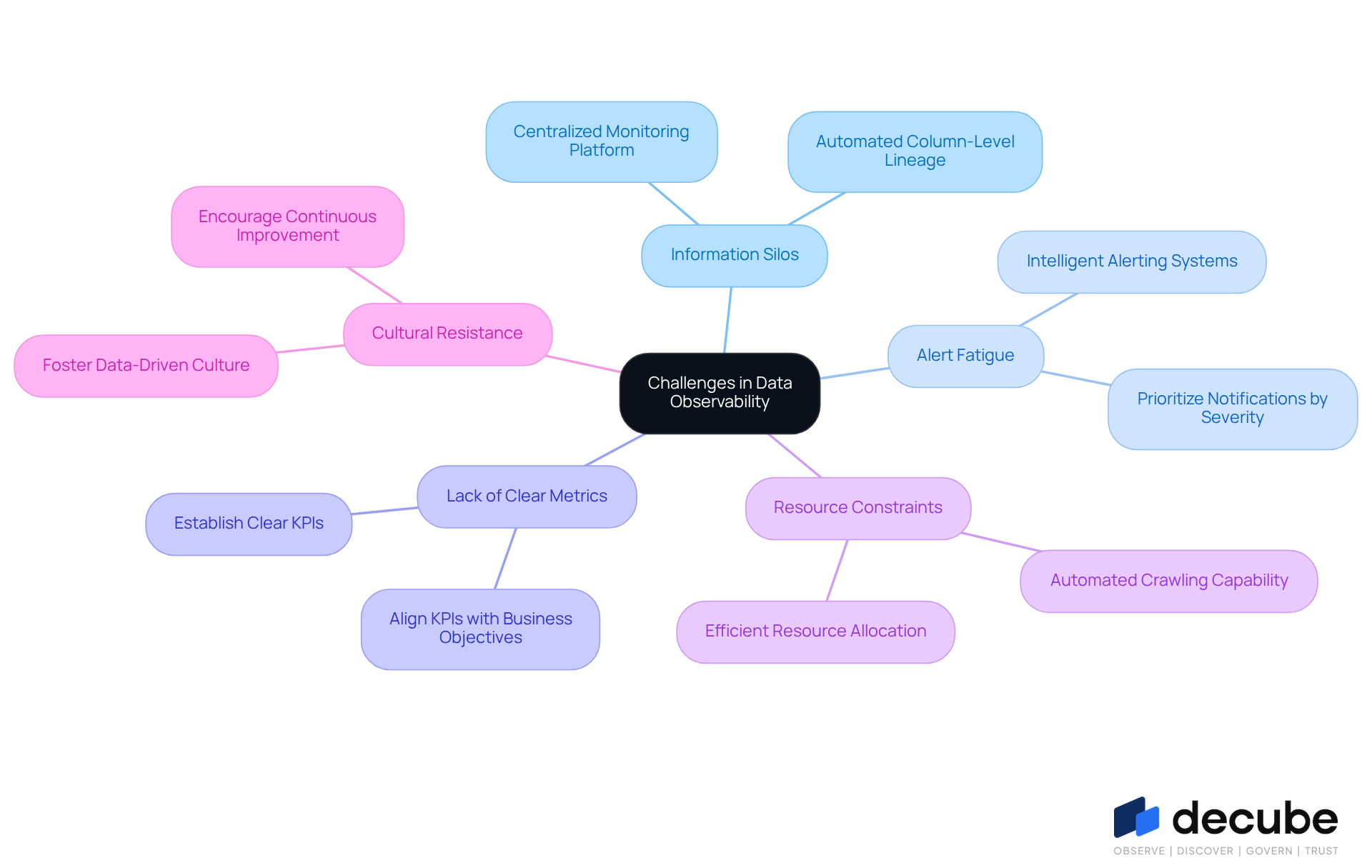

Organizations encounter various challenges in their pursuit of effective data observability, including:

- Information Silos: Data fragmented across systems severely limits visibility. To tackle this, organizations should consolidate their data sources under a centralized monitoring platform. Decube's automated column-level lineage capability helps business users trace problems in reports or dashboards. It also gives a thorough view of data incidents and their characteristics, breaking down information silos. Users, including Piyush P., have praised this capability for integrating data catalog and monitoring functionality.

- Alert Fatigue: Excessive alerts can overwhelm teams, leading to critical issues being overlooked. Research indicates that 70% of alerts are false positives, which can lead to alert fatigue and decreased operational efficiency. Implementing intelligent alerting systems that prioritize notifications based on severity is essential for mitigating alert fatigue, ensuring that teams focus on the most pressing concerns.

- Lack of Clear Metrics: Without well-defined metrics, measuring success becomes challenging. Organizations should establish clear key performance indicators (KPIs) that align with their business objectives, enabling effective tracking of progress and outcomes.

- Resource Constraints: Limited resources can hinder visibility initiatives. Decube's automated crawling capability improves information visibility and governance by ensuring that metadata is auto-refreshed once sources are linked. This automation not only maximizes efficiency but also allows organizations to allocate resources more effectively, ensuring critical data assets are managed properly.

- Cultural Resistance: Resistance to change can hinder the implementation of monitoring practices. Fostering a culture that values data-driven decisions and continuous improvement is vital for overcoming this challenge and creating an environment conducive to actionable insights.
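
Severity-based alerting can start with two simple steps. First, collapse duplicate alerts for the same asset and check. Second, suppress anything below a notification threshold. The sketch below is a hypothetical illustration of that idea, not a description of any particular product. The severity levels, the `Alert` fields, and the default `high` threshold are assumptions.

```python
from dataclasses import dataclass

# Lower rank means more urgent; these four levels are an assumed convention.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Alert:
    asset: str
    check: str
    severity: str


def triage(alerts, notify_at="high"):
    """Collapse duplicates, drop alerts below notify_at, and sort most urgent first."""
    cutoff = SEVERITY_RANK[notify_at]
    unique = {}
    for alert in alerts:
        key = (alert.asset, alert.check)
        current = unique.get(key)
        # Keep only the most severe alert seen for each (asset, check) pair.
        if current is None or SEVERITY_RANK[alert.severity] < SEVERITY_RANK[current.severity]:
            unique[key] = alert
    kept = [a for a in unique.values() if SEVERITY_RANK[a.severity] <= cutoff]
    return sorted(kept, key=lambda a: (SEVERITY_RANK[a.severity], a.asset))


raw = [
    Alert("orders", "freshness", "high"),
    Alert("orders", "freshness", "high"),      # exact duplicate
    Alert("orders", "volume", "critical"),
    Alert("customers", "null_rate", "low"),    # below threshold, suppressed
    Alert("payments", "schema", "medium"),
    Alert("payments", "schema", "critical"),   # same check, escalated
]
for alert in triage(raw):
    print(alert.severity, alert.asset, alert.check)
```

Six raw alerts become three notifications, with the most urgent first. Suppressed alerts need not be discarded; they could still be logged for later review.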

By proactively addressing these challenges, organizations can get significantly more from their data observability platforms. The result is more reliable data management, better operational efficiency, and a culture of continuous improvement.

Conclusion

Organizations that overlook data observability may find themselves grappling with inefficiencies and compromised data integrity. Implementing effective monitoring strategies and focusing on key aspects like information freshness, distribution, and lineage can enhance decision-making and promote accountability within businesses. Focusing on data visibility reduces risks related to data quality and allows teams to prioritize strategic initiatives over remedial tasks.

Throughout the article, various strategies were highlighted to optimize data observability platforms. Establishing clear objectives, investing in the right tools, automating processes, fostering collaboration, and regularly reviewing practices are all critical steps that organizations can take to overcome common challenges. Addressing issues like information silos, alert fatigue, and cultural resistance plays a crucial role in creating an environment conducive to effective data management.

In conclusion, data observability is essential for navigating the complexities of modern data environments successfully. Neglecting it can lead to missed insights, lost opportunities, and increased operational risk. By committing to continuous improvement and applying the right strategies, businesses can harness the full potential of their data, driving better outcomes and securing a competitive advantage in today's data-centric landscape.

Frequently Asked Questions

What is data observability?

Data observability refers to the processes and tools used to monitor the health of information throughout its lifecycle, providing insights into information quality, lineage, and performance.

Why is data observability important for organizations?

Data observability is crucial for maintaining data integrity and operational efficiency, enhancing trust in data, minimizing downtime, and improving overall operational effectiveness.

How does data observability enhance customer satisfaction?

By implementing monitoring platforms that provide real-time oversight and automated alerts for anomalies, organizations can improve the quality of their data, leading to enhanced customer satisfaction.

What recent trends are influencing data observability practices?

Organizations are increasingly embracing information monitoring practices to address the complexities of modern information environments, leading to greater accountability and reduced risks related to data quality.

What are the consequences of ineffective data monitoring?

Without effective monitoring, organizations may face quality concerns and accountability issues, which can negatively impact business results.

How do analytics tools contribute to data observability?

Analytics tools enable rapid detection of inefficiencies and bottlenecks in information pipelines, allowing teams to focus on strategic initiatives rather than resolving quality issues.

What benefits can organizations expect from a successful data monitoring strategy?

A successful data monitoring strategy can lead to healthier information pipelines, enhanced productivity, greater customer satisfaction, and a competitive edge in the market.

List of Sources

- Define Data Observability and Its Importance
  - What Is Data Observability? 5 Key Pillars To Know In 2026 (https://montecarlodata.com/blog-what-is-data-observability)
  - What Is Data Observability? A Guide to Data Trust | Snowflake (https://snowflake.com/en/fundamentals/data-observability)
  - 10 Essential Metrics for Effective Data Observability | Pantomath (https://pantomath.com/blog/10-essential-metrics-for-data-observability)
  - Data Observability Recent News | Data Center Knowledge (https://datacenterknowledge.com/operations-and-management/data-observability)
  - Data Observability Case Study: Success Stories and Real-World Examples | Orchestra (https://getorchestra.io/guides/data-observability-case-study-success-stories-and-real-world-examples)

- Identify the Key Pillars of Data Observability
  - Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)
  - Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)
  - Data observability in 2026: a comprehensive guide (https://future-processing.com/blog/data-observability)
  - What Is Data Observability? 5 Key Pillars To Know In 2026 (https://montecarlodata.com/blog-what-is-data-observability)

- Implement Effective Strategies for Data Observability
  - Observability Trends 2026 | IBM (https://ibm.com/think/insights/observability-trends)
  - Six observability predictions for 2026 (https://dynatrace.com/news/blog/six-observability-predictions-for-2026)
  - Observability as the backbone of compliance in a new federal cyber era | Federal News Network (https://federalnewsnetwork.com/commentary/2026/04/observability-as-the-backbone-of-compliance-in-a-new-federal-cyber-era)

- Address Common Challenges in Data Observability
  - The High Cost of Data Silos: 3 Telling Statistics (https://appian.com/blog/acp/data-fabric/data-silo-costs-statistics)
  - Breaking Down Data Silos with Observability: Real-World Success Stories | Rakuten SixthSense (https://sixthsense.rakuten.com/blog/Breaking-Down-Data-Silos-with-Observability-Real-World-Success-Stories)
  - 20 best data visualization quotes - The Data Literacy Project (https://thedataliteracyproject.org/20-best-data-visualization-quotes)
