
Master Fact and Dimension Tables in a Data Warehouse: Key Concepts and Practices

Discover the essential role of fact and dimension tables in a data warehouse for effective data analysis.

Introduction

Many professionals struggle to differentiate between fact and dimension tables, leading to ineffective data strategies. Understanding these intricacies is essential in data warehousing, where effective data analysis relies on their proper implementation. Mastering the distinctions and applications of these structures is challenging, and many fall into common design pitfalls.

What key concepts and best practices enhance data integrity and performance in a data warehouse? How can professionals leverage these tables effectively?

Define Fact and Dimension Tables in Data Warehousing

In data warehousing, the distinction between fact tables and dimension tables is crucial for effective data analysis.

- Fact Tables: These tables store quantitative data for analysis, such as sales figures, transaction amounts, or other measurable events. Each row corresponds to a particular event or transaction, and fact tables usually contain foreign keys that link to dimension tables. For example, a sales fact table might record transaction amounts while referencing dimension tables that supply context such as customer demographics or product information. Implementing data contracts fosters collaboration among stakeholders and helps keep the data in these tables reliable and high-quality, which improves decision-making.

- Dimension Tables: These tables enrich the data held in fact tables by supplying descriptive attributes. They hold information such as product names, customer details, or time periods, which help users filter and group data for more insightful analysis. Dimension tables usually change more slowly than fact tables, because they represent relatively stable characteristics such as product categories and customer segments. By employing Decube's automated crawling capability, organizations can keep metadata managed and up to date, which strengthens data governance and improves the accuracy and relevance of the data in fact tables.
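To make the relationship concrete, here is a minimal sketch of a star schema using Python's built-in sqlite3 module. The table names (fact_sales, dim_product, dim_customer), columns, and sample rows are hypothetical and chosen only for illustration; a production warehouse would use its own platform and naming conventions.

```python
import sqlite3


def build_star_schema() -> sqlite3.Connection:
    """Create a tiny in-memory star schema: one fact table and two dimensions."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        -- Dimension tables: descriptive attributes, one row per entity.
        CREATE TABLE dim_product (
            product_key  INTEGER PRIMARY KEY,
            product_name TEXT NOT NULL,
            category     TEXT NOT NULL
        );
        CREATE TABLE dim_customer (
            customer_key  INTEGER PRIMARY KEY,
            customer_name TEXT NOT NULL,
            segment       TEXT NOT NULL
        );
        -- Fact table: one row per sale, holding foreign keys plus numeric measures.
        CREATE TABLE fact_sales (
            product_key  INTEGER NOT NULL REFERENCES dim_product(product_key),
            customer_key INTEGER NOT NULL REFERENCES dim_customer(customer_key),
            quantity     INTEGER NOT NULL,
            revenue      REAL    NOT NULL
        );
        INSERT INTO dim_product VALUES (1, 'Laptop', 'Electronics'), (2, 'Desk', 'Furniture');
        INSERT INTO dim_customer VALUES (10, 'Acme Corp', 'Enterprise'), (20, 'Jane Doe', 'Consumer');
        INSERT INTO fact_sales VALUES (1, 10, 2, 2400.0), (2, 10, 1, 300.0), (1, 20, 1, 1200.0);
        """
    )
    return conn


def revenue_by_category_and_segment(conn: sqlite3.Connection) -> list:
    """Join facts to dimensions and slice the revenue measure by descriptive attributes."""
    return conn.execute(
        """
        SELECT p.category, c.segment, SUM(f.revenue) AS total_revenue
        FROM fact_sales AS f
        JOIN dim_product  AS p ON p.product_key  = f.product_key
        JOIN dim_customer AS c ON c.customer_key = f.customer_key
        GROUP BY p.category, c.segment
        ORDER BY p.category, c.segment
        """
    ).fetchall()


if __name__ == "__main__":
    for row in revenue_by_category_and_segment(build_star_schema()):
        print(row)
```

The fact table stores only keys and numeric measures; every descriptive label in the result comes from a dimension table through a join.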

The significance of these tables in contemporary data architecture is hard to overstate. As of 2026, more than 90% of medium-to-large enterprises use fact and dimension structures in their data warehouses, illustrating their essential role in efficient data management. Understanding how fact and dimension tables connect is crucial for improving analysis and maintaining data integrity. Ralph Kimball, a pioneer of dimensional modeling, emphasized that fact and dimension tables provide a systematic method for analysis, allowing organizations to extract significant insights from their data. Moreover, the automated monitoring and analytics built into Decube improve data quality and governance, facilitating collaboration across teams. Ultimately, integrating these structures well is vital for organizations aiming to use their data for strategic advantage.

Explore Types of Fact and Dimension Tables

Understanding the various types of fact and dimension tables is crucial for optimizing data warehousing strategies. Each type serves a distinct purpose:

Types of Fact Tables:

- Transactional Fact Tables: These tables capture individual transactions, such as sales or orders, with each row representing a single event. For instance, an e-commerce sales fact table might include fields like customer_id, product_id, date_id, quantity, and revenue.

- Periodic Snapshot Fact Tables: These store data at specific intervals, facilitating trend analysis over time. An example is monthly sales totals, which help businesses track performance across periods.

- Accumulating Snapshot Fact Tables: These tables track the progress of a process over time, such as the status of an order from initiation to completion. They update rows to reflect milestones, making them ideal for workflows like order processing and loan approvals.

- Factless Fact Tables: These record events without associated measures, useful for tracking occurrences such as student attendance in classes, where the focus is on the event itself rather than quantifiable metrics.

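- Bridge Tables: These resolve many-to-many relationships between a fact table and a dimension, such as an account held jointly by several customers, improving the ability to analyze relationships across dimensions. Precomputed summaries such as monthly sales are typically stored in aggregate fact tables rather than in bridge tables.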

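The difference between a transactional and a periodic snapshot fact table can be sketched by rolling individual sales up into monthly totals. This sketch assumes a hypothetical fact_sales_txn table with ISO-formatted date strings and invented sample rows; in practice a snapshot is usually appended once per period by a scheduled load rather than rebuilt in a single statement.

```python
import sqlite3


def build_transactions() -> sqlite3.Connection:
    """Hypothetical transactional fact table: one row per individual sale."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE fact_sales_txn (
            sale_date   TEXT    NOT NULL,  -- ISO 'YYYY-MM-DD'
            product_key INTEGER NOT NULL,
            quantity    INTEGER NOT NULL,
            revenue     REAL    NOT NULL
        );
        INSERT INTO fact_sales_txn VALUES
            ('2025-01-05', 1, 2, 200.0),
            ('2025-01-20', 1, 1, 100.0),
            ('2025-01-21', 2, 3,  90.0),
            ('2025-02-02', 1, 1, 100.0);
        """
    )
    return conn


def build_monthly_snapshot(conn: sqlite3.Connection) -> list:
    """Roll transactions up into a periodic snapshot: one row per month and product."""
    conn.executescript(
        """
        CREATE TABLE fact_monthly_sales_snapshot AS
        SELECT substr(sale_date, 1, 7) AS month,  -- 'YYYY-MM'
               product_key,
               SUM(quantity) AS total_quantity,
               SUM(revenue)  AS total_revenue
        FROM fact_sales_txn
        GROUP BY month, product_key;
        """
    )
    return conn.execute(
        "SELECT month, product_key, total_quantity, total_revenue "
        "FROM fact_monthly_sales_snapshot ORDER BY month, product_key"
    ).fetchall()


if __name__ == "__main__":
    print(build_monthly_snapshot(build_transactions()))
```

The transactional table keeps every event at full detail, while the snapshot trades detail for a fixed, coarser grain that makes period-over-period trends cheap to query.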

Types of Dimension Tables:

- Slowly Changing Dimensions (SCD): These manage changes in dimension attributes over time while preserving historical accuracy. For instance, a customer dimension might need to reflect changes in customer details while keeping the history of earlier values.

- Conformed Dimensions: Shared across multiple fact tables, these ensure consistent reporting. An example is a date dimension used by both sales and inventory fact tables, allowing unified analysis.

- Junk Dimensions: These combine miscellaneous low-cardinality attributes, such as flags and indicators, that do not belong in other dimensions, reducing clutter in the model.

- Role-Playing Dimensions: A single dimension used in several contexts, such as a date dimension serving as both order date and ship date, which adds flexibility in reporting.

- Degenerate Dimensions: These are dimension attributes, such as order_id, stored directly in the fact table with no separate dimension table. They let analysts group related fact rows, for example all line items that belong to the same order.
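A role-playing dimension and a degenerate dimension can be shown together in one small, hypothetical example: the fact table joins to the same dim_date table twice (once as order date, once as ship date) and carries order_id directly, with no dim_order table. All table names, columns, and rows here are illustrative assumptions.

```python
import sqlite3


def build_order_lines() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        -- One physical date dimension...
        CREATE TABLE dim_date (
            date_key  INTEGER PRIMARY KEY,  -- e.g. 20250106
            full_date TEXT NOT NULL,
            weekday   TEXT NOT NULL
        );
        -- ...referenced twice by the fact table, once per role.
        CREATE TABLE fact_order_lines (
            order_id       TEXT    NOT NULL,  -- degenerate dimension: no dim_order table
            order_date_key INTEGER NOT NULL REFERENCES dim_date(date_key),
            ship_date_key  INTEGER NOT NULL REFERENCES dim_date(date_key),
            amount         REAL    NOT NULL
        );
        INSERT INTO dim_date VALUES
            (20250106, '2025-01-06', 'Monday'),
            (20250108, '2025-01-08', 'Wednesday'),
            (20250110, '2025-01-10', 'Friday');
        INSERT INTO fact_order_lines VALUES
            ('SO-1', 20250106, 20250108, 50.0),
            ('SO-1', 20250106, 20250108, 25.0),
            ('SO-2', 20250108, 20250110, 40.0);
        """
    )
    return conn


def order_totals_with_roles(conn: sqlite3.Connection) -> list:
    """Group line items by the degenerate order_id; join dim_date under two aliases."""
    return conn.execute(
        """
        SELECT f.order_id,
               od.weekday    AS order_weekday,  -- dim_date in the order-date role
               sd.weekday    AS ship_weekday,   -- dim_date in the ship-date role
               SUM(f.amount) AS order_total
        FROM fact_order_lines AS f
        JOIN dim_date AS od ON od.date_key = f.order_date_key
        JOIN dim_date AS sd ON sd.date_key = f.ship_date_key
        GROUP BY f.order_id, od.weekday, sd.weekday
        ORDER BY f.order_id
        """
    ).fetchall()


if __name__ == "__main__":
    for row in order_totals_with_roles(build_order_lines()):
        print(row)
```

Aliasing one physical table per role keeps a single source of truth for calendar attributes; some teams instead expose per-role views (for example, an order-date view and a ship-date view) to make the roles explicit to BI tools.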

Understanding these categories is essential for data professionals, because it lets them build models tailored to their analytical needs. Industry specialists stress that classifying fact and dimension tables correctly is a core part of data warehousing and underpins reliable data systems and insightful analytics. Rajesh, writing on the types of fact tables, notes that fact tables store measurable business events and link to dimension tables that provide context. Ultimately, a firm grasp of these classifications can transform how organizations use their data for strategic decision-making.

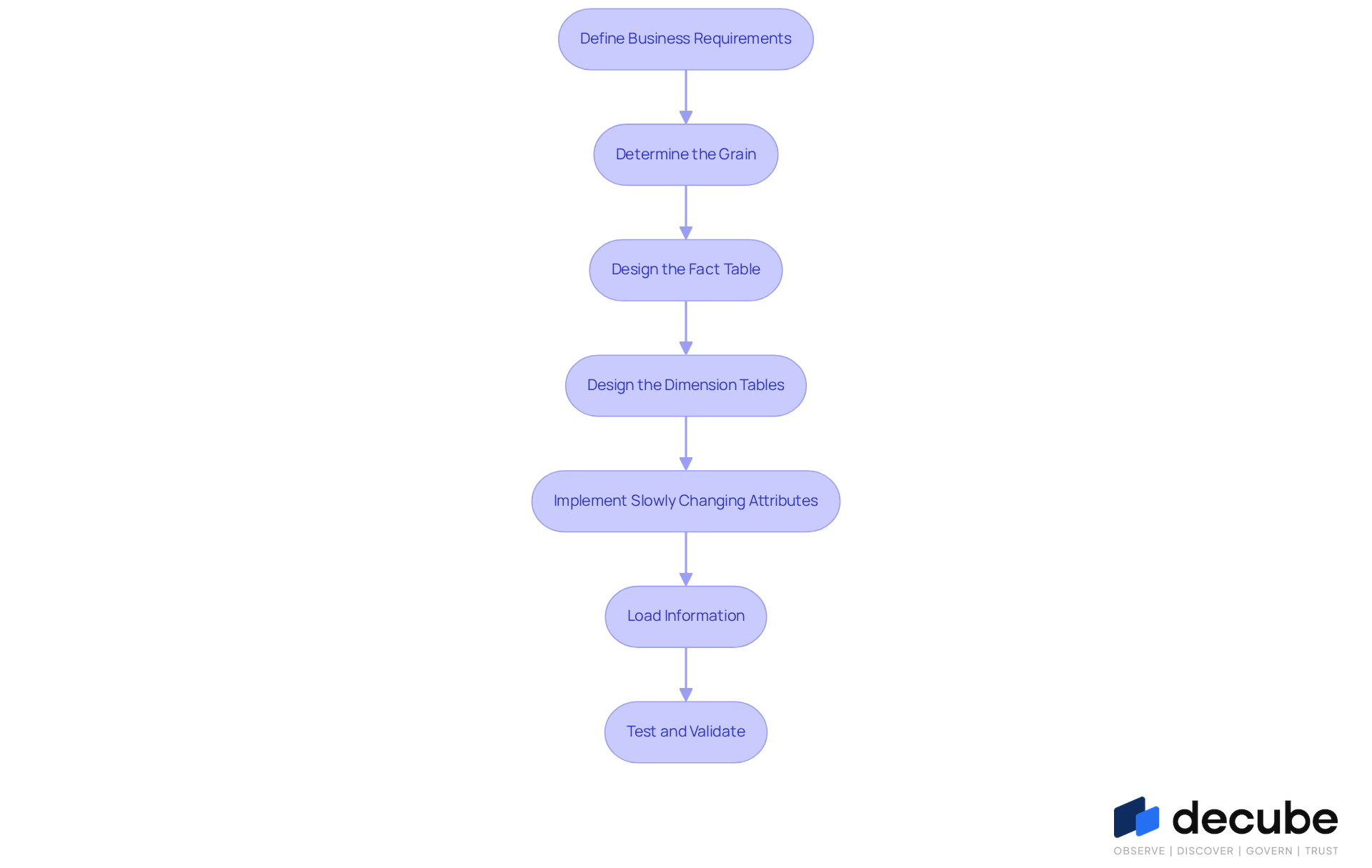

Implement Fact and Dimension Tables in Data Warehousing

Implementing fact and dimension tables requires a systematic approach to ensure effective data analysis:

- Define the Business Requirements: Work with stakeholders to gather comprehensive requirements and identify the key metrics and dimensions needed for analysis.

- Determine the Grain: Establish the level of detail for each fact table. For instance, when tracking sales, decide whether each row represents an individual sale or a daily summary.

- Design the Fact Table: Structure the fact table to include:

- Foreign keys linking to dimension tables.

- Measure columns for quantitative data, ensuring clarity in what each metric represents.

- Design the Dimension Tables: Create dimension tables that encompass:

- Descriptive attributes relevant to the facts, enhancing context for analysis.

- Primary keys that fact tables reference through their foreign keys, ensuring stable relationships.

- Implement Slowly Changing Dimensions (SCD): Choose the appropriate SCD type for each dimension to manage changes in attribute values over time while preserving historical accuracy.

- Load Data: Use ETL (Extract, Transform, Load) processes to populate the fact and dimension tables from source systems, optimizing for efficiency and performance. Decube's automated crawling feature refreshes metadata automatically when sources connect, which streamlines data integration and governance.

- Test and Validate: Test thoroughly to ensure data integrity and accuracy, verifying the relationships between fact and dimension tables. Experts emphasize that well-structured fact and dimension tables significantly reduce query complexity and improve speed, underscoring the importance of careful design. Establishing clear access controls and approval processes also strengthens governance by ensuring that only authorized personnel can modify data.
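The SCD and validation steps above can be sketched as follows. This is a minimal Type 2 approach, assuming a hypothetical dim_customer table with a surrogate key, a natural key (customer_id), validity dates, and an is_current flag: when a tracked attribute changes, the current row is expired and a new version is inserted, so older facts keep pointing at the version that was valid when they were recorded. The orphan check at the end is one simple way to verify fact-to-dimension relationships during testing; all names are illustrative.

```python
import sqlite3


def create_tables(conn: sqlite3.Connection) -> None:
    conn.executescript(
        """
        CREATE TABLE dim_customer (
            customer_key INTEGER PRIMARY KEY AUTOINCREMENT,  -- surrogate key
            customer_id  TEXT    NOT NULL,                   -- natural (business) key
            segment      TEXT    NOT NULL,                   -- attribute tracked as Type 2
            valid_from   TEXT    NOT NULL,
            valid_to     TEXT,                               -- NULL while the row is current
            is_current   INTEGER NOT NULL DEFAULT 1
        );
        CREATE TABLE fact_sales (
            customer_key INTEGER NOT NULL,
            revenue      REAL    NOT NULL
        );
        """
    )


def upsert_customer_scd2(conn, customer_id: str, segment: str, as_of: str) -> int:
    """Return the surrogate key to use for new facts, versioning the row on change."""
    row = conn.execute(
        "SELECT customer_key, segment FROM dim_customer "
        "WHERE customer_id = ? AND is_current = 1",
        (customer_id,),
    ).fetchone()
    if row is not None and row[1] == segment:
        return row[0]  # attribute unchanged: keep using the current version
    if row is not None:
        # Expire the current version instead of overwriting it (preserves history).
        conn.execute(
            "UPDATE dim_customer SET valid_to = ?, is_current = 0 WHERE customer_key = ?",
            (as_of, row[0]),
        )
    cur = conn.execute(
        "INSERT INTO dim_customer (customer_id, segment, valid_from) VALUES (?, ?, ?)",
        (customer_id, segment, as_of),
    )
    return cur.lastrowid


def orphan_fact_rows(conn) -> int:
    """Validation: count fact rows whose foreign key matches no dimension row."""
    return conn.execute(
        "SELECT COUNT(*) FROM fact_sales AS f "
        "LEFT JOIN dim_customer AS d ON d.customer_key = f.customer_key "
        "WHERE d.customer_key IS NULL"
    ).fetchone()[0]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_tables(conn)
    k1 = upsert_customer_scd2(conn, "C-42", "Consumer", "2025-01-01")
    conn.execute("INSERT INTO fact_sales VALUES (?, ?)", (k1, 100.0))
    k2 = upsert_customer_scd2(conn, "C-42", "Enterprise", "2025-06-01")
    conn.execute("INSERT INTO fact_sales VALUES (?, ?)", (k2, 900.0))
    print(conn.execute("SELECT * FROM dim_customer").fetchall())
    print("orphans:", orphan_fact_rows(conn))
```

Type 1 (overwrite in place) would be simpler but would silently re-attribute the earlier 100.0 of revenue to the "Enterprise" segment; Type 2 keeps each fact tied to the attribute values in effect when it occurred.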

By following these steps, data specialists can build robust fact and dimension tables that support effective analysis and high data quality. Regularly reviewing query patterns and performance helps refine the design so the warehouse adapts to evolving business requirements. Ultimately, a robust design not only improves performance but also empowers organizations to make informed decisions based on accurate data.

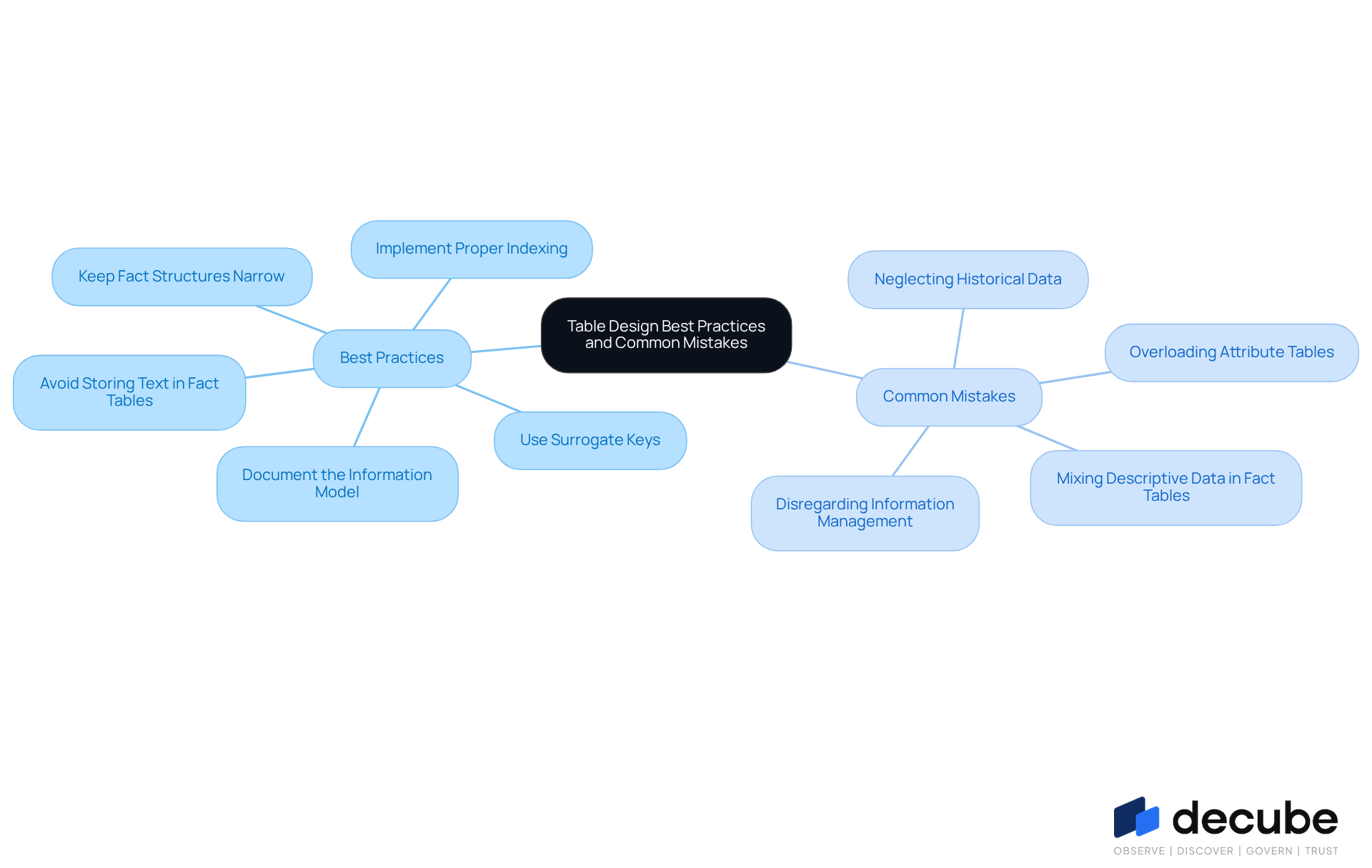

Identify Best Practices and Common Mistakes in Table Design

To ensure optimal performance and data integrity, follow established best practices when structuring fact and dimension tables.

Best Practices:

- Use Surrogate Keys: Surrogate keys in dimension tables guarantee uniqueness independent of source-system keys and improve performance by keeping joins and indexes on compact integer columns.

- Keep Fact Tables Narrow: Limit fact tables to essential measures and foreign keys. This improves query performance and simplifies data management.

- Avoid Storing Text in Fact Tables: Fact tables should hold numeric measures and keys; descriptive attributes belong in dimension tables. This separation keeps the model clear and makes data retrieval more efficient.

- Document the Data Model: Clear documentation of the data model, including definitions of measures and dimensions, makes it easier to understand and maintain. Decube's automated crawling feature simplifies this by refreshing metadata automatically, helping all stakeholders navigate the data structure.

- Implement Proper Indexing: Strategic indexing, especially on the foreign keys in fact tables, improves query performance and speeds up data retrieval.
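Surrogate keys and foreign-key indexing can be illustrated with a hypothetical sqlite3 schema. The dimension uses a compact integer surrogate key while keeping the natural key (sku) as an ordinary unique attribute, and the fact table's foreign keys are indexed. SQLite's EXPLAIN QUERY PLAN is used here only to confirm that a filter on product_key can use the index; other warehouse engines expose query plans differently, and some columnar platforms do not use conventional indexes at all.

```python
import sqlite3


def build_indexed_fact(conn: sqlite3.Connection) -> None:
    conn.executescript(
        """
        CREATE TABLE dim_product (
            product_key INTEGER PRIMARY KEY,   -- compact integer surrogate key
            sku         TEXT NOT NULL UNIQUE,  -- natural key kept as an attribute
            name        TEXT NOT NULL
        );
        CREATE TABLE fact_sales (
            product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
            date_key    INTEGER NOT NULL,
            quantity    INTEGER NOT NULL,
            revenue     REAL    NOT NULL
        );
        -- Index the fact table's foreign keys, which appear in joins and filters.
        CREATE INDEX idx_fact_sales_product ON fact_sales(product_key);
        CREATE INDEX idx_fact_sales_date    ON fact_sales(date_key);
        """
    )


def plan_for_product_lookup(conn: sqlite3.Connection) -> str:
    """Return SQLite's query-plan text for a filter on the product foreign key."""
    rows = conn.execute(
        "EXPLAIN QUERY PLAN SELECT SUM(revenue) FROM fact_sales WHERE product_key = ?",
        (1,),
    ).fetchall()
    # The last column of each plan row is the human-readable detail text.
    return " ".join(str(r[-1]) for r in rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_indexed_fact(conn)
    print(plan_for_product_lookup(conn))
```

Keeping the natural key as a unique attribute, rather than as the join key, lets the warehouse absorb source-system key changes or merges without rewriting fact rows.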

Common Mistakes:

- Mixing Descriptive Data in Fact Tables: Including descriptive attributes in fact tables leads to larger tables and slower queries. To maintain performance, keep fact tables focused on quantitative data and move descriptive attributes into dimension tables.

- Overloading Dimension Tables: Adding excessive attributes to dimension tables complicates queries and can degrade performance. Strike a balance between detail and usability.

- Neglecting Historical Data: Inaccurate reporting can stem from neglecting historical changes in dimensions. Employing Slowly Changing Dimensions (SCD) techniques is essential for maintaining historical accuracy.

- Neglecting Data Governance: Failing to implement data governance practices can cause quality issues and compliance risks, undermining the reliability of the warehouse and the integrity of any analysis built on it. Decube's automated crawling capability provides improved control over who can see or modify data, strengthening governance and integrity.

By following these best practices and avoiding these common pitfalls, data professionals can design efficient data warehouses whose fact and dimension tables align with business needs while safeguarding data quality and reliability.

Conclusion

Organizations that master the concepts of fact and dimension tables position themselves to enhance their data warehousing capabilities significantly. These structures serve as the backbone of effective data analysis, allowing businesses to derive meaningful insights from their data. Understanding the distinct roles of fact tables, which focus on quantitative data, and dimension tables, which provide descriptive context, enables organizations to enhance their analytical processes and decision-making.

Throughout the article, various types of fact and dimension tables were explored, highlighting their specific functions within a data warehouse. From transactional and accumulating snapshot fact tables to slowly changing dimensions and conformed dimensions, each type plays a vital role in maintaining data integrity and facilitating in-depth analysis. Implementing best practices, such as using surrogate keys and avoiding the mixing of descriptive data in fact tables, further ensures that data remains organized and accessible.

Ultimately, the significance of effectively managing fact and dimension tables cannot be overstated. Organizations must prioritize a systematic approach to their data warehousing strategies, employing robust design principles and avoiding common mistakes. As data continues to drive strategic decision-making, mastering these concepts equips organizations to utilize their data with greater effectiveness, fostering a culture of informed insights and operational excellence. Adopting these practices enhances data quality and positions businesses to thrive in a data-driven environment.

Frequently Asked Questions

What are fact tables in data warehousing?

Fact tables are designed to store quantitative data for analysis, such as sales figures or transaction amounts. Each row corresponds to a specific event or transaction and typically contains foreign keys that connect to related dimension records.

What types of data do fact tables contain?

Fact tables contain metrics or measurable events, including transaction amounts, sales figures, and other quantitative data relevant to analysis.

What are dimension tables in data warehousing?

Dimension tables provide descriptive attributes that give context to the data in fact tables. They include details like product names, customer information, and time periods, which help users filter and categorize data for more insightful analysis.

How do dimension tables differ from fact tables?

Dimension tables change more slowly than fact tables and represent relatively stable characteristics such as product categories and customer segments, whereas fact tables contain dynamic, quantitative data about events.

Why is the relationship between fact and dimension tables important?

Understanding the connection between fact and dimension tables is crucial for improving data analysis and maintaining data integrity, which ultimately enhances decision-making in organizations.

What role do automated crawling capabilities play in data warehousing?

Automated crawling capabilities, such as those provided by Decube, help organizations manage and maintain metadata seamlessly, improving data governance and the accuracy of the data in fact tables.

What is the significance of fact and dimension tables in modern information architecture?

More than 90% of medium-to-large enterprises use fact and dimension structures in their data warehouses, highlighting their essential role in efficient data management and strategic data use.

Who is Ralph Kimball and what is his contribution to data warehousing?

Ralph Kimball is a pioneer in dimensional modeling who emphasized the importance of fact and dimension structures in data warehousing for establishing a systematic approach to analysis and extracting insights from data.

List of Sources

Define Fact and Dimension Tables in Data Warehousing

- Is Dimensional Data Modeling Still Relevant in the Modern Data Stack? (https://analytics8.com/blog/is-dimensional-data-modeling-still-relevant-in-the-modern-data-stack)

- Fact Vs. Dimension Tables Explained (https://montecarlodata.com/blog-fact-vs-dimension-tables-in-data-warehousing-explained)

- Fact Table vs. Dimension Table: What’s the Difference? | Built In (https://builtin.com/articles/fact-table-vs-dimension-table)

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Data Engineering Stats 2026: Latest Market Insights & Trends (https://data.folio3.com/blog/data-engineering-stats)

Explore Types of Fact and Dimension Tables

- Fact Tables & Types of Tables in Data Warehousing (https://medium.com/@rajesh_data_ai/fact-tables-types-of-tables-in-data-warehousing-4ca6780de808)

- A Practical Guide to Dimensional Modeling for Data Warehouses (https://oneuptime.com/blog/post/2026-02-13-dimensional-modeling-guide/view)

- Mastering Data Warehouse Modeling for 2026 (https://integrate.io/blog/mastering-data-warehouse-modeling)

- Fact Table vs Dimension Table: Data Warehousing Explained (https://acceldata.io/blog/fact-table-vs-dimension-table-understanding-data-warehousing-components)

Implement Fact and Dimension Tables in Data Warehousing

- A Practical Guide to Dimensional Modeling for Data Warehouses (https://oneuptime.com/blog/post/2026-02-13-dimensional-modeling-guide/view)

- Fact Table vs Dimension Table: Data Warehousing Explained (https://acceldata.io/blog/fact-table-vs-dimension-table-understanding-data-warehousing-components)

- Mastering Data Warehouse Modeling for 2026 (https://integrate.io/blog/mastering-data-warehouse-modeling)

- Data Analytics Enhancement Stats via ETL — 35 Statistics Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-analytics-enhancement-stats-via-etl)

Identify Best Practices and Common Mistakes in Table Design

- How to Build Fact Table Design (https://oneuptime.com/blog/post/2026-01-30-fact-table-design/view)

- A Practical Guide to Dimensional Modeling for Data Warehouses (https://oneuptime.com/blog/post/2026-02-13-dimensional-modeling-guide/view)

- Dimensional Modeling: Facts, Dimensions, and Grains (https://dev.to/alexmercedcoder/dimensional-modeling-facts-dimensions-and-grains-3obm)

- Five Common Dimensional Modeling Mistakes and How to Solve Them (https://red-gate.com/blog/five-common-dimensional-modeling-mistakes-and-how-to-solve-them)
