
Master Data Quality Accuracy: Essential Practices for Data Engineers

Enhance your data quality accuracy with essential practices for data engineers.

Introduction

Data quality accuracy is essential for successful data engineering, yet organizations often struggle to maintain high standards because modern data management is complex. By defining the key components of data quality and implementing effective governance frameworks, data engineers can significantly enhance the reliability of their information systems. Left unaddressed, these challenges lead to significant data inaccuracies that undermine the integrity of those systems.

Define Data Quality: Key Components and Importance

Data quality determines whether information is reliable and suitable for its intended purpose. It rests on five key components: accuracy, completeness, consistency, timeliness, and validity, each of which plays a vital role.

- Accuracy pertains to how closely information values align with the true values or a verified source. For instance, if a customer’s address is recorded incorrectly, it can lead to failed deliveries and customer dissatisfaction. Decube enhances data quality accuracy by automatically refreshing metadata, which minimizes the risk of outdated information.

- Completeness indicates whether all necessary information is present. Missing information can distort analysis and result in erroneous conclusions. With Decube's unified platform, organizations can oversee data quality accuracy and ensure that all necessary elements are accounted for.

- Consistency ensures that information is the same across various datasets. For example, if a customer’s name is spelled differently in two databases, it can create confusion and errors in reporting. Decube's lineage feature provides clarity in information flow, which is essential for maintaining data quality accuracy and assisting teams in sustaining consistency across datasets.

- Timeliness refers to the information being current and accessible when required. Outdated information can lead to decisions based on inaccurate details. Decube's automated monitoring capabilities help maintain data quality accuracy by keeping information current and accessible.

- Validity checks whether the information conforms to defined formats or standards, such as ensuring that dates are in the correct format.
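As a minimal sketch of two of the dimensions above, the snippet below checks accuracy against a verified source and consistency across two systems. The sample data and the names `verified_addresses`, `crm`, and `billing` are hypothetical, chosen only for illustration; real checks would read from actual systems.

```python
# Hypothetical sample data: a verified reference source and two systems.
verified_addresses = {"c1": "12 Elm St", "c2": "9 Oak Ave"}
crm = {
    "c1": {"name": "Ana Diaz", "address": "12 Elm St"},
    "c2": {"name": "Bo Li", "address": "90 Oak Ave"},
}
billing = {
    "c1": {"name": "Ana Diaz"},
    "c2": {"name": "Bo Lee"},
}


def is_accurate(customer_id):
    """Accuracy: the recorded address matches the verified source."""
    return crm[customer_id]["address"] == verified_addresses[customer_id]


def is_consistent(customer_id):
    """Consistency: the customer name is identical in both systems."""
    return crm[customer_id]["name"] == billing[customer_id]["name"]


report = {
    customer_id: {
        "accurate": is_accurate(customer_id),
        "consistent": is_consistent(customer_id),
    }
    for customer_id in crm
}
```

Here customer `c2` fails both checks: the address differs from the verified source, and the name is spelled differently in the two systems.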

Organizations should recognize the importance of having a cross-functional governance team to manage their information integrity strategy. This governance is crucial for upholding accountability and ensuring that information improvement initiatives align with business goals. Moreover, studies indicate that companies committed to information integrity can attract significantly higher investments, underscoring its financial impact.

However, organizations often struggle with accountability, which can result in unnoticed mistakes in information management. Tackling these challenges is essential for efficient information management and maintaining data integrity. Emerging aspects of information integrity, including conformity, currency, and accessibility, should also be taken into account to provide a comprehensive overview of information standards.

By prioritizing these elements, organizations can significantly enhance their decision-making processes and operational efficiency.

Utilize Data Quality Metrics: Tools for Measurement and Improvement

To enhance data quality accuracy, data engineers must leverage essential metrics and advanced platforms for effective governance and observability. Key metrics include:

- Error Rate: This metric tracks the number of errors in a dataset relative to the total number of records. A high error rate signals an urgent need for corrective actions, as organizations with high error rates often face significant operational challenges. Decube's ML-powered tests automate the detection of such errors, ensuring timely interventions.

- Completeness Ratio: This measures the proportion of complete records against the total number of records, highlighting the critical need for thorough data collection. Incomplete data can disrupt integration and result in expensive penalties, as seen with JPMorgan Chase's USD 350 million fine. Decube's data contract features enhance collaboration and accountability, supporting completeness in data management.

- Timeliness Metrics: These evaluate how swiftly information is updated and made accessible for use. For instance, measuring the time taken to update customer records can reveal inefficiencies that hinder decision-making. Frequent updates are crucial; 85% of companies associate poor decision-making with outdated information. Decube's smart alerts inform teams of any delays or issues in information freshness, facilitating prompt action.

- Consistency Checks: Regularly comparing information across different systems helps identify discrepancies that need addressing. Inconsistent information can lead to confusion and errors, so standardization is needed to ensure reliability. Decube's metadata extraction and data profiling features support these checks.

- Data Validation Rules: Implementing automated validation rules during data entry can prevent errors from occurring in the first place. This proactive method is crucial for preserving high data standards and trust, and the platform enables the smooth incorporation of such rules into current workflows.
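The first three metrics can be sketched as simple ratios. This is an illustrative sketch, not any particular platform's implementation: the function names, the sample records, the 24-hour staleness threshold, and the rule that an email without "@" counts as an error are all assumptions.

```python
from datetime import datetime, timedelta, timezone


def completeness_ratio(records, required_fields):
    """Share of records in which every required field is populated."""
    complete = sum(
        all(record.get(field) not in (None, "") for field in required_fields)
        for record in records
    )
    return complete / len(records)


def error_rate(records, is_error):
    """Share of records flagged by the supplied error predicate."""
    return sum(1 for record in records if is_error(record)) / len(records)


def stale_ratio(records, now, max_age):
    """Share of records last updated longer ago than max_age."""
    return sum(1 for r in records if now - r["updated_at"] > max_age) / len(records)


def has_malformed_email(record):
    """Assumed error rule: an email is present but contains no '@'."""
    email = record.get("email")
    return email is not None and "@" not in email


# Hypothetical sample records with a fixed reference time.
now = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
records = [
    {"id": 1, "email": "a@example.com", "updated_at": now - timedelta(hours=1)},
    {"id": 2, "email": None, "updated_at": now - timedelta(hours=30)},
    {"id": 3, "email": "not-an-email", "updated_at": now - timedelta(hours=2)},
    {"id": 4, "email": "d@example.com", "updated_at": now - timedelta(hours=3)},
]

completeness = completeness_ratio(records, ("id", "email", "updated_at"))  # 0.75
errors = error_rate(records, has_malformed_email)                          # 0.25
stale = stale_ratio(records, now, timedelta(hours=24))                     # 0.25
```

Tracking these ratios over time, rather than as one-off numbers, is what makes a rising error rate or a growing share of stale records visible.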

By implementing these metrics, organizations can not only safeguard their data integrity but also improve data quality accuracy, drive operational excellence, and ensure compliance. Organizations like Smava and HIVED have effectively adopted such metrics, achieving zero downtime and 99.9% pipeline reliability, respectively, demonstrating the success of a structured strategy for information management.

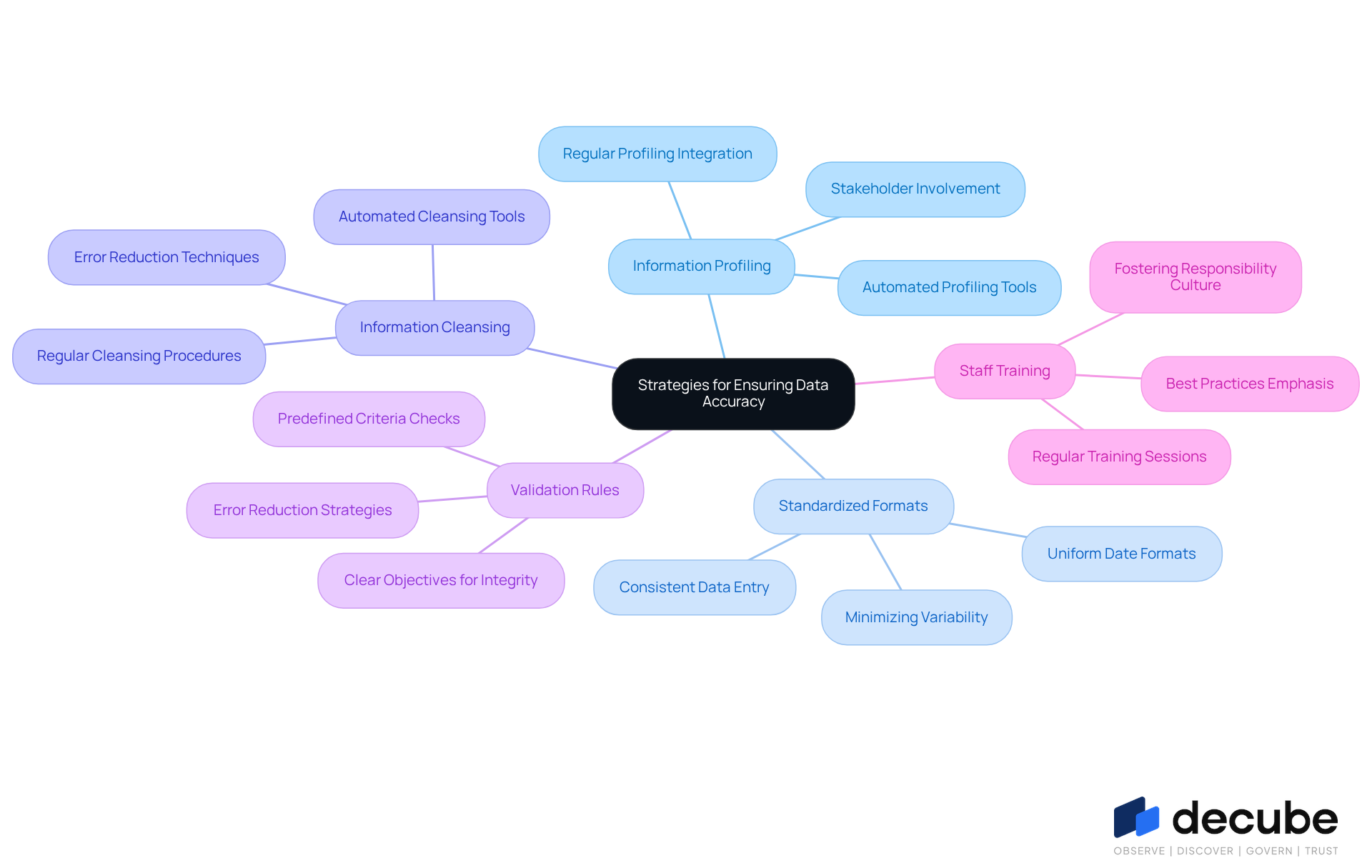

Implement Best Practices: Strategies for Ensuring Data Accuracy

To keep data precise and trustworthy, organizations must regularly examine its structure and its conformance to standards. Decube's ML-powered tests allow companies to automate profiling, providing real-time insights into data quality accuracy. Data profiling should be integrated at the beginning of any project and repeated regularly, because it is essential for managing high-quality data. This approach enables organizations to base decisions on insights that reflect the actual state of their data.
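To make the idea of profiling concrete, here is a minimal sketch that summarizes a single column. The function `profile_column` and the sample rows are hypothetical; dedicated profiling tools compute far richer statistics.

```python
def profile_column(rows, column):
    """Summarize one column: row count, nulls, distinct values, and range."""
    values = [row.get(column) for row in rows]
    present = [value for value in values if value is not None]
    return {
        "count": len(values),
        "nulls": len(values) - len(present),
        "distinct": len(set(present)),
        "min": min(present) if present else None,
        "max": max(present) if present else None,
    }


# Hypothetical sample rows.
rows = [
    {"amount": 10, "country": "DE"},
    {"amount": None, "country": "DE"},
    {"amount": 25, "country": "GB"},
]

amount_profile = profile_column(rows, "amount")
country_profile = profile_column(rows, "country")
```

Comparing such a profile between runs is one simple way to notice drift, for example a sudden jump in nulls or an unexpected new distinct value.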

Implementing standardized formats for information entry minimizes variability and mistakes. For instance, using a consistent date format across all datasets can prevent confusion and enhance information integrity. Decube's automated monitoring capabilities ensure that standardization techniques, such as uniformity in information types and structures, are maintained, significantly improving data quality accuracy and facilitating smoother integration processes. Studies indicate that organizations implementing standardization practices see accuracy metrics improve by up to 25%.
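The date-format example can be sketched as a small normalizer that converts several accepted input formats to ISO 8601. The list of accepted formats is an assumption; in particular, reading `05/03/2024` as day-first is a choice that must match the data's origin.

```python
from datetime import datetime

# Assumed input formats, tried in order. Day-first is an assumption.
KNOWN_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")


def to_iso_date(text, formats=KNOWN_FORMATS):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in formats:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


raw_dates = ["2024-03-05", "05/03/2024", " 05.03.2024 ", "March fifth"]
standardized = [to_iso_date(value) for value in raw_dates]
```

Returning `None` for unrecognized input, instead of guessing, keeps unparseable values visible so they can be reviewed rather than silently stored in the wrong format.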

Establishing procedures for regular data cleansing is essential to achieve data quality accuracy: eliminating duplicates, correcting inaccuracies, and filling in missing values. Automated tools, such as those provided by Decube, simplify this process and help preserve data integrity over time. Effective data cleansing can substantially improve data quality accuracy and overall operational efficiency. For example, businesses utilizing automated cleansing tools report a decrease in data errors of as much as 30%.
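The three cleansing steps named above can be sketched in a single pass. The function `cleanse`, the choice of `email` as the deduplication key, and the `"UNKNOWN"` default are hypothetical and only illustrate the pattern.

```python
def cleanse(records, key="email", defaults=None):
    """Normalize the key, drop duplicates on it, and fill missing fields."""
    defaults = defaults or {}
    seen = set()
    cleaned = []
    for record in records:
        # Correct inconsistent casing and stray whitespace in the key.
        normalized = record[key].strip().lower()
        if normalized in seen:
            continue  # duplicate of a record that was already kept
        seen.add(normalized)
        fixed = dict(record, **{key: normalized})
        # Fill missing values with the supplied defaults.
        for field, default in defaults.items():
            if fixed.get(field) in (None, ""):
                fixed[field] = default
        cleaned.append(fixed)
    return cleaned


# Hypothetical sample input.
raw = [
    {"email": " Ana@Example.com ", "country": "DE"},
    {"email": "ana@example.com", "country": None},
    {"email": "bo@example.com", "country": None},
]

cleaned = cleanse(raw, defaults={"country": "UNKNOWN"})
```

This sketch keeps the first record seen for each key; which duplicate should win is a business decision that a real cleansing job needs to state explicitly.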

Validation rules that verify data against predefined criteria at the point of entry reduce the number of errors introduced into the system, so that only high-quality data is captured. By setting clear objectives for data integrity, organizations can better align their management practices with business goals. As Tom Myers emphasizes, involving business stakeholders in defining these criteria is crucial for success.
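Entry-time validation can be sketched as a set of named rules applied to each incoming record. The rule names, the field names, and the thresholds below are assumptions for illustration; actual criteria would be defined together with business stakeholders.

```python
import re
from datetime import datetime


def _is_iso_date(value):
    """True if value is a string in YYYY-MM-DD form."""
    try:
        datetime.strptime(value, "%Y-%m-%d")
        return True
    except (TypeError, ValueError):
        return False


# Assumed rules: each maps a rule name to a predicate over the record.
RULES = {
    "email_format": lambda r: isinstance(r.get("email"), str)
    and re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", r["email"]) is not None,
    "age_range": lambda r: isinstance(r.get("age"), int) and 0 <= r["age"] <= 130,
    "signup_date_iso": lambda r: _is_iso_date(r.get("signup_date")),
}


def violations(record):
    """Return the names of all rules the record breaks (empty if valid)."""
    return [name for name, rule in RULES.items() if not rule(record)]


good = {"email": "ana@example.com", "age": 34, "signup_date": "2024-03-05"}
bad = {"email": "ana@", "age": 200, "signup_date": "05/03/2024"}

good_result = violations(good)
bad_result = violations(bad)
```

Returning the list of broken rules, rather than a bare pass or fail, lets the entry form or ingestion job tell the user exactly what to correct.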

Regular training sessions for staff involved in information management emphasize the importance of maintaining information integrity and the best practices to achieve it. Involving employees in the information accuracy process fosters a culture of responsibility and thoroughness, ultimately resulting in more reliable and actionable insights. Organizations that prioritize training report greater adherence to information standards and improved information handling practices.

By applying these strategies, companies can foster a strong culture of data quality accuracy that permeates all tiers of information management, leading to improved reliability of insight-driven conclusions. Ultimately, a commitment to these practices can transform the quality of insights derived from data management.

Establish Governance Framework: Ensuring Continuous Data Quality

To maintain high data quality, organizations must implement a comprehensive data governance framework that addresses several critical components:

- Roles and Responsibilities: Clearly defining roles for data stewardship is essential for accountability in data quality across the organization. Designating owners accountable for particular datasets ensures that there is clear ownership and responsibility, which is vital for upholding high standards. In practice, organizations that establish defined roles frequently observe a significant enhancement in information integrity and adherence to standards.

- Policies and Procedures: It is crucial to create and document guidelines that outline information standards, handling processes, and compliance requirements. This documentation aligns all team members in their approach to information management, fostering a unified strategy that enhances information integrity. Additionally, incorporating an approval flow for viewing or editing information can further strengthen governance practices.

- Regular Audits: Regular evaluations of information integrity are vital for assessing adherence to standards and identifying areas for improvement. This proactive strategy assists organizations in recognizing issues before they escalate, ultimately lowering operational risks and enhancing data quality accuracy.

- Feedback Mechanisms: Creating channels for user feedback is crucial for the ongoing improvement of information management practices. Regular surveys or check-ins can help identify data-related challenges and facilitate discussions on potential improvements, ensuring that the governance framework evolves with user needs.

- Technology Integration: Utilizing technological solutions that assist in information governance, such as automated monitoring tools, can significantly improve information management. These tools offer immediate alerts to information integrity problems, allowing teams to react quickly and efficiently.
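Several of these components (named owners, documented thresholds, regular audits, and alerts) can be expressed together in code. The sketch below is one hypothetical way to do so; the `DatasetPolicy` class, the owner names, and the thresholds are illustrative assumptions, not a description of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetPolicy:
    owner: str                 # accountable data steward or team
    min_completeness: float    # documented minimum completeness ratio
    max_staleness_hours: int   # documented freshness limit


# Hypothetical policy registry.
POLICIES = {
    "customers": DatasetPolicy("crm-team", 0.98, 24),
    "orders": DatasetPolicy("sales-ops", 0.95, 6),
}


def audit(measurements, policies=POLICIES):
    """Compare measurements with policy and return (owner, message) alerts."""
    alerts = []
    for dataset, policy in policies.items():
        observed = measurements.get(dataset)
        if observed is None:
            alerts.append((policy.owner, f"{dataset}: no measurements reported"))
            continue
        if observed["completeness"] < policy.min_completeness:
            alerts.append((policy.owner, f"{dataset}: completeness below policy"))
        if observed["staleness_hours"] > policy.max_staleness_hours:
            alerts.append(
                (
                    policy.owner,
                    f"{dataset}: staleness {observed['staleness_hours']}h "
                    f"exceeds {policy.max_staleness_hours}h",
                )
            )
    return alerts


measurements = {"customers": {"completeness": 0.99, "staleness_hours": 30}}
alerts = audit(measurements)
```

Because every alert carries the owner from the policy, each finding is routed to someone accountable, which is the point of defining roles in the first place.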

By establishing a robust governance framework, organizations can ensure that data quality accuracy is not only maintained but continuously improved. This proactive approach not only safeguards data quality but also cultivates a culture of continuous improvement and accountability in data management.

Conclusion

Ensuring data quality accuracy is essential for reliable insights and effective decision-making in organizations. By focusing on the key components of data quality - accuracy, completeness, consistency, timeliness, and validity - data engineers can create a robust framework that supports high-quality data practices. This commitment enhances operational efficiency and ensures that their data management initiatives align with overarching business goals.

The article emphasizes the importance of utilizing data quality metrics and implementing best practices to drive continuous improvement. Metrics such as error rates, completeness ratios, and timeliness evaluations provide valuable insights into data integrity, while strategies like regular data cleansing, standardized formats, and staff training foster a culture of accountability and precision. Furthermore, establishing a comprehensive governance framework ensures that these practices are consistently upheld, allowing organizations to adapt and respond to evolving data challenges.

Neglecting data quality can lead to misguided decisions and operational setbacks, which makes prioritizing it a strategic imperative that significantly influences an organization's success. By adopting these best practices and continuously refining their approach to data management, organizations can navigate the complexities of the data landscape, maximize the value derived from their data assets, and reach more informed decisions and better business outcomes.

Frequently Asked Questions

What are the key components of data quality?

The key components of data quality include accuracy, completeness, consistency, timeliness, and validity.

Why is accuracy important in data quality?

Accuracy is important because it ensures that information values align closely with true values or verified sources, preventing issues such as failed deliveries and customer dissatisfaction.

What does completeness in data quality refer to?

Completeness refers to whether all necessary information is present. The absence of information can distort analysis and lead to erroneous conclusions.

How does consistency affect data quality?

Consistency ensures that information is the same across various datasets. Inconsistencies, such as different spellings of a customer's name in different databases, can create confusion and reporting errors.

What is timeliness in the context of data quality?

Timeliness refers to the information being current and accessible when required. Outdated information can lead to decisions based on inaccurate details.

What does validity mean in relation to data quality?

Validity checks whether information conforms to defined formats or standards, such as ensuring that dates are in the correct format.

Why is a cross-functional governance team important for data quality?

A cross-functional governance team is crucial for managing information integrity, upholding accountability, and ensuring that information improvement initiatives align with business goals.

What challenges do organizations face regarding data quality?

Organizations often struggle with accountability, which can lead to unnoticed mistakes in information management, affecting data integrity.

How can organizations enhance their decision-making processes?

By prioritizing elements such as accuracy, completeness, consistency, timeliness, and validity, organizations can significantly enhance their decision-making processes and operational efficiency.

