
How to Test Data Quality: A Step-by-Step Guide for Data Engineers

Learn effective methods and tools for testing data quality in this comprehensive guide.

Introduction

Understanding the integrity of data is crucial in the current data-driven landscape, where decisions rely heavily on the accuracy and reliability of information. This guide explores the fundamental principles of data quality, giving data engineers the knowledge they need to implement effective testing strategies. Organizations pursuing flawless data frequently run into challenges such as integration issues and shifting standards. So how can data engineers ensure that their data not only meets quality benchmarks but also adapts to the evolving needs of their organizations?

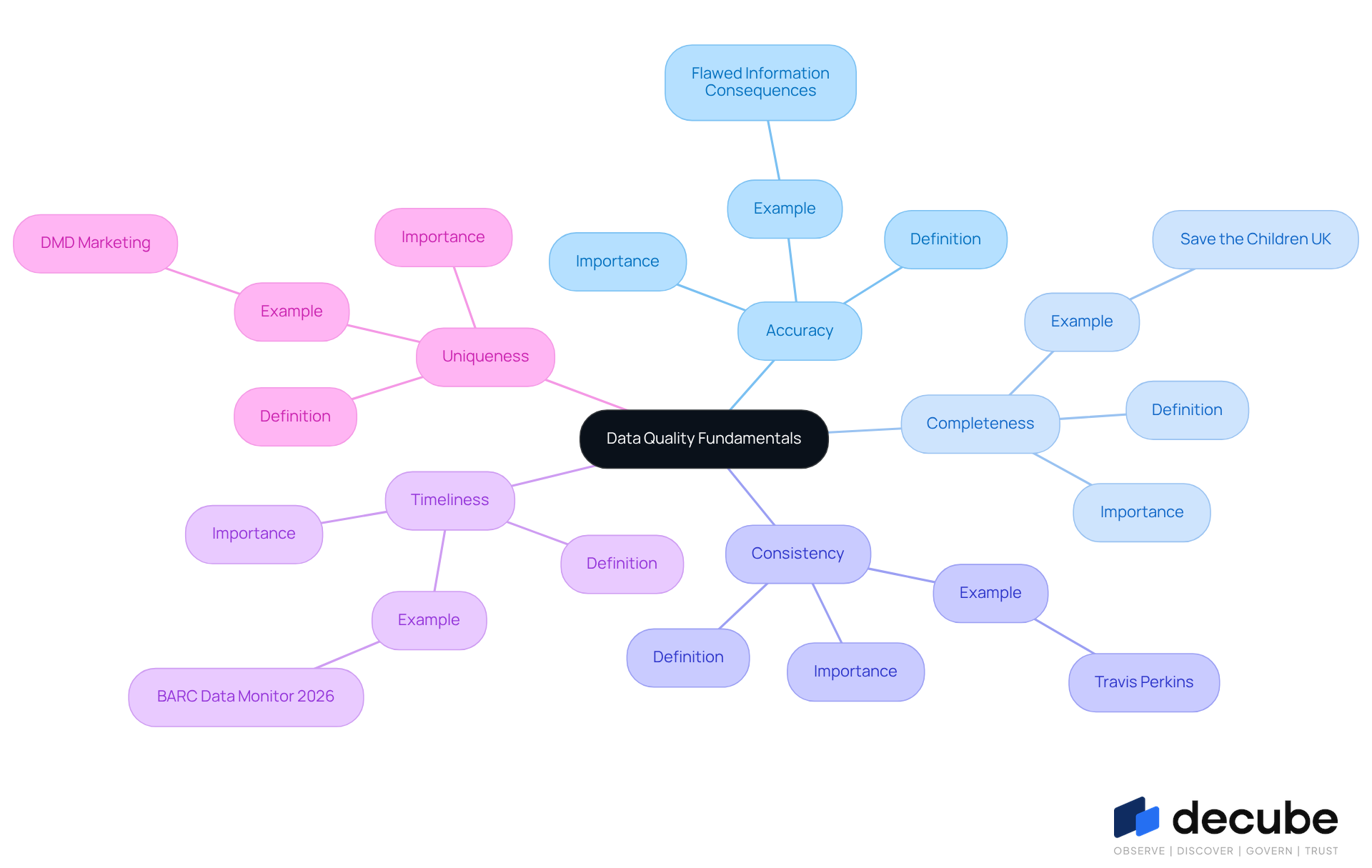

Understand Data Quality Fundamentals

Understanding the essential aspects of data quality is crucial for effective testing and oversight. Data quality is characterized by several key attributes:

- Accuracy: Data must accurately reflect the real-world entities it describes. Flawed information can lead to misguided decisions, underscoring the necessity for rigorous validation processes.

- Completeness: All necessary information should be present; missing details can result in flawed conclusions. Organizations such as Save the Children UK have demonstrated that enhancing information completeness can significantly improve reporting and insights into donor behavior.

- Consistency: Data should remain consistent across various datasets and systems. For example, Travis Perkins improved their online sales by standardizing product information, resulting in a 30% increase in website conversions.

- Timeliness: Data must be current and relevant to the context in which it is utilized. Timely information is essential for making informed decisions.

- Uniqueness: Each record should be unique, with no duplicates. Prioritizing information uniqueness enhances targeting accuracy; one organization that did so achieved a mail deliverability rate of 95%.

Familiarizing yourself with these dimensions will enable you to identify potential issues within your datasets and learn how to test data quality effectively. As experts emphasize, grasping these principles is vital for organizations aiming to leverage information as a strategic resource in 2026.
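Some of these dimensions can be turned into simple scores. The following is a minimal sketch in plain Python, assuming records are held as a list of dictionaries; the field names (`id`, `email`, `updated_at`), the sample rows, and the one-day freshness window are hypothetical and chosen only for illustration. Accuracy and consistency are left out because they need a trusted reference or a second system to compare against.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical sample records; field names and values are illustrative only.
NOW = datetime(2026, 1, 15, tzinfo=timezone.utc)
records = [
    {"id": 1, "email": "a@example.com", "updated_at": NOW - timedelta(hours=2)},
    {"id": 2, "email": None, "updated_at": NOW - timedelta(days=3)},
    {"id": 2, "email": "b@example.com", "updated_at": NOW - timedelta(hours=5)},
    {"id": 4, "email": "c@example.com", "updated_at": NOW - timedelta(days=10)},
]


def completeness(rows, field):
    """Share of rows where `field` is present and not None."""
    if not rows:
        return 1.0
    filled = sum(1 for r in rows if r.get(field) is not None)
    return filled / len(rows)


def uniqueness(rows, key):
    """Ratio of distinct `key` values to rows (1.0 means no duplicates)."""
    if not rows:
        return 1.0
    return len({r[key] for r in rows}) / len(rows)


def timeliness(rows, field, max_age, now):
    """Share of rows whose `field` timestamp is within `max_age` of `now`."""
    if not rows:
        return 1.0
    fresh = sum(1 for r in rows if now - r[field] <= max_age)
    return fresh / len(rows)


report = {
    "completeness(email)": completeness(records, "email"),
    "uniqueness(id)": uniqueness(records, "id"),
    "timeliness(updated_at, 1 day)": timeliness(
        records, "updated_at", timedelta(days=1), NOW
    ),
}
for name, score in report.items():
    print(f"{name}: {score:.2f}")
```

Scores like these are only a starting point; what counts as an acceptable value depends on how the dataset is used.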

Identify Tools for Data Quality Testing

Choosing the appropriate tools for data quality testing is essential for successful execution. Here are some popular tools to consider, along with their release years where available and their distinguishing features:

- Great Expectations (2017): An open-source tool that allows users to define expectations for their data and validate them, fostering a culture of accountability for data quality.

- Deequ (2018): An open-source library from AWS Labs, built on Apache Spark, for defining 'unit tests' for data, ensuring integrity in large-scale environments by automating checks and validations.

- Talend: A comprehensive integration tool that includes robust features for ensuring accuracy, making it suitable for organizations aiming to streamline their workflows.

- Monte Carlo (2019): A data observability platform that helps monitor data quality in real time, enabling teams to swiftly recognize and resolve issues as they arise.

- Atlan: A collaborative data workspace that integrates quality checks into workflows, promoting teamwork and efficiency.

- Decube: A solution that enhances data quality and governance through its intuitive design. Users appreciate its seamless integration and its ability to uphold trust in the information. The platform's lineage feature provides transparency, helping ensure that information remains accurate and consistent, which is crucial for decision-making. Testimonials highlight how Decube has improved data quality and operational effectiveness, establishing it as a strong candidate for organizations seeking to enhance their data quality.

When selecting a tool, consider factors such as the size of your data, the complexity of your pipelines, and your team's expertise. Organizations often face challenges such as integration issues, gaps in ownership, and governance when deploying these tools. Connecting data quality to governance is vital, as it enhances accountability and compliance across systems. Insights from engineers about their experiences with these tools can also inform your selection process. For instance, organizations like Vimeo have integrated validation directly into their workflows, thereby improving data quality and operational efficiency.

Execute Data Quality Tests Methodically

To execute data quality tests effectively, follow these methodical steps:

- Define Your Objectives: Clearly outline your goals for testing, such as identifying missing values, ensuring consistency, or validating accuracy. This foundational step anchors the rest of your testing strategy. As Idan Novogroder emphasizes, a workflow that focuses on quality must include criteria for monitoring quality.

- Select Appropriate Tests: Choose tests that align with your objectives. Common tests include:

  - Null Value Checks: Identify and quantify missing data points, which can significantly impact analysis.

  - Uniqueness Tests: Ensure that no duplicate records exist, as duplicates can distort insights and lead to erroneous conclusions.

  - Referential Integrity Tests: Confirm that relationships between datasets are upheld, ensuring information coherence across systems.

  - Regex Match Tests: Validate that values conform to specified patterns, enhancing integrity.

  - Cardinality Tests: Evaluate the number of distinct values in a column, ensuring the expected distribution of information.

  With Decube, thresholds for these checks are automatically identified once your data source is connected, streamlining the process.

- Automate Where Possible: Leverage tools like Decube to automate testing. Decube's smart alerts group notifications to prevent overwhelming your team, delivering them directly to your email or Slack. Automation not only decreases manual effort but also improves reliability through consistent oversight of data quality. As highlighted by industry specialists, manual checks are time-intensive and susceptible to mistakes, which makes automation crucial for scalable data management.

- Document Your Findings: Maintain a comprehensive record of test results and any noted problems. This documentation builds a history over time and offers a foundation for accountability within your framework. Notably, 74% of survey participants indicated that business stakeholders recognize problems first, which underscores the need for teamwork in addressing these challenges.

- Iterate and Enhance: Use your findings to refine data standards and tests. Continuous improvement is key; addressing recurring issues promptly can prevent larger problems down the line. Involving team members from various departments in this iterative process can improve accountability and promote a culture of awareness regarding data quality. Decube also enables you to verify discrepancies between datasets, further supporting this iterative enhancement.
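Verifying discrepancies between two datasets can be illustrated with a small reconciliation routine. This is a minimal sketch in plain Python, not a description of how Decube implements the feature; the function name `reconcile`, the `order_id` key, and the sample rows are hypothetical, and the key is assumed to be unique within each dataset.

```python
def reconcile(source_rows, target_rows, key):
    """Compare two datasets by `key` and report where they disagree.

    Assumes `key` is unique within each dataset; if it is not, later rows
    silently overwrite earlier ones in the lookup dictionaries.
    """
    source = {r[key]: r for r in source_rows}
    target = {r[key]: r for r in target_rows}

    mismatched = {}
    for k in sorted(source.keys() & target.keys()):
        # Collect every field whose value differs between the two copies.
        fields = sorted(
            f
            for f in source[k].keys() | target[k].keys()
            if source[k].get(f) != target[k].get(f)
        )
        if fields:
            mismatched[k] = fields

    return {
        "missing_in_target": sorted(source.keys() - target.keys()),
        "missing_in_source": sorted(target.keys() - source.keys()),
        "mismatched": mismatched,
    }


# Hypothetical example: a warehouse table versus a downstream reporting copy.
warehouse = [
    {"order_id": 1, "amount": 100},
    {"order_id": 2, "amount": 250},
    {"order_id": 3, "amount": 75},
]
reporting = [
    {"order_id": 1, "amount": 100},
    {"order_id": 2, "amount": 205},
    {"order_id": 4, "amount": 60},
]

diff = reconcile(warehouse, reporting, "order_id")
print(diff)
```

Recurring entries in a report like this are good candidates for new or tightened tests in the next iteration.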

By following these steps, data engineers can establish a robust framework for data quality testing, ultimately leading to more dependable outcomes and informed decision-making. Inadequate data quality can carry considerable financial consequences, with organizations forfeiting an average of 31% of affected revenue due to data quality concerns, so effective data testing deserves emphasis.
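The common tests listed in the steps above (null value, uniqueness, referential integrity, regex match, and cardinality checks) can be sketched in plain Python as follows. The tables, column names, and the `order_id` pattern are hypothetical; in practice these checks would more likely be expressed in SQL or in one of the tools discussed earlier.

```python
import re
from collections import Counter


def null_check(rows, field):
    """Return the number of rows where `field` is missing or None."""
    return sum(1 for r in rows if r.get(field) is None)


def duplicate_values(rows, field):
    """Return non-null values of `field` that appear more than once."""
    counts = Counter(r[field] for r in rows if r.get(field) is not None)
    return sorted(v for v, n in counts.items() if n > 1)


def referential_integrity(child_rows, fk, parent_rows, pk):
    """Return foreign-key values in the child rows with no matching parent."""
    parents = {r[pk] for r in parent_rows}
    children = {r[fk] for r in child_rows if r.get(fk) is not None}
    return sorted(children - parents)


def regex_mismatches(rows, field, pattern):
    """Return non-null values of `field` that do not fully match `pattern`."""
    compiled = re.compile(pattern)
    return [
        r[field]
        for r in rows
        if r.get(field) is not None and not compiled.fullmatch(str(r[field]))
    ]


def cardinality(rows, field):
    """Return the number of distinct non-null values of `field`."""
    return len({r[field] for r in rows if r.get(field) is not None})


# Hypothetical sample tables for illustration.
customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": "A-001", "customer_id": 1, "status": "paid"},
    {"order_id": "A-002", "customer_id": 2, "status": None},
    {"order_id": "A-002", "customer_id": 3, "status": "paid"},
    {"order_id": "bad id", "customer_id": 1, "status": "refunded"},
]

results = {
    "null_status": null_check(orders, "status"),
    "duplicate_order_ids": duplicate_values(orders, "order_id"),
    "orphan_customer_ids": referential_integrity(
        orders, "customer_id", customers, "customer_id"
    ),
    "malformed_order_ids": regex_mismatches(orders, "order_id", r"[A-Z]-\d{3}"),
    "status_cardinality": cardinality(orders, "status"),
}
for check, outcome in results.items():
    print(f"{check}: {outcome}")
```

Keeping each check as a small function that returns the offending values, rather than a bare pass or fail, makes the results easier to document and to act on.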

Monitor and Maintain Data Quality Continuously

To ensure ongoing data quality, implement the following strategies:

- Establish Continuous Monitoring: Utilize tools that provide real-time monitoring. This enables immediate detection of issues as they arise. Real-time validation significantly reduces the mean time to resolve data incidents, ensuring that clean data flows through operational and analytical systems.

- Set Up Alerts: Configure notifications for breaches of data quality standards, allowing for prompt action. Timely alerts ensure that engineers can address issues swiftly and uphold data quality.

- Conduct Regular Audits: Arrange routine assessments of your data quality processes and outcomes to identify areas for enhancement. Regular evaluations are crucial for sustaining quality and can help organizations adapt to evolving business requirements.

- Engage Stakeholders: Involve data owners and users in discussions about data quality to ensure that everyone understands their role in maintaining it. Engaging stakeholders outside the analytics team enhances accountability for metrics and fosters a culture of responsibility.

- Enhance Procedures: Continuously evaluate and improve your data quality processes based on feedback and findings from audits and monitoring. This iterative approach allows organizations to adapt their strategies over time, ultimately leading to improved decision-making and operational efficiency.
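The monitoring and alerting strategies above can be sketched as a small threshold check. This is a minimal illustration in plain Python, assuming each metric has a short history of past values; the bounds rule (mean ± 3 sample standard deviations), the function names, and the sample metrics are assumptions made for this example and do not describe how any particular tool derives thresholds or groups notifications.

```python
from collections import defaultdict
from statistics import mean, stdev


def derive_bounds(history, k=3.0):
    """Derive (low, high) bounds as mean ± k sample standard deviations.

    `history` must contain at least one value; with a single value the
    bounds collapse to that value.
    """
    mu = mean(history)
    sigma = stdev(history) if len(history) > 1 else 0.0
    return mu - k * sigma, mu + k * sigma


def find_breaches(latest, history_by_metric, k=3.0):
    """Return (dataset, metric, value, low, high) for out-of-bounds metrics."""
    breaches = []
    for (dataset, metric), value in latest.items():
        low, high = derive_bounds(history_by_metric[(dataset, metric)], k)
        if not low <= value <= high:
            breaches.append((dataset, metric, value, low, high))
    return breaches


def group_alerts(breaches):
    """Group breaches by dataset so each dataset yields one notification."""
    grouped = defaultdict(list)
    for dataset, metric, value, low, high in breaches:
        grouped[dataset].append(f"{metric}={value} outside [{low:.1f}, {high:.1f}]")
    return {dataset: "; ".join(msgs) for dataset, msgs in grouped.items()}


# Hypothetical metric history and latest observations.
history = {
    ("orders", "row_count"): [1000, 1020, 990, 1010, 1005],
    ("orders", "null_rate_pct"): [1.0, 1.2, 0.9, 1.1, 1.0],
    ("customers", "row_count"): [500, 505, 498, 502, 500],
}
latest = {
    ("orders", "row_count"): 400,
    ("orders", "null_rate_pct"): 9.5,
    ("customers", "row_count"): 501,
}

breaches = find_breaches(latest, history)
alerts = group_alerts(breaches)
for dataset, message in alerts.items():
    # A real setup would send this to email or chat instead of printing.
    print(f"[ALERT] {dataset}: {message}")
```

Grouping by dataset means two breached metrics on the same table produce one message rather than two, which is one simple way to keep alert volume manageable.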

Conclusion

Understanding how to effectively test data quality is essential for data engineers who aim to ensure the reliability and integrity of their datasets. By grasping the fundamental attributes of data quality - accuracy, completeness, consistency, timeliness, and uniqueness - professionals can identify potential issues and implement rigorous testing protocols. This foundational knowledge serves as the cornerstone for a robust data quality framework, ultimately leading to improved decision-making and operational efficiency.

The article outlines a comprehensive approach to data quality testing, emphasizing the importance of selecting appropriate tools, executing tests methodically, and maintaining continuous monitoring. Key insights include:

- The necessity of defining clear testing objectives

- Automating testing processes

- Involving stakeholders in maintaining data integrity

Furthermore, leveraging tools like Decube and Deequ can enhance accuracy and streamline workflows, ensuring that organizations can adapt to evolving data quality challenges.

In conclusion, prioritizing data quality is not merely a technical requirement but a strategic imperative that can significantly impact an organization’s success. By adopting a systematic approach to testing and continuously monitoring data quality, data engineers can foster a culture of accountability and trust in their information systems. Embracing these best practices will not only safeguard against costly errors but also empower organizations to harness the full potential of their data as a valuable strategic asset.

Frequently Asked Questions

What is data quality and why is it important?

Data quality refers to how well data meets the attributes of accuracy, completeness, consistency, timeliness, and uniqueness. It is important because flawed information can lead to misguided decisions, making rigorous validation processes necessary.

What are the key attributes of data quality?

The key attributes of data quality include accuracy, completeness, consistency, timeliness, and uniqueness.

What does accuracy in data quality mean?

Accuracy means that data must accurately reflect the real-world entities it describes. Flawed information can lead to misguided decisions.

Why is completeness important in data quality?

Completeness is important because all necessary information should be present; missing details can result in flawed conclusions. Enhancing information completeness can significantly improve reporting and insights.

How does consistency affect data quality?

Consistency affects data quality by ensuring that data remains uniform across various datasets and systems. For instance, standardizing product information can lead to increased conversions.

What is the significance of timeliness in data quality?

Timeliness signifies that data must be current and relevant to the context in which it is utilized. Timely information is essential for making informed decisions.

What does uniqueness mean in the context of data quality?

Uniqueness means that each record should be unique, with no duplicates. Prioritizing information uniqueness enhances targeting accuracy.

How can understanding data quality attributes benefit organizations?

Understanding these dimensions helps organizations identify potential issues within datasets and test data quality effectively, which is vital for leveraging information as a strategic resource.

List of Sources

- Understand Data Quality Fundamentals

- Why data quality is key to AI success in 2026 (https://strategy.com/software/blog/why-data-quality-is-key-to-ai-success-in-2026)

- Data Quality in the Real-World: 6 Examples (https://izeno.com/data-quality-in-the-real-world-6-examples)

- BARC News | Data Quality Beats AI Hype (https://barc.com/news/barc-publishes-the-data-bi-and-analytics-trend-monitor-2026)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Identify Tools for Data Quality Testing

- 12 Best Data Quality Tools for 2026 (https://lakefs.io/data-quality/data-quality-tools)

- Data Quality Tools 2026: The Complete Buyer’s Guide to Reliable Data (https://ovaledge.com/blog/data-quality-tools)

- Execute Data Quality Tests Methodically

- lakefs.io (https://lakefs.io/data-quality/data-quality-framework)

- The Annual State Of Data Quality Survey, 2026 (https://montecarlodata.com/blog-data-quality-survey)

- 12 Data Quality Metrics to Measure Data Quality in 2026 (https://lakefs.io/data-quality/data-quality-metrics)

- Data Transformation Challenge Statistics — 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Monitor and Maintain Data Quality Continuously

- confluent.io (https://confluent.io/blog/making-data-quality-scalable-with-real-time-streaming-architectures)

- The Annual State Of Data Quality Survey, 2026 (https://montecarlodata.com/blog-data-quality-survey)

- 12 Data Quality Metrics to Measure Data Quality in 2026 (https://lakefs.io/data-quality/data-quality-metrics)

- How to improve data quality: 10 best practices for 2026 (https://rudderstack.com/blog/how-to-improve-data-quality)
