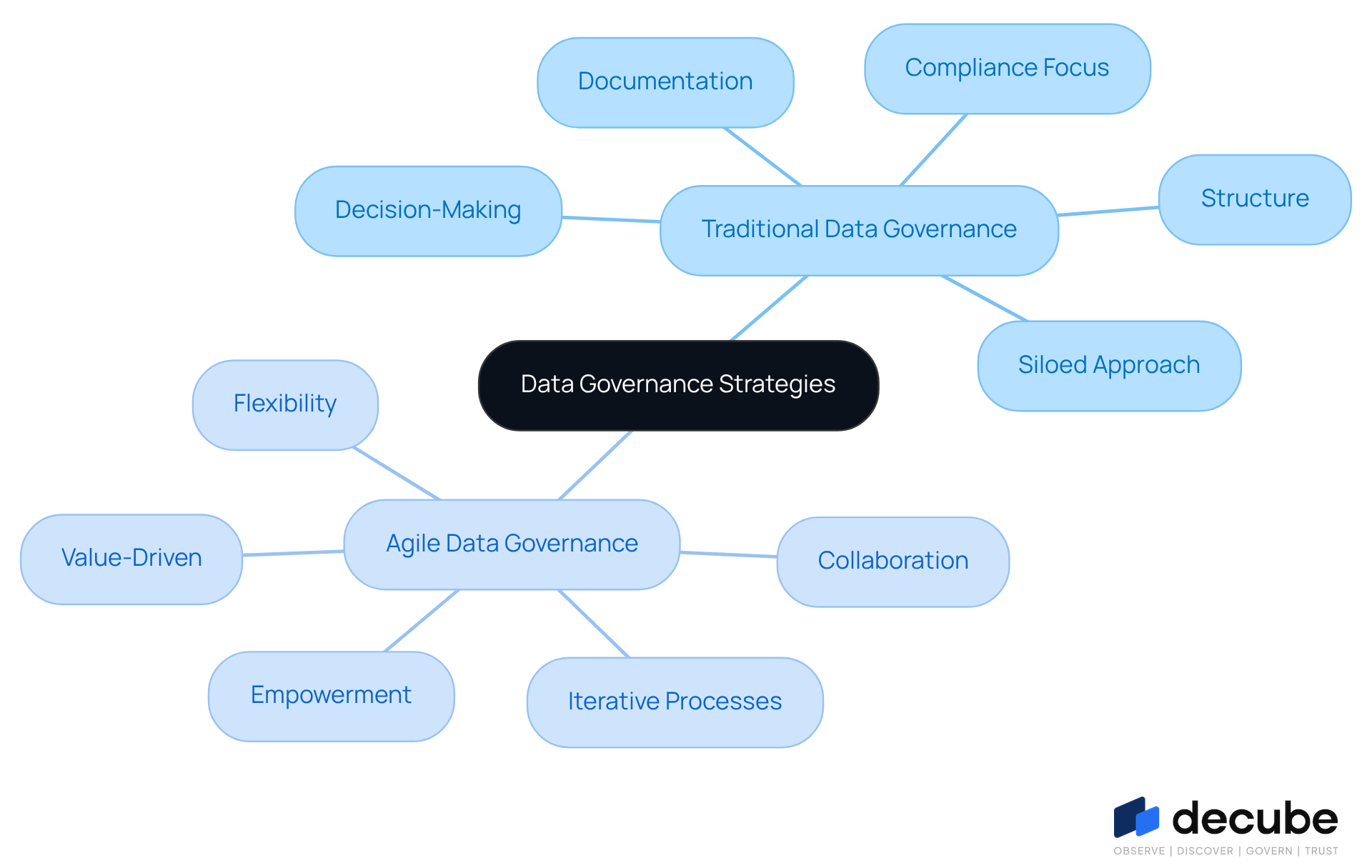

Data Governance Strategies: Traditional vs. Agile Approaches Explained

Explore traditional vs. agile data governance strategies for enhanced organizational efficiency.

Introduction

Organizations face significant challenges in managing data effectively amid rapid change and growing complexity. Data governance is a particular pain point: many businesses are caught between traditional frameworks that enforce compliance and agile methodologies that emphasize flexibility and collaboration. This article examines the contrasting approaches of traditional and agile data governance, highlights their advantages and potential pitfalls, and aims to help organizations make this decision so they can harness their data assets effectively.

Define Data Governance: Traditional vs. Agile Frameworks

Effective data governance is crucial for organizational success in a rapidly changing environment. Data governance encompasses the management of the availability, usability, integrity, and security of data within an organization. Traditional governance frameworks emphasize a structured, top-down approach focused on compliance, comprehensive documentation, and strict policy adherence. This rigidity often leads to slow responses to data-related issues, limited adaptability to changing business requirements, and missed opportunities for innovation.

In contrast, agile data governance promotes flexibility, collaboration, and iterative improvement. It empowers cross-functional teams to take ownership of data quality and governance processes, enabling quicker adaptation to evolving data requirements. Agile frameworks prioritize guidelines over rigid procedures, fostering a culture of continuous feedback and improvement. This approach is particularly advantageous in fast-paced environments, where organizations need to respond quickly to emerging data challenges and opportunities. The shift toward agile governance is expected to accelerate through 2026, with many organizations moving away from traditional models toward more adaptive data governance strategies that improve operational efficiency and data-driven decision-making. As organizations increasingly recognize the limitations of traditional frameworks, the transition to agile governance will become imperative for sustained competitiveness.

Compare Key Characteristics of Traditional and Agile Data Governance

The contrast between traditional and agile data governance strategies has significant implications for organizational effectiveness.

Traditional Data Governance Characteristics

- Structure: Highly structured with clearly defined roles and responsibilities, which ensures accountability, though it can sometimes result in rigidity.

- Documentation: Emphasizes comprehensive documentation and formal procedures, which can slow responses to data issues.

- Decision-Making: Centralized decision-making processes can hinder agility, resulting in delayed responses to emerging information challenges.

- Compliance Focus: Primarily centered on regulatory compliance and risk management, often at the expense of innovation and flexibility.

- Siloed Approach: Tends to operate in isolation, limiting cooperation among departments and reducing the overall effectiveness of governance initiatives.

Agile Data Governance Characteristics

- Flexibility: Adapts quickly to changing data needs and business environments, allowing organizations to remain competitive.

- Collaboration: Encourages cross-functional teams to cooperate on data governance initiatives, nurturing a culture of shared responsibility. One example is Decube's automated column-level lineage feature, which allows business users to identify issues in reports or dashboards, improving collaboration and transparency.

- Iterative Processes: Uses iterative cycles of improvement and feedback, allowing governance practices to be adjusted quickly.

- Empowerment: Enables teams to take ownership of data quality and governance practices, enhancing accountability and engagement. Users have remarked that 'Decube's intuitive design helps uphold trust in information, making it simpler to identify issues early on.'

- Value-Driven: Focuses on delivering value quickly and efficiently, prioritizing outcomes over strict adherence to procedures. The smooth integration of Decube with existing data stacks exemplifies this approach, improving both work quality and efficiency.
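The idea behind column-level lineage can be illustrated with a small, tool-agnostic sketch. It is not Decube's implementation or API: the lineage graph, the column names, and the helpers `upstream_of` and `downstream_of` are hypothetical. Given edges from each column to the columns derived from it, walking the edges backward answers "where does this dashboard number come from?", and walking them forward answers "which reports are affected if this source column is wrong?"

```python
from collections import deque

# Hypothetical column-level lineage graph: each key is a fully qualified column,
# and each value lists the columns or report fields derived directly from it.
LINEAGE: dict[str, list[str]] = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "raw.fx_rates.rate": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.daily_total"],
    "mart.revenue.daily_total": ["dashboard.sales_overview.revenue_tile"],
}


def _reachable(start: str, edges: dict[str, list[str]]) -> set[str]:
    """Breadth-first search: every node reachable from `start` (excluding `start`)."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in edges.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)  # a cycle could lead back to the starting column
    return seen


def downstream_of(column: str, lineage: dict[str, list[str]]) -> set[str]:
    """Columns and report fields that depend, directly or indirectly, on `column`."""
    return _reachable(column, lineage)


def upstream_of(column: str, lineage: dict[str, list[str]]) -> set[str]:
    """Columns that feed, directly or indirectly, into `column`."""
    # Invert the edges so the search walks from a report field back to its sources.
    parents: dict[str, list[str]] = {}
    for source, targets in lineage.items():
        for target in targets:
            parents.setdefault(target, []).append(source)
    return _reachable(column, parents)


if __name__ == "__main__":
    # Trace a suspicious dashboard tile back to its source columns.
    print(sorted(upstream_of("dashboard.sales_overview.revenue_tile", LINEAGE)))
    # Find every downstream asset affected by a problem in the FX rates table.
    print(sorted(downstream_of("raw.fx_rates.rate", LINEAGE)))
```

A business user who spots a bad value in the revenue tile could use `upstream_of` to list candidate source columns, while an engineer fixing `raw.fx_rates.rate` could use `downstream_of` to see which reports need a recheck. Production lineage tools typically derive these edges automatically from query and pipeline metadata rather than from a hand-written dictionary.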

Heading into 2026, the shift toward agile data governance is becoming increasingly clear. This approach not only improves responsiveness but also supports continuous compliance and operational efficiency in a rapidly changing data environment. Agile governance implementations, such as those using Decube's features, report results like improved data quality and faster decision-making, making agile governance a strategic asset for modern businesses. In addition, treating data observability as the backbone of the enterprise data platform and adopting a federated governance model show how agile frameworks can adapt to modern organizational structures and demands. This evolution underscores the need for data governance strategies that let organizations thrive in a dynamic data landscape.

Evaluate Pros and Cons: Choosing Between Traditional and Agile Approaches

Pros and Cons of Traditional Data Governance

Pros:

- Stability: Establishes a stable framework for managing data across the organization, ensuring consistency and reliability.

- Compliance Assurance: Emphasizes regulatory compliance, reducing legal risk and building trust with stakeholders.

- Clear Accountability: Defined roles and responsibilities enhance accountability, making it easier to track data ownership and governance.

Cons:

- Inflexibility: Often slows adaptation to changing data needs or business environments, reducing responsiveness.

- Bureaucracy: Can create bureaucratic hurdles that impede timely decision-making, leading to frustration among teams.

- Siloed Operations: Tends to isolate data management within departments, which can hinder collaboration and lead to inconsistent practices.

Pros and Cons of Agile Data Governance

Pros:

- Adaptability: Agile governance allows organizations to swiftly adjust to changing data needs and business priorities, helping them stay competitive in a dynamic market.

- Enhanced Collaboration: Promotes teamwork across groups, improving data quality and ensuring that diverse viewpoints are reflected in governance practices.

- Quicker Time-to-Value: Speeds up the delivery of data insights and governance improvements, allowing organizations to react rapidly to market shifts.

Cons:

- Potential for Chaos: Without clear guidelines, agile governance can lead to inconsistent practices and confusion among teams.

- Resource Intensive: Requires ongoing commitment and resources to maintain agility, which can strain smaller organizations.

- Risk of Over-empowerment: Without proper guidance, teams may take liberties that compromise data integrity, so strong oversight mechanisms are needed.

In 2026, organizations increasingly recognize the need for agile data governance to navigate complex data ecosystems and regulatory environments. Successful implementations typically balance flexibility with structured oversight, so that governance policies evolve alongside business needs.

Leverage Modern Tools: Enhancing Governance with Technology

Managing data governance effectively is becoming harder, and modern tools can strengthen both traditional and agile frameworks by improving efficiency and compliance. Key technologies include:

- [Data Catalogs](https://decube.io/post/transforming-data-quality-decubes-sla-software-engineering-success): Solutions like Alation and Collibra enable organizations to maintain a comprehensive inventory of data assets, supporting the management of data lineage and compliance requirements. By providing clear visibility into data sources, these tools help enterprises navigate complex regulatory environments.

- Automated Workflows: Platforms such as Informatica and Atlan streamline governance processes through automation, minimizing manual effort and improving operational efficiency. This automation becomes essential as companies face growing data volumes and regulatory requirements.

- Machine Learning: Advanced analytics and machine learning help identify anomalies in datasets, supporting higher data quality and integrity. This proactive approach to quality management is essential for organizations aiming to use AI effectively.

- Collaboration Platforms: Tools such as Microsoft Teams and Slack improve communication among cross-functional teams, supporting agile governance practices. These platforms help groups react quickly to data management challenges while upholding compliance.
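To make the data catalog and automated workflow items more concrete, here is a minimal sketch of the kind of rule an automated governance workflow might run against a catalog inventory. The `CatalogAsset` fields, the `policy_violations` helper, and the three rules (every asset needs an owner, every asset needs a classification, and every declared upstream source must itself be cataloged) are illustrative assumptions, not the schema or rule set of Alation, Collibra, Informatica, or Atlan.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CatalogAsset:
    """Hypothetical, simplified catalog entry (not any vendor's schema)."""
    name: str
    owner: Optional[str] = None
    classification: Optional[str] = None  # e.g. "public", "internal", "pii"
    upstream: list[str] = field(default_factory=list)


def policy_violations(assets: list[CatalogAsset]) -> dict[str, list[str]]:
    """Apply illustrative governance rules; return violations keyed by asset name."""
    cataloged = {asset.name for asset in assets}
    violations: dict[str, list[str]] = {}
    for asset in assets:
        problems: list[str] = []
        if not asset.owner:
            problems.append("missing owner")
        if asset.classification is None:
            problems.append("missing classification")
        # Lineage check: sources that are used but not inventoried are a blind spot.
        for source in asset.upstream:
            if source not in cataloged:
                problems.append(f"upstream '{source}' not in catalog")
        if problems:
            violations[asset.name] = problems
    return violations


# Hypothetical inventory used for illustration only.
CATALOG = [
    CatalogAsset("raw.orders", owner="data-eng", classification="internal"),
    CatalogAsset("raw.customers", owner="data-eng", classification="pii"),
    CatalogAsset(
        "mart.revenue",
        classification="internal",
        upstream=["raw.orders", "raw.fx_rates"],
    ),
]

if __name__ == "__main__":
    for name, problems in policy_violations(CATALOG).items():
        print(f"{name}: {', '.join(problems)}")
```

Running a check like this on a schedule, or whenever catalog metadata changes, and routing violations to the owning team is one way automation can replace the manual reviews that traditional governance relies on.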

Without such tools, organizations may be ill-equipped to handle the growing complexity of compliance and data governance.
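As an illustration of automated anomaly detection on a data-quality metric, the sketch below flags days whose row count deviates sharply from a trailing baseline. It deliberately uses a simple statistical rule (a rolling z-score) rather than a machine-learning model, standing in for the more sophisticated detectors that commercial tools may use; the function name `flag_anomalies`, the 7-day window, the 3-standard-deviation threshold, and the row counts are all hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(values: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indices whose value lies more than `threshold` sample standard
    deviations from the mean of the preceding `window` values."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a standard deviation")
    anomalies: list[int] = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change at all as anomalous.
            if values[i] != mu:
                anomalies.append(i)
        elif abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily row counts for one table; day 8 drops sharply.
DAILY_ROW_COUNTS = [10_120, 10_340, 9_980, 10_210, 10_400, 10_050, 10_300, 10_280, 4_150, 10_190]

if __name__ == "__main__":
    print(flag_anomalies(DAILY_ROW_COUNTS))
```

Because a flagged value stays in the trailing window, it inflates the baseline for the following days; a production detector might exclude flagged points or use a robust statistic such as the median absolute deviation.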

Conclusion

The choice between traditional and agile data governance frameworks has significant implications for organizational effectiveness. Traditional governance emphasizes a rigid, compliance-focused approach that often stifles innovation and responsiveness. In contrast, agile governance offers flexibility, collaboration, and a value-driven mindset, allowing organizations to adapt swiftly to changing data needs and market dynamics.

Each approach has distinct strengths and weaknesses. Traditional frameworks provide stability, clear accountability, and compliance assurance but can hinder agility and collaboration. Agile governance fosters adaptability and teamwork, enabling quicker responses to emerging challenges, yet it requires careful management to avoid inconsistency and chaos. Modern tools, such as data catalogs and automated workflows, make both strategies more effective and help organizations navigate the complexities of compliance and data management.

The transition toward agile data governance is essential for organizations seeking to thrive in a rapidly evolving data landscape. Adopting agile practices, supported by advanced technologies, positions organizations to improve operational efficiency and strengthen data-driven decision-making. Organizations that do not embrace agile governance risk falling behind competitors that use these frameworks effectively for strategic growth.

Frequently Asked Questions

What is data governance?

Data governance refers to the overall management of the availability, usability, integrity, and security of data within an organization.

What are the characteristics of traditional data governance frameworks?

Traditional data governance frameworks emphasize a structured, top-down approach that focuses on compliance, comprehensive documentation, and strict policy adherence.

What are the drawbacks of traditional data governance frameworks?

The rigidity of traditional frameworks can lead to delays, missed opportunities for innovation, and difficulty responding to data-related issues and changing business requirements.

How does agile data governance differ from traditional frameworks?

Agile data governance promotes flexibility, collaboration, and iterative improvement, empowering cross-functional teams to take ownership of data quality and governance processes.

What are the benefits of adopting an agile data governance framework?

Agile frameworks prioritize guidelines over rigid procedures, fostering a culture of continuous feedback and improvement that lets organizations adapt quickly to evolving data requirements.

Why is the shift toward agile governance expected to accelerate through 2026?

As organizations recognize the limitations of traditional frameworks, the transition to agile governance is seen as imperative for enhancing operational efficiency and data-driven decision-making, which will be crucial for sustained competitiveness.

List of Sources

Define Data Governance: Traditional vs. Agile Frameworks

- Why data governance is the cornerstone of trustworthy AI in 2026 (https://strategy.com/software/blog/why-data-governance-is-the-cornerstone-of-trustworthy-ai-in-2026)

- Top Data Governance Frameworks in 2026 | EM360Tech (https://em360tech.com/top-10/top-data-governance-frameworks)

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)

- Data Transformation Challenge Statistics — 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Data Governance trends for 2026 that definitely weren’t written by AI (https://thedatagovernanceplaybook.substack.com/p/data-governance-trends-for-2026-that)

Compare Key Characteristics of Traditional and Agile Data Governance

- Data Governance trends for 2026 that definitely weren’t written by AI (https://thedatagovernanceplaybook.substack.com/p/data-governance-trends-for-2026-that)

- Top 12 Data Governance predictions for 2026 - hyperight.com (https://hyperight.com/top-12-data-governance-predictions-for-2026)

- Data Governance Best Practices for 2026 | Drive Business Value with Trusted Data (https://alation.com/blog/data-governance-best-practices)

- Data Governance Trends in 2024 - Dataversity (https://dataversity.net/articles/data-governance-trends-in-2024)

- What’s in, and what’s out: Data management in 2026 has a new attitude (https://cio.com/article/4117488/whats-in-and-whats-out-data-management-in-2026-has-a-new-attitude.html)

Evaluate Pros and Cons: Choosing Between Traditional and Agile Approaches

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Agile Statistics and Facts: Adoption, Market Size & Trends (2025) (https://electroiq.com/stats/agile-statistics)

- Data Governance in 2026: A Reality Check and Blueprint for Success (https://medium.com/@sdezoysa/data-governance-a-reality-check-and-a-blueprint-for-2026-1801c5a475ea)

- Data Governance Strategy: 7-Step Framework That Works 2026 (https://sranalytics.io/blog/data-governance-strategy)

- Data Governance in 2026: Key Strategies for Enterprise Compliance and Innovation (https://community.trustcloud.ai/article/data-governance-in-2025-what-enterprises-need-to-know-today)

Leverage Modern Tools: Enhancing Governance with Technology

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)

- The 20 Biggest AI Governance Statistics and Trends of 2025 (https://knostic.ai/blog/ai-governance-statistics)

- Top data governance trends: The future of data in 2026 - Murdio (https://murdio.com/insights/data-governance-trends)

- United States Data Governance Market Size: Strategic Insights & Growth Outlook (https://linkedin.com/pulse/united-states-data-governance-market-size-strategic-insights-fhs7c)

- Data Governance in 2026: How AI and Cloud Will Redefine Enterprise Control | Zenxsys Blog (https://zenxsys.com/blog/data-governance-in-2026-how-ai-and-cloud-will-redefine-enterprise-control-blog)
