
Compare 5 Essential Data Ingestion Tools for Your Needs

Compare the top 5 data ingestion tools based on features, pros, and cons to suit your needs.

Introduction

Understanding the nuances of data ingestion is crucial for organizations aiming to effectively leverage data-driven decision-making.

With a wide array of tools available, each offering distinct features and capabilities, selecting the appropriate data ingestion solution can significantly influence operational efficiency and data quality.

However, organizations face challenges in navigating the complexities of batch versus real-time ingestion, integration capabilities, and cost considerations.

Which tools stand out in addressing diverse business needs, and how can organizations ensure they make the most informed choice?

Define Data Ingestion: Understanding the Basics

Data ingestion is the systematic collection and import of data from diverse sources into a centralized system for storage and analysis. It is the first step in the data pipeline and underpins data-driven decision-making. Ingested data can be structured, semi-structured, or unstructured. Efficient ingestion keeps data readily available for analytics, reporting, and operational processes, which improves both its quality and its accessibility.

A key component of effective data ingestion tools is their integration with a catalog, which acts as a searchable inventory of assets enriched with metadata, including:

- Owners

- Descriptions

- Classifications

- Quality

- Lineage

This integration enables teams to quickly discover, understand, and trust the right data. Catalog features such as lineage visualization and quality indicators are vital for accuracy and compliance in data management. Data observability is likewise essential for maintaining quality and trust in modern data ecosystems, so organizations should choose data ingestion tools that support these capabilities.
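To make the catalog metadata above concrete, here is a minimal sketch of a catalog entry that carries owners, a description, classifications, a quality score, and upstream lineage, plus a keyword search and a lineage walk. It is an illustration under assumptions: `CatalogEntry`, `search`, and `lineage` are hypothetical names, not the API of Decube or any other catalog product, and the quality score is assumed to be a 0-1 share of passing checks.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One asset in a hypothetical catalog, carrying the metadata fields listed above."""
    name: str
    owners: list[str]
    description: str
    classifications: set[str] = field(default_factory=set)
    quality_score: float | None = None  # assumed: share of passing quality checks, 0.0-1.0
    upstream: list[str] = field(default_factory=list)  # lineage: assets this one is built from


def search(catalog: list[CatalogEntry], term: str) -> list[CatalogEntry]:
    """Case-insensitive keyword match on name, description, or classification."""
    term = term.lower()
    return [
        e for e in catalog
        if term in e.name.lower()
        or term in e.description.lower()
        or any(term in c.lower() for c in e.classifications)
    ]


def lineage(catalog: list[CatalogEntry], name: str) -> list[str]:
    """Walk upstream links and return every asset the named asset depends on."""
    by_name = {e.name: e for e in catalog}
    seen: list[str] = []
    stack = list(by_name[name].upstream)
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # avoid revisiting shared ancestors
        seen.append(current)
        if current in by_name:  # sources outside the catalog are listed but not expanded
            stack.extend(by_name[current].upstream)
    return seen


catalog = [
    CatalogEntry("raw.orders", ["ingest-team"], "Orders landed from the OLTP database", {"pii"}),
    CatalogEntry("analytics.daily_revenue", ["finance"], "Revenue by day", {"finance"},
                 0.98, ["raw.orders"]),
]
print([e.name for e in search(catalog, "revenue")])  # ['analytics.daily_revenue']
print(lineage(catalog, "analytics.daily_revenue"))   # ['raw.orders']
```

Keeping lineage as simple upstream links is enough to answer "where did this table come from?"; a production catalog would typically also record column-level lineage.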

Explore Types of Data Ingestion: Batch vs. Real-Time

Data ingestion falls into two primary types: batch and real-time (streaming). Batch ingestion collects and processes data at scheduled intervals, which suits scenarios where immediate availability is not critical. It is often more economical and simpler to manage, particularly for large volumes of data. For instance, financial services firms frequently use batch processing for end-of-day reporting, where data can be gathered and analyzed without the need for real-time insight.

In contrast, real-time ingestion captures data continuously as it is generated, so organizations can act on the most current information. This is crucial for applications that need prompt insight, such as fraud detection, where systems must analyze transaction data within milliseconds to identify suspicious activity. Real-time processing lets businesses react to changes, anomalies, or opportunities almost immediately, improving operational efficiency and customer engagement.
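The difference between the two modes can be sketched in a few lines of Python. The batch function totals a full day's extract in one pass (as in end-of-day reporting), while the streaming function inspects each event as it arrives and flags it immediately. `Transaction`, both function names, and the fixed-amount threshold are hypothetical; the threshold is a placeholder for a real fraud model, not a detection method.

```python
from __future__ import annotations

from collections.abc import Iterable, Iterator
from dataclasses import dataclass


@dataclass
class Transaction:
    account: str
    amount: float


def batch_ingest(records: list[Transaction]) -> dict[str, float]:
    """Batch: process the whole scheduled extract at once, e.g. an end-of-day total per account."""
    totals: dict[str, float] = {}
    for r in records:
        totals[r.account] = totals.get(r.account, 0.0) + r.amount
    return totals


def stream_ingest(events: Iterable[Transaction], threshold: float) -> Iterator[Transaction]:
    """Streaming: inspect each event as it arrives and flag it immediately.

    The fixed threshold is a stand-in for a real fraud model.
    """
    for event in events:
        if event.amount > threshold:
            yield event  # emitted as soon as this event is seen, not at end of day


day = [Transaction("A", 20.0), Transaction("B", 9_500.0), Transaction("A", 35.5)]
print(batch_ingest(day))                                        # {'A': 55.5, 'B': 9500.0}
print([t.account for t in stream_ingest(iter(day), 5_000.0)])  # ['B']
```

Because `stream_ingest` is a generator, a downstream consumer receives the flagged event the moment it is read, whereas the batch result exists only after the whole extract has been processed.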

Decube supports both batch and real-time ingestion through its automated crawling feature, which keeps metadata up to date without manual intervention. This streamlines data management and strengthens governance, because a designated approval flow controls who can view or edit metadata. For batch processing, automated crawling can significantly reduce the time spent on manual updates, which speeds up data aggregation and reporting. For real-time processing, it keeps the most current metadata accessible, supporting faster decisions and response times.
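One common way to keep metadata current without manual edits is to snapshot each source's schema on every crawl and diff it against the previous snapshot. The sketch below assumes that approach; it is not Decube's implementation, the hard-coded dictionaries stand in for what a crawler would read from a source's information schema, and `diff_schemas` is a hypothetical name.

```python
Schema = dict[str, dict[str, str]]  # table -> {column: data type}


def diff_schemas(previous: Schema, current: Schema) -> list[str]:
    """Report table- and column-level changes between two crawl snapshots."""
    changes: list[str] = []
    for table in sorted(previous.keys() - current.keys()):
        changes.append(f"table dropped: {table}")
    for table in sorted(current.keys() - previous.keys()):
        changes.append(f"table added: {table}")
    for table in sorted(previous.keys() & current.keys()):
        old, new = previous[table], current[table]
        for col in sorted(old.keys() - new.keys()):
            changes.append(f"column dropped: {table}.{col}")
        for col in sorted(new.keys() - old.keys()):
            changes.append(f"column added: {table}.{col} ({new[col]})")
        for col in sorted(old.keys() & new.keys()):
            if old[col] != new[col]:
                changes.append(f"type changed: {table}.{col} {old[col]} -> {new[col]}")
    return changes


yesterday = {"orders": {"id": "int", "amount": "decimal"}}
today = {
    "orders": {"id": "bigint", "amount": "decimal", "currency": "text"},
    "refunds": {"id": "int"},
}
for change in diff_schemas(yesterday, today):
    print(change)
# table added: refunds
# column added: orders.currency (text)
# type changed: orders.id int -> bigint
```

In a real crawler, each reported change would update the corresponding catalog entry, or be routed through an approval flow before it is published.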

The choice between batch and real-time ingestion depends on the specific use case and business requirements. Batch processing offers cost efficiency and simplicity, while real-time ingestion offers responsiveness and agility. As one data engineering article puts it: "The real question in 2026 is not 'Which one is better?' but rather 'When should you use batch processing, and when does streaming make more sense?'" This perspective reflects how ingestion techniques are evolving and why organizations need to adapt their strategies accordingly.
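The quoted question can be made concrete with a simple freshness check: a batch schedule is sufficient if its worst-case data staleness fits within the business's freshness target. This is a simplifying assumption (it ignores cost, queueing delays, and failed runs), and the function names and numbers below are hypothetical.

```python
def worst_case_staleness_s(batch_interval_s: float, job_runtime_s: float) -> float:
    """Oldest a record can be when it becomes queryable under a fixed batch schedule.

    A record that lands just after a run takes its snapshot waits one full
    interval for the next run, then that run's processing time.
    """
    return batch_interval_s + job_runtime_s


def choose_mode(freshness_target_s: float, batch_interval_s: float, job_runtime_s: float) -> str:
    """Prefer batch whenever a schedule can meet the freshness target; otherwise stream."""
    if worst_case_staleness_s(batch_interval_s, job_runtime_s) <= freshness_target_s:
        return "batch"
    return "streaming"


# Dashboard that tolerates 2 hours of staleness: hourly runs taking 10 minutes suffice.
print(choose_mode(freshness_target_s=7_200, batch_interval_s=3_600, job_runtime_s=600))  # batch
# Fraud check that needs a decision within 1 second: an hourly schedule cannot meet that.
print(choose_mode(freshness_target_s=1, batch_interval_s=3_600, job_runtime_s=600))      # streaming
```

Defaulting to batch when it meets the target reflects the cost and simplicity advantage described above; streaming is chosen only when the schedule cannot keep data fresh enough.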

Compare Key Features of Leading Data Ingestion Tools

When evaluating data ingestion tools, several key features warrant consideration:

- Integration Capabilities: The ability to connect with many data sources, including databases, APIs, and cloud services, is paramount. Fivetran and Apache NiFi excel here: Fivetran supports over 700 connectors, and Apache NiFi provides a visual interface for managing complex workflows. Decube also integrates with existing data stacks, strengthening governance and observability across the tools already in use.

- Data Transformation: Built-in transformation features let users clean and format data during ingestion. Talend is particularly recognized for its robust transformation capabilities, handling both structured and unstructured data effectively. Decube complements this with automated crawling, which improves metadata management and supports secure access control, further supporting data quality.

- Scalability: A tool must handle growing data volumes without performance degradation. Apache Kafka, for instance, is designed for high-throughput environments and delivers the low-latency ingestion that real-time analytics needs. Decube's architecture is likewise built to scale, providing reliable observability across growing data infrastructure.

- Real-Time Processing: Apache Kafka and AWS Kinesis are designed specifically for real-time ingestion, making them ideal for applications that need immediate insight, such as fraud detection and customer behavior analysis. Decube supports real-time pipelines through its contract module, which virtualizes and runs monitors to keep data quality consistent.

- User-Friendliness: An intuitive interface shortens the learning curve and improves adoption across teams. Hevo Data and Integrate.io are known for intuitive designs that make onboarding easier. Decube is commended for its outstanding UX/UI and is regarded as one of the best-designed data products available, which helps teams manage complex tasks with ease.

- Cost: Pricing models vary significantly; some tools charge by the number of connectors, others by data volume. Budget-conscious organizations need to understand each tool's cost implications: mid-market tools typically range from $1,000 to $5,000 per month, while enterprise platforms often start at $50,000 per year. Decube can help reduce the cost of managing and troubleshooting data quality issues in ingestion pipelines.

- Security and Governance: Security controls built into ingestion are essential for privacy and compliance. With Gartner predicting that 80% of analytics governance initiatives will fail by 2027, choosing ingestion tools with strong governance features is crucial. Decube's platform emphasizes trust and governance, offering transparency into data pipelines and streamlining collaboration among teams. As Improvado puts it, "The key is matching the integration pattern to the business requirement - not defaulting to real-time because it sounds more modern."
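Cleaning data and protecting sensitive fields during ingestion can happen in the same per-record step. The sketch below assumes a hypothetical order record: it drops rows without a key, normalizes the amount and timestamp, and replaces the email with a keyed HMAC-SHA256 hash so the raw value never lands in storage. `transform` and `PII_FIELDS` are illustrative names, which fields count as PII is a policy assumption, and keyed hashing is pseudonymization, not full anonymization.

```python
from __future__ import annotations

import hashlib
import hmac
from datetime import datetime, timezone

# Hypothetical policy: which source fields count as PII is an assumption.
PII_FIELDS = ("email",)


def transform(raw: dict, key: bytes) -> dict | None:
    """Clean one raw record during ingestion; return None to drop it."""
    # Drop records that lack a primary key instead of loading them.
    if not str(raw.get("order_id") or "").strip():
        return None
    record = {
        "order_id": str(raw["order_id"]).strip(),
        "amount": round(float(raw["amount"]), 2),
        # Assumes timestamps carry a UTC offset; normalize them all to UTC.
        "created_at": datetime.fromisoformat(raw["created_at"])
        .astimezone(timezone.utc)
        .isoformat(),
    }
    for name in PII_FIELDS:
        value = raw.get(name)
        if value:
            normalized = value.strip().lower()
            # Keyed hash: rows stay joinable on the hash, but the raw value is not stored.
            record[f"{name}_hash"] = hmac.new(key, normalized.encode(), hashlib.sha256).hexdigest()
    return record


row = {"order_id": " 42 ", "amount": "19.90",
       "created_at": "2026-01-15T09:30:00+01:00", "email": " Alice@Example.com "}
print(transform(row, key=b"demo-key"))
```

Normalizing the email before hashing means " Alice@Example.com " and "alice@example.com" map to the same hash, so downstream joins still work.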

Evaluate Pros and Cons of Each Data Ingestion Tool

Fivetran:

- Pros: Fivetran offers fully managed, zero-maintenance pipelines, complemented by an extensive library of over 700 pre-built connectors. Its strong real-time capabilities facilitate quick data replication with minimal lag.

- Cons: Costs rise steeply for large data sources, particularly at high volumes, and limited customization options restrict flexibility in pipeline design.

Apache NiFi:

- Pros: Apache NiFi is highly customizable, letting users build automated flows from source to destination with transformations along the way. It supports both batch and real-time ingestion, and its robust data lineage capabilities make it easy to track how data flows.

- Cons: NiFi has a steeper learning curve and requires more technical expertise to set up and manage. It may also not scale as efficiently as alternatives such as Spark.

- User Satisfaction: Users have rated the initial setup experience highly, with some awarding it a perfect score for ease of use. Nonetheless, concerns regarding reliability and the need for additional features have been noted.

- Real-World Example: One project engineer praised NiFi's user-friendly interface for simplifying the design of common workflows. Another user highlighted its ability to organize ingestion as directed graphs, which makes flows easier to monitor.

Talend:

- Pros: Talend boasts robust data transformation features that support complex ETL processes. It also benefits from strong community support, providing valuable resources and assistance for users.

- Cons: However, it can be complex to configure, leading to longer setup times. Additionally, licensing costs may accumulate, rendering it less budget-friendly for some organizations.

Apache Kafka:

- Pros: Apache Kafka excels in real-time data streaming, making it suitable for high-throughput applications. It is highly scalable, accommodating growing data needs, and enjoys strong community and ecosystem support.

- Cons: Conversely, it requires significant infrastructure investment and can be complex to manage, necessitating a skilled technical team.

Hevo Data:

- Pros: Hevo Data features a user-friendly interface that simplifies the data ingestion process, making it ideal for small to medium-sized businesses with straightforward needs. Its pricing structure is also affordable.

- Cons: It offers fewer advanced features than larger tools, which may limit scalability for enterprise requirements, and it may not handle complex data transformations as well as its competitors.

Decube:

- Pros: Decube provides automated column-level lineage, enhancing data cataloging and observability. It integrates with existing data stacks, improving data quality and trust, and its intuitive monitoring features simplify data governance. Users appreciate the platform's ability to maintain data accuracy and consistency and to foster collaboration among teams. It also includes ML-powered data quality assessments and intelligent alerts that reduce notification overload.

- Cons: While highly effective, some users may encounter a challenging initial learning curve as they adapt to its advanced features.

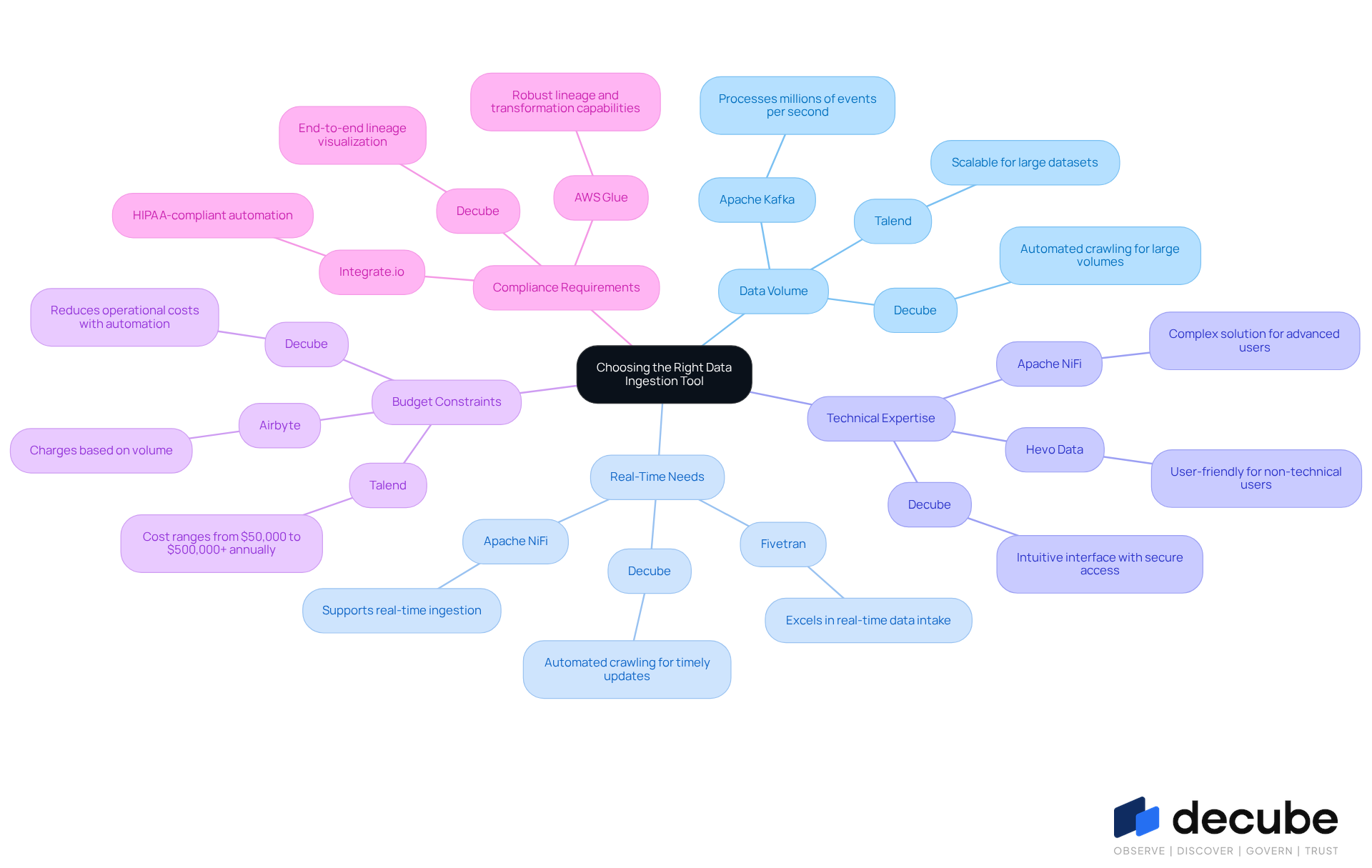
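The NiFi user's point about organizing ingestion as directed graphs can be illustrated in plain Python. This is not NiFi's API (NiFi flows are built in its own interface); it is a sketch under assumptions in which hypothetical processors are plain functions and the standard library's `graphlib.TopologicalSorter` runs them in dependency order, feeding each one the outputs of its upstream processors.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical processors: each receives a list holding the outputs of its upstream processors.
processors = {
    "fetch_api": lambda inputs: [{"id": 1, "source": "api"}],
    "fetch_db": lambda inputs: [{"id": 2, "source": "db"}, {"id": None, "source": "db"}],
    "validate": lambda inputs: [r for batch in inputs for r in batch if r["id"] is not None],
    "write_lake": lambda inputs: inputs[0],
}

# Edges map each processor to the set of processors that feed it.
edges = {"validate": {"fetch_api", "fetch_db"}, "write_lake": {"validate"}}


def run_flow(processors: dict, edges: dict) -> dict:
    """Run every processor once, in dependency order, passing upstream outputs downstream."""
    outputs = {}
    # static_order() yields a node only after all of its predecessors,
    # and raises CycleError if the flow loops back on itself.
    for name in TopologicalSorter(edges).static_order():
        upstream = sorted(edges.get(name, ()))  # sorted for a deterministic input order
        outputs[name] = processors[name]([outputs[u] for u in upstream])
    return outputs


result = run_flow(processors, edges)
print([r["id"] for r in result["write_lake"]])  # [1, 2]
```

Because every intermediate output is kept by processor name, each stage can be inspected individually, which is the monitoring benefit the graph structure provides. Adding a loop, for example making `fetch_api` depend on `write_lake`, causes `run_flow` to raise `CycleError` instead of running.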

Assess Suitability: Choosing the Right Tool for Your Needs

When selecting a data ingestion tool, organizations must evaluate several critical factors:

- Data Volume: For large datasets, Apache Kafka (which can process millions of events per second) and Talend are strong choices because of their scalability. Decube's automated crawling also supports large data volumes by refreshing metadata automatically once sources are connected, keeping catalog content current and accessible.

- Real-Time Needs: Instant data availability is essential for many applications, particularly in finance and healthcare, and for use cases such as fraud detection that require prompt processing. Fivetran and Apache NiFi handle real-time ingestion well, letting organizations react quickly to changing conditions. Decube complements this with automated crawling that keeps metadata up to date, supporting timely decisions.

- Technical Expertise: Organizations with limited technical resources may benefit from user-friendly platforms like Hevo Data, which simplify ingestion. Teams with strong technical skills might prefer more complex tools such as Apache NiFi for managing intricate data flows. Decube strikes a balance with an intuitive interface for metadata management and secure access control through designated approval flows.

- Budget Constraints: Cost is paramount; organizations should assess total cost of ownership, including licensing, maintenance, and operational expenses. For example, the total cost of ownership of Talend's enterprise package ranges from $50,000 to over $500,000 per year, whereas Airbyte uses volume-based pricing that adapts to different budgets. Decube's automated crawling can also lower operational costs by reducing the need for manual updates and oversight.

- Compliance Requirements: Industries with strict data governance mandates must prioritize tools with comprehensive compliance features. Integrate.io and AWS Glue, for example, provide robust lineage and transformation capabilities that support compliance with regulations such as GDPR and HIPAA. Decube supports compliance with end-to-end lineage visualization, which is essential for upholding governance standards.
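These factors can be combined into a simple decision matrix: treat budget as a hard filter on total cost of ownership, then rank the remaining tools by a weighted average of scores on the other factors. Everything in this sketch is hypothetical: "Tool A/B/C", their scores, the weights, and the cost split are placeholders for your own evaluation, and "ease_of_use" stands in for fit with the team's technical expertise. The license figures simply annualize the $1,000 to $5,000 per month mid-market range quoted earlier (12 months each).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    scores: dict[str, float]  # 1-5 per criterion, from your own evaluation
    annual_license: float
    annual_maintenance: float
    annual_operations: float

    @property
    def annual_tco(self) -> float:
        """Total cost of ownership per year: licensing + maintenance + operations."""
        return self.annual_license + self.annual_maintenance + self.annual_operations


def rank(candidates: list[Candidate], weights: dict[str, float],
         annual_budget: float) -> list[tuple[float, str]]:
    """Drop candidates over budget, then rank the rest by weighted average score."""
    total_weight = sum(weights.values())
    ranked = []
    for c in candidates:
        if c.annual_tco > annual_budget:
            continue  # budget is a hard constraint, not another weighted factor
        score = sum(w * c.scores.get(k, 0.0) for k, w in weights.items()) / total_weight
        ranked.append((score, c.name))
    # Highest score first; ties broken alphabetically by name.
    return sorted(ranked, key=lambda t: (-t[0], t[1]))


weights = {"data_volume": 3, "real_time": 2, "ease_of_use": 1, "compliance": 2}
candidates = [
    # $5,000/month license = $60,000/year
    Candidate("Tool A", {"data_volume": 5, "real_time": 5, "ease_of_use": 2, "compliance": 3},
              60_000, 10_000, 30_000),
    # $1,000/month license = $12,000/year
    Candidate("Tool B", {"data_volume": 3, "real_time": 2, "ease_of_use": 5, "compliance": 4},
              12_000, 0, 5_000),
    Candidate("Tool C", {"data_volume": 4, "real_time": 4, "ease_of_use": 3, "compliance": 5},
              50_000, 20_000, 40_000),
]
print(rank(candidates, weights, annual_budget=105_000))
# [(4.125, 'Tool A'), (3.25, 'Tool B')]  (Tool C's $110,000 TCO exceeds the budget)
```

Filtering on budget before scoring keeps a high-scoring but unaffordable tool from masking cheaper options; changing the weights shows how sensitive the ranking is to each factor.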

Real-world examples illustrate these factors: a healthcare technology firm improved its data ingestion by adopting AWS Glue, which automated data discovery and cataloging and improved compliance and operational efficiency. Similarly, organizations using Apache Kafka have reported substantial gains in processing speed and reliability, which are essential for applications that demand real-time insight. With Decube's automated crawling, organizations can further strengthen data observability and governance, keeping their data management both efficient and compliant.

Conclusion

Selecting the right data ingestion tool is essential for organizations that seek to leverage data effectively for informed decision-making. Understanding data ingestion as a systematic process - collecting and importing data from various sources into a centralized system - is crucial. The choice of the appropriate tool can significantly enhance data accessibility, quality, and governance, ultimately driving operational efficiency.

Key features of leading data ingestion tools include:

- Integration capabilities

- Information transformation

- Scalability

- Real-time processing

- User-friendliness

- Cost

- Security management

Tools such as Fivetran, Apache NiFi, Talend, and Decube each present unique advantages and drawbacks, catering to diverse organizational needs. The analysis of batch versus real-time ingestion further illustrates that the choice of method should align with specific business requirements and use cases.

In conclusion, organizations must carefully assess their data ingestion needs, taking into account factors such as:

- Data volume

- Real-time requirements

- Technical expertise

- Budget constraints

- Compliance mandates

By doing so, they can select a data ingestion tool that not only meets current demands but also supports future growth and adaptability. Embracing the right data ingestion strategy is vital for fostering a data-driven culture, ensuring that timely and accurate information is readily available to decision-makers.

Frequently Asked Questions

What is data ingestion?

Data ingestion is the systematic collection and importation of data from various sources into a centralized system for storage and analysis. It is crucial for organizations that rely on data-driven decision-making and involves different content types, including structured, semi-structured, and unstructured formats.

Why is efficient data ingestion important?

Efficient data ingestion ensures that information is readily accessible for analytics, reporting, and operational processes, thereby enhancing overall quality and accessibility.

What role does a catalog play in data ingestion?

A catalog acts as a searchable inventory of assets enriched with metadata, such as owners, descriptions, classifications, quality, and lineage. This integration helps teams quickly discover, understand, and trust the correct information.

What are the types of data ingestion?

Data ingestion can be categorized into two primary types: batch processing and real-time processing.

What is batch processing in data ingestion?

Batch processing involves collecting and processing information at scheduled intervals, making it suitable for scenarios where immediate availability is not critical. It is often more economical and easier to manage, especially for large amounts of data.

What is real-time processing in data ingestion?

Real-time processing captures information continuously as it is generated, allowing organizations to make timely decisions based on the most current data. This method is essential for applications that require immediate insights, such as fraud detection.

How does automated crawling enhance data ingestion?

Automated crawling updates metadata automatically without manual intervention, streamlining information management and strengthening governance. It reduces the time spent on manual updates for batch processing and ensures that the most current metadata is accessible for real-time processing.

How do organizations decide between batch and real-time data ingestion?

The choice between batch and real-time data ingestion depends on specific use cases and business requirements. Batch processing is advantageous for its cost efficiency and simplicity, while real-time ingestion offers benefits in responsiveness and agility.

What is the evolving perspective on data ingestion methods?

The evolving perspective emphasizes the importance of context in data ingestion strategies. Organizations are encouraged to assess when to use batch processing and when streaming makes more sense, highlighting the need to adapt strategies as technology and requirements change.

List of Sources

Define Data Ingestion: Understanding the Basics

- Data Statistics (2026) - How much data is there in the world? (https://rivery.io/blog/big-data-statistics-how-much-data-is-there-in-the-world)

- Top Content on LinkedIn (https://linkedin.com/pulse/data-ingestion-service-market-production-share-trends-size-nxuic)

- What Is Data Ingestion? 5 Benefits & Best Practices (https://boomi.com/blog/data-ingestion-guide)

- Understanding Ingestion Data Meaning: Importance and Challenges for Engineers | Decube (https://decube.io/post/understanding-ingestion-data-meaning-importance-and-challenges-for-engineers)

Explore Types of Data Ingestion: Batch vs. Real-Time

- Batch vs Streaming in 2026: When to Use What? (https://medium.com/towards-data-engineering/batch-vs-streaming-in-2026-when-to-use-what-df9df7af72fe)

- The Competitive Advantages of Real-Time Data Streaming in Financial Services (https://blog.technologent.com/the-competitive-advantages-of-real-time-data-streaming-in-financial-services)

- 13 Data Ingestion Tools in 2026 Compared: Batch, Real-Time, and CDC (https://estuary.dev/blog/data-ingestion-tools)

- How to do real-time data processing for modern analytics 2026 (https://tinybird.co/blog/real-time-data-processing)

- What is Real-Time Analytics? A Complete Guide (2026) | Engineering | ClickHouse Resource Hub (https://clickhouse.com/resources/engineering/what-is-real-time-analytics)

Compare Key Features of Leading Data Ingestion Tools

- Top 10 AI Powered Data Ingestion Tools to Try in 2026 (https://unitedtechno.com/top-10-ai-powered-data-ingestion-tools)

- 6 Best Data Ingestion Tools for Scalable Data Pipelines (https://ovaledge.com/blog/data-ingestion-tools)

- Best Data Integration Tools in 2026: Top 10 Platforms Compared (https://improvado.io/blog/best-data-integration-tools)

- Top 11 Data Ingestion Tools for 2026 | Integrate.io (https://integrate.io/blog/top-data-ingestion-tools)

- Top 20 Data Ingestion Tools in 2026: The Ultimate Guide (https://datacamp.com/blog/data-ingestion-tools)

Evaluate Pros and Cons of Each Data Ingestion Tool

- Fivetran 2026 Verified Reviews, Pros & Cons (https://trustradius.com/products/fivetran/reviews/all)

- Apache NiFi: Pros and Cons 2026 (https://peerspot.com/products/apache-nifi-pros-and-cons)

- Fivetran: Pros and Cons 2026 (https://peerspot.com/products/fivetran-pros-and-cons)

- Fivetran Review 2026: Pros and Cons for Data Teams (https://integrate.io/blog/fivetran-review)

- Fivetran vs. Talend: Pros & Cons 2026 Comparison | Rivery (https://rivery.io/etl-tools-compared/fivetran-vs-talend)

Assess Suitability: Choosing the Right Tool for Your Needs

- 6 Best Data Ingestion Tools for Scalable Data Pipelines (https://ovaledge.com/blog/data-ingestion-tools)

- Top 11 Data Ingestion Tools for 2026 | Integrate.io (https://integrate.io/blog/top-data-ingestion-tools)

- Modernizing data ingestion: How to choose the right ETL platform for scale (https://cio.com/article/4008522/modernizing-data-ingestion-how-to-choose-the-right-etl-platform-for-scale.html)

- Top 20 Data Ingestion Tools in 2026: The Ultimate Guide (https://datacamp.com/blog/data-ingestion-tools)

- Data Ingestion — Part 2: Tool Selection Strategy (https://medium.com/the-modern-scientist/data-ingestion-part-2-tool-selection-strategy-07c6ca7aeddb)
