
4 Essential Data Stewardship Tools for Effective Governance

Discover essential data stewardship tools for effective governance and informed decision-making.

Introduction

Effective governance in today’s data-driven landscape relies on robust data stewardship, a practice that safeguards the integrity, accessibility, and security of information assets. Organizations can achieve significant benefits by implementing appropriate tools that not only enhance decision-making but also reduce risks associated with data mismanagement. However, with a multitude of data stewardship solutions available, organizations must discern which tools will best address their unique needs and comply with regulatory requirements.

Define Data Stewardship and Its Importance in Governance

Data stewardship is the administration and oversight of an organization's data assets to ensure their quality, accessibility, and security. This practice is crucial for maintaining data integrity and adhering to regulatory standards. Effective data stewardship fosters trust in the data, enhances decision-making capabilities, and mitigates risks associated with data mismanagement.

For instance, healthcare organizations typically see duplicate patient record rates of 8% to 12%, with larger health systems experiencing rates as high as 15%. By implementing robust data stewardship practices and tools, such as those supported by Decube's Unified Data Trust Platform, organizations can significantly reduce these duplicates. This helps keep their data accurate, consistent, and compliant with industry regulations.
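As a rough illustration of how a duplicate rate like the figures above could be measured, the sketch below counts records that share a normalized name and date of birth. The field names (`name`, `dob`) and the matching rule are assumptions made for this example; they do not describe how Decube or any other product detects duplicates, and real patient matching usually relies on more robust techniques.

```python
from collections import Counter


def normalize(record):
    """Build a matching key from name and date of birth (illustrative fields)."""
    # Lowercase the name and collapse repeated whitespace before comparing.
    name = " ".join(record["name"].lower().split())
    return (name, record["dob"])


def duplicate_rate(records):
    """Return the fraction of records that are redundant copies of another record."""
    if not records:
        return 0.0
    counts = Counter(normalize(r) for r in records)
    # For each key seen n times, n - 1 records are redundant.
    redundant = sum(n - 1 for n in counts.values())
    return redundant / len(records)


# Hypothetical sample data, not taken from any real system.
patients = [
    {"name": "Ana Lopez", "dob": "1980-02-14"},
    {"name": "ana  lopez", "dob": "1980-02-14"},
    {"name": "Ben Carter", "dob": "1975-09-30"},
    {"name": "Chen Wei", "dob": "1992-06-01"},
]

print(f"{duplicate_rate(patients):.0%}")  # prints 25%
```

Tracking a figure like this over time is one simple way to check whether stewardship work is actually reducing duplicates.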

Such practices not only support strategic objectives but also empower organizations to make informed decisions, ultimately driving operational efficiency and enhancing overall business performance.

Compare Key Data Stewardship Tools and Their Features

A variety of data stewardship tools are available, each designed to meet specific organizational needs. For instance:

- Collibra: Known for its robust data governance capabilities, Collibra excels in data cataloging, policy management, and lineage tracking. It is particularly well-suited for large enterprises, providing a centralized catalog of information assets and supporting structured stewardship workflows. Organizations in regulated industries benefit from its strong compliance reporting features; however, effective operation often necessitates a significant investment and a dedicated governance team.

- Informatica: This platform is distinguished by its comprehensive data quality management and advanced data integration capabilities. Informatica helps keep data accurate and accessible across platforms, making it a preferred choice for companies that require precise lineage for auditing and regulatory compliance. While its complexity may pose challenges for smaller teams, it offers robust security and compliance features essential for larger enterprises.

- Alation: Focusing on data cataloging, Alation enhances data discovery and collaboration among users. Its search-first design enables business users to quickly locate relevant datasets, significantly improving adoption rates for self-service analytics. Although it excels in usability and in building trust in data assets, organizations seeking strong policy enforcement may need to integrate it with other tools for comprehensive oversight.

- Decube: Notable for its AI-driven insights and real-time monitoring capabilities, Decube integrates with modern data stacks. It offers anomaly detection and automated oversight features that help data pipelines operate efficiently and surface quality issues before they spread.

The choice of data stewardship tools should align with the organization's specific management needs, taking into account factors such as scale, complexity, and regulatory requirements.
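To make the idea of anomaly detection on data pipelines more concrete, here is a minimal sketch that flags a daily row count as anomalous when it lies more than a chosen number of standard deviations from recent history. The function name, the threshold of 3.0, and the sample counts are assumptions for illustration only; this is a generic z-score check, not the method used by Decube or any other tool named above.

```python
from statistics import mean, stdev


def is_anomalous(history, value, threshold=3.0):
    """Return True if value deviates from history by more than threshold standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two historical points")
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # All historical values are identical, so any change is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical daily row counts for one pipeline.
history = [1000, 1020, 990, 1010, 1005, 995, 1015]

print(is_anomalous(history, 400))   # a sharp drop in volume
print(is_anomalous(history, 1012))  # within the normal range
```

A fixed threshold is easy to reason about but ignores trends and seasonality, which is one reason production monitoring tools typically use more adaptive models.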

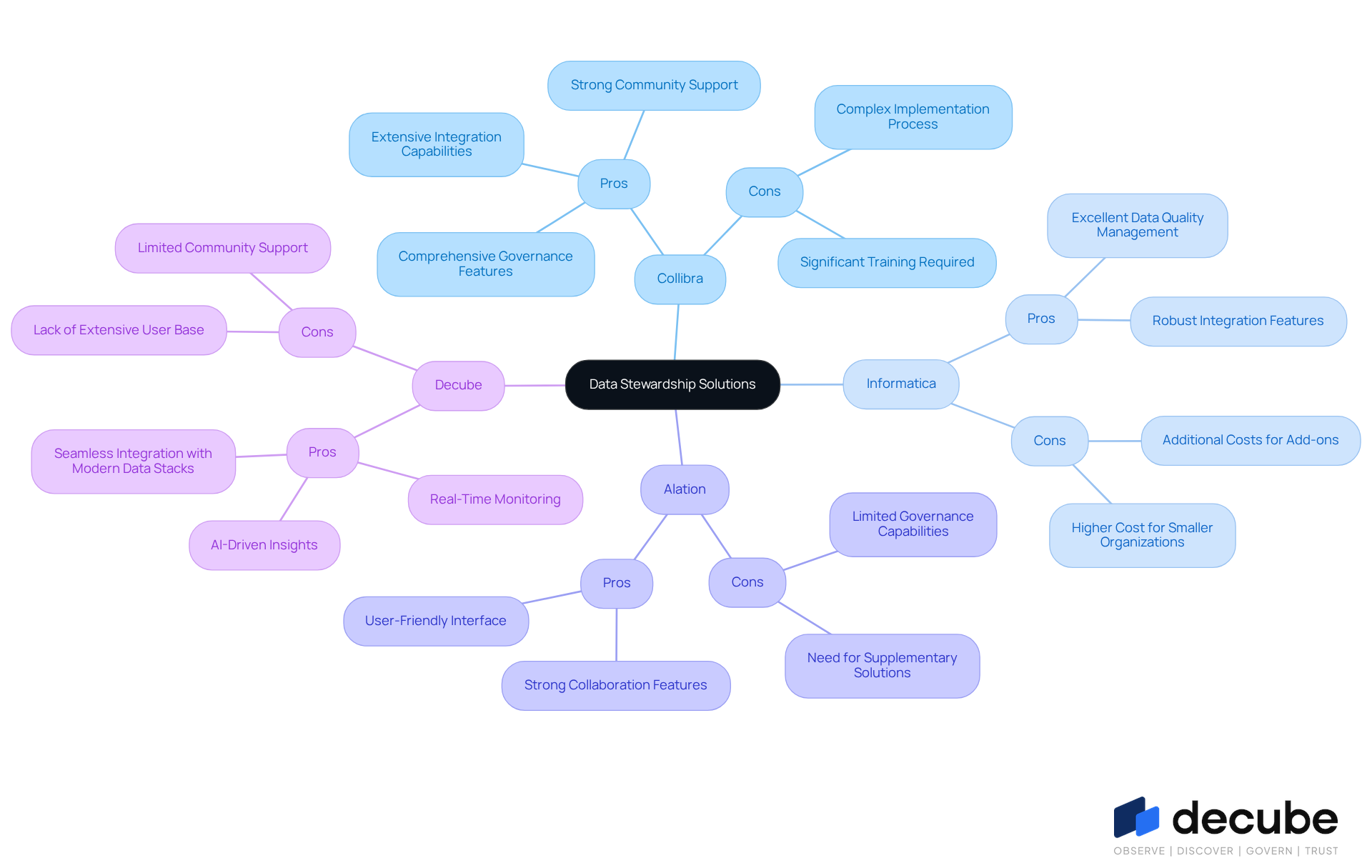

Evaluate Pros and Cons of Leading Data Stewardship Solutions

Collibra:

Pros: Collibra provides a comprehensive suite of governance features, strong community support, and extensive integration capabilities. This makes it particularly suitable for large organizations with complex governance needs.

Cons: However, the implementation process can be complex, often necessitating significant training for users, which may hinder adoption and efficiency.

Informatica:

Pros: Informatica is renowned for its excellent data quality and integration features. It is widely recognized in the industry for its robust capabilities.

Cons: The higher cost associated with Informatica can pose a barrier for smaller organizations, potentially limiting its accessibility.

Alation:

Pros: Alation is known for its user-friendly interface and strong collaboration features. It facilitates easy access and sharing of data among teams, thereby enhancing data literacy.

Cons: Compared with more comprehensive tools, Alation offers more limited governance capabilities, so organizations may need supplementary solutions to cover their full governance needs.

Decube:

Pros: Decube features advanced AI-driven insights, real-time monitoring, and seamless integration with modern data stacks. This positions it as a strong contender in the landscape of data stewardship tools.

Cons: As a newer player in the market, Decube may lack the extensive user base and community support that more established tools offer.

This assessment gives companies a clear structure for evaluating their options against specific requirements and budget constraints, helping them select the most appropriate data stewardship solution.
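One simple way to turn pros and cons like these into a structured comparison is a weighted scoring matrix. The sketch below is illustrative only: the criteria, the weights, and the 1 to 5 ratings for "Tool A" and "Tool B" are hypothetical placeholders, not assessments of any product discussed in this article.

```python
def weighted_score(ratings, weights):
    """Return the weighted average of ratings, using weights keyed by criterion."""
    total_weight = sum(weights.values())
    return sum(ratings[criterion] * w for criterion, w in weights.items()) / total_weight


# Hypothetical weights reflecting one organization's priorities.
weights = {"scale": 3, "ease_of_use": 2, "regulatory": 3, "budget_fit": 2}

# Hypothetical ratings on a 1 to 5 scale (higher is better).
candidates = {
    "Tool A": {"scale": 5, "ease_of_use": 2, "regulatory": 5, "budget_fit": 2},
    "Tool B": {"scale": 3, "ease_of_use": 4, "regulatory": 3, "budget_fit": 4},
}

# Rank candidates from highest to lowest weighted score.
ranked = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)

for name in ranked:
    print(name, round(weighted_score(candidates[name], weights), 2))
```

Changing the weights to match a different organization's priorities can reorder the ranking, which is the point of making the criteria explicit.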

Examine User Experiences and Case Studies in Data Stewardship

User experiences and case studies provide critical insights into the effectiveness of various data stewardship tools.

Collibra: A financial services firm implemented Collibra to enhance its data governance framework. This initiative resulted in improved compliance with regulatory standards and a notable reduction in data-related errors.

Informatica: A healthcare organization used Informatica to streamline its data integration processes. This led to improved data quality and quicker reporting times, which were essential for timely patient care decisions.

Alation: A retail company adopted Alation to enhance data accessibility across departments. This tool facilitated better collaboration and data-driven decision-making, ultimately boosting sales performance.

Decube: A telecommunications provider used Decube's AI-driven insights to monitor data quality in real time. This proactive approach enabled them to address data issues before they impacted operations, significantly enhancing their governance practices. Users have commended Decube for its observability features, particularly the lineage mapping capability, which shows the entire data flow across components. This user-focused design not only improves data quality and trust but also reflects Decube's commitment to customer service and intuitive monitoring solutions.

These case studies illustrate how various organizations have successfully utilized data stewardship tools to meet their governance objectives.

Conclusion

Effective data stewardship is fundamental to strong governance, ensuring organizations manage their information assets with integrity and compliance. By implementing appropriate tools, businesses can enhance the quality and accessibility of their data while significantly mitigating risks associated with mismanagement. The examination of various data stewardship tools underscores the necessity of aligning technology with organizational needs to cultivate a culture of informed decision-making and operational efficiency.

In this article, we have analyzed key tools such as Collibra, Informatica, Alation, and Decube, focusing on their unique features, advantages, disadvantages, and real-world applications.

- Collibra is notable for its governance capabilities.

- Informatica excels in ensuring data quality.

- Alation promotes collaboration.

- Decube provides AI-driven insights.

Each tool presents distinct benefits and challenges, highlighting the importance of selecting a solution that aligns with the specific requirements of an organization, including scale, complexity, and regulatory demands.

Ultimately, the importance of data stewardship extends beyond mere compliance; it involves fostering trust and enabling data-driven decision-making at all levels of an organization. As businesses navigate an increasingly data-centric landscape, investing in the right stewardship tools becomes essential. Organizations are encouraged to critically evaluate their options, leveraging insights from user experiences and case studies to drive successful governance outcomes and ensure sustainable business performance.

Frequently Asked Questions

What is data stewardship?

Data stewardship refers to the comprehensive administration and oversight of an organization's information assets, ensuring their quality, accessibility, and security.

Why is data stewardship important in governance?

Data stewardship is crucial for maintaining information integrity, adhering to regulatory standards, fostering trust in data, enhancing decision-making capabilities, and mitigating risks associated with information mismanagement.

What are the consequences of poor data management in healthcare organizations?

Poor data management can lead to issues such as duplicate patient records. Healthcare organizations typically see duplicate rates of 8% to 12%, and larger health systems can see rates as high as 15%.

How can organizations reduce discrepancies in their data?

Organizations can significantly reduce discrepancies by implementing robust data stewardship practices and using data stewardship tools, such as those supported by Decube's Unified Data Trust Platform.

What are the benefits of effective data stewardship practices?

Effective data stewardship practices support strategic objectives, empower organizations to make informed decisions, drive operational efficiency, and enhance overall business performance.

