
4 Best Practices to Build a Strong Culture of Data

Discover best practices for cultivating a strong culture of data within your organization.

Introduction

In today’s competitive landscape, organizations must prioritize building a robust data culture to remain viable. By embracing best practices that emphasize:

- Leadership commitment

- Employee empowerment

- Strong governance

- Cross-functional collaboration

organizations can unlock the full potential of their data assets. Yet despite the recognized importance of data culture, many organizations struggle to implement effective strategies, and leaders must find practical ways to foster data-driven decision-making as market demands evolve. This article outlines four strategies organizations can adopt to cultivate a strong data culture and thrive in the data-driven age.

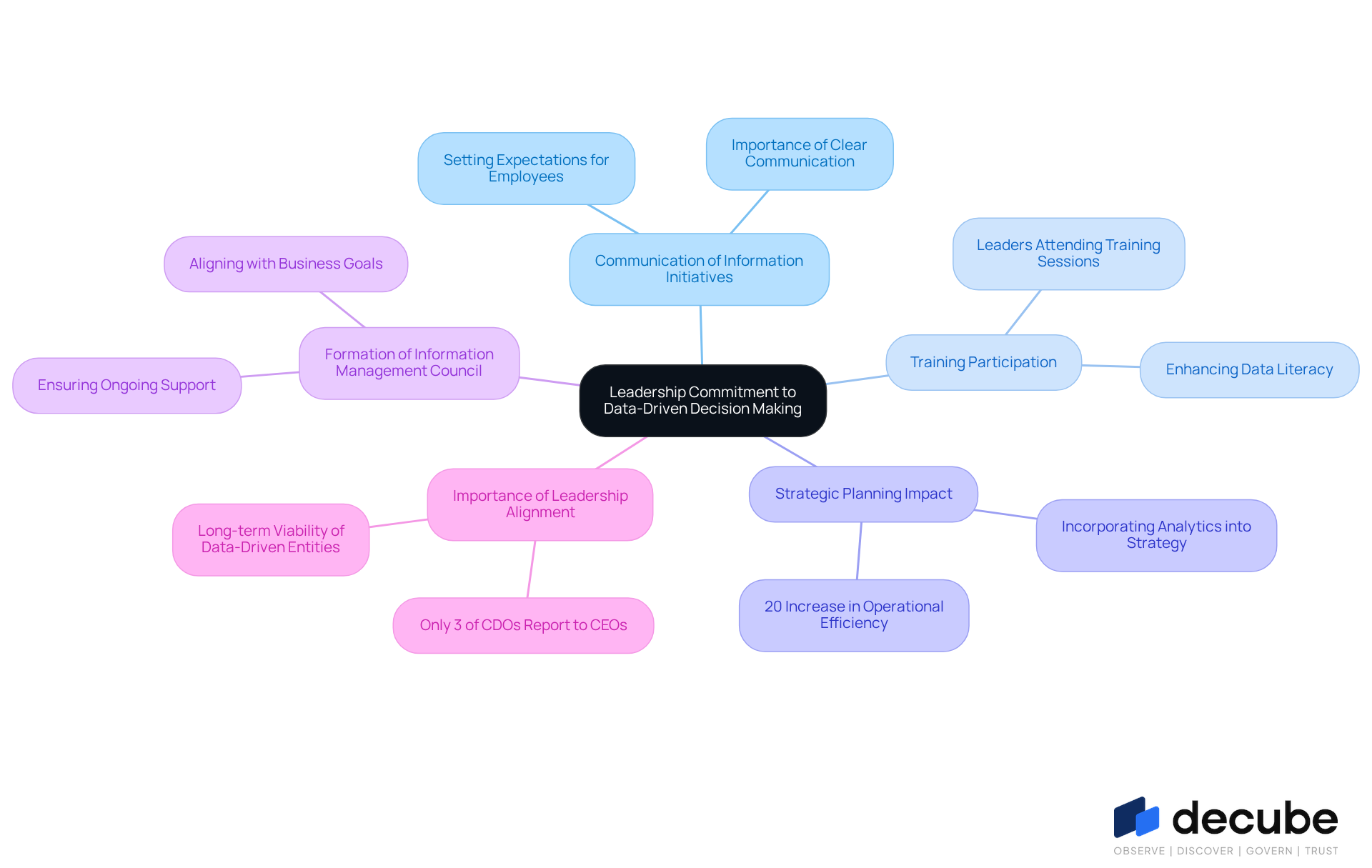

Cultivate Leadership Commitment to Data-Driven Decision Making

To foster a culture of evidence-based decision-making, organizations must clearly communicate their data initiatives. Leaders should actively participate in these initiatives, demonstrating their commitment by attending training sessions and integrating data into their own decision-making. For instance, a financial services company that incorporated analytics into its strategic planning achieved a 20% increase in operational efficiency, illustrating the impact of leadership involvement.

Organizations also face increasing pressure from consumers and communities, which complicates their ability to implement effective data management strategies. Forming a data management council that includes key leaders can ensure ongoing support and alignment with overall business goals. Notably, just 3% of Chief Data Officers (CDOs) currently report directly to the CEO, which underscores the need for leadership alignment on data management. This alignment matters because data-driven organizations are better prepared to adapt to economic challenges and remain viable in the long term.

Decube's automated crawling feature helps organizations improve data observability and governance by simplifying metadata management and ensuring secure access control. Once data sources are connected, metadata is updated automatically, so it stays current and trustworthy. User testimonials, such as one from Piyush P., highlight how Decube's automated column-level lineage combines cataloging with observability. Tools like these improve data quality and team collaboration, but they deliver the most value when leadership is committed to a robust data culture.

Ultimately, leadership's commitment to data management will shape an organization's resilience as economic conditions evolve.

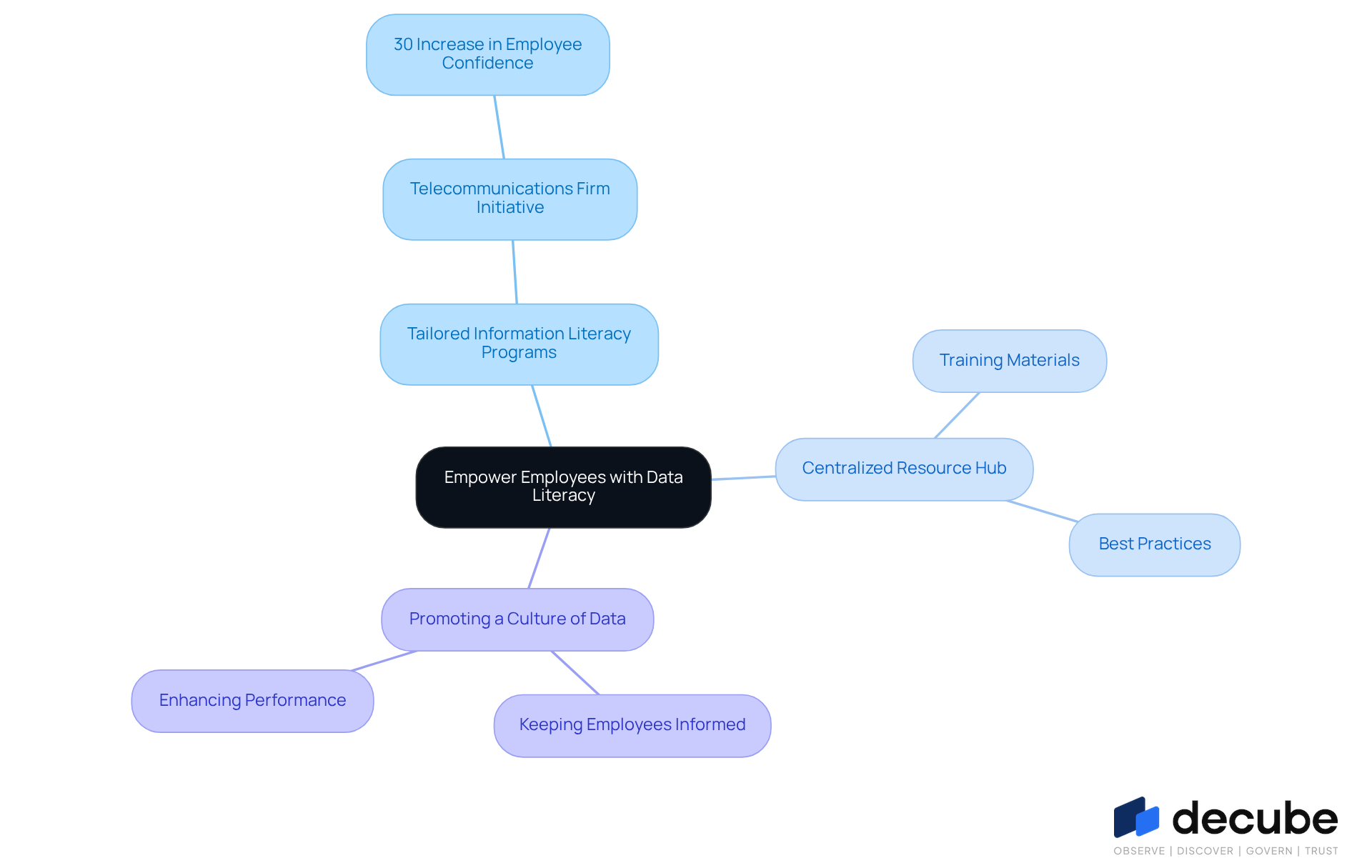

Empower Employees with Data Literacy and Training Resources

To navigate the complexities of modern data landscapes, organizations must prioritize tailored data literacy programs that cater to diverse skill levels. For instance, a telecommunications firm that implemented a data literacy initiative saw a 30% increase in employee confidence in using data for decision-making.

Establishing a centralized resource hub where employees can easily access training materials and best practices not only enhances the learning experience but also fosters a culture of data and continuous improvement among employees.

Promoting continuous learning keeps employees informed about the latest tools and techniques, which improves overall performance and engagement. Without such initiatives, organizations risk stagnation in employee development and decision-making capabilities.

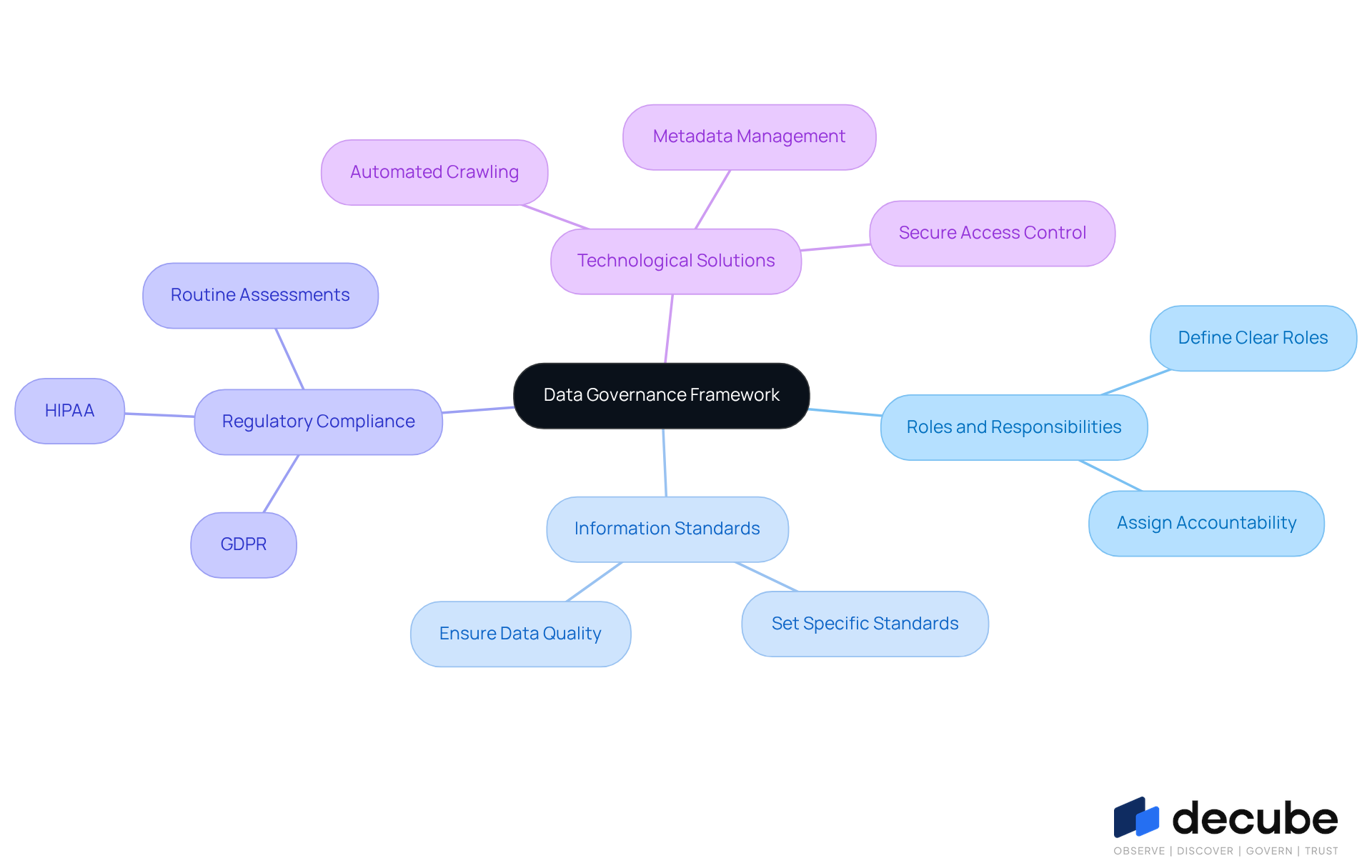

Implement Strong Data Governance Frameworks for Quality and Compliance

To establish an effective data governance framework, organizations must clearly define roles and responsibilities, set specific data standards, and ensure adherence to regulations such as GDPR and HIPAA. For instance, a healthcare organization that established a comprehensive data governance framework achieved a 40% decrease in errors, which significantly improved patient outcomes.
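As a rough illustration of what "clear roles and specific data standards" can look like once written down, here is a minimal sketch in Python. It assumes a hypothetical patient-records table: each standard is a named rule with an accountable owner, and an audit function reports which records break which rule. The field names, owners, and bounds are illustrative assumptions, not part of any particular framework or of Decube's product, and passing such checks does not by itself establish GDPR or HIPAA compliance.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DataStandard:
    """A named rule that every record must satisfy, plus the role accountable for it."""
    name: str
    owner: str  # hypothetical accountable role, e.g. a data steward
    check: Callable[[dict], bool]


# Hypothetical standards for an illustrative patient-records table.
# Field names and the year bounds are assumptions chosen for the example.
STANDARDS = [
    DataStandard("patient_id is present", "data steward",
                 lambda r: bool(r.get("patient_id"))),
    DataStandard("birth_year is plausible", "data steward",
                 lambda r: isinstance(r.get("birth_year"), int)
                 and 1900 <= r["birth_year"] <= 2025),
    DataStandard("consent is recorded", "privacy officer",
                 lambda r: r.get("consent") in {"granted", "withdrawn"}),
]


def audit(records: list[dict]) -> dict[str, list[int]]:
    """Return, for each standard, the indexes of the records that violate it."""
    violations: dict[str, list[int]] = {s.name: [] for s in STANDARDS}
    for i, record in enumerate(records):
        for standard in STANDARDS:
            if not standard.check(record):
                violations[standard.name].append(i)
    return violations


records = [
    {"patient_id": "P001", "birth_year": 1984, "consent": "granted"},
    {"patient_id": "", "birth_year": 1850, "consent": "granted"},
    {"patient_id": "P003", "birth_year": 1990},  # consent missing
]
report = audit(records)
for name, rows in report.items():
    print(f"{name}: {rows}")
```

Attaching an owner to every rule is the design choice worth noting: when a check fails, the report points to a rule that a specific role is responsible for, which is what "defining roles and responsibilities" means in day-to-day practice.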

Poor data accuracy is costly: firms are projected to lose approximately 12% of their annual income due to inadequate data management, and 84% of digital transformation initiatives fail because of inadequate data quality and oversight. Routine reviews of governance practices are therefore crucial for adapting to changing regulations and maintaining high data quality standards.

Technology can also help. Decube's automated crawling feature improves governance efficiency and precision through effortless metadata management and secure access control, keeping data trustworthy and consistent and promoting a culture of accountability and trust.

As Karolina Fox states, "Organizations that fail to automate will find themselves paralyzed by compliance bottlenecks, while those who adapt will thrive in the autonomous era." Ultimately, organizations that prioritize data governance will not only comply with regulations but also improve their operational effectiveness.

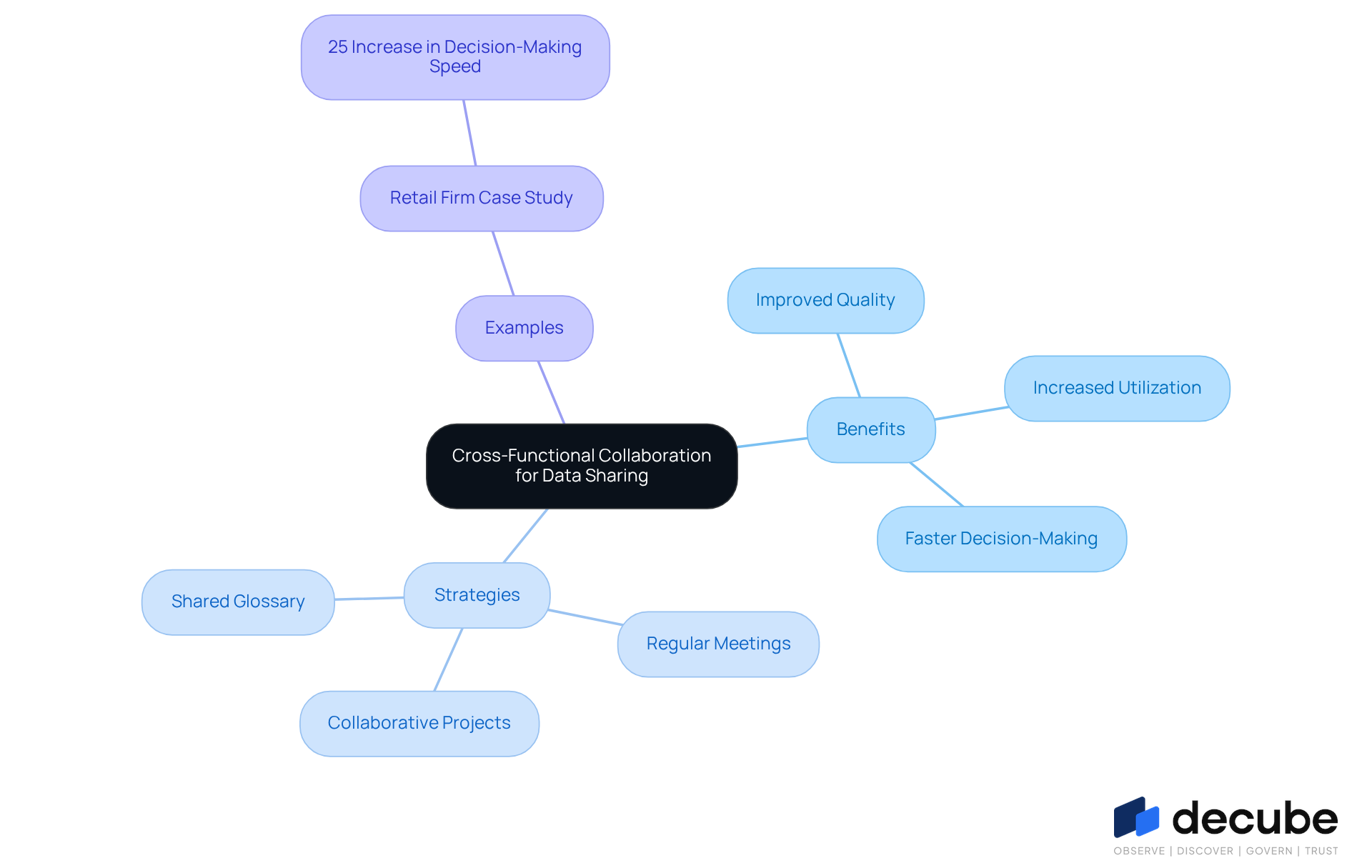

Foster Cross-Functional Collaboration for Enhanced Data Sharing

To improve data management and decision-making, organizations must prioritize cross-functional collaboration on data initiatives. Collaboration lets teams share insights and best practices, which improves both data quality and how widely data is used. Regular inter-departmental meetings and joint projects strengthen communication and build deeper mutual understanding among teams.

For instance, a prominent retail firm that implemented cross-functional information teams experienced a remarkable 25% increase in the speed of insights-driven decision-making. Furthermore, establishing a shared glossary of terms and definitions is crucial for effective communication about data, promoting a unified approach to data management and collaboration.
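To make the idea of a shared glossary concrete, here is a minimal Python sketch under stated assumptions: each term has one agreed definition and an owner, team-specific synonyms resolve to the same canonical term, and a synonym that would point to two different terms is rejected. The class names, the "Active Customer" term, and its 90-day definition are all hypothetical, chosen only for illustration; this is not the data model of any specific catalog tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GlossaryTerm:
    """One agreed business term, its definition, and the team that maintains it."""
    name: str
    definition: str
    owner: str
    synonyms: set[str] = field(default_factory=set)


class BusinessGlossary:
    """A single shared glossary that maps team-specific synonyms to one canonical term."""

    def __init__(self) -> None:
        self._terms: dict[str, GlossaryTerm] = {}
        self._aliases: dict[str, str] = {}  # lowercase alias -> canonical term name

    def add(self, term: GlossaryTerm) -> None:
        aliases = {a.lower() for a in {term.name, *term.synonyms}}
        # Validate every alias first so a conflict leaves the glossary unchanged.
        for alias in aliases:
            existing = self._aliases.get(alias)
            if existing is not None and existing != term.name:
                raise ValueError(f"'{alias}' already refers to '{existing}'")
        for alias in aliases:
            self._aliases[alias] = term.name
        self._terms[term.name] = term

    def lookup(self, word: str) -> Optional[GlossaryTerm]:
        """Case-insensitive lookup by canonical name or synonym."""
        canonical = self._aliases.get(word.lower())
        return self._terms.get(canonical) if canonical else None


# Hypothetical example: Sales says "active client", Finance says "current customer".
glossary = BusinessGlossary()
glossary.add(GlossaryTerm(
    name="Active Customer",
    definition="A customer with at least one purchase in the last 90 days.",
    owner="Sales Operations",
    synonyms={"active client", "current customer"},
))

term = glossary.lookup("Active Client")
if term is not None:
    print(term.name, "-", term.definition)
```

Rejecting conflicting synonyms is the key design choice here: it forces teams to agree on what a word means before it enters the shared vocabulary, rather than letting two departments silently use the same label for different metrics.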

Ultimately, fostering a culture of data collaboration can transform how organizations leverage data for strategic advantage.

Conclusion

Building a strong culture of data is a strategic imperative for organizations that want to thrive in today's competitive landscape, and it can drive significant improvements in decision-making and operational efficiency. By prioritizing leadership commitment, empowering employees through data literacy, establishing strong governance frameworks, and fostering cross-functional collaboration, organizations can create an environment where data is valued and effectively used.

The key practices outlined include:

- Active leadership engagement in data initiatives improves operational outcomes, as the financial services case illustrates.

- Providing tailored training resources empowers employees, ensuring they have the skills needed to leverage data effectively.

- Implementing a strong governance framework safeguards data quality and compliance.

- Promoting collaboration across departments enhances the sharing of insights and best practices.

Ultimately, embracing these best practices will not only improve an organization's ability to navigate complex data landscapes but also position it for long-term success. Organizations should recognize the transformative power of a strong data culture and take concrete steps to foster it; those that fail to prioritize data culture risk stagnation and obsolescence in a rapidly evolving market.

Frequently Asked Questions

Why is leadership commitment important for data-driven decision making?

Leadership commitment is crucial because it fosters a culture of evidence-based decision-making, ensuring that information initiatives are prioritized and effectively integrated into organizational processes.

How can leaders demonstrate their commitment to data-driven decision making?

Leaders can demonstrate their commitment by actively participating in training sessions, incorporating data into their own decision-making processes, and supporting information management initiatives.

What impact can leadership involvement have on organizational efficiency?

Leadership involvement can lead to significant improvements in operational efficiency, as evidenced by a financial services company that achieved a 20% increase in efficiency by incorporating analytics into its strategic planning.

What challenges do organizations face in implementing effective information management strategies?

Organizations face increasing pressure from consumers and communities, which complicates their ability to implement effective information management strategies.

How can forming a council for information management benefit organizations?

Forming a council for information management that includes key leaders can ensure ongoing support and alignment with overall business goals, facilitating better information governance.

What is the current state of Chief Data Officers (CDOs) in relation to leadership alignment?

Currently, only 3% of Chief Data Officers report directly to the CEO, highlighting the need for better leadership alignment in information management.

How does Decube's automated crawling feature enhance information governance?

Decube's automated crawling feature simplifies metadata management and ensures secure access control by automatically updating metadata once sources are linked, keeping information current and trustworthy.

What are the benefits of Decube's automated column-level lineage?

Decube's automated column-level lineage combines cataloging with observability, enhancing information quality and fostering collaboration among teams.

What is the overall impact of leadership commitment to information management on an organization?

The commitment of leadership to information management is vital for an organization's resilience, particularly in adapting to evolving economic landscapes.

List of Sources

Cultivate Leadership Commitment to Data-Driven Decision Making

- The Importance of Data-Driven Leadership - hireneXus (https://hirenexus.com/the-importance-of-data-driven-leadership)

- What Will Great Business Leadership Look Like in 2026? - Aspen Institute (https://aspeninstitute.org/blog-posts/what-will-great-business-leadership-look-like-in-2026)

- Enterprise Data Governance 2026: A Strategic Priorities Guide - Bluent (https://bluent.com/blog/enterprise-data-governance-priorities)

- Data-Driven Decision Making & The Rise Of The Chief Data Officer - Forbes Councils (https://councils.forbes.com/blog/data-driven-decision-making-the-rise-of-the-chief-data-officer)

- The Power of Data-Driven Decision-Making in Leadership - Velosio (https://velosio.com/blog/the-power-of-data-driven-decision-making-in-leadership)

Empower Employees with Data Literacy and Training Resources

- Employee Training Statistics and Trends to Know in 2026 - D2L (https://d2l.com/blog/employee-training-statistics)

- Data Literacy Skills Gap in Enterprise: 2026 Insights - DataCamp (https://datacamp.com/blog/the-data-literacy-skills-gap-in-enterprise-why-table-stakes-still-aren-t-universal)

- Learning Drives Results in 2026 Report - Jeffrey Riley, LinkedIn (https://linkedin.com/posts/jeffreylriley_trends-2026-reinforcing-the-strategic-value-activity-7452027183151316992-eo3d)

- 2026 Training Industry Statistics: Data, Trends & Predictions - Research.com (https://research.com/careers/training-industry-statistics)

Implement Strong Data Governance Frameworks for Quality and Compliance

- Top data governance trends: The future of data in 2026 - Murdio (https://murdio.com/insights/data-governance-trends)

- Data Governance Statistics And Facts (2025): Emerging Technologies, Challenges And Adoption, AI, ROI, and Data Quality Insights - ElectroIQ (https://electroiq.com/stats/data-governance)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report - PR Newswire (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

Foster Cross-Functional Collaboration for Enhanced Data Sharing

- Data Governance Best Practices for 2026: Drive Business Value with Trusted Data - Alation (https://alation.com/blog/data-governance-best-practices)

- How to Implement Cross Functional Collaboration in 2026 - MockFlow (https://mockflow.com/blog/cross-functional-collaboration)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- How enterprises are rethinking collaborative analytics - CIO (https://cio.com/article/4148653/how-enterprises-are-rethinking-collaborative-analytics.html)

- How cross-functional teams rewrite the rules of IT collaboration - CIO (https://cio.com/article/4065346/how-cross-functional-teams-rewrite-the-rules-of-it-collaboration.html)
