4 Best Practices for Effective Data Discovery Platforms

Explore best practices for leveraging data discovery platforms to enhance data management and governance.

Introduction

Data discovery platforms are crucial for organizations navigating the complexities of modern data management. These specialized tools help businesses locate, analyze, and use their data efficiently, which supports informed decision-making and a competitive edge. Implementing them, however, presents significant challenges that call for strategic best practices. Identifying the essential features and approaches can turn data discovery into a streamlined process that improves operational efficiency and compliance.

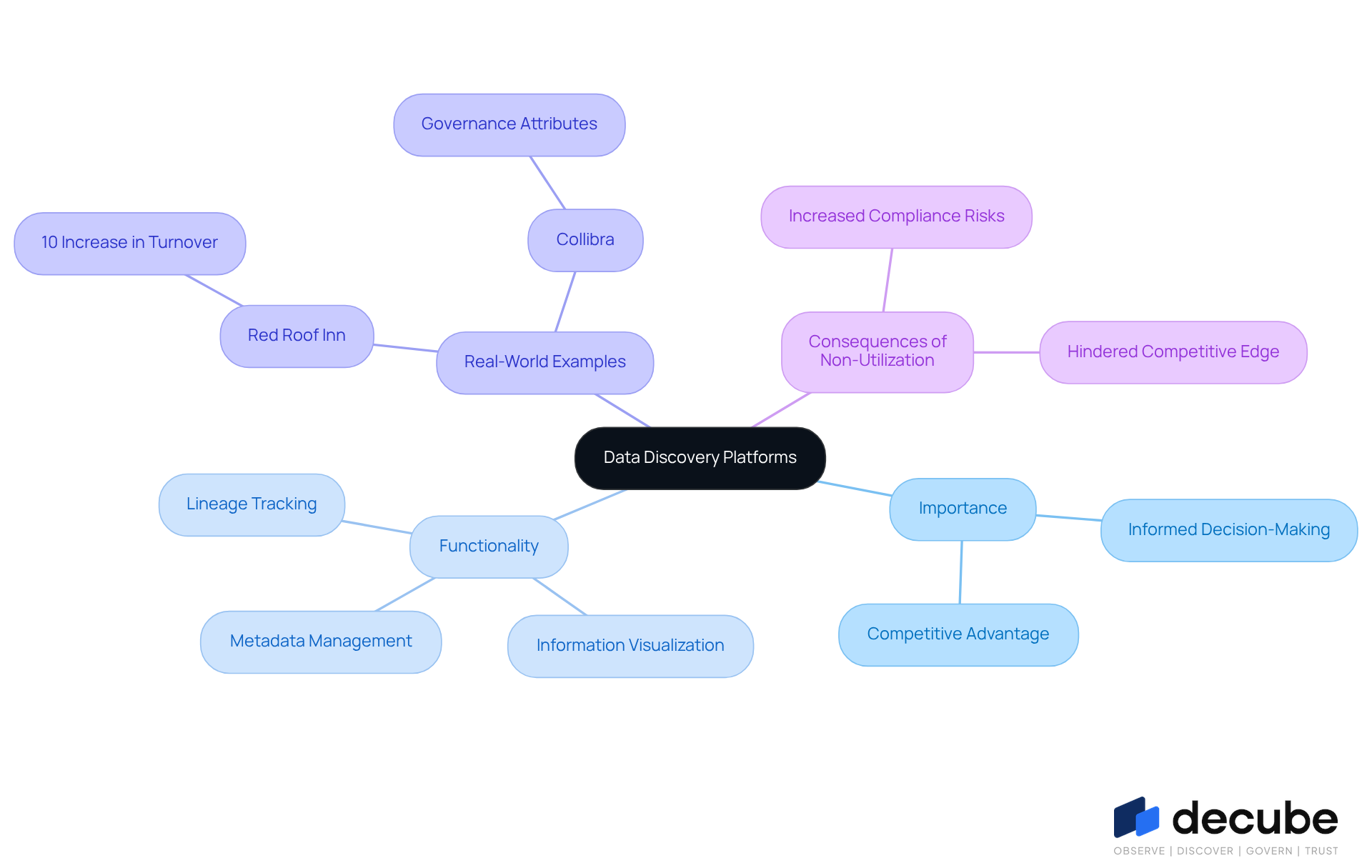

Define Data Discovery Platforms and Their Importance

As data complexity grows, organizations face significant challenges in managing their data effectively. Data discovery platforms are software tools that enable organizations to find, understand, and use their data across various sources. They support exploration and analysis, allowing users to uncover insights that inform decision-making. Data-driven organizations are reported to be 23 times more likely to acquire customers and six times more likely to retain them, which highlights the competitive advantage of efficient data management.

Recent advances have further extended the capabilities of data discovery platforms. For example, platforms such as Atlan and Collibra have incorporated active metadata management, which automates actions based on metadata changes, streamlining workflows and improving the user experience. These innovations matter for organizations that must comply with regulations such as GDPR and HIPAA, because they offer structured methods for governance and quality assurance.
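To make the idea of "automating actions based on metadata changes" concrete, here is a minimal sketch in Python. It is not how Atlan or Collibra implement active metadata; the `MetadataChange` event, the `ActiveMetadataBus` class, and the two actions are hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class MetadataChange:
    """Hypothetical metadata event: a tag was added to an asset."""
    asset: str  # e.g. "warehouse.customers.email"
    tag: str    # e.g. "pii"


@dataclass
class ActiveMetadataBus:
    """Routes each metadata change to the actions registered for its tag."""
    handlers: Dict[str, List[Callable[[MetadataChange], str]]] = field(default_factory=dict)

    def on_tag(self, tag: str, action: Callable[[MetadataChange], str]) -> None:
        self.handlers.setdefault(tag, []).append(action)

    def publish(self, change: MetadataChange) -> List[str]:
        # Run every action registered for this tag, in registration order.
        return [action(change) for action in self.handlers.get(change.tag, [])]


# Hypothetical actions; a real system would call a masking or alerting service.
def mask_column(change: MetadataChange) -> str:
    return f"mask:{change.asset}"


def notify_steward(change: MetadataChange) -> str:
    return f"notify:{change.asset}"


bus = ActiveMetadataBus()
bus.on_tag("pii", mask_column)
bus.on_tag("pii", notify_steward)

# Tagging a column as PII triggers both actions without manual follow-up.
actions = bus.publish(MetadataChange("warehouse.customers.email", "pii"))
print(actions)
```

The design choice worth noting is that the actions are registered per tag, so a governance team can add or change reactions without touching the code that detects metadata changes.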

Real-world examples show how analytics and governance tooling pay off. For instance, Red Roof Inn used analytics to launch targeted marketing campaigns based on flight cancellations and weather conditions, leading to a 10% rise in turnover. Similarly, organizations using platforms such as Collibra benefit from extensive governance features that keep compliance context attached to data, reducing the likelihood of ungoverned data use.

By providing tools for data visualization, metadata management, and lineage tracking, data discovery platforms enable organizations to use their data effectively. Decube distinguishes itself with its automated crawling capability, which keeps metadata up to date with little manual effort and strengthens data observability and governance. It simplifies workflows while enforcing secure access control. The result is greater operational effectiveness and better strategic insight. Organizations that fail to adopt data discovery platforms risk falling behind in a competitive landscape.
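Lineage tracking can be pictured as a walk over a graph of columns. The sketch below is a generic illustration under stated assumptions, not Decube's implementation: the `LINEAGE` mapping and all column names are hypothetical, and the traversal is a plain breadth-first search.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the columns listed as its value.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": [
        "marts.revenue.daily_total",
        "marts.finance.ledger_amount",
    ],
    "marts.revenue.daily_total": ["dashboards.exec.revenue"],
}


def downstream(column, edges):
    """Breadth-first walk returning every column affected by a change to `column`."""
    seen = {column}
    queue = deque([column])
    order = []
    while queue:
        current = queue.popleft()
        for child in edges.get(current, []):
            if child not in seen:  # guard against revisiting shared or cyclic nodes
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


impacted = downstream("raw.orders.amount", LINEAGE)
print(impacted)
```

An impact list like this is what lets a team check, before changing a source column, which reports and dashboards depend on it.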

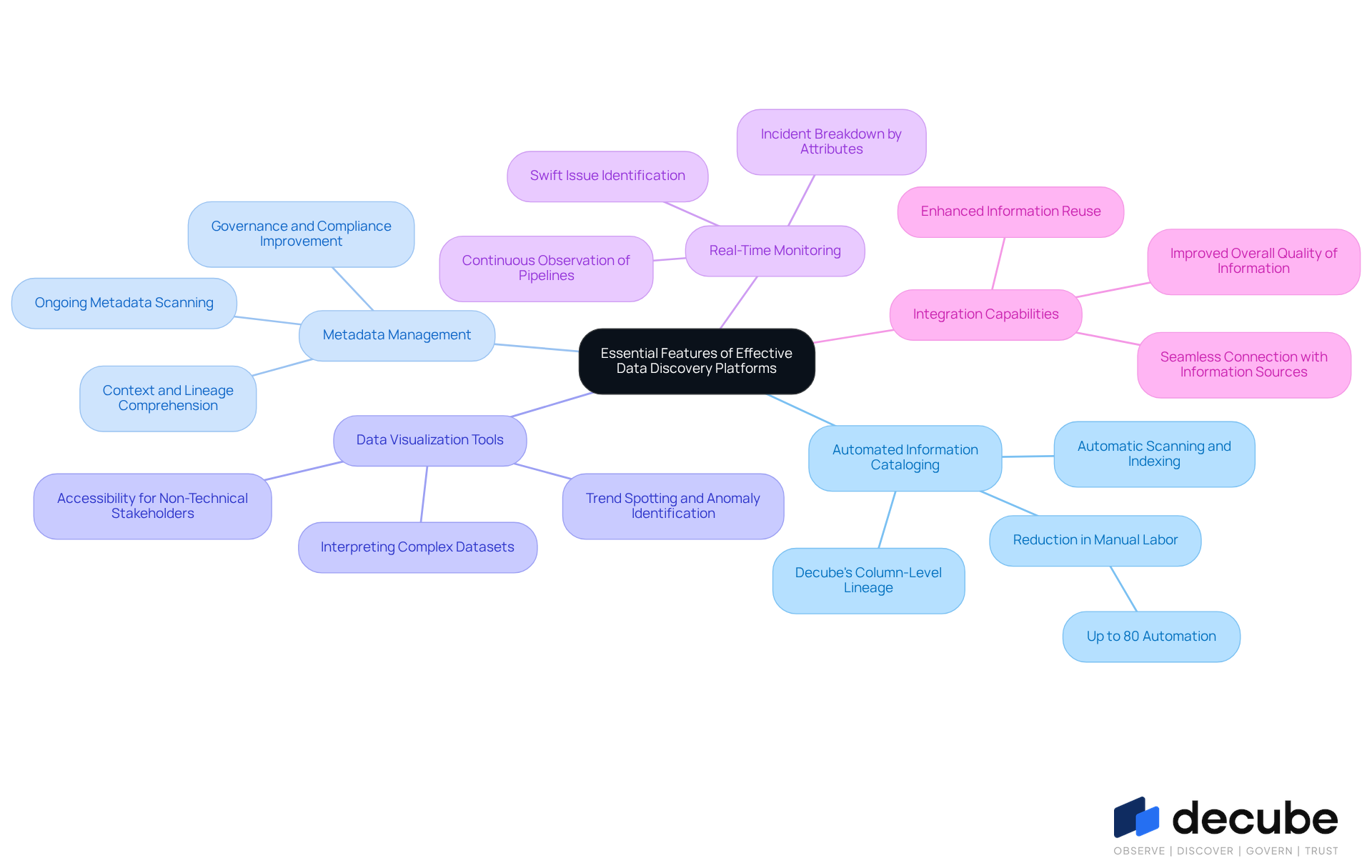

Identify Essential Features of Effective Data Discovery Platforms

To optimize functionality, effective data discovery platforms must integrate essential features that enhance data management and governance:

- Automated Information Cataloging: Automatically scans and indexes data across diverse sources so that all assets are readily discoverable, streamlining data management. Organizations using automated cataloging report substantial reductions in manual labor, with some achieving up to 80% automation in their data discovery processes. Decube supports this with automated column-level lineage, combining its data catalog and observability modules.

- Metadata Management: Strong metadata management is essential for understanding the context and lineage of data. It improves governance and compliance, helping organizations maintain transparency and trust in their data assets. Continuous metadata scanning keeps the catalog accurate as environments change. Decube's lineage feature shows how data flows end to end across components, helping users understand their data.

- Data Visualization Tools: Visualization tools help interpret complex datasets and make insights accessible to non-technical stakeholders. They let users spot trends and identify anomalies quickly, which supports informed decision-making and collaboration across departments.

- Real-Time Monitoring: Continuous monitoring of data pipelines is essential for catching anomalies and issues early. This proactive approach helps maintain data integrity and lets organizations respond quickly to quality concerns. Decube's monitoring tools break incidents down by attribute, which is especially useful for organizations with several business segments.

- Integration Capabilities: The ability to connect seamlessly with various data sources and tools makes the platform more flexible and usable. Integration also promotes data reuse and improves overall data quality.
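As an illustration of the automated cataloging feature listed above, the following sketch crawls a database and builds a searchable index of its columns. It assumes a SQLite source purely so the example is self-contained; the `crawl_catalog` and `search` helpers are hypothetical, and a production crawler would cover many source types and run on a schedule.

```python
import sqlite3


def crawl_catalog(conn):
    """Return {table_name: [(column_name, declared_type), ...]} for every user table."""
    catalog = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        quoted = table.replace('"', '""')  # escape quotes in the identifier
        # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
        columns = conn.execute(f'PRAGMA table_info("{quoted}")').fetchall()
        catalog[table] = [(col[1], col[2]) for col in columns]
    return catalog


def search(catalog, term):
    """Find every 'table.column' whose column name contains `term`."""
    return sorted(
        f"{table}.{name}"
        for table, columns in catalog.items()
        for name, _type in columns
        if term in name
    )


# Demo source: an in-memory database with two made-up tables.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)

catalog = crawl_catalog(conn)
print(search(catalog, "email"))
```

Because the catalog is rebuilt from the source's own schema metadata, re-running the crawl picks up new tables and columns without manual registration.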

By combining these features, organizations can ensure that their data discovery platforms support their data management and governance goals, improving operational effectiveness and compliance and allowing teams to navigate their data landscapes with confidence and precision.
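The monitoring feature can be sketched in a similarly small way. The example below flags a pipeline run whose row count deviates sharply from recent history using a z-score. This is one simple technique chosen for illustration, not a description of any vendor's detector, and the `is_anomalous` helper, the threshold, and the sample counts are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it lies more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a perfectly constant history is unusual
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily row counts for one pipeline.
daily_row_counts = [10_120, 9_980, 10_050, 10_200, 9_940]

print(is_anomalous(daily_row_counts, 10_100))  # an ordinary day
print(is_anomalous(daily_row_counts, 4_200))   # a sharp drop in volume
```

A fixed z-score threshold is easy to reason about but ignores seasonality, so real deployments often pair it with day-of-week baselines or similar adjustments.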

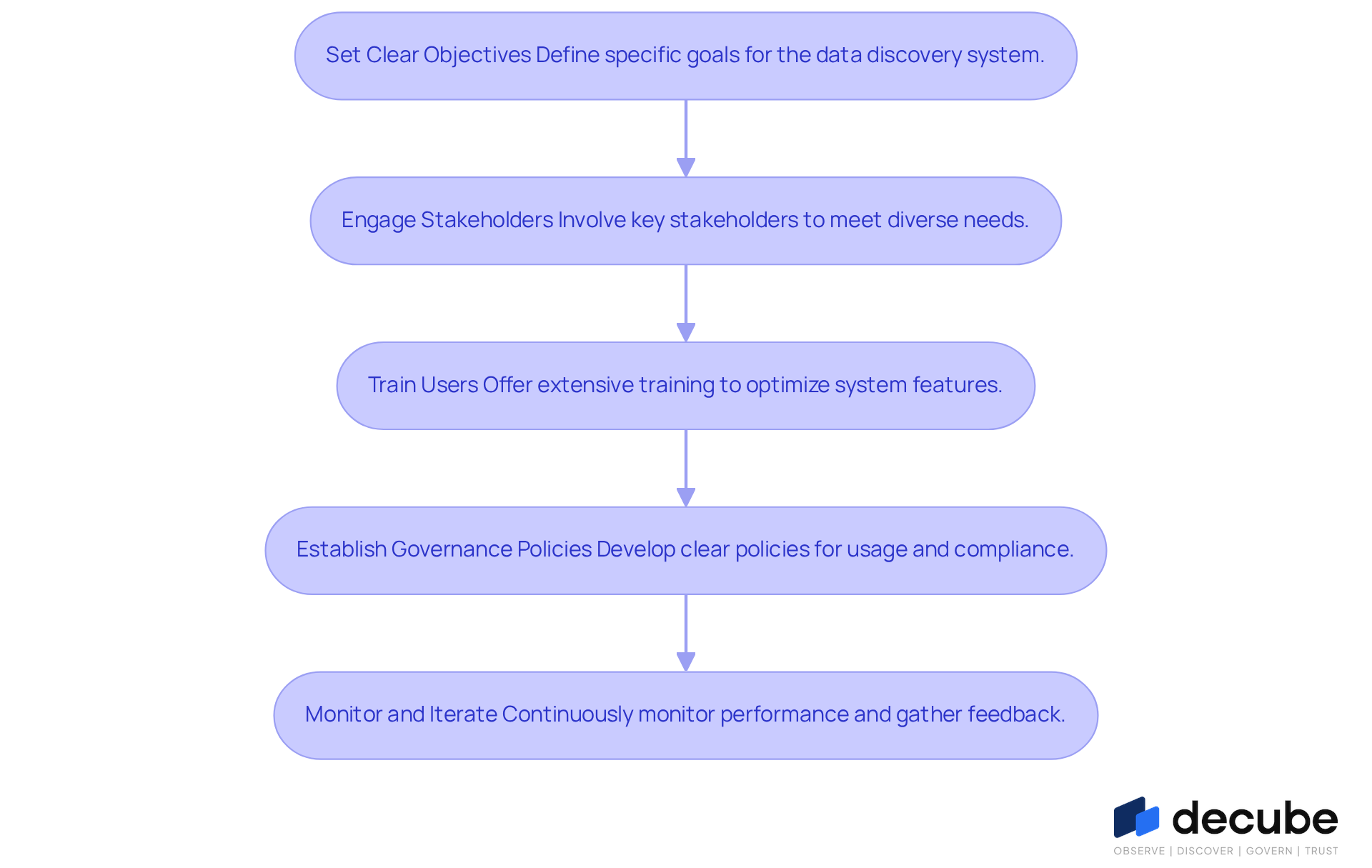

Implement Best Practices for Successful Data Discovery Deployment

To successfully deploy data discovery platforms, organizations must navigate various challenges and implement strategic best practices:

- Set Clear Objectives: Define specific goals for the data discovery system, such as improving data quality or enhancing compliance. This clarity assists in aligning efforts with organizational needs and ensures that the system provides measurable outcomes.

- Engage Stakeholders: Involve key stakeholders from various departments to ensure the system meets diverse needs and fosters collaboration. Effective stakeholder engagement is essential: studies suggest that organizations with greater stakeholder involvement see a 15% rise in employee retention and better project results.

- Train Users: Offer extensive training for users to optimize the system's features and promote adoption throughout the organization. Training ensures that users are equipped to leverage the platform effectively, enhancing overall productivity.

- Establish Governance Policies: Develop clear information governance policies that outline usage, access controls, and compliance requirements. This framework is essential for responsible information management and helps mitigate risks associated with misuse.

- Monitor and Iterate: Continuously monitor the platform's performance and gather user feedback to make necessary adjustments and improvements. Regular reviews help organizations adapt to evolving data landscapes and stakeholder requirements.

Implementing these practices helps organizations get more from their data discovery platforms, leading to improved governance and more effective decision-making. Neglecting them can lead to significant inefficiencies and weaker decision-making.
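Governance policies are easier to enforce when they are written down in a machine-checkable form. The following is a minimal sketch of that idea, assuming a simple role-based model; the classifications, roles, and the `can_read` helper are hypothetical and would be replaced by an organization's own policy definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    classification: str       # e.g. "pii" or "internal"
    allowed_roles: frozenset  # roles permitted to read data with this classification


# Hypothetical policy table; real policies would come from the governance team.
POLICIES = {
    "pii": Policy("pii", frozenset({"data_steward", "compliance"})),
    "internal": Policy("internal", frozenset({"data_steward", "compliance", "analyst"})),
}


def can_read(role, classification, policies=POLICIES):
    """Deny by default: data with an unknown classification is readable by no one."""
    policy = policies.get(classification)
    return policy is not None and role in policy.allowed_roles


print(can_read("analyst", "internal"))
print(can_read("analyst", "pii"))
```

Denying access for unknown classifications means newly discovered, unlabelled data stays protected until someone classifies it.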

Overcome Challenges in Data Discovery Implementation

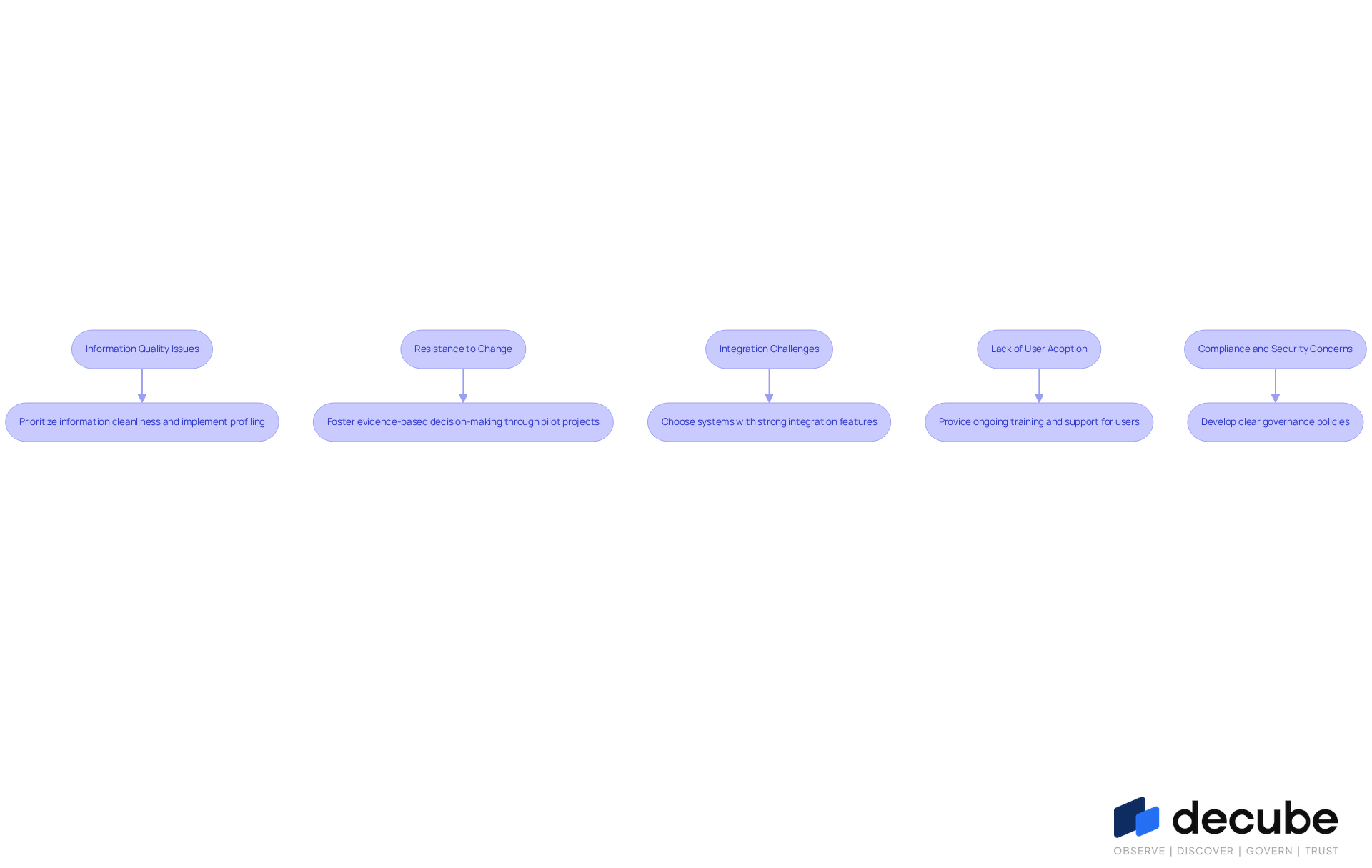

Organizations frequently encounter significant hurdles during the implementation of data discovery platforms. Here are effective strategies to navigate these obstacles:

- Data Quality Issues: Prioritize clean, accurate data before deployment. Implement thorough data profiling and cleansing processes to correct inconsistencies. Decube's automated monitoring improves data quality by detecting issues early, so the data remains reliable for decision-making.

- Resistance to Change: Foster a culture of evidence-based decision-making by demonstrating the advantages of the data discovery platform through targeted pilot projects and compelling success stories. Users praise Decube for its intuitive design, which builds trust and eases the transition for stakeholders.

- Integration Challenges: Choose systems that offer strong integration features with current frameworks. Decube seamlessly integrates with various data connectors, minimizing disruption and facilitating smooth data flow, making it easier for teams to adopt the new tools without significant operational hurdles.

- Lack of User Adoption: Ongoing training and support for users can effectively showcase the system's benefits and ease of use. Testimonials highlight Decube's excellent customer service, where team members take the time to understand business needs, significantly enhancing user adoption rates.

- Compliance and Security Concerns: Develop clear governance policies to ensure that the platform adheres to relevant regulations. Decube's features, such as secure access control and automated crawling for metadata management, enhance governance and compliance efforts, mitigating risks and building trust in information management processes.

By navigating these challenges effectively, organizations can use data discovery platforms to manage data better and operate more efficiently.
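The profiling step recommended for data quality issues can start very small. The sketch below computes per-column null rates and counts duplicate key values over a list of records. The `profile` helper and the sample rows are hypothetical, and treating empty strings as missing is an assumption that may not suit every dataset.

```python
def profile(rows, key):
    """Return per-column null rates and the number of duplicated `key` values."""
    total = len(rows)
    if total == 0:
        return {"rows": 0, "null_rate": {}, "duplicate_keys": 0}
    columns = sorted({col for row in rows for col in row})
    null_rate = {
        # Assumption: None and empty strings both count as missing.
        col: sum(1 for row in rows if row.get(col) in (None, "")) / total
        for col in columns
    }
    keys = [row.get(key) for row in rows]
    duplicate_keys = len(keys) - len(set(keys))
    return {"rows": total, "null_rate": null_rate, "duplicate_keys": duplicate_keys}


# Made-up records with one duplicated id and two missing emails.
rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": ""},
]

report = profile(rows, key="id")
print(report)
```

Running a report like this before deployment gives a baseline, so later monitoring can tell whether quality is improving or degrading.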

Conclusion

Organizations often struggle to make sense of vast amounts of data, making data discovery platforms essential tools for navigating complex information landscapes. Leveraging these platforms allows businesses to gain insights that improve decision-making and boost operational efficiency. Successfully adopting these systems can give organizations a competitive advantage in today's data-driven environment.

Throughout the article, we have identified key practices and features that enhance the effectiveness of data discovery platforms. These include:

- Automated information cataloging

- Robust metadata management

- Data visualization tools

- Real-time monitoring

- Seamless integration capabilities

Additionally, implementing the following strategic best practices ensures that organizations can maximize the benefits of their data discovery initiatives:

- Setting clear objectives

- Engaging stakeholders

- Providing user training

- Establishing governance policies

- Continuously monitoring performance

Embracing data discovery platforms is more than just a tech upgrade; it’s a strategic move for organizations that want to succeed in a competitive market. By overcoming common challenges and adopting best practices, businesses can enhance their data management capabilities, foster collaboration, and drive informed decision-making. Investing in effective data discovery strategies is crucial for unlocking the potential for growth and innovation.

Frequently Asked Questions

What are data discovery platforms?

Data discovery platforms are tailored software tools that help organizations find, comprehend, and utilize their information from various sources, enabling exploration and analysis for informed decision-making.

Why are data discovery platforms important?

They are crucial for managing data effectively, allowing organizations to uncover insights that lead to better decision-making. Data-driven organizations are reported to be significantly more likely to acquire and retain customers.

How do data discovery platforms enhance competitive advantage?

By enabling efficient data management, data discovery platforms help organizations outperform competitors. Data-driven organizations are reported to be 23 times more likely to acquire customers and six times more likely to retain them.

What recent advancements have improved data discovery platforms?

Recent advancements include the incorporation of active metadata management, which automates actions based on metadata changes, streamlining workflows and enhancing user experience.

How do data discovery platforms help with compliance and governance?

They provide structured methods for governance and quality assurance, helping organizations adhere to regulations like GDPR and HIPAA, and ensuring information is handled with compliance context.

Can you provide an example of a successful application of a data discovery platform?

Red Roof Inn used analytics to launch targeted marketing campaigns based on flight cancellations and weather conditions, resulting in a 10% increase in turnover.

What features do data discovery platforms offer?

They offer tools for data visualization, metadata management, lineage tracking, and automated crawling, which enhance data observability and governance.

What is Decube and how does it stand out among data discovery platforms?

Decube is a data discovery platform that distinguishes itself with automated crawling, which keeps metadata up to date with little manual effort and strengthens data observability and governance.

What are the consequences of not leveraging data discovery systems?

Failing to use data discovery platforms can hinder an organization's ability to thrive in a competitive landscape, weakening both operational effectiveness and strategic insight.

