
4 Best Practices for Effective Alerting Automation in Data Pipelines

Discover best practices to enhance alerting automation in data pipelines for improved efficiency.

Introduction

Data pipeline management presents significant challenges that can degrade performance and delay decision-making. Effective alerting automation helps organizations improve data quality, streamline operations, and respond more quickly to critical issues. But how can teams ensure that their alerting systems are not only efficient but also adapt as data volumes, sources, and schemas change? This article outlines best practices for implementing alerting automation in data pipelines to improve operational efficiency and protect data integrity.

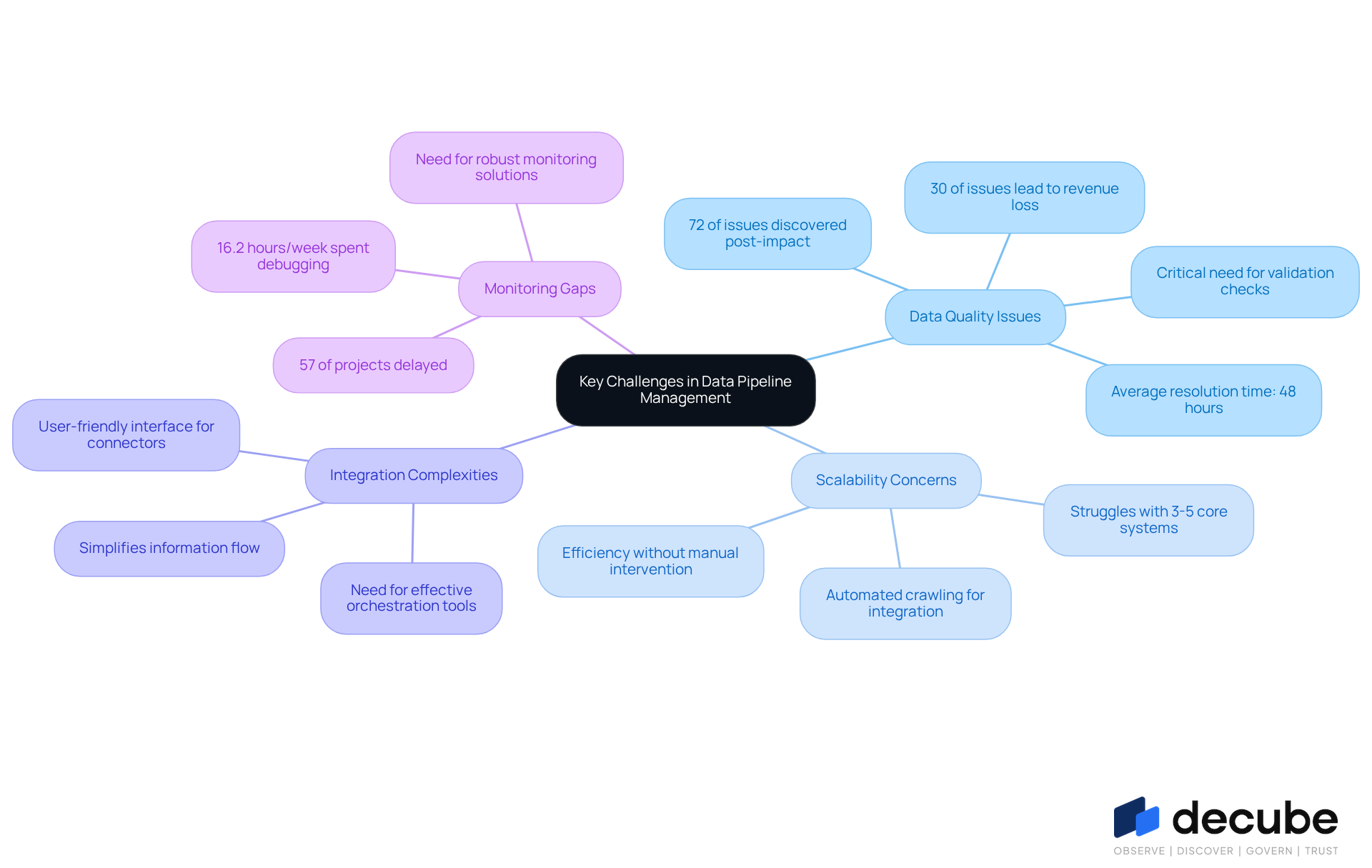

Identify Key Challenges in Data Pipeline Management

Data pipeline management is fraught with challenges that can undermine performance and reliability. Key issues include:

- Data Quality Issues: Erroneous or inconsistent data can lead to misleading insights. Research shows that 72% of quality issues are identified only after they have already affected business decisions, which highlights the need for robust validation checks. Decube addresses this with alerting automation that helps maintain data quality and surfaces problems early, supporting better decision-making.

- Scalability Concerns: Growing data volumes require pipelines that scale efficiently. Midmarket companies often struggle with three to five core systems that have never been properly reconciled, which complicates scaling. Decube's automated crawling integrates with existing data stacks, improving scalability without extensive manual intervention.

- Integration Complexities: Managing data from many sources and formats can hinder operational efficiency, so teams need effective orchestration tools to keep data flowing between systems. Decube simplifies this with a user-friendly interface and a range of connectors that make integration clear and efficient.

- Monitoring Gaps: Inadequate visibility into workflow performance can let failures go undetected. Data teams spend an average of 16.2 hours per week debugging issues, and 57% of project deliveries are delayed by these gaps. Closing them requires robust monitoring and alerting automation so that data keeps flowing reliably.

Addressing these challenges is essential for building a resilient data pipeline that supports effective alerting automation.

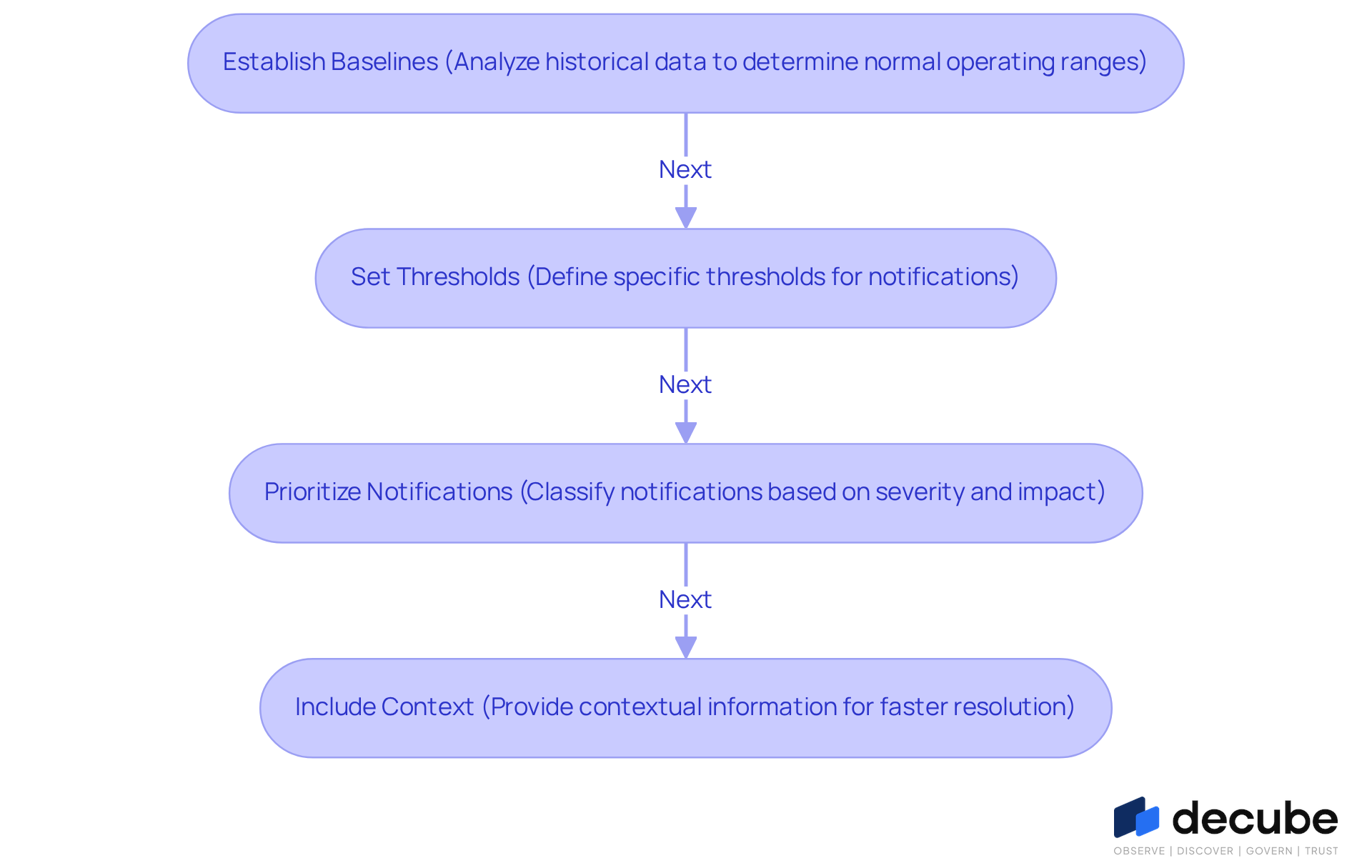

Define Clear Alerting Criteria for Automation

Without a structured approach to alerting automation, organizations risk slow responses to critical issues. Effective alerting starts with clear criteria that determine when an alert should fire. Consider the following steps:

- Establish Baselines: Analyze historical data to determine normal operating ranges for key metrics, such as data latency and error rates. For example, tracking transaction volumes over time reveals their usual patterns, which informs suitable alert thresholds.

- Set Thresholds: Define specific thresholds that trigger alerts when exceeded. For instance, if data processing time exceeds a set limit, an alert should fire. Absolute thresholds, fixed limits that do not depend on recent history, help catch significant deviations from the expected data flow.

- Prioritize Notifications: Classify alerts by severity and impact so that critical issues are addressed promptly and less significant ones are deprioritized. This lets teams resolve the most impactful issues first, improving overall operational efficiency.

- Include Context: Ensure alerts carry contextual information, such as the affected data source and its likely consequences, to enable faster resolution. Contextual alerts can significantly reduce response times by pointing teams directly to the source of the issue.
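The baseline and threshold steps above can be sketched in a few lines of Python. This is a minimal illustration, not Decube's implementation: it assumes a simple "mean plus k standard deviations" rule (k = 3 by default) for the statistical baseline, checked alongside a fixed absolute limit. The metric and its values are hypothetical.

```python
from statistics import mean, stdev


def baseline_threshold(history: list[float], k: float = 3.0) -> float:
    """Upper alert bound: historical mean plus k sample standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two historical samples to estimate a baseline")
    return mean(history) + k * stdev(history)


def should_alert(value: float, history: list[float], absolute_limit: float, k: float = 3.0) -> bool:
    """Fire if the value breaches the statistical baseline OR the fixed absolute limit."""
    return value > baseline_threshold(history, k) or value > absolute_limit


# Hypothetical history of pipeline processing times, in minutes.
processing_minutes = [12, 14, 13, 15, 12, 14, 13]
print(round(baseline_threshold(processing_minutes), 2))          # → 16.62
print(should_alert(15, processing_minutes, absolute_limit=30))   # → False
print(should_alert(18, processing_minutes, absolute_limit=30))   # → True
```

Pairing the two checks reflects the split in the steps above: the baseline adapts to each metric's normal variability, while the absolute limit still catches a breach even when the history itself has drifted upward.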

Together, these criteria make alerting automation both more effective and more responsive. Without them, teams miss opportunities for timely intervention and operational improvement.
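The prioritization and context steps can be combined in a small alert record. The sketch below is hypothetical throughout: the field names, the severity rule (how far the value exceeds its threshold, plus how many downstream assets depend on the source), and the 2x and 1.2x cut-offs are illustrative assumptions to tune for your own pipelines.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    CRITICAL = 3


@dataclass
class Alert:
    metric: str
    value: float
    threshold: float
    source: str  # affected data source, e.g. a table name
    downstream: list[str] = field(default_factory=list)  # assets that consume the source

    @property
    def severity(self) -> Severity:
        # Illustrative rule: a large breach or wide downstream impact is critical.
        ratio = self.value / self.threshold if self.threshold else float("inf")
        if ratio >= 2 or len(self.downstream) >= 3:
            return Severity.CRITICAL
        if ratio >= 1.2 or self.downstream:
            return Severity.MEDIUM
        return Severity.LOW

    def message(self) -> str:
        # Context in the message points responders at the source and its impact.
        impact = ", ".join(self.downstream) or "no known downstream assets"
        return (
            f"[{self.severity.name}] {self.metric}={self.value} exceeded "
            f"{self.threshold} on {self.source}; impacts: {impact}"
        )


def triage(alerts: list[Alert]) -> list[Alert]:
    """Order alerts so the most severe are handled first."""
    return sorted(alerts, key=lambda a: a.severity, reverse=True)


# Hypothetical alerts from three pipelines.
alerts = [
    Alert("error_rate", 0.011, 0.01, "events"),
    Alert("row_count_drop_pct", 12, 10, "customers", ["crm_sync"]),
    Alert("latency_min", 40, 17, "orders", ["revenue_dashboard"]),
]
for alert in triage(alerts):
    print(alert.message())
```

Deriving severity from impact, rather than assigning it by hand per rule, keeps prioritization consistent as new checks are added; lineage data, where available, is a natural source for the `downstream` list.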

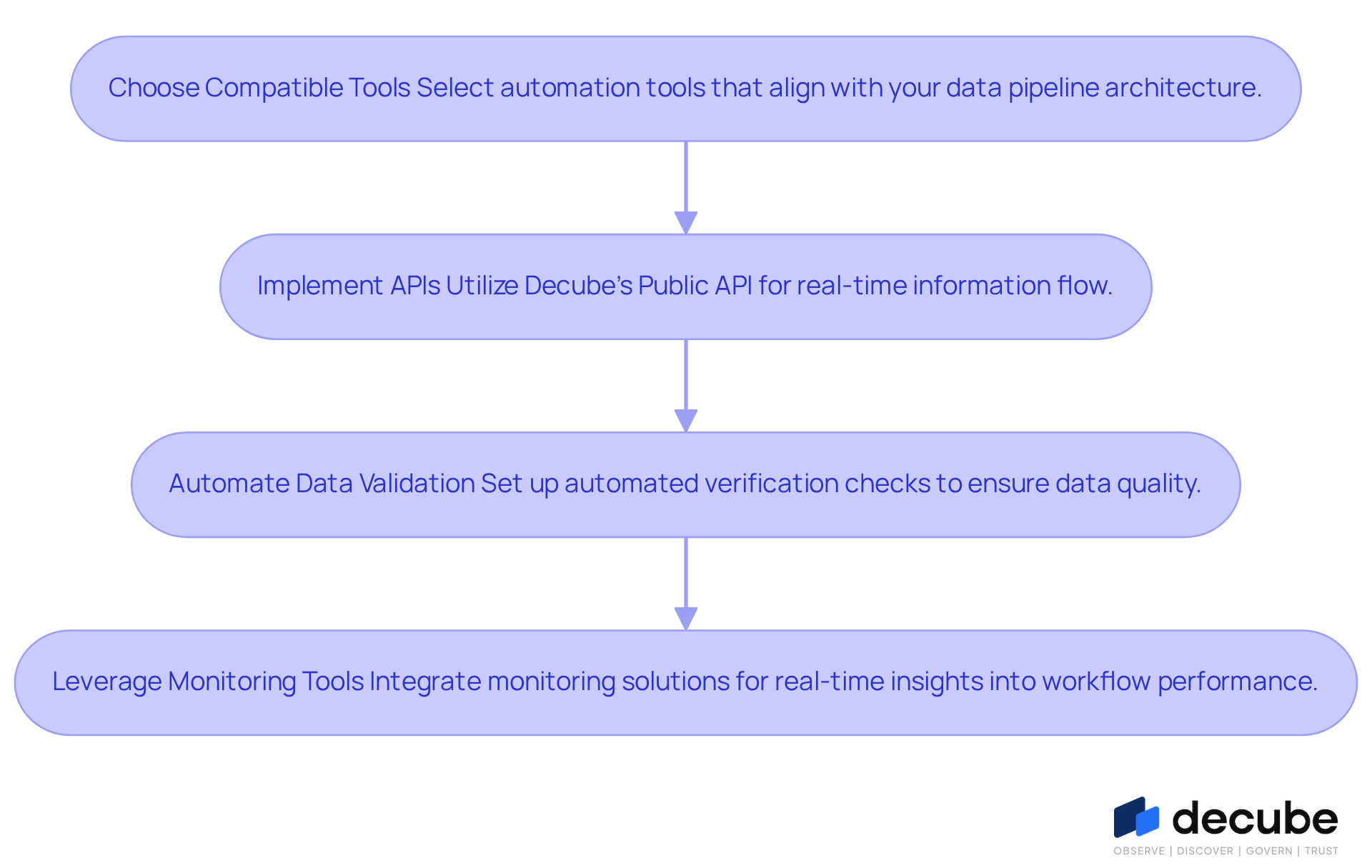

Integrate Automation Tools with Existing Data Pipelines

Integrating automation tools with existing data workflows is the next step toward better alerting automation. Key steps include:

- Choose Compatible Tools: Select automation tools that fit your data pipeline architecture and integrate easily with existing systems, which keeps operations smooth and minimizes disruption. AI-powered ETL tools can help manage these workflows and adapt them as data demands evolve.

- Implement APIs: Use Decube's Public API to connect automation tools with data sources, enabling real-time data flow and alert generation. API-driven integration improves responsiveness and reflects a broader trend toward API-based alerting automation.

- Automate Data Validation: Set up automated verification checks within the pipeline to confirm data quality before alerts are triggered. This reduces false positives and makes alerts more reliable. Research indicates that organizations can see a return on investment from AI-powered ETL tools within 6 to 12 months, underscoring their financial viability.

- Leverage Monitoring Tools: Integrate monitoring solutions that provide real-time insight into workflow performance. Decube's automated crawling feature simplifies metadata management and secure access control, helping teams respond to potential issues in time. Jez Humble's advice to confront painful tasks frequently rather than postpone them applies here: continuous monitoring surfaces problems while they are still small, which makes monitoring tools essential for sustaining workflow health.
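Gating alerts on explicit validation checks, as the Automate Data Validation step suggests, could look like the following sketch. The check functions, the dict-per-row batch format, and the `notify` callback are all hypothetical; in practice they would map onto whatever validation framework and alert channel your pipeline already uses.

```python
from typing import Callable, Optional

Check = Callable[[list], Optional[str]]  # returns an error message, or None if the check passes


def min_rows(n: int) -> Check:
    def check(rows: list) -> Optional[str]:
        return None if len(rows) >= n else f"expected at least {n} rows, got {len(rows)}"
    return check


def max_null_rate(column: str, limit: float) -> Check:
    def check(rows: list) -> Optional[str]:
        if not rows:
            return None  # an empty batch is reported by min_rows instead
        # A missing column counts as null here, via dict.get returning None.
        rate = sum(1 for r in rows if r.get(column) is None) / len(rows)
        return None if rate <= limit else f"{column} null rate {rate:.0%} exceeds {limit:.0%}"
    return check


def required_columns(columns: set) -> Check:
    def check(rows: list) -> Optional[str]:
        missing = sorted({c for r in rows for c in columns if c not in r})
        return f"missing columns: {missing}" if missing else None
    return check


def validate(rows: list, checks: list) -> list:
    """Run every check and collect the failure messages, in check order."""
    return [msg for check in checks if (msg := check(rows)) is not None]


def run_batch(rows: list, checks: list, notify: Callable[[str], None]) -> bool:
    """Alert only when at least one concrete check fails; return True if the batch is clean."""
    failures = validate(rows, checks)
    if failures:
        notify("; ".join(failures))
    return not failures


checks = [min_rows(3), max_null_rate("email", 0.5), required_columns({"id", "email"})]
good_batch = [{"id": 1, "email": "a@x.com"}, {"id": 2, "email": None}, {"id": 3, "email": "c@x.com"}]
bad_batch = [{"id": 1, "email": None}, {"id": 2}]
run_batch(good_batch, checks, notify=print)  # passes silently
run_batch(bad_batch, checks, notify=print)   # prints one combined alert
```

Combining all failures into a single alert per batch is a deliberate choice: one batch with three problems produces one message to investigate, not three separate pages.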

By integrating these tools effectively, organizations create a cohesive environment that makes their data pipelines more reliable and responsive, and the organization as a whole more agile.
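For the Implement APIs step, the general pattern is to push a structured alert to an HTTP endpoint. The sketch below uses only Python's standard library. The endpoint URL and payload fields are hypothetical placeholders, not Decube's Public API; consult Decube's own documentation for its actual endpoints and schema.

```python
import json
import urllib.request


def build_alert_request(webhook_url: str, pipeline: str, check: str, detail: str) -> urllib.request.Request:
    """Package an alert as a JSON POST request (hypothetical payload schema)."""
    payload = {"pipeline": pipeline, "check": check, "detail": detail}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_alert(request: urllib.request.Request, timeout: float = 5.0) -> int:
    """Send the request and return the HTTP status; urlopen raises on network or HTTP errors."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


request = build_alert_request(
    "https://alerts.example.com/hooks/pipeline",  # hypothetical endpoint
    pipeline="orders_daily",
    check="max_null_rate",
    detail="email null rate 67% exceeds 50%",
)
# send_alert(request) would deliver it; building the request needs no network access.
```

Separating request construction from delivery keeps the payload easy to test and lets the sending side add retries or queueing later without changing how alerts are built.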

Monitor and Adjust Alerting Systems Regularly

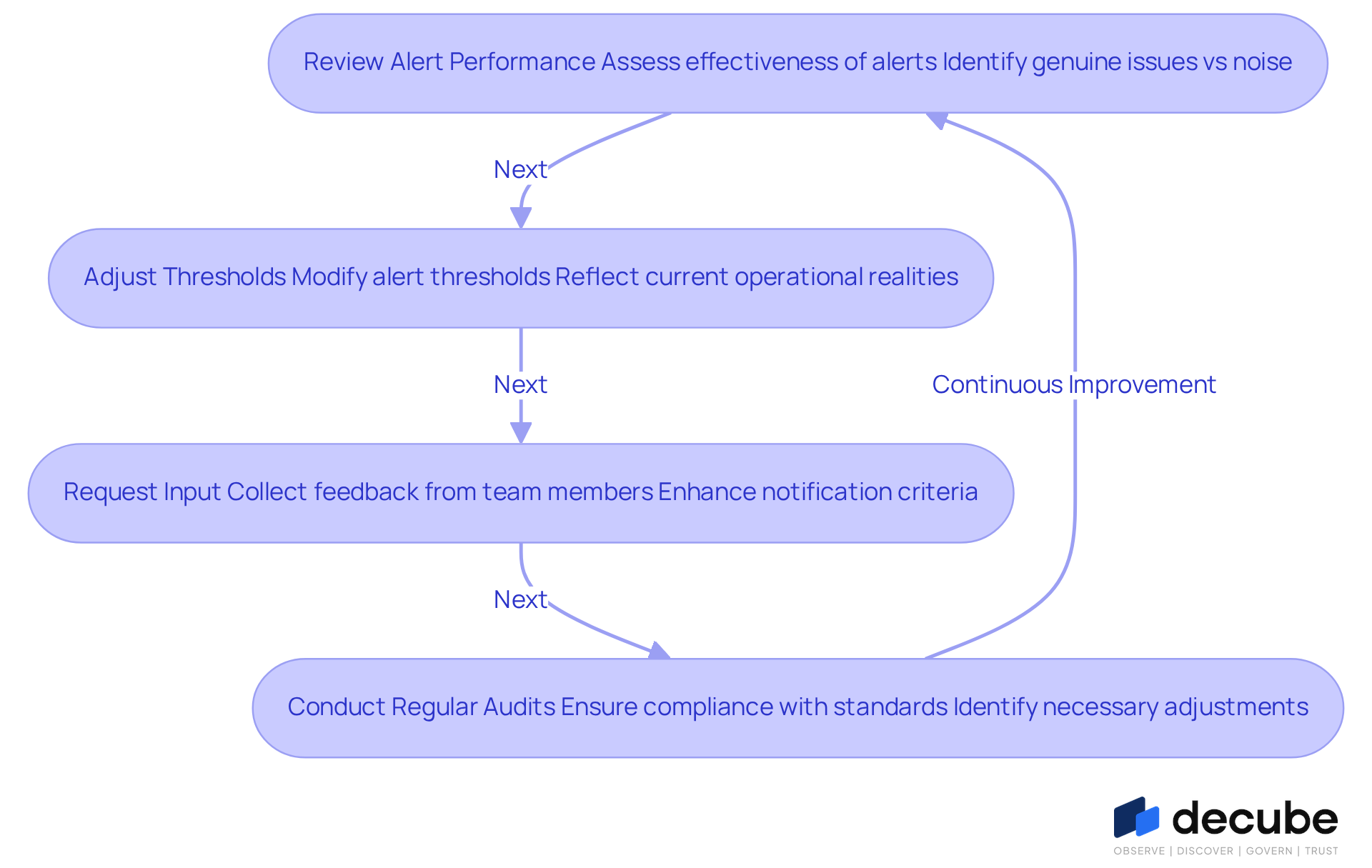

To maintain the effectiveness of alerting automation, organizations must continuously monitor and adjust their alerting systems. Key practices include:

- Review Alert Performance: Periodically assess whether alerts identify genuine issues or merely generate noise; excessive noise undermines the credibility of the alerting system. Data lineage is vital here because it traces the full journey of data through the pipeline, helping organizations verify accuracy and trace errors to their origin. One user noted that Decube's data contract module effectively virtualizes and manages monitors, simplifying complex tasks.

- Adjust Thresholds: As data patterns evolve, revisit alert thresholds so they reflect current operational realities. Schema drift, for example, accounts for 7.8% of quality incidents. Rather than monitoring every table, teams can focus on the 50 to 100 business-critical tables that drive decision-making. Decube's advanced features can further strengthen data quality and lineage oversight.

- Request Input: Collect feedback from the team members who respond to alerts to pinpoint areas for improvement, and adjust alert criteria accordingly. Feedback loops are also essential for improving the accuracy of machine-learning-based alerting. Customer testimonials note that teams use the platform daily and value its seamless integration with a range of connectors.

- Conduct Regular Audits: Audit the alerting system regularly to ensure compliance with industry standards and internal policies. Systematic audits reveal necessary adjustments and keep alerting aligned with best practices and operational goals. Decube's data contract module can streamline governance so that monitoring meets compliance needs.
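The Review Alert Performance and Request Input steps can feed each other: if responders mark each alert as actionable or not, the share of actionable alerts per rule shows which rules generate noise. This is a hypothetical sketch; the feedback format, the 0.5 precision floor, and the minimum of five alerts before judging a rule are assumptions.

```python
from collections import Counter


def alert_precision(feedback: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of alerts per rule that responders marked as actionable."""
    fired = Counter(rule for rule, _ in feedback)
    actionable = Counter(rule for rule, useful in feedback if useful)
    return {rule: actionable[rule] / count for rule, count in fired.items()}


def noisy_rules(
    feedback: list[tuple[str, bool]], min_precision: float = 0.5, min_alerts: int = 5
) -> list[str]:
    """Rules with enough history whose precision falls below the floor: candidates for retuning."""
    fired = Counter(rule for rule, _ in feedback)
    precision = alert_precision(feedback)
    return sorted(
        rule for rule, p in precision.items()
        if fired[rule] >= min_alerts and p < min_precision
    )


# Hypothetical responder feedback: (rule name, was the alert actionable?)
feedback = (
    [("latency", True)] * 4 + [("latency", False)]
    + [("null_rate", False)] * 5 + [("null_rate", True)]
    + [("schema_change", False)] * 2
)
print(alert_precision(feedback))
print(noisy_rules(feedback))  # → ['null_rate']
```

The minimum-alert guard keeps a rule that has fired only once or twice from being flagged on too little evidence; `schema_change` is excluded here for that reason.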

This proactive approach not only strengthens the reliability of alerting automation but also fosters a culture of continuous improvement in data management.
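For the Adjust Thresholds step, one way to keep thresholds current is to recompute them from a rolling window of recent observations instead of fixing them once. The sketch below swaps in a percentile rule (by default the 95th percentile of the last 30 values, plus a 10% margin); the window size, percentile, and margin are illustrative assumptions, not values the article prescribes.

```python
from collections import deque
from statistics import quantiles
from typing import Optional


class RollingThreshold:
    """Alert threshold recomputed from the most recent `window` observations."""

    def __init__(self, window: int = 30, percentile: int = 95, margin: float = 1.1):
        if not 1 <= percentile <= 99:
            raise ValueError("percentile must be between 1 and 99")
        self.values: deque = deque(maxlen=window)  # oldest values drop off automatically
        self.percentile = percentile
        self.margin = margin

    def observe(self, value: float) -> None:
        self.values.append(value)

    def threshold(self) -> Optional[float]:
        """Percentile of the window times the margin, or None until two samples exist."""
        if len(self.values) < 2:
            return None
        cut_points = quantiles(self.values, n=100)  # 99 cut points: 1st..99th percentiles
        return cut_points[self.percentile - 1] * self.margin

    def breached(self, value: float) -> bool:
        limit = self.threshold()
        return limit is not None and value > limit


# Hypothetical daily row counts (in thousands) shifting from ~10 to ~20.
rt = RollingThreshold(window=10)
for _ in range(10):
    rt.observe(10.0)
print(rt.breached(12.0))  # above ~11.0, so this breaches
for _ in range(10):
    rt.observe(20.0)      # the old regime has fully left the window
print(rt.breached(12.0))  # no longer a breach against ~22.0
```

A percentile is used instead of the mean-plus-deviation rule because it is less sensitive to a few extreme values in the window; either approach keeps thresholds tracking current data patterns.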

Conclusion

Implementing effective alerting automation in data pipelines is crucial for enhancing operational efficiency and ensuring data integrity. By addressing key challenges such as data quality, scalability, integration complexities, and monitoring gaps, organizations can create a robust framework that supports timely and accurate decision-making. Establishing clear alerting criteria, integrating appropriate automation tools, and maintaining regular monitoring are essential practices that lead to improved data pipeline management.

This article highlighted several best practices:

- Identifying and overcoming data quality issues

- Setting clear thresholds for alerts

- Integrating automation tools seamlessly with existing systems

- Regularly adjusting alerting systems based on performance

Together, these practices contribute to a more resilient data pipeline. By taking these steps, organizations can significantly reduce response times to critical issues and improve their overall operational effectiveness.

In summary, organizations that neglect proactive alerting automation risk operational inefficiencies and compromised data integrity. By adopting these best practices, organizations become more responsive to challenges and better positioned to use data strategically in decision-making, with a more agile, efficient, and reliable data pipeline as the result.

Frequently Asked Questions

What are the main challenges in data pipeline management?

The main challenges include data quality issues, scalability concerns, integration complexities, and monitoring gaps.

How do data quality issues affect decision-making?

Erroneous or inconsistent data can lead to misleading insights, and research indicates that 72% of quality issues are identified only after they have impacted business decisions. This underscores the importance of robust validation checks.

What solutions does Decube offer to address data quality issues?

Decube's alerting automation features help maintain data quality and identify problems early, supporting better decision-making.

Why are scalability concerns significant for midmarket companies?

Midmarket companies often struggle with three to five core systems that have not been properly reconciled, complicating their ability to efficiently scale data pipelines.

How does Decube address scalability challenges?

Decube's automated crawling capability integrates with existing data stacks, improving scalability without requiring extensive manual intervention.

What are the complexities associated with integration in data pipeline management?

Managing data from various sources and formats can hinder operational efficiency, which calls for effective orchestration tools to keep integration seamless.

How does Decube simplify integration?

Decube offers a user-friendly interface with a range of connectors that makes integration clear and efficient.

What are the consequences of monitoring gaps in data pipeline management?

Inadequate visibility into workflow performance can lead to undetected failures, with data teams spending an average of 16.2 hours per week debugging issues and 57% of project deliveries delayed due to these gaps.

What solutions are needed to address monitoring gaps?

Robust monitoring solutions and alerting automation are essential for operational efficiency and for keeping data flowing smoothly.

List of Sources

- Identify Key Challenges in Data Pipeline Management

  - Data Pipeline Failures Cost Enterprises $3 Million per Month, Fivetran Benchmark Finds | Fivetran (https://fivetran.com/press/data-pipeline-failures-cost-enterprises-3-million-per-month-fivetran-benchmark-finds)

  - The State of Data Quality 2024: Analysis of 1000+ Data Pipelines (https://medium.com/datachecks/the-state-of-data-quality-2024-analysis-of-1000-data-pipelines-46fb2f5e3b51)

  - The Data Problems Undermining Midmarket AI Projects In 2026 (https://mescomputing.com/news/2026/ai/the-data-problems-undermining-midmarket-ai-projects-in-2026)

- Define Clear Alerting Criteria for Automation

  - 10 Essential Metrics for Effective Data Observability | Pantomath (https://pantomath.com/blog/10-essential-metrics-for-data-observability)

  - Set Threshold Alerts for Pipeline Data Volume | Mezmo Developer Docs (https://docs.mezmo.com/telemetry-pipelines/pipeline-threshold-alerts)

  - Data pipeline monitoring: Tools and best practices (https://rudderstack.com/blog/data-pipeline-monitoring)

  - Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Integrate Automation Tools with Existing Data Pipelines

  - Continuous delivery Quotes by Jez Humble (https://goodreads.com/work/quotes/13558958-continuous-delivery)

  - Top 10 AI Data Pipeline Automation Tools in 2026: Features, Pros, Cons & Comparison (https://devopsschool.com/blog/top-10-ai-data-pipeline-automation-tools-in-2025-features-pros-cons-comparison)

  - Data Pipeline Efficiency Statistics (https://integrate.io/blog/data-pipeline-efficiency-statistics)

  - 32 of the Best AI and Automation Quotes To Inspire Healthcare Leaders | Akasa (https://akasa.com/blog/automation-quotes)

  - AI-Powered ETL Market Projections — 35 Statistics Every Data Leader Should Know in 2026 (https://integrate.io/blog/ai-powered-etl-market-projections)

- Monitor and Adjust Alerting Systems Regularly

  - Data pipeline monitoring: Tools and best practices (https://rudderstack.com/blog/data-pipeline-monitoring)

  - Data Pipeline Efficiency Statistics (https://integrate.io/blog/data-pipeline-efficiency-statistics)

  - Data Quality Alerts: Setup, Best Practices & Reducing Fatigue (https://atlan.com/know/data-quality-alerts)

  - Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

  - 19 Inspirational Quotes About Data | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)
