
10 Essential Database Dictionary Examples for Data Engineers

Explore diverse database dictionary examples essential for data engineers' observability and governance.

Introduction

In the dynamic field of data engineering, the importance of robust database dictionaries is paramount. These critical tools not only facilitate efficient data management but also improve observability and governance, ensuring data accuracy and accessibility. As organizations face the challenge of managing increasing volumes of information, a pressing question emerges: how can data engineers effectively utilize various database solutions to enhance their workflows? This article examines ten essential database dictionary examples, highlighting their distinctive features and the significant advantages they bring to contemporary data practices.

Decube: A Comprehensive Data Dictionary for Observability and Governance

Decube functions as a comprehensive data dictionary, integrating observability and governance features that are vital for data engineers. It offers a centralized repository for metadata, ensuring that data assets are thoroughly documented and readily accessible. Key functionalities include:

- Automated crawling for seamless metadata management, which features auto-refreshing capabilities that eliminate the need for manual updates.

- Column-level lineage mapping.

- Automated policy management.

These tools help organizations maintain high data quality and comply with standards and regulations such as SOC 2 and GDPR. This integrated approach not only enhances data integrity but also facilitates informed decision-making across various business functions. Additionally, Decube's approval flow for accessing or editing data reinforces access control, while its business glossary initiative fosters collaboration and domain-level ownership among teams.

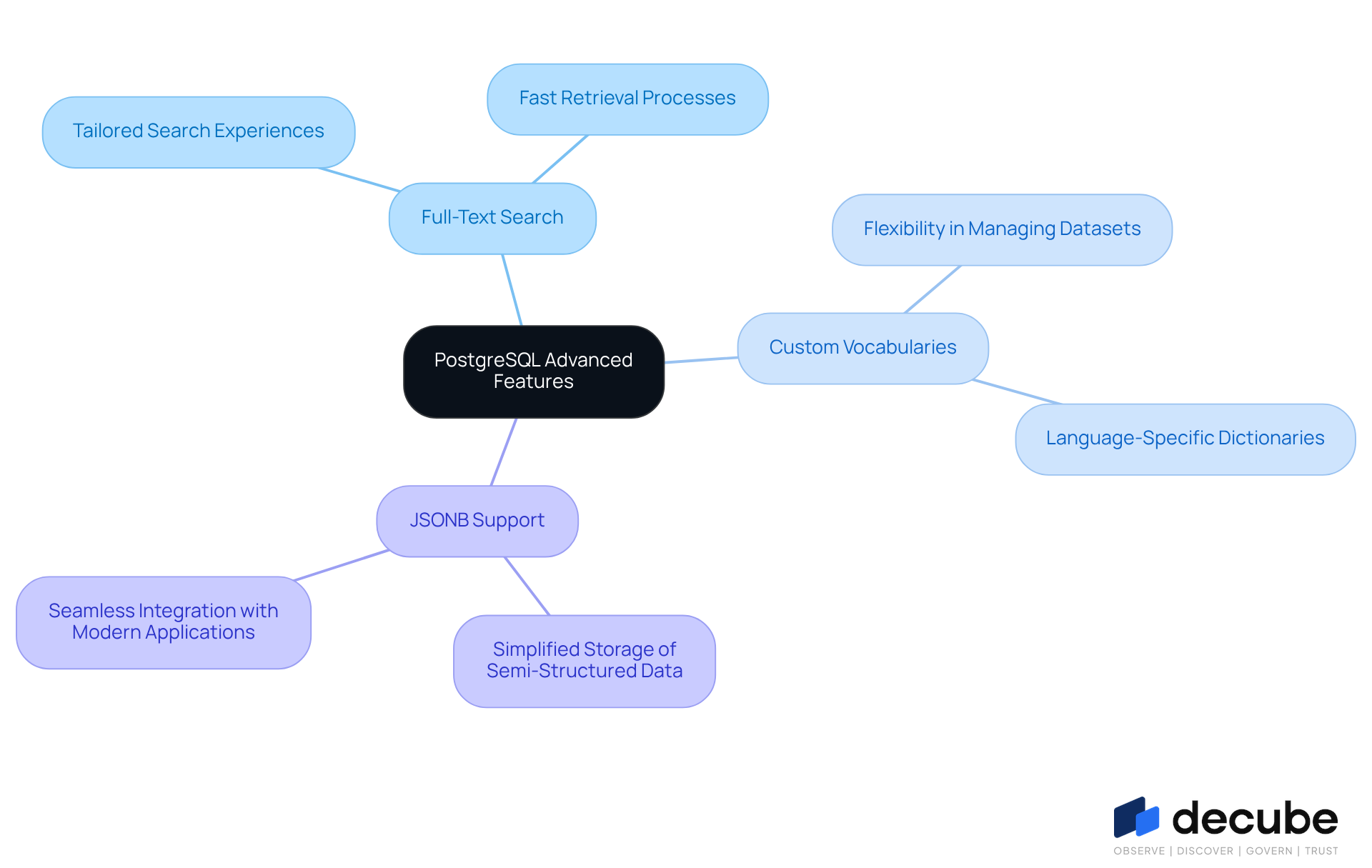

PostgreSQL: Leveraging Advanced Features in Database Dictionaries

PostgreSQL offers advanced features that significantly enhance its usefulness in this context, including full-text search and custom text search dictionaries. These features enable engineers to create tailored search experiences, thereby improving retrieval processes. The ability to define custom dictionaries for specific languages or applications provides greater flexibility in managing diverse datasets. Additionally, PostgreSQL's JSONB data type simplifies the storage of semi-structured data, facilitating integration with modern applications.

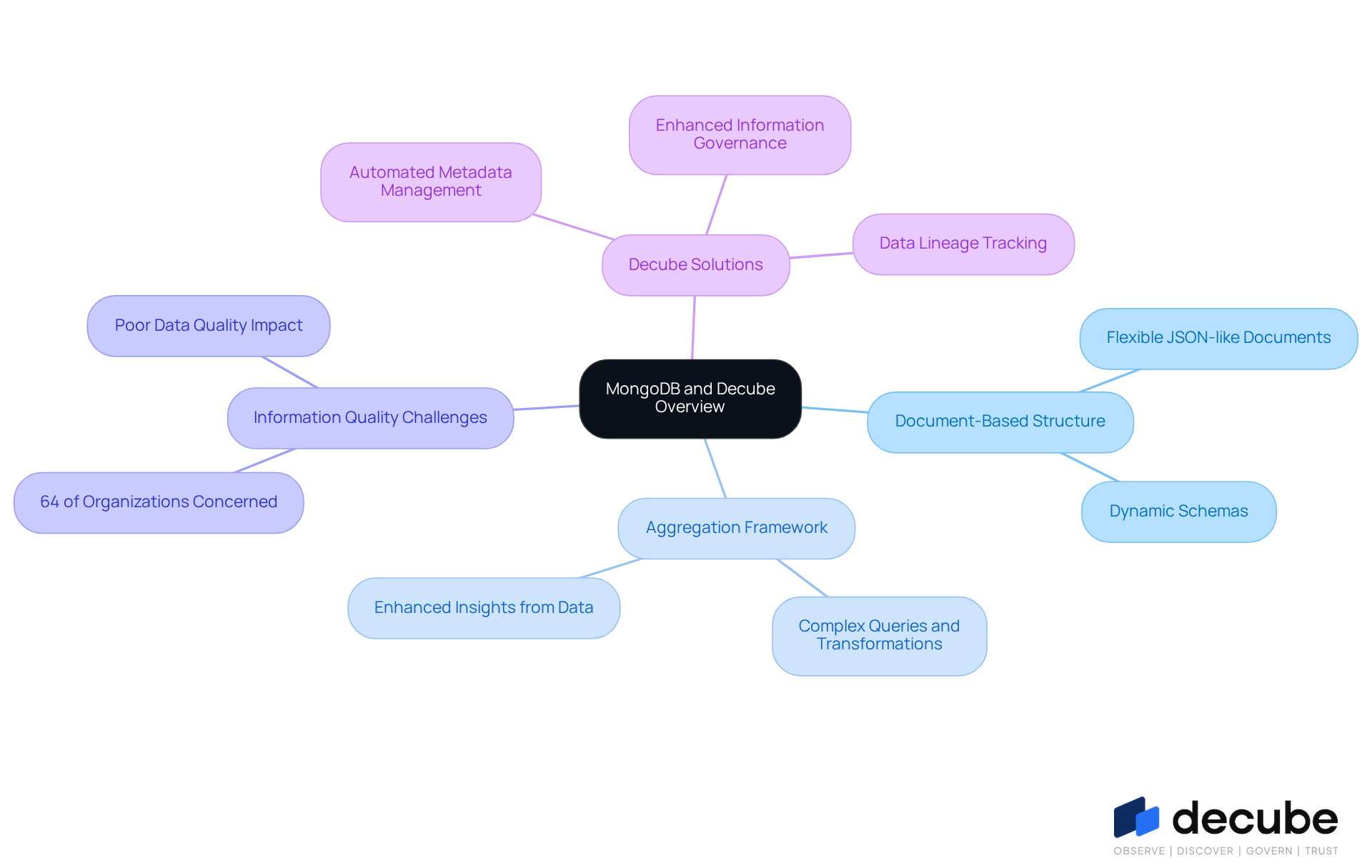

MongoDB: Understanding Document-Based Database Dictionaries

MongoDB is a document-oriented database that stores data in flexible, JSON-like documents and accommodates dynamic schemas. This adaptability is crucial for data engineers, allowing them to respond swiftly to evolving data requirements without the constraints of a rigid schema. The database dictionary for MongoDB includes vital metadata about collections, documents, and indexes, providing a comprehensive overview of the database's architecture.
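
To make the idea of a dictionary for schemaless collections more concrete, here is a minimal sketch that infers field paths, observed types, and how often each field appears from a sample of documents. Plain Python dicts stand in for MongoDB documents; the function name `infer_field_dictionary` and the sample data are hypothetical, and a real setup would read the sample from a collection through a driver.

```python
from collections import defaultdict


def infer_field_dictionary(documents):
    """Summarize the fields observed in a sample of JSON-like documents.

    Returns {field_path: {"types": sorted type names, "present_in": count}}.
    Nested documents are flattened with dot notation, e.g. "address.city".
    """
    types_seen = defaultdict(set)
    counts = defaultdict(int)

    def walk(doc, prefix=""):
        for key, value in doc.items():
            path = f"{prefix}{key}"
            if isinstance(value, dict):
                walk(value, prefix=f"{path}.")
            else:
                types_seen[path].add(type(value).__name__)
                counts[path] += 1

    for doc in documents:
        walk(doc)

    return {
        path: {"types": sorted(types_seen[path]), "present_in": counts[path]}
        for path in sorted(types_seen)
    }


# Hypothetical sample: later documents add fields and change a field's type.
sample = [
    {"_id": 1, "name": "Ada", "address": {"city": "London"}},
    {"_id": 2, "name": "Lin", "age": "41", "address": {"city": "Oslo", "zip": 150}},
    {"_id": 3, "name": "Sam", "age": 29},
]

field_dictionary = infer_field_dictionary(sample)
for path, info in field_dictionary.items():
    print(f"{path}: types={info['types']} present_in={info['present_in']}/{len(sample)}")
```

A field that reports more than one type, or that appears in only part of the sample, is the kind of drift a dictionary for a dynamic schema is meant to surface.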

MongoDB's aggregation framework enables engineers to execute complex queries and transformations, significantly enhancing their capacity to derive insights from large collections. Nevertheless, challenges persist: 64% of organizations identify poor data quality as their primary data integrity concern, underscoring the need for robust management solutions.

Decube's automated crawling feature addresses this issue by facilitating metadata management and secure access control, thereby enhancing data observability and governance. By integrating Decube, data engineers can keep their data accurate and consistent, streamlining collaboration across teams and improving overall data quality.

Furthermore, Decube's lineage feature improves clarity within data pipelines, enabling teams to track data flow and maintain trust in their data. With the market expected to reach USD 105.40 billion by 2026, MongoDB's flexible schemas and advanced features, combined with Decube's capabilities, position them as a preferred choice for modern data engineering practices.

MySQL: The Classic Choice for Database Dictionary Management

MySQL's data dictionary acts as a centralized repository for metadata about objects such as tables, columns, and indexes. MySQL 8.0 significantly enhanced this feature by storing the dictionary in transactional tables, which improves both performance and reliability. Data engineers can query MySQL's INFORMATION_SCHEMA to inspect this metadata, yielding insights into database structure and usage patterns. This capability is vital for maintaining data integrity and adhering to governance policies, enabling organizations to manage their assets efficiently and comply with regulatory requirements.
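
As a rough illustration of querying INFORMATION_SCHEMA, the sketch below groups column metadata by table for one schema. It assumes a DB-API cursor from a MySQL driver that uses `%s` placeholders (mysql-connector-python and PyMySQL both do) and that rows come back as plain tuples; the function name `describe_schema` is hypothetical.

```python
from collections import defaultdict

# TABLE_NAME, COLUMN_NAME, DATA_TYPE, IS_NULLABLE, COLUMN_KEY and
# ORDINAL_POSITION are standard columns of INFORMATION_SCHEMA.COLUMNS.
COLUMNS_QUERY = """
    SELECT TABLE_NAME, COLUMN_NAME, DATA_TYPE, IS_NULLABLE, COLUMN_KEY
    FROM INFORMATION_SCHEMA.COLUMNS
    WHERE TABLE_SCHEMA = %s
    ORDER BY TABLE_NAME, ORDINAL_POSITION
"""


def describe_schema(cursor, schema_name):
    """Return {table: [column descriptions]} for one schema.

    `cursor` is any DB-API cursor connected to MySQL (an assumption of
    this sketch); rows are expected as tuples in SELECT order.
    """
    cursor.execute(COLUMNS_QUERY, (schema_name,))
    tables = defaultdict(list)
    for table, column, data_type, nullable, key in cursor.fetchall():
        tables[table].append(
            {
                "column": column,
                "type": data_type,
                "nullable": nullable == "YES",
                "primary_key": key == "PRI",
            }
        )
    return dict(tables)
```

With a live connection, a call such as `describe_schema(conn.cursor(), "sales")` would return one entry per table, where `sales` is a placeholder schema name.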

Integrating Decube's unified data trust platform can extend these capabilities further. Decube offers quality monitoring through machine learning-powered tests and intelligent alerts, helping data remain accurate and consistent. Its integration with existing data stacks, including MySQL, lets engineers monitor quality, detect issues early, and maintain transparency across pipelines. This combination of MySQL's metadata management with Decube's capabilities presents a comprehensive solution for governance and observability.

Oracle Database: Enterprise Solutions in Database Dictionaries

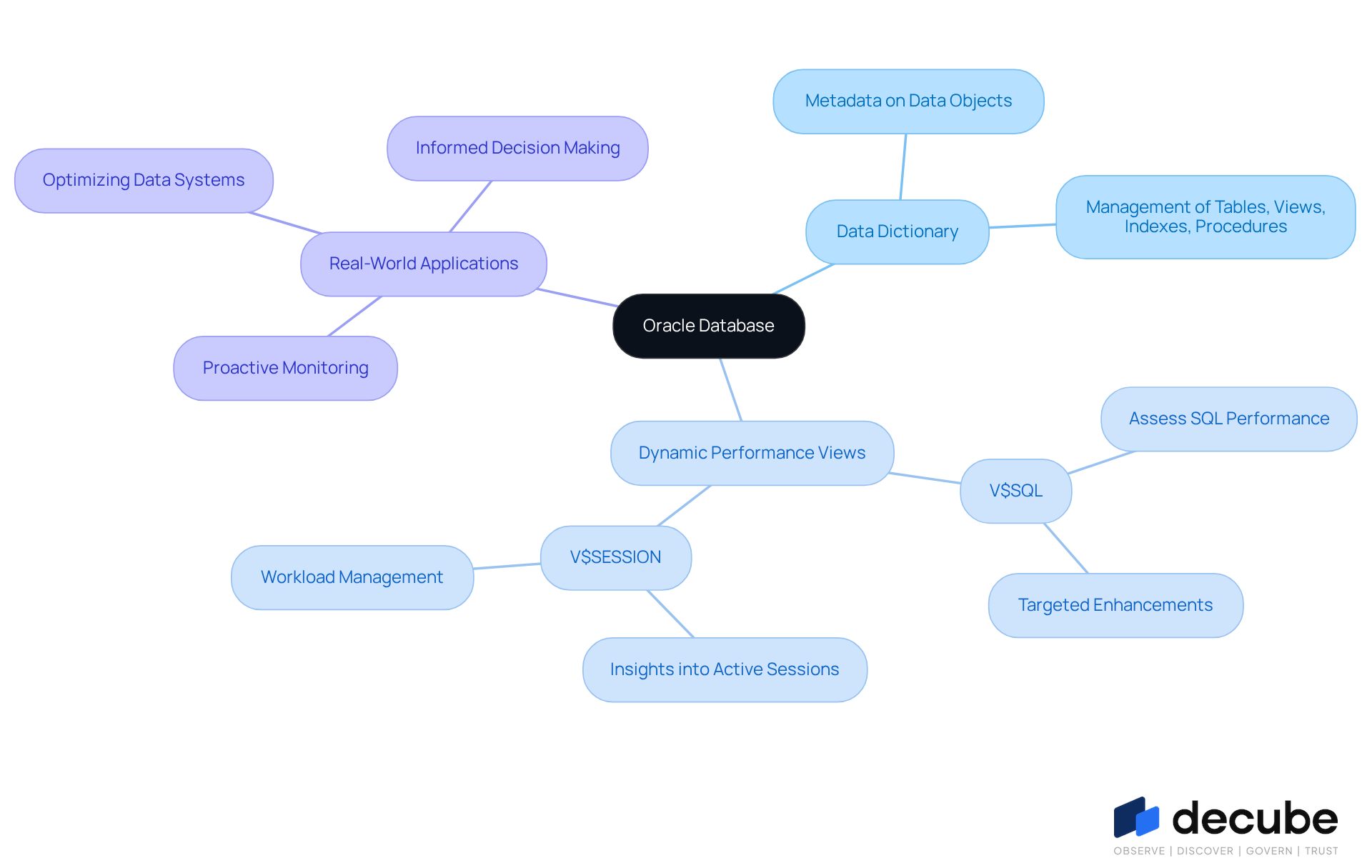

Oracle Database features a comprehensive data dictionary that provides detailed metadata about all database objects, including tables, views, indexes, and stored procedures, which is essential for effective management. Data engineers can also use Oracle's dynamic performance views to monitor system performance and optimize queries. In 2023, Oracle Cloud Infrastructure (OCI) introduced new services, including advanced machine learning features and enhanced security, which further augment the capabilities of these performance views.

Real-world applications of dynamic performance views illustrate their effectiveness in optimizing data systems. For instance, by analyzing the V$SQL view, analysts can assess the performance of SQL statements, enabling targeted enhancements that lead to faster query responses and reduced resource consumption. Additionally, the V$SESSION view provides insights into active sessions, aiding professionals in managing workloads and ensuring optimal database performance.
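
The V$SQL analysis described above might look like the following sketch, which ranks statements by average elapsed time per execution. It assumes a DB-API cursor (for example from the python-oracledb driver) opened by a user with permission to read V$SQL; the function name `slowest_statements` and the default limit are hypothetical.

```python
# V$SQL reports ELAPSED_TIME in microseconds.
TOP_SQL_QUERY = """
    SELECT sql_id, executions, elapsed_time, sql_text
    FROM v$sql
    WHERE executions > 0
    ORDER BY elapsed_time / executions DESC
"""


def slowest_statements(cursor, limit=5):
    """Return the statements with the highest average elapsed time.

    `cursor` is assumed to be a DB-API cursor on an Oracle connection.
    Only the first `limit` rows of the ordered result are fetched.
    """
    cursor.execute(TOP_SQL_QUERY)
    report = []
    for sql_id, executions, elapsed_us, sql_text in cursor.fetchmany(limit):
        report.append(
            {
                "sql_id": sql_id,
                "executions": executions,
                # Convert microseconds per execution to milliseconds.
                "avg_ms": round(elapsed_us / executions / 1000, 2),
                "sql_text": sql_text,
            }
        )
    return report
```

Ranking by average rather than total elapsed time highlights individually slow statements; ordering by `elapsed_time` alone would instead surface statements that are cheap per call but run very often.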

Expert insights highlight the strategic advantage of leveraging these dynamic performance views. They facilitate proactive monitoring and empower engineers to make informed decisions that enhance overall system efficiency. In 2024, Oracle's cloud services and license support segment generated nearly US$39.4 billion in revenue, underscoring the significance of Oracle's solutions in the current market landscape. The benefits extend beyond performance; Oracle's robust security features, along with its database dictionary, support compliance with industry regulations while managing large volumes of data. This comprehensive approach positions Oracle Database as a strong option for organizations striving to maintain high data quality and operational efficiency. Furthermore, updates to dynamic performance views in 2026 reflect Oracle's commitment to continuous improvement in its data management solutions.

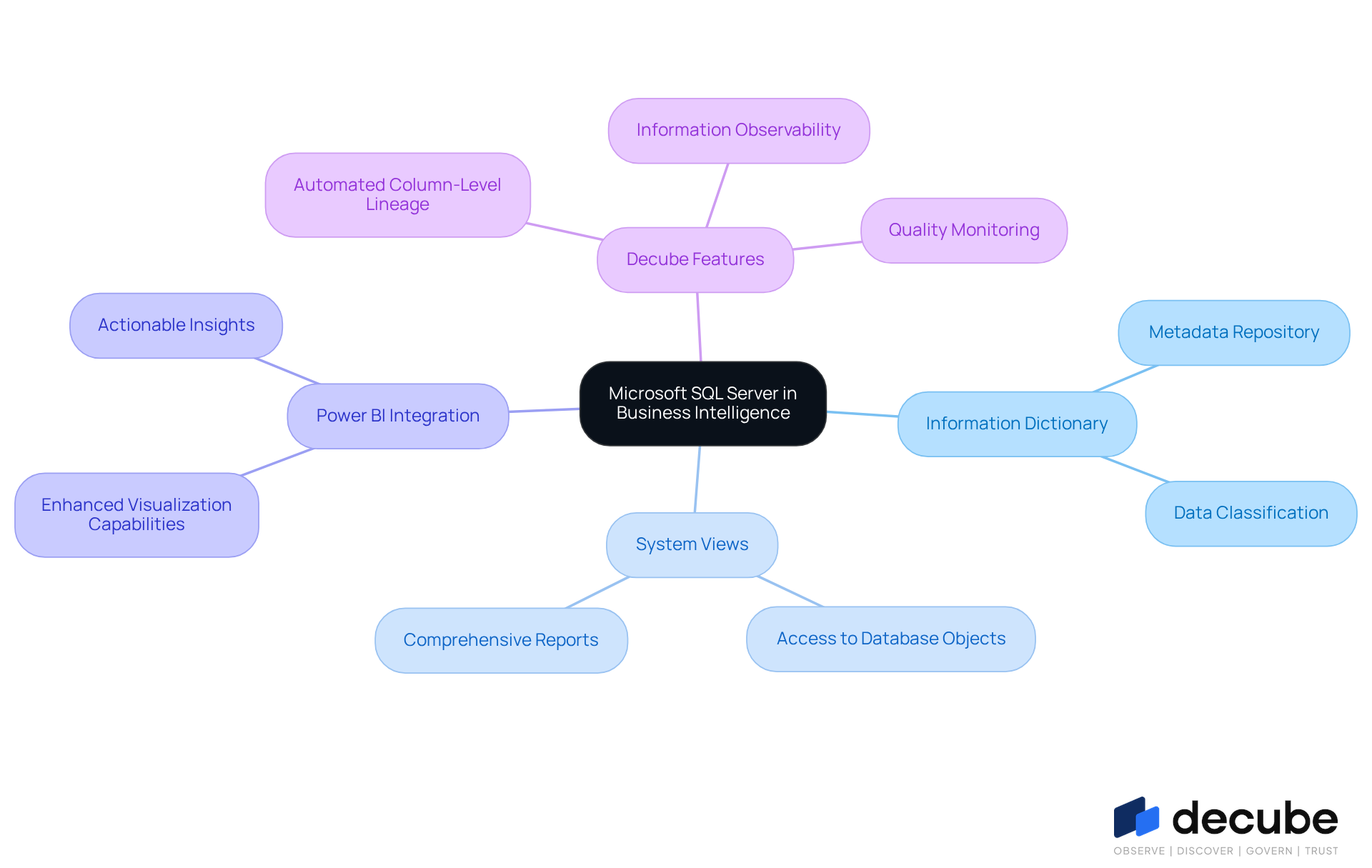

Microsoft SQL Server: Integrating Database Dictionaries with Business Intelligence

Microsoft SQL Server's data dictionary serves as a vital repository of metadata, essential for effective business intelligence applications. Data engineers can use SQL Server's system views to access detailed information about database objects, which facilitates the creation of comprehensive reports and dashboards. The integration of SQL Server with Power BI significantly enhances visualization capabilities, enabling organizations to derive actionable insights from their data. Furthermore, SQL Server's data classification features are crucial for identifying and protecting sensitive data, supporting compliance with governance policies. This organized approach not only simplifies data management but also helps organizations maintain high data quality and trust.

Incorporating Decube's automated column-level lineage and data observability features can further augment SQL Server's capabilities. Users have noted that Decube's seamless integration with existing data stacks, such as MySQL, greatly enhances quality monitoring and incident detection. By using Decube alongside SQL Server, engineers can keep their data accurate and consistent, ultimately supporting improved decision-making processes.

SQLite: A Lightweight Database Dictionary for Mobile Applications

SQLite is a self-contained, serverless database engine, particularly well-suited for mobile applications. Its database dictionary offers essential metadata about tables, columns, and data types, enabling developers to manage data with precision. The lightweight architecture of SQLite allows for rapid setup and deployment, making it a preferred choice among mobile developers. Notably, SQLite has been integrated into the Android OS since 2006 and included in Apple's iOS in 2007, highlighting its importance in mobile development.
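
The metadata mentioned above can be read with Python's built-in `sqlite3` module. This is a minimal sketch against an in-memory database; the `readings` table and its index are hypothetical examples, while `sqlite_master` and `PRAGMA table_info` are standard SQLite features.

```python
import sqlite3

# An in-memory database keeps the sketch self-contained.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE readings ("
    "id INTEGER PRIMARY KEY, sensor TEXT NOT NULL, value REAL, taken_at TEXT)"
)
conn.execute("CREATE INDEX idx_readings_sensor ON readings (sensor)")

# sqlite_master lists every table and index in the database file.
objects = conn.execute(
    "SELECT type, name FROM sqlite_master ORDER BY type, name"
).fetchall()

# PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column.
columns = [
    {"name": name, "type": col_type, "not_null": bool(notnull), "primary_key": bool(pk)}
    for _cid, name, col_type, notnull, _default, pk in conn.execute(
        "PRAGMA table_info(readings)"
    )
]

print(objects)
for column in columns:
    print(column)
conn.close()
```

Because the dictionary lives inside the database file itself, the same two queries work unchanged on a device-local database, which suits the mobile and embedded use cases discussed here.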

Furthermore, SQLite is used in Internet of Things (IoT) devices for storing sensor data and device configurations, demonstrating its reliability and compact size. By leveraging SQLite's capabilities, engineers can implement local storage solutions that enhance application performance while ensuring data integrity. This architecture is particularly beneficial in mobile data management, where efficiency and reliability are paramount.

As OTW Master emphasizes, SQLite's role in the digital landscape is poised to expand, making it a vital topic of study for those involved in data management. SQLite's widespread adoption underscores its relevance in the industry.

Redis: Utilizing Key-Value Stores in Database Dictionaries

Redis is an in-memory key-value store that provides rapid access to data along with robust storage capabilities. Its data dictionary facilitates effective management of key-value pairs, making it well suited to caching and real-time analytics. Engineers can leverage Redis's data structures, such as hashes and lists, to optimize both retrieval and storage. Furthermore, Redis's ability to persist data helps organizations maintain data integrity while retaining the speed and flexibility of in-memory processing.

Cassandra: Distributed Database Dictionaries for Scalability

Cassandra is a distributed NoSQL database designed to manage large volumes of data across multiple nodes, establishing itself as a cornerstone of modern data architectures. Its schema metadata serves as a vital source of information, detailing tables, columns, and data types. This structure enables data engineers to navigate and manage data effectively within a distributed environment.

Unlike a conventional database dictionary that merely lists technical fields and definitions, a governed dictionary for Cassandra data also encompasses business context, ownership, lineage, quality indicators, and governance policies. This approach transforms metadata from being merely recorded to being actively useful. The platform's horizontal scaling capabilities allow organizations to expand their data infrastructure, accommodating increasing data demands without sacrificing performance.

For instance, companies like Netflix, which processes petabytes of data in Cassandra, and Apple, which operates over 75,000 nodes, rely on Cassandra's architecture for rapid access and reliability. Furthermore, automated metadata harvesting, query and pipeline parsing, and scheduled crawls keep assets up to date, while steward workflows and change notifications maintain the accuracy of definitions and ownership over time.

When scaling Cassandra, it is crucial to ensure that network and hardware resources are sufficient and to add nodes gradually so that individual nodes are not overloaded. By using Cassandra's advanced features, data engineers can implement effective management strategies that uphold data integrity and availability, ultimately driving operational efficiency and supporting business growth.

Apache Hive: Managing Large Datasets with Database Dictionaries

Apache Hive is a data warehousing solution built on Hadoop, designed for querying and managing large datasets. Its database dictionary plays a crucial role, giving data engineers essential metadata about tables, columns, and data types. By integrating with Hadoop, Hive supports the efficient processing of extensive datasets, making it a valuable tool for organizations seeking to derive insights from vast amounts of data. Additionally, data engineers can use Hive's capabilities to implement effective data governance strategies, supporting data quality and compliance throughout their operations.

Conclusion

In conclusion, the importance of database dictionaries in data engineering is paramount. These tools are vital for enhancing data management, ensuring that information remains organized, accessible, and compliant with industry standards. By utilizing various database dictionaries, data engineers can streamline their workflows, elevate data quality, and support informed decision-making within organizations.

Key examples such as:

- Decube

- PostgreSQL

- MongoDB

- MySQL

- Oracle

- Microsoft SQL Server

- SQLite

- Redis

- Cassandra

- Apache Hive

demonstrate the diverse functionalities and benefits of different database dictionaries. Each platform presents unique features tailored to specific data management requirements, ranging from Decube's enhanced observability and governance to the flexibility offered by MongoDB’s document-based approach and the robust performance monitoring capabilities of Oracle.

As the field of data engineering evolves, adopting these database dictionary solutions is essential for organizations aiming to uphold high data quality and operational efficiency. Data engineers should actively explore and implement these tools to fully leverage their data assets while adhering to best practices in governance and compliance. The future of data management hinges on the effective application of these dictionaries, facilitating informed decision-making and strategic growth.

Frequently Asked Questions

What is Decube and what are its main features?

Decube is a comprehensive data dictionary designed for observability and governance, providing a centralized repository for metadata. Its key features include automated crawling for metadata management, column-level lineage mapping, and automated policy management.

How does Decube help organizations maintain data quality?

Decube helps organizations maintain high data quality and comply with standards and regulations such as SOC 2 and GDPR by providing tools for metadata management and access control and by facilitating informed decision-making.

What role does the approval flow in Decube play?

The approval flow in Decube reinforces access control by managing who can access or edit information, ensuring that data management is secure and regulated.

What is the business glossary initiative in Decube?

The business glossary initiative in Decube fosters collaboration and domain-level ownership among teams, enhancing communication and understanding of information across the organization.

What advanced features does PostgreSQL offer for database dictionaries?

PostgreSQL offers advanced features like full-text search and custom text search dictionaries, allowing engineers to create tailored search experiences and manage diverse datasets effectively.

How does PostgreSQL handle semi-structured data?

PostgreSQL supports the JSONB data type, which simplifies the storage of semi-structured data and facilitates integration with modern applications.

What is the primary function of MongoDB in relation to database dictionaries?

MongoDB is a document-oriented database that stores data in flexible, JSON-like documents, accommodating dynamic schemas and allowing quick responses to evolving data requirements.

What capabilities does MongoDB provide for data analysis?

MongoDB's aggregation framework enables engineers to execute complex queries and transformations, enhancing their ability to derive valuable insights from extensive collections of data.

What data quality challenges do organizations face, and how does Decube address them?

64% of organizations identify poor data quality as their primary data integrity concern. Decube addresses this by providing automated crawling for metadata management and secure access control, enhancing data observability and governance.

How does Decube's lineage feature contribute to information clarity?

Decube's lineage feature allows teams to track data flow within data pipelines, enhancing clarity and trust in the data by showing how it moves through the system.
