
Master the Data Curation Process: Steps, Tools, and Solutions

Master the data curation process with essential steps and tools for improved data quality and decision-making.

Introduction

The surge in data generation across industries has made effective data curation more critical than ever. This guide outlines the essential steps, tools, and solutions necessary for mastering the data curation process, enabling organizations to transform raw information into strategic assets. However, as the landscape becomes increasingly complex, organizations face key challenges in ensuring data integrity and usability. Exploring these intricacies highlights not only the importance of structured curation but also the innovative technologies that can streamline the journey from data chaos to clarity.

Define Data Curation and Its Importance

The data curation process is essential because it involves organizing, maintaining, and enhancing content to ensure its accuracy, accessibility, and usability throughout its lifecycle. Key activities in the data curation process include:

- Information identification

- Cleansing

- Transformation

- Preservation

These steps are vital for maintaining information integrity and relevance, which are crucial for informed decision-making within organizations.

Recent trends in data curation practices highlight the integration of advanced analytics and AI-driven tools to streamline these processes. Organizations are increasingly adopting automated solutions, such as Decube's automated crawling feature, which simplifies metadata management by automatically refreshing information once sources are connected. This feature not only enhances information integrity and adherence to industry standards such as SOC 2, ISO 27001, HIPAA, and GDPR but also safeguards privacy and security.

The impact of efficient information management on decision-making is significant. Organizations with a robust data curation process tend to see significantly improved information quality, leading to better business outcomes. As Carly Fiorina aptly stated, "The goal is to transform information into knowledge, and knowledge into insight." This transformation is crucial for organizations aiming to leverage information as a strategic asset. Furthermore, as Jonathan Rosenberg noted, "Information is the sword of the 21st century; those who handle it effectively are the samurai," underscoring the strategic importance of mastering information management.

Real-world examples illustrate the effectiveness of information organization. Users such as Piyush P. have praised Decube's automated column-level lineage feature, which enables business users to swiftly identify issues within reports and dashboards, thereby enhancing operational efficiency and collaboration. By prioritizing information management, organizations can not only improve their information quality but also empower their teams to make evidence-based decisions that foster success.

Outline the Steps of the Data Curation Process

The data curation process comprises several essential steps that organizations should follow to ensure high-quality data management:

- Information Identification: Begin by identifying the sources of information relevant to your organization. Assess the quality and relevance of this information to ensure alignment with operational needs. Effective information identification significantly enhances decision-making and operational efficiency.

- Cleansing: This phase involves cleaning the information by removing duplicates, correcting errors, and normalizing formats. Information cleansing is crucial for maintaining accuracy; low-quality information can jeopardize usability and lead to costly errors. Organizations that invest in information cleansing typically see substantial improvements in integrity and operational efficiency.

- Transformation: Convert the information into a format suitable for analysis. This may include aggregating, normalizing, or enriching the information to maximize its value. Proper transformation ensures that the information is compatible with various analytical tools and applications.

- Metadata Creation: Enhance datasets by adding metadata, which improves discoverability and usability. Metadata should include details about the content's origin, structure, and context, facilitating user understanding and effective utilization of the information.

- Preservation: Develop strategies for the long-term storage and protection of information, ensuring it remains accessible and usable over time. This is particularly important as information can lose value if not properly maintained.

- Ongoing Oversight: Regularly evaluate the information for quality and relevance, implementing necessary modifications to maintain its integrity. Ongoing monitoring helps prevent information hoarding and ensures that only valuable information is retained.
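
The cleansing and metadata steps above can be illustrated with a minimal Python sketch. It uses only the standard library and is not tied to any particular curation tool. The field names, the sample records, the two date formats, and the `crm_export.csv` source name are all assumptions made for illustration.

```python
from datetime import date, datetime

# Hypothetical raw records: one duplicate, inconsistent casing, mixed date formats.
RAW = [
    {"email": " Ana@Example.com ", "signup": "2024-01-05"},
    {"email": "ana@example.com", "signup": "05/01/2024"},
    {"email": "bo@example.com", "signup": "2024-02-10"},
]

# Assumed date formats present in the source (ISO and day/month/year).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def normalize_date(value: str) -> str:
    """Return the date in ISO 8601 form, trying each assumed format in turn."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {value!r}")


def cleanse(records):
    """Normalize formats and drop duplicates, keeping the first record per email."""
    seen = set()
    cleaned = []
    for rec in records:
        email = rec["email"].strip().lower()
        if email in seen:
            continue  # duplicate of an earlier record
        seen.add(email)
        cleaned.append({"email": email, "signup": normalize_date(rec["signup"])})
    return cleaned


def describe(records, source: str) -> dict:
    """Build a small metadata record covering origin, structure, and context."""
    return {
        "source": source,
        "columns": sorted(records[0].keys()) if records else [],
        "row_count": len(records),
        "curated_on": date.today().isoformat(),
    }


cleaned = cleanse(RAW)
metadata = describe(cleaned, source="crm_export.csv")  # hypothetical source name
print(cleaned)
print(metadata)
```

Keeping the first record per normalized email is one possible deduplication policy; a real pipeline might instead merge duplicates or keep the most recent one.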

By adhering to these steps, organizations can establish a robust data curation process that enhances quality and supports informed decision-making. For example, companies like Tide have successfully implemented these practices, resulting in improved compliance and operational efficiency.

Identify Tools and Technologies for Data Curation

A variety of tools and technologies play a crucial role in facilitating the data curation process:

- Cataloging Tools: Solutions such as Alation and Collibra are instrumental in cataloging assets, enhancing discoverability and management. Alation is particularly noted for its broad integration ecosystem, while Collibra excels in environments requiring rigorous governance and compliance.

- Information Integrity Tools: Tools like Talend and Informatica are essential for information cleansing and integrity monitoring. These platforms assist organizations in preserving information integrity. Studies indicate that up to 80% of information practitioners' time is spent addressing poor quality. Utilizing these tools can significantly reduce the cost of errors in information, as it is estimated that it costs one dollar to verify information, ten dollars to rectify it afterward, and one hundred dollars if no action is taken.

- Metadata Management Tools: Tools such as Microsoft Azure Data Catalog and Apache Atlas aid in managing metadata, which is crucial for improving the usability and discoverability of information. Metadata management is shifting towards integrated solutions that embed metadata into analytics workflows, making it actionable and trusted.

- Data Integration Platforms: Platforms like Fivetran and Stitch enable seamless integration of information from multiple sources, streamlining the ingestion process and ensuring that information is readily accessible for analysis.

- Governance Solutions: Comprehensive solutions like Decube offer robust governance features, including policy management and lineage tracking. These features are essential for ensuring compliance with industry standards such as GDPR and HIPAA.

By utilizing these tools, organizations can significantly enhance their information management efforts, optimize processes, and improve their data curation process, ultimately leading to better decision-making and operational efficiency.
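
The one-dollar, ten-dollar, one-hundred-dollar estimate quoted in the list above can be turned into a quick back-of-the-envelope calculation. The sketch below assumes that the cost scales linearly with the number of affected records. That is a simplification rather than something the estimate itself guarantees, and the batch of 500 bad records is hypothetical.

```python
# Per-record costs from the estimate quoted above, in dollars.
COST_VERIFY = 1     # verify the information up front
COST_RECTIFY = 10   # rectify it afterward
COST_NO_ACTION = 100  # take no action


def cost_by_stage(bad_records: int) -> dict:
    """Total cost of handling the same bad records at each stage.

    Assumes cost grows linearly with the number of affected records.
    """
    return {
        "verify": bad_records * COST_VERIFY,
        "rectify": bad_records * COST_RECTIFY,
        "no_action": bad_records * COST_NO_ACTION,
    }


costs = cost_by_stage(bad_records=500)  # hypothetical batch size
for stage, dollars in costs.items():
    print(f"{stage}: ${dollars:,}")
```

Under these assumptions, the same 500 records cost $500 to verify, $5,000 to rectify later, and $50,000 if left uncorrected.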

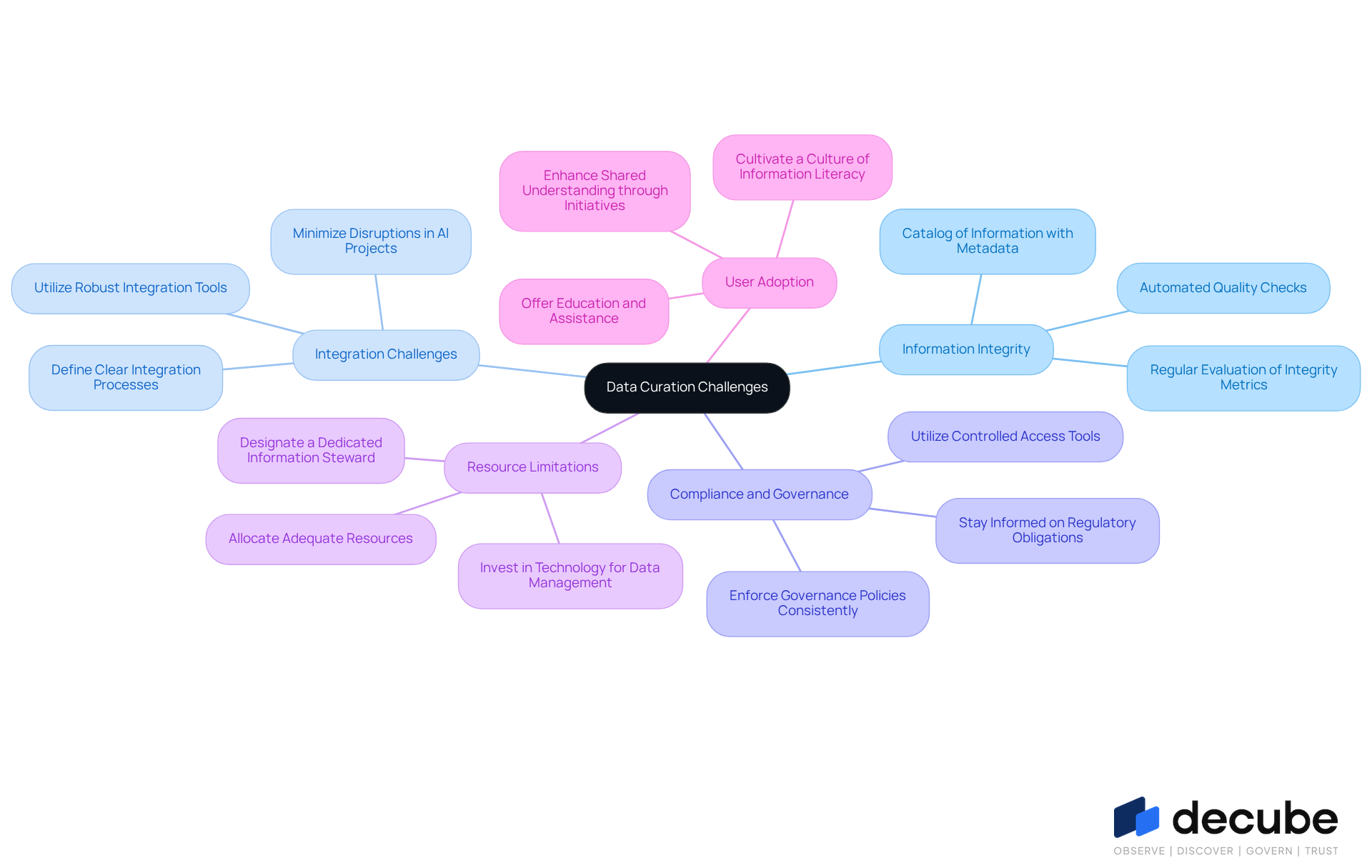

Address Challenges and Troubleshoot Data Curation Issues

Data curation presents several challenges that organizations must navigate effectively to maintain high data quality and integrity.

- Information Integrity Concerns: Organizations should routinely evaluate information quality, as inadequate information integrity can lead to significant financial losses. Implementing automated quality checks can help identify and rectify issues promptly, ensuring that information remains reliable for decision-making. A data catalog is essential in this process, as it provides a searchable inventory of assets enriched with metadata, enabling teams to quickly discover and trust the right information.

- Integration Challenges: Effective information integration is vital, as many AI project failures stem from information-related issues. Organizations must define clear integration processes and utilize robust tools to minimize disruptions. Companies that prioritize strong integration in their transformation initiatives can achieve returns significantly higher than those with poor integration.

- Compliance and Governance: Staying informed about regulatory requirements is crucial, particularly as many information leaders identify governance as a major obstacle to AI progress. Consistent enforcement of governance policies across the organization can mitigate risks associated with compliance and enhance quality. Utilizing Decube's capabilities can help reinforce governance practices.

- Resource Limitations: Allocating adequate resources, including staff and technology, is essential for supporting data curation efforts. Designating a dedicated information steward can assist in supervising the curation process, ensuring that management practices are effectively executed.

- User Adoption: Cultivating a culture of information literacy is essential for promoting user involvement with curated content. Offering education and assistance can help users comprehend the significance of information management, ultimately resulting in improved use of information resources. Decube's business glossary initiative enhances shared understanding and domain-level ownership, further promoting user engagement.

By proactively addressing these challenges and leveraging Decube's features, organizations can enhance their data curation process, ensuring that their data remains a valuable asset in driving informed decision-making.
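
The automated quality checks mentioned under information integrity can be sketched as a small rule runner in plain Python. This is an illustrative outline under stated assumptions, not a description of how Decube or any other product implements checks. The rule names, field names, and sample records are hypothetical.

```python
from typing import Callable

Record = dict
Check = Callable[[Record], bool]

# Hypothetical rules: each returns True when the record passes.
CHECKS: dict[str, Check] = {
    "email_present": lambda r: bool(r.get("email")),
    "age_in_range": lambda r: isinstance(r.get("age"), int) and 0 <= r["age"] <= 120,
}


def run_checks(records: list[Record]) -> dict[str, list[int]]:
    """Return, for each check name, the indexes of the records that fail it."""
    failures: dict[str, list[int]] = {name: [] for name in CHECKS}
    for index, record in enumerate(records):
        for name, check in CHECKS.items():
            if not check(record):
                failures[name].append(index)
    return failures


# Hypothetical sample data: record 1 lacks an email, record 2 has an implausible age.
records = [
    {"email": "ana@example.com", "age": 34},
    {"email": "", "age": 29},
    {"email": "bo@example.com", "age": 150},
]

failures = run_checks(records)
failing_rows = sorted({i for rows in failures.values() for i in rows})
print(failures)
print(f"{len(failing_rows)} of {len(records)} records need attention")
```

Running such checks on a schedule, and routing the failing rows to an information steward, is one way to catch issues before they reach reports and dashboards.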

Conclusion

Mastering the data curation process is crucial for organizations that seek to leverage their information as a strategic asset. By effectively organizing, maintaining, and enhancing data, businesses can ensure its accuracy, accessibility, and usability throughout its lifecycle. This comprehensive approach to data curation empowers organizations to make informed decisions, ultimately driving improved business outcomes.

The article delineates the critical steps involved in the data curation process, which include:

- Information identification

- Cleansing

- Transformation

- Metadata creation

- Preservation

- Ongoing oversight

Each of these steps is vital for maintaining information integrity and relevance. Furthermore, the integration of advanced tools and technologies, such as automated solutions and metadata management platforms, significantly enhances the efficiency of these processes, enabling organizations to respond swiftly to evolving information needs.

As organizations navigate the complexities of data curation, addressing challenges such as information integrity, integration issues, compliance, and user adoption becomes essential. By prioritizing effective data management practices and employing appropriate tools, businesses can transform their data into valuable insights. Ultimately, adopting a robust data curation strategy not only fosters operational efficiency but also positions organizations for success in an increasingly data-driven landscape.

Frequently Asked Questions

What is data curation?

Data curation is the process of organizing, maintaining, and enhancing content to ensure its accuracy, accessibility, and usability throughout its lifecycle.

What are the key activities involved in the data curation process?

The key activities in the data curation process include information identification, cleansing, transformation, and preservation.

Why is data curation important for organizations?

Data curation is important because it maintains information integrity and relevance, which are crucial for informed decision-making within organizations.

What recent trends are influencing information curation practices?

Recent trends include the integration of advanced analytics and AI-driven tools to streamline data curation processes, with organizations adopting automated solutions for efficient metadata management.

How does Decube's automated crawling feature enhance data curation?

Decube's automated crawling feature enhances data curation by automatically refreshing information once sources are connected, improving information integrity and adherence to industry standards while safeguarding privacy and security.

What impact does efficient information management have on decision-making?

Efficient information management significantly improves information quality, leading to better business outcomes and enabling organizations to leverage information as a strategic asset.

Can you provide a quote that emphasizes the importance of transforming information?

Carly Fiorina stated, "The goal is to transform information into knowledge, and knowledge into insight," highlighting the importance of this transformation for organizations.

What does Jonathan Rosenberg say about information management?

Jonathan Rosenberg noted, "Information is the sword of the 21st century; those who handle it effectively are the samurai," emphasizing the strategic importance of mastering information management.

How does Decube's automated column-level lineage feature benefit users?

Decube's automated column-level lineage feature enables business users to quickly identify issues within reports and dashboards, enhancing operational efficiency and collaboration.

What overall benefits do organizations gain by prioritizing information management?

By prioritizing information management, organizations can improve their information quality and empower their teams to make evidence-based decisions that foster success.

List of Sources

- Define Data Curation and Its Importance

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- 19 Inspirational Quotes About Data: Wisdom for a Data-Driven World (https://medium.com/@meghrajp008/19-inspirational-quotes-about-data-wisdom-for-a-data-driven-world-fcfbe44c496a)

- 9 Must-read Inspirational Quotes on Data Analytics From the Experts (https://nisum.com/nisum-knows/must-read-inspirational-quotes-data-analytics-experts)

- Data Driven Decisions Quotes (16 quotes) (https://goodreads.com/quotes/tag/data-driven-decisions)

- 50 Quotes About Data & Analytics: More Than Just Numbers | RED² Digital (https://red2digital.com/en/quotes-about-data-analytics)

- Outline the Steps of the Data Curation Process

- Data Curation Explained: How To Make Data More Valuable (https://montecarlodata.com/blog-data-curation-explained)

- Data Curation: Process, Importance & Examples (2025) (https://atlan.com/know/data-curation-101)

- The Importance of Data Curation: A Step-by-Step Guide (https://medium.com/@oliviamiller048/the-importance-of-data-curation-a-step-by-step-guide-d622ef23568)

- Transforming Research with Data Curation Practices (https://cos.io/blog/data-curation)

- Identify Tools and Technologies for Data Curation

- 12 Best Data Quality Tools for 2026 (https://lakefs.io/data-quality/data-quality-tools)

- 11 Best Metadata Management Tools for 2026 (https://domo.com/learn/article/best-metadata-management-tools)

- Data Quality Tools 2026: The Complete Buyer’s Guide to Reliable Data (https://ovaledge.com/blog/data-quality-tools)

- Data Catalog Market Size, Share & Forecast Report 2035 (https://researchnester.com/reports/data-catalog-market/5172)

- Address Challenges and Troubleshoot Data Curation Issues

- AI Data Quality in 2026: Challenges & Best Practices (https://aimultiple.com/data-quality-ai)

- Data Transformation Challenge Statistics — 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Top 15 Famous Data Science Quotes | Towards Data Science (https://towardsdatascience.com/top-15-famous-data-science-quotes-f2e010b8d214)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)
