
Manual vs. Technological Analysis Approaches: Key Insights for Data Engineers

Compare manual and technological analysis approaches to enhance data efficiency and quality.

Introduction

In the rapidly changing field of data engineering, organizations face a critical decision regarding their analysis approaches.

- Manual methods can be time-consuming and prone to human error, despite offering depth and contextual understanding.

- On the other hand, technological methods promise speed and scalability but may overlook nuanced insights that only human analysts can discern.

Organizations must balance the strengths of both approaches. Getting this balance right is essential for maximizing operational efficiency, improving data quality, and ensuring robust data governance.

Define Manual and Technological Approaches to Data Analysis

In data analysis, the choice between manual and technological approaches significantly affects operational efficiency. Manual data analysis covers conventional methods in which engineers or analysts work directly with datasets. Common techniques, such as spreadsheet manipulation and qualitative coding, provide nuanced insights but require significant time and effort. Technological methods, in contrast, use automated tools and software to process and analyze data. They employ algorithms, machine learning, and visualization tools to manage large datasets efficiently, delivering quick insights and reducing human error.

In 2026, a considerable share of organizations is prioritizing investment in data and AI infrastructure, and 90% of business leaders acknowledge the significance of these technologies. This shift reflects a growing reliance on technological methods over manual processes, which often introduce inefficiencies and inaccuracies. Understanding both approaches is crucial for evaluating their strengths and weaknesses in practice, particularly as organizations work to improve data quality and operational efficiency.

In this context, data catalogs become essential. A data catalog functions as a searchable inventory of data assets enriched with metadata such as ownership, stewardship, and access governance. Features like lineage visualization and quality indicators support self-service analytics, helping engineers rely on accurate, high-quality data for their analyses. Implementing data contracts, in turn, fosters collaboration among stakeholders, which is crucial for effective decentralized data management: contracts help turn raw data into dependable assets and improve the reliability of insights from both manual and technological analysis.
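
Data contracts are often expressed as a machine-checkable schema that a data producer agrees to honor. The sketch below assumes a hypothetical `orders` contract made of column names, Python types, and a nullability flag; `ColumnSpec`, `ORDERS_CONTRACT`, and `validate_record` are illustrative names, not part of Decube or any specific contract tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnSpec:
    """One column in a hypothetical data contract."""
    name: str
    dtype: type
    nullable: bool = False


# Hypothetical contract for an "orders" dataset agreed between producer and consumers.
ORDERS_CONTRACT = [
    ColumnSpec("order_id", int),
    ColumnSpec("customer_email", str),
    ColumnSpec("amount", float),
    ColumnSpec("coupon_code", str, nullable=True),
]


def validate_record(record: dict, contract: list[ColumnSpec]) -> list[str]:
    """Return human-readable contract violations for one record (empty list = compliant)."""
    violations = []
    for spec in contract:
        if spec.name not in record:
            violations.append(f"missing column: {spec.name}")
            continue
        value = record[spec.name]
        if value is None:
            if not spec.nullable:
                violations.append(f"null in non-nullable column: {spec.name}")
        elif not isinstance(value, spec.dtype):
            violations.append(
                f"{spec.name}: expected {spec.dtype.__name__}, got {type(value).__name__}"
            )
    return violations


good = {"order_id": 1, "customer_email": "a@example.com", "amount": 19.99, "coupon_code": None}
bad = {"order_id": "1", "amount": None}
print(validate_record(good, ORDERS_CONTRACT))  # []
print(validate_record(bad, ORDERS_CONTRACT))
```

Checking records at the boundary like this lets a producer catch a breaking change before consumers see it, which is the collaboration benefit data contracts aim for.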

Examine Methodologies: Manual vs. Technological Approaches

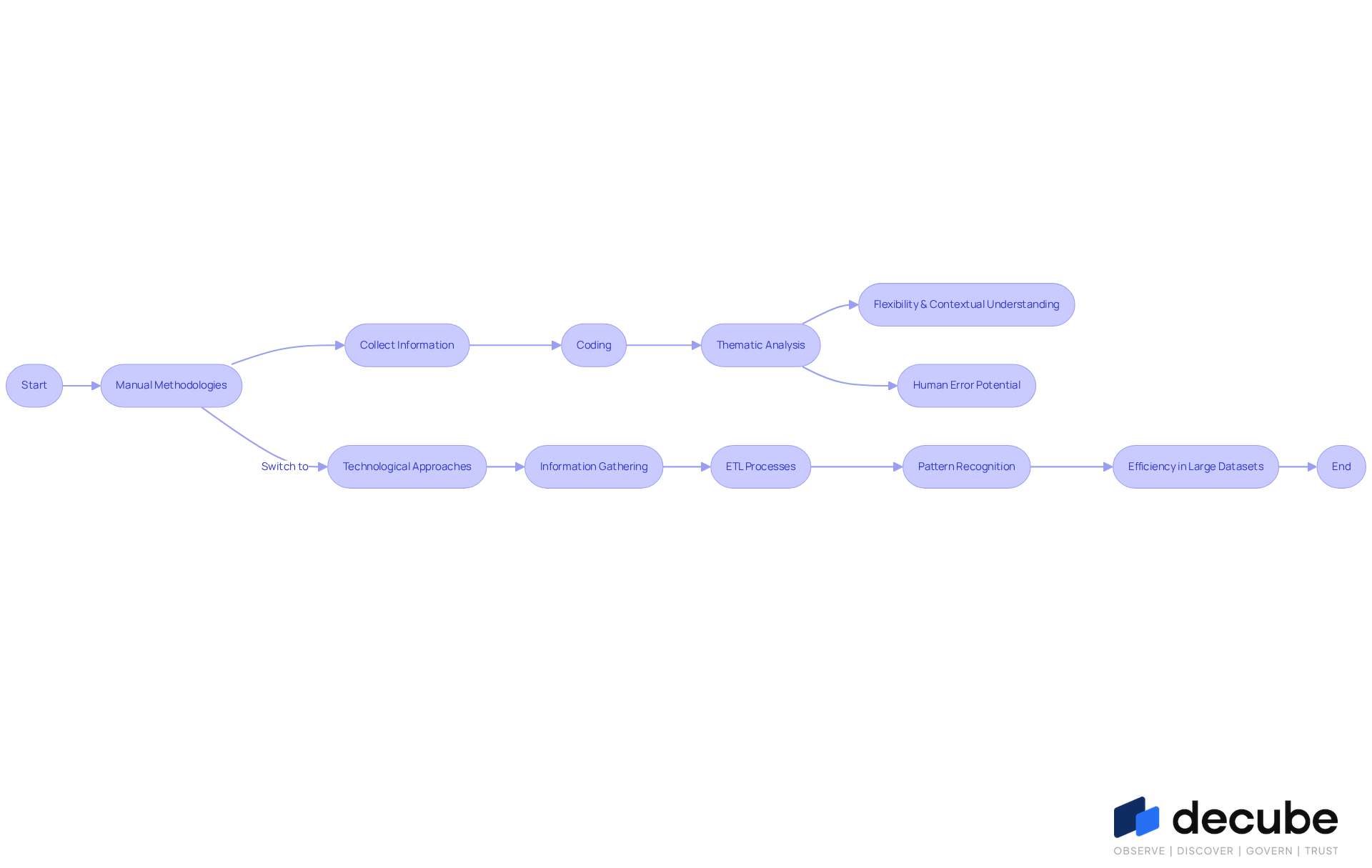

Manual methodologies typically involve collecting data through surveys or interviews, followed by coding and thematic analysis. They offer flexibility and detailed contextual understanding, but are often hindered by time constraints and the potential for human error. Analysts frequently use tools such as Excel to organize and visualize data, which can speed up parts of the process.

Technological methods, in contrast, use software tools to gather, process, and evaluate data. Automated data pipelines, for instance, can extract, transform, and load (ETL) data efficiently, while machine learning algorithms identify patterns and anomalies in real time. This efficiency is especially valuable for large datasets, where manual review becomes impractical.
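
A minimal sketch of the extract, transform, and load steps, using only the Python standard library with an in-memory SQLite database standing in for a warehouse. The CSV content, column names, and cleaning rules are assumptions for illustration; production pipelines typically add orchestration, retries, and incremental loading.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a file, API, or source database.
RAW_CSV = """order_id,amount,country
1,19.99,us
2,,de
3,42.50,US
"""


def extract(text: str) -> list[dict]:
    """Extract: parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: drop rows with a missing amount, cast types, normalize country codes."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue  # skip incomplete records rather than loading them
        cleaned.append((int(row["order_id"]), float(row["amount"]), row["country"].upper()))
    return cleaned


def load(rows: list[tuple], conn: sqlite3.Connection) -> int:
    """Load: write cleaned rows into a table and return how many were loaded."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, country TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()
    return len(rows)


conn = sqlite3.connect(":memory:")
loaded = load(transform(extract(RAW_CSV)), conn)
print(loaded)  # 2
print(conn.execute("SELECT order_id, country FROM orders ORDER BY order_id").fetchall())
# [(1, 'US'), (3, 'US')]
```

Keeping each stage as a separate function makes the cleaning rules easy to test on their own, which is much harder to do with the equivalent manual spreadsheet steps.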

Selecting the appropriate analysis approach is therefore crucial, because it directly affects the efficiency and accuracy of the insights derived from the data.

Analyze Strengths and Weaknesses of Each Approach

Manual data analysis offers unique insights, but it also presents significant challenges. Analysts can tailor their approach to the specific context of the data, which leads to richer interpretations. However, the method is time-consuming and susceptible to human error, particularly during data entry and analysis.

Technological approaches, in contrast, excel in speed and scalability. Automated systems can process vast datasets quickly and significantly reduce the errors associated with manual handling. By comparison, human error rates in manual data entry can reach around 4% without verification steps.
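
To see what a roughly 4% per-entry error rate means at scale, a short worked calculation helps. It assumes, as a simplification, that every entry fails independently with the same probability; real error rates vary by task, operator, and tooling.

```python
ERROR_RATE = 0.04  # ~4% per-entry error rate without verification (figure cited above)


def expected_errors(n_entries: int, rate: float = ERROR_RATE) -> float:
    """Expected number of erroneous entries in a batch of n_entries."""
    return n_entries * rate


def prob_error_free(n_entries: int, rate: float = ERROR_RATE) -> float:
    """Probability that a batch has no errors, assuming independent errors."""
    return (1 - rate) ** n_entries


print(expected_errors(10_000))        # 400.0
print(round(prob_error_free(50), 3))  # 0.13
```

Under these assumptions, a 10,000-row manual entry job would contain about 400 errors, and even a 50-row batch has only about a 13% chance of being entirely error-free.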

However, relying on technology carries its own risks. Automated checks detect only the issues they were designed or trained to detect, so they can miss contextual problems that a human analyst would catch. Dependence on technology also raises privacy and security concerns, especially when systems are poorly managed.

As organizations adopt automated data processing platforms such as Decube, the advantages become evident: such systems can reduce error rates by as much as 80%, improving the efficiency and precision of data handling. Decube's automated column-level lineage feature, for example, gives business users insight into issues affecting reports and dashboards, fostering collaboration and trust in data.
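
Column-level lineage is essentially a directed graph from source columns to the reports that consume them, so the question "which dashboards does this broken column affect?" becomes a graph traversal. The sketch below assumes a hypothetical in-memory lineage map and uses breadth-first search; it does not use Decube's API, which would supply this graph from its own collected metadata.

```python
from collections import deque

# Hypothetical column-level lineage: each key feeds the columns or assets in its list.
LINEAGE = {
    "raw.orders.amount": ["staging.orders.amount_usd"],
    "raw.fx_rates.rate": ["staging.orders.amount_usd"],
    "staging.orders.amount_usd": ["mart.revenue.daily_total", "mart.revenue.avg_order"],
    "mart.revenue.daily_total": ["dashboard:Revenue Overview"],
    "mart.revenue.avg_order": ["dashboard:Sales KPIs"],
}


def downstream(column: str, lineage: dict[str, list[str]]) -> list[str]:
    """Breadth-first search for every asset that depends, directly or indirectly, on column."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque([column])
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


impacted = downstream("raw.fx_rates.rate", LINEAGE)
dashboards = [asset for asset in impacted if asset.startswith("dashboard:")]
print(dashboards)  # ['dashboard:Revenue Overview', 'dashboard:Sales KPIs']
```

The `seen` set keeps the traversal correct when several upstream columns converge on the same downstream column, as `amount_usd` does here.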

Recognizing these limitations is crucial for organizations that want to combine human and technological strengths in their analysis. As Edward Tufte put it, 'Above all else show the data,' a reminder of how much clarity and context matter when interpreting data.

Discuss Implications for Data Quality and Governance

Manual and technological methods affect data quality in different ways. Skilled analysts performing manual review can significantly improve data quality by using their understanding of the context to identify and correct issues as they work. Even so, the potential for human error remains a significant challenge.

Technological methods, such as Decube's automated crawling feature, improve data quality through machine-driven validation checks and machine learning-powered anomaly detection, which help ensure the consistency and reliability of data. Decube's AI-driven metadata engine automates lineage tracking and keeps metadata current through automatic refreshes while identifying patterns and anomalies, further strengthening governance practices. Decube's secure access control feature also lets organizations manage who can view or edit data, reinforcing governance.
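
Automated anomaly detection can start with something much simpler than machine learning. As a hedged stand-in for ML-powered detection (not Decube's method), the sketch below flags a metric, here a hypothetical table's daily row count, when it deviates more than three standard deviations from its recent history. The threshold and data are assumptions; learned models mainly add handling for seasonality and trends on top of this idea.

```python
import statistics


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag latest if it lies more than `threshold` standard deviations from the historical mean."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation; needs >= 2 points
    if stdev == 0:
        return latest != mean  # flat history: any change is unusual
    return abs(latest - mean) / stdev > threshold


# Hypothetical daily row counts for one table over the past week.
daily_row_counts = [10_120, 9_980, 10_050, 10_210, 9_940, 10_080, 10_030]

print(is_anomalous(daily_row_counts, 10_100))  # False
print(is_anomalous(daily_row_counts, 4_200))   # True: likely a partial load
```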

Without robust governance, automated processes can propagate errors at scale, creating significant data integrity problems. To mitigate these risks, organizations need data governance frameworks that oversee both manual and automated analysis. These frameworks include comprehensive policies for data handling, monitoring, and quality assurance, and help ensure compliance with requirements such as SOC 2 and GDPR.

The five primary principles of data governance (Accountability, Transparency, Quality, Security/Privacy, and Compliance) should guide these frameworks. Such governance not only safeguards data integrity but also fosters trust in data-driven decision-making, which becomes more important as organizations rely on automated systems like Decube to manage their data landscapes.
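
One way to make these five principles operational is to encode each as an automated check over catalog metadata. The sketch below assumes a hypothetical `DatasetMetadata` record and deliberately simple rules (an owner exists, a description exists, quality meets a threshold, PII columns are access-restricted, a retention period is set); real frameworks define such policies together with legal and business stakeholders.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DatasetMetadata:
    """Hypothetical catalog metadata for one dataset."""
    name: str
    owner: Optional[str]
    description: str
    quality_score: float  # 0.0-1.0, e.g. share of passing quality checks
    pii_columns: set[str] = field(default_factory=set)
    restricted_columns: set[str] = field(default_factory=set)
    retention_days: Optional[int] = None


def audit(ds: DatasetMetadata, min_quality: float = 0.95) -> dict[str, bool]:
    """Map each governance principle to a simple pass/fail check (illustrative rules only)."""
    return {
        "Accountability": ds.owner is not None,
        "Transparency": bool(ds.description.strip()),
        "Quality": ds.quality_score >= min_quality,
        "Security/Privacy": ds.pii_columns <= ds.restricted_columns,  # every PII column restricted
        "Compliance": ds.retention_days is not None,
    }


customers = DatasetMetadata(
    name="crm.customers",
    owner="data-platform-team",
    description="One row per customer account.",
    quality_score=0.97,
    pii_columns={"email", "phone"},
    restricted_columns={"email"},
)
failed = [principle for principle, ok in audit(customers).items() if not ok]
print(failed)  # ['Security/Privacy', 'Compliance']
```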

Conclusion

The analysis of manual versus technological approaches in data engineering uncovers significant distinctions that affect operational efficiency and data quality. Manual methods provide context-rich insights, but they are often limited by time constraints and human error. Technological approaches, by contrast, use automation and advanced algorithms to process large datasets quickly, improving accuracy and reliability. As organizations shift toward technology-driven solutions, recognizing these distinctions is crucial for optimizing data analysis processes.

Key insights from the article highlight the strengths and weaknesses inherent in both methodologies:

- Manual analysis provides flexibility and depth, but human error and inefficiency can significantly undermine these advantages.

- Technological methods excel in scalability and speed, yet they can overlook nuances that human analysts might catch.

These trade-offs call for robust frameworks that integrate both methodologies to safeguard data integrity and trustworthiness.

In light of these insights, it is crucial for data engineers and organizations to carefully evaluate their analysis strategies. Embracing a hybrid model that combines the strengths of both manual and technological methods can lead to enhanced decision-making and improved data governance. Ultimately, the choice of analysis strategy will shape the future of data governance and operational success.
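
A common way to implement such a hybrid model is to let automation settle the clear-cut cases and route only ambiguous ones to people. The sketch assumes each record already carries an anomaly score from some automated check; `CheckResult`, `triage`, and the two thresholds are hypothetical and would be tuned against reviewer capacity and the cost of missed errors.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    record_id: int
    anomaly_score: float  # hypothetical scale: 0.0 = clearly fine, 1.0 = clearly wrong


def triage(results, auto_pass_below=0.2, auto_reject_above=0.9):
    """Split results into auto-accepted, auto-rejected, and a queue for human review."""
    accepted, rejected, review = [], [], []
    for r in results:
        if r.anomaly_score < auto_pass_below:
            accepted.append(r.record_id)
        elif r.anomaly_score > auto_reject_above:
            rejected.append(r.record_id)
        else:
            review.append(r.record_id)  # ambiguous: route to an analyst
    return accepted, rejected, review


results = [CheckResult(1, 0.05), CheckResult(2, 0.55), CheckResult(3, 0.97), CheckResult(4, 0.12)]
accepted, rejected, review = triage(results)
print(accepted, rejected, review)  # [1, 4] [3] [2]
```

Widening the band between the two thresholds sends more records to people (more context, more cost); narrowing it leans harder on automation.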

Frequently Asked Questions

What are manual approaches to data analysis?

Manual approaches to data analysis involve conventional methods where engineers or analysts directly engage with datasets, such as spreadsheet manipulation and qualitative coding. These methods provide nuanced insights but require significant time and effort.

What are technological approaches to data analysis?

Technological approaches utilize automated tools and software to process and analyze information. They employ algorithms, machine learning, and visualization tools to efficiently manage large datasets, providing quick insights and reducing human error.

How are organizations investing in data analysis technologies in 2026?

In 2026, a significant share of organizations is prioritizing investment in data and AI infrastructure, with 90% of business leaders recognizing the importance of these technologies for enhancing operational efficiency.

What are the advantages of technological methods over manual processes?

Technological methods often lead to improved efficiency and accuracy, as they can handle large volumes of data quickly and reduce the likelihood of human error, which is more prevalent in manual processes.

What role do data catalogs play in data analysis?

Data catalogs serve as a searchable inventory of data assets enriched with metadata, including ownership, stewardship, and access governance. They improve self-service analytics by providing quality indicators and lineage visualization, helping engineers rely on accurate, high-quality data.

How do data contracts contribute to data management?

Data contracts foster collaboration among stakeholders, which is crucial for effective decentralized data management. They help turn raw data into reliable assets, improving the quality and reliability of insights from both manual and technological analysis methods.

List of Sources

Define Manual and Technological Approaches to Data Analysis:

- Data and AI: Key trends to watch for in 2026 (https://datafoundation.org/news/blogs/813/813-Data-and-AI-Key-trends-to-watch-for-in-)

- Automation vs Manual Work Efficiency Stats 2020–2025 (https://technologyradius.com/statistic/work-efficiency-automation-vs-manual-2020-2025-stats)

- What AI Data Analysis Trends Will Dominate 2026? (https://findanomaly.ai/ai-data-analysis-trends-2026)

- 20 best data visualization quotes - The Data Literacy Project (https://thedataliteracyproject.org/20-best-data-visualization-quotes)

Examine Methodologies: Manual vs. Technological Approaches:

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Automation vs Manual Work Efficiency Stats 2020–2025 (https://technologyradius.com/statistic/work-efficiency-automation-vs-manual-2020-2025-stats)

- Manual Internet Research vs Automation: Accuracy in 2026 (https://tinkogroup.com/manual-internet-research-vs-automation)

Analyze Strengths and Weaknesses of Each Approach:

- 7 Human Error Statistics For 2025 - DocuClipper (https://docuclipper.com/blog/human-error-statistics)

- 101 Data Science Quotes (https://dataprofessor.beehiiv.com/p/101-data-science-quotes)

- 100 Essential Data Storytelling Quotes (https://effectivedatastorytelling.com/post/100-essential-data-storytelling-quotes)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

Discuss Implications for Data Quality and Governance:

- Data Governance in 2026: How AI and Cloud Will Redefine Enterprise Control | Zenxsys Blog (https://zenxsys.com/blog/data-governance-in-2026-how-ai-and-cloud-will-redefine-enterprise-control-blog)

- Why data governance is the cornerstone of trustworthy AI in 2026 (https://strategy.com/software/blog/why-data-governance-is-the-cornerstone-of-trustworthy-ai-in-2026)

- Enterprise Data Governance 2026: A Strategic Priorities Guide (https://bluent.com/blog/enterprise-data-governance-priorities)

- Gartner Announces Top Predictions for Data and Analytics in 2026 (https://gartner.com/en/newsroom/press-releases/2026-03-11-gartner-announces-top-predictions-for-data-and-analytics-in-2026)
