
Best Practices for Anomaly Detection Software in Data Pipelines

Implement best practices for anomaly detection software to enhance data quality in pipelines.

Introduction

Anomaly detection software has become a crucial part of managing data pipelines, acting as a safeguard against unexpected deviations that could compromise data integrity. By accurately identifying irregular patterns, from sudden spikes in data volume to subtle shifts in the distribution of values, organizations can improve both their decision-making and their operational efficiency.

However, the path to effective anomaly detection is not without its challenges. High false positive rates and the complexities inherent in diverse data environments can hinder success. Organizations must navigate these obstacles to ensure their data remains reliable and actionable.

Define Anomaly Detection in Data Pipelines

In a data pipeline, anomaly detection software identifies data points, events, or patterns that deviate significantly from expected behavior. Examples include sudden spikes or drops in data volume, unexpected changes in the distribution of values, and irregularities that point to underlying problems such as data corruption or system failures. Catching these irregularities early is crucial for maintaining high data quality, so that organizations can rely on their data for informed decision-making.
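The volume case can be made concrete with a minimal Python sketch, assuming daily row counts per table are already being collected. The function name `volume_anomaly`, the trailing-median baseline, and the 50% tolerance are illustrative assumptions, not a description of any particular product's logic.

```python
from statistics import median


def volume_anomaly(history: list[int], today: int, tolerance: float = 0.5) -> bool:
    """Flag today's row count if it differs from the trailing median by more
    than `tolerance` (0.5 means 50%) in either direction."""
    if not history:
        raise ValueError("need at least one historical row count")
    baseline = median(history)
    if baseline == 0:
        # An empty baseline: any rows at all count as a change.
        return today != 0
    return abs(today - baseline) / baseline > tolerance


# Hypothetical daily row counts for one table over the past week.
daily_rows = [10_120, 9_980, 10_240, 10_050, 9_870, 10_310, 10_150]

print(volume_anomaly(daily_rows, 10_200))  # False: close to the median
print(volume_anomaly(daily_rows, 31_000))  # True: sudden spike
print(volume_anomaly(daily_rows, 1_200))   # True: sudden drop
```

A median baseline is used here because a single earlier spike in the history barely moves it, whereas it would pull a mean upward.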

For instance, a manufacturing firm that implemented anomaly detection techniques reduced downtime by 40% and saved $1 million annually in maintenance costs by addressing equipment issues proactively. Similarly, an e-commerce platform that used anomaly detection to analyze user behavior and transaction patterns reduced fraud by 30% within six months.

Furthermore, organizations using Decube's automated crawling feature benefit from improved data observability and governance through streamlined metadata management and secure access control. According to user feedback, Decube's automated column-level lineage and incident monitoring give business users a clear view of what is wrong with a report or dashboard, which improves data quality and trust.

With the typical cost of a data breach projected to reach $4.88 million in 2026, identifying irregularities early plays an important role in preventing such breaches. Robust anomaly detection software lets organizations detect and resolve potential data issues before they escalate into significant problems, improving operational efficiency and preserving data integrity.

Explore Techniques for Effective Anomaly Detection

Effective anomaly detection in data pipelines can be achieved through a variety of techniques, including:

- Statistical Methods: These methods use statistical tests to identify values that fall outside expected ranges. Techniques such as Z-scores and control charts are commonly used to monitor distributions and flag values that are statistically unlikely. Because poor-quality input leads to inaccurate outcomes, the underlying data must itself be of high quality. Decube supports this process with a unified platform whose monitoring capabilities use anomaly detection to identify issues early.

- Machine Learning Algorithms: Techniques such as Isolation Forest, One-Class SVM, and autoencoders learn normal patterns from historical data and flag observations that do not fit them. These models excel at recognizing complex deviations that traditional methods may miss. For instance, Decube's ML-powered tests automatically determine quality thresholds so that irregularities are flagged promptly, which is crucial for maintaining trust.

- Rule-Based Systems: Predefined rules that specify acceptable ranges for data enable fast detection of irregularities. For example, if sales data exceeds a specified threshold, an alert can be triggered for immediate investigation. Decube's smart alerts feature groups notifications so that users are not overwhelmed and critical issues are addressed without unnecessary distractions.

- Hybrid Approaches: Combining statistical methods with machine learning techniques can improve detection accuracy. By drawing on the strengths of both, organizations can identify irregularities more effectively while minimizing false positives. Decube supports this hybrid approach with automated monitoring and analytics features that foster collaboration among teams and simplify the detection process.
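The statistical methods above can be sketched with Python's standard `statistics` module. This sketch assumes a clean historical window is available as a baseline. The 3-standard-deviation threshold and the choice of an individuals (XmR) control chart, whose limits are the mean plus or minus 2.66 times the average moving range, are conventional defaults rather than anything prescribed here, and the metric values are made up.

```python
from statistics import mean, stdev


def zscore_outliers(history: list[float], new_values: list[float],
                    threshold: float = 3.0) -> list[int]:
    """Indices of new_values whose z-score, measured against history, exceeds threshold."""
    mu, sigma = mean(history), stdev(history)  # stdev needs at least 2 history points
    if sigma == 0:
        # A perfectly constant history: any different value is a deviation.
        return [i for i, v in enumerate(new_values) if v != mu]
    return [i for i, v in enumerate(new_values) if abs(v - mu) / sigma > threshold]


def xmr_limits(history: list[float]) -> tuple[float, float]:
    """Individuals (XmR) control-chart limits: mean +/- 2.66 x average moving range."""
    moving_ranges = [abs(b - a) for a, b in zip(history, history[1:])]
    centre, mr_bar = mean(history), mean(moving_ranges)
    return centre - 2.66 * mr_bar, centre + 2.66 * mr_bar


def outside_limits(new_values: list[float], limits: tuple[float, float]) -> list[int]:
    """Indices of new_values that fall outside the control limits."""
    lower, upper = limits
    return [i for i, v in enumerate(new_values) if v < lower or v > upper]


# Hypothetical metric, e.g. average order value per hour.
history = [100, 102, 98, 101, 99, 103, 97, 100, 101, 99]
new_values = [101, 104, 112, 95]

print(zscore_outliers(history, new_values))             # [2]
print(outside_limits(new_values, xmr_limits(history)))  # [2]
```

Scoring new values against a separate history, rather than against the batch that contains them, keeps an extreme value from inflating the standard deviation and hiding itself.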

Applying these techniques requires a thorough understanding of the data's characteristics and of the specific types of irregularities to be detected. With Decube's data observability and governance features, including end-to-end lineage visualization, enterprises can turn raw data into reliable assets and tailor their approach to anomaly detection.
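A rule-based check and a simple hybrid can be sketched together. Note one substitution: the hybrid approach described above pairs statistical methods with machine learning, but to keep this example dependency-free it pairs fixed rules with a z-score check instead; a trained detector such as Isolation Forest could take the z-score check's place. The `Rule` class, metric names, ranges, and the critical/warning split are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Rule:
    """Hypothetical predefined guideline: the acceptable range for one metric."""
    metric: str
    min_value: float
    max_value: float


def rule_violations(record: dict[str, float], rules: list[Rule]) -> list[str]:
    """Metrics whose value falls outside the range their rule allows."""
    return [r.metric for r in rules
            if not r.min_value <= record[r.metric] <= r.max_value]


def zscore_flags(record: dict[str, float], history: dict[str, list[float]],
                 threshold: float = 3.0) -> list[str]:
    """Metrics whose value is more than `threshold` standard deviations from its history."""
    flags = []
    for metric, past in history.items():
        mu, sigma = mean(past), stdev(past)
        if sigma > 0 and abs(record[metric] - mu) / sigma > threshold:
            flags.append(metric)
    return flags


def hybrid_check(record: dict[str, float], rules: list[Rule],
                 history: dict[str, list[float]]) -> dict[str, list[str]]:
    """Rules catch known-bad values (critical); the z-score check catches values
    that the rules allow but that are unusual for this metric (warning)."""
    critical = rule_violations(record, rules)
    warning = [m for m in zscore_flags(record, history) if m not in critical]
    return {"critical": critical, "warning": warning}


rules = [Rule("daily_sales", 0, 50_000), Rule("refund_rate", 0.0, 0.2)]
history = {
    "daily_sales": [9_800, 10_100, 10_050, 9_900, 10_200, 9_950],
    "refund_rate": [0.020, 0.021, 0.019, 0.022, 0.018, 0.020],
}
today = {"daily_sales": 60_000, "refund_rate": 0.09}

print(hybrid_check(today, rules, history))
# {'critical': ['daily_sales'], 'warning': ['refund_rate']}
```

Here a refund rate of 9% passes the fixed rule but is far outside its own history, which is the kind of case a purely rule-based system misses.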

Identify and Overcome Challenges in Anomaly Detection

Implementing anomaly detection in data pipelines presents several significant challenges that organizations must navigate:

- High False Positive Rates: Anomaly detection systems frequently generate false positives, unnecessary alerts that drain resources and distract teams from genuine issues. To address this, organizations should fine-tune their detection algorithms and set precise thresholds. Methods such as the Z-score and the interquartile range (IQR) can improve precision by identifying outliers based on statistical measures.

- Data Quality Issues: The effectiveness of anomaly detection depends heavily on the quality of the underlying data. Inaccurate, incomplete, or inconsistent data can produce misleading results. Organizations must prioritize data cleansing and enrichment so that the data fed into detection systems is reliable and reflects typical behavior. This includes addressing common anomalies such as NULL values, schema changes, and distribution errors, which can significantly affect detection success rates. Decube's automated crawling feature helps here by automatically refreshing metadata so that it stays current, which supports data quality and governance.

- Complex Data Environments: As organizations integrate more data sources and types, maintaining a comprehensive view of data behavior becomes increasingly difficult. Robust data observability tools, such as those offered by Decube, are essential for monitoring data flows and understanding the context of anomalies. These tools help identify patterns and trends, enabling teams to respond proactively to potential issues.

- Scalability: As data volumes grow rapidly, detection systems must scale without compromising performance. Cloud-based solutions provide the flexibility to handle increased data loads efficiently, and scalable frameworks help keep anomaly detection software effective as data ecosystems evolve.
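The interquartile-range method mentioned under false positives can be sketched with `statistics.quantiles` (Python 3.8+). The latency values are hypothetical; `k = 1.5` is Tukey's conventional fence multiplier, and raising `k` is one simple lever for trading sensitivity against alert volume.

```python
from statistics import quantiles


def iqr_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)  # quartile cut points (default 'exclusive' method)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]


# Hypothetical query latencies in milliseconds, with one slow outlier.
latencies_ms = [120, 125, 118, 130, 122, 127, 119, 124, 121, 126, 480]
print(iqr_outliers(latencies_ms))  # [480]
```

Because quartiles are barely affected by a few extreme values, the fences stay anchored to typical behavior even when the batch contains the outlier being hunted.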

By proactively addressing these challenges, organizations can significantly improve their anomaly detection capabilities, leading to more reliable data pipelines and better decision-making.
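Two of the data quality problems named above, NULL values and schema changes, can be checked with simple rules before data reaches a statistical or ML detector. This sketch assumes rows arrive as Python dictionaries and that the expected schema is stored as a mapping from column name to a type label; the function names, the `orders` example, and the 10% NULL tolerance are hypothetical.

```python
def null_rate(rows: list[dict], column: str) -> float:
    """Fraction of rows where `column` is None or missing entirely."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row.get(column) is None) / len(rows)


def schema_diff(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare column -> type mappings and report what changed."""
    shared = expected.keys() & observed.keys()
    return {
        "missing": sorted(expected.keys() - observed.keys()),
        "added": sorted(observed.keys() - expected.keys()),
        "type_changed": sorted(c for c in shared if expected[c] != observed[c]),
    }


# Hypothetical batch and schemas for an "orders" table.
rows = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": 2, "amount": None},
    {"order_id": 3, "amount": 12.0},
    {"order_id": 4},  # "amount" key absent
]
expected = {"order_id": "int", "amount": "float", "customer_id": "int"}
observed = {"order_id": "int", "amount": "str", "coupon": "str"}

MAX_NULL_RATE = 0.10  # hypothetical tolerance
rate = null_rate(rows, "amount")
print(rate, rate > MAX_NULL_RATE)  # 0.5 True
print(schema_diff(expected, observed))
# {'missing': ['customer_id'], 'added': ['coupon'], 'type_changed': ['amount']}
```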

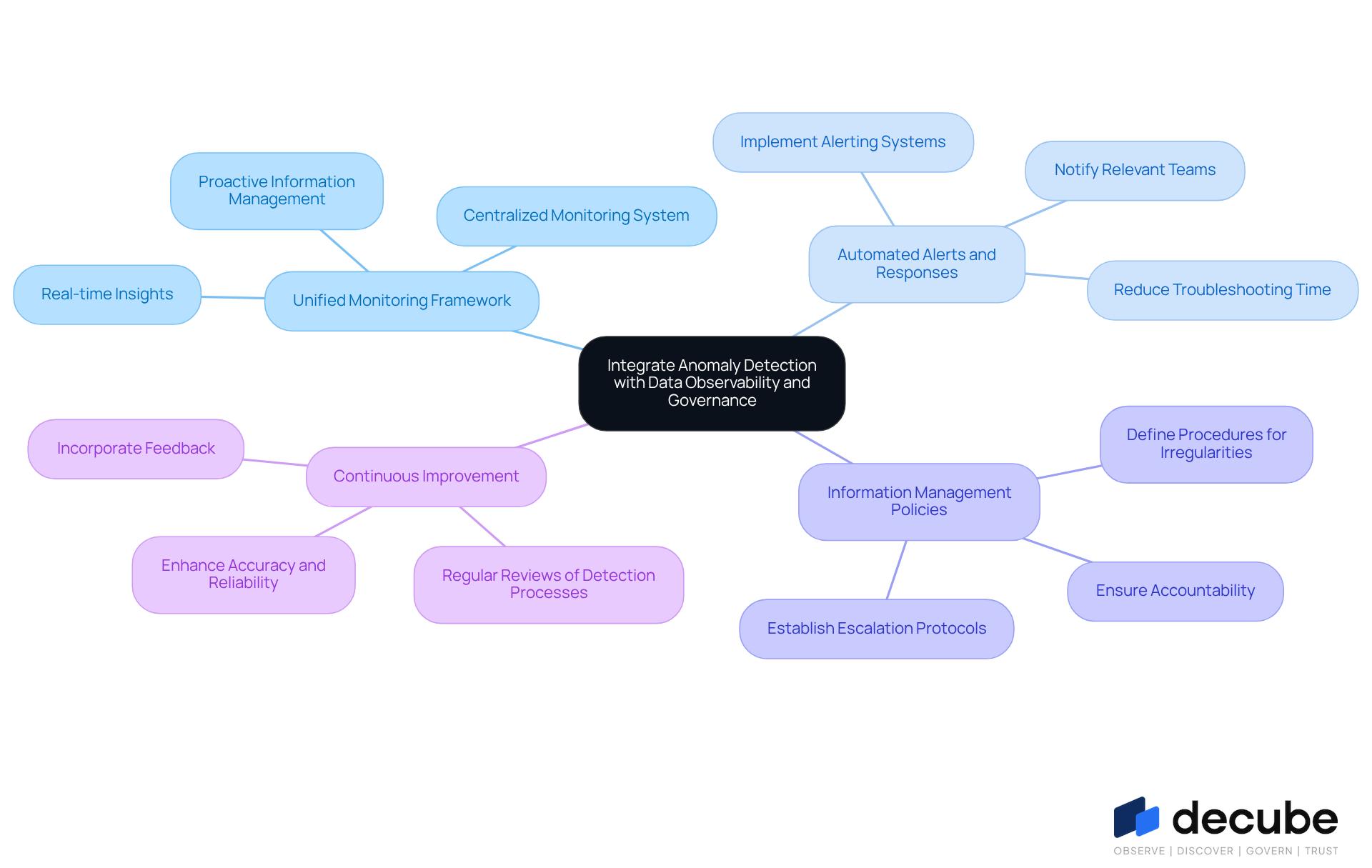

Integrate Anomaly Detection with Data Observability and Governance

Integrating anomaly detection with data observability and governance requires adherence to several best practices:

- Unified Monitoring Framework: Establish a centralized monitoring system that combines anomaly detection with data observability tools. This integration provides real-time visibility into data quality and integrity and supports a proactive approach to data management.

- Automated Alerts and Responses: Implement automated alerting that notifies the relevant teams when irregularities are detected. This enables swift action on potential issues, reducing troubleshooting time and improving operational efficiency.

- Data Management Policies: Organizations should create and enforce comprehensive data management policies that define clear procedures for handling irregularities, including escalation protocols and documentation requirements, to ensure accountability and clarity in how teams respond.

- Continuous Improvement: Regularly review and refine anomaly detection processes based on feedback and performance metrics. This iterative approach improves accuracy and reduces false positives, leading to more reliable insights.
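Automated alerting with grouping, in the spirit of the smart alerts described earlier, might look like the following sketch. It is not Decube's implementation: the `Anomaly` record, the two severity levels, and the `ROUTES` mapping are hypothetical stand-ins for a team's own notification targets and escalation protocol.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Anomaly:
    table: str
    check: str
    severity: str  # "critical" or "warning" in this sketch


# Hypothetical routing policy: where each severity level is sent.
ROUTES = {"critical": "on-call pager", "warning": "data-quality channel"}


def build_notifications(anomalies: list[Anomaly]) -> list[str]:
    """Group anomalies by (table, severity) so each group yields one message
    instead of one message per failed check."""
    groups: dict[tuple[str, str], list[Anomaly]] = defaultdict(list)
    for a in anomalies:
        groups[(a.table, a.severity)].append(a)
    messages = []
    for (table, severity), items in sorted(groups.items()):
        checks = ", ".join(sorted(a.check for a in items))
        messages.append(f"[{ROUTES[severity]}] {table}: {severity} x{len(items)} ({checks})")
    return messages


anomalies = [
    Anomaly("orders", "row_count", "critical"),
    Anomaly("orders", "null_rate:amount", "warning"),
    Anomaly("orders", "schema", "critical"),
    Anomaly("customers", "null_rate:email", "warning"),
]
for message in build_notifications(anomalies):
    print(message)
# [data-quality channel] customers: warning x1 (null_rate:email)
# [on-call pager] orders: critical x2 (row_count, schema)
# [data-quality channel] orders: warning x1 (null_rate:amount)
```

Four failed checks become three messages, and the two critical ones on `orders` reach the on-call route together rather than as separate pages.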

By integrating these elements, organizations can build a robust framework that uses anomaly detection software not only to detect anomalies but also to support compliance with data governance standards, fostering trust in their data assets.
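The continuous-improvement practice can be made measurable by recording whether each alert turned out to be a real issue. The sketch below assumes reviewers label every alert `True` (real) or `False` (false positive); the 80% precision target, step size, ceiling, and minimum review count are hypothetical tuning parameters.

```python
def alert_precision(feedback: list[bool]) -> float:
    """Share of reviewed alerts that were confirmed as real issues (True)."""
    return sum(feedback) / len(feedback) if feedback else 0.0


def tune_threshold(threshold: float, feedback: list[bool], target: float = 0.8,
                   step: float = 0.5, ceiling: float = 5.0, min_reviews: int = 10) -> float:
    """Raise a z-score-style threshold when too many alerts were false positives."""
    if len(feedback) < min_reviews:
        return threshold  # too little evidence to change anything yet
    if alert_precision(feedback) < target:
        return min(threshold + step, ceiling)  # stricter: fewer, better alerts
    return threshold


# Hypothetical review outcomes for last week's alerts: 6 real, 6 false positives.
last_week = [True] * 6 + [False] * 6
print(alert_precision(last_week))      # 0.5
print(tune_threshold(3.0, last_week))  # 3.5
```

The function only ever tightens the threshold: precision says nothing about anomalies that were missed, so loosening it safely would need a separate signal, such as incidents that no alert caught.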

Conclusion

Implementing anomaly detection software in data pipelines is a critical strategy for organizations aiming to maintain data integrity and operational efficiency. By identifying irregularities that deviate from expected patterns, businesses can proactively address potential issues, ultimately enhancing decision-making and safeguarding valuable data assets.

Key techniques for effective anomaly detection include:

- Statistical methods

- Machine learning algorithms

- Rule-based systems

- Hybrid approaches

Challenges such as high false positive rates, data quality issues, and the complexities of integrating various data sources have been highlighted, along with practical solutions to overcome these obstacles. The integration of anomaly detection with data observability and governance practices is essential for fostering a robust data management framework.

The significance of anomaly detection in data pipelines cannot be overstated. Organizations are encouraged to adopt best practices that streamline the detection process while enhancing data governance and observability. By doing so, they can ensure that their data remains a reliable asset, driving informed decisions and fostering trust across all levels of the organization.

Frequently Asked Questions

What is anomaly detection in data pipelines?

Anomaly detection in data pipelines refers to the use of software to identify points, events, or patterns that significantly deviate from expected behavior, such as sudden spikes in data volume or unexpected changes in data distribution.

Why is anomaly detection important for organizations?

Anomaly detection is crucial for maintaining high data quality, enabling organizations to rely on their information for informed decision-making and to proactively address potential issues like data corruption or system failures.

Can you provide an example of how anomaly detection has benefited a company?

A manufacturing firm that implemented anomaly detection techniques reduced downtime by 40% and saved $1 million annually in maintenance costs by proactively addressing equipment issues.

How has anomaly detection impacted e-commerce platforms?

An e-commerce platform that employed anomaly detection to analyze user behavior and transaction patterns achieved a 30% reduction in fraud within six months.

What additional benefits does Decube's automated crawling feature provide?

Decube's automated crawling feature improves data observability and governance through streamlined metadata management and secure access control, helping organizations manage data more effectively.

What features of Decube assist in improving information quality?

Decube's automated column-level lineage and incident monitoring give business users a clear view of report and dashboard issues, which improves data quality and trust.

What are the projected costs of data breaches, and why is anomaly detection relevant?

The typical cost of a data breach is projected to reach $4.88 million in 2026. This underscores the value of anomaly detection: identifying irregularities early helps prevent breaches and improves operational efficiency.

How does anomaly detection contribute to operational efficiency?

By establishing robust anomaly detection software, organizations can detect and resolve potential data issues before they escalate, improving operational efficiency and ensuring data integrity.

List of Sources

Define Anomaly Detection in Data Pipelines:

- Anomaly Detection: A Key to Better Data Quality (https://firsteigen.com/blog/anomaly-detection)

- Anomaly Detection Case Studies (https://meegle.com/en_us/topics/anomaly-detection/anomaly-detection-case-studies)

- Why anomaly detection matters in data quality and how GX just made it easier (https://greatexpectations.io/blog/why-anomaly-detection-matters-in-data-quality-and-how-gx-just-made-it-easier)

- Global Anomaly Detection Market Poised for Strong Growth as AI-Driven Security Demands and Enterprise Automation Accelerate: Verified Market Research® (https://globenewswire.com/news-release/2026/03/05/3250411/0/en/Global-Anomaly-Detection-Market-Poised-for-Strong-Growth-as-AI-Driven-Security-Demands-and-Enterprise-Automation-Accelerate-Verified-Market-Research.html)

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

Explore Techniques for Effective Anomaly Detection:

- Anomaly Detection Case Studies (https://meegle.com/en_us/topics/anomaly-detection/anomaly-detection-case-studies)

- Anomaly Detection Machine Learning: How It Works (https://labelyourdata.com/articles/anomaly-detection-machine-learning)

- Using Statistical Methods in Anomaly Detection - Apriorit (https://apriorit.com/dev-blog/anomaly-detection-with-statistical-methods)

- A Brief Review of Machine Learning Techniques for Anomaly Detection (https://medium.com/@naseefcse/a-brief-review-of-machine-learning-techniques-for-anomaly-detection-325815ceedf2)

Identify and Overcome Challenges in Anomaly Detection:

- What are the challenges in anomaly detection? (https://milvus.io/ai-quick-reference/what-are-the-challenges-in-anomaly-detection)

- Data Quality Anomaly Detection: Everything You Need To Know (https://montecarlodata.com/blog-data-quality-anomaly-detection-everything-you-need-to-know)

- 8 Steps to Reduce False Positives with AI Threat Detection - N-able (https://n-able.com/blog/reduce-false-positives-ai-threat-detection)

- False Positive Reduction: How AI Improves Security Alert Accuracy - Avatier (https://avatier.com/blog/false-positive-reduction-ai)

- Are you struggling with implementing data pipelines? - Engineering.com (https://engineering.com/are-you-struggling-with-implementing-data-pipelines)

Integrate Anomaly Detection with Data Observability and Governance:

- Unlocking better data governance with Anomaly Detection alerts and Event Approval | Signals & Stories (https://mixpanel.com/blog/data-governance-anomaly-detection-alerts-event-approval)

- In an AI world, why is data governance important? (https://datagalaxy.com/en/blog/why-is-data-governance-important)

- Seven quotes to keep your data project on track (https://medium.com/decathlondigital/seven-quotes-to-keep-your-data-project-on-track-61e0acaa4cfc)

- Top 15 Famous Data Science Quotes | Towards Data Science (https://towardsdatascience.com/top-15-famous-data-science-quotes-f2e010b8d214)
