
4 Best Practices for Successful Cloud Data Migration

Discover essential strategies for effective cloud data migration and ensure data integrity.

Introduction

Cloud data migration represents a crucial process that can significantly enhance an organization's operational efficiency and scalability. As businesses increasingly transition their data and applications to the cloud, grasping the complexities of this migration becomes vital for achieving success. This article explores best practices that not only ensure a seamless migration but also protect data integrity and compliance. Given the multitude of strategies available, how can organizations effectively navigate potential challenges and secure a successful migration?

Understand Cloud Data Migration Fundamentals

Cloud data migration involves the transfer of information, applications, and various business components from on-premises systems to cloud-based environments. Key concepts include:

- Data Types: Understanding the different types of data being migrated - structured, semi-structured, and unstructured - is crucial. This knowledge facilitates the customization of transition strategies tailored to the specific needs of each data type.

- Relocation Models: Familiarity with the main relocation models is essential. As of 2026, lift-and-shift methods account for 38.3% of migration activities, while refactoring and replatforming strategies are growing at an annual rate of 22.35%. The choice of model significantly affects how efficiently and effectively the migration proceeds.

- Compliance and Security: Adhering to compliance standards such as SOC 2, ISO 27001, HIPAA, and GDPR is vital during the transition to safeguard sensitive information. Organizations must prioritize compliance to mitigate potential legal and financial repercussions; 59% of cybersecurity experts identify security and compliance as major obstacles to cloud data migration.

- Continuous Monitoring: After migration, continuous monitoring is critical for identifying security risks and vulnerabilities. This ongoing vigilance helps organizations maintain compliance and protect sensitive information in the cloud. Decube's automated crawling feature enhances data observability by refreshing metadata automatically, so governance practices are upheld without manual intervention. Accurate, up-to-date metadata in turn improves data quality and governance.

- Stakeholder Engagement: Identifying key stakeholders, including IT teams, data engineers, and business leaders, is essential for ensuring alignment and support throughout the migration project. Engaging stakeholders early fosters collaboration and helps address issues related to data governance and security. Stakeholders should also understand the cost implications of implementing Decube's trust platform, which may lead to minor billing increases due to additional scans (estimated at around 2-3%); factoring this in supports effective budgeting and resource allocation.

By developing a solid understanding of these fundamentals, organizations can more effectively navigate the complexities of cloud data migration and position themselves for success.
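As a rough illustration of the budgeting point above, the sketch below applies the estimated 2-3% increase to a monthly cloud bill. The function name `scan_cost_range` and the baseline figure of 10,000 are hypothetical and chosen only for illustration; actual billing impact depends on the warehouse, scan frequency, and pricing model.

```python
from decimal import Decimal


def scan_cost_range(baseline_monthly_bill, low_pct=Decimal("2"), high_pct=Decimal("3")):
    """Return (low, high) projected monthly bills after the estimated increase.

    The 2-3% defaults mirror the estimate quoted in the article; they are
    not a guaranteed figure.
    """
    baseline = Decimal(str(baseline_monthly_bill))
    low = baseline * (Decimal("1") + low_pct / Decimal("100"))
    high = baseline * (Decimal("1") + high_pct / Decimal("100"))
    cents = Decimal("0.01")
    return low.quantize(cents), high.quantize(cents)


# Hypothetical baseline bill of 10,000 (any currency).
low, high = scan_cost_range(10000)
print(f"Projected monthly bill: {low} to {high}")
```

Using `Decimal` rather than floats keeps the currency arithmetic exact, which matters when the projected increase is only a few percent.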

Explore Effective Cloud Migration Strategies

When planning a cloud data migration, organizations should consider several key strategies:

- Lift-and-Shift: This approach moves applications and data to the cloud with minimal modifications, offering a quick and cost-effective solution. However, it may not fully leverage cloud-native features, which can limit optimization opportunities. In 2026, success rates for lift-and-shift migrations reached approximately 90%, underscoring the approach's effectiveness when executed properly. Performance issues can still emerge gradually after the move, so ongoing tuning is needed to prevent performance drift.

- Replatforming: This strategy involves making targeted enhancements to applications during the transition, allowing organizations to capitalize on cloud capabilities without a complete overhaul. Businesses that modernize their applications during migration experience a 40% improvement in ROI compared to simple rehosting, making this a compelling option for many.

- Refactoring: Refactoring requires re-architecting applications to fully utilize cloud-native features, thereby enhancing performance and scalability. Although this method demands more time and resources, it can yield significant long-term benefits, particularly for organizations aiming to optimize their cloud investments.

- Phased Migration: Adopting a phased approach enables organizations to migrate in stages, thereby reducing risk and facilitating better management of resources and timelines. This method is especially advantageous for complex environments where dependencies must be meticulously managed. Understanding these dependencies prior to transition is essential to prevent complications later.

- Automated Tools: Employing cloud migration tools that automate data transfer can minimize human error and ensure a smoother transition. Decube's automated crawling feature enhances data observability and governance by ensuring that metadata is efficiently managed and auto-refreshed once sources are connected. Additionally, Decube's integrated data trust platform provides automated column-level lineage, allowing organizations to visualize data flows and maintain integrity throughout the transition process. AI-powered migration tools, including those offered by Decube, have reduced timelines from years to weeks, achieving success rates of around 89% in 2026, particularly for the lift-and-shift strategy.

By carefully selecting the appropriate transition approach and leveraging Decube's features, enterprises can significantly improve their chances of a successful cloud data migration while ensuring data quality and compliance with regulations. The focus should be on understanding dependencies and viewing the transition as a business initiative rather than merely a technical project.
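The dependency point in the phased approach can be sketched in code. The example below is a minimal illustration, not a prescribed method: it groups systems into migration waves so that each system moves only after everything it depends on has moved. The system names and the `plan_migration_waves` helper are hypothetical; the ordering uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+).

```python
from graphlib import TopologicalSorter


def plan_migration_waves(dependencies):
    """Group systems into waves; each wave depends only on earlier waves.

    `dependencies` maps a system name to the set of systems it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        # Everything returned here has all of its dependencies migrated.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Hypothetical inventory: a dashboard reads a warehouse fed by two databases.
deps = {
    "reporting_dashboard": {"sales_warehouse"},
    "sales_warehouse": {"orders_db", "customers_db"},
    "orders_db": set(),
    "customers_db": set(),
}

for number, wave in enumerate(plan_migration_waves(deps), start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

A circular dependency surfaces as an error before any wave is planned, which is a useful early signal that two systems may need to be migrated together.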

Implement Robust Data Governance and Quality Assurance

To ensure effective data governance and quality during cloud migration, organizations should take several key steps:

- Conduct a Data Audit: A thorough audit of existing data is essential before migration. The audit helps identify duplicates, inaccuracies, and compliance issues, all of which bear on data integrity. For instance, a global shoe retailer improved its data management by identifying and eliminating unnecessary datasets during its cloud data migration, thereby optimizing its operations.

- Establish Governance Policies: Developing clear data governance policies is crucial. These policies should delineate roles, responsibilities, and procedures for data management during and after migration. Effective governance ensures that data quality standards are upheld, which is increasingly recognized as a strategic driver for sustainable growth.

- Use Data Quality Tools: Implementing data quality tools that provide real-time monitoring and validation is critical. These tools facilitate the prompt detection and resolution of issues, thereby enhancing operational efficiency. For example, organizations employing automated data quality tools have reported significant improvements in decision-making processes and customer engagement.

- Train Staff: Educating all team members involved in the transition on data governance practices is essential. This ensures that everyone understands the importance of maintaining data quality and adheres to established protocols.

- Document Data Lineage: Maintaining clear documentation of data lineage is crucial for tracking data flows and transformations throughout the migration process. This practice is essential for compliance and auditing purposes, as it provides a single source of truth for key performance indicators across business units.

By prioritizing these data governance and quality assurance measures, companies can mitigate the risks associated with moving data and ensure a successful cloud data migration.
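To show what a pre-migration data audit might check, here is a minimal sketch that counts duplicate keys and missing required values in a set of records. It is an illustration under simple assumptions: the records are plain Python dictionaries, and the `audit_records` helper, field names, and sample rows are hypothetical. A real audit would typically run against the source database or through a dedicated data quality tool.

```python
from collections import Counter


def audit_records(records, key_field, required_fields):
    """Summarize duplicate keys and missing required values in a list of dicts."""
    key_counts = Counter(row.get(key_field) for row in records)
    duplicate_keys = sorted(
        key for key, count in key_counts.items() if count > 1 and key is not None
    )
    # Treat None and empty strings as missing values.
    missing_values = {
        field: sum(1 for row in records if row.get(field) in (None, ""))
        for field in required_fields
    }
    return {
        "row_count": len(records),
        "duplicate_keys": duplicate_keys,
        "missing_values": missing_values,
    }


# Hypothetical sample: one duplicated customer_id, one blank email, one null country.
customers = [
    {"customer_id": 1, "email": "a@example.com", "country": "MY"},
    {"customer_id": 2, "email": "", "country": "SG"},
    {"customer_id": 2, "email": "b@example.com", "country": None},
]

report = audit_records(customers, "customer_id", ["email", "country"])
print(report)
```

Running a summary like this per table before migration gives a baseline that can be compared with the same checks on the target after the move.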

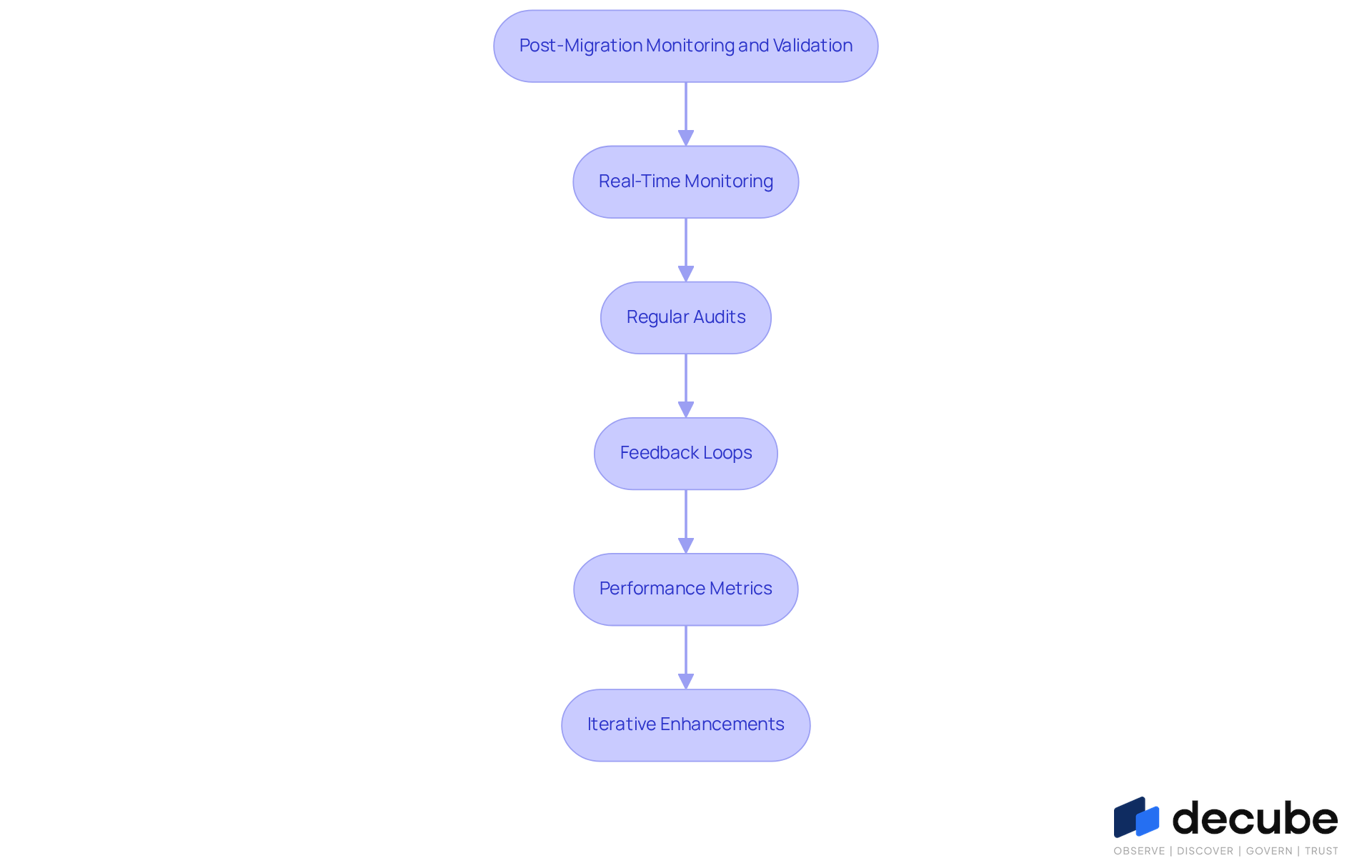

Ensure Continuous Monitoring and Validation Post-Migration

After completing cloud data migration, organizations must prioritize continuous monitoring and validation practices to ensure data integrity and compliance.

- Real-Time Monitoring: Organizations should implement monitoring tools that provide real-time tracking of data flows and system performance. This capability enables immediate detection of anomalies or issues, which is crucial for maintaining operational efficiency.

- Regular Audits: Conducting frequent audits is essential to evaluate data integrity and adherence to governance policies. These audits help ensure that data remains accurate and complies with established standards.

- Feedback Loops: Establishing mechanisms for users to report data quality issues is vital. This feedback allows problems to be addressed swiftly and improves overall data reliability.

- Performance Metrics: It is important to define and monitor key performance indicators (KPIs) related to data quality and system performance. Tracking these metrics allows organizations to assess the effectiveness of their cloud data migration strategies.

- Iterative Enhancements: Organizations should utilize insights gained from observations and evaluations to make iterative improvements to governance practices. This continuous improvement approach supports long-term success and adaptability in a dynamic cloud environment.

By ensuring robust monitoring and validation processes, organizations can uphold high data quality and compliance, ultimately bolstering their business objectives and enhancing decision-making capabilities.
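One common way to validate a migration is to compare row counts and checksums between source and target. The sketch below is a simplified illustration of that idea, not a description of any particular tool: the `table_fingerprint` and `validate_migration` helpers and the sample rows are hypothetical, and rows are assumed to be already fetched into memory as tuples.

```python
import hashlib


def table_fingerprint(rows):
    """Return (row_count, digest) for an iterable of rows, independent of row order.

    Hashing uses repr(), so values must have the same types on both sides
    (for example, 1 and "1" produce different digests).
    """
    row_hashes = sorted(
        hashlib.sha256(repr(tuple(row)).encode("utf-8")).hexdigest() for row in rows
    )
    combined = hashlib.sha256("".join(row_hashes).encode("utf-8")).hexdigest()
    return len(row_hashes), combined


def validate_migration(source_rows, target_rows):
    """Compare source and target tables by row count and content checksum."""
    source_count, source_digest = table_fingerprint(source_rows)
    target_count, target_digest = table_fingerprint(target_rows)
    return {
        "row_count_match": source_count == target_count,
        "checksum_match": source_digest == target_digest,
        "source_rows": source_count,
        "target_rows": target_count,
    }


# Hypothetical tables: same rows in a different order, then one altered value.
source = [(1, "alice"), (2, "bob")]
target_ok = [(2, "bob"), (1, "alice")]
target_bad = [(1, "alice"), (2, "bobby")]

print(validate_migration(source, target_ok))
print(validate_migration(source, target_bad))
```

Matching row counts alone would miss the altered value in the second comparison, which is why a content checksum is worth tracking alongside the count as a data quality KPI.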

Conclusion

Successfully navigating the complexities of cloud data migration relies on a thorough understanding of its fundamental principles and best practices. Organizations must comprehend the nuances of various data types, relocation models, compliance requirements, and the critical role of stakeholder engagement. By adopting a strategic approach to migration and utilizing tools that enhance data quality and governance, businesses can facilitate a smoother transition to the cloud.

Key insights include:

- Selecting the appropriate migration strategy, whether lift-and-shift, replatforming, or refactoring.

- Maintaining robust data governance and continuous monitoring after migration.

- Conducting comprehensive audits.

- Establishing clear governance policies.

- Employing real-time monitoring tools.

Together, these steps help maintain data integrity and compliance throughout the process.

Ultimately, embracing these best practices not only mitigates risks associated with cloud data migration but also positions organizations for long-term success in a cloud-centric landscape. As the demand for cloud solutions continues to rise, the focus on effective migration strategies and ongoing data governance will be crucial. Organizations should view cloud migration as an opportunity for innovation and efficiency, ensuring they remain competitive in today's rapidly evolving digital environment.

Frequently Asked Questions

What is cloud data migration?

Cloud data migration involves transferring information, applications, and various business components from on-premises systems to cloud-based environments.

What types of data are involved in cloud data migration?

The types of data include structured, semi-structured, and unstructured data. Understanding these types is crucial for customizing transition strategies.

What are the different relocation models in cloud data migration?

Various relocation models include lift-and-shift, refactoring, and replatforming. As of 2026, lift-and-shift methods account for 38.3% of migration activities, while refactoring and replatforming are growing at an annual rate of 22.35%.

Why is compliance important during cloud data migration?

Compliance with standards such as SOC 2, ISO 27001, HIPAA, and GDPR is vital to safeguard sensitive information and mitigate potential legal and financial repercussions.

What are the major obstacles to cloud data migration identified by cybersecurity experts?

According to 59% of cybersecurity experts, security and adherence to compliance standards are major obstacles to cloud data migration.

Why is continuous monitoring necessary after migration?

Continuous monitoring is critical for identifying security risks and vulnerabilities, helping organizations maintain compliance and protect sensitive information in the cloud.

How does Decube enhance data observability during cloud data migration?

Decube's automated crawling feature refreshes metadata automatically, so governance practices are upheld without manual intervention, which improves data quality and governance.

Who are the key stakeholders in a cloud data migration project?

Key stakeholders include IT teams, data engineers, and business leaders. Engaging them early fosters collaboration and helps address issues related to data governance and security.

What should organizations consider regarding costs during cloud data migration?

Organizations should consider the cost implications of implementing Decube's trust platform, which may lead to minor billing increases due to additional scans, estimated at around 2-3%.

List of Sources

- Understand Cloud Data Migration Fundamentals

- Cloud Security and Compliance Updates Expected in 2026 (https://databank.com/resources/blogs/cloud-security-and-compliance-updates-expected-in-2026)

- Ensuring security and compliance during cloud migration - AZ Big Media (https://azbigmedia.com/business/ensuring-security-and-compliance-during-cloud-migration)

- Why 2026 Is The Year Cloud Migration Accelerates With Confidence (https://forbes.com/councils/forbestechcouncil/2026/02/23/why-2026-is-the-year-cloud-migration-accelerates-with-confidence)

- Data Migration Trends in 2026: Strategy, Security, and Scalability (https://medium.com/@kanerika/data-migration-trends-in-2026-strategy-security-and-scalability-2191d4dde1f7)

- Cloud Migration Statistics for 2026 (https://auvik.com/franklyit/blog/cloud-migration-statistics)

- Explore Effective Cloud Migration Strategies

- Why 2026 Is The Year Cloud Migration Accelerates With Confidence (https://forbes.com/councils/forbestechcouncil/2026/02/23/why-2026-is-the-year-cloud-migration-accelerates-with-confidence)

- Cloud Migration in 2026 What's Changed and Why It's Easier Now (https://linkedin.com/pulse/cloud-migration-2026-whats-changed-why-its-easier-now-piecyfer-ui8ff)

- The Hidden Costs of Lift and Shift Cloud Migrations | XEOX's blog (https://xeox.com/blog/the-hidden-costs-of-lift-and-shift-cloud-migrations)

- Cloud Migration and Application Modernization Trends in 2026 | Insphere (https://inspheresolutions.com/insights/articles/cloud-migration-modernization-key-trends)

- Implement Robust Data Governance and Quality Assurance

- 12 Best Data Quality Monitoring Tools of 2026 (An Honest Review) | MetricsWatch (https://metricswatch.com/blog/data-quality-monitoring-tools)

- Data Governance Best Practices for 2026 | Drive Business Value with Trusted Data (https://alation.com/blog/data-governance-best-practices)

- sganalytics.com (https://sganalytics.com/blog/data-quality-tools)

- 10 Steps to Updating Your 2026 Data Governance Strategy (https://lovelytics.com/post/10-steps-to-updating-your-2026-data-governance-strategy)

- Ensure Continuous Monitoring and Validation Post-Migration

- Why cloud migration is key to realizing AI value in financial services | The Microsoft Cloud Blog (https://microsoft.com/en-us/microsoft-cloud/blog/financial-services/2026/03/30/why-cloud-migration-is-key-to-realizing-ai-value-in-financial-services)

- The 2026 Data Quality and Data Observability Commercial Software Landscape | DataKitchen (https://datakitchen.io/the-2026-data-quality-and-data-observability-commercial-software-landscape)

- Cloud migration in regulated environments: Validation implications and best practices (https://idbs.com/2026/03/cloud-migration-in-regulated-environments-validation-implications-and-best-practices)

- What Cloud Migration Looks Like for Businesses in 2026 (https://computersolutionseast.com/blog/cloud-solutions/cloud-migration-2026-guide)

- What Is Cloud Data Migration Strategy in 2026 (https://cloudester.com/cloud-data-migration-strategy)
