4 Best Practices for Effective Data Catalog Architecture

Discover essential best practices for building an effective data catalog architecture.

Introduction

Establishing a robust data catalog architecture is essential for organizations seeking to fully leverage their information assets. By concentrating on critical components such as:

- Metadata repositories

- Search engines

- Governance frameworks

organizations can develop a system that not only streamlines data management but also improves compliance and user engagement. Nevertheless, given the constantly changing landscape of data regulations and integration challenges, how can organizations ensure that their data catalog remains effective and adaptable to future demands?

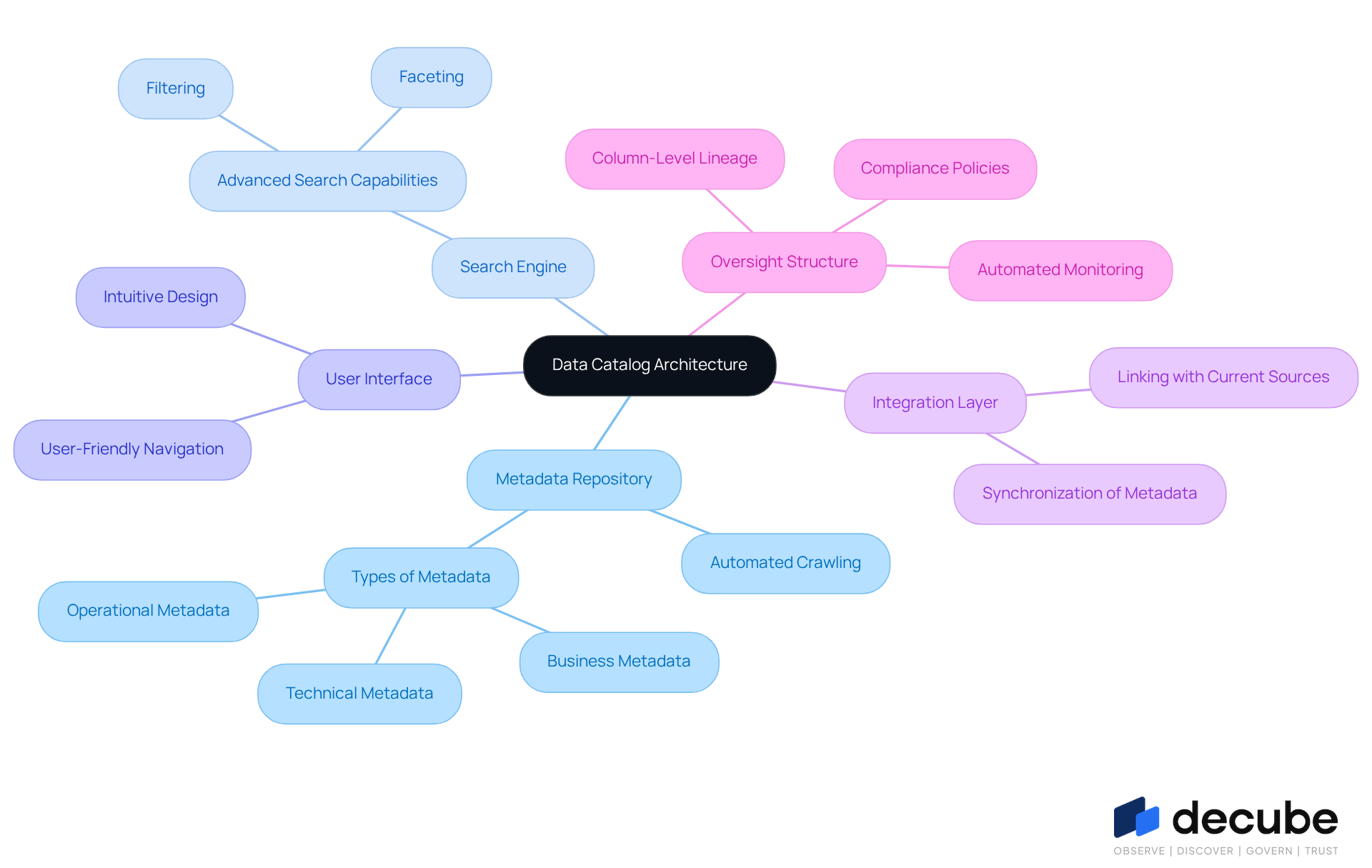

Identify Core Components of Data Catalog Architecture

To establish a robust data catalog architecture, organizations must focus on several essential components:

- Metadata Repository: Serving as the foundation of the data catalog, the metadata repository centralizes all details about information assets. With Decube's automated crawling feature, metadata is refreshed automatically once sources are connected, so the catalog stays current without manual intervention. The repository should accommodate technical, business, and operational metadata to support comprehensive governance and usability.

- Search Engine: A robust search engine lets users quickly find and retrieve information assets. It should support advanced search capabilities, such as filtering and faceting, to make information discovery efficient.

- User Interface: The front-end interface must be intuitive and user-friendly, allowing users to browse the catalog effortlessly and perform essential tasks such as information discovery and asset management.

- Integration Layer: This component links the catalog with existing data sources and tools, keeping information flowing smoothly and metadata synchronized across platforms. Decube's integration features support this process, enhancing overall information management.

- Governance Framework: Incorporating governance policies into the architecture is essential for compliance with regulations and standards, such as SOC 2 and GDPR, while also preserving information quality and security. Decube's unified data trust platform facilitates this by providing automated monitoring and analytics, which are critical for effective governance. Additionally, the automated column-level lineage feature enables engineers to monitor data flow across components, enhancing observability and helping to protect integrity.

By concentrating on these elements, organizations can develop a data catalog architecture that not only meets current needs but is also prepared to address future challenges efficiently, leveraging Decube's advanced features for improved observability and governance.
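To make the first two components more concrete, here is a minimal sketch of a metadata repository entry and a search with filtering and facet counts. It is an illustration only: the `CatalogEntry` class, the `search` and `facet_counts` functions, and the sample assets are all hypothetical, and none of it reflects how Decube or any other product implements these features.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One asset in a hypothetical metadata repository."""
    name: str
    technical: dict = field(default_factory=dict)    # e.g. source system, format
    business: dict = field(default_factory=dict)     # e.g. owner, domain
    operational: dict = field(default_factory=dict)  # e.g. last refresh time


def search(entries, text="", **filters):
    """Return entries whose name contains `text` and whose technical or
    business metadata matches every key=value pair in `filters`."""
    results = []
    for entry in entries:
        merged = {**entry.technical, **entry.business}
        if text.lower() not in entry.name.lower():
            continue
        if all(merged.get(key) == value for key, value in filters.items()):
            results.append(entry)
    return results


def facet_counts(entries, key):
    """Count how many entries fall under each value of one metadata key."""
    counts = Counter()
    for entry in entries:
        merged = {**entry.technical, **entry.business}
        if key in merged:
            counts[merged[key]] += 1
    return dict(counts)


# Hypothetical sample assets.
entries = [
    CatalogEntry("orders", {"source": "postgres"}, {"domain": "sales"}),
    CatalogEntry("order_items", {"source": "postgres"}, {"domain": "sales"}),
    CatalogEntry("web_events", {"source": "s3"}, {"domain": "marketing"}),
]

hits = search(entries, text="order", domain="sales")
print([entry.name for entry in hits])
print(facet_counts(entries, "source"))
```

Keeping technical, business, and operational metadata in separate fields mirrors the three metadata types named above, while the facet counts are what a user interface would typically show next to each filter.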

Integrate Data Catalog with Existing Infrastructure

To effectively integrate a data catalog with existing infrastructure, organizations should adopt several best practices:

- Identify Key Information Sources: Organizations should begin by outlining all information sources that will contribute to the catalog, including databases, data lakes, and third-party applications. This foundational step ensures comprehensive coverage of information assets.

- Utilize APIs for Integration: Leveraging APIs to connect the data catalog with other systems facilitates real-time synchronization. This keeps metadata current, which improves the dependability of information access and use.

- Automate Metadata Collection: Implementing automated processes for metadata extraction and updates can reduce initial cataloging time by up to 65% compared to manual methods, while minimizing errors and freeing up resources for more strategic tasks. Decube's platform offers automated PII classification, which helps ensure that sensitive information is managed securely and in compliance with regulations such as GDPR and CCPA.

- Establish Information Lineage Tracking: Integrating lineage tracking capabilities provides visibility into information flow and transformations. This helps users understand the origin and journey of information assets, which is essential for adherence to regulations like GDPR and CCPA. Decube's lineage capabilities allow organizations, such as telecom companies, to track where sensitive information resides and how it is accessed, which supports ongoing audit readiness.

- Ensure Compatibility with Governance Tools: It is crucial to ensure that the catalog can integrate seamlessly with existing governance tools. This compatibility is essential for enforcing data policies and compliance measures, thereby reducing the risk of violations. Decube's platform supports both on-premise and cloud-native deployments, which helps the catalog fit into existing governance processes.

- Address Potential Pitfalls: Organizations should be aware of common challenges in information integration, such as technical debt and the need for role-specific training. These factors can hinder successful implementation and should be addressed proactively.
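The automated metadata collection practice above can be sketched with Python's standard `sqlite3` module, which exposes a database's schema through `sqlite_master` and `PRAGMA table_info`. This is a minimal sketch under stated assumptions: the `crawl_sqlite` and `flag_pii` functions, the sample tables, and the `PII_HINTS` list are hypothetical, and the name-based PII check is a deliberately naive stand-in, not the classification method used by Decube or any other product.

```python
import sqlite3

# Hypothetical, name-based hints only; real PII classification inspects far more.
PII_HINTS = ("email", "phone", "ssn")


def crawl_sqlite(conn):
    """Collect table and column metadata from a SQLite connection."""
    catalog = {}
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        catalog[table] = [
            {"column": col[1], "type": col[2], "primary_key": bool(col[5])}
            for col in columns
        ]
    return catalog


def flag_pii(catalog):
    """Return 'table.column' names whose column name contains a PII hint."""
    return sorted(
        f"{table}.{col['column']}"
        for table, cols in catalog.items()
        for col in cols
        if any(hint in col["column"].lower() for hint in PII_HINTS)
    )


# Hypothetical source database, created in memory for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER)")

catalog = crawl_sqlite(conn)
print(catalog)
print(flag_pii(catalog))
```

Running a crawl like this on a schedule, rather than asking people to document tables by hand, is the kind of automation the practice refers to. Other sources would need their own connectors.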

By following these practices, organizations can foster a unified information environment in which the catalog significantly enhances information management, ultimately promoting better decision-making and operational efficiency. Furthermore, as the global data catalog market is anticipated to expand considerably, investing in these practices now prepares organizations for efficient data catalog integration as adoption grows.
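Lineage tracking, one of the practices in this section, comes down to recording which assets are derived from which and walking that graph on demand. The following is a minimal sketch, assuming a hypothetical `UPSTREAM` mapping and made-up asset names. Production lineage tools typically derive these edges automatically from query logs or pipeline definitions rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical edges: each key is an asset, each value lists the assets
# it is directly derived from.
UPSTREAM = {
    "revenue_dashboard": ["daily_revenue"],
    "daily_revenue": ["orders_clean", "fx_rates"],
    "orders_clean": ["raw_orders"],
}


def trace_upstream(asset, upstream=UPSTREAM):
    """Return every asset that `asset` depends on, nearest first
    (breadth-first traversal of the lineage graph)."""
    seen, order = set(), []
    queue = deque(upstream.get(asset, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        order.append(current)
        queue.extend(upstream.get(current, []))
    return order


print(trace_upstream("revenue_dashboard"))
```

The same traversal run in the opposite direction (from a source to everything derived from it) answers the audit question of where a sensitive field ends up.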

Establish Governance and Compliance Frameworks

To establish effective governance and compliance frameworks for a data catalog, organizations should implement the following strategies:

- Define Governance Policies: Clearly outline information governance policies that dictate how information is managed, accessed, and shared. This includes specifying ownership of information, access controls, and quality standards, which are essential for compliance with regulations such as GDPR and HIPAA.

- Implement Role-Based Access Control (RBAC): Establish RBAC to ensure that only authorized users can access sensitive information. This enhances information security, reduces the risk of breaches, and supports compliance with legal requirements. Decube's platform supports this by enabling organizations to manage who can view or edit information, streamlining access control and secure information handling.

- Regular Audits and Monitoring: Conduct regular audits of information access and usage to ensure compliance with governance policies. Maintaining comprehensive audit trails that record changes, access history, and policy updates is essential. Automated monitoring tools, such as those offered by Decube, can help identify anomalies or unauthorized access attempts, thereby reinforcing compliance and security measures.

- Documentation and Customized Training: Maintain thorough documentation of governance policies and provide training programs tailored to each user's role. This ensures that all stakeholders understand their responsibilities regarding data management, fostering a culture of accountability and awareness.

- Engage Stakeholders: Involve key stakeholders from various departments in the governance process to ensure that policies are practical and aligned with business objectives. This cross-functional collaboration is essential for the effective execution of governance initiatives.

By creating a robust governance framework, organizations can enhance the reliability and trustworthiness of their data catalog, ultimately supporting better operational decisions, minimizing regulatory risk, and keeping pace with evolving regulations.
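Role-based access control and audit trails, two of the strategies above, can be combined in a few lines. This is a sketch under stated assumptions: the role names, the `ROLE_PERMISSIONS` table, and the `access` and `denied_attempts` functions are hypothetical and are not taken from any specific product. A real deployment would store the log durably and tie roles to an identity provider.

```python
from datetime import datetime, timezone

# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "steward": {"read", "edit"},
    "admin": {"read", "edit", "manage_policies"},
}

audit_log = []  # in-memory audit trail, for illustration only


def is_allowed(role, action):
    """Check whether a role grants an action; unknown roles grant nothing."""
    return action in ROLE_PERMISSIONS.get(role, set())


def access(user, role, asset, action):
    """Decide an access request and record it, allowed or not."""
    allowed = is_allowed(role, action)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "asset": asset,
        "action": action,
        "allowed": allowed,
    })
    return allowed


def denied_attempts(log):
    """Return the audit entries that were refused, for periodic review."""
    return [entry for entry in log if not entry["allowed"]]


access("ana", "viewer", "customers", "read")
access("ana", "viewer", "customers", "edit")
print(denied_attempts(audit_log))
```

Logging every decision, including refusals, is what makes the routine reviews described above possible: the denied entries are the first place to look for unauthorized access attempts.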

Engage Users and Provide Comprehensive Training

To foster user engagement and ensure effective training for a data catalog, organizations should implement the following best practices:

- Conduct Needs Assessments: Before training, evaluate the specific requirements and skill levels of participants. This tailored approach helps ensure that training programs are relevant and efficient, addressing the distinct challenges individuals face when navigating the data catalog.

- Develop Comprehensive Training Materials: Organizations should create a variety of training resources, including instruction manuals, video tutorials, and FAQs. These resources help individuals use the data catalog effectively and enhance their overall learning experience.

- Provide Practical Training Sessions: Arranging hands-on training sessions allows participants to work with the data catalog in real-life scenarios. This experiential learning approach enhances knowledge retention and application.

- Encourage Feedback and Iteration: Actively soliciting input from users on both the training process and the data catalog itself is crucial. Using this feedback for continuous improvement refines the training program and enhances the usability of the catalog.

- Recognize and Appreciate Participation: Recognizing users who actively engage with the data catalog and contribute to its success is vital. Acknowledging their efforts can motivate others to participate, fostering a culture of data-driven decision-making within the organization.

By implementing these strategies, organizations can ensure that their data catalog is not only adopted but also utilized to its full potential, driving better insights and outcomes.

Conclusion

A well-structured data catalog architecture is essential for organizations that seek to manage their information assets effectively. By concentrating on core components such as:

- A metadata repository

- A robust search engine

- An intuitive user interface

- An integration layer

businesses can develop a system that not only addresses current demands but also adapts to future challenges. Establishing a governance framework further supports compliance and security, while engaging users through comprehensive training cultivates a culture of data-driven decision-making.

Key practices emphasize the significance of:

- Identifying information sources

- Utilizing APIs for seamless integration

- Automating metadata collection

- Implementing role-based access control

These strategies streamline operations and enhance the overall reliability and trustworthiness of the data catalog. Moreover, fostering user engagement through tailored training and feedback mechanisms guarantees that all stakeholders can leverage the catalog's capabilities to their fullest potential.

Ultimately, investing in an effective data catalog architecture and adhering to best practices is not merely a technical necessity; it is a strategic imperative. As data continues to expand in volume and complexity, organizations must prioritize these practices to unlock insights, enhance decision-making, and maintain a competitive edge in their respective industries. Embracing these principles will pave the way for a more organized, compliant, and user-friendly information environment.

Frequently Asked Questions

What is the purpose of a metadata repository in data catalog architecture?

The metadata repository serves as the foundation of the data catalog, centralizing all details about information assets and keeping the catalog current through automated refreshing of metadata once sources are connected.

Why is a search engine important in a data catalog?

A robust search engine is crucial for enabling individuals to quickly find and retrieve information assets, incorporating advanced search capabilities like filtering and faceting to enhance user experience and facilitate efficient information discovery.

What features should a user interface have in a data catalog?

The user interface must be intuitive and user-friendly, allowing users to browse the catalog effortlessly and perform essential tasks such as information discovery and asset management.

What role does the integration layer play in data catalog architecture?

The integration layer links the catalog with existing data sources and tools, keeping information flowing smoothly and metadata synchronized across platforms.

How does a governance framework contribute to data catalog architecture?

A governance framework incorporates policies for compliance with regulations and standards, preserving information quality and security. Paired with automated monitoring and analytics, it supports effective governance of the catalog.

What advanced features does Decube offer for data catalog architecture?

Decube offers features such as automated monitoring, analytics, and an automated column-level lineage feature that enables engineers to monitor information flow across components, enhancing observability and ensuring integrity.
