
10 Key Trends Shaping the Sensitive Data Discovery Market

Explore the key trends shaping the sensitive data discovery market and their impact on compliance.

Introduction

The sensitive data discovery market is undergoing a significant transformation, propelled by technological advancements and heightened regulatory demands. Organizations now face a distinct opportunity to strengthen their information governance strategies through innovative solutions that emphasize compliance and data security. As this landscape evolves, businesses must consider how to effectively navigate the complexities of sensitive data management to stay ahead of the curve.

Decube: Transforming Data Governance and Observability in Sensitive Data Discovery

Decube is leading the way in transforming information management and visibility within the sensitive data discovery market. By offering a comprehensive platform that seamlessly integrates information observability, cataloging, and oversight, Decube enables organizations to manage sensitive data effectively. Its advanced features, including machine learning-powered anomaly detection and real-time monitoring, empower businesses to uphold high standards of information quality and compliance with industry regulations such as SOC 2 and GDPR. This holistic approach also builds trust among stakeholders, positioning Decube as a preferred choice for enterprises navigating the complexities of governance.

AI and Machine Learning Integration: Enhancing Sensitive Data Discovery Capabilities

The integration of AI and machine learning into sensitive data discovery tools is fundamentally transforming how organizations identify and manage confidential information. These technologies automate the scanning and classification of data, significantly reducing the time and effort required for compliance. For example, Decube's machine learning-powered tests, such as null% and regex_match, analyze large datasets to reveal patterns and anomalies, helping ensure that confidential information is accurately identified and safeguarded.
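To make the two named checks concrete, here is a minimal sketch of what a null-percentage test and a regex-match test compute on a single column. It is an illustration only, not Decube's implementation: the function names `null_percent` and `regex_match_percent`, the simplified `EMAIL_PATTERN`, and the sample column are all assumptions introduced for this example.

```python
import re

# Hypothetical, deliberately simple email pattern for illustration only;
# production detectors typically rely on much broader rule sets.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def null_percent(values):
    """Return the percentage of entries that are None or empty strings."""
    if not values:
        return 0.0
    missing = sum(1 for v in values if v is None or v == "")
    return 100.0 * missing / len(values)


def regex_match_percent(values, pattern):
    """Return the percentage of non-null entries that fully match the pattern."""
    present = [v for v in values if v is not None and v != ""]
    if not present:
        return 0.0
    matched = sum(1 for v in present if pattern.fullmatch(str(v)))
    return 100.0 * matched / len(present)


# Sample column: two valid emails, one placeholder value, two missing entries.
column = ["ana@example.com", None, "bob@example.org", "n/a", ""]

null_rate = null_percent(column)
email_rate = regex_match_percent(column, EMAIL_PATTERN)
print(f"null%: {null_rate:.1f}, regex_match%: {email_rate:.1f}")
```

A column whose non-null values mostly match an email pattern is a candidate for classification as personal data, while a rising null percentage points to a quality problem in the pipeline feeding it.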

With features like smart alerts, Decube enhances quality monitoring, allowing organizations to detect issues early and maintain trust in their information. As regulatory scrutiny intensifies, the adoption of AI and machine learning not only boosts operational efficiency but also fortifies compliance efforts, establishing it as a vital trend in the sensitive data discovery market. Organizations employing AI for information classification report a notable increase in efficiency, with studies showing that automated systems can cut classification time by as much as 70%. Furthermore, with the global volume of information projected to exceed 394 zettabytes by 2028, this shift towards automation is essential for organizations aiming to navigate the complexities of information governance and compliance in 2026.

Rising Regulatory Pressures: Driving Demand for Sensitive Data Discovery Solutions

As regulatory frameworks tighten, organizations increasingly need to adopt sensitive data discovery solutions to ensure compliance with regulations such as GDPR and HIPAA. These regulations mandate not only the protection of confidential information but also require businesses to demonstrate adherence through effective information management practices. The pressure to conform is underscored by statistics indicating that 94% of organizations incorporate compliance into their risk evaluation activities, highlighting its significance in sensitive data management.

This growing demand for tools that effectively identify, classify, and protect confidential information is evident, as 81% of entities actively pursue ISO 27001 certification, reflecting a commitment to organized, risk-focused security frameworks. Companies that implement these solutions proactively not only mitigate the risk of non-compliance but also enhance their reputation and trustworthiness among customers and regulators.

Successful examples include organizations utilizing BigID to automate Data Subject Access Requests (DSARs), which streamlines compliance processes and reduces operational overhead. This illustrates the tangible benefits of integrating compliance tools into management strategies.

Cloud Adoption: The Shift Towards Cloud-Based Sensitive Data Discovery Solutions

The increasing adoption of cloud technologies is reshaping the information discovery landscape. Organizations are increasingly choosing cloud-based solutions for their scalability and flexibility, which are essential for efficiently managing large volumes of confidential information. By leveraging cloud infrastructure, businesses can deploy sensitive data discovery tools that integrate seamlessly with their existing systems, enabling real-time monitoring and compliance. Notably, 94% of companies report improved security after adopting cloud solutions, highlighting the integrated security features that enhance information protection. This makes cloud-based solutions particularly appealing for organizations aiming to strengthen their information management frameworks. Furthermore, with 95% of new digital workloads expected to be deployed on cloud-native platforms by 2026, the impact of cloud technologies on information management efficiency is undeniable, encouraging organizations to adopt more effective governance practices.

Managing Growing Data Volumes: Challenges and Solutions in Sensitive Data Discovery

As organizations generate and collect more information than ever, managing the increasing volumes presents significant challenges within the sensitive data discovery market. The vast scale of data can result in inefficiencies and a heightened risk of non-compliance if not properly addressed. To overcome these obstacles, it is essential for organizations to implement robust information management frameworks.

Decube's advanced information quality monitoring solutions leverage machine learning to enhance the discovery process. These solutions automatically identify quality thresholds and provide intelligent alerts, thereby preventing notification overload. Additionally, Decube's automated crawling capabilities ensure that metadata is effectively managed and maintained, which in turn improves visibility and governance.
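The idea of learning a threshold from past behavior, and alerting only when a metric leaves that range, can be sketched with simple statistics. This is a stand-in under stated assumptions, not Decube's machine learning method: it uses a mean plus or minus `k` standard deviations band, and the names `learn_threshold`, `should_alert`, and the sample row counts are hypothetical.

```python
from statistics import mean, stdev


def learn_threshold(history, k=3.0):
    """Derive a (low, high) band from past values: mean +/- k sample std devs."""
    mu = mean(history)
    sigma = stdev(history)
    return mu - k * sigma, mu + k * sigma


def should_alert(value, bounds):
    """Alert only when the new value falls outside the learned band."""
    low, high = bounds
    return value < low or value > high


# Hypothetical daily row counts for one table.
history = [1000, 1020, 990, 1010, 980, 1005, 995]
bounds = learn_threshold(history)

print(should_alert(1015, bounds))  # normal day-to-day variation
print(should_alert(400, bounds))   # sharp drop in volume
```

Because ordinary fluctuation stays inside the band, only the sharp drop triggers a notification, which is how a learned threshold keeps alert volume manageable compared with a fixed, hand-set limit.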

Such approaches are vital for ensuring that confidential information is accurately recognized and efficiently handled within the sensitive data discovery market, even as data volumes continue to rise.

Prioritizing Data Privacy and Security: A Key Trend in Sensitive Data Discovery

In the current information-driven landscape, prioritizing privacy and security is essential and is shaping the sensitive data discovery market. Organizations understand that information protection goes beyond mere regulatory compliance; it is vital for establishing and sustaining customer trust. A significant 73% of Americans have experienced internet fraud or attacks, underscoring the urgent need for robust information protection measures.

By adopting comprehensive information management strategies that prioritize privacy and security, organizations can effectively mitigate risks associated with breaches and non-compliance. Furthermore, the deployment of advanced information discovery tools equipped with security features ensures that confidential information is protected throughout its lifecycle, thereby enhancing customer trust and loyalty.

Holistic Approaches: Integrating Observability, Governance, and Quality in Data Discovery

A comprehensive strategy for information management is increasingly vital in the sensitive data discovery market. By integrating observability, governance, and information quality, organizations can establish a robust framework that significantly enhances their capacity to manage sensitive data. This integration facilitates monitoring of information pipelines, enabling the rapid identification and resolution of quality issues.

Moreover, a unified approach fosters collaboration between IT and business teams, allowing organizations to view data as a strategic asset while ensuring compliance with regulatory requirements. Industry insights highlight that organizations with well-developed information governance and quality programs achieve 15-20% greater operational efficiency, underscoring the essential role of integrated observability and governance in attaining superior information management outcomes.

Automation in Sensitive Data Discovery: Streamlining Processes for Efficiency

Automation is reshaping the information discovery landscape by streamlining processes and enhancing operational efficiency. Organizations are increasingly adopting automated tools that can swiftly scan, classify, and safeguard confidential information across various environments. This shift alleviates the manual burden associated with compliance and diminishes the risk of human error, a common challenge in information management.

For example, companies employing AI-driven compliance automation, such as Decube's machine learning-powered tests and intelligent alerts, report processing times that are up to 70% faster and a reduction in compliance costs by approximately 30%.

Decube's automated workflows and crawling capabilities ensure that confidential information is continuously monitored and effectively managed, allowing for prompt responses to compliance issues as they arise. This proactive approach enhances compliance accuracy and aligns with evolving regulatory requirements, establishing automation as a crucial component of modern information management strategies.

Cross-Functional Collaboration: Bridging IT and Business Teams in Data Discovery

Cross-functional cooperation is crucial for bridging the gap between IT and business teams in sensitive information discovery initiatives. Effective information governance depends on the collaboration and input of various stakeholders, including:

- Engineers

- Compliance officers

- Business leaders

By cultivating a culture of teamwork, organizations can align their information discovery processes with business objectives while ensuring compliance with evolving regulatory requirements. This collaborative approach also fosters innovation, enabling organizations to utilize their resources more efficiently. Notably, 62% of organizations identify information management as the primary barrier to AI adoption, underscoring the need for coordinated efforts to improve information lineage and quality standards.

Moreover, organizations that emphasize collaboration experience a significant reduction in compliance costs (up to 35%) and see revenue increases of 5-15% from effectively executed transformations. As we approach 2026, the importance of aligning IT and business in information discovery will continue to grow, with 65% of information leaders prioritizing oversight concerns related to AI and information quality, highlighting the critical nature of this collaboration.

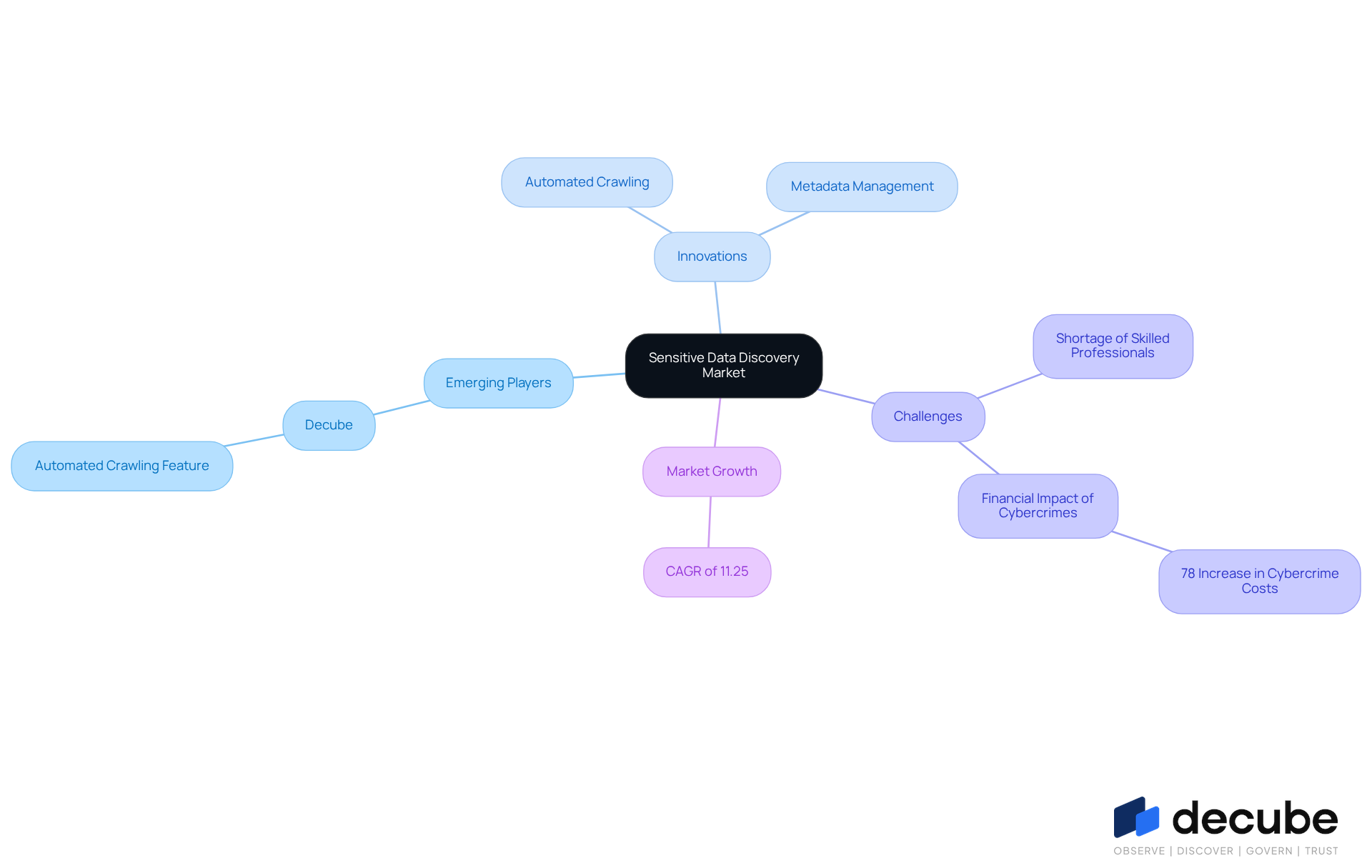

Emerging Players and Innovations: Shaping the Future of the Sensitive Data Discovery Market

The sensitive data discovery market is undergoing significant transformations, driven by emerging players and innovative technologies. New entrants are introducing solutions that challenge traditional methods of information management and compliance. Notably, Decube's automated crawling feature stands out, providing seamless metadata management that enhances data observability and governance. By automatically refreshing connected sources, Decube alleviates the burden of manual updates, allowing companies to focus on strategic initiatives.

Despite these advancements, organizations face challenges stemming from a shortage of skilled professionals, which limits their ability to fully leverage these innovations. Additionally, the financial impact of cybercrimes has surged by nearly 78% over the past four years, complicating information management for IT teams and underscoring the urgent need for effective oversight solutions. As competition intensifies, established players like Decube must adapt by evolving their offerings and embracing new technologies.

This dynamic landscape presents opportunities for organizations to explore solutions that better address their sensitive data discovery needs. The market is projected to grow at a compound annual growth rate (CAGR) of 11.25% from 2025 to 2035, reflecting the increasing demand for effective data governance solutions that can keep pace with the rapid evolution of data privacy regulations and security threats.
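To put the projected growth rate in perspective, the short calculation below compounds 11.25% annually over the ten years from 2025 to 2035. The article gives no base market size, so the sketch reports only the growth multiple; the function name `growth_multiple` is an assumption made for this example.

```python
def growth_multiple(cagr, years):
    """Return the total growth factor after compounding `cagr` for `years` years."""
    return (1.0 + cagr) ** years


# 11.25% CAGR compounded over 2025 -> 2035 (10 annual periods).
multiple = growth_multiple(0.1125, 10)
print(f"Market size multiple after 10 years: {multiple:.2f}x")
```

At that rate the market would be roughly 2.9 times its 2025 size by 2035, that is, close to tripling over the decade.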

Conclusion

The sensitive data discovery market is rapidly evolving, driven by advancements in technology, regulatory pressures, and the necessity for robust information governance. Organizations must prioritize innovative solutions that enhance their ability to manage and protect confidential information effectively. Embracing trends such as AI integration, cloud adoption, and automation allows businesses to streamline processes while ensuring compliance with stringent regulations.

Key insights highlight the transformative role of platforms like Decube in enhancing data governance through observability and quality management. The increasing demand for compliance tools, alongside challenges posed by growing data volumes, underscores the importance of a holistic approach to information management. Organizations that foster cross-functional collaboration between IT and business teams will be better equipped to navigate the complexities of sensitive data discovery.

Looking ahead, embracing these trends is not merely a strategic advantage but a necessity for organizations aiming to thrive in a data-driven landscape. As the market continues to grow and evolve, proactive engagement with emerging technologies and practices will be crucial. Prioritizing data privacy and security, while fostering an environment of collaboration, will ultimately enhance trust and reliability in information management practices, paving the way for sustained success in the sensitive data discovery market.

Frequently Asked Questions

What is Decube and what does it offer?

Decube is a platform that transforms information management and visibility within the sensitive data discovery market. It offers a comprehensive solution that integrates information observability, cataloging, and oversight, enabling organizations to manage sensitive data effectively.

How does Decube enhance data governance?

Decube enhances data governance through advanced features like machine learning-powered anomaly detection and real-time monitoring, which help organizations maintain high standards of information quality and comply with industry regulations such as SOC 2 and GDPR.

What role do AI and machine learning play in sensitive data discovery?

AI and machine learning facilitate automated scanning and classification of data, significantly reducing the time and effort required for compliance. They enable efficient analysis of large datasets to identify patterns and anomalies, ensuring accurate identification and safeguarding of confidential information.

How does Decube improve quality monitoring?

Decube enhances quality monitoring with features like smart alerts, allowing organizations to detect issues early and maintain trust in their information management practices.

What are the implications of rising regulatory pressures on sensitive data discovery?

Rising regulatory pressures, such as GDPR and HIPAA, necessitate the adoption of information discovery solutions to ensure compliance. Companies must protect confidential information and demonstrate adherence through effective information management practices.

What percentage of entities incorporate compliance into their risk evaluation activities?

94% of entities incorporate compliance into their risk evaluation activities, highlighting its significance in critical information management.

What is the significance of ISO 27001 certification for organizations?

81% of entities actively pursue ISO 27001 certification, reflecting a commitment to organized, risk-focused security frameworks that enhance their reputation and trustworthiness among customers and regulators.

Can you provide an example of how organizations benefit from compliance tools?

Organizations utilizing tools like BigID to automate Data Subject Access Requests (DSARs) streamline compliance processes and reduce operational overhead, demonstrating the tangible benefits of integrating compliance tools into their management strategies.
